Storm入门(四)WordCount示例

一、关联代码

使用maven,代码如下。

pom.xml 和Storm入门(三)HelloWorld示例相同

RandomSentenceSpout.java

/**

* Licensed to the Apache Software Foundation (ASF) under one

* or more contributor license agreements. See the NOTICE file

* distributed with this work for additional information

* regarding copyright ownership. The ASF licenses this file

* to you under the Apache License, Version 2.0 (the

* "License"); you may not use this file except in compliance

* with the License. You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package cn.ljh.storm.wordcount; import org.apache.storm.spout.SpoutOutputCollector;

import org.apache.storm.task.TopologyContext;

import org.apache.storm.topology.OutputFieldsDeclarer;

import org.apache.storm.topology.base.BaseRichSpout;

import org.apache.storm.tuple.Fields;

import org.apache.storm.tuple.Values;

import org.apache.storm.utils.Utils;

import org.slf4j.Logger;

import org.slf4j.LoggerFactory; import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.Map;

import java.util.Random; public class RandomSentenceSpout extends BaseRichSpout {

private static final Logger LOG = LoggerFactory.getLogger(RandomSentenceSpout.class); SpoutOutputCollector _collector;

Random _rand; public void open(Map conf, TopologyContext context, SpoutOutputCollector collector) {

_collector = collector;

_rand = new Random();

} public void nextTuple() {

Utils.sleep(100);

String[] sentences = new String[]{

sentence("the cow jumped over the moon"),

sentence("an apple a day keeps the doctor away"),

sentence("four score and seven years ago"),

sentence("snow white and the seven dwarfs"),

sentence("i am at two with nature")};

final String sentence = sentences[_rand.nextInt(sentences.length)]; LOG.debug("Emitting tuple: {}", sentence); _collector.emit(new Values(sentence));

} protected String sentence(String input) {

return input;

} @Override

public void ack(Object id) {

} @Override

public void fail(Object id) {

} public void declareOutputFields(OutputFieldsDeclarer declarer) {

declarer.declare(new Fields("word"));

} // Add unique identifier to each tuple, which is helpful for debugging

public static class TimeStamped extends RandomSentenceSpout {

private final String prefix; public TimeStamped() {

this("");

} public TimeStamped(String prefix) {

this.prefix = prefix;

} protected String sentence(String input) {

return prefix + currentDate() + " " + input;

} private String currentDate() {

return new SimpleDateFormat("yyyy.MM.dd_HH:mm:ss.SSSSSSSSS").format(new Date());

}

}

}

WordCountTopology.java

/**

* Licensed to the Apache Software Foundation (ASF) under one

* or more contributor license agreements. See the NOTICE file

* distributed with this work for additional information

* regarding copyright ownership. The ASF licenses this file

* to you under the Apache License, Version 2.0 (the

* "License"); you may not use this file except in compliance

* with the License. You may obtain a copy of the License at

*

* http://www.apache.org/licenses/LICENSE-2.0

*

* Unless required by applicable law or agreed to in writing, software

* distributed under the License is distributed on an "AS IS" BASIS,

* WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

* See the License for the specific language governing permissions and

* limitations under the License.

*/

package cn.ljh.storm.wordcount; import org.apache.storm.Config;

import org.apache.storm.LocalCluster;

import org.apache.storm.StormSubmitter;

import org.apache.storm.task.OutputCollector;

import org.apache.storm.task.TopologyContext;

import org.apache.storm.topology.BasicOutputCollector;

import org.apache.storm.topology.IRichBolt;

import org.apache.storm.topology.OutputFieldsDeclarer;

import org.apache.storm.topology.TopologyBuilder;

import org.apache.storm.topology.base.BaseBasicBolt;

import org.apache.storm.tuple.Fields;

import org.apache.storm.tuple.Tuple;

import org.apache.storm.tuple.Values; import java.util.ArrayList;

import java.util.Collections;

import java.util.HashMap;

import java.util.List;

import java.util.Map; public class WordCountTopology {

public static class SplitSentence implements IRichBolt {

private OutputCollector _collector;

public void declareOutputFields(OutputFieldsDeclarer declarer) {

declarer.declare(new Fields("word"));

} public Map<String, Object> getComponentConfiguration() {

return null;

} public void prepare(Map stormConf, TopologyContext context,

OutputCollector collector) {

_collector = collector;

} public void execute(Tuple input) {

String sentence = input.getStringByField("word");

String[] words = sentence.split(" ");

for(String word : words){

this._collector.emit(new Values(word));

}

} public void cleanup() {

// TODO Auto-generated method stub }

} public static class WordCount extends BaseBasicBolt {

Map<String, Integer> counts = new HashMap<String, Integer>(); public void execute(Tuple tuple, BasicOutputCollector collector) {

String word = tuple.getString(0);

Integer count = counts.get(word);

if (count == null)

count = 0;

count++;

counts.put(word, count);

collector.emit(new Values(word, count));

} public void declareOutputFields(OutputFieldsDeclarer declarer) {

declarer.declare(new Fields("word", "count"));

}

} public static class WordReport extends BaseBasicBolt {

Map<String, Integer> counts = new HashMap<String, Integer>(); public void execute(Tuple tuple, BasicOutputCollector collector) {

String word = tuple.getStringByField("word");

Integer count = tuple.getIntegerByField("count");

this.counts.put(word, count);

} public void declareOutputFields(OutputFieldsDeclarer declarer) { } @Override

public void cleanup() {

System.out.println("-----------------FINAL COUNTS START-----------------------");

List<String> keys = new ArrayList<String>();

keys.addAll(this.counts.keySet());

Collections.sort(keys); for(String key : keys){

System.out.println(key + " : " + this.counts.get(key));

} System.out.println("-----------------FINAL COUNTS END-----------------------");

} } public static void main(String[] args) throws Exception { TopologyBuilder builder = new TopologyBuilder(); builder.setSpout("spout", new RandomSentenceSpout(), 5); //ShuffleGrouping:随机选择一个Task来发送。

builder.setBolt("split", new SplitSentence(), 8).shuffleGrouping("spout");

//FiledGrouping:根据Tuple中Fields来做一致性hash,相同hash值的Tuple被发送到相同的Task。

builder.setBolt("count", new WordCount(), 12).fieldsGrouping("split", new Fields("word"));

//GlobalGrouping:所有的Tuple会被发送到某个Bolt中的id最小的那个Task。

builder.setBolt("report", new WordReport(), 6).globalGrouping("count"); Config conf = new Config();

conf.setDebug(true); if (args != null && args.length > 0) {

conf.setNumWorkers(3); StormSubmitter.submitTopologyWithProgressBar(args[0], conf, builder.createTopology());

}

else {

conf.setMaxTaskParallelism(3); LocalCluster cluster = new LocalCluster();

cluster.submitTopology("word-count", conf, builder.createTopology()); Thread.sleep(20000); cluster.shutdown();

}

}

}

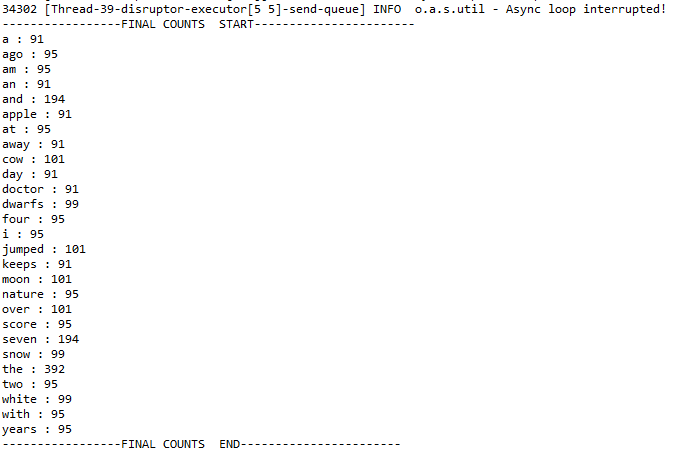

二、执行效果

Storm入门(四)WordCount示例的更多相关文章

- 【第四篇】ASP.NET MVC快速入门之完整示例(MVC5+EF6)

目录 [第一篇]ASP.NET MVC快速入门之数据库操作(MVC5+EF6) [第二篇]ASP.NET MVC快速入门之数据注解(MVC5+EF6) [第三篇]ASP.NET MVC快速入门之安全策 ...

- 《Storm入门》中文版

本文翻译自<Getting Started With Storm>译者:吴京润 编辑:郭蕾 方腾飞 本书的译文仅限于学习和研究之用,没有原作者和译者的授权不能用于商业用途. 译者序 ...

- 【原创】NIO框架入门(四):Android与MINA2、Netty4的跨平台UDP双向通信实战

概述 本文演示的是一个Android客户端程序,通过UDP协议与两个典型的NIO框架服务端,实现跨平台双向通信的完整Demo. 当前由于NIO框架的流行,使得开发大并发.高性能的互联网服务端成为可能. ...

- Storm系列(二):使用Csharp创建你的第一个Storm拓扑(wordcount)

WordCount在大数据领域就像学习一门语言时的hello world,得益于Storm的开源以及Storm.Net.Adapter,现在我们也可以像Java或Python一样,使用Csharp创建 ...

- 脑残式网络编程入门(四):快速理解HTTP/2的服务器推送(Server Push)

本文原作者阮一峰,作者博客:ruanyifeng.com. 1.前言 新一代HTTP/2 协议的主要目的是为了提高网页性能(有关HTTP/2的介绍,请见<从HTTP/0.9到HTTP/2:一文读 ...

- 大数据入门第七天——MapReduce详解(一)入门与简单示例

一.概述 1.map-reduce是什么 Hadoop MapReduce is a software framework for easily writing applications which ...

- hadoop学习第三天-MapReduce介绍&&WordCount示例&&倒排索引示例

一.MapReduce介绍 (最好以下面的两个示例来理解原理) 1. MapReduce的基本思想 Map-reduce的思想就是“分而治之” Map Mapper负责“分”,即把复杂的任务分解为若干 ...

- MapReduce 编程模型 & WordCount 示例

学习大数据接触到的第一个编程思想 MapReduce. 前言 之前在学习大数据的时候,很多东西很零散的做了一些笔记,但是都没有好好去整理它们,这篇文章也是对之前的笔记的整理,或者叫输出吧.一来是加 ...

- WordCount示例深度学习MapReduce过程(1)

我们都安装完Hadoop之后,按照一些案例先要跑一个WourdCount程序,来测试Hadoop安装是否成功.在终端中用命令创建一个文件夹,简单的向两个文件中各写入一段话,然后运行Hadoop,Wou ...

随机推荐

- C语言中的位段(位域)知识

在结构体或类中,为了节省成员的存储空间,可以定义某些由位组成的字段,这些字段可以不需要以byte为单位. 这些不同位长度的字段实际存储于一个或多个整形变量.位段成员必须声明为int, signed i ...

- 得力D991CN Plus计算器评测(全程对比卡西欧fx-991CN X)

得力在2018年出了一款高仿卡西欧fx-991CN X中文版的计算器,型号为D991CN Plus,在实现同样功能的前提下,网销价格是卡西欧的三分之一左右.但是这款计算器与卡西欧正版计算器差距是大是小 ...

- css+js调整当前界面背景音量

展示效果 html代码: <div> <audio id="audio" controls src="https://dbl.5518pay.com/r ...

- Linux下单机实现Zookeeper集群

安装配置JAVA开发环境 下载ZOOKEEPER zookeeper下载地址 在下载的zookeeper目录里创建3个文件,zk1,zk2,zk3,用于存放每个集群的数据文件. 并在三个目录下创建da ...

- 震惊!计算机连0.3+0.6都算不对?浅谈IEEE754浮点数算数标准

>>> 0.3+0.6 0.8999999999999999 >>> 1-0.9 0.09999999999999998 >>> 0.1+0.1+ ...

- 如何解决Mac无法读取外置硬盘问题?

在mac中插入一款硬盘设备后发现硬盘无法显示在mac中,导致mac无法读取设备,遇到这种问题时需要如何解决? 首先,硬盘不能正常在mac上显示可能是硬盘出现了错误无法使用,也可能是硬盘的文件系统格式不 ...

- Elasticsearch的基本概念和指标

背景 在13年的时候,我开始负责整个公司的搜索引擎.嗯……,不是很牛的那种大项目负责人.而是整个搜索就我一个人做.哈哈. 后来跳槽之后,所经历的团队都用Elasticsearch,基本上和缓存一样,是 ...

- 带你精读你不知道的Javasript(上)(一)

斌果在这几天看了下你不知道的js这本书,这本书讲的东西还是挺不错的,其中有很多平时我压根没接触到的概念和方法.借此也可以丰富一下我对js的了解. 第一部分 第一章 作用域是什么? 1.程序中一点源代码 ...

- github仓库的使用

业来源:https://edu.cnblogs.com/campus/gzcc/GZCC-16SE1/homework/2103 远程仓库地址是:https://github.com/BinGuo66 ...

- SQL之case when then用法(用于分类统计)

case具有两种格式.简单case函数和case搜索函数. --简单case函数 case sex when '1' then '男' when '2' then '女’ else '其他' end ...