Developing WordCount with HBase and MapReduce

Code:

import java.io.IOException;

import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Mapper;

/**
 * Test data: hello world by world
 *
 * @author a
 */
public class DefinedMapper extends Mapper<LongWritable, Text, Text, LongWritable> {

    @Override
    protected void map(LongWritable key, Text value, Context context)
            throws IOException, InterruptedException {
        if (value == null) {
            return;
        }
        // Split the input line on single spaces.
        String[] words = value.toString().split(" ");
        // Only lines with exactly four words (the shape of the test data) are counted.
        if (words.length == 4) {
            for (String word : words) {
                // Emit (word, 1) for every word on the line.
                context.write(new Text(word), new LongWritable(1L));
            }
        }
    }
}
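The splitting step of `DefinedMapper` can be tried without a cluster. The following is a minimal sketch in plain Java, assuming the same single-space split and the same four-token guard as the mapper; the class `MapperSplitSketch` and the method `emittedWords` are hypothetical names used only for illustration and are not part of the job.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical helper: mirrors the splitting logic of DefinedMapper without Hadoop.
class MapperSplitSketch {

    // Returns the words the mapper would emit for one input line (each with a count of 1).
    static List<String> emittedWords(String line) {
        List<String> words = new ArrayList<>();
        if (line == null) {
            return words;
        }
        String[] tokens = line.split(" ");
        // Same guard as the mapper: only lines with exactly four tokens are emitted.
        if (tokens.length == 4) {
            for (String token : tokens) {
                words.add(token);
            }
        }
        return words;
    }

    public static void main(String[] args) {
        // The test line from the mapper's Javadoc yields four words.
        System.out.println(emittedWords("hello world by world"));
        // A line with a different number of tokens yields nothing.
        System.out.println(emittedWords("hello world"));
    }
}
```

Because of the guard, any input line that does not contain exactly four space-separated tokens contributes nothing to the counts. That matches the test data, but it would need to be relaxed for general text.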

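import java.io.IOException;

import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.mapreduce.TableReducer;
import org.apache.hadoop.hbase.util.Bytes;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;

public class DefinedReduce extends TableReducer<Text, LongWritable, Text> {

    @Override
    protected void reduce(Text key, Iterable<LongWritable> values, Context context)
            throws IOException, InterruptedException {
        // Sum the counts emitted by the mapper for this word.
        long num = 0L;
        for (LongWritable count : values) {
            num += count.get();
        }
        // The word itself is the row key.
        Put put = new Put(Bytes.toBytes(key.toString()));
        // Column family "context", qualifier "count"; the count is stored as a string.
        // Put.add is the older API used in this post; newer HBase versions use addColumn.
        put.add(Bytes.toBytes("context"), Bytes.toBytes("count"), Bytes.toBytes(String.valueOf(num)));
        context.write(key, put);
    }
}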
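The reducer adds up the counts for one word and writes the total as the text of the number, not as an 8-byte long. The sketch below reproduces that step in plain Java so it can be run without HBase. The class `ReducerSumSketch` and its methods are hypothetical names, and UTF-8 is assumed for the encoding (the original code relies on the platform default charset, which gives the same bytes for digits on common platforms).

```java
import java.nio.charset.StandardCharsets;
import java.util.Arrays;
import java.util.List;

// Hypothetical helper: mirrors the summing and encoding done in DefinedReduce.
class ReducerSumSketch {

    // Adds up the per-occurrence counts received for one word.
    static long sum(List<Long> counts) {
        long total = 0L;
        for (long count : counts) {
            total += count;
        }
        return total;
    }

    // Encodes the total as decimal text, the way the reducer fills the "count" cell.
    static byte[] encodeAsText(long total) {
        return String.valueOf(total).getBytes(StandardCharsets.UTF_8);
    }

    public static void main(String[] args) {
        // "world" occurs twice in the test line, so the reducer receives two counts of 1.
        long total = sum(Arrays.asList(1L, 1L));
        byte[] cell = encodeAsText(total);
        System.out.println("total = " + total);
        System.out.println("cell length in bytes = " + cell.length);
    }
}
```

Storing the count as text keeps the cell readable when the table is scanned, at the cost of having to parse it back into a number before doing arithmetic on it.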

package com.zhang.hbaseandmapreduce;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.Path;
import org.apache.hadoop.hbase.HBaseConfiguration;
import org.apache.hadoop.hbase.HColumnDescriptor;
import org.apache.hadoop.hbase.HTableDescriptor;
import org.apache.hadoop.hbase.client.HBaseAdmin;
import org.apache.hadoop.hbase.mapreduce.TableOutputFormat;
import org.apache.hadoop.io.LongWritable;
import org.apache.hadoop.io.Text;
import org.apache.hadoop.mapreduce.Job;
import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

public class HBaseAndMapReduce {

    /** Creates the output table with the column family "context" unless it already exists. */
    public static void createTable(String tableName) {
        Configuration conf = HBaseConfiguration.create();
        HTableDescriptor htable = new HTableDescriptor(tableName);
        HColumnDescriptor hcol = new HColumnDescriptor("context");
        // try-with-resources closes the admin connection when done.
        try (HBaseAdmin admin = new HBaseAdmin(conf)) {
            if (admin.tableExists(tableName)) {
                System.out.println(tableName + " already exists");
                return;
            }
            htable.addFamily(hcol);
            admin.createTable(htable);
            System.out.println(tableName + " created successfully");
        } catch (IOException e) {
            e.printStackTrace();
        }
    }

    public static void main(String[] args) {
        String tableName = "workCount";
        Configuration conf = new Configuration();
        // Table that TableOutputFormat writes to, and the ZooKeeper quorum of the HBase cluster.
        conf.set(TableOutputFormat.OUTPUT_TABLE, tableName);
        conf.set("hbase.zookeeper.quorum", "192.168.177.124:2181");
        createTable(tableName);
        try {
            Job job = new Job(conf);
            job.setJobName("hbaseAndMapReduce");
            job.setJarByClass(HBaseAndMapReduce.class); // class used to locate the job jar
            // No separate map output classes are set, so these also define the mapper's output types.
            job.setOutputKeyClass(Text.class);          // mapper output key type
            job.setOutputValueClass(LongWritable.class); // mapper output value type
            job.setMapperClass(DefinedMapper.class);
            job.setReducerClass(DefinedReduce.class);
            job.setInputFormatClass(TextInputFormat.class);
            job.setOutputFormatClass(TableOutputFormat.class);
            FileInputFormat.addInputPath(job, new Path("/tmp/dataTest/data.text"));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        } catch (Exception e) {
            e.printStackTrace();
        }
    }
}

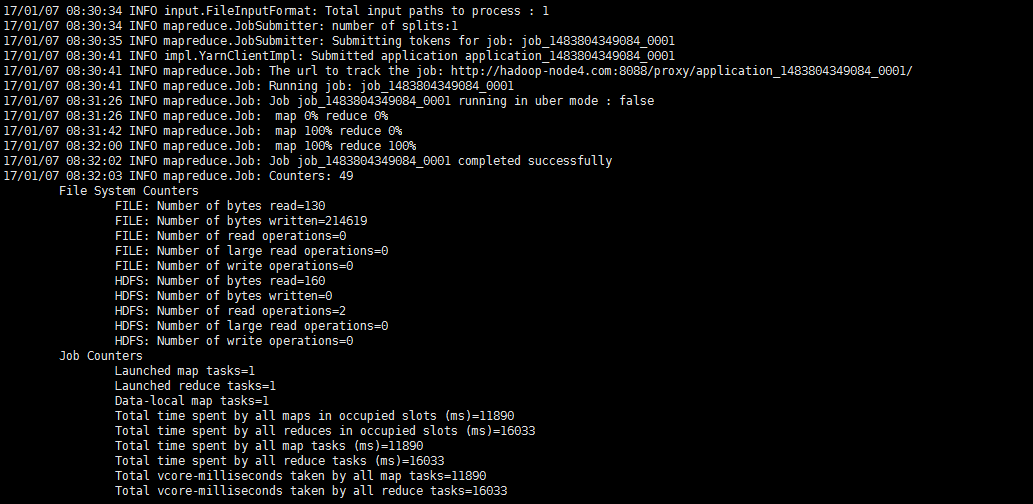

[root@node4 Desktop]# hadoop jar hbaseAndMapR.jar com.zhang.hbaseandmapreduce.HBaseAndMapReduce

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(LongWritable.class);

(1)2017-01-07 06:53:33,493 INFO org.apache.hadoop.yarn.server.nodemanager.containermanager.monitor.ContainersMonitorImpl: Memory usage of ProcessTree 14615 for container-id container_1483797859000_0001_01_000001: 80.9 MB of 2 GB physical memory used; 1.7 GB of 4.2 GB virtual memory used

(2)Detected pause in JVM or host machine (eg GC): pause of approximately 3999ms

(3)AttemptID:attempt_1462439785370_0055_m_000001_0 Timed out after 600 secs

MB of 1 GB physical memory used; 812.3 MB of 2.1 GB virtual memory used

<property>

<name>mapred.task.timeout</name>

<value>180000</value>

</property>

# The maximum amount of heap to use. Default is left to JVM default.

export HBASE_HEAPSIZE=2G

# Uncomment below if you intend to use off heap cache. For example, to allocate 8G of

# offheap, set the value to "8G".

export HBASE_OFFHEAPSIZE=2G
