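Flink real-time project, day 06: 1. Getting late window data 2. Two-stream joins (inner join and left join, the latter with a small problem) 3. Order join case (ingesting order data into Kafka, joining the order data, joining order data with late data)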

1. Getting late data from a window

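The main idea is as follows:

- Tag the late data with an OutputTag.
- Call sideOutputLateData(lateDataTag) on the windowed stream.
- Call getSideOutput(lateDataTag) on the window's result stream. This returns the records that arrived after their window had already fired, so they can be processed further.

The code is below.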

WindowLateDataDemo

package cn._51doit.flink.day10;

import org.apache.flink.api.common.functions.MapFunction;

import org.apache.flink.api.java.tuple.Tuple;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.*;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

import org.apache.flink.util.OutputTag;

public class WindowLateDataDemo {

public static void main(String[] args) throws Exception {

StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

DataStreamSource<String> lines = env.socketTextStream("feng05", 8888);

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

// Input format: timestamp,word; the timestamp is extracted as the event time

SingleOutputStreamOperator<String> assignTimestampsAndWatermarks = lines.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<String>(Time.seconds(2)) {

@Override

public long extractTimestamp(String element) {

String stampTime = element.split(",")[0];

return Long.parseLong(stampTime);

}

});

// Split each line and map the word to (word, 1)

SingleOutputStreamOperator<Tuple2<String, Integer>> wordAndOne = assignTimestampsAndWatermarks.map(new MapFunction<String, Tuple2<String, Integer>>() {

@Override

public Tuple2<String, Integer> map(String line) throws Exception {

String word = line.split(",")[1];

return Tuple2.of(word, 1);

}

});

// Key by the word (field 0)

KeyedStream<Tuple2<String, Integer>, Tuple> keyed = wordAndOne.keyBy(0);

OutputTag<Tuple2<String, Integer>> lateDataTag = new OutputTag<Tuple2<String, Integer>>("late-data"){};

// Assign 5-second tumbling event-time windows and compute the sums

WindowedStream<Tuple2<String, Integer>, Tuple, TimeWindow> window = keyed.window(TumblingEventTimeWindows.of(Time.seconds(5)));

SingleOutputStreamOperator<Tuple2<String, Integer>> summed = window.sideOutputLateData(lateDataTag).sum(1);

// Get the side output stream that holds the late data

DataStream<Tuple2<String, Integer>> lateDataStream = summed.getSideOutput(lateDataTag);

summed.print("on-time data: ");

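// Merge the late records (each a count of 1) into the window results and keep a running total per word

SingleOutputStreamOperator<Tuple2<String, Integer>> result = summed.union(lateDataStream).keyBy(0).sum(1);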

result.print();

env.execute();

}

}
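The next example is a plain-Java simulation with no Flink dependency; the class LateDataSimulation is a hypothetical name. It makes the rule behind sideOutputLateData concrete under these assumptions:

- Tumbling windows with offset 0.
- The same watermark formula as BoundedOutOfOrdernessTimestampExtractor: largest timestamp seen minus the allowed out-of-orderness.
- No allowed lateness.

A record counts as late when the last timestamp of its window is already covered by the watermark. One simplification: the watermark is recomputed after every record, whereas Flink emits it periodically. In a real run, whether a record is late therefore also depends on arrival timing.

```java
import java.util.ArrayList;
import java.util.List;

/** Plain-Java model (no Flink) of how an event-time tumbling window classifies late records. */
class LateDataSimulation {

    /** Start of the tumbling window that contains ts (offset 0, non-negative timestamps). */
    static long windowStart(long ts, long windowSize) {
        return ts - (ts % windowSize);
    }

    /** For each timestamp in arrival order: would it go to the late-data side output? */
    static List<Boolean> lateFlags(long[] timestamps, long windowSize, long maxOutOfOrderness) {
        List<Boolean> flags = new ArrayList<>();
        // Same starting value as BoundedOutOfOrdernessTimestampExtractor, so the first watermark is Long.MIN_VALUE
        long maxTs = Long.MIN_VALUE + maxOutOfOrderness;
        for (long ts : timestamps) {
            long watermark = maxTs - maxOutOfOrderness;
            long windowMaxTimestamp = windowStart(ts, windowSize) + windowSize - 1;
            // Late if the window's last timestamp is already covered by the watermark (window has fired)
            flags.add(windowMaxTimestamp <= watermark);
            maxTs = Math.max(maxTs, ts);
        }
        return flags;
    }

    public static void main(String[] args) {
        // Settings of WindowLateDataDemo: 5 s windows, 2 s out-of-orderness
        long[] input = {1000L, 7000L, 3000L, 6000L};
        System.out.println(lateFlags(input, 5000L, 2000L)); // [false, false, true, false]
    }
}
```

With the demo's settings, only the 3000 record is late. After 7000 the watermark is 5000, which already covers the window [0, 5000). The 6000 record belongs to [5000, 10000), which is still open.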

2. Two-stream join

Background:

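Join, CoGroup and CoFlatMap can all turn two data streams into a single one:

- Join and CoGroup both pair up records according to a specified condition. Join emits only the pairs that matched, while CoGroup produces output whether or not there is a match.
- CoFlatMap does no matching at all; it simply receives the inputs of the two streams separately.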

In short, two data streams are joined much like tables in MySQL, and the same variants exist: inner join, left join, right join, full join, and so on. Concrete examples follow.
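The difference between the variants can be shown without Flink. For one key and one window, a CoGroupFunction receives two groups of records, either of which may be empty. The plain-Java sketch below uses a hypothetical class CoGroupJoinKinds, reduces records to strings and marks the missing side with "null". It emits matched pairs the way Join does and adds padded rows for the outer variants.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** What a coGroup sees for ONE key in ONE window, and how each join variant treats empty groups. */
class CoGroupJoinKinds {

    static List<String> join(List<String> left, List<String> right, boolean keepLeft, boolean keepRight) {
        List<String> out = new ArrayList<>();
        // Matched pairs: this is all an inner join (Flink's Join) produces
        for (String l : left) {
            for (String r : right) {
                out.add(l + "|" + r);
            }
        }
        // Left outer: left records without a partner are kept with a null right side
        if (keepLeft && right.isEmpty()) {
            for (String l : left) {
                out.add(l + "|null");
            }
        }
        // Right outer: right records without a partner are kept with a null left side
        if (keepRight && left.isEmpty()) {
            for (String r : right) {
                out.add("null|" + r);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        List<String> left = Arrays.asList("a,1", "a,2");
        List<String> empty = new ArrayList<>();
        System.out.println(join(left, Arrays.asList("a,hangzhou"), false, false)); // inner join
        System.out.println(join(left, empty, true, false));                         // left join
        System.out.println(join(empty, Arrays.asList("b,beijing"), true, true));    // full join
    }
}
```

Setting both flags gives a full outer join. A right join is the left join with the arguments swapped.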

- inner join

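The example proceeds in these steps:

1. Obtain the two data streams and assign timestamps and watermarks to each.
2. Call join to get a JoinedStreams instance.
3. Call where and equalTo on it, each with a user-defined KeySelector, to match records with equal keys.
4. Define the windows and process the records in each window.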

Note: only records that have the same key and fall into the same window are joined.
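The condition can be checked by hand with a plain-Java model. The hypothetical class WindowJoinCondition pairs records only when both the key and the tumbling-window start match. It uses the data of StreamDataSourceA/StreamDataSourceB and the 10-second windows of the join demo. The model assumes every record arrives on time. Under that assumption b would be joined as well, which is not what the actual run result shows.

```java
import java.util.ArrayList;
import java.util.List;

/** Plain-Java model of a tumbling-window inner join: same key AND same window. Late data is ignored. */
class WindowJoinCondition {

    static long windowStart(long ts, long size) {
        return ts - (ts % size);
    }

    /** Each record is {key, value, timestamp}; returns "key,leftValue,rightValue" rows. */
    static List<String> innerJoin(String[][] left, String[][] right, long size) {
        List<String> out = new ArrayList<>();
        for (String[] l : left) {
            for (String[] r : right) {
                boolean sameKey = l[0].equals(r[0]);
                boolean sameWindow = windowStart(Long.parseLong(l[2]), size) == windowStart(Long.parseLong(r[2]), size);
                if (sameKey && sameWindow) {
                    out.add(l[0] + "," + l[1] + "," + r[1]);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Data of StreamDataSourceA (left) and StreamDataSourceB (right); 10 s windows
        String[][] left = {
            {"a", "1", "1000000050000"}, {"a", "2", "1000000054000"}, {"a", "3", "1000000079900"},
            {"a", "4", "1000000115000"}, {"b", "5", "1000000100000"}, {"b", "6", "1000000108000"}};
        String[][] right = {{"a", "hangzhou", "1000000059000"}, {"b", "beijing", "1000000105000"}};
        System.out.println(innerJoin(left, right, 10_000L));
    }
}
```

("a", "3") and ("a", "4") have no partner because no right-stream a record falls into their windows.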

Key point:

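A window operator runs as several parallel instances (partitions). Its event time is the minimum of the watermarks of all its inputs, so a window fires only after the watermark of every input has reached the window's end. To keep the tests below easy to follow, the parallelism is set to 1.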
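The hypothetical class MinWatermark below is a minimal plain-Java sketch of that rule. The operator's event time is the minimum of the latest watermarks of its inputs. An event-time tumbling window fires once that minimum reaches the window's last timestamp (end - 1). The sketch ignores any idleness handling.

```java
/** Plain-Java sketch: an operator's event time is the minimum watermark over its inputs. */
class MinWatermark {

    static long combinedWatermark(long[] channelWatermarks) {
        long min = Long.MAX_VALUE;
        for (long wm : channelWatermarks) {
            min = Math.min(min, wm);
        }
        return min;
    }

    /** A tumbling window [start, windowEnd) fires when the combined watermark reaches windowEnd - 1. */
    static boolean windowFires(long windowEnd, long[] channelWatermarks) {
        return combinedWatermark(channelWatermarks) >= windowEnd - 1;
    }

    public static void main(String[] args) {
        long windowEnd = 5000L; // window [0, 5000)
        System.out.println(windowFires(windowEnd, new long[]{6000L, 3000L})); // false: one input lags behind
        System.out.println(windowFires(windowEnd, new long[]{6000L, 5200L})); // true
    }
}
```

This is why, with a parallelism greater than 1, a parallel instance that receives no data can hold the minimum back and keep the window from firing.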

StreamDataSourceA

package cn._51doit.flink.day10;

import org.apache.flink.api.java.tuple.Tuple3;

import org.apache.flink.streaming.api.functions.source.RichParallelSourceFunction;

public class StreamDataSourceA extends RichParallelSourceFunction<Tuple3<String, String, Long>> {

private volatile boolean running = true;

@Override

public void run(SourceContext<Tuple3<String, String, Long>> ctx) throws InterruptedException {

// Data prepared in advance

Tuple3[] elements = new Tuple3[]{

Tuple3.of("a", "1", 1000000050000L), //[50000 - 60000)

Tuple3.of("a", "2", 1000000054000L), //[50000 - 60000)

Tuple3.of("a", "3", 1000000079900L), //[70000 - 80000)

Tuple3.of("a", "4", 1000000115000L), //[110000 - 120000) // 115000 - 5001 = 109999 >= 109999

Tuple3.of("b", "5", 1000000100000L), //[100000 - 110000)

Tuple3.of("b", "6", 1000000108000L) //[100000 - 110000)

};

int count = 0;

while (running && count < elements.length) {

// Emit the record

ctx.collect(new Tuple3<>((String) elements[count].f0, (String) elements[count].f1, (Long) elements[count].f2));

count++;

Thread.sleep(1000);

}

//Thread.sleep(100000000);

}

@Override

public void cancel() {

running = false;

}

}

StreamDataSourceB

package cn._51doit.flink.day10;

import org.apache.flink.api.java.tuple.Tuple3;

import org.apache.flink.streaming.api.functions.source.RichParallelSourceFunction;

public class StreamDataSourceB extends RichParallelSourceFunction<Tuple3<String, String, Long>> {

private volatile boolean running = true;

@Override

public void run(SourceContext<Tuple3<String, String, Long>> ctx) throws Exception {

// a ,1 hangzhou

Tuple3[] elements = new Tuple3[]{

Tuple3.of("a", "hangzhou", 1000000059000L), //[50000, 60000)

Tuple3.of("b", "beijing", 1000000105000L), //[100000, 110000)

};

int count = 0;

while (running && count < elements.length) {

// Emit the record

ctx.collect(new Tuple3<>((String) elements[count].f0, (String) elements[count].f1, (long) elements[count].f2));

count++;

Thread.sleep(1000);

}

//Thread.sleep(100000000);

}

@Override

public void cancel() {

running = false;

}

}

FlinkTumblingWindowsInnerJoinDemo

package cn._51doit.flink.day10;

import org.apache.flink.api.common.functions.JoinFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple3;

import org.apache.flink.api.java.tuple.Tuple5;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.JoinedStreams;

import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

public class FlinkTumblingWindowsInnerJoinDemo {

public static void main(String[] args) throws Exception {

int windowSize = 10;

long delay = 5001L;

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Use event time

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

// Set parallelism 1 on the environment, so every DataStream created from it runs with parallelism 1

env.setParallelism(1);

// Set up the sources

// First stream (left stream)

DataStream<Tuple3<String, String, Long>> leftSource = env.addSource(new StreamDataSourceA()).name("Demo SourceA");

// Second stream (right stream)

DataStream<Tuple3<String, String, Long>> rightSource = env.addSource(new StreamDataSourceB()).name("Demo SourceB");

// Assign timestamps and watermarks to leftSource

// ("a", "1", 1000)

SingleOutputStreamOperator<Tuple3<String, String, Long>> leftStream = leftSource.assignTimestampsAndWatermarks(

new BoundedOutOfOrdernessTimestampExtractor<Tuple3<String, String, Long>>(Time.milliseconds(delay)) {

@Override

public long extractTimestamp(Tuple3<String, String, Long> element) {

return element.f2;

}

});

// Assign timestamps and watermarks to rightSource

// ("a", "hangzhou", 6000)

SingleOutputStreamOperator<Tuple3<String, String, Long>> rightStream = rightSource.assignTimestampsAndWatermarks(

new BoundedOutOfOrdernessTimestampExtractor<Tuple3<String, String, Long>>(Time.milliseconds(delay)) {

@Override

public long extractTimestamp(Tuple3<String, String, Long> element) {

return element.f2;

}

});

// Join the two streams

JoinedStreams<Tuple3<String, String, Long>, Tuple3<String, String, Long>> joinedStream = leftStream.join(rightStream);

joinedStream

.where(new LeftSelectKey())

.equalTo(new RightSelectKey())

.window(TumblingEventTimeWindows.of(Time.seconds(windowSize)))

.apply(new JoinFunction<Tuple3<String, String, Long>, Tuple3<String, String, Long>, Tuple5<String, String, String, Long, Long>>() {

// Called for each pair whose keys are equal and that fall into the same window

@Override

public Tuple5<String, String, String, Long, Long> join(Tuple3<String, String, Long> first, Tuple3<String, String, Long> second) throws Exception {

// a, 1, "hangzhou", 1000001000, 1000006000

return new Tuple5<>(first.f0, first.f1, second.f1, first.f2, second.f2);

}

}).print();

env.execute();

}

public static class LeftSelectKey implements KeySelector<Tuple3<String, String, Long>, String> {

@Override

public String getKey(Tuple3<String, String, Long> w) throws Exception {

return w.f0;

}

}

public static class RightSelectKey implements KeySelector<Tuple3<String, String, Long>, String> {

@Override

public String getKey(Tuple3<String, String, Long> w) {

return w.f0;

}

} }

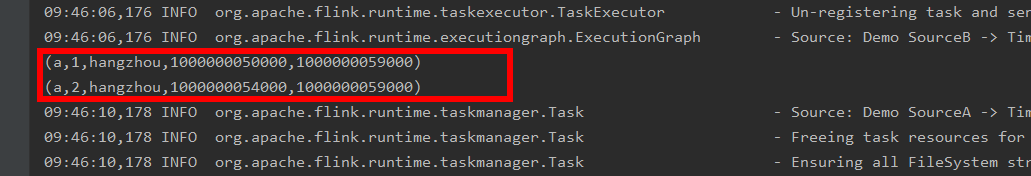

Run result

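Why was b not joined?

1. StreamDataSourceA emits ("a", "4", 1000000115000L) before the two b records. This moves the left stream's watermark to 1000000115000 - 5001 = 1000000109999, which is exactly the last timestamp of the window [1000000100000, 1000000110000). The comment on that record refers to this.
2. StreamDataSourceB has only two records, so its source has most likely finished by then. A finished source advances its watermark to Long.MAX_VALUE.
3. The join operator uses the smaller of the two watermarks, so the window fires while it contains only ("b", "beijing"). An inner join produces nothing for that window.
4. ("b", "5") and ("b", "6") arrive after their window has fired and are dropped as late data.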
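The explanation can be replayed in plain Java with the hypothetical class WhyBIsNotJoined. It uses the left stream in StreamDataSourceA order, 10-second windows and a 5001 ms delay. Two assumptions are stated in the code: the watermark is updated after every record (Flink does this periodically), and the right stream's watermark is passed in as a parameter. Long.MAX_VALUE models the already-finished right source.

```java
import java.util.Arrays;

/** Replays the left stream of the inner-join demo and reports which records arrive after their window fired. */
class WhyBIsNotJoined {

    static boolean[] lateFlags(long[] leftTimestamps, long windowSize, long delay, long rightWatermark) {
        boolean[] late = new boolean[leftTimestamps.length];
        long leftWatermark = Long.MIN_VALUE;
        long maxTs = Long.MIN_VALUE;
        for (int i = 0; i < leftTimestamps.length; i++) {
            long ts = leftTimestamps[i];
            // The join operator's event time is the minimum of both inputs' watermarks
            long operatorWatermark = Math.min(leftWatermark, rightWatermark);
            long windowMax = ts - (ts % windowSize) + windowSize - 1;
            late[i] = windowMax <= operatorWatermark;
            // Assumption: watermark recomputed after each record (BoundedOutOfOrderness formula)
            maxTs = Math.max(maxTs, ts);
            leftWatermark = maxTs - delay;
        }
        return late;
    }

    public static void main(String[] args) {
        long[] left = {1000000050000L, 1000000054000L, 1000000079900L,
                       1000000115000L, 1000000100000L, 1000000108000L};
        // Right source assumed finished: its watermark is Long.MAX_VALUE
        System.out.println(Arrays.toString(lateFlags(left, 10_000L, 5001L, Long.MAX_VALUE)));
        // [false, false, false, false, true, true]
    }
}
```

If the right source were still running with its watermark at 1000000099999, the same replay marks no b record as late. The drop therefore depends on the right source finishing early.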

- left join

FlinkTumblingWindowsLeftJoinDemo

package cn._51doit.flink.day10;

import org.apache.flink.api.common.functions.CoGroupFunction;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple3;

import org.apache.flink.api.java.tuple.Tuple5;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.util.Collector;

public class FlinkTumblingWindowsLeftJoinDemo {

public static void main(String[] args) throws Exception {

int windowSize = 10;

long delay = 5000L;

final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

env.setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

env.setParallelism(1);

// Set up the sources

DataStream<Tuple3<String, String, Long>> leftSource = env.addSource(new StreamDataSourceA()).name("Demo SourceA");

DataStream<Tuple3<String, String, Long>> rightSource = env.addSource(new StreamDataSourceB()).name("Demo SourceB");

// Assign timestamps and watermarks

DataStream<Tuple3<String, String, Long>> leftStream = leftSource.assignTimestampsAndWatermarks(

new BoundedOutOfOrdernessTimestampExtractor<Tuple3<String, String, Long>>(Time.milliseconds(delay)) {

@Override

public long extractTimestamp(Tuple3<String, String, Long> element) {

return element.f2;

}

}

);

DataStream<Tuple3<String, String, Long>> rightStream = rightSource.assignTimestampsAndWatermarks(

new BoundedOutOfOrdernessTimestampExtractor<Tuple3<String, String, Long>>(Time.milliseconds(delay)) {

@Override

public long extractTimestamp(Tuple3<String, String, Long> element) {

return element.f2;

}

}

);

int parallelism1 = leftStream.getParallelism();

int parallelism2 = rightStream.getParallelism();

// Left join implemented with coGroup

leftStream.coGroup(rightStream)

.where(new LeftSelectKey())

.equalTo(new RightSelectKey())

.window(TumblingEventTimeWindows.of(Time.seconds(windowSize)))

.apply(new LeftJoin())

.print();

env.execute("TimeWindowDemo");

}

public static class LeftJoin implements CoGroupFunction<Tuple3<String, String, Long>, Tuple3<String, String, Long>, Tuple5<String, String, String, Long, Long>> {

// coGroup passes in the left-stream and right-stream records that share a key and fall into the same window (either side may be empty)

@Override

public void coGroup(

Iterable<Tuple3<String, String, Long>> leftElements, Iterable<Tuple3<String, String, Long>> rightElements, Collector<Tuple5<String, String, String, Long, Long>> out) {

// leftElements are the records from the left stream

for (Tuple3<String, String, Long> leftElem : leftElements) {

boolean hadElements = false;

// If the left record has matches, rightElements is not empty

for (Tuple3<String, String, Long> rightElem : rightElements) {

// Emit the joined pair

out.collect(new Tuple5<>(leftElem.f0, leftElem.f1, rightElem.f1, leftElem.f2, rightElem.f2));

hadElements = true;

}

if (!hadElements) {

// No match: fill the right-hand fields with placeholder values

out.collect(new Tuple5<>(leftElem.f0, leftElem.f1, "null", leftElem.f2, -1L));

}

}

}

}

public static class LeftSelectKey implements KeySelector<Tuple3<String, String, Long>, String> {

@Override

public String getKey(Tuple3<String, String, Long> w) {

return w.f0;

}

}

public static class RightSelectKey implements KeySelector<Tuple3<String, String, Long>, String> {

@Override

public String getKey(Tuple3<String, String, Long> w) {

return w.f0;

}

} }

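The approach is similar to the inner join, except that the coGroup operator is used. Left-stream records that arrive after their window has already fired are still dropped as late data; in this example that applies to the b records, for the same reason as in the inner join. Such records therefore do not even appear with the placeholder values "null" and -1.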

3. Order join case

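Almost every kind of system has order tables. They come as an order main table and an order detail table.

Order main table: order ID, order status, total order amount, order time, user ID

Order detail table: ID of the order in the main table, product ID, product category ID, unit price, quantity

Goal: compute the transaction amount of each category. This needs:

- an order status of completed (main table)

- the product category ID (detail table)

- the product amount, i.e. unit price * quantity (detail table)

For example, an order placed on JD.com or Taobao:

o1000,paid,600,2020-06-29 19:42:00,feng

and its detail rows (product ID, unit price, quantity, category):

p1, 100, 1, food

p1, 200, 1, food

p1, 300, 1, food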
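The metric described above boils down to a filter plus a grouped sum over the joined rows. The minimal plain-Java sketch below makes two assumptions that are not the real schema of the tables. It uses a hypothetical Item class holding the joined fields, and the status string "paid" stands for a completed order.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Turnover per category = sum(unit price * quantity) over detail rows of completed orders. */
class CategoryTurnover {

    /** Hypothetical joined row: detail fields plus the status taken from the main table. */
    static class Item {
        final String category;
        final double price;
        final int quantity;
        final String orderStatus;

        Item(String category, double price, int quantity, String orderStatus) {
            this.category = category;
            this.price = price;
            this.quantity = quantity;
            this.orderStatus = orderStatus;
        }
    }

    static Map<String, Double> turnover(Item[] items) {
        Map<String, Double> result = new LinkedHashMap<>();
        for (Item it : items) {
            if (!"paid".equals(it.orderStatus)) {
                continue; // only completed orders count
            }
            result.merge(it.category, it.price * it.quantity, Double::sum);
        }
        return result;
    }

    public static void main(String[] args) {
        // The three detail rows of order o1000 from the example above
        Item[] items = {
            new Item("food", 100, 1, "paid"),
            new Item("food", 200, 1, "paid"),
            new Item("food", 300, 1, "paid")};
        System.out.println(turnover(items)); // {food=600.0}
    }
}
```

For the example order this gives 600 for the food category, matching the order's total amount. The status filter would skip an unpaid order.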

3.1 Joining the order data

Utility class for reading data from Kafka topics

package cn._51doit.flink.day10;

import org.apache.flink.api.common.restartstrategy.RestartStrategies;

import org.apache.flink.api.common.serialization.DeserializationSchema;

import org.apache.flink.api.common.time.Time;

import org.apache.flink.api.java.utils.ParameterTool;

import org.apache.flink.runtime.state.filesystem.FsStateBackend;

import org.apache.flink.streaming.api.CheckpointingMode;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.environment.CheckpointConfig;

import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

import org.apache.flink.streaming.connectors.kafka.FlinkKafkaConsumer;

import java.util.Arrays;

import java.util.List;

import java.util.Properties;

public class FlinkUtilsV2 {

private static StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

public static <T> DataStream<T> createKafkaDataStream(ParameterTool parameters, Class<? extends DeserializationSchema<T>> clazz) throws Exception {

String topics = parameters.getRequired("kafka.topics");

String groupId = parameters.getRequired("group.id");

return createKafkaDataStream(parameters, topics, groupId, clazz);

}

public static <T> DataStream<T> createKafkaDataStream(ParameterTool parameters, String topics, Class<? extends DeserializationSchema<T>> clazz) throws Exception {

String groupId = parameters.getRequired("group.id");

return createKafkaDataStream(parameters, topics, groupId, clazz);

}

public static <T> DataStream<T> createKafkaDataStream(ParameterTool parameters, String topics, String groupId, Class<? extends DeserializationSchema<T>> clazz) throws Exception {

// Register the ParameterTool as global job parameters

env.getConfig().setGlobalJobParameters(parameters);

// Enable checkpointing

env.enableCheckpointing(parameters.getLong("checkpoint.interval", 10000L), CheckpointingMode.EXACTLY_ONCE);

// Restart strategy

env.setRestartStrategy(RestartStrategies.fixedDelayRestart(parameters.getInt("restart.times", 10), Time.seconds(5)));

// State backend

String path = parameters.get("state.backend.path");

if (path != null) {

// Ideally, configure the state backend globally in flink-conf.yaml

env.setStateBackend(new FsStateBackend(path));

}

// Keep the externalized checkpoints when the job is cancelled

env.getCheckpointConfig().enableExternalizedCheckpoints(CheckpointConfig.ExternalizedCheckpointCleanup.RETAIN_ON_CANCELLATION);

env.getCheckpointConfig().setMaxConcurrentCheckpoints(3);

List<String> topicList = Arrays.asList(topics.split(","));

// All parameters from the properties file (including the Kafka settings) are passed to the consumer

Properties properties = parameters.getProperties();

properties.setProperty("group.id", groupId);

// Create the FlinkKafkaConsumer

FlinkKafkaConsumer<T> kafkaConsumer = new FlinkKafkaConsumer<T>(

topicList,

clazz.newInstance(),

properties

);

return env.addSource(kafkaConsumer);

}

public static StreamExecutionEnvironment getEnv() {

return env;

} }

OrderDetail

package cn._51doit.flink.day10;

import java.util.Date;

public class OrderDetail {

private Long id;

private Long order_id;

private int category_id;

private String categoryName;

private Long sku;

private Double money;

private int amount;

private Date create_time;

private Date update_time;

// Type of the database operation: INSERT or UPDATE

private String type;

public Long getId() {

return id;

}

public void setId(Long id) {

this.id = id;

}

public Long getOrder_id() {

return order_id;

}

public void setOrder_id(Long order_id) {

this.order_id = order_id;

}

public int getCategory_id() {

return category_id;

}

public void setCategory_id(int category_id) {

this.category_id = category_id;

}

public Long getSku() {

return sku;

}

public void setSku(Long sku) {

this.sku = sku;

}

public Double getMoney() {

return money;

}

public void setMoney(Double money) {

this.money = money;

}

public int getAmount() {

return amount;

}

public void setAmount(int amount) {

this.amount = amount;

}

public Date getCreate_time() {

return create_time;

}

public void setCreate_time(Date create_time) {

this.create_time = create_time;

}

public Date getUpdate_time() {

return update_time;

}

public void setUpdate_time(Date update_time) {

this.update_time = update_time;

}

public String getType() {

return type;

}

public void setType(String type) {

this.type = type;

}

public String getCategoryName() {

return categoryName;

}

public void setCategoryName(String categoryName) {

this.categoryName = categoryName;

}

@Override

public String toString() {

return "OrderDetail{" +

"id=" + id +

", order_id=" + order_id +

", category_id=" + category_id +

", categoryName='" + categoryName + '\'' +

", sku=" + sku +

", money=" + money +

", amount=" + amount +

", create_time=" + create_time +

", update_time=" + update_time +

", type='" + type + '\'' +

'}';

}

}

OrderMain

package cn._51doit.flink.day10;

import java.util.Date;

public class OrderMain {

private Long oid;

private Date create_time;

private Double total_money;

private int status;

private Date update_time;

private String province;

private String city;

// Type of the database operation: INSERT or UPDATE

private String type;

public Long getOid() {

return oid;

}

public void setOid(Long oid) {

this.oid = oid;

}

public Date getCreate_time() {

return create_time;

}

public void setCreate_time(Date create_time) {

this.create_time = create_time;

}

public Double getTotal_money() {

return total_money;

}

public void setTotal_money(Double total_money) {

this.total_money = total_money;

}

public int getStatus() {

return status;

}

public void setStatus(int status) {

this.status = status;

}

public Date getUpdate_time() {

return update_time;

}

public void setUpdate_time(Date update_time) {

this.update_time = update_time;

}

public String getProvince() {

return province;

}

public void setProvince(String province) {

this.province = province;

}

public String getCity() {

return city;

}

public void setCity(String city) {

this.city = city;

}

public String getType() {

return type;

}

public void setType(String type) {

this.type = type;

}

@Override

public String toString() {

return "OrderMain{" +

"oid=" + oid +

", create_time=" + create_time +

", total_money=" + total_money +

", status=" + status +

", update_time=" + update_time +

", province='" + province + '\'' +

", city='" + city + '\'' +

", type='" + type + '\'' +

'}';

}

}

Business logic

OrderJoin

package cn._51doit.flink.day10;

import com.alibaba.fastjson.JSON;

import com.alibaba.fastjson.JSONArray;

import com.alibaba.fastjson.JSONObject;

import org.apache.flink.api.common.functions.CoGroupFunction;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.api.java.utils.ParameterTool;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;

import org.apache.flink.streaming.api.functions.ProcessFunction;

import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.util.Collector;

public class OrderJoin {

public static void main(String[] args) throws Exception {

ParameterTool parameters = ParameterTool.fromPropertiesFile(args[0]);

// Use event time as the time characteristic

FlinkUtilsV2.getEnv().setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStream<String> orderMainLinesDataStream = FlinkUtilsV2.createKafkaDataStream(parameters, "ordermain", "g1", SimpleStringSchema.class);

DataStream<String> orderDetailLinesDataStream = FlinkUtilsV2.createKafkaDataStream(parameters, "orderdetail", "g1", SimpleStringSchema.class);

// Parse the order main records

SingleOutputStreamOperator<OrderMain> orderMainDataStream = orderMainLinesDataStream.process(new ProcessFunction<String, OrderMain>() {

@Override

public void processElement(String line, Context ctx, Collector<OrderMain> out) throws Exception {

//flatMap+filter

try {

JSONObject jsonObject = JSON.parseObject(line);

String type = jsonObject.getString("type");

if (type.equals("INSERT") || type.equals("UPDATE")) {

JSONArray jsonArray = jsonObject.getJSONArray("data");

for (int i = 0; i < jsonArray.size(); i++) {

OrderMain orderMain = jsonArray.getObject(i, OrderMain.class);

orderMain.setType(type); // Set the operation type

out.collect(orderMain);

}

}

} catch (Exception e) {

//e.printStackTrace();

// Record the malformed data here

}

}

});

// Parse the order detail records

SingleOutputStreamOperator<OrderDetail> orderDetailDataStream = orderDetailLinesDataStream.process(new ProcessFunction<String, OrderDetail>() {

@Override

public void processElement(String line, Context ctx, Collector<OrderDetail> out) throws Exception {

//flatMap+filter

try {

JSONObject jsonObject = JSON.parseObject(line);

String type = jsonObject.getString("type");

if (type.equals("INSERT") || type.equals("UPDATE")) {

JSONArray jsonArray = jsonObject.getJSONArray("data");

for (int i = 0; i < jsonArray.size(); i++) {

OrderDetail orderDetail = jsonArray.getObject(i, OrderDetail.class);

orderDetail.setType(type); // Set the operation type

out.collect(orderDetail);

}

}

} catch (Exception e) {

//e.printStackTrace();

// Record the malformed data here

}

}

});

int delaySeconds = 2;

// Extract the event time and generate watermarks

SingleOutputStreamOperator<OrderMain> orderMainStreamWithWaterMark = orderMainDataStream.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<OrderMain>(Time.seconds(delaySeconds)) {

@Override

public long extractTimestamp(OrderMain element) {

return element.getCreate_time().getTime();

}

});

SingleOutputStreamOperator<OrderDetail> orderDetailStreamWithWaterMark = orderDetailDataStream.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<OrderDetail>(Time.seconds(delaySeconds)) {

@Override

public long extractTimestamp(OrderDetail element) {

return element.getCreate_time().getTime();

}

});

// Left outer join, with the order detail table as the left table

DataStream<Tuple2<OrderDetail, OrderMain>> joined = orderDetailStreamWithWaterMark.coGroup(orderMainStreamWithWaterMark)

.where(new KeySelector<OrderDetail, Long>() {

@Override

public Long getKey(OrderDetail value) throws Exception {

return value.getOrder_id();

}

})

.equalTo(new KeySelector<OrderMain, Long>() {

@Override

public Long getKey(OrderMain value) throws Exception {

return value.getOid();

}

})

.window(TumblingEventTimeWindows.of(Time.seconds(5)))

.apply(new CoGroupFunction<OrderDetail, OrderMain, Tuple2<OrderDetail, OrderMain>>() {

@Override

public void coGroup(Iterable<OrderDetail> first, Iterable<OrderMain> second, Collector<Tuple2<OrderDetail, OrderMain>> out) throws Exception {

for (OrderDetail orderDetail : first) {

boolean isJoined = false;

for (OrderMain orderMain : second) {

out.collect(Tuple2.of(orderDetail, orderMain));

isJoined = true;

}

if (!isJoined) {

out.collect(Tuple2.of(orderDetail, null));

}

}

}

});

joined.print();

FlinkUtilsV2.getEnv().execute();

}

}
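Even without late data, the windowed coGroup above can emit (orderDetail, null). An order main record and its detail records meet only if their create_time values fall into the same 5-second tumbling window. The plain-Java check below uses a hypothetical class and hypothetical timestamps, and reads the times as UTC for simplicity. It shows two records only 200 ms apart landing in different windows.

```java
import java.time.LocalDateTime;
import java.time.ZoneOffset;

/** Do two event times fall into the same tumbling window (windows aligned to the epoch)? */
class WindowMisalignment {

    static long windowStart(long epochMillis, long windowSize) {
        return epochMillis - (epochMillis % windowSize);
    }

    static long millis(String isoDateTime) {
        return LocalDateTime.parse(isoDateTime).toInstant(ZoneOffset.UTC).toEpochMilli();
    }

    static boolean sameWindow(String mainTime, String detailTime, long windowSize) {
        return windowStart(millis(mainTime), windowSize) == windowStart(millis(detailTime), windowSize);
    }

    public static void main(String[] args) {
        long size = 5_000L; // window size used by OrderJoin
        // 200 ms apart, but on either side of the 19:42:05 window boundary
        System.out.println(sameWindow("2020-06-29T19:42:04.900", "2020-06-29T19:42:05.100", size)); // false
        System.out.println(sameWindow("2020-06-29T19:42:01.000", "2020-06-29T19:42:04.000", size)); // true
    }
}
```

In the first case the detail record's window contains no order main record, so the coGroup emits it with null. Late detail records are not emitted at all. These are the two gaps the next version handles.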

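3.2 Joining the order data, including late data

The code below works in three steps:

1. The order detail stream is first windowed on its own, with windowAll and the same 5-second window size as the coGroup. This lets late detail records be routed to a side output with sideOutputLateData.
2. The on-time detail records are left-joined with the order main stream via coGroup.
3. Two kinds of records are completed by querying the database, which is left as a stub here: records whose order main side is still null after the join, and the late detail records.

OrderJoinAdv

package cn._51doit.flink.day10;

import com.alibaba.fastjson.JSON;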

import com.alibaba.fastjson.JSONArray;

import com.alibaba.fastjson.JSONObject;

import org.apache.flink.api.common.functions.CoGroupFunction;

import org.apache.flink.api.common.functions.RichMapFunction;

import org.apache.flink.api.common.serialization.SimpleStringSchema;

import org.apache.flink.api.java.functions.KeySelector;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.api.java.utils.ParameterTool;

import org.apache.flink.configuration.Configuration;

import org.apache.flink.streaming.api.TimeCharacteristic;

import org.apache.flink.streaming.api.datastream.DataStream;

import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;

import org.apache.flink.streaming.api.functions.ProcessFunction;

import org.apache.flink.streaming.api.functions.timestamps.BoundedOutOfOrdernessTimestampExtractor;

import org.apache.flink.streaming.api.functions.windowing.AllWindowFunction;

import org.apache.flink.streaming.api.windowing.assigners.TumblingEventTimeWindows;

import org.apache.flink.streaming.api.windowing.time.Time;

import org.apache.flink.streaming.api.windowing.windows.TimeWindow;

import org.apache.flink.util.Collector;

import org.apache.flink.util.OutputTag;

import java.sql.Connection;

public class OrderJoinAdv {

public static void main(String[] args) throws Exception {

ParameterTool parameters = ParameterTool.fromPropertiesFile(args[0]);

// Use event time as the time characteristic

FlinkUtilsV2.getEnv().setStreamTimeCharacteristic(TimeCharacteristic.EventTime);

DataStream<String> orderMainLinesDataStream = FlinkUtilsV2.createKafkaDataStream(parameters, "ordermain", "g1", SimpleStringSchema.class);

DataStream<String> orderDetailLinesDataStream = FlinkUtilsV2.createKafkaDataStream(parameters, "orderdetail", "g1", SimpleStringSchema.class);

// Parse the order main records

SingleOutputStreamOperator<OrderMain> orderMainDataStream = orderMainLinesDataStream.process(new ProcessFunction<String, OrderMain>() {

@Override

public void processElement(String line, Context ctx, Collector<OrderMain> out) throws Exception {

//flatMap+filter

try {

JSONObject jsonObject = JSON.parseObject(line);

String type = jsonObject.getString("type");

if (type.equals("INSERT") || type.equals("UPDATE")) {

JSONArray jsonArray = jsonObject.getJSONArray("data");

for (int i = 0; i < jsonArray.size(); i++) {

OrderMain orderMain = jsonArray.getObject(i, OrderMain.class);

orderMain.setType(type); // Set the operation type

out.collect(orderMain);

}

}

} catch (Exception e) {

//e.printStackTrace();

// Record the malformed data here

}

}

});

// Parse the order detail records

SingleOutputStreamOperator<OrderDetail> orderDetailDataStream = orderDetailLinesDataStream.process(new ProcessFunction<String, OrderDetail>() {

@Override

public void processElement(String line, Context ctx, Collector<OrderDetail> out) throws Exception {

//flatMap+filter

try {

JSONObject jsonObject = JSON.parseObject(line);

String type = jsonObject.getString("type");

if (type.equals("INSERT") || type.equals("UPDATE")) {

JSONArray jsonArray = jsonObject.getJSONArray("data");

for (int i = 0; i < jsonArray.size(); i++) {

OrderDetail orderDetail = jsonArray.getObject(i, OrderDetail.class);

orderDetail.setType(type); // Set the operation type

out.collect(orderDetail);

}

}

} catch (Exception e) {

//e.printStackTrace();

// Record the malformed data here

}

}

});

int delaySeconds = 2;

int windowSize = 5;

// Extract the event time and generate watermarks

SingleOutputStreamOperator<OrderMain> orderMainStreamWithWaterMark = orderMainDataStream.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<OrderMain>(Time.seconds(delaySeconds)) {

@Override

public long extractTimestamp(OrderMain element) {

return element.getCreate_time().getTime();

}

});

SingleOutputStreamOperator<OrderDetail> orderDetailStreamWithWaterMark = orderDetailDataStream.assignTimestampsAndWatermarks(new BoundedOutOfOrdernessTimestampExtractor<OrderDetail>(Time.seconds(delaySeconds)) {

@Override

public long extractTimestamp(OrderDetail element) {

return element.getCreate_time().getTime();

}

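});

// Tag for the late-data side output

OutputTag<OrderDetail> lateTag = new OutputTag<OrderDetail>("late-data") {};

// Window the left table on its own, with the same window size as the coGroup, so that its late records can be separated out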

SingleOutputStreamOperator<OrderDetail> orderDetailWithWindow = orderDetailStreamWithWaterMark.windowAll(TumblingEventTimeWindows.of(Time.seconds(windowSize)))

.sideOutputLateData(lateTag) // Send late records to the side output

.apply(new AllWindowFunction<OrderDetail, OrderDetail, TimeWindow>() {

@Override

public void apply(TimeWindow window, Iterable<OrderDetail> values, Collector<OrderDetail> out) throws Exception {

for (OrderDetail value : values) {

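out.collect(value); // Forward the on-time records unchanged; otherwise the coGroup would receive no detail records at all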

}

}

});

// Get the late data

DataStream<OrderDetail> lateOrderDetailStream = orderDetailWithWindow.getSideOutput(lateTag);

// Late orderDetail records are rare, so async I/O is not needed; a plain RichMapFunction that queries the database is enough

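SingleOutputStreamOperator<Tuple2<OrderDetail, OrderMain>> lateOrderDetailAndOrderMain = lateOrderDetailStream.map(new RichMapFunction<OrderDetail, Tuple2<OrderDetail, OrderMain>>() {

private transient Connection connection;

@Override

public void open(Configuration parameters) throws Exception {

// Create the database connection

}

@Override

public Tuple2<OrderDetail, OrderMain> map(OrderDetail value) throws Exception {

// A late detail record was never joined, so look up its order main record in the database (returning null here would fail at runtime)

return Tuple2.of(value, queryOrderMainFromMySQL(value.getOrder_id(), connection));

}

@Override

public void close() throws Exception {

// Close the database connection

}

});

// Left outer join, with the order detail table as the left table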

DataStream<Tuple2<OrderDetail, OrderMain>> joined = orderDetailWithWindow.coGroup(orderMainStreamWithWaterMark)

.where(new KeySelector<OrderDetail, Long>() {

@Override

public Long getKey(OrderDetail value) throws Exception {

return value.getOrder_id();

}

})

.equalTo(new KeySelector<OrderMain, Long>() {

@Override

public Long getKey(OrderMain value) throws Exception {

return value.getOid();

}

})

.window(TumblingEventTimeWindows.of(Time.seconds(windowSize)))

.apply(new CoGroupFunction<OrderDetail, OrderMain, Tuple2<OrderDetail, OrderMain>>() {

@Override

public void coGroup(Iterable<OrderDetail> first, Iterable<OrderMain> second, Collector<Tuple2<OrderDetail, OrderMain>> out) throws Exception {

for (OrderDetail orderDetail : first) {

boolean isJoined = false;

for (OrderMain orderMain : second) {

out.collect(Tuple2.of(orderDetail, orderMain));

isJoined = true;

}

if (!isJoined) {

out.collect(Tuple2.of(orderDetail, null));

}

}

}

});

// After the join, orderMain may still be null for some records

SingleOutputStreamOperator<Tuple2<OrderDetail, OrderMain>> punctualOrderDetailAndOrderMain = joined.map(new RichMapFunction<Tuple2<OrderDetail, OrderMain>, Tuple2<OrderDetail, OrderMain>>() {

private transient Connection connection;

@Override

public void open(Configuration parameters) throws Exception {

// Open the database connection

}

@Override

public Tuple2<OrderDetail, OrderMain> map(Tuple2<OrderDetail, OrderMain> tp) throws Exception {

// For every record that did NOT join an order main record, query the database

if (tp.f1 == null) {

OrderMain orderMain = queryOrderMainFromMySQL(tp.f0.getOrder_id(), connection);

tp.f1 = orderMain;

}

return tp;

}

@Override

public void close() throws Exception {

// Close the database connection

}

});

// UNION the on-time and the late results

DataStream<Tuple2<OrderDetail, OrderMain>> allOrderStream = punctualOrderDetailAndOrderMain.union(lateOrderDetailAndOrderMain);

// Depending on the scenario, write the result to Kafka, HBase, ES, ClickHouse, etc.

allOrderStream.print();

FlinkUtilsV2.getEnv().execute();

}

// Stub: should query the order main table by order_id; returns null until implemented

private static OrderMain queryOrderMainFromMySQL(Long order_id, Connection connection) {

return null;

}

}
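The fallback in OrderJoinAdv only touches the database for rows whose order main side is missing. The plain-Java sketch below shows that pattern, with a HashMap standing in for the MySQL table. The class MissingMainLookup is hypothetical, and rows are reduced to string arrays.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

/** Completes joined rows whose order-main side is null by a point lookup on the order id. */
class MissingMainLookup {

    /** Each row is {detailId, orderId, mainInfoOrNull}; returns new rows with the third slot filled if possible. */
    static List<String[]> enrich(List<String[]> joinedRows, Map<String, String> orderMainStore) {
        List<String[]> out = new ArrayList<>();
        for (String[] row : joinedRows) {
            String main = row[2];
            if (main == null) {
                // Only unmatched rows hit the "database"; matched rows pass through unchanged
                main = orderMainStore.get(row[1]);
            }
            out.add(new String[]{row[0], row[1], main});
        }
        return out;
    }

    public static void main(String[] args) {
        Map<String, String> store = new HashMap<>(); // stands in for the order main table
        store.put("o1000", "paid");
        List<String[]> rows = new ArrayList<>();
        rows.add(new String[]{"d1", "o1000", "paid"}); // joined inside the window
        rows.add(new String[]{"d2", "o1000", null});   // order main record fell into another window
        for (String[] r : enrich(rows, store)) {
            System.out.println(String.join(",", r));
        }
    }
}
```

If the order main record is not in the store yet either, the row stays incomplete. A real job would still have to decide how to handle that case, for example by retrying or sending the row to a side output.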
