Lessons learned from manually classifying CIFAR-10

Apr 27, 2011

CIFAR-10

Note: this post is from 2011 and is slightly outdated in some places.

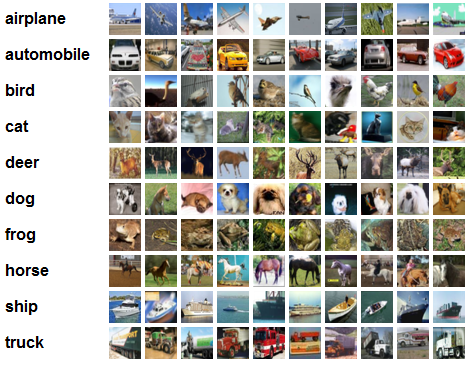

Statistics. CIFAR-10 consists of 50,000 training images, each belonging to one of 10 categories (shown above). The test set consists of 10,000 novel images from the same categories, and the task is to classify each one into its category. The state of the art is currently about 80% classification accuracy (with 4000 centroids), achieved by Adam Coates et al. Their method whitens the data, uses k-means to learn many centroids, and then applies a soft activation function to produce the features.
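To make the three feature-learning steps concrete, here is a minimal NumPy sketch. It assumes that the "soft activation function" is the "triangle" encoding described by Coates et al., f_k(x) = max(0, mean(z) - z_k), where z_k is the distance from x to centroid k. The function names, the whitening regularizer `eps`, and the iteration count are illustrative assumptions. The full method also extracts and normalizes small patches from each image, pools the resulting features over image regions, and trains a linear SVM; all of that is omitted here.

```python
import numpy as np

def pairwise_dist(X, C):
    """Euclidean distance from every row of X to every row of C, shape (n, k)."""
    sq = (X ** 2).sum(axis=1)[:, None] - 2.0 * X @ C.T + (C ** 2).sum(axis=1)[None, :]
    return np.sqrt(np.maximum(sq, 0.0))  # clip tiny negatives caused by round-off

def zca_whiten(X, eps=0.1):
    """ZCA-whiten the rows of X; eps is an assumed regularizer on the eigenvalues."""
    mean = X.mean(axis=0)
    Xc = X - mean
    cov = Xc.T @ Xc / len(Xc)
    eigvals, eigvecs = np.linalg.eigh(cov)
    W = eigvecs @ np.diag(1.0 / np.sqrt(eigvals + eps)) @ eigvecs.T
    return Xc @ W, mean, W

def kmeans(X, k, iters=10, seed=0):
    """Plain Lloyd's k-means, initialized with k randomly chosen rows of X."""
    rng = np.random.default_rng(seed)
    C = X[rng.choice(len(X), size=k, replace=False)].copy()
    for _ in range(iters):
        assign = pairwise_dist(X, C).argmin(axis=1)
        for j in range(k):
            members = X[assign == j]
            if len(members):  # leave empty clusters where they are
                C[j] = members.mean(axis=0)
    return C

def triangle_features(X, C):
    """Soft 'triangle' activation: f_k(x) = max(0, mean_j z_j - z_k), z_k = ||x - c_k||."""
    z = pairwise_dist(X, C)
    return np.maximum(0.0, z.mean(axis=1, keepdims=True) - z)

# Toy usage on random stand-in data (27 = 3x3 pixels x 3 color channels).
rng = np.random.default_rng(0)
patches = rng.normal(size=(500, 27))
Xw, mean, W = zca_whiten(patches)
C = kmeans(Xw, k=16)
F = triangle_features(Xw, C)
print(F.shape)  # (500, 16)
```

Note that for every input at least one feature is exactly zero (the farthest centroid is never closer than the mean distance), so the encoding is sparse by construction.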

State of the Art performance. By the way, running their method with 1600 centroids gives 77% classification accuracy. If you set the cluster centers to be random, the accuracy drops to 70%, and if you set them to be random patches from the training set, it goes up to 74%. It seems like the whole purpose of k-means is to spread the clusters out nicely around the data. My guess is that the 70% with random clusters happens because many of them lie too far from the data manifold and never become activated; it is as if you had far fewer clusters to begin with.
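The "too far from the data to ever activate" guess can be illustrated on toy data. The sketch below is not a reproduction of the CIFAR-10 experiment; it assumes synthetic points lying on a random 2-D plane inside a 50-D space and the triangle activation f_k(x) = max(0, mean(z) - z_k). It counts how many of 64 centroids are "dead" (zero activation for every point), once for centroids drawn from a standard Gaussian and once for centroids copied from random data points.

```python
import numpy as np

def triangle(X, C):
    # z[i, k] = ||x_i - c_k||; activation is max(0, mean_k z[i, :] - z[i, k])
    d = X[:, None, :] - C[None, :, :]
    z = np.sqrt((d ** 2).sum(axis=2))
    return np.maximum(0.0, z.mean(axis=1, keepdims=True) - z)

def dead_centroids(X, C):
    """Number of centroids whose activation is zero for every data point."""
    return int((triangle(X, C).max(axis=0) == 0).sum())

rng = np.random.default_rng(0)
dim, n, k = 50, 1000, 64
# Toy "manifold": data confined to a random 2-D plane inside a 50-D space.
basis = np.linalg.qr(rng.normal(size=(dim, 2)))[0]  # (50, 2), orthonormal columns
X = rng.normal(size=(n, 2)) @ basis.T               # (1000, 50)

random_C = rng.normal(size=(k, dim))                 # centroids that ignore the data
patch_C = X[rng.choice(n, size=k, replace=False)]    # centroids copied from the data

print("dead (random):", dead_centroids(X, random_C))
print("dead (patches):", dead_centroids(X, patch_C))
```

Centroids copied from the data can never be completely dead under this activation, because each one sits at distance zero from the point it was copied from. Random centroids with a larger-than-average norm tend to be farther than average from every point on the plane, so some of them never fire.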

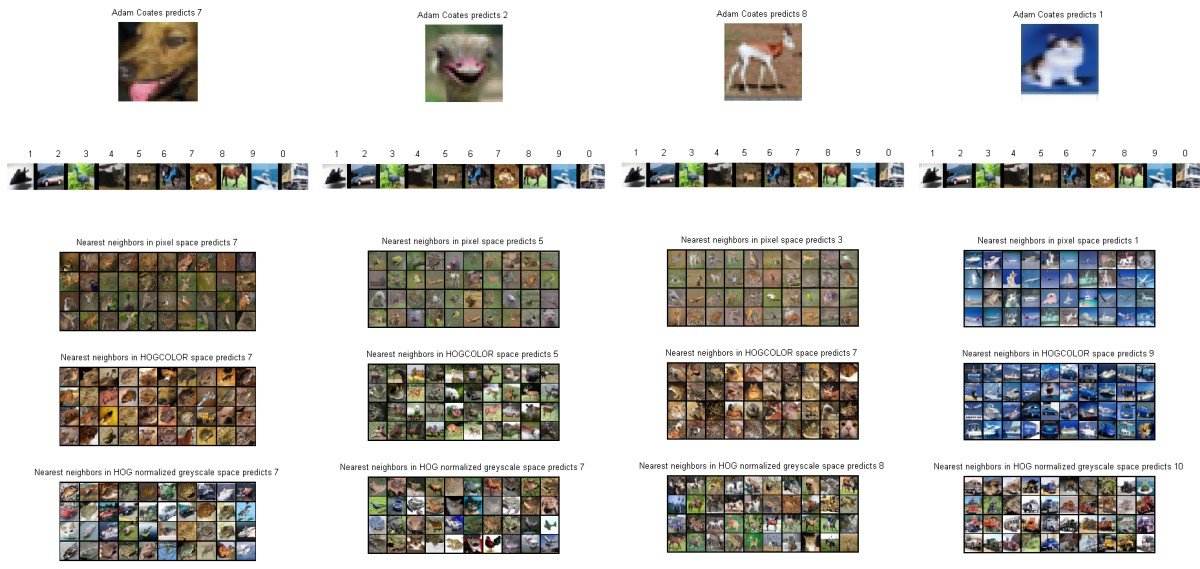

Human Accuracy. Over the weekend I wanted to see what classification accuracy a human would achieve on this dataset, so I wrote some quick MATLAB code to provide an interface for it. It showed one image at a time and let me press a key from 0-9 to indicate my belief about its class. My classification accuracy ended up at about 94% on 400 images. Why not 100%? Because some images are really unfair! To give you an idea, here are some questionable images from CIFAR-10:

CIFAR-10 human accuracy is approximately 94%
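The original interface was written in MATLAB and is not reproduced here. As a rough Python sketch of the same loop, the function below assumes a hypothetical `get_key` callback that displays one image and returns the pressed key, and assumes that keys 0-9 follow the standard CIFAR-10 label order.

```python
import random
from typing import Callable, Sequence

# Standard CIFAR-10 label order: key '0' means airplane, ..., key '9' means truck.
CLASSES = ["airplane", "automobile", "bird", "cat", "deer",
           "dog", "frog", "horse", "ship", "truck"]
VALID_KEYS = set("0123456789")

def run_session(images: Sequence, labels: Sequence[int],
                get_key: Callable[[object], str], n: int = 400, seed: int = 0) -> float:
    """Show n randomly chosen images one at a time; return the fraction labeled correctly.

    get_key is a hypothetical callback that displays an image and returns the pressed key.
    """
    rng = random.Random(seed)
    order = rng.sample(range(len(images)), k=min(n, len(images)))
    correct = 0
    for i in order:
        key = get_key(images[i])
        while key not in VALID_KEYS:  # re-prompt on anything that is not a single digit
            key = get_key(images[i])
        correct += int(int(key) == labels[i])
    return correct / len(order)

# Toy check with an "annotator" that cheats by reading the label.
labels = [i % 10 for i in range(1000)]
images = list(range(1000))  # stand-ins: each "image" is just its index
print(run_session(images, labels, get_key=lambda img: str(labels[img])))  # 1.0
```

In real use, `get_key` could draw the image with a plotting library and read a key from the keyboard; keeping it as a callback makes the scoring logic testable without a display.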

Observations

A few observations I derived from this exercise:

The objects within classes in this dataset can be extremely varied. For example, the "bird" class contains many different types of bird (both big and small). Not only are there many types of bird, but they occur at many possible magnifications, at all possible angles, and in all possible poses. Sometimes only parts of the bird are shown. The pose problem is even worse for the dog and cat categories, because these animals occur in many different poses, and sometimes only the head, or only the left part of the body, is shown.

My classification method felt strangely dichotomous. Sometimes you can clearly see the animal or object and classify it based on highly informative, distinctive parts (for example, you find the ears of a cat). Other times, my recognition was based purely on context and on overall cues in the image, such as the colors.

The CIFAR-10 dataset is too small to properly contain examples of everything that the test set asks for. I base this conclusion at least partly on several different ways I visualized the nearest training-set image for each test image.
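One simple way to find the nearest training image for each test image is Euclidean distance on raw pixels. This is an assumption; the post does not say which distances or feature spaces the visualizations used. The sketch takes arrays of shape (N, 32, 32, 3) and processes the test set in batches, so the full 10,000 x 50,000 distance matrix never has to sit in memory at once.

```python
import numpy as np

def nearest_training_images(test_imgs, train_imgs, k=1, batch=256):
    """For each test image, indices of its k nearest training images (L2 on raw pixels)."""
    B = train_imgs.reshape(len(train_imgs), -1).astype(np.float64)
    b_sq = (B ** 2).sum(axis=1)
    out = []
    for start in range(0, len(test_imgs), batch):
        A = test_imgs[start:start + batch].reshape(-1, B.shape[1]).astype(np.float64)
        # ||a - b||^2 = ||a||^2 - 2 a.b + ||b||^2, for every (test, train) pair in the batch
        d2 = (A ** 2).sum(axis=1)[:, None] - 2.0 * A @ B.T + b_sq[None, :]
        out.append(np.argsort(d2, axis=1)[:, :k])
    return np.concatenate(out)

# Toy usage: random uint8 "images"; querying with copies of training images
# should return those same indices.
rng = np.random.default_rng(0)
train = rng.integers(0, 256, size=(100, 32, 32, 3), dtype=np.uint8)
test = train[[5, 17, 42]]
print(nearest_training_images(test, train)[:, 0])  # [ 5 17 42]
```

Converting to float64 before the dot products avoids the silent overflow that uint8 arithmetic would cause.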

I don't quite understand how Adam Coates et al. perform so well on this dataset (80%) with their method. My guess is that it works along the following lines: if you look at an image while squinting, you can almost always narrow the category down to 2 or 3 candidates. The final disambiguation probably comes from finding very good, specific, informative patches (like a patch of some kind of fur, or a pointy ear). The k-means dictionary must be capturing these cases, and the SVM likely picks up on them.
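If this guess is right, a classifier's top-3 accuracy should be much higher than its top-1 accuracy. A minimal way to measure that, assuming a score matrix with one row per image and one column per class (for example, the per-class decision values of a one-vs-rest SVM), is:

```python
import numpy as np

def top_k_accuracy(scores, labels, k):
    """Fraction of rows whose true label is among the k highest-scoring classes."""
    topk = np.argsort(-scores, axis=1)[:, :k]
    return float((topk == labels[:, None]).any(axis=1).mean())

# Toy usage with 3 images and 3 classes.
scores = np.array([[0.1, 0.7, 0.2],
                   [0.5, 0.3, 0.2],
                   [0.2, 0.3, 0.5]])
labels = np.array([2, 1, 0])
for k in (1, 2, 3):
    print(k, top_k_accuracy(scores, labels, k))
```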

My impression from this exercise is that it will be hard to go above 80%, but I suspect improvements might be possible up to about 85-90%, depending on how wrong I am about the lack of training data. (2015 update: Obviously this prediction was way off, with the state of the art now at 95%, as seen in this Kaggle competition leaderboard. I'm impressed!)

I encourage people to try this for themselves (see my code, linked below), as it is very interesting and fun! I have trouble articulating exactly what I learned, but overall I feel that I gained more intuition for image classification tasks and more appreciation for the difficulty of the problem at hand.

Finally, here is an example of my debugging interface:

The MATLAB code used to generate these results can be found here.
