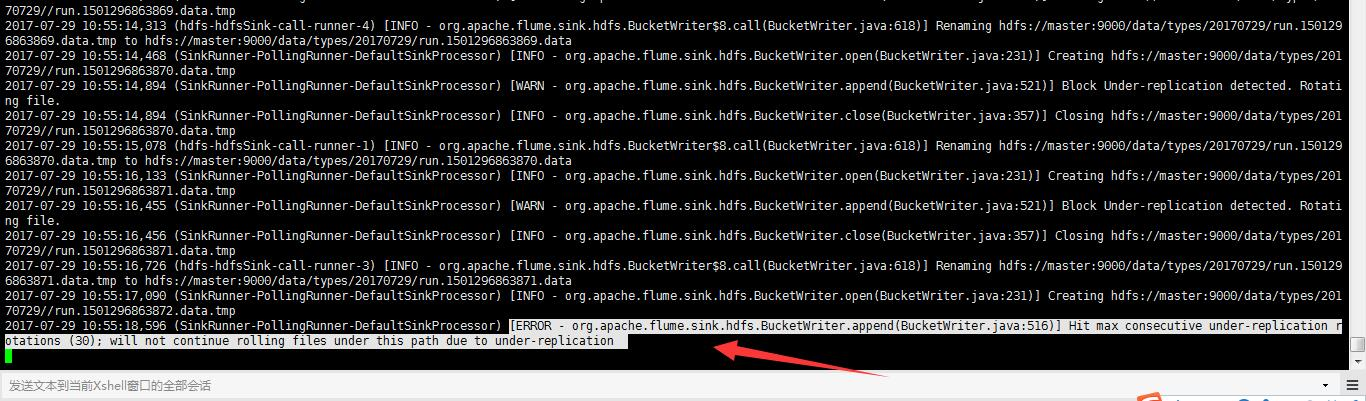

Flume startup error "Hit max consecutive under-replication rotations (30); will not continue rolling files under this path due to under-replication" (org.apache.flume.sink.hdfs): how to fix it (illustrated)

Previous post

Tips and lessons learned from using custom or built-in Flume interceptors (illustrated)

Problem details

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [WARN - org.apache.flume.sink.hdfs.BucketWriter.append(BucketWriter.java:)] Block Under-replication detected. Rotating file.

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.close(BucketWriter.java:)] Closing hdfs://master:9000/data/types/20170729//run.1501298449107.data.tmp

-- ::, (hdfs-hdfsSink-call-runner-) [INFO - org.apache.flume.sink.hdfs.BucketWriter$.call(BucketWriter.java:)] Renaming hdfs://master:9000/data/types/20170729/run.1501298449107.data.tmp to hdfs://master:9000/data/types/20170729/run.1501298449107.data

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.open(BucketWriter.java:)] Creating hdfs://master:9000/data/types/20170729//run.1501298449108.data.tmp

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [WARN - org.apache.flume.sink.hdfs.BucketWriter.append(BucketWriter.java:)] Block Under-replication detected. Rotating file.

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.close(BucketWriter.java:)] Closing hdfs://master:9000/data/types/20170729//run.1501298449108.data.tmp

-- ::, (hdfs-hdfsSink-call-runner-) [INFO - org.apache.flume.sink.hdfs.BucketWriter$.call(BucketWriter.java:)] Renaming hdfs://master:9000/data/types/20170729/run.1501298449108.data.tmp to hdfs://master:9000/data/types/20170729/run.1501298449108.data

-- ::, (SinkRunner-PollingRunner-DefaultSinkProcessor) [INFO - org.apache.flume.sink.hdfs.BucketWriter.open(BucketWriter.java:)] Creating hdfs://master:9000/data/types/20170729//run.1501298449109.data.tmp

2017-07-29 11:22:21,869 (SinkRunner-PollingRunner-DefaultSinkProcessor) [ERROR - org.apache.flume.sink.hdfs.BucketWriter.append(BucketWriter.java:516)] Hit max consecutive under-replication rotations (30); will not continue rolling files under this path due to under-replication
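The excerpt above shows the same pattern repeating: a WARN about under-replication, a close/rename/create cycle, and finally the ERROR once the limit in parentheses is reached. To see how often this happens in a longer log, a small scan like the following may help. It is a minimal sketch: the function name `summarize_under_replication` is hypothetical, and it matches only the two message strings that appear in the excerpt.

```python
import re

# Message fragments copied from the log excerpt above.
WARN_MSG = "Block Under-replication detected. Rotating file."
ERROR_MSG = "Hit max consecutive under-replication rotations"


def summarize_under_replication(log_lines):
    """Count rotation warnings and report whether the rotation limit was hit."""
    warnings = 0
    limit_hit = False
    limit = None  # the number in parentheses in the ERROR line, if present
    for line in log_lines:
        if WARN_MSG in line:
            warnings += 1
        elif ERROR_MSG in line:
            limit_hit = True
            match = re.search(r"rotations \((\d+)\)", line)
            if match:
                limit = int(match.group(1))
    return {"warnings": warnings, "limit_hit": limit_hit, "limit": limit}


if __name__ == "__main__":
    sample = [
        "[WARN - org.apache.flume.sink.hdfs.BucketWriter.append] "
        "Block Under-replication detected. Rotating file.",
        "[ERROR - org.apache.flume.sink.hdfs.BucketWriter.append] "
        "Hit max consecutive under-replication rotations (30); will not "
        "continue rolling files under this path due to under-replication",
    ]
    print(summarize_under_replication(sample))
```

In practice the lines would come from the agent's log file, for example `summarize_under_replication(open(path, encoding="utf-8"))`, where `path` is wherever your Flume agent writes its log.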

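Solution

Synchronize the system clock on all three nodes (master, slave1, slave2) with ntpdate, then run date on each node to confirm that the clocks agree.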

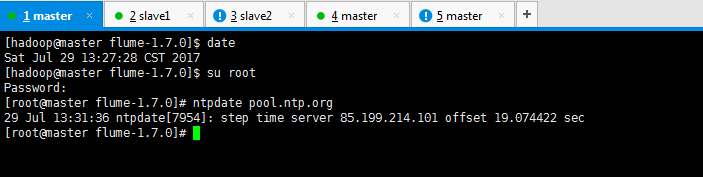

[hadoop@master flume-1.7.]$ su root

Password:

[root@master flume-1.7.]# ntpdate pool.ntp.org

Jul :: ntpdate[]: step time server 85.199.214.101 offset 19.074422 sec

[root@master flume-1.7.]#

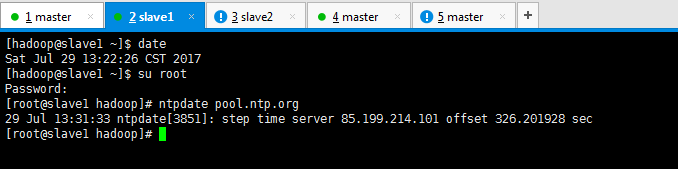

[hadoop@slave1 ~]$ su root

Password:

[root@slave1 hadoop]# ntpdate pool.ntp.org

Jul :: ntpdate[]: step time server 85.199.214.101 offset 326.201928 sec

[root@slave1 hadoop]#

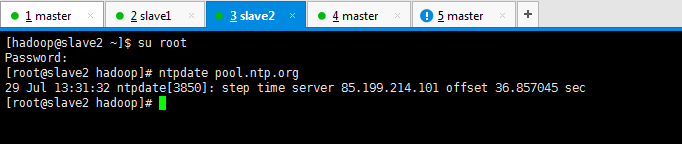

[hadoop@slave2 ~]$ su root

Password:

[root@slave2 hadoop]# ntpdate pool.ntp.org

Jul :: ntpdate[]: step time server 85.199.214.101 offset 36.857045 sec

[root@slave2 hadoop]#

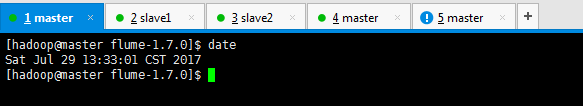

[hadoop@master flume-1.7.]$ date

Sat Jul :: CST

[hadoop@master flume-1.7.]$

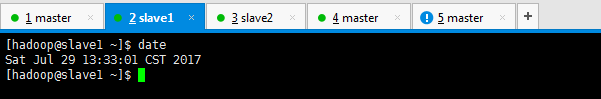

[hadoop@slave1 ~]$ date

Sat Jul :: CST

[hadoop@slave1 ~]$

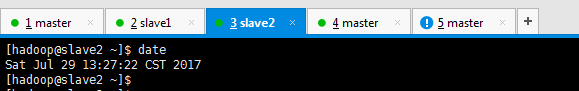

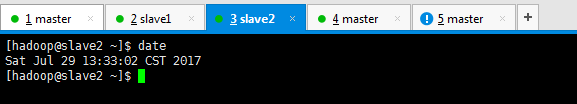

[hadoop@slave2 ~]$ date

Sat Jul :: CST

[hadoop@slave2 ~]$
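The ntpdate output above reports how far each node's clock was off before the correction (about 19 s, 326 s and 37 s). To check many nodes at once, the offsets can be pulled out of that output and compared against a tolerance. This is only a sketch: the helper names and the 1-second threshold are assumptions, not values from Flume or Hadoop.

```python
import re

# Matches the "offset <seconds> sec" part of an ntpdate result line.
OFFSET_RE = re.compile(r"offset\s+(-?\d+(?:\.\d+)?)\s+sec")


def parse_offset(ntpdate_line):
    """Return the clock offset in seconds, or None if the line has none."""
    match = OFFSET_RE.search(ntpdate_line)
    return float(match.group(1)) if match else None


def hosts_over_threshold(outputs, max_offset_sec=1.0):
    """Map host -> offset for hosts whose absolute offset exceeds the threshold.

    `outputs` maps a host name to the ntpdate line captured on that host.
    The default threshold is an arbitrary assumption for illustration.
    """
    flagged = {}
    for host, line in outputs.items():
        offset = parse_offset(line)
        if offset is not None and abs(offset) > max_offset_sec:
            flagged[host] = offset
    return flagged


if __name__ == "__main__":
    # Offsets taken from the transcripts above.
    outputs = {
        "master": "step time server 85.199.214.101 offset 19.074422 sec",
        "slave1": "step time server 85.199.214.101 offset 326.201928 sec",
        "slave2": "step time server 85.199.214.101 offset 36.857045 sec",
    }
    for host, offset in hosts_over_threshold(outputs).items():
        print(f"{host}: clock was off by {offset} s")
```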

Or, use an agent configuration like this one:

# Name of the source

agent1.sources = fileSource

# Name of the channel; naming it after its type is recommended

agent1.channels = memoryChannel

# Name of the sink; naming it after its destination is recommended

agent1.sinks = hdfsSink

# Channel used by the source

agent1.sources.fileSource.channels = memoryChannel

# Channel used by the sink; note that the key here is channel (singular)

agent1.sinks.hdfsSink.channel = memoryChannel

agent1.sources.fileSource.type = exec

agent1.sources.fileSource.command = tail -F /usr/local/log/server.log

#------- memoryChannel settings -------------------------

# Channel type

agent1.channels.memoryChannel.type = memory

agent1.channels.memoryChannel.capacity =

agent1.channels.memoryChannel.transactionCapacity =

agent1.channels.memoryChannel.byteCapacityBufferPercentage =

agent1.channels.memoryChannel.byteCapacity =

agent1.channels.memoryChannel.keep-alive =

agent1.channels.memoryChannel.capacity =

#--------- Interceptor settings ------------------

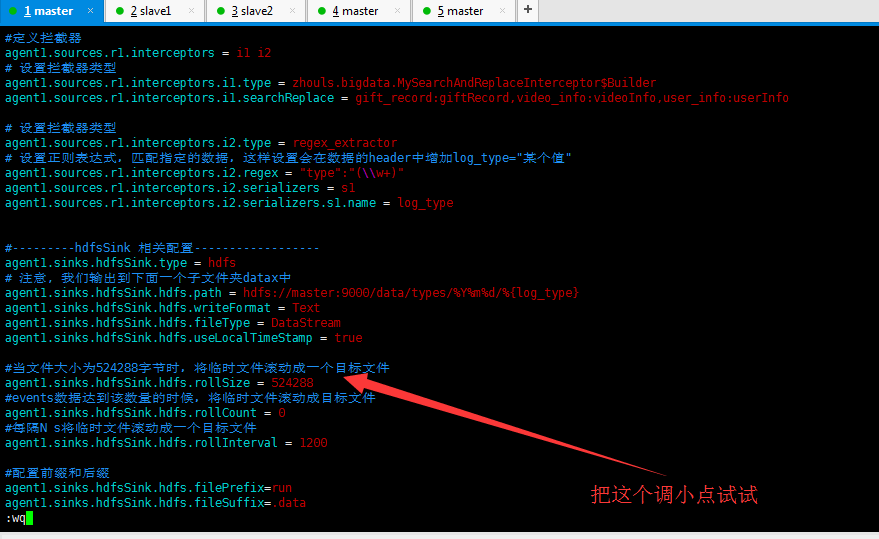

# Define the interceptors

agent1.sources.fileSource.interceptors = i1 i2

# Type of interceptor i1

agent1.sources.fileSource.interceptors.i1.type = zhouls.bigdata.MySearchAndReplaceInterceptor$Builder

agent1.sources.fileSource.interceptors.i1.searchReplace = gift_record:giftRecord,video_info:videoInfo,user_info:userInfo

# Type of interceptor i2

agent1.sources.fileSource.interceptors.i2.type = regex_extractor

# Regular expression that matches the target data; with this setting, log_type="<matched value>" is added to the event header

agent1.sources.fileSource.interceptors.i2.regex = "type":"(\\w+)"

agent1.sources.fileSource.interceptors.i2.serializers = s1

agent1.sources.fileSource.interceptors.i2.serializers.s1.name = log_type

#--------- hdfsSink settings ------------------

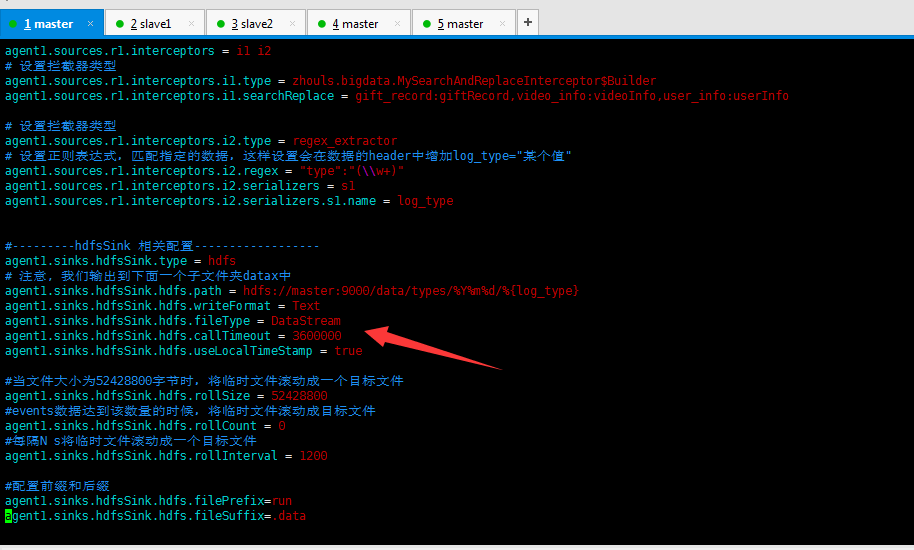

agent1.sinks.hdfsSink.type = hdfs

# Note: output goes into a subdirectory under the path below

agent1.sinks.hdfsSink.hdfs.path = hdfs://master:9000/data/types/%Y%m%d/%{log_type}

agent1.sinks.hdfsSink.hdfs.writeFormat = Text

agent1.sinks.hdfsSink.hdfs.fileType = DataStream

agent1.sinks.hdfsSink.hdfs.callTimeout =

agent1.sinks.hdfsSink.hdfs.useLocalTimeStamp = true

# Roll the temporary file into a final file when it reaches 52428800 bytes

agent1.sinks.hdfsSink.hdfs.rollSize = 52428800

# Roll the temporary file into a final file when it contains this many events

agent1.sinks.hdfsSink.hdfs.rollCount =

# Roll the temporary file into a final file every N seconds

agent1.sinks.hdfsSink.hdfs.rollInterval =

# File name prefix and suffix

agent1.sinks.hdfsSink.hdfs.filePrefix=run

agent1.sinks.hdfsSink.hdfs.fileSuffix=.data
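A configuration like the one above is easy to get subtly wrong, for example by attaching interceptors to a source name that is not listed in `agent1.sources`. As far as I know, Flume does not apply settings for undeclared component names, so `%{log_type}` would then expand to an empty string, which would match the `20170729//run...` paths in the log. The sketch below checks for that kind of mismatch. The function names are hypothetical, the parser handles only plain `key = value` lines, and the sample uses a hypothetical undeclared source `r1`.

```python
def parse_properties(text):
    """Parse simple `key = value` lines, skipping blanks and # comments."""
    props = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        key, sep, value = line.partition("=")
        if sep:
            props[key.strip()] = value.strip()
    return props


def undeclared_components(props, agent):
    """Return (kind, name) pairs that are configured but never declared."""
    problems = []
    for kind in ("sources", "channels", "sinks"):
        declared = set(props.get(f"{agent}.{kind}", "").split())
        prefix = f"{agent}.{kind}."
        used = {
            key[len(prefix):].split(".", 1)[0]
            for key in props
            if key.startswith(prefix)
        }
        for name in sorted(used - declared):
            problems.append((kind, name))
    return problems


if __name__ == "__main__":
    sample = """
    agent1.sources = fileSource
    agent1.channels = memoryChannel
    agent1.sinks = hdfsSink
    agent1.sources.fileSource.type = exec
    agent1.sources.r1.interceptors = i1 i2
    agent1.channels.memoryChannel.type = memory
    agent1.sinks.hdfsSink.type = hdfs
    """
    for kind, name in undeclared_components(parse_properties(sample), "agent1"):
        print(f"{kind}: '{name}' is configured but not declared")
```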

Or, reboot the machines; the cause may be a network problem.

Or, for further troubleshooting, see:

https://stackoverflow.com/questions/22145899/flume-hdfs-sink-keeps-rolling-small-files
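One setting often mentioned for this specific error is the HDFS sink property `hdfs.minBlockReplicas`, which the Flume User Guide describes as the minimum number of replicas per HDFS block, taken from the Hadoop configuration on the classpath when unset. Whether lowering it suits your cluster is an assumption to verify against the guide for your Flume version. The sketch below only shows how such a line could be added to a configuration text; `set_property` is a hypothetical helper and the value 1 is illustrative.

```python
def set_property(config_text, key, value):
    """Return config_text with `key = value` set, replacing an existing entry."""
    lines = config_text.splitlines()
    new_line = f"{key} = {value}"
    for index, line in enumerate(lines):
        stripped = line.strip()
        if stripped.startswith("#"):
            continue  # leave comments untouched
        if stripped.partition("=")[0].strip() == key:
            lines[index] = new_line
            break
    else:
        # Key not present yet: append it at the end.
        lines.append(new_line)
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    config = "agent1.sinks.hdfsSink.type = hdfs\n"
    # Illustrative value; pick one that matches your HDFS replication setup.
    print(set_property(config, "agent1.sinks.hdfsSink.hdfs.minBlockReplicas", 1))
```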
