(Reposted) Deep learning architecture diagrams

FastML

Machine learning made easy


2016-09-30

Like a wild stream in the African savanna that, after a wet season, diverges into many smaller streams forming lakes and puddles, deep learning has diverged into a myriad of specialized architectures. Each architecture has a diagram. Here are some of them.

Neural networks are conceptually simple, and that’s their beauty. A bunch of homogeneous, uniform units, arranged in layers, with weighted connections between them, and that’s all. At least in theory. Practice turned out to be a bit different. Instead of feature engineering, we now have architecture engineering, as described by Stephen Merity:

> The romanticized description of deep learning usually promises that the days of hand crafted feature engineering are gone - that the models are advanced enough to work this out themselves. Like most advertising, this is simultaneously true and misleading.
>
> Whilst deep learning has simplified feature engineering in many cases, it certainly hasn’t removed it. As feature engineering has decreased, the architectures of the machine learning models themselves have become increasingly more complex. Most of the time, these model architectures are as specific to a given task as feature engineering used to be.
>
> To clarify, this is still an important step. Architecture engineering is more general than feature engineering and provides many new opportunities. Having said that, however, we shouldn’t be oblivious to the fact that where we are is still far from where we intended to be.

Not quite as bad as the doings of architecture astronauts, but not too good either.

An example of an architecture specific to a given task

LSTM diagrams

How to explain those architectures? Naturally, with a diagram. A diagram will make it all crystal clear.

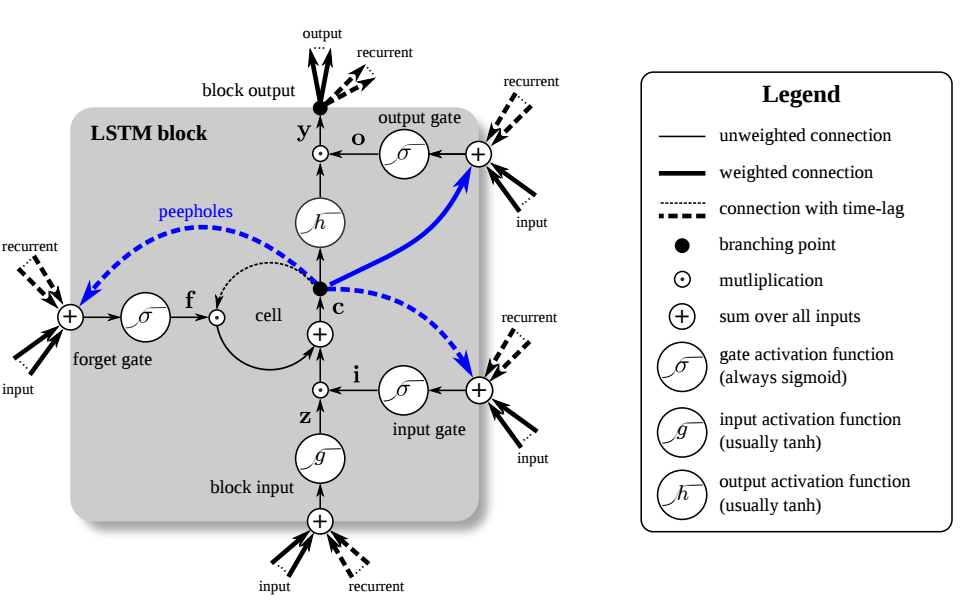

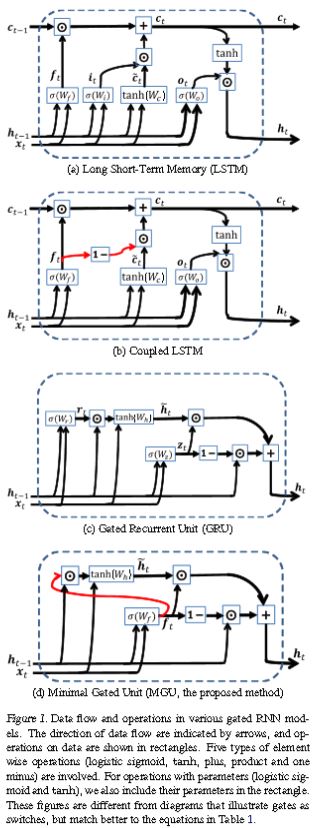

Let’s first inspect the two most popular types of networks these days, CNN and LSTM. You’ve already seen a convnet diagram, so let’s turn to the iconic LSTM:

It’s easy; just take a closer look:

As they say, in mathematics you don’t understand things, you just get used to them.

Fortunately, there are good explanations, for example Understanding LSTM Networks and Written Memories: Understanding, Deriving and Extending the LSTM.
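Some readers find a few lines of arithmetic clearer than the boxes and arrows. Below is a minimal sketch of one step of a standard LSTM cell: forget, input and output gates plus a tanh candidate. It is written in plain Python so it runs without dependencies. The names `lstm_step`, `affine` and `init_params`, the parameter layout and the random initialization are illustrative assumptions. They are not taken from either of the explanations linked above.

```python
import math
import random

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def affine(W, U, b, x, h):
    # W @ x + U @ h + b, with matrices stored as lists of rows.
    return [sum(w * xi for w, xi in zip(w_row, x))
            + sum(u * hi for u, hi in zip(u_row, h)) + bj
            for w_row, u_row, bj in zip(W, U, b)]

def init_params(n_in, n_hidden, seed=0):
    # Hypothetical helper: small random weights, zero biases, one (W, U, b) triple per gate.
    rng = random.Random(seed)
    def mat(rows, cols):
        return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]
    return {gate: (mat(n_hidden, n_in), mat(n_hidden, n_hidden), [0.0] * n_hidden)
            for gate in ("f", "i", "o", "g")}

def lstm_step(x, h_prev, c_prev, p):
    f = [sigmoid(v) for v in affine(*p["f"], x, h_prev)]    # forget gate: how much old memory to keep
    i = [sigmoid(v) for v in affine(*p["i"], x, h_prev)]    # input gate: how much new content to write
    o = [sigmoid(v) for v in affine(*p["o"], x, h_prev)]    # output gate: how much memory to expose
    g = [math.tanh(v) for v in affine(*p["g"], x, h_prev)]  # candidate content
    c = [fj * cj + ij * gj for fj, cj, ij, gj in zip(f, c_prev, i, g)]  # new cell state
    h = [oj * math.tanh(cj) for oj, cj in zip(o, c)]                   # new hidden state
    return h, c

params = init_params(n_in=3, n_hidden=4)
h, c = [0.0] * 4, [0.0] * 4
for x in ([1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]):
    h, c = lstm_step(x, h, c, params)
print(len(h), len(c))  # 4 4
```

Everything that makes the diagram busy lives in `lstm_step`: three sigmoid gates and one additive update of the cell state `c`. That additive `f * c + i * g` path is commonly credited with making long-range dependencies easier to learn.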

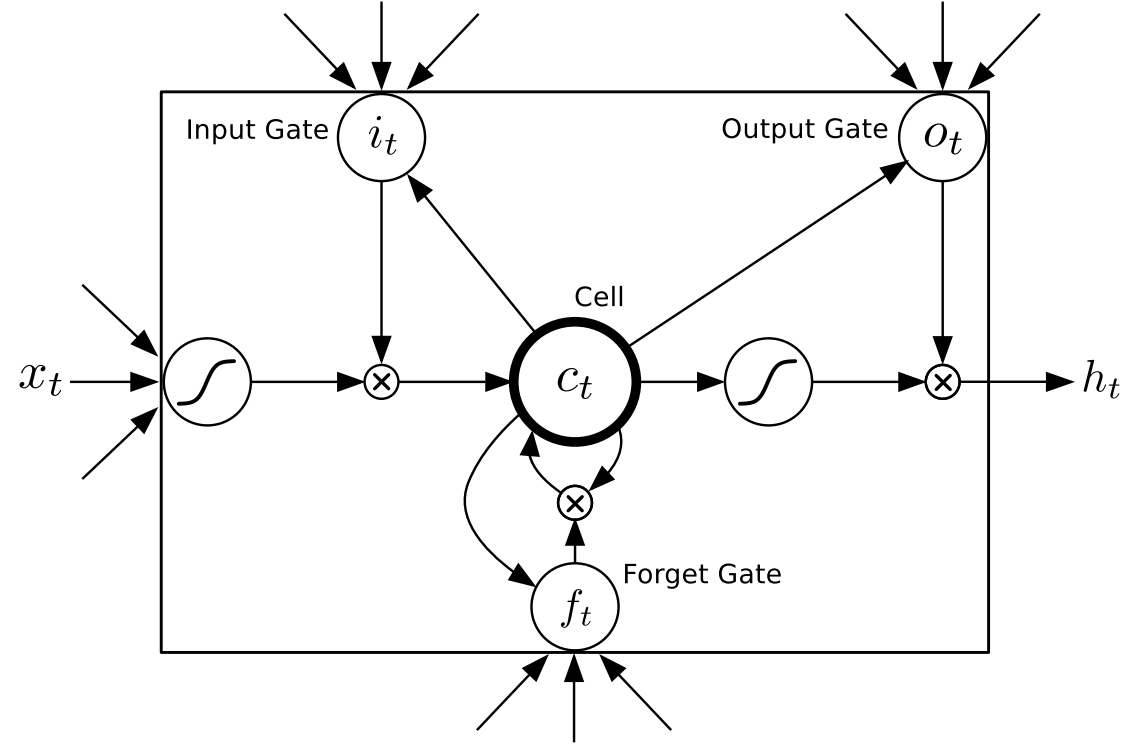

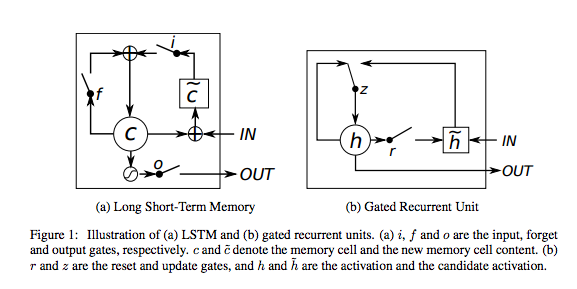

LSTM still too complex? Let’s try a simplified version, GRU (Gated Recurrent Unit). Trivial, really.

Especially this one, called minimal GRU.
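Writing out one step of each model makes the difference concrete. The GRU drops the LSTM's separate cell state and uses an update gate and a reset gate. The minimal variant folds both into a single forget gate; it is assumed here to be the Minimal Gated Unit (MGU) of Zhou et al. (2016). The sketch is plain Python, with matrices stored as lists of rows. Function names and parameter dictionaries are hypothetical. Papers and libraries also differ on the GRU interpolation convention, that is, which of the old state and the candidate gets weighted by `z`.

```python
import math

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def affine(W, U, b, x, h):
    # W @ x + U @ h + b, with matrices stored as lists of rows.
    return [sum(w * xi for w, xi in zip(w_row, x))
            + sum(u * hi for u, hi in zip(u_row, h)) + bj
            for w_row, u_row, bj in zip(W, U, b)]

def gru_step(x, h_prev, p):
    z = [sigmoid(v) for v in affine(*p["z"], x, h_prev)]  # update gate
    r = [sigmoid(v) for v in affine(*p["r"], x, h_prev)]  # reset gate
    reset_h = [rj * hj for rj, hj in zip(r, h_prev)]
    h_cand = [math.tanh(v) for v in affine(*p["h"], x, reset_h)]  # candidate state
    # Interpolate between old state and candidate (this convention lets z weight the candidate).
    return [(1 - zj) * hj + zj * cj for zj, hj, cj in zip(z, h_prev, h_cand)]

def mgu_step(x, h_prev, p):
    f = [sigmoid(v) for v in affine(*p["f"], x, h_prev)]  # one forget gate plays both roles
    gated_h = [fj * hj for fj, hj in zip(f, h_prev)]
    h_cand = [math.tanh(v) for v in affine(*p["h"], x, gated_h)]
    return [(1 - fj) * hj + fj * cj for fj, hj, cj in zip(f, h_prev, h_cand)]

# One hidden unit, all weights zero: every gate is 0.5 and every candidate is tanh(0) = 0.
zeros = ([[0.0]], [[0.0]], [0.0])
gru_params = {"z": zeros, "r": zeros, "h": zeros}
mgu_params = {"f": zeros, "h": zeros}
print(gru_step([1.0], [0.8], gru_params))  # [0.4]
print(mgu_step([1.0], [0.8], mgu_params))  # [0.4]
```

With zero weights both steps simply halve the previous state. The MGU needs two weight triples where the GRU needs three, and that is where its parameter saving comes from.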

More diagrams

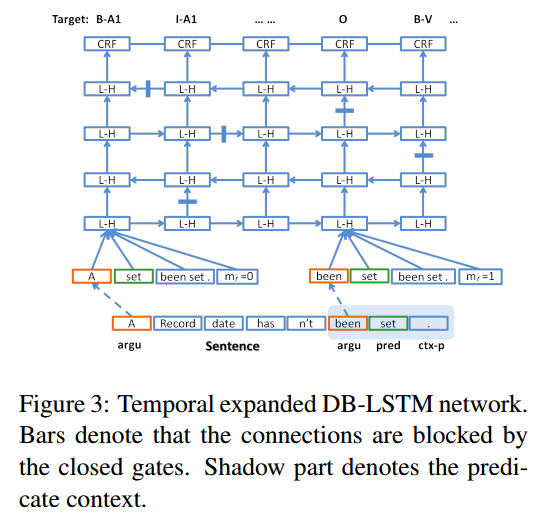

Various modifications of LSTM are now common. Here’s one, called deep bidirectional LSTM:

DB-LSTM, PDF
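The "deep bidirectional" part is wiring, not a new cell. Each layer runs one recurrent pass left to right and another right to left, then concatenates the two hidden states at every time step before feeding the next layer. The sketch below shows that common concatenation scheme, which may differ in detail from the specific DB-LSTM in the linked PDF. To stay short, it uses a plain tanh RNN cell as a stand-in for the LSTM cell. The names `make_rnn_cell`, `run`, `bidirectional_layer` and `deep_bidirectional` are hypothetical, and any step function with the same `(x, h) -> h` shape could be swapped in.

```python
import math
import random

def make_rnn_cell(n_in, n_hidden, seed):
    # Stand-in cell (an assumption): h_t = tanh(W x_t + U h_{t-1}); an LSTM step could replace it.
    rng = random.Random(seed)
    W = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_hidden)]
    U = [[rng.uniform(-0.5, 0.5) for _ in range(n_hidden)] for _ in range(n_hidden)]
    def step(x, h):
        return [math.tanh(sum(w * xi for w, xi in zip(w_row, x))
                          + sum(u * hi for u, hi in zip(u_row, h)))
                for w_row, u_row in zip(W, U)]
    return step, n_hidden

def run(step, n_hidden, xs):
    # Unroll one direction over the sequence, collecting the hidden state at each step.
    h, outputs = [0.0] * n_hidden, []
    for x in xs:
        h = step(x, h)
        outputs.append(h)
    return outputs

def bidirectional_layer(fwd, bwd, xs):
    (f_step, f_n), (b_step, b_n) = fwd, bwd
    forward = run(f_step, f_n, xs)
    backward = run(b_step, b_n, xs[::-1])[::-1]  # re-align backward outputs with time
    return [hf + hb for hf, hb in zip(forward, backward)]  # concatenate per time step

def deep_bidirectional(xs, n_in, n_hidden, n_layers, seed=0):
    for layer in range(n_layers):
        width = n_in if layer == 0 else 2 * n_hidden  # later layers see both directions
        fwd = make_rnn_cell(width, n_hidden, seed + 2 * layer)
        bwd = make_rnn_cell(width, n_hidden, seed + 2 * layer + 1)
        xs = bidirectional_layer(fwd, bwd, xs)
    return xs

seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.0, 0.0]]
out = deep_bidirectional(seq, n_in=2, n_hidden=3, n_layers=2)
print(len(out), len(out[0]))  # 4 6
```

Because of the backward pass, the output at the first time step already depends on inputs that come later in the sequence. That is the point of making the network bidirectional.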

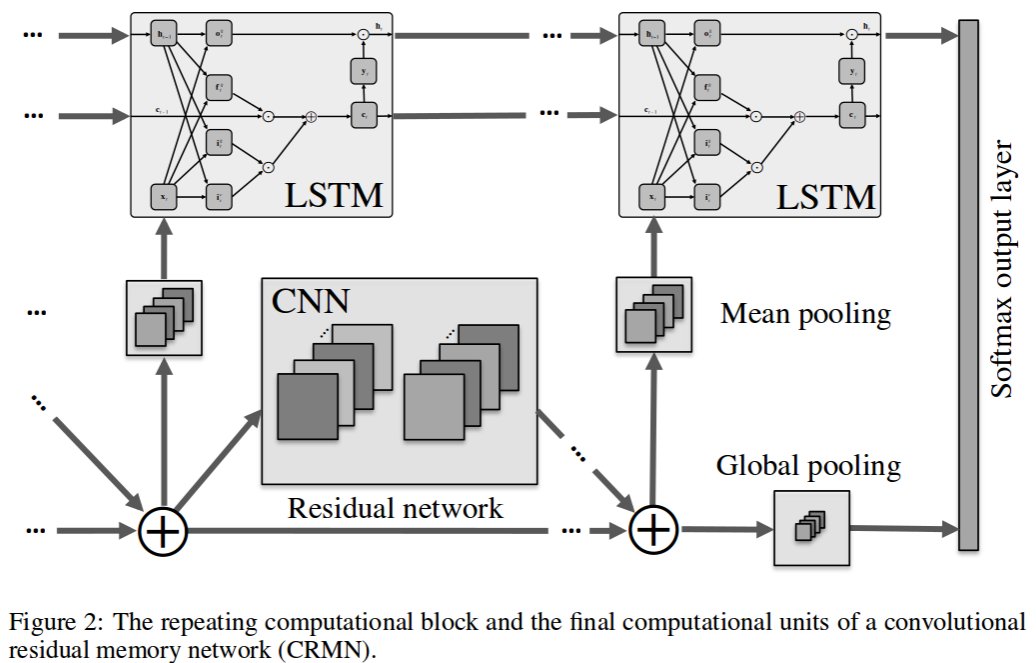

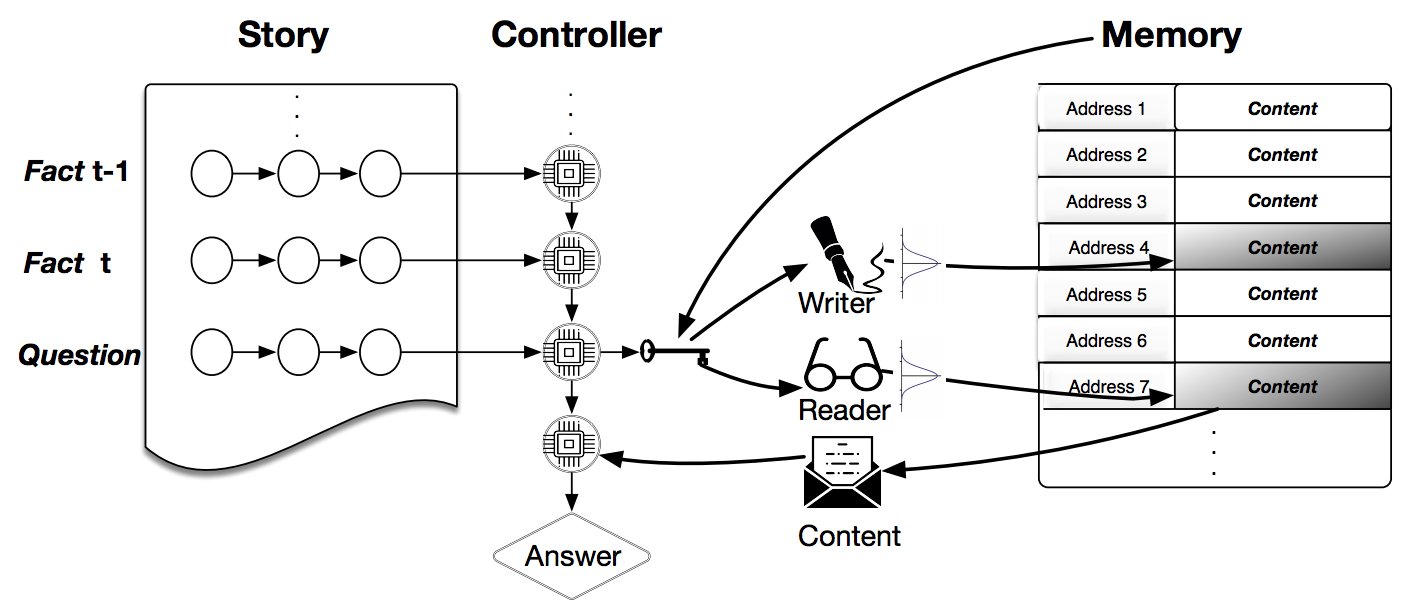

The rest are pretty self-explanatory, too. Let’s start with a combination of CNN and LSTM, since you have both under your belt now:

Convolutional Residual Memory Network, 1606.05262

Dynamic NTM, 1607.00036

Evolvable Neural Turing Machines, PDF

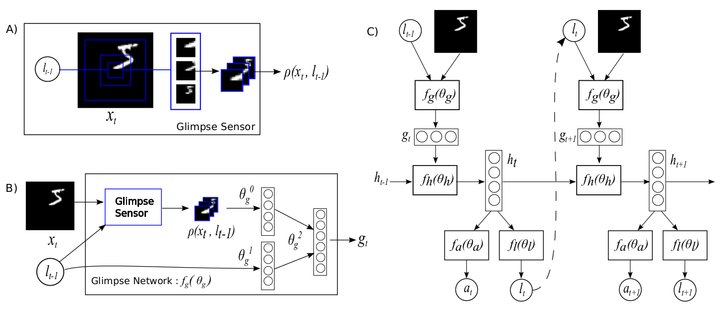

Recurrent Model Of Visual Attention, 1406.6247

Unsupervised Domain Adaptation By Backpropagation, 1409.7495

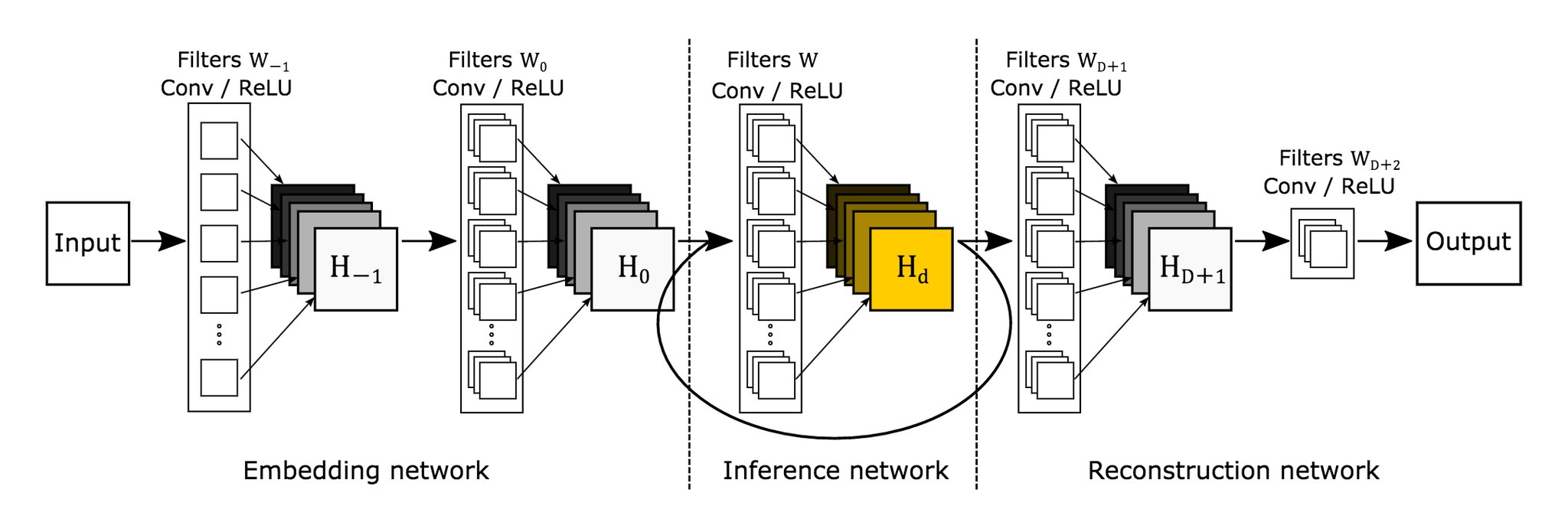

Deeply Recursive CNN For Image Super-Resolution, 1511.04491

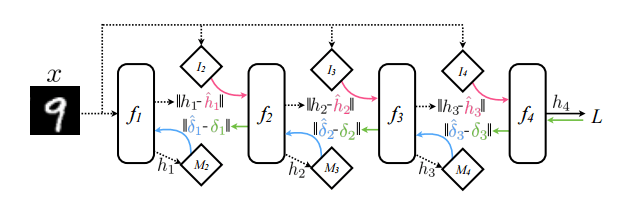

This diagram of a multilayer perceptron with synthetic gradients scores high on clarity:

MLP with synthetic gradients, 1608.05343

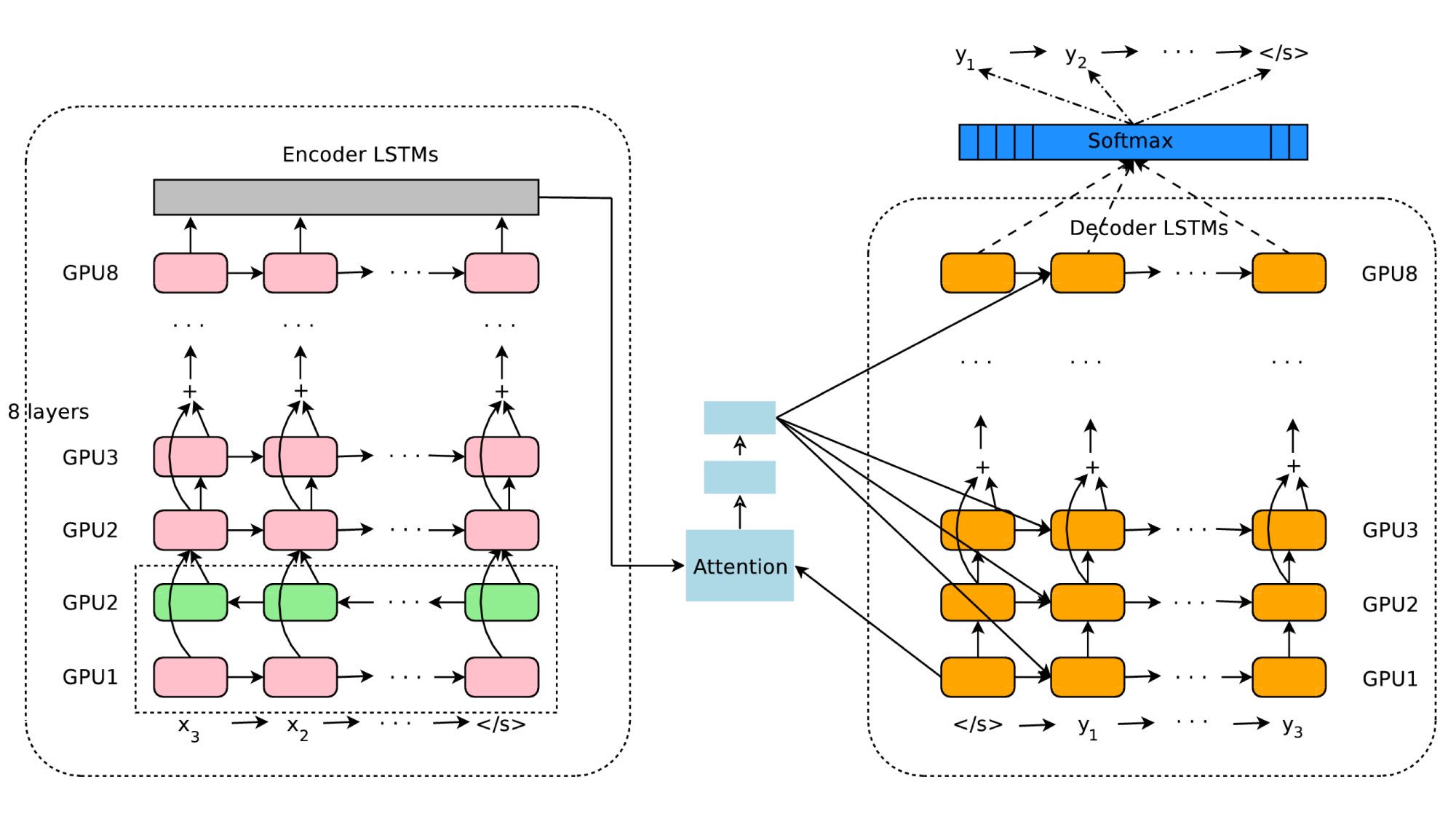

Every day brings more. Here’s a fresh one, again from Google:

Google’s Neural Machine Translation System, 1609.08144

And Now for Something Completely Different

Drawings from the Neural Network Zoo are pleasantly simple but, unfortunately, serve mostly as eye candy. For example:

LSM, ESN and ELM

These look like not-fully-connected perceptrons, but are supposed to represent a Liquid State Machine, an Echo State Network, and an Extreme Learning Machine.

How does LSM differ from ESN? That’s easy: it has green neurons with triangles. But how does ESN differ from ELM? Both have blue neurons.

Seriously, while similar, ESN is a recurrent network and ELM is not. That kind of distinction should probably be visible in an architecture diagram.
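Below is a plain-Python sketch of the feature maps only, to make the recurrent versus feed-forward distinction concrete. In both models the hidden weights stay fixed at random values, and only a linear readout is trained, typically by least squares (omitted here). The difference is whether the hidden layer feeds back into itself. The sizes, weight scales and names such as `elm_features` and `esn_features` are illustrative assumptions.

```python
import math
import random

rng = random.Random(42)

def rand_matrix(rows, cols, scale):
    return [[rng.uniform(-scale, scale) for _ in range(cols)] for _ in range(rows)]

def matvec(M, v):
    return [sum(m * x for m, x in zip(row, v)) for row in M]

N_IN, N_HIDDEN = 2, 5
W_in = rand_matrix(N_HIDDEN, N_IN, 1.0)  # fixed random input weights (used by both models)
# Fixed random recurrent weights (ESN only). Each row's absolute sum is at most 5 * 0.2 = 1,
# so the spectral radius is at most 1, in the spirit of the usual echo-state heuristic.
W_res = rand_matrix(N_HIDDEN, N_HIDDEN, 0.2)

def elm_features(x):
    # ELM: a single feed-forward random hidden layer; no state, no loop.
    return [math.tanh(a) for a in matvec(W_in, x)]

def esn_features(xs):
    # ESN: a recurrent "reservoir"; each state depends on the previous one.
    s = [0.0] * N_HIDDEN
    states = []
    for x in xs:
        s = [math.tanh(a + r) for a, r in zip(matvec(W_in, x), matvec(W_res, s))]
        states.append(s)
    return states

seq = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
elm = [elm_features(x) for x in seq]
esn = esn_features(seq)
print(elm[0] == elm[2])  # True: same input, same ELM features
print(esn[0] == esn[2])  # False: the ESN state also carries history
```

The same input at two different time steps gives identical ELM features but different ESN states. A diagram could show exactly that property with a loop on the hidden layer.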

Posted by Zygmunt Z. 2016-09-30 basics, neural-networks


Copyright © 2016 - Zygmunt Z. - Powered by Octopress
