Kubernetes (K8S) Taints and Tolerations Explained, with Examples

Host configuration plan

| Server name (hostname) | OS version | Spec | Internal IP | External IP (simulated) |
|---|---|---|---|---|
| k8s-master | CentOS7.7 | 2C/4G/20G | 172.16.1.110 | 10.0.0.110 |
| k8s-node01 | CentOS7.7 | 2C/4G/20G | 172.16.1.111 | 10.0.0.111 |
| k8s-node02 | CentOS7.7 | 2C/4G/20G | 172.16.1.112 | 10.0.0.112 |

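Overview of Taints and Tolerations

Node affinity and Pod affinity attract Pods to a set of nodes (by topology domain), either as a preference or as a hard requirement. Taints do the opposite: they allow a node to repel a set of Pods.

Tolerations are applied to Pods. They allow (but do not require) a Pod to be scheduled onto nodes with matching taints.

Taints and tolerations work together to ensure that Pods are not scheduled onto inappropriate nodes. One or more taints are applied to a node; this marks that the node should not accept any Pod that does not tolerate those taints.

Note: in everyday use you will notice that Pods are not scheduled onto the K8S master node. That is because the master node carries a taint.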

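Taints

Composition of a taint

The kubectl taint command sets a taint on a Node. Once a Node is tainted, it repels Pods: it can refuse to have Pods scheduled onto it, and can even evict Pods that are already running on it.

Each taint has the following form:

key=value:effect

Each taint has a key and a value that act as its label, and an effect that describes what the taint does. The taint effect currently supports the following options:

- NoSchedule: K8S will not schedule Pods onto a Node that has this taint

- PreferNoSchedule: K8S will try to avoid scheduling Pods onto a Node that has this taint

- NoExecute: K8S will not schedule Pods onto a Node that has this taint, and will also evict Pods that are already running on the Node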
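To make the key=value:effect form concrete, here is a minimal Python sketch that splits a taint string into its three parts. The names Taint and parse_taint are illustrative only and are not part of kubectl or any Kubernetes client library; the sketch assumes the value may be omitted, as in the master taint shown later in this article.

```python
from typing import NamedTuple

# The three effects described in the list above.
EFFECTS = ("NoSchedule", "PreferNoSchedule", "NoExecute")


class Taint(NamedTuple):
    key: str
    value: str
    effect: str


def parse_taint(spec: str) -> Taint:
    """Split a 'key=value:effect' (or 'key:effect') string into its parts."""
    # The effect is whatever follows the last colon.
    body, sep, effect = spec.rpartition(":")
    if not sep or effect not in EFFECTS:
        raise ValueError(f"unknown or missing effect in {spec!r}")
    # The value is optional; without '=' it is the empty string.
    key, _, value = body.partition("=")
    if not key:
        raise ValueError(f"missing key in {spec!r}")
    return Taint(key, value, effect)


print(parse_taint("check=zhang:NoSchedule"))
print(parse_taint("node-role.kubernetes.io/master:NoSchedule"))
```

The second call shows a taint with a key and an effect but an empty value.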

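The NoExecute effect in detail

A taint with effect NoExecute also affects Pods that are already running on the node:

- If a Pod does not tolerate a taint with effect NoExecute, the Pod is evicted immediately.

- If a Pod tolerates a taint with effect NoExecute and its toleration does not specify tolerationSeconds, the Pod keeps running on the node indefinitely.

- If a Pod tolerates a taint with effect NoExecute but its toleration specifies tolerationSeconds, that value is how long the Pod may keep running on the node after the taint is added.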
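The three cases above can be sketched as a small Python function. This is only a model of the rules for a single NoExecute taint, not Kubernetes code; the function name and the convention of returning None for "never evicted" are assumptions made for illustration. Treating a zero or negative tolerationSeconds as immediate eviction follows the Kubernetes API documentation for that field.

```python
from typing import Optional


def seconds_until_eviction(tolerated: bool,
                           toleration_seconds: Optional[int] = None) -> Optional[int]:
    """How long a running Pod may stay after a NoExecute taint is added.

    0    -> evicted immediately (the taint is not tolerated)
    None -> never evicted (tolerated, no tolerationSeconds)
    n    -> evicted after n seconds (tolerated with tolerationSeconds=n)
    """
    if not tolerated:
        return 0
    if toleration_seconds is None:
        return None
    # Zero and negative values mean "evict immediately".
    return max(toleration_seconds, 0)


print(seconds_until_eviction(False))       # not tolerated
print(seconds_until_eviction(True))        # tolerated, no time limit
print(seconds_until_eviction(True, 3600))  # tolerated for one hour
```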

Taints污点设置

污点(Taints)查看

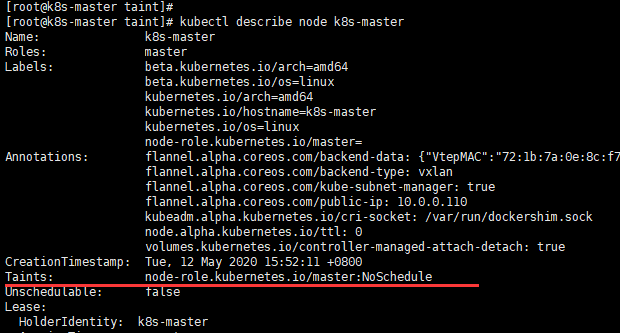

k8s master节点查看

kubectl describe node k8s-master

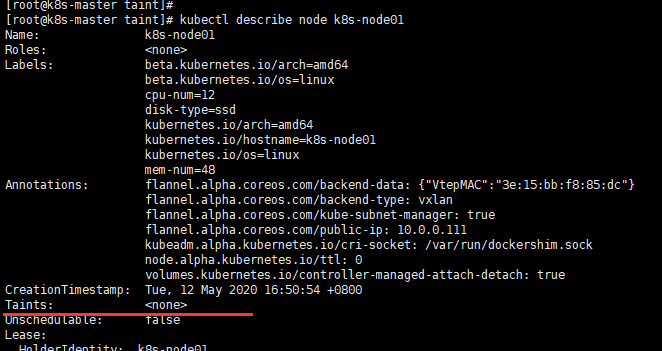

k8s node查看

kubectl describe node k8s-node01

Adding a taint

    [root@k8s-master taint]# kubectl taint nodes k8s-node01 check=zhang:NoSchedule
    node/k8s-node01 tainted
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl describe node k8s-node01
    Name:               k8s-node01
    Roles:              <none>
    Labels:             beta.kubernetes.io/arch=amd64
                        beta.kubernetes.io/os=linux
                        cpu-num=12
                        disk-type=ssd
                        kubernetes.io/arch=amd64
                        kubernetes.io/hostname=k8s-node01
                        kubernetes.io/os=linux
                        mem-num=48
    Annotations:        flannel.alpha.coreos.com/backend-data: {"VtepMAC":"3e:15:bb:f8:85:dc"}
                        flannel.alpha.coreos.com/backend-type: vxlan
                        flannel.alpha.coreos.com/kube-subnet-manager: true
                        flannel.alpha.coreos.com/public-ip: 10.0.0.111
                        kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock
                        node.alpha.kubernetes.io/ttl: 0
                        volumes.kubernetes.io/controller-managed-attach-detach: true
    CreationTimestamp:  Tue, 12 May 2020 16:50:54 +0800
    Taints:             check=zhang:NoSchedule    ### the taint has been added
    Unschedulable:      false

This adds a taint to k8s-node01 with key check, value zhang, and effect NoSchedule. It means that no Pod can be scheduled onto k8s-node01 unless it has a matching toleration.

Removing a taint

    [root@k8s-master taint]# kubectl taint nodes k8s-node01 check:NoSchedule-
    ##### or
    [root@k8s-master taint]# kubectl taint nodes k8s-node01 check=zhang:NoSchedule-
    node/k8s-node01 untainted
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl describe node k8s-node01
    Name:               k8s-node01
    Roles:              <none>
    Labels:             beta.kubernetes.io/arch=amd64
                        beta.kubernetes.io/os=linux
                        cpu-num=12
                        disk-type=ssd
                        kubernetes.io/arch=amd64
                        kubernetes.io/hostname=k8s-node01
                        kubernetes.io/os=linux
                        mem-num=48
    Annotations:        flannel.alpha.coreos.com/backend-data: {"VtepMAC":"3e:15:bb:f8:85:dc"}
                        flannel.alpha.coreos.com/backend-type: vxlan
                        flannel.alpha.coreos.com/kube-subnet-manager: true
                        flannel.alpha.coreos.com/public-ip: 10.0.0.111
                        kubeadm.alpha.kubernetes.io/cri-socket: /var/run/dockershim.sock
                        node.alpha.kubernetes.io/ttl: 0
                        volumes.kubernetes.io/controller-managed-attach-detach: true
    CreationTimestamp:  Tue, 12 May 2020 16:50:54 +0800
    Taints:             <none>    ### the taint has been removed
    Unschedulable:      false
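The add and remove commands differ only in the trailing "-" on the taint spec. As a rough illustration, the following Python sketch builds the argument list for either form without running it. The helper name taint_args is hypothetical; the command syntax it produces is the same kubectl taint syntax used in the transcripts above.

```python
import shlex
from typing import List


def taint_args(node: str, key: str, effect: str,
               value: str = "", remove: bool = False) -> List[str]:
    """Build the argument list for `kubectl taint nodes ...` (does not run it)."""
    # With a value the spec is key=value:effect, otherwise key:effect.
    spec = f"{key}={value}:{effect}" if value else f"{key}:{effect}"
    if remove:
        spec += "-"  # a trailing dash asks kubectl to remove the taint
    return ["kubectl", "taint", "nodes", node, spec]


# Add check=zhang:NoSchedule, then remove it again by key and effect.
print(shlex.join(taint_args("k8s-node01", "check", "NoSchedule", value="zhang")))
print(shlex.join(taint_args("k8s-node01", "check", "NoSchedule", remove=True)))
```

Returning a list rather than a string keeps the result safe to hand to subprocess.run if you do want to execute it against a real cluster.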

Tolerations

A tainted Node repels Pods according to the taint's effect (NoSchedule, PreferNoSchedule, or NoExecute), so to some degree Pods will not be scheduled onto that Node.

We can, however, set tolerations on a Pod. A Pod with a toleration can tolerate the presence of the matching taint and can be scheduled onto a Node that carries it.

pod.spec.tolerations examples

    tolerations:
    - key: "key"
      operator: "Equal"
      value: "value"
      effect: "NoSchedule"
    ---
    tolerations:
    - key: "key"
      operator: "Exists"
      effect: "NoSchedule"
    ---
    tolerations:
    - key: "key"
      operator: "Equal"
      value: "value"
      effect: "NoExecute"
      tolerationSeconds: 3600

Important notes:

- The key, value, and effect must match the taint set on the Node.

- When operator is Exists, the value is ignored; only the key and effect need to match.

- tolerationSeconds applies to tolerations of taints with effect NoExecute. When tolerationSeconds is specified, it is how long the Pod may keep running on the node after the taint is added.

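When no key is specified

When neither key nor effect is specified and operator is Exists, the toleration matches every taint (all keys, values, and effects).

    tolerations:
    - operator: "Exists"

When no effect is specified

When no effect is specified, the toleration matches every effect of taints with the given key.

    tolerations:
    - key: "key"
      operator: "Exists"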
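The matching rules described in this section (Equal versus Exists, an omitted key, an omitted effect) can be summarized in a short Python sketch. It is a model written for this article, not the Kubernetes implementation; the Taint and Toleration classes and the tolerates function are illustrative names, and an empty string stands in for an unspecified field.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Taint:
    key: str
    value: str
    effect: str


@dataclass(frozen=True)
class Toleration:
    key: str = ""             # empty: match any key (only meaningful with Exists)
    operator: str = "Equal"   # "Equal" or "Exists"
    value: str = ""
    effect: str = ""          # empty: match any effect


def tolerates(tol: Toleration, taint: Taint) -> bool:
    """Return True if the toleration matches the taint."""
    if tol.effect and tol.effect != taint.effect:
        return False
    if tol.key and tol.key != taint.key:
        return False
    if tol.operator == "Exists":
        return True  # the value is ignored
    return tol.value == taint.value  # operator "Equal"


taint = Taint("check-redis", "memdb", "NoSchedule")
print(tolerates(Toleration(operator="Exists"), taint))                     # tolerate everything
print(tolerates(Toleration(key="check-redis", operator="Exists"), taint))  # any effect of this key
print(tolerates(Toleration(key="check-redis", value="database",
                           effect="NoSchedule"), taint))                   # value differs
```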

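When there are multiple masters

When there are multiple masters, you can apply the following setting to avoid wasting resources:

    kubectl taint nodes Node-name node-role.kubernetes.io/master=:PreferNoSchedule

Note that this only adds a PreferNoSchedule taint. If the default node-role.kubernetes.io/master:NoSchedule taint is still present on that node, it has to be removed as well before ordinary Pods can be scheduled there.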

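How multiple taints and multiple tolerations are evaluated

You can set multiple taints on the same node and multiple tolerations on the same Pod. Kubernetes processes them like a filter: it starts with all of the node's taints, ignores those that are matched by one of the Pod's tolerations, and applies the effects of the remaining, un-ignored taints to the Pod. In particular:

- If there is at least one un-ignored taint with effect NoSchedule, Kubernetes will not schedule the Pod onto that node.

- If there is no un-ignored taint with effect NoSchedule, but there is at least one un-ignored taint with effect PreferNoSchedule, Kubernetes will try not to schedule the Pod onto that node.

- If there is at least one un-ignored taint with effect NoExecute, the Pod is evicted from the node (if it is already running there) and is not scheduled onto the node (if it is not yet running there).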
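The filter described above can be sketched in Python as follows. This is a simplified model, not scheduler code: taints are plain (key, value, effect) tuples, tolerations are dicts shaped like the YAML entries, and the function names and verdict strings are made up for illustration. The node in the example carries a hypothetical combination of two taints that appear separately elsewhere in this article.

```python
def matches(tol: dict, taint: tuple) -> bool:
    """True if one toleration matches one (key, value, effect) taint."""
    key, value, effect = taint
    if tol.get("effect") and tol["effect"] != effect:
        return False
    if tol.get("key") and tol["key"] != key:
        return False
    if tol.get("operator", "Equal") == "Exists":
        return True
    return tol.get("value", "") == value


def remaining_effects(taints: list, tolerations: list) -> set:
    """Effects of the taints that no toleration matches."""
    return {t[2] for t in taints
            if not any(matches(tol, t) for tol in tolerations)}


def verdict(taints: list, tolerations: list) -> str:
    effects = remaining_effects(taints, tolerations)
    if "NoExecute" in effects:
        return "evict and do not schedule"
    if "NoSchedule" in effects:
        return "do not schedule"
    if "PreferNoSchedule" in effects:
        return "avoid if possible"
    return "schedule normally"


# Hypothetical node carrying two taints at once.
taints = [("check", "zhang", "NoSchedule"), ("check-mem", "memdb", "NoExecute")]
tol_sched = {"key": "check", "operator": "Equal", "value": "zhang", "effect": "NoSchedule"}
tol_exec = {"key": "check-mem", "operator": "Exists", "effect": "NoExecute"}

print(verdict(taints, [tol_sched]))            # the NoExecute taint remains
print(verdict(taints, [tol_exec]))             # the NoSchedule taint remains
print(verdict(taints, [tol_sched, tol_exec]))  # nothing remains
```

A Pod that tolerates only the NoExecute taint is not scheduled onto the node, but if it were already running there it would not be evicted.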

Taint and toleration examples

Example: a Node taint with effect NoExecute

Remember to clear any existing taints first so that they do not affect the test.

Set up the following taints

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taint: (none)
    k8s-node02 taint: (none)

Commands to add the taints:

(None; no taints are added at this step.)

Commands to view the taints:

    kubectl describe node k8s-master | grep 'Taints' -A 5
    kubectl describe node k8s-node01 | grep 'Taints' -A 5
    kubectl describe node k8s-node02 | grep 'Taints' -A 5

Apart from the default taint on k8s-master, k8s-node01 and k8s-node02 have no taints.

yaml file

    [root@k8s-master taint]# pwd
    /root/k8s_practice/scheduler/taint
    [root@k8s-master taint]#
    [root@k8s-master taint]# cat noexecute_tolerations.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: noexec-tolerations-deploy
      labels:
        app: noexectolerations-deploy
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp-pod
            image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
              - containerPort: 80
          # Format when a toleration with tolerationSeconds is used
          # tolerations:
          # - key: "check-mem"
          #   operator: "Equal"
          #   value: "memdb"
          #   effect: "NoExecute"
          #   # How long the Pod may keep running on the Node once it is due to be evicted
          #   tolerationSeconds: 30

Apply the yaml file

    [root@k8s-master taint]# kubectl apply -f noexecute_tolerations.yaml
    deployment.apps/noexec-tolerations-deploy created
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get deploy -o wide
    NAME                        READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
    noexec-tolerations-deploy   6/6     6            6           10s   myapp-pod    registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                         READY   STATUS    RESTARTS   AGE   IP             NODE         NOMINATED NODE   READINESS GATES
    noexec-tolerations-deploy-85587896f9-2j848   1/1     Running   0          15s   10.244.4.101   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-jgqkn   1/1     Running   0          15s   10.244.2.141   k8s-node02   <none>           <none>
    noexec-tolerations-deploy-85587896f9-jmw5w   1/1     Running   0          15s   10.244.2.142   k8s-node02   <none>           <none>
    noexec-tolerations-deploy-85587896f9-s8x95   1/1     Running   0          15s   10.244.4.102   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-t82fj   1/1     Running   0          15s   10.244.4.103   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-wx9pz   1/1     Running   0          15s   10.244.2.143   k8s-node02   <none>           <none>

As shown above, the Pods are spread evenly across k8s-node01 and k8s-node02.

Now add a NoExecute taint to k8s-node02:

    kubectl taint nodes k8s-node02 check-mem=memdb:NoExecute

The taints on all nodes are now

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taint: (none)
    k8s-node02 taint: check-mem=memdb:NoExecute

Then check the Pods again

    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                         READY   STATUS    RESTARTS   AGE    IP             NODE         NOMINATED NODE   READINESS GATES
    noexec-tolerations-deploy-85587896f9-2j848   1/1     Running   0          2m2s   10.244.4.101   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-ch96j   1/1     Running   0          8s     10.244.4.106   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-cjrkb   1/1     Running   0          8s     10.244.4.105   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-qbq6d   1/1     Running   0          7s     10.244.4.104   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-s8x95   1/1     Running   0          2m2s   10.244.4.102   k8s-node01   <none>           <none>
    noexec-tolerations-deploy-85587896f9-t82fj   1/1     Running   0          2m2s   10.244.4.103   k8s-node01   <none>           <none>

As shown above, the Pods on k8s-node02 have been evicted, and their replacements were scheduled onto k8s-node01.

Pod with no tolerations

Remember to clear any existing taints first so that they do not affect the test.

Set up the following taints

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taint: check-nginx=web:PreferNoSchedule
    k8s-node02 taint: check-nginx=web:NoSchedule

Commands to add the taints:

    kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule
    kubectl taint nodes k8s-node02 check-nginx=web:NoSchedule

Commands to view the taints:

    kubectl describe node k8s-master | grep 'Taints' -A 5
    kubectl describe node k8s-node01 | grep 'Taints' -A 5
    kubectl describe node k8s-node02 | grep 'Taints' -A 5

yaml file

    [root@k8s-master taint]# pwd
    /root/k8s_practice/scheduler/taint
    [root@k8s-master taint]#
    [root@k8s-master taint]# cat no_tolerations.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: no-tolerations-deploy
      labels:
        app: notolerations-deploy
    spec:
      replicas: 5
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp-pod
            image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
              - containerPort: 80

Apply the yaml file

    [root@k8s-master taint]# kubectl apply -f no_tolerations.yaml
    deployment.apps/no-tolerations-deploy created
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get deploy -o wide
    NAME                    READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
    no-tolerations-deploy   5/5     5            5           9s    myapp-pod    registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                     READY   STATUS    RESTARTS   AGE   IP            NODE         NOMINATED NODE   READINESS GATES
    no-tolerations-deploy-85587896f9-6bjv8   1/1     Running   0          16s   10.244.4.54   k8s-node01   <none>           <none>
    no-tolerations-deploy-85587896f9-hbbjb   1/1     Running   0          16s   10.244.4.58   k8s-node01   <none>           <none>
    no-tolerations-deploy-85587896f9-jlmzw   1/1     Running   0          16s   10.244.4.56   k8s-node01   <none>           <none>
    no-tolerations-deploy-85587896f9-kfh2c   1/1     Running   0          16s   10.244.4.55   k8s-node01   <none>           <none>
    no-tolerations-deploy-85587896f9-wmp8b   1/1     Running   0          16s   10.244.4.57   k8s-node01   <none>           <none>

As shown above, the check-nginx taint on k8s-node02 has effect NoSchedule, so Pods cannot be scheduled onto that node. The check-nginx taint on k8s-node01 has effect PreferNoSchedule (avoid this node where possible), but it is the only node that satisfies the scheduling conditions, so all Pods were scheduled onto k8s-node01.

Pod with a single toleration

Remember to clear any existing taints first so that they do not affect the test.

Set up the following taints

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taint: check-nginx=web:PreferNoSchedule
    k8s-node02 taint: check-nginx=web:NoSchedule

Commands to add the taints:

    kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule
    kubectl taint nodes k8s-node02 check-nginx=web:NoSchedule

Commands to view the taints:

    kubectl describe node k8s-master | grep 'Taints' -A 5
    kubectl describe node k8s-node01 | grep 'Taints' -A 5
    kubectl describe node k8s-node02 | grep 'Taints' -A 5

yaml file

    [root@k8s-master taint]# pwd
    /root/k8s_practice/scheduler/taint
    [root@k8s-master taint]#
    [root@k8s-master taint]# cat one_tolerations.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: one-tolerations-deploy
      labels:
        app: onetolerations-deploy
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp-pod
            image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
              - containerPort: 80
          tolerations:
          - key: "check-nginx"
            operator: "Equal"
            value: "web"
            effect: "NoSchedule"

Apply the yaml file

    [root@k8s-master taint]# kubectl apply -f one_tolerations.yaml
    deployment.apps/one-tolerations-deploy created
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get deploy -o wide
    NAME                     READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
    one-tolerations-deploy   6/6     6            6           3s    myapp-pod    registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                      READY   STATUS    RESTARTS   AGE   IP            NODE         NOMINATED NODE   READINESS GATES
    one-tolerations-deploy-5757d6b559-gbj49   1/1     Running   0          7s    10.244.2.73   k8s-node02   <none>           <none>
    one-tolerations-deploy-5757d6b559-j9p6r   1/1     Running   0          7s    10.244.2.71   k8s-node02   <none>           <none>
    one-tolerations-deploy-5757d6b559-kpk9q   1/1     Running   0          7s    10.244.2.72   k8s-node02   <none>           <none>
    one-tolerations-deploy-5757d6b559-lsppn   1/1     Running   0          7s    10.244.4.65   k8s-node01   <none>           <none>
    one-tolerations-deploy-5757d6b559-rx72g   1/1     Running   0          7s    10.244.4.66   k8s-node01   <none>           <none>
    one-tolerations-deploy-5757d6b559-s8qr9   1/1     Running   0          7s    10.244.2.74   k8s-node02   <none>           <none>

As shown above, the Pod tolerates the NoSchedule taint on k8s-node02 but not the PreferNoSchedule taint on k8s-node01, so Pods are scheduled onto k8s-node02 in preference and onto k8s-node01 only where necessary. With a single Pod, it would normally be scheduled onto k8s-node02.
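The preference seen in this output can be reproduced with a rough Python model of the two steps involved: first drop nodes that still have an untolerated NoSchedule or NoExecute taint, then rank the rest by how many untolerated PreferNoSchedule taints they carry. This is a simplification for illustration only. The function names are hypothetical, and the real scheduler combines this preference with other scoring factors, which is why some Pods above still landed on k8s-node01.

```python
HARD = {"NoSchedule", "NoExecute"}


def matches(tol: dict, taint: tuple) -> bool:
    """True if one toleration matches one (key, value, effect) taint."""
    key, value, effect = taint
    if tol.get("effect") and tol["effect"] != effect:
        return False
    if tol.get("key") and tol["key"] != key:
        return False
    if tol.get("operator", "Equal") == "Exists":
        return True
    return tol.get("value", "") == value


def rank_nodes(nodes: dict, tolerations: list) -> list:
    """Return the names of feasible nodes, most preferred first."""
    scored = []
    for name, taints in nodes.items():
        left = [t for t in taints
                if not any(matches(tol, t) for tol in tolerations)]
        if any(effect in HARD for _, _, effect in left):
            continue  # filtered out: an untolerated hard taint remains
        soft = sum(1 for _, _, effect in left if effect == "PreferNoSchedule")
        scored.append((soft, name))
    return [name for _, name in sorted(scored)]


# Taints from this example, as (key, value, effect) tuples.
nodes = {
    "k8s-master": [("node-role.kubernetes.io/master", "", "NoSchedule")],
    "k8s-node01": [("check-nginx", "web", "PreferNoSchedule")],
    "k8s-node02": [("check-nginx", "web", "NoSchedule")],
}
tolerations = [{"key": "check-nginx", "operator": "Equal",
                "value": "web", "effect": "NoSchedule"}]

print(rank_nodes(nodes, tolerations))  # ['k8s-node02', 'k8s-node01']
print(rank_nodes(nodes, []))           # ['k8s-node01']
```

The second call corresponds to the previous example with no tolerations, where k8s-node01 was the only feasible node.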

Pod with multiple tolerations

Remember to clear any existing taints first so that they do not affect the test.

Set up the following taints

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taints: check-nginx=web:PreferNoSchedule, check-redis=memdb:NoSchedule
    k8s-node02 taints: check-nginx=web:NoSchedule, check-redis=database:NoSchedule

Commands to add the taints:

    kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule
    kubectl taint nodes k8s-node01 check-redis=memdb:NoSchedule
    kubectl taint nodes k8s-node02 check-nginx=web:NoSchedule
    kubectl taint nodes k8s-node02 check-redis=database:NoSchedule

Commands to view the taints:

    kubectl describe node k8s-master | grep 'Taints' -A 5
    kubectl describe node k8s-node01 | grep 'Taints' -A 5
    kubectl describe node k8s-node02 | grep 'Taints' -A 5

yaml file

    [root@k8s-master taint]# pwd
    /root/k8s_practice/scheduler/taint
    [root@k8s-master taint]#
    [root@k8s-master taint]# cat multi_tolerations.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: multi-tolerations-deploy
      labels:
        app: multitolerations-deploy
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp-pod
            image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
              - containerPort: 80
          tolerations:
          - key: "check-nginx"
            operator: "Equal"
            value: "web"
            effect: "NoSchedule"
          - key: "check-redis"
            operator: "Exists"
            effect: "NoSchedule"

Apply the yaml file

    [root@k8s-master taint]# kubectl apply -f multi_tolerations.yaml
    deployment.apps/multi-tolerations-deploy created
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get deploy -o wide
    NAME                       READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
    multi-tolerations-deploy   6/6     6            6           5s    myapp-pod    registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                        READY   STATUS    RESTARTS   AGE   IP             NODE         NOMINATED NODE   READINESS GATES
    multi-tolerations-deploy-776ff4449c-2csnk   1/1     Running   0          10s   10.244.2.171   k8s-node02   <none>           <none>
    multi-tolerations-deploy-776ff4449c-4d9fh   1/1     Running   0          10s   10.244.4.116   k8s-node01   <none>           <none>
    multi-tolerations-deploy-776ff4449c-c8fz5   1/1     Running   0          10s   10.244.2.173   k8s-node02   <none>           <none>
    multi-tolerations-deploy-776ff4449c-nj29f   1/1     Running   0          10s   10.244.4.115   k8s-node01   <none>           <none>
    multi-tolerations-deploy-776ff4449c-r7gsm   1/1     Running   0          10s   10.244.2.172   k8s-node02   <none>           <none>
    multi-tolerations-deploy-776ff4449c-s8t2n   1/1     Running   0          10s   10.244.2.174   k8s-node02   <none>           <none>

As shown above, the Pod's tolerations in this example are check-nginx=web:NoSchedule and check-redis=:NoSchedule (any value). Both taints on k8s-node02 are tolerated, while the check-nginx=web:PreferNoSchedule taint on k8s-node01 is not, so Pods are scheduled onto k8s-node02 in preference and onto k8s-node01 only where necessary.

Pod tolerating all effects of a given taint key

Remember to clear any existing taints first so that they do not affect the test.

Set up the following taints

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taint: check-redis=memdb:NoSchedule
    k8s-node02 taint: check-redis=database:NoSchedule

Commands to add the taints:

    kubectl taint nodes k8s-node01 check-redis=memdb:NoSchedule
    kubectl taint nodes k8s-node02 check-redis=database:NoSchedule

Commands to view the taints:

    kubectl describe node k8s-master | grep 'Taints' -A 5
    kubectl describe node k8s-node01 | grep 'Taints' -A 5
    kubectl describe node k8s-node02 | grep 'Taints' -A 5

yaml file

    [root@k8s-master taint]# pwd
    /root/k8s_practice/scheduler/taint
    [root@k8s-master taint]#
    [root@k8s-master taint]# cat key_tolerations.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: key-tolerations-deploy
      labels:
        app: keytolerations-deploy
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp-pod
            image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
              - containerPort: 80
          tolerations:
          - key: "check-redis"
            operator: "Exists"

Apply the yaml file

    [root@k8s-master taint]# kubectl apply -f key_tolerations.yaml
    deployment.apps/key-tolerations-deploy created
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get deploy -o wide
    NAME                     READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
    key-tolerations-deploy   6/6     6            6           21s   myapp-pod    registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                     READY   STATUS    RESTARTS   AGE   IP             NODE         NOMINATED NODE   READINESS GATES
    key-tolerations-deploy-db5c4c4db-2zqr8   1/1     Running   0          26s   10.244.2.170   k8s-node02   <none>           <none>
    key-tolerations-deploy-db5c4c4db-5qb5p   1/1     Running   0          26s   10.244.4.113   k8s-node01   <none>           <none>
    key-tolerations-deploy-db5c4c4db-7xmt6   1/1     Running   0          26s   10.244.2.169   k8s-node02   <none>           <none>
    key-tolerations-deploy-db5c4c4db-84rkj   1/1     Running   0          26s   10.244.4.114   k8s-node01   <none>           <none>
    key-tolerations-deploy-db5c4c4db-gszxg   1/1     Running   0          26s   10.244.2.168   k8s-node02   <none>           <none>
    key-tolerations-deploy-db5c4c4db-vlgh8   1/1     Running   0          26s   10.244.4.112   k8s-node01   <none>           <none>

As shown above, the Pod's toleration in this example is the key check-redis with operator Exists and no effect. It only has to match the key of the node's taint; the taint's value and effect do not matter. It therefore matches the taints on both k8s-node01 and k8s-node02.

Pod tolerating all taints

Remember to clear any existing taints first so that they do not affect the test.

Set up the following taints

    k8s-master taint: node-role.kubernetes.io/master:NoSchedule (built into k8s; use it as is, no need to add it)
    k8s-node01 taints: check-nginx=web:PreferNoSchedule, check-redis=memdb:NoSchedule
    k8s-node02 taints: check-nginx=web:NoSchedule, check-redis=database:NoSchedule

Commands to add the taints:

    kubectl taint nodes k8s-node01 check-nginx=web:PreferNoSchedule
    kubectl taint nodes k8s-node01 check-redis=memdb:NoSchedule
    kubectl taint nodes k8s-node02 check-nginx=web:NoSchedule
    kubectl taint nodes k8s-node02 check-redis=database:NoSchedule

Commands to view the taints:

    kubectl describe node k8s-master | grep 'Taints' -A 5
    kubectl describe node k8s-node01 | grep 'Taints' -A 5
    kubectl describe node k8s-node02 | grep 'Taints' -A 5

yaml file

    [root@k8s-master taint]# pwd
    /root/k8s_practice/scheduler/taint
    [root@k8s-master taint]#
    [root@k8s-master taint]# cat all_tolerations.yaml
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      name: all-tolerations-deploy
      labels:
        app: alltolerations-deploy
    spec:
      replicas: 6
      selector:
        matchLabels:
          app: myapp
      template:
        metadata:
          labels:
            app: myapp
        spec:
          containers:
          - name: myapp-pod
            image: registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1
            imagePullPolicy: IfNotPresent
            ports:
              - containerPort: 80
          tolerations:
          - operator: "Exists"

Apply the yaml file

    [root@k8s-master taint]# kubectl apply -f all_tolerations.yaml
    deployment.apps/all-tolerations-deploy created
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get deploy -o wide
    NAME                     READY   UP-TO-DATE   AVAILABLE   AGE   CONTAINERS   IMAGES                                                      SELECTOR
    all-tolerations-deploy   6/6     6            6           8s    myapp-pod    registry.cn-beijing.aliyuncs.com/google_registry/myapp:v1   app=myapp
    [root@k8s-master taint]#
    [root@k8s-master taint]# kubectl get pod -o wide
    NAME                                      READY   STATUS    RESTARTS   AGE   IP             NODE         NOMINATED NODE   READINESS GATES
    all-tolerations-deploy-566cdccbcd-4klc2   1/1     Running   0          12s   10.244.0.116   k8s-master   <none>           <none>
    all-tolerations-deploy-566cdccbcd-59vvc   1/1     Running   0          12s   10.244.0.115   k8s-master   <none>           <none>
    all-tolerations-deploy-566cdccbcd-cvw4s   1/1     Running   0          12s   10.244.2.175   k8s-node02   <none>           <none>
    all-tolerations-deploy-566cdccbcd-k8fzl   1/1     Running   0          12s   10.244.2.176   k8s-node02   <none>           <none>
    all-tolerations-deploy-566cdccbcd-s2pw7   1/1     Running   0          12s   10.244.4.118   k8s-node01   <none>           <none>
    all-tolerations-deploy-566cdccbcd-xzngt   1/1     Running   0          13s   10.244.4.117   k8s-node01   <none>           <none>

As shown above, the Pod in this example tolerates all taints, so it can be scheduled onto every k8s node, including the master.

