2.3 AutoEncoder

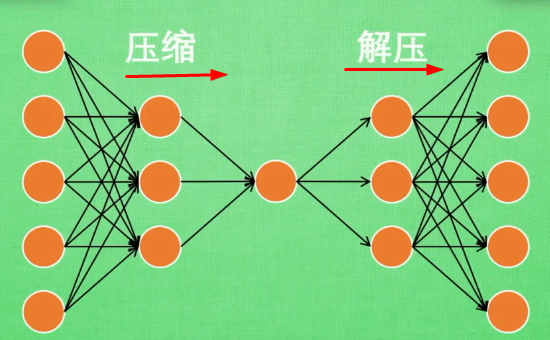

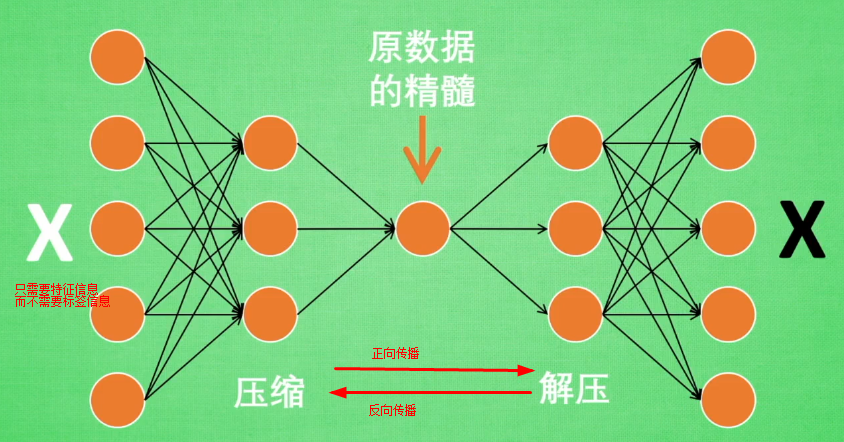

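An AutoEncoder consists of a compression (encoding) step followed by a decompression (decoding) step. It is an unsupervised learning technique for dimensionality reduction.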
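The compress-then-decompress idea can be sketched in a few lines of NumPy. This is a minimal illustration, not the TensorFlow code used later in this post: the function names (`encode`, `decode`, `train`), the synthetic 8-feature data, the 2-unit code, and the learning rate are all assumptions chosen for the example.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


def encode(X, W_enc, b_enc):
    """Compress each row of X into a short code."""
    return sigmoid(X @ W_enc + b_enc)


def decode(code, W_dec, b_dec):
    """Expand a code back to the original number of features."""
    return code @ W_dec + b_dec


def train(X, n_code=2, lr=0.5, steps=500, seed=0):
    """Fit encoder and decoder by gradient descent on the squared error."""
    rng = np.random.default_rng(seed)
    n_features = X.shape[1]
    W_enc = rng.normal(scale=0.1, size=(n_features, n_code))
    b_enc = np.zeros(n_code)
    W_dec = rng.normal(scale=0.1, size=(n_code, n_features))
    b_dec = np.zeros(n_features)
    losses = []
    for _ in range(steps):
        code = encode(X, W_enc, b_enc)
        X_hat = decode(code, W_dec, b_dec)
        err = X_hat - X
        losses.append(float(np.mean(err ** 2)))
        # Backpropagate the mean squared error: decoder first, then encoder.
        grad_out = 2.0 * err / err.size
        grad_pre = (grad_out @ W_dec.T) * code * (1.0 - code)
        W_dec -= lr * (code.T @ grad_out)
        b_dec -= lr * grad_out.sum(axis=0)
        W_enc -= lr * (X.T @ grad_pre)
        b_enc -= lr * grad_pre.sum(axis=0)
    return (W_enc, b_enc, W_dec, b_dec), losses


# Synthetic data: 200 samples with 8 features driven by 2 hidden factors.
rng = np.random.default_rng(1)
latent = rng.uniform(size=(200, 2))
X = sigmoid(latent @ rng.normal(size=(2, 8)))

params, losses = train(X)
code = encode(X, params[0], params[1])
print("code shape:", code.shape)
print("loss: %.4f -> %.4f" % (losses[0], losses[-1]))
```

No labels are used anywhere: the training target is the input itself, which is why the method counts as unsupervised.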

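A neural network takes in a large amount of information; sometimes the input runs to more than ten million values. Compression extracts the most representative information from the original image and reduces the volume of the input. The reduced data is then fed into the neural network, which makes learning much easier.

This is where autoencoding comes in. Autoencoding can be classed as unsupervised learning; like PCA, it can be used to reduce the dimensionality of the features.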
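For comparison, PCA itself can also be viewed as an encode/decode pair. The sketch below is an assumption-laden illustration (the helper names `pca_fit`, `pca_encode`, `pca_decode` and the synthetic rank-2 data are made up for this example); it projects 5-dimensional data onto 2 principal components and maps it back.

```python
import numpy as np


def pca_fit(X, n_components):
    """Return the feature means and the top principal directions of X."""
    mean = X.mean(axis=0)
    # Rows of vt are the principal directions, ordered by explained variance.
    _, _, vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, vt[:n_components]


def pca_encode(X, mean, components):
    """Project X onto the principal directions (dimensionality reduction)."""
    return (X - mean) @ components.T


def pca_decode(Z, mean, components):
    """Map low-dimensional codes back to the original feature space."""
    return Z @ components + mean


# Synthetic data that really lives on a 2-D plane inside 5-D space.
rng = np.random.default_rng(0)
latent = rng.normal(size=(100, 2))
X = latent @ rng.normal(size=(2, 5))

mean, components = pca_fit(X, 2)
Z = pca_encode(X, mean, components)
X_hat = pca_decode(Z, mean, components)
reconstruction_error = float(np.mean((X - X_hat) ** 2))
print("reduced shape:", Z.shape)
print("reconstruction error:", reconstruction_error)
```

PCA is limited to linear projections, whereas an autoencoder with nonlinear activations such as sigmoid can learn a nonlinear mapping.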

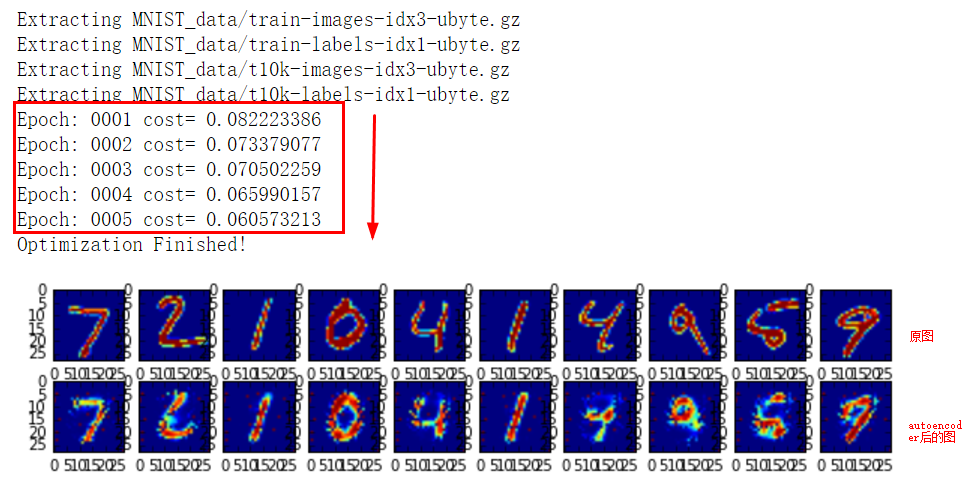

AutoEncoder code for handwritten digits (MNIST)

    # Note: this script uses the TensorFlow 1.x API (tf.placeholder, tf.Session).
    from __future__ import division, print_function, absolute_import

    import tensorflow as tf
    import numpy as np
    import matplotlib.pyplot as plt

    # Import MNIST data
    from tensorflow.examples.tutorials.mnist import input_data
    mnist = input_data.read_data_sets('MNIST_data', one_hot=False)

    # Visualize decoder setting
    # Parameters
    learning_rate = 0.01
    training_epochs = 5
    batch_size = 256
    display_step = 1
    examples_to_show = 10

    # Network Parameters
    n_input = 784  # MNIST data input (img shape: 28*28)

    # tf Graph input (only pictures)
    X = tf.placeholder("float", [None, n_input])

    # hidden layer settings
    n_hidden_1 = 256  # 1st layer num features
    n_hidden_2 = 128  # 2nd layer num features
    weights = {
        'encoder_h1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
        'encoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
        'decoder_h1': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_1])),
        'decoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_input])),
    }
    biases = {
        'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'decoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'decoder_b2': tf.Variable(tf.random_normal([n_input])),
    }

    # Building the encoder
    def encoder(x):
        # Encoder Hidden layer with sigmoid activation #1
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                       biases['encoder_b1']))
        # Encoder Hidden layer with sigmoid activation #2
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                       biases['encoder_b2']))
        return layer_2

    # Building the decoder
    def decoder(x):
        # Decoder Hidden layer with sigmoid activation #1
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                       biases['decoder_b1']))
        # Decoder Hidden layer with sigmoid activation #2
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                       biases['decoder_b2']))
        return layer_2

    """
    # Visualize encoder setting
    # Parameters
    learning_rate = 0.01    # 0.01 this learning rate will be better! Tested
    training_epochs = 10
    batch_size = 256
    display_step = 1

    # Network Parameters
    n_input = 784  # MNIST data input (img shape: 28*28)

    # tf Graph input (only pictures)
    X = tf.placeholder("float", [None, n_input])

    # hidden layer settings
    n_hidden_1 = 128
    n_hidden_2 = 64
    n_hidden_3 = 10
    n_hidden_4 = 2  # 2D show
    weights = {
        'encoder_h1': tf.Variable(tf.truncated_normal([n_input, n_hidden_1],)),
        'encoder_h2': tf.Variable(tf.truncated_normal([n_hidden_1, n_hidden_2],)),
        'encoder_h3': tf.Variable(tf.truncated_normal([n_hidden_2, n_hidden_3],)),
        'encoder_h4': tf.Variable(tf.truncated_normal([n_hidden_3, n_hidden_4],)),
        'decoder_h1': tf.Variable(tf.truncated_normal([n_hidden_4, n_hidden_3],)),
        'decoder_h2': tf.Variable(tf.truncated_normal([n_hidden_3, n_hidden_2],)),
        'decoder_h3': tf.Variable(tf.truncated_normal([n_hidden_2, n_hidden_1],)),
        'decoder_h4': tf.Variable(tf.truncated_normal([n_hidden_1, n_input],)),
    }
    biases = {
        'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'encoder_b3': tf.Variable(tf.random_normal([n_hidden_3])),
        'encoder_b4': tf.Variable(tf.random_normal([n_hidden_4])),
        'decoder_b1': tf.Variable(tf.random_normal([n_hidden_3])),
        'decoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'decoder_b3': tf.Variable(tf.random_normal([n_hidden_1])),
        'decoder_b4': tf.Variable(tf.random_normal([n_input])),
    }

    def encoder(x):
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                       biases['encoder_b1']))
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                       biases['encoder_b2']))
        layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['encoder_h3']),
                                       biases['encoder_b3']))
        layer_4 = tf.add(tf.matmul(layer_3, weights['encoder_h4']),
                         biases['encoder_b4'])
        return layer_4

    def decoder(x):
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                       biases['decoder_b1']))
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                       biases['decoder_b2']))
        layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['decoder_h3']),
                                       biases['decoder_b3']))
        layer_4 = tf.nn.sigmoid(tf.add(tf.matmul(layer_3, weights['decoder_h4']),
                                       biases['decoder_b4']))
        return layer_4
    """

    # Construct model
    encoder_op = encoder(X)
    decoder_op = decoder(encoder_op)

    # Prediction
    y_pred = decoder_op
    # Targets (Labels) are the input data.
    y_true = X

    # Define loss and optimizer, minimize the squared error
    cost = tf.reduce_mean(tf.pow(y_true - y_pred, 2))
    optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)

    # Launch the graph
    with tf.Session() as sess:
        # tf.initialize_all_variables() is no longer valid from
        # 2017-03-02 if using tensorflow >= 0.12
        if int((tf.__version__).split('.')[1]) < 12 and int((tf.__version__).split('.')[0]) < 1:
            init = tf.initialize_all_variables()
        else:
            init = tf.global_variables_initializer()
        sess.run(init)
        total_batch = int(mnist.train.num_examples/batch_size)
        # Training cycle
        for epoch in range(training_epochs):
            # Loop over all batches
            for i in range(total_batch):
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)  # max(x) = 1, min(x) = 0
                # Run optimization op (backprop) and cost op (to get loss value)
                _, c = sess.run([optimizer, cost], feed_dict={X: batch_xs})
            # Display logs per epoch step
            if epoch % display_step == 0:
                print("Epoch:", '%04d' % (epoch+1),
                      "cost=", "{:.9f}".format(c))

        print("Optimization Finished!")

        # Applying encode and decode over test set
        encode_decode = sess.run(
            y_pred, feed_dict={X: mnist.test.images[:examples_to_show]})
        # Compare original images with their reconstructions
        f, a = plt.subplots(2, 10, figsize=(10, 2))
        for i in range(examples_to_show):
            a[0][i].imshow(np.reshape(mnist.test.images[i], (28, 28)))
            a[1][i].imshow(np.reshape(encode_decode[i], (28, 28)))
        plt.show()

        # encoder_result = sess.run(encoder_op, feed_dict={X: mnist.test.images})
        # plt.scatter(encoder_result[:, 0], encoder_result[:, 1], c=mnist.test.labels)
        # plt.colorbar()
        # plt.show()
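To make the shapes in the "Visualize decoder setting" network easier to follow, here is a NumPy-only sketch of its forward pass and loss. It is an illustration under assumptions: the weights are random and untrained, `batch` is a random stand-in for four MNIST rows, and the helper `forward` is a made-up name. Only the layer sizes (784, 256, 128, 256, 784), the sigmoid activations, and the mean squared error come from the listing above.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


# Layer widths of the encoder (784 -> 256 -> 128) and decoder (128 -> 256 -> 784).
layer_sizes = [784, 256, 128, 256, 784]

rng = np.random.default_rng(0)
# One (weights, biases) pair per layer, drawn from a standard normal.
layers = [
    (rng.normal(size=(n_in, n_out)), rng.normal(size=n_out))
    for n_in, n_out in zip(layer_sizes[:-1], layer_sizes[1:])
]


def forward(x, layers):
    """Return the input followed by the output of every sigmoid layer."""
    activations = [x]
    for W, b in layers:
        activations.append(sigmoid(activations[-1] @ W + b))
    return activations


# Stand-in for a batch of 4 flattened 28x28 images with pixels in [0, 1).
batch = rng.uniform(size=(4, 784))

activations = forward(batch, layers)
code = activations[2]             # output of the encoder (128 features)
reconstruction = activations[-1]  # output of the decoder (784 pixels)

# Same quantity as tf.reduce_mean(tf.pow(y_true - y_pred, 2)).
cost = float(np.mean((batch - reconstruction) ** 2))

print([a.shape for a in activations])
print("cost:", cost)
```

Because the final layer is a sigmoid, every reconstructed pixel lies between 0 and 1, matching the range of the MNIST inputs noted in the training loop comment.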

Using an AutoEncoder for PCA-like dimensionality reduction

Code:

    # Note: this script uses the TensorFlow 1.x API (tf.placeholder, tf.Session).
    from __future__ import division, print_function, absolute_import

    import tensorflow as tf
    import numpy as np
    import matplotlib.pyplot as plt

    # Import MNIST data
    from tensorflow.examples.tutorials.mnist import input_data
    mnist = input_data.read_data_sets('MNIST_data', one_hot=False)

    """
    # Visualize decoder setting
    # Parameters
    learning_rate = 0.01
    training_epochs = 5
    batch_size = 256
    display_step = 1
    examples_to_show = 10

    # Network Parameters
    n_input = 784  # MNIST data input (img shape: 28*28)

    # tf Graph input (only pictures)
    X = tf.placeholder("float", [None, n_input])

    # hidden layer settings
    n_hidden_1 = 256  # 1st layer num features
    n_hidden_2 = 128  # 2nd layer num features
    weights = {
        'encoder_h1': tf.Variable(tf.random_normal([n_input, n_hidden_1])),
        'encoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_hidden_2])),
        'decoder_h1': tf.Variable(tf.random_normal([n_hidden_2, n_hidden_1])),
        'decoder_h2': tf.Variable(tf.random_normal([n_hidden_1, n_input])),
    }
    biases = {
        'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'decoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'decoder_b2': tf.Variable(tf.random_normal([n_input])),
    }

    # Building the encoder
    def encoder(x):
        # Encoder Hidden layer with sigmoid activation #1
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                       biases['encoder_b1']))
        # Encoder Hidden layer with sigmoid activation #2
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                       biases['encoder_b2']))
        return layer_2

    # Building the decoder
    def decoder(x):
        # Decoder Hidden layer with sigmoid activation #1
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                       biases['decoder_b1']))
        # Decoder Hidden layer with sigmoid activation #2
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                       biases['decoder_b2']))
        return layer_2
    """

    # Visualize encoder setting
    # Parameters
    learning_rate = 0.01    # 0.01 this learning rate will be better! Tested
    training_epochs = 10
    batch_size = 256
    display_step = 1

    # Network Parameters
    n_input = 784  # MNIST data input (img shape: 28*28)

    # tf Graph input (only pictures)
    X = tf.placeholder("float", [None, n_input])

    # hidden layer settings
    n_hidden_1 = 128
    n_hidden_2 = 64
    n_hidden_3 = 10
    n_hidden_4 = 2  # 2D show
    weights = {
        'encoder_h1': tf.Variable(tf.truncated_normal([n_input, n_hidden_1],)),
        'encoder_h2': tf.Variable(tf.truncated_normal([n_hidden_1, n_hidden_2],)),
        'encoder_h3': tf.Variable(tf.truncated_normal([n_hidden_2, n_hidden_3],)),
        'encoder_h4': tf.Variable(tf.truncated_normal([n_hidden_3, n_hidden_4],)),
        'decoder_h1': tf.Variable(tf.truncated_normal([n_hidden_4, n_hidden_3],)),
        'decoder_h2': tf.Variable(tf.truncated_normal([n_hidden_3, n_hidden_2],)),
        'decoder_h3': tf.Variable(tf.truncated_normal([n_hidden_2, n_hidden_1],)),
        'decoder_h4': tf.Variable(tf.truncated_normal([n_hidden_1, n_input],)),
    }
    biases = {
        'encoder_b1': tf.Variable(tf.random_normal([n_hidden_1])),
        'encoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'encoder_b3': tf.Variable(tf.random_normal([n_hidden_3])),
        'encoder_b4': tf.Variable(tf.random_normal([n_hidden_4])),
        'decoder_b1': tf.Variable(tf.random_normal([n_hidden_3])),
        'decoder_b2': tf.Variable(tf.random_normal([n_hidden_2])),
        'decoder_b3': tf.Variable(tf.random_normal([n_hidden_1])),
        'decoder_b4': tf.Variable(tf.random_normal([n_input])),
    }

    def encoder(x):
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['encoder_h1']),
                                       biases['encoder_b1']))
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['encoder_h2']),
                                       biases['encoder_b2']))
        layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['encoder_h3']),
                                       biases['encoder_b3']))
        # The 2-D code layer is linear (no sigmoid)
        layer_4 = tf.add(tf.matmul(layer_3, weights['encoder_h4']),
                         biases['encoder_b4'])
        return layer_4

    def decoder(x):
        layer_1 = tf.nn.sigmoid(tf.add(tf.matmul(x, weights['decoder_h1']),
                                       biases['decoder_b1']))
        layer_2 = tf.nn.sigmoid(tf.add(tf.matmul(layer_1, weights['decoder_h2']),
                                       biases['decoder_b2']))
        layer_3 = tf.nn.sigmoid(tf.add(tf.matmul(layer_2, weights['decoder_h3']),
                                       biases['decoder_b3']))
        layer_4 = tf.nn.sigmoid(tf.add(tf.matmul(layer_3, weights['decoder_h4']),
                                       biases['decoder_b4']))
        return layer_4

    # Construct model
    encoder_op = encoder(X)
    decoder_op = decoder(encoder_op)

    # Prediction
    y_pred = decoder_op
    # Targets (Labels) are the input data.
    y_true = X

    # Define loss and optimizer, minimize the squared error
    cost = tf.reduce_mean(tf.pow(y_true - y_pred, 2))
    optimizer = tf.train.AdamOptimizer(learning_rate).minimize(cost)

    # Launch the graph
    with tf.Session() as sess:
        # tf.initialize_all_variables() is no longer valid from
        # 2017-03-02 if using tensorflow >= 0.12
        if int((tf.__version__).split('.')[1]) < 12 and int((tf.__version__).split('.')[0]) < 1:
            init = tf.initialize_all_variables()
        else:
            init = tf.global_variables_initializer()
        sess.run(init)
        total_batch = int(mnist.train.num_examples/batch_size)
        # Training cycle
        for epoch in range(training_epochs):
            # Loop over all batches
            for i in range(total_batch):
                batch_xs, batch_ys = mnist.train.next_batch(batch_size)  # max(x) = 1, min(x) = 0
                # Run optimization op (backprop) and cost op (to get loss value)
                _, c = sess.run([optimizer, cost], feed_dict={X: batch_xs})
            # Display logs per epoch step
            if epoch % display_step == 0:
                print("Epoch:", '%04d' % (epoch+1),
                      "cost=", "{:.9f}".format(c))

        print("Optimization Finished!")

        # # Applying encode and decode over test set
        # encode_decode = sess.run(
        #     y_pred, feed_dict={X: mnist.test.images[:examples_to_show]})
        # # Compare original images with their reconstructions
        # f, a = plt.subplots(2, 10, figsize=(10, 2))
        # for i in range(examples_to_show):
        #     a[0][i].imshow(np.reshape(mnist.test.images[i], (28, 28)))
        #     a[1][i].imshow(np.reshape(encode_decode[i], (28, 28)))
        # plt.show()

        # Encode the whole test set to 2-D and color each point by its digit label
        encoder_result = sess.run(encoder_op, feed_dict={X: mnist.test.images})
        plt.scatter(encoder_result[:, 0], encoder_result[:, 1], c=mnist.test.labels)
        plt.colorbar()
        plt.show()

The result is displayed as a 2-D scatter plot of the encoded test images, colored by digit label.
