LogisticRegression in MLLib

Example

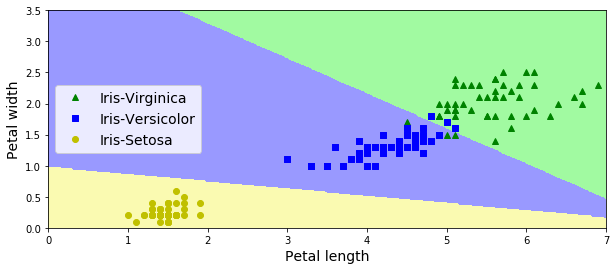

Train a logistic regression model on the iris data. The features are petal width and petal length, and the classification target has three classes.

    import org.apache.spark.mllib.classification.LogisticRegressionWithLBFGS
    import org.apache.spark.mllib.evaluation.MulticlassMetrics
    import org.apache.spark.mllib.linalg.Vectors
    import org.apache.spark.mllib.regression.LabeledPoint
    import org.apache.spark.rdd.RDD
    import org.apache.spark.sql.SparkSession

    object Test1 extends App {
      val spark = SparkSession
        .builder
        .appName("StructuredNetworkWordCountWindowed")
        .master("local[3]")
        .config("spark.sql.shuffle.partitions", 3)
        .config("spark.sql.autoBroadcastJoinThreshold", 1)
        .getOrCreate()

      spark.sparkContext.setLogLevel("INFO")
      val sc = spark.sparkContext

      // Each line holds two feature values followed by an integer class label,
      // separated by whitespace.
      val data: RDD[LabeledPoint] = sc.textFile("iris.txt").map { line =>
        val linesp = line.split("\\s+")
        LabeledPoint(linesp(2).toInt, Vectors.dense(linesp(0).toDouble, linesp(1).toDouble))
      }

      // Split data into training (60%) and test (40%).
      val splits = data.randomSplit(Array(0.6, 0.4), seed = 11L)
      val training = splits(0).cache()
      val test = splits(1)

      // Run the training algorithm to build the model.
      val model = new LogisticRegressionWithLBFGS()
        .setIntercept(true)
        .setNumClasses(3)
        .run(training)

      // Predict a class label for each test point and pair it with the true label.
      val predictionAndLabels = test.map { case LabeledPoint(label, features) =>
        val prediction = model.predict(features)
        (prediction, label)
      }

      // Get evaluation metrics.
      val metrics = new MulticlassMetrics(predictionAndLabels)
      val accuracy = metrics.accuracy
      println(s"Accuracy = $accuracy")
    }

Training result

Accuracy = 0.9516129032258065

model : org.apache.spark.mllib.classification.LogisticRegressionModel: intercept = 0.0, numFeatures = 6, numClasses = 3, threshold = 0.5

weights = [10.806033250918638,59.0125055499883,-74.5967318848371,15.249528477342315,72.68333443959429,-119.02776352645247]
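The six weights above can be read as two blocks of three values. The sketch below assumes the layout used for multinomial models in spark.mllib: class 0 is a pivot with margin 0, and each of the remaining K - 1 classes owns one block holding its feature weights followed by its intercept. `PivotPredictSketch` and its members are hypothetical names introduced for illustration; this is not the library code.

```scala
object PivotPredictSketch {
  // Weights printed by the trained model above.
  val irisWeights: Array[Double] = Array(
    10.806033250918638, 59.0125055499883, -74.5967318848371,
    15.249528477342315, 72.68333443959429, -119.02776352645247)

  // Assumed layout: (numClasses - 1) blocks, each [w_1, ..., w_N, intercept].
  // Class 0 is the pivot and has an implicit margin of 0.
  def predict(weights: Array[Double], numClasses: Int, x: Array[Double]): Int = {
    val blockSize = weights.length / (numClasses - 1)
    val withIntercept = blockSize == x.length + 1
    var bestClass = 0
    var maxMargin = 0.0
    for (i <- 0 until numClasses - 1) {
      var margin = 0.0
      for (j <- x.indices) margin += weights(i * blockSize + j) * x(j)
      if (withIntercept) margin += weights(i * blockSize + x.length)
      // A non-pivot class wins only if its margin beats every margin seen so far.
      if (margin > maxMargin) { maxMargin = margin; bestClass = i + 1 }
    }
    bestClass
  }
}
```

Under this reading, the intercepts are stored inside the weight vector, which would explain why the printed model reports `intercept = 0.0`.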

How the model partitions the feature space (for the plotting code, see http://www.cnblogs.com/luweiseu/p/7826679.html):

The ML LogisticRegression algorithm

The algorithm flow is in:

org.apache.spark.ml.classification.LogisticRegression

    protected[org.apache.spark] def train(dataset: Dataset[_],
        handlePersistence: Boolean): LogisticRegressionModel

The core of the algorithm is:

    val costFun = new LogisticCostFun(instances, numClasses, $(fitIntercept),
      $(standardization), bcFeaturesStd, regParamL2, multinomial = isMultinomial,
      $(aggregationDepth))

LogisticCostFun implements Breeze's DiffFunction[T] and computes the multinomial (softmax) logistic loss function, as used in multi-class classification (it is also used in binary logistic regression). It returns the loss and gradient with L2 regularization at a particular point (coefficients).

The function computes the gradient matrix of the coefficients and the loss in a distributed fashion:

    val logisticAggregator = {
      // Every training instance contributes to the gradient matrix.
      val seqOp = (c: LogisticAggregator, instance: Instance) => c.add(instance)
      // Merge the aggregators of the individual partitions.
      val combOp = (c1: LogisticAggregator, c2: LogisticAggregator) => c1.merge(c2)

      // Spark aggregation call.
      instances.treeAggregate(
        new LogisticAggregator(bcCoeffs, bcFeaturesStd, numClasses, fitIntercept,
          multinomial)
      )(seqOp, combOp, aggregationDepth)
    }
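The seqOp/combOp pattern above can be illustrated without Spark. The following is a minimal sketch in which nested Scala sequences play the role of partitions; `LossAgg` is a hypothetical stand-in for LogisticAggregator that only sums per-instance losses, and the flat merge at the end replaces the multi-level tree that `treeAggregate` would build.

```scala
object TreeAggregateSketch {
  // Hypothetical stand-in for LogisticAggregator: a running loss sum and an instance count.
  final case class LossAgg(lossSum: Double = 0.0, count: Long = 0L) {
    def add(loss: Double): LossAgg = LossAgg(lossSum + loss, count + 1)
    def merge(other: LossAgg): LossAgg =
      LossAgg(lossSum + other.lossSum, count + other.count)
  }

  def aggregate(partitions: Seq[Seq[Double]]): LossAgg = {
    // seqOp folds one instance into the aggregator of its partition.
    val seqOp = (agg: LossAgg, loss: Double) => agg.add(loss)
    // combOp merges the aggregators of two partitions.
    val combOp = (a: LossAgg, b: LossAgg) => a.merge(b)
    partitions.map(_.foldLeft(LossAgg())(seqOp)).foldLeft(LossAgg())(combOp)
  }
}
```

Because `merge` is associative, the order in which partition results are combined does not change the final sum, which is what makes a tree-shaped reduction possible.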

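Breeze convex optimization:

LogisticCostFun is passed as an argument to Breeze's convex optimization module (for example LBFGSB), which computes the optimal coefficients: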

    val states = optimizer.iterations(new CachedDiffFunction(costFun),
      new BDV[Double](initialCoefWithInterceptMatrix.toArray))
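The contract between the cost function and the optimizer is simple: given a point, return the loss and the gradient at that point. The sketch below shows that contract in plain Scala. `DiffFun` is a hypothetical analogue of Breeze's DiffFunction, and plain gradient descent is swapped in for L-BFGS purely to keep the example dependency-free; it is not what Spark runs.

```scala
object CostFunSketch {
  // Hypothetical analogue of Breeze's DiffFunction: returns (value, gradient) at x.
  trait DiffFun {
    def calculate(x: Array[Double]): (Double, Array[Double])
  }

  // Toy convex objective f(x) = sum_i (x_i - 3)^2, minimized at x_i = 3.
  val quadratic: DiffFun = new DiffFun {
    def calculate(x: Array[Double]): (Double, Array[Double]) =
      (x.map(v => (v - 3.0) * (v - 3.0)).sum, x.map(v => 2.0 * (v - 3.0)))
  }

  // Fixed-step gradient descent, standing in for the L-BFGS iterations.
  def minimize(f: DiffFun, init: Array[Double], step: Double, iters: Int): Array[Double] = {
    var x = init.clone()
    for (_ <- 0 until iters) {
      val (_, grad) = f.calculate(x)
      x = x.zip(grad).map { case (xi, gi) => xi - step * gi }
    }
    x
  }
}
```

For example, `CostFunSketch.minimize(CostFunSketch.quadratic, Array(0.0, 10.0), 0.25, 50)` moves both coordinates toward 3.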

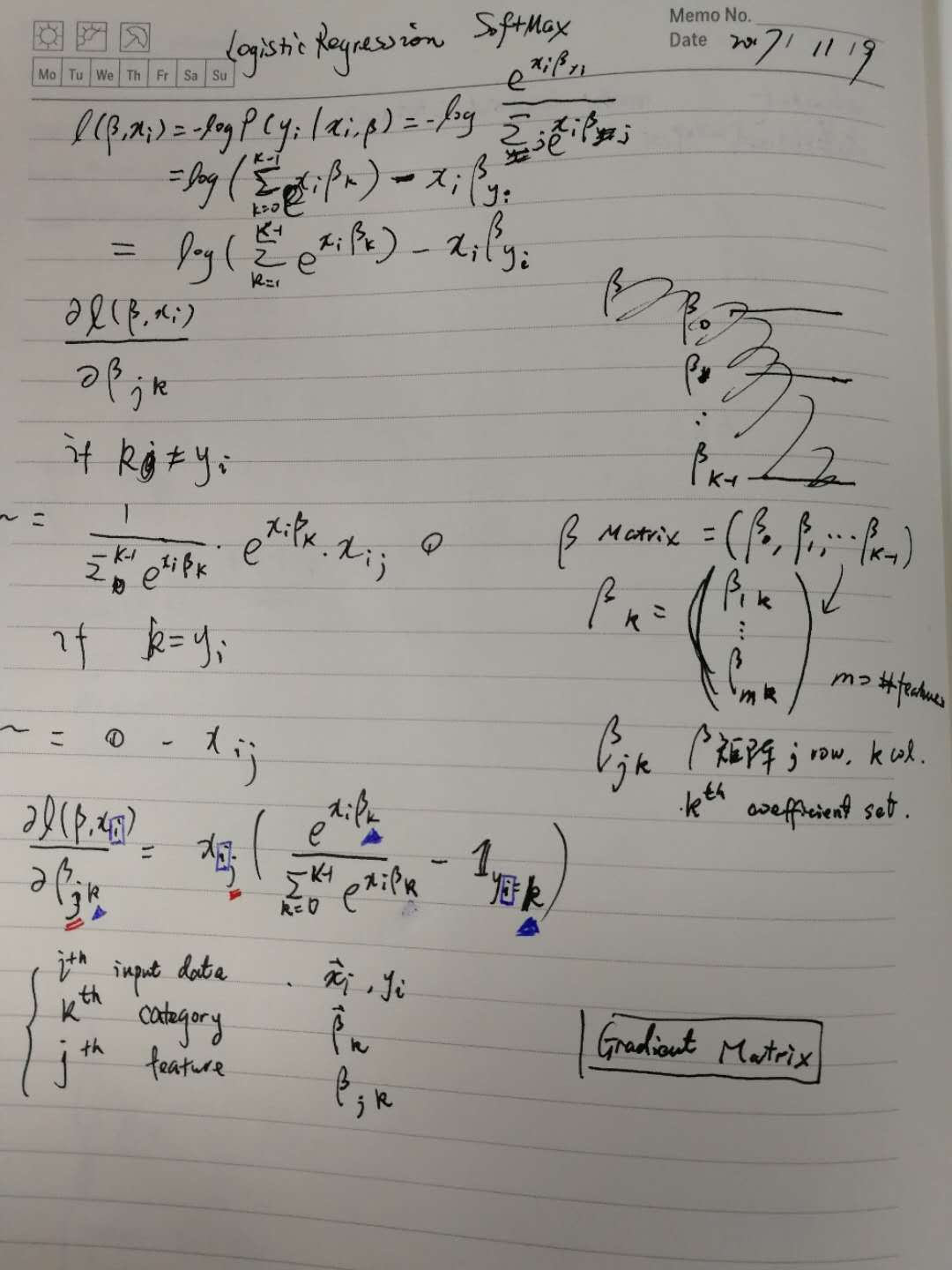

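LogisticCostFun gradient computation (LogisticAggregator)

This module contains the iterative (online) logic that LogisticRegression uses when training a multi-class classifier.

The main logic is: given a training sample \(x_i\), compute the contribution of that sample to each element \(\beta_{j,k}\) of the gradient matrix.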

LogisticAggregator computes the gradient and loss for binary or multinomial logistic (softmax) loss function, as used in classification for instances in sparse or dense vector in an online fashion.

Two LogisticAggregators can be merged together to have a summary of loss and gradient of the corresponding joint dataset.

To improve the convergence rate during optimization, and to prevent features with very large variances from exerting an overly large influence during model training, packages like R's GLMNET scale the features to unit variance and remove the mean in order to reduce the condition number. The model is then trained in this scaled space, but returns the coefficients in the original scale. See page 9 in http://cran.r-project.org/web/packages/glmnet/glmnet.pdf

However, we don't want to apply org.apache.spark.ml.feature.StandardScaler to the training dataset and then cache the standardized dataset, since that creates a lot of overhead. As a result, we perform the scaling implicitly when we compute the objective function (though we do not subtract the mean).
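The implicit scaling described above amounts to dividing each feature value by its standard deviation at the moment the margin is computed, instead of materializing a standardized copy of the data. A minimal sketch, assuming zero-variance features are simply skipped; `ImplicitScalingSketch` and its parameter names are hypothetical:

```scala
object ImplicitScalingSketch {
  // margin = intercept + sum_j beta_j * x_j / std_j
  // The mean is not subtracted, so sparse inputs stay sparse.
  def margin(beta: Array[Double], intercept: Double,
             x: Array[Double], std: Array[Double]): Double = {
    var sum = intercept
    for (j <- x.indices if std(j) != 0.0 && x(j) != 0.0) {
      sum += beta(j) * x(j) / std(j)
    }
    sum
  }
}
```

The coefficients learned this way live in the scaled space, so they would still have to be divided by the same standard deviations to be expressed in the original scale.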

Note that there is a difference between multinomial (softmax) and binary loss. The binary case uses one outcome class as a "pivot" and regresses the other class against the pivot. In the multinomial case, the softmax loss function is used to model each class probability independently. Using softmax loss produces K sets of coefficients, while using a pivot class produces K - 1 sets of coefficients (a single coefficient vector in the binary case). In the binary case, we can say that the coefficients are shared between the positive and negative classes. When regularization is applied, multinomial (softmax) loss will produce a result different from binary loss since the positive and negative don't share the coefficients while the binary regression shares the coefficients between positive and negative.
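Without regularization the two parameterizations describe the same probabilities: a two-class softmax with margins \(m_0\) and \(m_1\) gives the same positive-class probability as a pivot (sigmoid) model whose single margin is \(m_1 - m_0\). The sketch below checks this numerically; the object and function names are hypothetical.

```scala
object PivotVsSoftmaxSketch {
  // K sets of margins, one per class.
  def softmax(margins: Array[Double]): Array[Double] = {
    val exps = margins.map(m => math.exp(m))
    val total = exps.sum
    exps.map(_ / total)
  }

  // Single margin of the positive class relative to the pivot class.
  def sigmoid(z: Double): Double = 1.0 / (1.0 + math.exp(-z))
}
```

The difference only shows up once a penalty is added, because the softmax form has two coefficient vectors to shrink while the pivot form has one.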

The following is a mathematical derivation for the multinomial (softmax) loss.

The probability of the multinomial outcome \(y\) taking on any of the K possible outcomes is:

\[
P(y_i=0|\vec{x}_i, \beta) = \frac{e^{\vec{x}_i^T \vec{\beta}_0}}{\sum_{k=0}^{K-1} e^{\vec{x}_i^T \vec{\beta}_k}} \\
P(y_i=1|\vec{x}_i, \beta) = \frac{e^{\vec{x}_i^T \vec{\beta}_1}}{\sum_{k=0}^{K-1} e^{\vec{x}_i^T \vec{\beta}_k}} \\
P(y_i=K-1|\vec{x}_i, \beta) = \frac{e^{\vec{x}_i^T \vec{\beta}_{K-1}}\,}{\sum_{k=0}^{K-1} e^{\vec{x}_i^T \vec{\beta}_k}}
\]

The model coefficients \(\beta = (\beta_0, \beta_1, \beta_2, ..., \beta_{K-1})\) become a matrix which has dimension of \(K \times (N+1)\) if the intercepts are added. If the intercepts are not added, the dimension will be \(K \times N\).

Note that the coefficients in the model above lack identifiability. That is, any constant scalar can be added to all of the coefficients and the probabilities remain the same.

\[
\begin{aligned}
\frac{e^{\vec{x}_i^T \left(\vec{\beta}_0 + \vec{c}\right)}}{\sum_{k=0}^{K-1} e^{\vec{x}_i^T \left(\vec{\beta}_k + \vec{c}\right)}}
&= \frac{e^{\vec{x}_i^T \vec{\beta}_0}e^{\vec{x}_i^T \vec{c}}\,}{e^{\vec{x}_i^T \vec{c}} \sum_{k=0}^{K-1} e^{\vec{x}_i^T \vec{\beta}_k}} \\
&= \frac{e^{\vec{x}_i^T \vec{\beta}_0}}{\sum_{k=0}^{K-1} e^{\vec{x}_i^T \vec{\beta}_k}}
\end{aligned}
\]

However, when regularization is added to the loss function, the coefficients are indeed identifiable because there is only one set of coefficients which minimizes the regularization term. When no regularization is applied, we choose the coefficients with the minimum L2 penalty for consistency and reproducibility. For further discussion see:

Friedman, et al. "Regularization Paths for Generalized Linear Models via Coordinate Descent"
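The lack of identifiability discussed above is easy to check numerically: adding the same vector \(\vec{c}\) to every \(\vec{\beta}_k\) shifts every margin by \(\vec{x}_i^T \vec{c}\) and leaves the class probabilities unchanged. A minimal sketch with made-up numbers; all names are hypothetical:

```scala
object IdentifiabilitySketch {
  // beta(k) is the coefficient vector of class k; returns P(y = k | x) for every k.
  def probs(beta: Array[Array[Double]], x: Array[Double]): Array[Double] = {
    val margins = beta.map(row => row.zip(x).map { case (b, v) => b * v }.sum)
    val exps = margins.map(m => math.exp(m))
    val total = exps.sum
    exps.map(_ / total)
  }

  // Adds the same vector c to the coefficients of every class.
  def shift(beta: Array[Array[Double]], c: Array[Double]): Array[Array[Double]] =
    beta.map(row => row.zip(c).map { case (b, ci) => b + ci })
}
```

Calling `probs` on `beta` and on `shift(beta, c)` for the same `x` should give the same probabilities up to floating-point error.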

The loss of the objective function for a single instance of data (we do not include the regularization term here for simplicity) can be written as

\[
\begin{aligned}
\ell\left(\beta, x_i\right) &= -log{P\left(y_i \middle| \vec{x}_i, \beta\right)} \\
&= log\left(\sum_{k=0}^{K-1}e^{\vec{x}_i^T \vec{\beta}_k}\right) - \vec{x}_i^T \vec{\beta}_y \\
&= log\left(\sum_{k=0}^{K-1} e^{margins_k}\right) - margins_y
\end{aligned}
\]

where \({margins}_k = \vec{x}_i^T \vec{\beta}_k\).

For optimization, we have to calculate the first derivative of the loss function, and a simple calculation shows that

\[
\begin{aligned}
\frac{\partial \ell(\beta, \vec{x}_i, w_i)}{\partial \beta_{j, k}}
&= x_{i,j} \cdot w_i \cdot \left(\frac{e^{\vec{x}_i \cdot \vec{\beta}_k}}{\sum_{k'=0}^{K-1} e^{\vec{x}_i \cdot \vec{\beta}_{k'}}\,} - I_{y=k}\right) \\
&= x_{i, j} \cdot w_i \cdot multiplier_k
\end{aligned}
\]

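where \(w_i\) is the sample weight, \(I_{y=k}\) is an indicator function

\[
I_{y=k} = \begin{cases}
1 & y = k \\
0 & else
\end{cases}
\]

and

\[
multiplier_k = \left(\frac{e^{\vec{x}_i \cdot \vec{\beta}_k}}{\sum_{k'=0}^{K-1} e^{\vec{x}_i \cdot \vec{\beta}_{k'}}} - I_{y=k}\right)
\]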
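The loss and gradient formulas above translate almost line by line into code. The following is a direct, deliberately naive sketch for one instance; `beta(k)` is assumed to hold the coefficient vector of class k, and all names are hypothetical. It does not guard against overflow in the exponentials.

```scala
object SoftmaxGradientSketch {
  // margins_k = x . beta_k
  def margins(beta: Array[Array[Double]], x: Array[Double]): Array[Double] =
    beta.map(row => row.zip(x).map { case (b, v) => b * v }.sum)

  // loss = log(sum_k exp(margins_k)) - margins_y
  def loss(beta: Array[Array[Double]], x: Array[Double], y: Int): Double = {
    val m = margins(beta, x)
    math.log(m.map(v => math.exp(v)).sum) - m(y)
  }

  // multiplier_k = exp(margins_k) / sum_k' exp(margins_k') - I(y = k)
  def multipliers(beta: Array[Array[Double]], x: Array[Double], y: Int): Array[Double] = {
    val exps = margins(beta, x).map(v => math.exp(v))
    val total = exps.sum
    exps.zipWithIndex.map { case (e, k) => e / total - (if (k == y) 1.0 else 0.0) }
  }

  // gradient(k)(j) = x_j * w * multiplier_k
  def gradient(beta: Array[Array[Double]], x: Array[Double], y: Int,
               w: Double): Array[Array[Double]] =
    multipliers(beta, x, y).map(mk => x.map(xj => xj * w * mk))
}
```

With all coefficients at zero and three classes, every class has probability 1/3, so the loss is \(log(3)\) and the multiplier of the true class is \(1/3 - 1\).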

If any of the margins is larger than 709.78, the numerical computation of the multiplier and the loss function will suffer from arithmetic overflow. This issue occurs when there are outliers in the data which are far away from the hyperplane, and it causes training to fail once infinity is introduced. Note that this is only a concern when max(margins) > 0.

Fortunately, when max(margins) = maxMargin > 0, the loss function and the multiplier can easily be rewritten into the following equivalent numerically stable formula.

\[
\ell\left(\beta, x_i\right) = log\left(\sum_{k=0}^{K-1} e^{margins_k - maxMargin}\right) - margins_{y} + maxMargin
\]

Note that each term, \((margins_k - maxMargin)\) in the exponential is no greater than zero; as a result, overflow will not happen with this formula.

For \(multiplier\), a similar trick can be applied as the following,

\[
multiplier_k = \left(\frac{e^{\vec{x}_i \cdot \vec{\beta}_k - maxMargin}}{\sum_{k'=0}^{K-1} e^{\vec{x}_i \cdot \vec{\beta}_{k'} - maxMargin}} - I_{y=k}\right)
\]
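The effect of subtracting maxMargin can be seen with margins far beyond the overflow threshold. The sketch below compares the naive loss with the stable form given above; the function names are hypothetical, and the shift is applied only when the largest margin is positive, as the text describes.

```scala
object StableSoftmaxSketch {
  // Naive form: exp(margin) overflows to Infinity once a margin exceeds about 709.78.
  def naiveLoss(margins: Array[Double], y: Int): Double =
    math.log(margins.map(m => math.exp(m)).sum) - margins(y)

  // Stable form: every exponent is <= 0 after subtracting maxMargin.
  def stableLoss(margins: Array[Double], y: Int): Double = {
    val maxMargin = margins.max
    if (maxMargin > 0.0) {
      math.log(margins.map(m => math.exp(m - maxMargin)).sum) - margins(y) + maxMargin
    } else {
      naiveLoss(margins, y)
    }
  }

  // Same shift applied to numerator and denominator of the multiplier.
  def stableMultipliers(margins: Array[Double], y: Int): Array[Double] = {
    val shift = math.max(margins.max, 0.0)
    val exps = margins.map(m => math.exp(m - shift))
    val total = exps.sum
    exps.zipWithIndex.map { case (e, k) => e / total - (if (k == y) 1.0 else 0.0) }
  }
}
```

For margins such as (1000, 999, 0), the naive loss evaluates to infinity while the stable form stays finite.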

@param bcCoefficients The broadcast coefficients corresponding to the features.

@param bcFeaturesStd The broadcast standard deviation values of the features.

@param numClasses the number of possible outcomes for k classes classification problem in Multinomial Logistic Regression.

@param fitIntercept Whether to fit an intercept term.

@param multinomial Whether to use multinomial (softmax) or binary loss

@note In order to avoid unnecessary computation during calculation of the gradient updates we lay out the coefficients in column major order during training. This allows us to perform feature standardization once, while still retaining sequential memory access for speed. We convert back to row major order when we create the model, since this form is optimal for the matrix operations used for prediction.
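The benefit of the column major layout mentioned in the note can be sketched as follows. The example assumes a K x N coefficient matrix (rows are classes, columns are features, no intercepts) flattened so that the K coefficients of one feature are adjacent; `ColumnMajorSketch` and its members are hypothetical names.

```scala
object ColumnMajorSketch {
  // Flat index of the coefficient for feature j and class k in column major order.
  def index(j: Int, k: Int, numClasses: Int): Int = j * numClasses + k

  def margins(coef: Array[Double], x: Array[Double],
              std: Array[Double], numClasses: Int): Array[Double] = {
    val m = Array.fill(numClasses)(0.0)
    for (j <- x.indices if x(j) != 0.0 && std(j) != 0.0) {
      // The feature value is standardized once, then reused for every class.
      val scaled = x(j) / std(j)
      // The inner loop reads numClasses consecutive array slots.
      for (k <- 0 until numClasses) m(k) += coef(index(j, k, numClasses)) * scaled
    }
    m
  }
}
```

With a row major layout the same loop would either jump through memory with a stride of N or recompute the standardized value once per class.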
