surging Chat: Separating Netty's Asynchronous Non-blocking IO Threads from Business Threads

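Preface

surging has been quiet publicly for a while, but I have not been idle. In response to customer needs I added quite a few features, which also brought in some side income: protocol conversion, the consul watcher mechanism, a JAVA version, upgrading skywalking support to 8.0, and upgrading to .NET 6.0. The customer has also extended it on their own with a service-orchestration workflow engine, and later they will need custom extensions for protocols such as CoAP and XMPP. The purpose of this article is to describe how the netty-based asynchronous non-blocking business-logic handling was changed.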

Problem Description

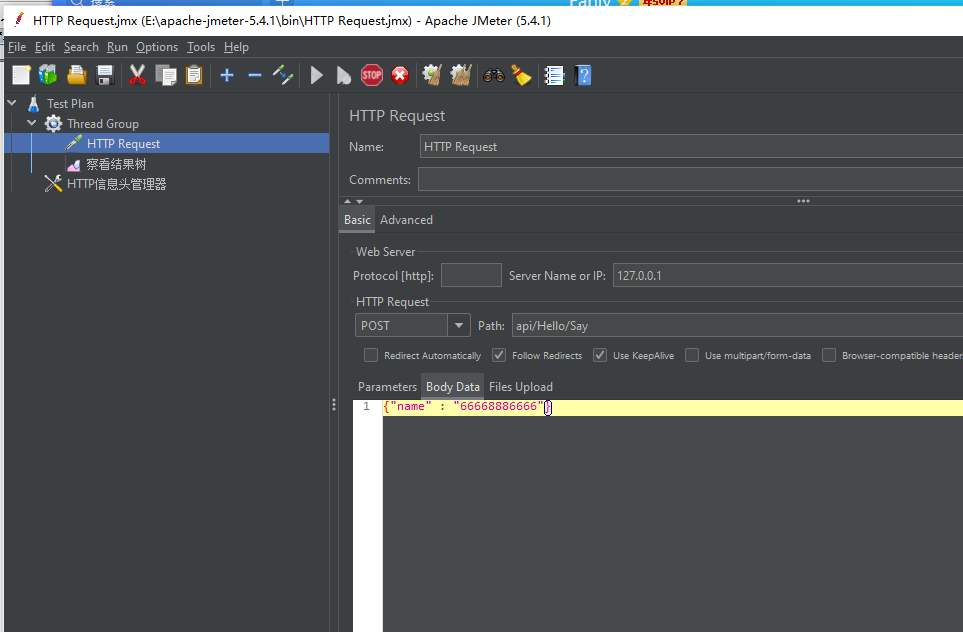

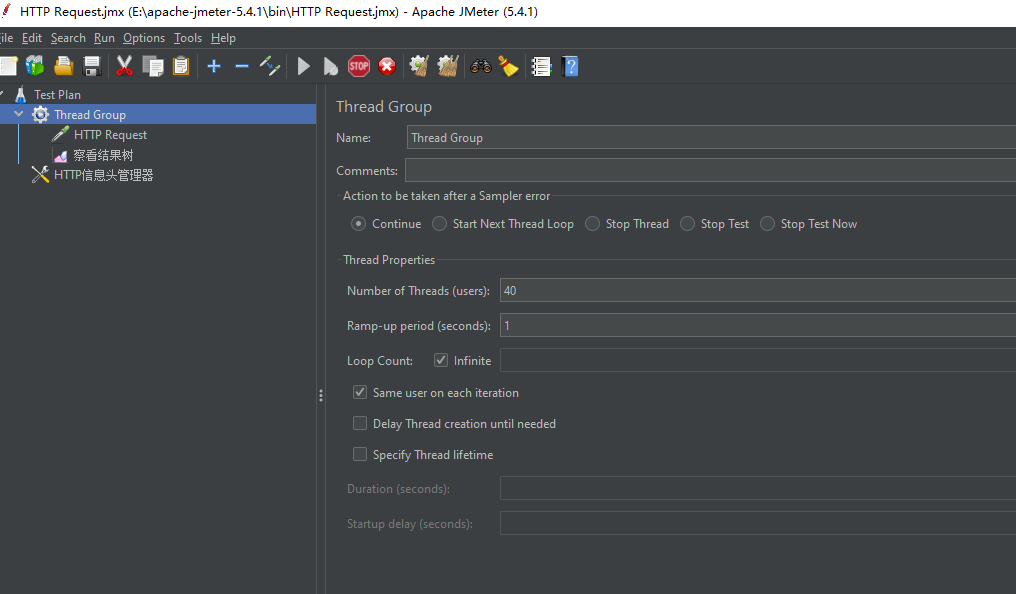

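Before the Chinese New Year the customer tested the JAVA version and ran into quite a few problems. They were rather at a loss: there was a memory leak, and under jmeter load testing the concurrency simply would not go up. After half a month of effort the problems were finally solved, and the JAVA version is now estimated to reach roughly 20,000 concurrent requests. The screenshots in this section show the customer's jmeter load-test configuration.

Solution

When the customer brought the problem to me, my first reaction was that the IO threads were being blocked, which narrowed the problem down to the netty handling. The server-side code is NettyServerMessageListener, and within it the channelRead method of ServerHandler handles the business logic. There I ran the processing asynchronously on a thread pool (a ThreadPoolExecutor; in the listing it is passed to ServerHandler as serverHandlerPool). Here is the NettyServerMessageListener code: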

    public class NettyServerMessageListener implements IMessageListener {

        private Thread thread;
        private static final Logger logger = LoggerFactory.getLogger(NettyServerMessageListener.class);
        private ChannelFuture channel;
        private final ITransportMessageDecoder transportMessageDecoder;
        private final ITransportMessageEncoder transportMessageEncoder;
        ReceivedDelegate Received = new ReceivedDelegate();

        @Inject
        public NettyServerMessageListener(ITransportMessageCodecFactory codecFactory) {
            this.transportMessageEncoder = codecFactory.GetEncoder();
            this.transportMessageDecoder = codecFactory.GetDecoder();
        }

        public void StartAsync(final String serverAddress) {
            thread = new Thread(new Runnable() {
                int parallel = Runtime.getRuntime().availableProcessors();
                // Separate pool that channelRead hands decoding and business logic to.
                final DefaultEventLoopGroup eventExecutors = new DefaultEventLoopGroup(parallel);
                ThreadFactory threadFactory = new DefaultThreadFactory("rpc-netty", true);

                public void run() {
                    String[] array = serverAddress.split(":");
                    logger.debug("Preparing to start the service host, listening on: " + array[0] + ":" + array[1] + ".");
                    EventLoopGroup bossGroup = new NioEventLoopGroup();
                    EventLoopGroup workerGroup = new NioEventLoopGroup(parallel, threadFactory);
                    ServerBootstrap bootstrap = new ServerBootstrap();
                    bootstrap.group(bossGroup, workerGroup).option(ChannelOption.SO_BACKLOG, 128)
                            .childOption(ChannelOption.SO_KEEPALIVE, true).childOption(ChannelOption.TCP_NODELAY, true).channel(NioServerSocketChannel.class)
                            .childHandler(new ChannelInitializer<NioSocketChannel>() {
                                @Override
                                protected void initChannel(NioSocketChannel socketChannel) throws Exception {
                                    socketChannel.pipeline()
                                            .addLast(new LengthFieldPrepender(4))
                                            .addLast(new LengthFieldBasedFrameDecoder(Integer.MAX_VALUE, 0, 4, 0, 4))
                                            .addLast(new ServerHandler(eventExecutors, new ReadAction<ChannelHandlerContext, TransportMessage>() {
                                                @Override
                                                public void run() {
                                                    IMessageSender sender = new NettyServerMessageSender(transportMessageEncoder, this.parameter);
                                                    onReceived(sender, this.parameter1);
                                                }
                                            }, transportMessageDecoder)
                                            );
                                }
                            })
                            .option(ChannelOption.SO_BACKLOG, 128)
                            .childOption(ChannelOption.ALLOCATOR, PooledByteBufAllocator.DEFAULT);
                    try {
                        String host = array[0];
                        int port = Integer.parseInt(array[1]);
                        channel = bootstrap.bind(host, port).sync();
                        logger.debug("Service host started successfully, listening on: " + serverAddress + ".");
                    } catch (Exception e) {
                        if (e instanceof InterruptedException) {
                            logger.info("Rpc server remoting server stop");
                        } else {
                            logger.error("Rpc server remoting server error", e);
                        }
                    }
                }
            });
            thread.start();
        }

        @Override
        public ReceivedDelegate getReceived() {
            return Received;
        }

        public void onReceived(IMessageSender sender, TransportMessage message) {
            if (Received == null)
                return;
            Received.notifyX(sender, message);
        }

        // Runnable that carries the two values set by the handler before run() is called.
        private class ReadAction<T, T1> implements Runnable {
            public T parameter;
            public T1 parameter1;

            public void setParameter(T tParameter, T1 tParameter1) {
                parameter = tParameter;
                parameter1 = tParameter1;
            }

            @Override
            public void run() {
            }
        }

        private class ServerHandler extends ChannelInboundHandlerAdapter {

            private final DefaultEventLoopGroup serverHandlerPool;
            private final ReadAction<ChannelHandlerContext, TransportMessage> serverRunnable;
            private final ITransportMessageDecoder transportMessageDecoder;

            public ServerHandler(final DefaultEventLoopGroup threadPoolExecutor, ReadAction<ChannelHandlerContext, TransportMessage> runnable,
                                 ITransportMessageDecoder transportMessageDecoder) {
                this.serverHandlerPool = threadPoolExecutor;
                this.serverRunnable = runnable;
                this.transportMessageDecoder = transportMessageDecoder;
            }

            @Override
            public void exceptionCaught(ChannelHandlerContext ctx, Throwable cause) {
                logger.warn("An error occurred while communicating with server: " + ctx.channel().remoteAddress() + ".");
                ctx.close();
            }

            @Override
            public void channelReadComplete(ChannelHandlerContext context) {
                context.flush();
            }

            @Override
            public void channelRead(ChannelHandlerContext channelHandlerContext, Object message) throws Exception {
                ByteBuf buffer = (ByteBuf) message;
                try {
                    // Copy the payload out so the ByteBuf can be released before the pool task runs.
                    byte[] data = new byte[buffer.readableBytes()];
                    buffer.readBytes(data);
                    // Hand decoding and business logic to the separate pool.
                    serverHandlerPool.execute(() -> {
                        TransportMessage transportMessage = null;
                        try {
                            transportMessage = transportMessageDecoder.Decode(data);
                        } catch (IOException e) {
                            e.printStackTrace();
                        }
                        serverRunnable.setParameter(channelHandlerContext, transportMessage);
                        serverRunnable.run();
                    });
                }
                finally {
                    ReferenceCountUtil.release(message);
                }
            }
        }
    }

The ThreadPoolExecutor code:

    public static ThreadPoolExecutor makeServerThreadPool(final String serviceName, int corePoolSize, int maxPoolSize) {
        ThreadPoolExecutor serverHandlerPool = new ThreadPoolExecutor(
                corePoolSize,
                maxPoolSize,
                60L,
                TimeUnit.SECONDS,
                new ArrayBlockingQueue<Runnable>(10000));
        /*
                new LinkedBlockingQueue<Runnable>(10000),
                r -> new Thread(r, "netty-rpc-" + serviceName + "-" + r.hashCode()),
                new ThreadPoolExecutor.AbortPolicy());*/
        return serverHandlerPool;
    }
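To make the behaviour of such a bounded pool concrete, here is a minimal JDK-only sketch. The class name `BoundedPoolDemo`, the method `countRejected`, and the tiny sizes are hypothetical and chosen only for illustration; they are not part of surging. It shows that once all threads are busy and the `ArrayBlockingQueue` is full, further submissions are rejected by the default `AbortPolicy`.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.RejectedExecutionException;
import java.util.concurrent.ThreadPoolExecutor;
import java.util.concurrent.TimeUnit;

public class BoundedPoolDemo {

    /** Submits `tasks` blocking jobs to a small bounded pool and returns how many were rejected. */
    public static int countRejected(int poolSize, int queueCapacity, int tasks) {
        ThreadPoolExecutor pool = new ThreadPoolExecutor(
                poolSize, poolSize, 60L, TimeUnit.SECONDS,
                new ArrayBlockingQueue<Runnable>(queueCapacity)); // default handler: AbortPolicy
        CountDownLatch release = new CountDownLatch(1);
        int rejected = 0;
        for (int i = 0; i < tasks; i++) {
            try {
                pool.execute(() -> {
                    try {
                        release.await(); // stands in for slow business logic
                    } catch (InterruptedException e) {
                        Thread.currentThread().interrupt();
                    }
                });
            } catch (RejectedExecutionException e) {
                rejected++; // threads busy and queue full
            }
        }
        release.countDown(); // let the accepted tasks finish
        pool.shutdown();
        try {
            pool.awaitTermination(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
        }
        return rejected;
    }

    public static void main(String[] args) {
        // 2 running + 3 queued are accepted, the remaining 5 are rejected.
        System.out.println("rejected = " + countRejected(2, 3, 10));
    }
}
```

With the real settings (a queue of 10000) the limit is simply reached later: under sustained load with slow handlers, the queue fills and callers start seeing `RejectedExecutionException`.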

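Later, after checking the official documentation, I found that a handler added with the following addLast call is invoked on the IO thread and therefore blocks it: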

    .addLast(new ServerHandler(eventExecutors, new ReadAction<ChannelHandlerContext, TransportMessage>() {
        @Override
        public void run() {
            IMessageSender sender = new NettyServerMessageSender(transportMessageEncoder, this.parameter);
            onReceived(sender, this.parameter1);
        }
    }, transportMessageDecoder)
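The difference between running business logic inline on the IO thread and handing it to a separate executor can be illustrated without Netty. The following is a simplified JDK-only sketch, not Netty's API: the class `IoThreadDemo`, the method `businessThread`, and the thread names `io-loop` and `biz-exec` are hypothetical. A single-threaded executor stands in for the channel's event loop, and the method reports which thread actually executed the "business logic".

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

public class IoThreadDemo {

    /** Returns the name of the thread that ran the business logic. */
    public static String businessThread(boolean offload) {
        // Stand-in for the channel's event loop (the IO thread).
        ExecutorService ioLoop = Executors.newSingleThreadExecutor(r -> new Thread(r, "io-loop"));
        // Stand-in for a separate business executor.
        ExecutorService bizPool = Executors.newSingleThreadExecutor(r -> new Thread(r, "biz-exec"));
        CompletableFuture<String> ranOn = new CompletableFuture<>();
        Runnable businessLogic = () -> ranOn.complete(Thread.currentThread().getName());

        // The "channelRead" callback itself always starts on the IO thread.
        ioLoop.execute(() -> {
            if (offload) {
                bizPool.execute(businessLogic); // IO thread returns immediately
            } else {
                businessLogic.run();            // IO thread is occupied until this returns
            }
        });

        try {
            return ranOn.get(5, TimeUnit.SECONDS);
        } catch (Exception e) {
            throw new IllegalStateException(e);
        } finally {
            ioLoop.shutdown();
            bizPool.shutdown();
        }
    }

    public static void main(String[] args) {
        System.out.println("inline:    " + businessThread(false));
        System.out.println("offloaded: " + businessThread(true));
    }
}
```

In the inline case any slow call in the business logic delays every other read and write served by that IO thread, which is consistent with the timeouts seen under load.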

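I then used an EventExecutorGroup to separate the IO threads from the business threads, moving the time-consuming business processing onto the EventExecutorGroup. First, the EventExecutorGroup code is as follows: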

    public static final EventExecutorGroup execThreadPool = new DefaultEventExecutorGroup(Runtime.getRuntime().availableProcessors() * 2,
            (ThreadFactory) r -> {
                Thread thread = new Thread(r);
                thread.setName("custom-tcp-exec-" + r.hashCode());
                return thread;
            },
            100000,
            RejectedExecutionHandlers.reject()
    );
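One property worth keeping in mind: as far as I understand Netty's default behaviour, a handler registered with an EventExecutorGroup is pinned to a single executor of that group for the lifetime of the channel, so events of one channel are still processed in order while different channels can run in parallel. The sketch below imitates that idea with plain JDK executors. It is a simplification, not Netty code: `PinnedExecutorGroupDemo` and its methods are hypothetical names, and choosing the executor by `channelId` modulo the group size is an assumption made for the example (Netty picks the executor itself).

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.TimeUnit;

public class PinnedExecutorGroupDemo {

    private final ExecutorService[] executors;

    public PinnedExecutorGroupDemo(int size) {
        executors = new ExecutorService[size];
        for (int i = 0; i < size; i++) {
            executors[i] = Executors.newSingleThreadExecutor();
        }
    }

    /** Every event of one channel goes to the same single-threaded executor. */
    public void fire(int channelId, Runnable event) {
        executors[Math.floorMod(channelId, executors.length)].execute(event);
    }

    /** Stops accepting events and waits for the queued ones to finish. */
    public void shutdownAndWait() {
        for (ExecutorService e : executors) {
            e.shutdown();
        }
        try {
            for (ExecutorService e : executors) {
                e.awaitTermination(5, TimeUnit.SECONDS);
            }
        } catch (InterruptedException ex) {
            Thread.currentThread().interrupt();
        }
    }

    /** Fires `count` numbered events on one channel and returns the order in which they ran. */
    public static List<Integer> processedOrder(int groupSize, int channelId, int count) {
        PinnedExecutorGroupDemo group = new PinnedExecutorGroupDemo(groupSize);
        List<Integer> seen = Collections.synchronizedList(new ArrayList<>());
        for (int i = 0; i < count; i++) {
            final int seq = i;
            group.fire(channelId, () -> seen.add(seq));
        }
        group.shutdownAndWait();
        return new ArrayList<>(seen);
    }

    public static void main(String[] args) {
        System.out.println(processedOrder(4, 7, 5)); // prints [0, 1, 2, 3, 4]
    }
}
```

Because each executor is single-threaded and its queue is FIFO, the events of one channel never overtake each other, which is why per-channel handler state does not need extra locking in this model.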

The ServerHandler passed to addLast is now registered together with the EventExecutorGroup. The latest NettyServerMessageListener code is as follows:

    public class NettyServerMessageListener implements IMessageListener {

        private Thread thread;
        private static final Logger logger = LoggerFactory.getLogger(NettyServerMessageListener.class);
        private ChannelFuture channel;
        private final ITransportMessageDecoder transportMessageDecoder;
        private final ITransportMessageEncoder transportMessageEncoder;
        ReceivedDelegate Received = new ReceivedDelegate();

        @Inject
        public NettyServerMessageListener(ITransportMessageCodecFactory codecFactory) {
            this.transportMessageEncoder = codecFactory.GetEncoder();
            this.transportMessageDecoder = codecFactory.GetDecoder();
        }

        public void StartAsync(final String serverAddress) {
            thread = new Thread(new Runnable() {
                public void run() {
                    String[] array = serverAddress.split(":");
                    logger.debug("Preparing to start the service host, listening on: " + array[0] + ":" + array[1] + ".");
                    EventLoopGroup bossGroup = new NioEventLoopGroup(1);
                    EventLoopGroup workerGroup = new NioEventLoopGroup();
                    ServerBootstrap bootstrap = new ServerBootstrap();
                    bootstrap.group(bossGroup, workerGroup).channel(NioServerSocketChannel.class)
                            .childHandler(new ChannelInitializer<NioSocketChannel>() {
                                @Override
                                protected void initChannel(NioSocketChannel socketChannel) throws Exception {
                                    socketChannel.pipeline()
                                            .addLast(new LengthFieldPrepender(4))
                                            .addLast(new LengthFieldBasedFrameDecoder(Integer.MAX_VALUE, 0, 4, 0, 4))
                                            // Run ServerHandler on the business executor group instead of the IO event loop.
                                            .addLast(ThreadPoolUtil.execThreadPool, "handler", new ServerHandler(new ReadAction<ChannelHandlerContext, TransportMessage>() {
                                                @Override
                                                public void run() {
                                                    IMessageSender sender = new NettyServerMessageSender(transportMessageEncoder, this.parameter);
                                                    onReceived(sender, this.parameter1);
                                                }
                                            }, transportMessageDecoder)
                                            );
                                }
                            })
                            .option(ChannelOption.SO_BACKLOG, 128)
                            .childOption(ChannelOption.ALLOCATOR, PooledByteBufAllocator.DEFAULT);
                    try {
                        String host = array[0];
                        int port = Integer.parseInt(array[1]);
                        channel = bootstrap.bind(host, port).sync();
                        logger.debug("Service host started successfully, listening on: " + serverAddress + ".");
                    } catch (Exception e) {
                        if (e instanceof InterruptedException) {
                            logger.info("Rpc server remoting server stop");
                        } else {
                            logger.error("Rpc server remoting server error", e);
                        }
                    }
                }
            });
            thread.start();
        }

        @Override
        public ReceivedDelegate getReceived() {
            return Received;
        }

        public void onReceived(IMessageSender sender, TransportMessage message) {
            if (Received == null)
                return;
            Received.notifyX(sender, message);
        }

        // Runnable that carries the two values set by the handler before run() is called.
        private class ReadAction<T, T1> implements Runnable {
            public T parameter;
            public T1 parameter1;

            public void setParameter(T tParameter, T1 tParameter1) {
                parameter = tParameter;
                parameter1 = tParameter1;
            }

            @Override
            public void run() {
            }
        }

        private class ServerHandler extends ChannelInboundHandlerAdapter {

            private final ReadAction<ChannelHandlerContext, TransportMessage> serverRunnable;
            private final ITransportMessageDecoder transportMessageDecoder;

            public ServerHandler(ReadAction<ChannelHandlerContext, TransportMessage> runnable,
                                 ITransportMessageDecoder transportMessageDecoder) {
                this.serverRunnable = runnable;
                this.transportMessageDecoder = transportMessageDecoder;
            }

            @Override
            public void exceptionCaught(ChannelHandlerContext ctx, Throwable cause) {
                logger.warn("An error occurred while communicating with server: " + ctx.channel().remoteAddress() + ".");
                ctx.close();
            }

            @Override
            public void channelReadComplete(ChannelHandlerContext context) {
                context.flush();
            }

            @Override
            public void channelRead(ChannelHandlerContext channelHandlerContext, Object message) throws Exception {
                ByteBuf buffer = (ByteBuf) message;
                try {
                    byte[] data = new byte[buffer.readableBytes()];
                    buffer.readBytes(data);
                    // This method now runs on an execThreadPool thread, so decoding
                    // and the business logic are executed inline.
                    TransportMessage transportMessage = transportMessageDecoder.Decode(data);
                    serverRunnable.setParameter(channelHandlerContext, transportMessage);
                    serverRunnable.run();
                }
                finally {
                    ReferenceCountUtil.release(message);
                }
            }
        }
    }

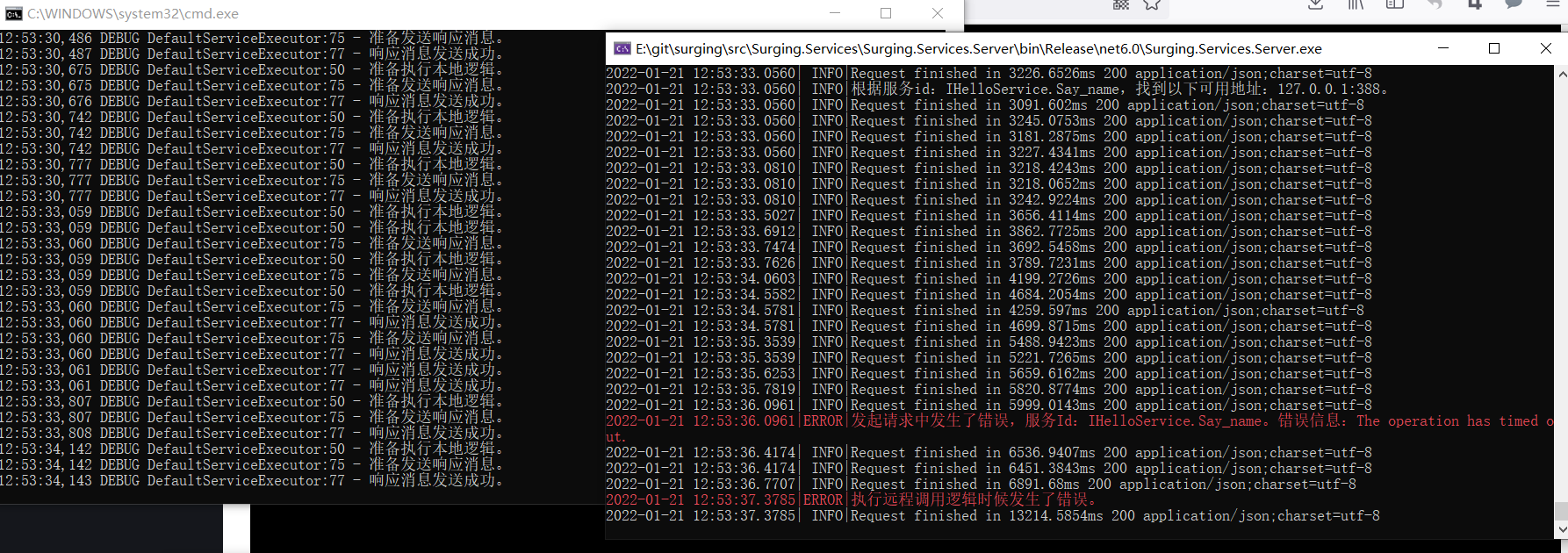

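With the changes above, jmeter load tests no longer produce timeouts. Even the full chain of stage gateway -> .NET microservice -> JAVA microservice shows no timeouts, and raising the jmeter user threads to 2000 caused no problems either.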

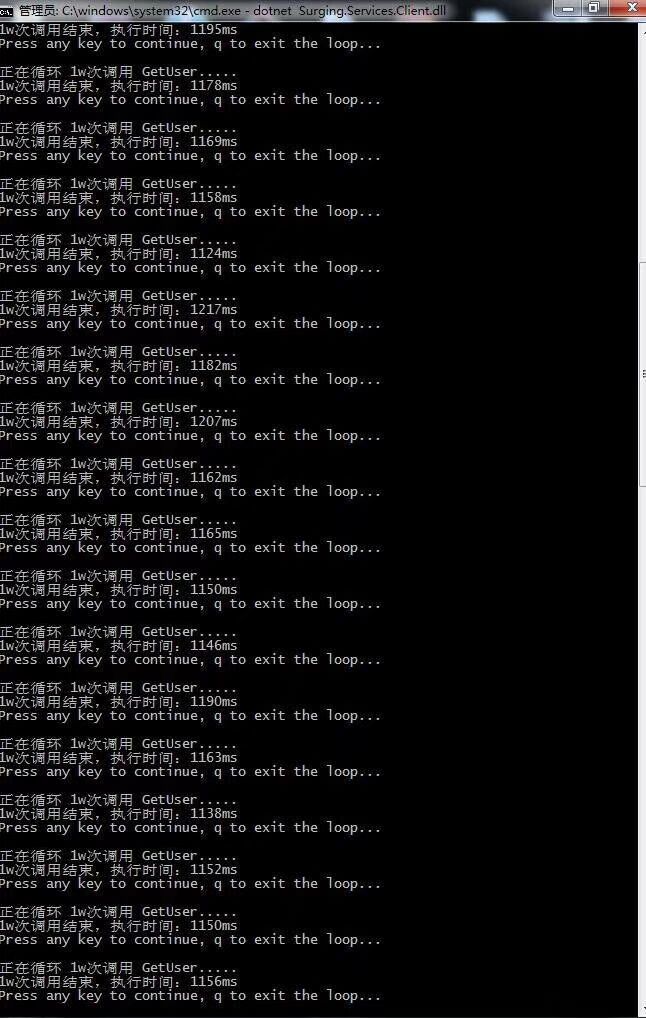

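Following the same approach, I also modified the .NET community edition of surging and pushed the changes to github. First I removed the Task.Run from serverMessageListener.Received in ServiceHost and from ChannelRead in ServerHandler, then registered the ServerHandler passed to addLast with an EventExecutorGroup. After these changes, load testing showed that it can support 200,000+, and no memory leak was found. Running the client 10,000 times, server CPU stays at around 6% and the response time is about 1.1 seconds. Multiple surging clients can be started for load testing: CPU usage adds up, while response time is unaffected. The accompanying screenshot shows a load test of 10,000 calls.

Summary

After five years of development, surging has grown from its original netty-based RPC into a heterogeneous microservice engine that supports multiple protocols and multiple languages. That has meant not only technical improvement but also gains in reputation and income. With persistence, results eventually come. I will keep updating it and contribute what I can to enterprise and community users, helping enterprises better master microservice solutions to meet the industry's varied business needs.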
