Getting Started with PyTorch: Siamese Networks

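This is my first hands-on experience with PyTorch. The post is based on: github and the face similarity comparison tutorial on PyTorch 中文网 (PyTorch Chinese site).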

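The main goal is to get familiar with the overall PyTorch workflow, and I rewrote the data-loading part. Instead of the original author's ImageFolder approach (ImageFolder(root, transform=None, target_transform=None, loader=default_loader)), I use a more flexible approach built on Dataset and DataLoader, which adapts better to preprocessing and loading different kinds of data.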

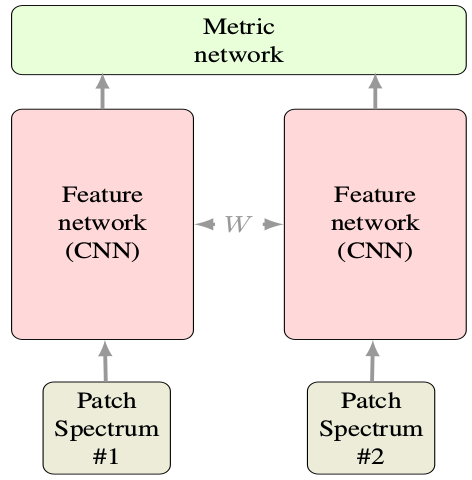

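A Siamese network needs little introduction: it is simply two CNNs that share their parameters. Each input is a pair of images plus one label, three values in total. The label is 0 or 1 (the positive and negative samples): 0 means the two images match (the same person) and 1 means they do not match (different people). The figure above shows the basic Siamese structure. It was taken from another paper, where the two inputs are two spectral bands; just think of them as two images.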
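To make "shared parameters" concrete, here is a framework-free toy sketch (plain Python, not PyTorch and not the network used later). A single hypothetical linear `Encoder` object embeds both inputs, so the two "towers" are literally the same function with the same weights; the pair label follows this post's convention of 0 for a match and 1 for a non-match. The weights and inputs are made-up numbers.

```python
import math
from typing import List, Sequence, Tuple


class Encoder:
    """Toy linear encoder: one weight matrix shared by both towers (hypothetical weights)."""

    def __init__(self, weights: List[List[float]]):
        self.weights = weights  # shape: (embedding_dim, input_dim)

    def __call__(self, x: Sequence[float]) -> List[float]:
        # Matrix-vector product: each output unit is a dot product with one weight row.
        return [sum(w * xi for w, xi in zip(row, x)) for row in self.weights]


def siamese_forward(encoder: Encoder, x1: Sequence[float],
                    x2: Sequence[float]) -> Tuple[List[float], List[float]]:
    # Both inputs go through the *same* encoder object, i.e. shared parameters.
    return encoder(x1), encoder(x2)


def pair_label(class_a: int, class_b: int) -> int:
    # Convention used in this post: 0 = match (same person), 1 = no-match.
    return int(class_a != class_b)


encoder = Encoder([[1.0, 0.0, -1.0], [0.5, 0.5, 0.5]])
e1, e2 = siamese_forward(encoder, [1.0, 2.0, 3.0], [1.0, 2.0, 3.0])
print(e1 == e2, math.dist(e1, e2), pair_label(3, 3), pair_label(3, 7))  # True 0.0 0 1
```

Because there is only one set of weights, identical inputs always land on identical embeddings (distance 0), which is the property the contrastive loss later relies on.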

1. Data preparation

The data is the AT&T face dataset: 40 people with 10 face images each. Download: AT&T

After unzipping, there are 40 folders, each containing 10 .pgm images. Here we generate a train.txt file listing the image paths for later use:

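    def convert(train=True):
        # Write one "<image path> <label>" line per image into Config.txt_root
        if train:
            data_path = os.path.join(Config.root, 'train')  # dataset root comes from Config
            if not os.path.exists(data_path):
                os.makedirs(data_path)
            with open(Config.txt_root, 'w') as f:
                for i in range(40):          # 40 people: folders s1 .. s40
                    for j in range(10):      # 10 images each: 1.pgm .. 10.pgm
                        img_path = os.path.join(data_path, 's' + str(i + 1), str(j + 1) + '.pgm')
                        f.write(img_path + ' ' + str(i) + '\n')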

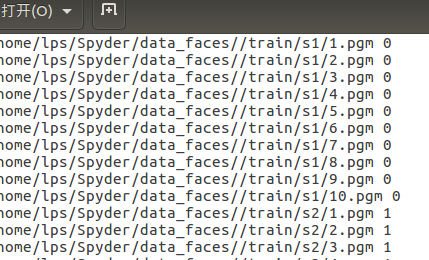

Result: each line starts with the full path of an image, followed by its class label (0 to 39). The train folder contains 40 subfolders, s1 to s40.
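Since every line of train.txt has the form `<path> <label>`, it can be read back and grouped by class. A minimal sketch follows; the function name `load_index` is hypothetical and not part of the original code, and the example paths are placeholders. A per-class grouping like this is handy for building matching and non-matching pairs.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple


def load_index(lines: Iterable[str]) -> Tuple[List[Tuple[str, int]], Dict[int, List[str]]]:
    """Parse '<path> <label>' lines into a flat list and a label -> paths mapping."""
    samples: List[Tuple[str, int]] = []
    by_class: Dict[int, List[str]] = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line:  # skip blank lines, e.g. a trailing newline at the end of the file
            continue
        # rsplit keeps a path containing spaces intact; the label is the last field
        path, label = line.rsplit(" ", 1)
        samples.append((path, int(label)))
        by_class[int(label)].append(path)
    return samples, dict(by_class)


# Usage with an in-memory stand-in for train.txt (placeholder paths)
example = [
    "/data/train/s1/1.pgm 0\n",
    "/data/train/s1/2.pgm 0\n",
    "/data/train/s2/1.pgm 1\n",
]
samples, by_class = load_index(example)
print(len(samples), sorted(by_class), by_class[0])
```

With the real file you would pass the open file object instead, e.g. `with open(Config.txt_root) as f: samples, by_class = load_index(f)`.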

2. Building a custom dataset

This step subclasses Dataset and overrides the `__getitem__` and `__len__` methods:

    class MyDataset(Dataset):  # subclass Dataset to customize data loading
        def __init__(self, txt, transform=None, target_transform=None, should_invert=False):
            self.transform = transform
            self.target_transform = target_transform
            self.should_invert = should_invert
            self.txt = txt  # the train.txt generated earlier
            with open(self.txt, 'r') as fh:  # count the lines once instead of on every access
                self.num = len(fh.readlines())

        def __getitem__(self, index):
            # Pick a random face (index is ignored: every call samples a fresh pair)
            line = linecache.getline(self.txt, random.randint(1, self.num)).strip()
            img0_list = line.split()
            # Random 0 or 1: whether to pick the same person, so that matching and
            # non-matching pairs stay roughly balanced (similar numbers of positives and negatives)
            should_get_same_class = random.randint(0, 1)
            if should_get_same_class:
                # Keep drawing until we hit an image of the same person: a matching pair
                while True:
                    img1_list = linecache.getline(self.txt, random.randint(1, self.num)).strip().split()
                    if img0_list[1] == img1_list[1]:
                        break
            else:
                # Otherwise take any face as a non-matching pair; it can still be
                # the same person, just with low probability
                img1_list = linecache.getline(self.txt, random.randint(1, self.num)).strip().split()

            # Each *_list has two entries: [0] is the image path, [1] is the label
            img0 = Image.open(img0_list[0])
            img1 = Image.open(img1_list[0])
            img0 = img0.convert("L")  # convert to grayscale
            img1 = img1.convert("L")

            if self.should_invert:  # invert pixel values: 0 becomes 255, 255 becomes 0
                img0 = PIL.ImageOps.invert(img0)
                img1 = PIL.ImageOps.invert(img1)

            if self.transform is not None:  # transform pipeline, configurable at construction time
                img0 = self.transform(img0)
                img1 = self.transform(img1)

            # Always return data + label: the image pair plus a float tensor label
            # (built with numpy, then converted): 1.0 = different people, 0.0 = same person
            label = torch.from_numpy(np.array([int(img1_list[1] != img0_list[1])], dtype=np.float32))
            return img0, img1, label

        def __len__(self):  # total number of samples (lines in train.txt)
            return self.num
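The `__getitem__` above ignores `index` and finds a same-person partner by rejection sampling, and its "different person" branch can occasionally return the same person. One possible alternative, sketched in plain Python under the assumption that the `(path, label)` list fits in memory, draws the partner directly from a per-class index. `build_class_index` and `sample_pair` are hypothetical helpers; in a dataset class you would call `sample_pair` from `__getitem__` and then open and transform the two paths exactly as `MyDataset` does.

```python
import random
from collections import defaultdict
from typing import Dict, List, Tuple


def build_class_index(samples: List[Tuple[str, int]]) -> Dict[int, List[int]]:
    """Map each label to the positions of its samples in `samples`."""
    index: Dict[int, List[int]] = defaultdict(list)
    for pos, (_, label) in enumerate(samples):
        index[label].append(pos)
    return dict(index)


def sample_pair(samples: List[Tuple[str, int]], class_index: Dict[int, List[int]],
                i: int, rng: random.Random) -> Tuple[str, str, float]:
    """Return (path0, path1, label): label 0.0 = same person, 1.0 = different people."""
    path0, label0 = samples[i]
    if rng.random() < 0.5:
        # Positive pair: draw straight from the same class (this may pick sample i
        # itself, just as the original rejection loop can).
        j = rng.choice(class_index[label0])
    else:
        # Negative pair: choose another class first, so it is never accidentally positive.
        other = rng.choice([c for c in class_index if c != label0])
        j = rng.choice(class_index[other])
    path1, label1 = samples[j]
    return path0, path1, float(label0 != label1)


# Usage on a toy index (placeholder paths: class 0 = "a*", class 1 = "b*")
samples = [("a1", 0), ("a2", 0), ("b1", 1), ("b2", 1)]
class_index = build_class_index(samples)
rng = random.Random(0)
pairs = [sample_pair(samples, class_index, k % len(samples), rng) for k in range(100)]
```

The design choice is that both branches finish in a single draw, so sampling time no longer depends on how many images each person has; the negative branch needs at least two classes.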

3. Building the two-tower CNN

    class SiameseNetwork(nn.Module):
        def __init__(self):
            super(SiameseNetwork, self).__init__()
            self.cnn1 = nn.Sequential(
                nn.ReflectionPad2d(1),
                nn.Conv2d(1, 4, kernel_size=3),
                nn.ReLU(inplace=True),
                nn.BatchNorm2d(4),
                nn.Dropout2d(p=.2),

                nn.ReflectionPad2d(1),
                nn.Conv2d(4, 8, kernel_size=3),
                nn.ReLU(inplace=True),
                nn.BatchNorm2d(8),
                nn.Dropout2d(p=.2),

                nn.ReflectionPad2d(1),
                nn.Conv2d(8, 8, kernel_size=3),
                nn.ReLU(inplace=True),
                nn.BatchNorm2d(8),
                nn.Dropout2d(p=.2),
            )

            self.fc1 = nn.Sequential(
                nn.Linear(8*100*100, 500),
                nn.ReLU(inplace=True),

                nn.Linear(500, 500),
                nn.ReLU(inplace=True),

                nn.Linear(500, 5)
            )

        def forward_once(self, x):
            output = self.cnn1(x)
            output = output.view(output.size()[0], -1)
            output = self.fc1(output)
            return output

        def forward(self, input1, input2):
            output1 = self.forward_once(input1)
            output2 = self.forward_once(input2)
            return output1, output2

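Quite simple. Note that the forward pass takes both images at once, and each one goes through the same `forward_once`, i.e. the same weights.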
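Why does the first linear layer take `8*100*100` inputs? With stride 1, each `ReflectionPad2d(1)` + `Conv2d(kernel_size=3)` block keeps the spatial size, because out = (in + 2·pad − kernel) / stride + 1 = (100 + 2 − 3) / 1 + 1 = 100, and ReLU, BatchNorm2d and Dropout2d do not change the shape. A small sketch of that arithmetic (the helpers `conv_out` and `flattened_features` are hypothetical):

```python
def conv_out(size: int, kernel: int, stride: int = 1, padding: int = 0) -> int:
    """Output length of a convolution along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def flattened_features(height: int, width: int, channels=(4, 8, 8)) -> int:
    """Size of the flattened cnn1 output for an input of height x width."""
    h, w = height, width
    for _ in channels:
        # ReflectionPad2d(1) followed by Conv2d(kernel_size=3): padding 1, kernel 3
        h = conv_out(h, kernel=3, padding=1)
        w = conv_out(w, kernel=3, padding=1)
    return channels[-1] * h * w


print(flattened_features(100, 100))  # 80000, i.e. 8*100*100
print(flattened_features(112, 92))   # 82432, for unresized 92x112 AT&T images
```

The second call shows why the `Resize((100, 100))` transform used in training matters: without it, the native 92×112 AT&T images would need `nn.Linear(8*112*92, 500)` instead.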

4. Custom contrastive loss function

    # Custom Contrastive Loss
    class ContrastiveLoss(torch.nn.Module):
        """
        Contrastive loss function.
        Based on: http://yann.lecun.com/exdb/publis/pdf/hadsell-chopra-lecun-06.pdf
        """

        def __init__(self, margin=2.0):
            super(ContrastiveLoss, self).__init__()
            self.margin = margin

        def forward(self, output1, output2, label):
            # keepdim=True gives shape (N, 1), the same as label; with the default
            # shape (N,), (N,) * (N, 1) would broadcast into an (N, N) matrix
            euclidean_distance = F.pairwise_distance(output1, output2, keepdim=True)
            # clamp(min=0.0) implements max(0, margin - distance)
            loss_contrastive = torch.mean((1 - label) * torch.pow(euclidean_distance, 2) +
                                          label * torch.pow(torch.clamp(self.margin - euclidean_distance, min=0.0), 2))
            return loss_contrastive

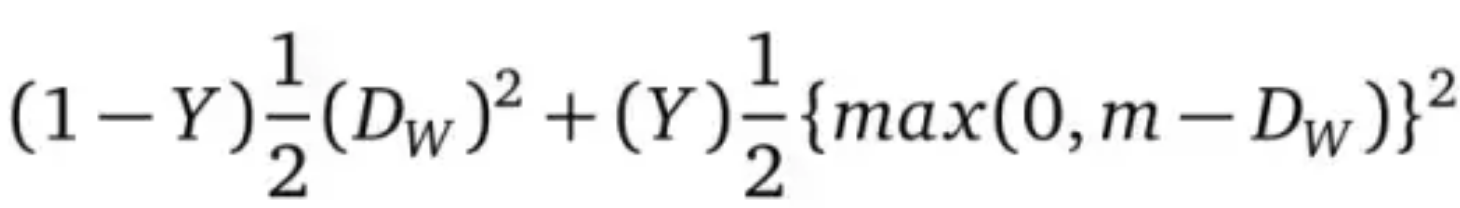

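The loss above is hand-written; the formula comes from LeCun's paper (Hadsell, Chopra & LeCun, 2006):

$$L(W, Y, X_1, X_2) = (1 - Y)\,\frac{1}{2}\,D_W^2 + Y\,\frac{1}{2}\,\{\max(0,\; m - D_W)\}^2$$

$$D_W = \lVert G_W(X_1) - G_W(X_2) \rVert_2$$

Here m is the margin (tolerance), D_W is the Euclidean distance between the embeddings G_W(X_1) and G_W(X_2) of the two images, and Y = 0 for a matching pair and Y = 1 for a non-matching pair. The code drops the constant factor ½ and averages the per-pair loss over the batch.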
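A worked example of the formula in plain Python, using the same convention as `ContrastiveLoss` (Y = 0 match, Y = 1 non-match, margin m = 2.0, no ½ factor, mean over the batch). The distances are made-up numbers for illustration only.

```python
from typing import Sequence


def contrastive_loss(distances: Sequence[float], labels: Sequence[int], margin: float = 2.0) -> float:
    """Batch mean of (1 - Y) * D^2 + Y * max(0, m - D)^2."""
    assert len(distances) == len(labels), "one label per pair"
    total = 0.0
    for d, y in zip(distances, labels):
        similar_term = (1 - y) * d ** 2                  # pulls matching pairs together
        dissimilar_term = y * max(0.0, margin - d) ** 2  # pushes non-matches out to the margin
        total += similar_term + dissimilar_term
    return total / len(distances)


# match at 0.5 -> 0.25; non-match at 0.5 -> (2 - 0.5)^2 = 2.25; non-match at 3.0 -> 0 (beyond margin)
print(contrastive_loss([0.5, 0.5, 3.0], [0, 1, 1]))  # 0.8333333333333334
```

The terms are combined pair by pair, so in a tensor version the distance and the label must have the same shape; with a label of shape (N, 1) and a distance of shape (N,), broadcasting would silently build an (N, N) matrix. Passing `keepdim=True` to `F.pairwise_distance` (or flattening the label) avoids that.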

5. Training

    train_data = MyDataset(txt=Config.txt_root,
                           transform=transforms.Compose([transforms.Resize((100, 100)),  # resize to 100x100
                                                         transforms.ToTensor()]),
                           should_invert=False)
    train_dataloader = DataLoader(dataset=train_data, shuffle=True, num_workers=2,
                                  batch_size=Config.train_batch_size)

    net = SiameseNetwork().cuda()  # GPU acceleration
    criterion = ContrastiveLoss()
    optimizer = optim.Adam(net.parameters(), lr=0.0005)

    counter = []
    loss_history = []
    iteration_number = 0

    for epoch in range(0, Config.train_number_epochs):
        for i, data in enumerate(train_dataloader, 0):
            img0, img1, label = data
            # Plain tensors can be moved to the GPU directly (Variable is no longer needed)
            img0, img1, label = img0.cuda(), img1.cuda(), label.cuda()
            output1, output2 = net(img0, img1)
            optimizer.zero_grad()
            loss_contrastive = criterion(output1, output2, label)
            loss_contrastive.backward()
            optimizer.step()
            if i % 10 == 0:
                # .item() extracts the Python number from the 0-dim loss tensor
                # (indexing it with .data[0] fails in recent PyTorch versions)
                print("Epoch:{}, Current loss {}\n".format(epoch, loss_contrastive.item()))
                iteration_number += 10
                counter.append(iteration_number)
                loss_history.append(loss_contrastive.item())

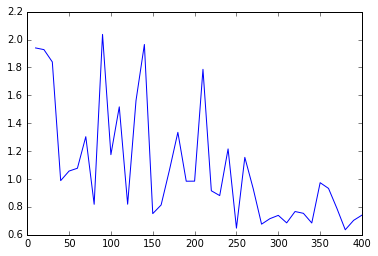

    show_plot(counter, loss_history)  # plot the loss curve

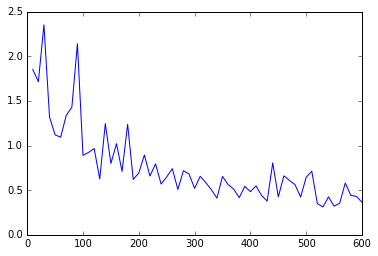

Loss curves:

batch_size=32, epochs=20, lr=0.001 and batch_size=32, epochs=30, lr=0.0005
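After training, a face-similarity network like this is typically used for verification: embed two faces and call them the same person when their distance is below a threshold. The sketch below works on plain lists; in PyTorch the embeddings could come from `net(img0, img1)` with the model in `net.eval()` mode, converted with `.tolist()`. The default threshold of 1.0 and the helper names `is_same_person` and `best_threshold` are hypothetical; a real threshold would have to be tuned on held-out labelled pairs, as `best_threshold` does on toy numbers.

```python
import math
from typing import Sequence


def is_same_person(emb1: Sequence[float], emb2: Sequence[float], threshold: float = 1.0) -> bool:
    """Match if the Euclidean distance between embeddings is below a (hypothetical) threshold."""
    return math.dist(emb1, emb2) < threshold


def best_threshold(distances: Sequence[float], labels: Sequence[int],
                   candidates: Sequence[float]) -> float:
    """Pick the candidate threshold with the highest accuracy on labelled pairs (0 = match)."""
    def accuracy(t: float) -> float:
        # A pair is predicted "match" when d < t; it is correct if that agrees with label == 0
        return sum((d < t) == (y == 0) for d, y in zip(distances, labels)) / len(labels)
    return max(candidates, key=accuracy)


# Usage with made-up validation distances (two matches, two non-matches)
t = best_threshold([0.3, 0.8, 1.6, 2.4], [0, 0, 1, 1], [0.5, 1.0, 2.0])
print(t, is_same_person([0.0, 0.0], [0.3, 0.4], t), is_same_person([0.0, 0.0], [3.0, 4.0], t))
```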

Full code:

    #!/usr/bin/env python3
    # -*- coding: utf-8 -*-
    """
    Created on Wed Jan 24 10:00:24 2018
    Paper: Siamese Neural Networks for One-shot Image Recognition
    links: https://www.cnblogs.com/denny402/p/7520063.html
    """
    import os
    import random
    import linecache

    import numpy as np
    import matplotlib.pyplot as plt
    import PIL.ImageOps
    from PIL import Image

    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    import torch.optim as optim
    import torchvision
    from torch.utils.data import Dataset, DataLoader
    from torchvision import transforms


    class Config():
        root = '/home/lps/Spyder/data_faces/'
        txt_root = '/home/lps/Spyder/data_faces/train.txt'
        train_batch_size = 32
        train_number_epochs = 30


    # Helper functions
    def imshow(img, text=None, should_save=False):
        npimg = img.numpy()
        plt.axis("off")
        if text:
            plt.text(75, 8, text, style='italic', fontweight='bold',
                     bbox={'facecolor': 'white', 'alpha': 0.8, 'pad': 10})
        plt.imshow(np.transpose(npimg, (1, 2, 0)))
        plt.show()


    def show_plot(iteration, loss):
        plt.plot(iteration, loss)
        plt.show()


    def convert(train=True):
        if train:
            data_path = os.path.join(Config.root, 'train')
            if not os.path.exists(data_path):
                os.makedirs(data_path)
            with open(Config.txt_root, 'w') as f:
                for i in range(40):
                    for j in range(10):
                        img_path = os.path.join(data_path, 's' + str(i + 1), str(j + 1) + '.pgm')
                        f.write(img_path + ' ' + str(i) + '\n')

    # convert(True)  # ready the dataset; ImageFolder is not used, unlike the original author


    class MyDataset(Dataset):
        def __init__(self, txt, transform=None, target_transform=None, should_invert=False):
            self.transform = transform
            self.target_transform = target_transform
            self.should_invert = should_invert
            self.txt = txt
            with open(self.txt, 'r') as fh:  # count the lines once
                self.num = len(fh.readlines())

        def __getitem__(self, index):
            line = linecache.getline(self.txt, random.randint(1, self.num)).strip()
            img0_list = line.split()
            should_get_same_class = random.randint(0, 1)
            if should_get_same_class:
                while True:
                    img1_list = linecache.getline(self.txt, random.randint(1, self.num)).strip().split()
                    if img0_list[1] == img1_list[1]:
                        break
            else:
                img1_list = linecache.getline(self.txt, random.randint(1, self.num)).strip().split()

            img0 = Image.open(img0_list[0]).convert("L")
            img1 = Image.open(img1_list[0]).convert("L")

            if self.should_invert:
                img0 = PIL.ImageOps.invert(img0)
                img1 = PIL.ImageOps.invert(img1)

            if self.transform is not None:
                img0 = self.transform(img0)
                img1 = self.transform(img1)

            label = torch.from_numpy(np.array([int(img1_list[1] != img0_list[1])], dtype=np.float32))
            return img0, img1, label

        def __len__(self):
            return self.num


    # Visualising some of the data
    """
    train_data = MyDataset(txt=Config.txt_root,
                           transform=transforms.Compose([transforms.Resize((100, 100)),
                                                         transforms.ToTensor()]),
                           should_invert=False)
    train_loader = DataLoader(dataset=train_data, batch_size=8, shuffle=True)
    it = iter(train_loader)
    example_batch = next(it)
    concatenated = torch.cat((example_batch[0], example_batch[1]), 0)
    imshow(torchvision.utils.make_grid(concatenated))
    print(example_batch[2].numpy())
    """


    # Neural Net Definition, Standard CNNs
    class SiameseNetwork(nn.Module):
        def __init__(self):
            super(SiameseNetwork, self).__init__()
            self.cnn1 = nn.Sequential(
                nn.ReflectionPad2d(1), nn.Conv2d(1, 4, kernel_size=3),
                nn.ReLU(inplace=True), nn.BatchNorm2d(4), nn.Dropout2d(p=.2),

                nn.ReflectionPad2d(1), nn.Conv2d(4, 8, kernel_size=3),
                nn.ReLU(inplace=True), nn.BatchNorm2d(8), nn.Dropout2d(p=.2),

                nn.ReflectionPad2d(1), nn.Conv2d(8, 8, kernel_size=3),
                nn.ReLU(inplace=True), nn.BatchNorm2d(8), nn.Dropout2d(p=.2),
            )
            self.fc1 = nn.Sequential(
                nn.Linear(8*100*100, 500), nn.ReLU(inplace=True),
                nn.Linear(500, 500), nn.ReLU(inplace=True),
                nn.Linear(500, 5)
            )

        def forward_once(self, x):
            output = self.cnn1(x)
            output = output.view(output.size()[0], -1)
            output = self.fc1(output)
            return output

        def forward(self, input1, input2):
            output1 = self.forward_once(input1)
            output2 = self.forward_once(input2)
            return output1, output2


    # Custom Contrastive Loss
    class ContrastiveLoss(torch.nn.Module):
        """
        Contrastive loss function.
        Based on: http://yann.lecun.com/exdb/publis/pdf/hadsell-chopra-lecun-06.pdf
        """

        def __init__(self, margin=2.0):
            super(ContrastiveLoss, self).__init__()
            self.margin = margin

        def forward(self, output1, output2, label):
            # keepdim=True keeps the distance at shape (N, 1), matching label
            euclidean_distance = F.pairwise_distance(output1, output2, keepdim=True)
            loss_contrastive = torch.mean((1 - label) * torch.pow(euclidean_distance, 2) +
                                          label * torch.pow(torch.clamp(self.margin - euclidean_distance, min=0.0), 2))
            return loss_contrastive


    # Training
    train_data = MyDataset(txt=Config.txt_root,
                           transform=transforms.Compose([transforms.Resize((100, 100)),
                                                         transforms.ToTensor()]),
                           should_invert=False)
    train_dataloader = DataLoader(dataset=train_data, shuffle=True, num_workers=2,
                                  batch_size=Config.train_batch_size)

    net = SiameseNetwork().cuda()
    criterion = ContrastiveLoss()
    optimizer = optim.Adam(net.parameters(), lr=0.0005)

    counter = []
    loss_history = []
    iteration_number = 0

    for epoch in range(0, Config.train_number_epochs):
        for i, data in enumerate(train_dataloader, 0):
            img0, img1, label = data
            img0, img1, label = img0.cuda(), img1.cuda(), label.cuda()
            output1, output2 = net(img0, img1)
            optimizer.zero_grad()
            loss_contrastive = criterion(output1, output2, label)
            loss_contrastive.backward()
            optimizer.step()
            if i % 10 == 0:
                print("Epoch:{}, Current loss {}\n".format(epoch, loss_contrastive.item()))
                iteration_number += 10
                counter.append(iteration_number)
                loss_history.append(loss_contrastive.item())

    show_plot(counter, loss_history)

Total codes

The original author's Jupyter notebook: Siamese Neural Networks for One-shot Image Recognition

More resources: Some important Pytorch tasks

Using a Siamese network for one-shot learning: https://sorenbouma.github.io/blog/oneshot/ (Chinese translation: "[Deep Neural Networks: One-shot Learning] Accurate few-shot classification with Siamese networks")

A PyTorch Implementation of "Siamese Neural Networks for One-shot Image Recognition"
