Recurrent Neural Network[Content]

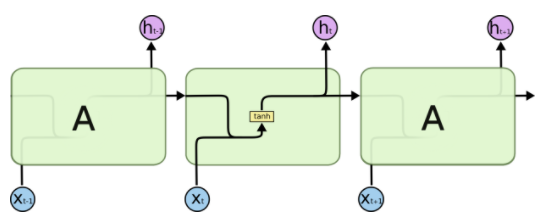

1. RNN

Figure 1.1 Structure of the standard RNN model
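As a rough illustration of the standard RNN named in the caption above, the following sketch implements the Elman-style recurrence h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h) in plain Python. The function names (`rnn_step`, `rnn_forward`) and the list-of-lists weight layout are assumptions made for this example; they do not come from the original post.

```python
import math

def rnn_step(x, h_prev, W_xh, W_hh, b_h):
    """One Elman-style step: h_t = tanh(W_xh x_t + W_hh h_{t-1} + b_h).

    W_xh has shape (hidden, input) and W_hh has shape (hidden, hidden),
    both stored as lists of rows (a layout assumed for this sketch).
    """
    h = []
    for i in range(len(b_h)):
        pre = b_h[i]
        pre += sum(W_xh[i][j] * x[j] for j in range(len(x)))
        pre += sum(W_hh[i][k] * h_prev[k] for k in range(len(h_prev)))
        h.append(math.tanh(pre))
    return h

def rnn_forward(xs, h0, W_xh, W_hh, b_h):
    """Run the recurrence over a sequence and return every hidden state."""
    h = h0
    states = []
    for x in xs:
        h = rnn_step(x, h, W_xh, W_hh, b_h)
        states.append(h)
    return states

# Usage: a 1-dimensional input sequence with a 1-dimensional hidden state.
states = rnn_forward([[0.0], [1.0]], [0.0], [[1.0]], [[0.0]], [0.0])
```

The same weights are reused at every time step, which is what distinguishes the recurrence from a stack of independent feed-forward layers.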

2. BiRNN

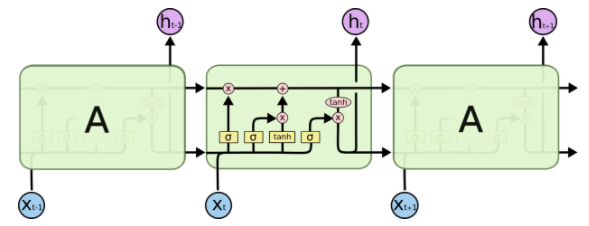

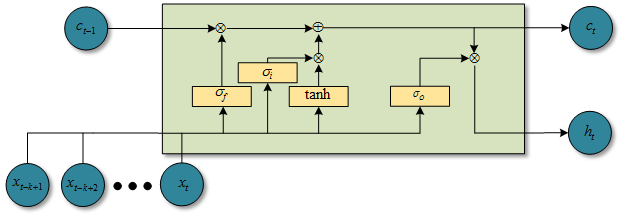

3. LSTM

Figure 3.1 Structure of the LSTM model

4. Clockwork RNN

5. Depth Gated RNN

6. Grid LSTM

7. DRAW

8. RLVM

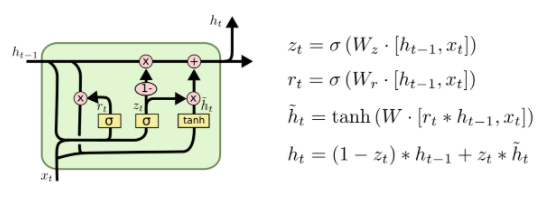

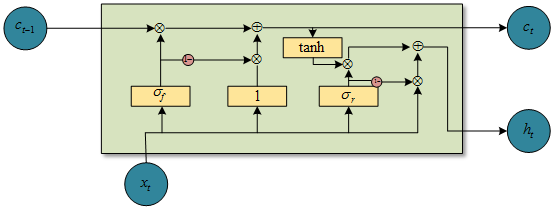

9. GRU

Figure 9.1 Structure of the GRU model

10. NTM

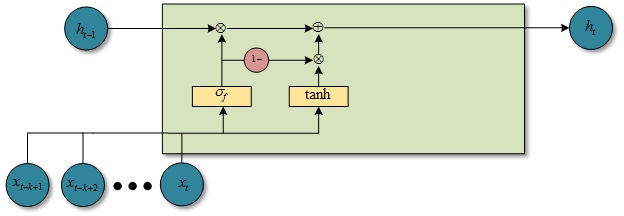

11. QRNN

Figure 11.1 QRNN structure with f-pooling

Figure 11.2 QRNN structure with fo-pooling

Figure 11.3 QRNN structure with ifo-pooling
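The three pooling variants named in the captions above differ only in which gates take part in the element-wise recurrence. The sketch below follows the pooling equations of the QRNN paper (Bradbury et al., 2016). It assumes the candidate vectors `z` and the gate vectors `f`, `i`, `o` have already been produced by the convolutional layer, so that part is not shown; the function names are made up for this example.

```python
def f_pooling(z, f, h0):
    """f-pooling: h_t = f_t * h_{t-1} + (1 - f_t) * z_t (element-wise)."""
    h, out = h0, []
    for z_t, f_t in zip(z, f):
        h = [ft * hp + (1.0 - ft) * zt for ft, hp, zt in zip(f_t, h, z_t)]
        out.append(h)
    return out

def fo_pooling(z, f, o, c0):
    """fo-pooling: c_t = f_t * c_{t-1} + (1 - f_t) * z_t; h_t = o_t * c_t."""
    c, out = c0, []
    for z_t, f_t, o_t in zip(z, f, o):
        c = [ft * cp + (1.0 - ft) * zt for ft, cp, zt in zip(f_t, c, z_t)]
        out.append([ot * ct for ot, ct in zip(o_t, c)])
    return out

def ifo_pooling(z, f, i, o, c0):
    """ifo-pooling: c_t = f_t * c_{t-1} + i_t * z_t; h_t = o_t * c_t."""
    c, out = c0, []
    for z_t, f_t, i_t, o_t in zip(z, f, i, o):
        c = [ft * cp + it * zt for ft, cp, it, zt in zip(f_t, c, i_t, z_t)]
        out.append([ot * ct for ot, ct in zip(o_t, c)])
    return out

# Usage: two time steps, hidden size 1, gates given directly as numbers.
z = [[1.0], [1.0]]
f = [[0.5], [0.5]]
hidden = f_pooling(z, f, [0.0])
```

Because each step only multiplies and adds vectors element by element, the sequential part carries no weight matrices; all learned parameters sit in the convolutions that produce `z` and the gates.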

For details, see: QRNN

12. Persistent RNN

13. SRU

Figure 13.1 Structure of the SRU model

For details, see: SRU

References:

- [RNN&Depth] - Pascanu R, Gulcehre C, Cho K, et al. How to construct deep recurrent neural networks[J]. arXiv preprint arXiv:1312.6026, 2013.

- [survey] - Lipton Z C, Berkowitz J, Elkan C. A critical review of recurrent neural networks for sequence learning[J]. arXiv preprint arXiv:1506.00019, 2015.

- [survey] - Jozefowicz R, Zaremba W, Sutskever I. An empirical exploration of recurrent network architectures[C]//Proceedings of the 32nd International Conference on Machine Learning (ICML-15). 2015: 2342-2350.

- [survey] - Greff K, Srivastava R K, Koutník J, et al. LSTM: A search space odyssey[J]. IEEE transactions on neural networks and learning systems, 2017.

- [survey] - Karpathy A, Johnson J, Fei-Fei L. Visualizing and understanding recurrent networks[J]. arXiv preprint arXiv:1506.02078, 2015.

- [RNN] - Elman, Jeffrey L. “Finding structure in time.” Cognitive science 14.2 (1990): 179-211.

- [BiRNN] - Schuster, Mike, and Kuldip K. Paliwal. “Bidirectional recurrent neural networks.” IEEE Transactions on Signal Processing 45.11 (1997): 2673-2681.

- [LSTM] - Hochreiter, Sepp, and Jürgen Schmidhuber. “Long short-term memory.” Neural computation 9.8 (1997): 1735-1780

- [LSTM] - Understanding LSTM Networks

- [LSTM Variants] - Gers F A, Schmidhuber J. Recurrent nets that time and count[C]//Neural Networks, 2000. IJCNN 2000, Proceedings of the IEEE-INNS-ENNS International Joint Conference on. IEEE, 2000, 3: 189-194.

- [Multi-dimensional RNN] - Alex Graves, Santiago Fernandez, and Jurgen Schmidhuber, Multi-Dimensional Recurrent Neural Networks, ICANN 2007

- [GFRNN] - Junyoung Chung, Caglar Gulcehre, Kyunghyun Cho, Yoshua Bengio, Gated Feedback Recurrent Neural Networks, arXiv:1502.02367 / ICML 2015

- [Tree-Structured RNNs] - Kai Sheng Tai, Richard Socher, and Christopher D. Manning, Improved Semantic Representations From Tree-Structured Long Short-Term Memory Networks, arXiv:1503.00075 / ACL 2015

- [Tree-Structured RNNs] - Samuel R. Bowman, Christopher D. Manning, and Christopher Potts, Tree-structured composition in neural networks without tree-structured architectures, arXiv:1506.04834

- [Clockwork RNN] - Koutník J, Greff K, Gomez F, et al. A Clockwork RNN[J]. arXiv preprint arXiv:1402.3511, 2014.

- [Depth Gated RNN] - Yao K, Cohn T, Vylomova K, et al. Depth-gated recurrent neural networks[J]. arXiv preprint, 2015.

- [Grid LSTM] - Kalchbrenner N, Danihelka I, Graves A. Grid long short-term memory[J]. arXiv preprint arXiv:1507.01526, 2015.

- [Segmental RNN] - Lingpeng Kong, Chris Dyer, Noah Smith, "Segmental Recurrent Neural Networks", ICLR 2016.

- [Seq2seq for Sets] - Oriol Vinyals, Samy Bengio, Manjunath Kudlur, "Order Matters: Sequence to sequence for sets", ICLR 2016.

- [Hierarchical Recurrent Neural Networks] - Junyoung Chung, Sungjin Ahn, Yoshua Bengio, "Hierarchical Multiscale Recurrent Neural Networks", arXiv:1609.01704

- [DRAW] - Gregor K, Danihelka I, Graves A, et al. DRAW: A recurrent neural network for image generation[J]. arXiv preprint arXiv:1502.04623, 2015.

- [RLVM] - Chung J, Kastner K, Dinh L, et al. A recurrent latent variable model for sequential data[C]//Advances in neural information processing systems. 2015: 2980-2988.

- [Generate] - Bayer J, Osendorfer C. Learning stochastic recurrent networks[J]. arXiv preprint arXiv:1411.7610, 2014.

- [GRU] - Cho K, Van Merriënboer B, Gulcehre C, et al. Learning phrase representations using RNN encoder-decoder for statistical machine translation[J]. arXiv preprint arXiv:1406.1078, 2014.

- [GRU] - Cho K, Van Merriënboer B, Bahdanau D, et al. On the properties of neural machine translation: Encoder-decoder approaches[J]. arXiv preprint arXiv:1409.1259, 2014.

- [GRU] - Chung, Junyoung, et al. “Empirical evaluation of gated recurrent neural networks on sequence modeling.” arXiv preprint arXiv:1412.3555 (2014).

- [NTM] - Graves, Alex, Greg Wayne, and Ivo Danihelka. “Neural turing machines.” arXiv preprint arXiv:1410.5401 (2014).

- [Neural GPU] - Łukasz Kaiser, Ilya Sutskever, arXiv:1511.08228 / ICML 2016 (under review)

- [QRNN] - Bradbury J, Merity S, Xiong C, et al. Quasi-recurrent neural networks[J]. arXiv preprint arXiv:1611.01576, 2016.

- [Memory Network] - Jason Weston, Sumit Chopra, Antoine Bordes, Memory Networks, arXiv:1410.3916

- [Pointer Network] - Oriol Vinyals, Meire Fortunato, and Navdeep Jaitly, Pointer Networks, arXiv:1506.03134 / NIPS 2015

- [Deep Attention Recurrent Q-Network] - Ivan Sorokin, Alexey Seleznev, Mikhail Pavlov, Aleksandr Fedorov, Anastasiia Ignateva, Deep Attention Recurrent Q-Network, arXiv:1512.01693

- [Dynamic Memory Networks] - Ankit Kumar, Ozan Irsoy, Peter Ondruska, Mohit Iyyer, James Bradbury, Ishaan Gulrajani, Victor Zhong, Romain Paulus, Richard Socher, "Ask Me Anything: Dynamic Memory Networks for Natural Language Processing", arXiv:1506.07285

- [SRU] - Lei T, Zhang Y. Training RNNs as Fast as CNNs[J]. arXiv preprint arXiv:1709.02755, 2017.

- [Zhihu] - How should we evaluate the newly proposed RNN variant SRU?

- [attention] - Xu K, Ba J, Kiros R, et al. Show, attend and tell: Neural image caption generation with visual attention[C]//International Conference on Machine Learning. 2015: 2048-2057.

- [Persistent RNN] - Diamos G, Sengupta S, Catanzaro B, et al. Persistent rnns: Stashing recurrent weights on-chip[C]//International Conference on Machine Learning. 2016: 2024-2033.

- [Persistent RNN] - Diamos G, Sengupta S, Catanzaro B, et al. Persistent RNNs: Stashing Weights on Chip[J]. 2016.

- [github] - Awesome Recurrent Neural Networks.
