Deploying a Highly Available Ingress on Kubernetes

Official documentation: https://kubernetes.github.io/ingress-nginx/deploy/

Download the ingress manifest

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/master/deploy/static/mandatory.yaml

Label the node

kubectl label node k8snode1 ingresscontroller=true

kubectl get nodes --show-labels

Appendix:

- Remove the label

kubectl label node k8snode1 ingresscontroller-

- Update the label

kubectl label node k8snode1 ingresscontroller=false --overwrite

1. Change the Deployment to a DaemonSet and comment out the replica count

kind: DaemonSet

#replicas: 1
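The same edit can be scripted instead of made by hand. The following is a minimal sketch, assuming that `kind: Deployment` starts in column 0 and that the only `replicas:` key in the file belongs to that Deployment, as is the case in this mandatory.yaml. The function name `to_daemonset` and the demo fragment are hypothetical and only serve as illustration.

```shell
# Sketch: turn the controller Deployment in a manifest into a DaemonSet.
# Assumption: "kind: Deployment" starts in column 0 and the only
# "replicas:" key belongs to that Deployment, as in mandatory.yaml.
to_daemonset() {
  # $1: path to the manifest; the patched manifest goes to stdout.
  sed -e 's/^kind: Deployment$/kind: DaemonSet/' \
      -e 's/^\([[:space:]]*\)replicas:/\1#replicas:/' "$1"
}

# Demo on a small hypothetical fragment instead of the real file.
demo=$(mktemp)
printf '%s\n' 'apiVersion: apps/v1' 'kind: Deployment' 'spec:' '  replicas: 1' > "$demo"
to_daemonset "$demo"
rm -f "$demo"
```

Writing to stdout rather than editing in place keeps the downloaded file untouched, so the result can be reviewed (for example with diff) before it replaces the original.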

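2. Enable hostNetwork and pin the controller to specific nodes

hostNetwork binds the service ports of the ingress-nginx controller directly on the host, so every other machine on the node's network can reach the controller through those ports.

nodeSelector restricts scheduling to the nodes that were labeled ingresscontroller=true earlier.

hostNetwork: true
nodeSelector:
  ingresscontroller: 'true'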

3. Change the image address

registry.cn-hangzhou.aliyuncs.com/google_containers/nginx-ingress-controller:0.24.1

4. Change the container ports

args:
  - /nginx-ingress-controller
  - --configmap=$(POD_NAMESPACE)/nginx-configuration
  - --tcp-services-configmap=$(POD_NAMESPACE)/tcp-services
  - --udp-services-configmap=$(POD_NAMESPACE)/udp-services
  - --publish-service=$(POD_NAMESPACE)/ingress-nginx
  - --annotations-prefix=nginx.ingress.kubernetes.io
  - --http-port=88 # default: 80
  - --https-port=4433 # default: 443
ports:
  - name: http
    containerPort: 88 # changed to 88
  - name: https
    containerPort: 4433 # changed to 4433
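The port numbers appear twice, once in the controller arguments and once under ports, so it is easy to change one and forget the other. The following is a rough sketch of a consistency check, assuming a manifest that contains a single controller container. The function name `ports_consistent` and the demo fragment are hypothetical, and the check only compares numbers, not port names.

```shell
# Sketch: verify that the ports passed via --http-port / --https-port
# also appear as containerPort entries in the same manifest.
ports_consistent() {
  # $1: path to the manifest; returns 0 when both ports are declared.
  pc_http=$(sed -n 's/.*--http-port=\([0-9][0-9]*\).*/\1/p' "$1" | head -n 1)
  pc_https=$(sed -n 's/.*--https-port=\([0-9][0-9]*\).*/\1/p' "$1" | head -n 1)
  # Missing flags count as a failure rather than a silent pass.
  [ -n "$pc_http" ] && [ -n "$pc_https" ] || return 1
  grep -Eq "containerPort:[[:space:]]*${pc_http}([^0-9]|\$)" "$1" &&
    grep -Eq "containerPort:[[:space:]]*${pc_https}([^0-9]|\$)" "$1"
}

# Demo on a hypothetical fragment that mirrors the snippet above.
demo=$(mktemp)
cat > "$demo" <<'EOF'
args:
  - --http-port=88
  - --https-port=4433
ports:
  - name: http
    containerPort: 88
  - name: https
    containerPort: 4433
EOF
if ports_consistent "$demo"; then echo "ports match"; else echo "ports differ"; fi
rm -f "$demo"
```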

5. Add a toleration for the master node taint

tolerations: # added so the pods can be scheduled onto master nodes
  - key: "node-role.kubernetes.io/master"
    operator: "Exists"
    effect: "NoSchedule"

6. The complete mandatory.yaml after these changes

vim mandatory.yaml

apiVersion: v1
kind: Namespace
metadata:
  name: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: nginx-configuration
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: tcp-services
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: udp-services
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: nginx-ingress-serviceaccount
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: nginx-ingress-clusterrole
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
rules:
  - apiGroups:
      - ""
    resources:
      - configmaps
      - endpoints
      - nodes
      - pods
      - secrets
    verbs:
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - nodes
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - services
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - ""
    resources:
      - events
    verbs:
      - create
      - patch
  - apiGroups:
      - "extensions"
      - "networking.k8s.io"
    resources:
      - ingresses
    verbs:
      - get
      - list
      - watch
  - apiGroups:
      - "extensions"
      - "networking.k8s.io"
    resources:
      - ingresses/status
    verbs:
      - update
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: Role
metadata:
  name: nginx-ingress-role
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
rules:
  - apiGroups:
      - ""
    resources:
      - configmaps
      - pods
      - secrets
      - namespaces
    verbs:
      - get
  - apiGroups:
      - ""
    resources:
      - configmaps
    resourceNames:
      # Defaults to "<election-id>-<ingress-class>"
      # Here: "<ingress-controller-leader>-<nginx>"
      # This has to be adapted if you change either parameter
      # when launching the nginx-ingress-controller.
      - "ingress-controller-leader-nginx"
    verbs:
      - get
      - update
  - apiGroups:
      - ""
    resources:
      - configmaps
    verbs:
      - create
  - apiGroups:
      - ""
    resources:
      - endpoints
    verbs:
      - get
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: RoleBinding
metadata:
  name: nginx-ingress-role-nisa-binding
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: Role
  name: nginx-ingress-role
subjects:
  - kind: ServiceAccount
    name: nginx-ingress-serviceaccount
    namespace: ingress-nginx
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: nginx-ingress-clusterrole-nisa-binding
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: nginx-ingress-clusterrole
subjects:
  - kind: ServiceAccount
    name: nginx-ingress-serviceaccount
    namespace: ingress-nginx
---

apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: nginx-ingress-controller
  namespace: ingress-nginx
  labels:
    app.kubernetes.io/name: ingress-nginx
    app.kubernetes.io/part-of: ingress-nginx
spec:
  selector:
    matchLabels:
      app.kubernetes.io/name: ingress-nginx
      app.kubernetes.io/part-of: ingress-nginx
  template:
    metadata:
      labels:
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
      annotations:
        prometheus.io/port: "10254"
        prometheus.io/scrape: "true"
    spec:
      # wait up to five minutes for the drain of connections
      terminationGracePeriodSeconds: 300
      serviceAccountName: nginx-ingress-serviceaccount
      hostNetwork: true
      nodeSelector:
        ingresscontroller: 'true'
      tolerations: # added so the pods can be scheduled onto master nodes
        - key: "node-role.kubernetes.io/master"
          operator: "Exists"
          effect: "NoSchedule"
      containers:
        - name: nginx-ingress-controller
          image: registry.cn-hangzhou.aliyuncs.com/google_containers/nginx-ingress-controller:0.24.1
          args:
            - /nginx-ingress-controller
            - --configmap=$(POD_NAMESPACE)/nginx-configuration
            - --tcp-services-configmap=$(POD_NAMESPACE)/tcp-services
            - --udp-services-configmap=$(POD_NAMESPACE)/udp-services
            - --publish-service=$(POD_NAMESPACE)/ingress-nginx
            - --annotations-prefix=nginx.ingress.kubernetes.io
            - --http-port=88 # default: 80
            - --https-port=4433 # default: 443
          securityContext:
            allowPrivilegeEscalation: true
            capabilities:
              drop:
                - ALL
              add:
                - NET_BIND_SERVICE
            # www-data -> 33
            runAsUser: 33
          env:
            - name: POD_NAME
              valueFrom:
                fieldRef:
                  fieldPath: metadata.name
            - name: POD_NAMESPACE
              valueFrom:
                fieldRef:
                  fieldPath: metadata.namespace
          ports:
            - name: http
              containerPort: 88 # changed to 88
            - name: https
              containerPort: 4433 # changed to 4433
          livenessProbe:
            failureThreshold: 3
            httpGet:
              path: /healthz
              port: 10254
              scheme: HTTP
            initialDelaySeconds: 10
            periodSeconds: 10
            successThreshold: 1
            timeoutSeconds: 10
          readinessProbe:
            failureThreshold: 3
            httpGet:
              path: /healthz
              port: 10254
              scheme: HTTP
            periodSeconds: 10
            successThreshold: 1
            timeoutSeconds: 10
          lifecycle:
            preStop:
              exec:
                command:
                  - /wait-shutdown

Testing

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx-static
  labels:
    name: nginx-static
spec:
  replicas: 1
  # apps/v1 requires an explicit selector that matches the pod labels
  selector:
    matchLabels:
      name: nginx-static
  template:
    metadata:
      labels:
        name: nginx-static
    spec:
      containers:
        - name: nginx-static
          image: nginx:latest
          volumeMounts:
            - mountPath: /etc/localtime
              name: vol-localtime
              readOnly: true
          ports:
            - containerPort: 80
      volumes:
        - name: vol-localtime
          hostPath:
            path: /etc/localtime
---
apiVersion: v1
kind: Service
metadata:
  name: nginx-static
  labels:
    name: nginx-static
spec:
  ports:
    - port: 80
      protocol: TCP
      targetPort: 80
      name: http
  selector:
    name: nginx-static
---
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: submodule-checker-ingress
spec:
  rules:
    - host: nginx.test.pub
      http:
        paths:
          - backend:
              serviceName: nginx-static
              servicePort: 80

kubectl create -f nginx-static.yaml

Access

vim /etc/hosts

127.0.0.1 localhost localhost.localdomain localhost4 localhost4.localdomain4
::1 localhost localhost.localdomain localhost6 localhost6.localdomain6
10.x.x.x master1 nginx.test.pub
10.x.x.x master2

[root@master1 ingress]# curl nginx.test.pub
<html>
<head><title>308 Permanent Redirect</title></head>
<body>
<center><h1>308 Permanent Redirect</h1></center>
<hr><center>nginx/1.16.1</center>
</body>
</html>

1.1 Ingress简介

1.1.1需求

coredns是实现pods之间通过域名访问,如果外部需要访问service服务,需访问对应的NodeIP:Port。但是由于NodePort需要指定宿主机端口,一旦服务多起来,多个端口就难以管理。那么,这种情况下,使用Ingress暴露服务更加合适。

1.1.2 Ingress组件说明

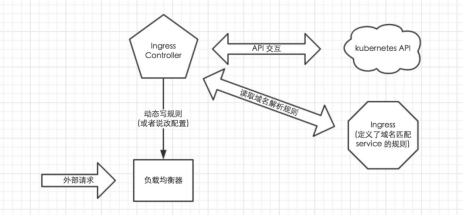

使用Ingress时一般会有三个组件:反向代理负载均衡器、Ingress Controller、Ingress

1、反向代理负载均衡器

反向代理负载均衡器很简单,说白了就是 nginx、apache 等中间件,新版k8s已经将Nginx与Ingress Controller合并为一个组件,所以Nginx无需单独部署,只需要部署Ingress Controller即可。在集群中反向代理负载均衡器可以自由部署,可以使用 Replication Controller、Deployment、DaemonSet 等等方式

2、Ingress Controller

Ingress Controller 实质上可以理解为是个监视器,Ingress Controller 通过不断地跟 kubernetes API 打交道,实时的感知后端 service、pod 等变化,比如新增和减少 pod,service 增加与减少等;当得到这些变化信息后,Ingress Controller 再结合下文的 Ingress 生成配置,然后更新反向代理负载均衡器,并刷新其配置,达到服务发现的作用

3、Ingress

Ingress 简单理解就是个规则定义;比如说某个域名对应某个 service,即当某个域名的请求进来时转发给某个 service;这个规则将与 Ingress Controller 结合,然后 Ingress Controller 将其动态写入到负载均衡器配置中,从而实现整体的服务发现和负载均衡

整体关系如下图所示:

从上图中可以很清晰的看到,实际上请求进来还是被负载均衡器拦截,比如 nginx,然后 Ingress Controller 通过跟 Ingress 交互得知某个域名对应哪个 service,再通过跟 kubernetes API 交互得知 service 地址等信息;综合以后生成配置文件实时写入负载均衡器,然后负载均衡器 reload 该规则便可实现服务发现,即动态映射。

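1.1.3 How Nginx-Ingress works

The ingress controller talks to the Kubernetes API and dynamically detects changes to the Ingress rules in the cluster. It reads those rules, each of which states which domain name maps to which Service, and renders them through its template into a piece of nginx configuration. That configuration is written into the nginx-ingress-controller pod, which runs an Nginx service: the controller writes the generated configuration to /etc/nginx/nginx.conf and then reloads Nginx so that it takes effect. This is how per-domain routing and dynamic updates are achieved.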
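The rule-to-configuration step described above can be pictured with a toy renderer. This is only an illustrative sketch, not the controller's actual template: the real controller renders a much larger nginx.conf and proxies to the endpoints behind each Service rather than to a Service name. The function name `render_server_blocks` and the three-column input format are assumptions made for this example.

```shell
# Sketch: render one nginx server block per "<host> <service> <port>" rule.
# Toy model only; the real controller uses its own, far larger template.
render_server_blocks() {
  # stdin: one rule per line, fields separated by whitespace.
  while read -r host svc port; do
    # Skip empty lines.
    [ -n "$host" ] || continue
    cat <<EOF
server {
    server_name ${host};
    location / {
        proxy_pass http://${svc}:${port};
    }
}
EOF
  done
}

# Demo with the rule from the test Ingress in this article.
printf '%s\n' 'nginx.test.pub nginx-static 80' | render_server_blocks
```

Every time a rule is added or removed, the whole output is regenerated and nginx is reloaded, which is the loop the paragraph above describes.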

Note: nginx-based ingress controllers come in two variants, depending on who develops them:

- ingress-nginx from the Kubernetes community (https://github.com/kubernetes/ingress-nginx)

- nginx-ingress from NGINX, Inc. (https://github.com/nginxinc/kubernetes-ingress)

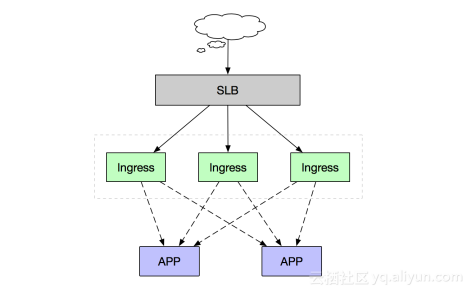

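1.1.4 Highly available Ingress Controller architecture

As the traffic entry layer of the cluster, the Ingress Controller must be highly available. The first problem to solve is the single point of failure, and the usual answer is to run multiple replicas. A highly available Ingress Controller entry layer in a Kubernetes cluster likewise uses a multi-node deployment. Because Ingress is the entry point for cluster traffic, dedicated Ingress nodes are recommended so that business applications and the Ingress service do not compete for resources.

As the deployment architecture diagram above shows, several dedicated Ingress instances form a unified entry layer that carries the cluster's inbound traffic, and the Ingress nodes can be scaled out or in according to backend traffic. If the cluster is still small, the Ingress service can also share nodes with business applications, but resource limits and isolation are then recommended.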
