(Original) Stanford Machine Learning (by Andrew NG) --- (week 1) Linear Regression

Andrew Ng's Machine Learning course is available at: https://www.coursera.org/course/ml

The Linear Regression part introduces several new terms that come up frequently in the later lectures:

| English term | Chinese term |
| --- | --- |
| Cost Function | 损失函数 |
| Linear Regression | 线性回归 |
| Gradient Descent | 梯度下降 |
| Normal Equation | 正规方程 |
| Feature Scaling | 特征归一化 |
| Mean normalization | 均值标准化 |

Model Representation

- m: number of training examples

- $x^{(i)}$: input (features) of the i-th training example

- $x_j^{(i)}$: value of feature j in the i-th training example

- $y^{(i)}$: “output” variable / “target” variable of the i-th training example

- n: number of features

- θ: parameters

- Hypothesis: $h_\theta(x) = \theta_0 + \theta_1 x_1 + \theta_2 x_2 + \dots + \theta_n x_n$

Cost Function

IDEA: Choose θ so that $h_\theta(x)$ is close to y for our training examples (x, y).

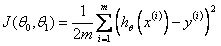

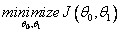

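A. Linear Regression with One Variable: Cost Function

Cost Function: $J(\theta_0, \theta_1) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$, where $h_\theta(x) = \theta_0 + \theta_1 x$

Goal: $\min_{\theta_0, \theta_1} J(\theta_0, \theta_1)$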

Contour Plot:

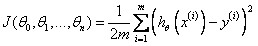

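B. Linear Regression with Multiple Variables: Cost Function

Cost Function: $J(\theta) = \frac{1}{2m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right)^2$

Goal: $\min_{\theta} J(\theta)$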
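To make the cost function concrete, here is a minimal sketch in plain Python (not the course's Octave). The names `hypothesis` and `compute_cost` and the tiny data set are assumptions chosen for illustration. Each row of `X` is assumed to start with the constant feature $x_0 = 1$, so that $\theta_0$ acts as the intercept.

```python
def hypothesis(theta, x):
    """h_theta(x) = theta_0*x_0 + ... + theta_n*x_n, with x_0 = 1."""
    return sum(t * xj for t, xj in zip(theta, x))


def compute_cost(X, y, theta):
    """J(theta) = 1/(2m) * sum of squared errors over the m examples."""
    m = len(y)
    return sum((hypothesis(theta, xi) - yi) ** 2 for xi, yi in zip(X, y)) / (2 * m)


# Illustrative data generated from y = 1 + 2*x (first column is x_0 = 1).
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [3.0, 5.0, 7.0]

cost_at_zero = compute_cost(X, y, [0.0, 0.0])   # (9 + 25 + 49) / 6
cost_at_truth = compute_cost(X, y, [1.0, 2.0])  # perfect fit, so the cost is 0
```

The cost is large for a poor guess such as θ = (0, 0) and drops to zero at the parameters that generated the data, which is exactly the quantity the goal above asks us to minimize.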

Gradient Descent

Outline

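Gradient Descent Algorithm: repeat until convergence, updating every $\theta_j$ (j = 0, …, n) simultaneously, with $x_0^{(i)} = 1$:

$\theta_j := \theta_j - \alpha \frac{\partial}{\partial \theta_j} J(\theta) = \theta_j - \alpha \frac{1}{m} \sum_{i=1}^{m} \left( h_\theta(x^{(i)}) - y^{(i)} \right) x_j^{(i)}$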

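The iterations may converge along a path like the following:

(This is a contour plot; the center is the minimum point, and the blue arc in the figure is one possible convergence path.)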
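The batch update rule, $\theta_j := \theta_j - \alpha \frac{1}{m} \sum_i (h_\theta(x^{(i)}) - y^{(i)}) x_j^{(i)}$, can be sketched in plain Python as follows. This is an illustration rather than the course's Octave solution: the function names, the toy data, and the choices α = 0.1 and 2000 iterations are all assumptions.

```python
def cost(X, y, theta):
    """J(theta) = 1/(2m) * sum_i (h_theta(x^(i)) - y^(i))^2."""
    m = len(y)
    return sum((sum(t * xj for t, xj in zip(theta, xi)) - yi) ** 2
               for xi, yi in zip(X, y)) / (2 * m)


def gradient_descent(X, y, theta, alpha, num_iters):
    """Batch gradient descent; returns final theta and the cost per iteration."""
    m = len(y)
    history = []
    for _ in range(num_iters):
        # Prediction errors h_theta(x^(i)) - y^(i) under the current theta.
        errors = [sum(t * xj for t, xj in zip(theta, xi)) - yi
                  for xi, yi in zip(X, y)]
        # Partial derivative of J with respect to each theta_j.
        grads = [sum(e * xi[j] for e, xi in zip(errors, X)) / m
                 for j in range(len(theta))]
        # Simultaneous update: every theta_j is computed from the old theta.
        theta = [t - alpha * g for t, g in zip(theta, grads)]
        history.append(cost(X, y, theta))
    return theta, history


# Toy data from y = 1 + 2*x; the first column is the constant feature x_0 = 1.
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [3.0, 5.0, 7.0]
theta, history = gradient_descent(X, y, [0.0, 0.0], alpha=0.1, num_iters=2000)
```

Recording the cost after every step makes it easy to check convergence: on this data the recorded values fall steadily and `theta` approaches (1, 2).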

Learning Rate α:

1) If α is too small, gradient descent can be slow to converge;

2) If α is too large, J(θ) may not decrease on every iteration, and gradient descent may fail to converge;

3) For sufficiently small α, J(θ) should decrease on every iteration;

Choosing the learning rate α: debug by trying a range of values, e.g. 0.001, 0.003, 0.006, 0.01, 0.03, 0.06, 0.1, 0.3, 0.6, 1.0, and check for each whether J(θ) decreases on every iteration;
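The effect of α can be seen by running the same descent with several learning rates and comparing the recorded costs. The sketch below is an assumption-laden illustration: `cost_history`, the toy data, the three α values, and the 50-iteration budget are chosen only to show the three behaviours listed above.

```python
def cost_history(X, y, alpha, num_iters):
    """Run batch gradient descent from theta = 0 and record J(theta) each step."""
    m, n = len(y), len(X[0])
    theta = [0.0] * n
    costs = []
    for _ in range(num_iters + 1):
        errors = [sum(t * xj for t, xj in zip(theta, xi)) - yi
                  for xi, yi in zip(X, y)]
        costs.append(sum(e * e for e in errors) / (2 * m))
        grads = [sum(e * xi[j] for e, xi in zip(errors, X)) / m for j in range(n)]
        theta = [t - alpha * g for t, g in zip(theta, grads)]
    return costs


# Toy data from y = 1 + 2*x; the first column is the constant feature x_0 = 1.
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [3.0, 5.0, 7.0]
runs = {alpha: cost_history(X, y, alpha, num_iters=50) for alpha in (0.001, 0.1, 0.6)}
for alpha, costs in runs.items():
    print(f"alpha={alpha}: J went from {costs[0]:.4f} to {costs[-1]:.4g}")
```

On this particular data, α = 0.001 lowers the cost only slowly, α = 0.1 lowers it quickly, and α = 0.6 makes the cost grow instead of shrink. The thresholds depend on the data, so they should not be read as general rules.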

“Batch” Gradient Descent: Each step of gradient descent uses all the training examples;

“Stochastic” Gradient Descent: Each step of gradient descent uses only one training example.
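For contrast with the batch version, a stochastic variant updates θ after looking at a single example. The following is a rough sketch under stated assumptions: the function name, the fixed random seed, the per-epoch shuffling, α = 0.05, and the toy data are illustrative choices, not taken from the course material.

```python
import random


def stochastic_gradient_descent(X, y, theta, alpha, num_epochs, seed=0):
    """Update theta from one training example at a time, in shuffled order."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    order = list(range(len(y)))
    for _ in range(num_epochs):
        rng.shuffle(order)
        for i in order:
            # Error of the current hypothesis on example i only.
            error = sum(t * xj for t, xj in zip(theta, X[i])) - y[i]
            # One step using the gradient of that single example's squared error.
            theta = [t - alpha * error * xj for t, xj in zip(theta, X[i])]
    return theta


# Toy data from y = 1 + 2*x; the first column is the constant feature x_0 = 1.
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [3.0, 5.0, 7.0]
theta = stochastic_gradient_descent(X, y, [0.0, 0.0], alpha=0.05, num_epochs=2000)
```

Because this toy data is noise-free, the per-example steps settle near (1, 2). On noisy data the parameters typically keep fluctuating around the minimum rather than converging exactly.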

Normal Equation

IDEA: Method to solve for θ analytically.

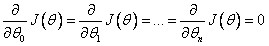

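Set $\frac{\partial}{\partial \theta_j} J(\theta) = 0$ for every j, then solve for θ:

$\theta = (X^T X)^{-1} X^T y$

where X is the m × (n+1) matrix whose i-th row is $(x^{(i)})^T$ with $x_0^{(i)} = 1$, and y is the vector of the m target values.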

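Restriction: the Normal Equation does not work when $X^T X$ is non-invertible.

PS: A square matrix is invertible when it has full rank. For $X^T X$ this means that the column vectors of X (the features) must be linearly independent, which in turn requires at least as many linearly independent row vectors (samples) as there are columns (features).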
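As an illustration of the analytic solution $\theta = (X^T X)^{-1} X^T y$ and of the non-invertible case, here is a small sketch in plain Python. It solves the linear system $(X^T X)\theta = X^T y$ by Gauss-Jordan elimination instead of forming the inverse explicitly; the function name, the singularity tolerance of 1e-12, and the toy data are assumptions made for this example.

```python
def normal_equation(X, y):
    """Solve (X^T X) theta = X^T y by Gauss-Jordan elimination with pivoting."""
    n = len(X[0])
    # Augmented matrix [X^T X | X^T y], one row per parameter.
    A = [[sum(row[i] * row[j] for row in X) for j in range(n)]
         + [sum(row[i] * yi for row, yi in zip(X, y))]
         for i in range(n)]
    for col in range(n):
        # Pick the row with the largest entry in this column as the pivot.
        pivot = max(range(col, n), key=lambda r: abs(A[r][col]))
        if abs(A[pivot][col]) < 1e-12:
            raise ValueError("X^T X is singular (non-invertible)")
        A[col], A[pivot] = A[pivot], A[col]
        # Eliminate this column from every other row.
        for r in range(n):
            if r != col:
                factor = A[r][col] / A[col][col]
                A[r] = [a - factor * b for a, b in zip(A[r], A[col])]
    return [A[i][n] / A[i][i] for i in range(n)]


# Toy data from y = 1 + 2*x; the first column is the constant feature x_0 = 1.
X = [[1.0, 1.0], [1.0, 2.0], [1.0, 3.0]]
y = [3.0, 5.0, 7.0]
theta = normal_equation(X, y)

# A redundant feature (third column = 2 * second column) makes X^T X singular.
X_redundant = [[1.0, 1.0, 2.0], [1.0, 2.0, 4.0], [1.0, 3.0, 6.0]]
try:
    normal_equation(X_redundant, y)
    singular_detected = False
except ValueError:
    singular_detected = True
```

The second call shows the restriction in practice: with linearly dependent features the system has no unique solution, so the sketch raises an error rather than returning arbitrary parameters.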

Gradient Descent Algorithm VS. Normal Equation

Gradient Descent:

- Need to choose α;

- Needs many iterations;

- Works well even when n is large; (n > 1000 is appropriate)

Normal Equation:

- No need to choose α;

- Don’t need to iterate;

- Need to compute $(X^T X)^{-1}$;

- Slow if n is very large. (n < 1000 is OK)

Feature Scaling

IDEA: Make sure features are on a similar scale.

Benefit: fewer iterations are needed, so gradient descent converges faster.

Example: If we aim to get every feature into approximately the range $-1 \le x_i \le 1$, features whose values lie in [-3, 3] or [-1/3, 1/3] are still acceptable.

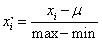

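Mean normalization: replace $x_i$ with $\frac{x_i - \mu_i}{s_i}$, where $\mu_i$ is the mean of feature i over the training set and $s_i$ is its range (max − min) or its standard deviation, so that each feature has approximately zero mean.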
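A minimal sketch of mean normalization, $x := (x - \mu) / s$ with s taken as the range (max − min), is shown below in plain Python. The function name and the house-size values are hypothetical, and the sketch assumes the feature is not constant (otherwise the range would be zero).

```python
def mean_normalize(column):
    """Return (scaled values, mean, range) using x := (x - mean) / (max - min)."""
    mu = sum(column) / len(column)
    spread = max(column) - min(column)  # assumed non-zero (feature not constant)
    return [(v - mu) / spread for v in column], mu, spread


sizes = [1000.0, 1500.0, 2000.0, 3000.0]  # hypothetical house sizes
scaled, mu, spread = mean_normalize(sizes)
```

The returned `mu` and `spread` should be kept: a new input has to be scaled with the same two values before it is passed to the learned hypothesis.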

HOMEWORK

Now that we have gone through the video lectures, let's do the homework. Below is the core code for the Linear Regression assignment:

1. computeCost.m/computeCostMulti.m

J = 1/(2*m) * sum((X*theta - y).^2);  % squared errors summed over the m examples, divided by 2m

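2. gradientDescent.m/gradientDescentMulti.m

h = X*theta - y;      % prediction errors for all m examples

v = X'*h;             % sum of error times feature, one entry per theta_j

v = v*alpha/m;        % scale by the learning rate and by 1/m

theta1 = theta;       % keep a copy of the previous parameters

theta = theta - v;    % simultaneous update of every theta_j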
