[转帖]CPU Utilization is Wrong

CPU Utilization is Wrong

09 May 2017

The metric we all use for CPU utilization is deeply misleading, and getting worse every year. What is CPU utilization? How busy your processors are? No, that's not what it measures. Yes, I'm talking about the "%CPU" metric used everywhere, by everyone. In every performance monitoring product. In top(1).

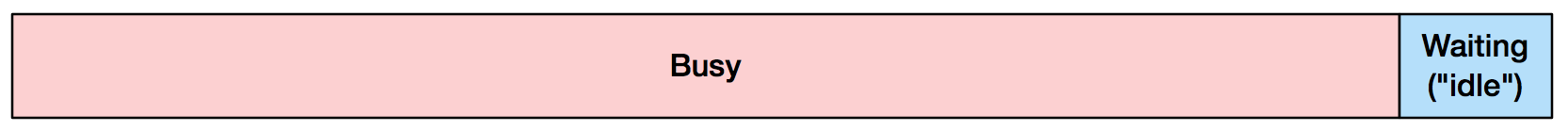

What you may think 90% CPU utilization means:

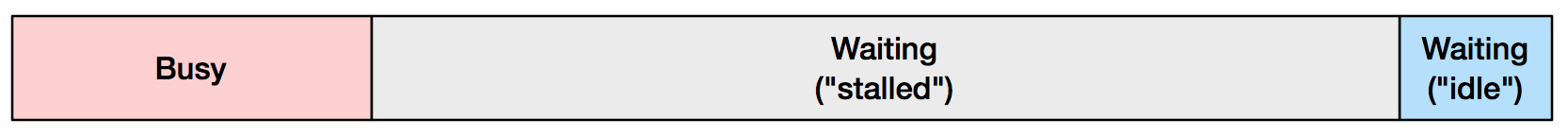

What it might really mean:

Stalled means the processor was not making forward progress with instructions, and usually happens because it is waiting on memory I/O. The ratio I drew above (between busy and stalled) is what I typically see in production. Chances are, you're mostly stalled, but don't know it.

What does this mean for you? Understanding how much your CPUs are stalled can direct performance tuning efforts between reducing code or reducing memory I/O. Anyone looking at CPU performance, especially on clouds that auto scale based on CPU, would benefit from knowing the stalled component of their %CPU.

What really is CPU Utilization?

The metric we call CPU utilization is really "non-idle time": the time the CPU was not running the idle thread. Your operating system kernel (whatever it is) usually tracks this during context switch. If a non-idle thread begins running, then stops 100 milliseconds later, the kernel considers that CPU utilized that entire time.

This metric is as old as time sharing systems. The Apollo Lunar Module guidance computer (a pioneering time sharing system) called its idle thread the "DUMMY JOB", and engineers tracked cycles running it vs real tasks as a important computer utilization metric. (I wrote about this before.)

So what's wrong with this?

Nowadays, CPUs have become much faster than main memory, and waiting on memory dominates what is still called "CPU utilization". When you see high %CPU in top(1), you might think of the processor as being the bottleneck – the CPU package under the heat sink and fan – when it's really those banks of DRAM.

This has been getting worse. For a long time processor manufacturers were scaling their clockspeed quicker than DRAM was scaling its access latency (the "CPU DRAM gap"). That levelled out around 2005 with 3 GHz processors, and since then processors have scaled using more cores and hyperthreads, plus multi-socket configurations, all putting more demand on the memory subsystem. Processor manufacturers have tried to reduce this memory bottleneck with larger and smarter CPU caches, and faster memory busses and interconnects. But we're still usually stalled.

How to tell what the CPUs are really doing

By using Performance Monitoring Counters (PMCs): hardware counters that can be read using Linux perf, and other tools. For example, measuring the entire system for 10 seconds:

# perf stat -a -- sleep 10

Performance counter stats for 'system wide':

641398.723351 task-clock (msec) # 64.116 CPUs utilized (100.00%)

379,651 context-switches # 0.592 K/sec (100.00%)

51,546 cpu-migrations # 0.080 K/sec (100.00%)

13,423,039 page-faults # 0.021 M/sec

1,433,972,173,374 cycles # 2.236 GHz (75.02%)

<not supported> stalled-cycles-frontend

<not supported> stalled-cycles-backend

1,118,336,816,068 instructions # 0.78 insns per cycle (75.01%)

249,644,142,804 branches # 389.218 M/sec (75.01%)

7,791,449,769 branch-misses # 3.12% of all branches (75.01%)

10.003794539 seconds time elapsed

The key metric here is instructions per cycle (insns per cycle: IPC), which shows on average how many instructions we were completed for each CPU clock cycle. The higher, the better (a simplification). The above example of 0.78 sounds not bad (78% busy?) until you realize that this processor's top speed is an IPC of 4.0. This is also known as 4-wide, referring to the instruction fetch/decode path. Which means, the CPU can retire (complete) four instructions with every clock cycle. So an IPC of 0.78 on a 4-wide system, means the CPUs are running at 19.5% their top speed. Newer Intel processors may move to 5-wide.

There are hundreds more PMCs you can use to dig further: measuring stalled cycles directly by different types.

In the cloud

If you are in a virtual environment, you might not have access to PMCs, depending on whether the hypervisor supports them for guests. I recently posted about The PMCs of EC2: Measuring IPC, showing how PMCs are now available for dedicated host types on the AWS EC2 Xen-based cloud.

Interpretation and actionable items

If your IPC is < 1.0, you are likely memory stalled, and software tuning strategies include reducing memory I/O, and improving CPU caching and memory locality, especially on NUMA systems. Hardware tuning includes using processors with larger CPU caches, and faster memory, busses, and interconnects.

If your IPC is > 1.0, you are likely instruction bound. Look for ways to reduce code execution: eliminate unnecessary work, cache operations, etc. CPU flame graphs are a great tool for this investigation. For hardware tuning, try a faster clock rate, and more cores/hyperthreads.

For my above rules, I split on an IPC of 1.0. Where did I get that from? I made it up, based on my prior work with PMCs. Here's how you can get a value that's custom for your system and runtime: write two dummy workloads, one that is CPU bound, and one memory bound. Measure their IPC, then calculate their mid point.

What performance monitoring products should tell you

Every performance tool should show IPC along with %CPU. Or break down %CPU into instruction-retired cycles vs stalled cycles, eg, %INS and %STL.

As for top(1), there is tiptop(1) for Linux, which shows IPC by process:

tiptop - [root]

Tasks: 96 total, 3 displayed screen 0: default PID [ %CPU] %SYS P Mcycle Minstr IPC %MISS %BMIS %BUS COMMAND

3897 35.3 28.5 4 274.06 178.23 0.65 0.06 0.00 0.0 java

1319+ 5.5 2.6 6 87.32 125.55 1.44 0.34 0.26 0.0 nm-applet

900 0.9 0.0 6 25.91 55.55 2.14 0.12 0.21 0.0 dbus-daemo

Other reasons CPU utilization is misleading

It's not just memory stall cycles that makes CPU utilization misleading. Other factors include:

- Temperature trips stalling the processor.

- Turboboost varying the clockrate.

- The kernel varying the clock rate with speed step.

- The problem with averages: 80% utilized over 1 minute, hiding bursts of 100%.

- Spin locks: the CPU is utilized, and has high IPC, but the app is not making logical forward progress.

Update: is CPU utilization actually wrong?

There have been hundreds of comments on this post, here (below) and elsewhere (1, 2). Thanks to everyone for taking the time and the interest in this topic. To summarize my responses: I'm not talking about iowait at all (that's disk I/O), and there are actionable items if you know you are memory bound (see above).

But is CPU utilization actually wrong, or just deeply misleading? I think many people interpret high %CPU to mean that the processing unit is the bottleneck, which is wrong (as I said earlier). At that point you don't yet know, and it is often something external. Is the metric technically correct? If the CPU stall cycles can't be used by anything else, aren't they are therefore "utilized waiting" (which sounds like an oxymoron)? In some cases, yes, you could say that %CPU as an OS-level metric is technically correct, but deeply misleading. With hyperthreads, however, those stalled cycles can now be used by another thread, so %CPU may count cycles as utilized that are in fact available. That's wrong. In this post I wanted to focus on the interpretation problem and suggested solutions, but yes, there are technical problems with this metric as well.

You might just say that utilization as a metric was already broken, as Adrian Cockcroft discussed previously.

Conclusion

CPU utilization has become a deeply misleading metric: it includes cycles waiting on main memory, which can dominate modern workloads. Perhaps %CPU should be renamed to %CYC, short for cycles. You can figure out what %CPU really means by using additional metrics, including instructions per cycle (IPC). An IPC < 1.0 likely means memory bound, and an IPC > 1.0 likely means instruction bound. I covered IPC in my previous post, including an introduction to the Performance Monitoring Counters (PMCs) needed to measure it.

Performance monitoring products that show %CPU – which is all of them – should also show PMC metrics to explain what that means, and not mislead the end user. For example, they can show %CPU with IPC, and/or instruction-retired cycles vs stalled cycles. Armed with these metrics, developers and operators can choose how to better tune their applications and systems.

[转帖]CPU Utilization is Wrong的更多相关文章

- How do I Find Out Linux CPU Utilization?

From:http://www.cyberciti.biz/tips/how-do-i-find-out-linux-cpu-utilization.html Whenever a Linux sys ...

- 压力测试衡量CPU的三个指标:CPU Utilization、Load Average和Context Switch Rate

分类: 4.软件设计/架构/测试 2010-01-12 19:58 34241人阅读 评论(4) 收藏 举报 测试loadrunnerlinux服务器firebugthread 上篇讲如何用LoadR ...

- Zabbix CPU utilization监控参数

工作中查看Zabbix linux 监控项的时候对linux 监控的cpu使用的各个参数没怎么明白,特意查看了下资料 Zabbix linux模板下的CPU utilization是自带的监控Linu ...

- 【每日一摩斯】-Troubleshooting: High CPU Utilization (164768.1) - 系列6

如果问题是一个正运行的缓慢的查询SQL,那么就应该对该查询进行调优,避免它耗费过高的CPU资源.如果它做了许多的hash连接和全表扫描,那么就应该添加索引以提高效率. 下面的文章可以帮助判断查询的问题 ...

- 【每日一摩斯】-Troubleshooting: High CPU Utilization (164768.1) - 系列5

Oracle(用户)进程 以下这些操作都是需要消耗大量CPU资源的:解析大型查询,存储过程编译或执行,空间管理和排序. 下面这几篇文章可以帮助采集关于使用高CPU资源的进程的更多信息: Note:35 ...

- 【每日一摩斯】-Troubleshooting: High CPU Utilization (164768.1) - 系列4

Jobs (CJQ0, Jn, SNPn) Job进程运行用户定义的以及系统定义的类似于batch的任务.检查Job进程占用大量CPU资源的方法,就像检查用户进程一样. 可以根据以下视图检查Job进程 ...

- [转帖]CPU Cache 机制以及 Cache miss

CPU Cache 机制以及 Cache miss https://www.cnblogs.com/jokerjason/p/10711022.html CPU体系结构之cache小结 1.What ...

- [转帖]CPU 的缓存

缓存这个词想必大家都听过,其实缓存的意义很广泛:电脑整机最大的缓存可以体现为内存条.显卡上的显存就是显卡芯片所需要用到的缓存.硬盘上也有相对应的缓存.CPU有着最快的缓存(L1.L2.L3缓存等),缓 ...

- [转帖]CPU时间片

CPU时间片 https://www.cnblogs.com/xingzc/p/6077214.html CPU的时间片 CPU的利用率好CPU的 load average 是不一样的 Conntex ...

- [转帖]震惊,用了这么多年的 CPU 利用率,其实是错的

震惊,用了这么多年的 CPU 利用率,其实是错的 2018年12月22日 08:43:09 Linuxer_ 阅读数:50 https://blog.csdn.net/juS3Ve/article/d ...

随机推荐

- KubeEdge边缘计算在顺丰科技工业物联网中的实践

摘要:顺丰物联网平台负责人胡典钢为大家带来了 " 边缘计算在工业物联网中的应用实践与思考 " ,阐述了工业物联网的发展背景.整体架构设计以及边缘计算在此过程中承担的重要角色,并梳理 ...

- 如何给网页和代码做HTML加密?

如何给网页和代码做HTML加密? 本篇文章给大家谈谈html混淆加密在线,以及HTML在线加密对应的知识点,希望对各位有所帮助,不要忘了收藏本站喔. 如何给代码加密? 1.源代码加密软件推荐使 ...

- 使用阿里云镜像安装 Docker 服务

Docker从1.13版本之后采用时间线的方式作为版本号,分为社区版CE和企业版EE.社区版是免费提供给个人开发者和小型团体使用的,企业版会提供额外的收费服务,比如经过官方测试认证过的基础设施.容器. ...

- 消息驱动 —— SpringCloud Stream

Stream 简介 Spring Cloud Stream 是用于构建消息驱动的微服务应用程序的框架,提供了多种中间件的合理配置 Spring Cloud Stream 包含以下核心概念: Desti ...

- vivo智能活动中台-悟空系统建设之路

作者:来自 vivo 互联网悟空系统研发团队 本文根据冯伟.姜野老师在"2023 vivo开发者大会"现场演讲内容整理而成.[vivo互联网技术]公众号回复[2023 VDC]获取 ...

- PVE API创建虚拟机

度娘,谷歌都搜了一圈没有找到通过PVE API创建虚拟机的方式, 于是查官网自己试了试,部分代码抄的Sam Liu大佬的作业,感谢大佬. python代码如下: import requests # s ...

- 2、springboot创建多模块工程

系列导航 springBoot项目打jar包 1.springboot工程新建(单模块) 2.springboot创建多模块工程 3.springboot连接数据库 4.SpringBoot连接数据库 ...

- java进阶(25)--泛型

一.泛型基本概念 JDK5.0后新特性:Generic 1.不使用泛型举例

- Go 汇编学习笔记

0.前言 学习 Go 离不开看源码,源码又包含大量汇编代码,离开汇编是学不好 Go 的.同样,离开汇编去学习计算机是不完整的,汇编是基石,是离操作系统和硬件最近的一层. 虽然之前学过一点 Go 汇编, ...

- 容器网络原理分析:veth 和 network namespace

1. Liunx veth-pair 和 network namespace Docker 中容器的访问需要依赖 veth-pair 和 network namespace 等技术.network n ...