Getting Started with Flink

WordCount

Dependencies to add to the POM file:

<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-java_2.12</artifactId>
    <version>${flink.version}</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-table_2.12</artifactId>
    <version>${flink.version}</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-java</artifactId>
    <version>${flink.version}</version>
</dependency>
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-clients_2.12</artifactId>
    <version>${flink.version}</version>
</dependency>
<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-scala -->
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-scala_2.12</artifactId>
    <version>1.7.1</version>
</dependency>
<!-- https://mvnrepository.com/artifact/org.apache.flink/flink-streaming-scala -->
<dependency>
    <groupId>org.apache.flink</groupId>
    <artifactId>flink-streaming-scala_2.12</artifactId>
    <version>1.7.1</version>
</dependency>

Batch (offline) code:

Java version:

package flink;

import org.apache.flink.api.java.DataSet;
import org.apache.flink.api.java.ExecutionEnvironment;
import org.apache.flink.api.java.tuple.Tuple2;

public class WordExample {
    public static void main(String[] args) throws Exception {
        final ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

        // Build a data set from string elements
        DataSet<String> text = env.fromElements(
                "flink test",
                "I think I hear them. Stand, ho! Who's there?");

        // Split each line into words, group by the word (field 0), and sum the counts (field 1)
        DataSet<Tuple2<String, Integer>> wordCount = text.flatMap(new LineSplitter())
                .groupBy(0)
                .sum(1);

        wordCount.print();
    }
}

package flink;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.util.Collector;

public class LineSplitter implements FlatMapFunction<String, Tuple2<String, Integer>> {
    @Override
    public void flatMap(String line, Collector<Tuple2<String, Integer>> collector) throws Exception {
        // Emit (word, 1) for every space-separated token in the line
        for (String word : line.split(" ")) {
            collector.collect(new Tuple2<String, Integer>(word, 1));
        }
    }
}
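
To see what the flatMap, groupBy(0), sum(1) chain computes without starting Flink, the same split-group-sum logic can be sketched with plain Java streams. This is only an illustration, not Flink code: the class name PlainWordCount and the method count are hypothetical, and the sorted TreeMap is a choice made here so the printed order is stable (Flink's print() makes no such ordering promise).

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

public class PlainWordCount {
    // Splits each line on single spaces (as LineSplitter does) and counts every token.
    static Map<String, Integer> count(List<String> lines) {
        return lines.stream()
                // Equivalent of flatMap(new LineSplitter()): one line becomes many words
                .flatMap(line -> Arrays.stream(line.split(" ")))
                // Equivalent of groupBy(0).sum(1): start each word at 1 and add up duplicates
                .collect(Collectors.toMap(word -> word, word -> 1, Integer::sum, TreeMap::new));
    }

    public static void main(String[] args) {
        Map<String, Integer> counts = count(Arrays.asList(
                "flink test",
                "I think I hear them. Stand, ho! Who's there?"));
        counts.forEach((word, n) -> System.out.println("(" + word + "," + n + ")"));
    }
}
```

Because the split is on spaces only, punctuation stays attached to the words ("them." and "there?" are counted as written), which matches the behaviour of LineSplitter above.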

Scala version:

package flink

import org.apache.flink.api.scala._

object WordCountExample {
  def main(args: Array[String]): Unit = {
    val env = ExecutionEnvironment.getExecutionEnvironment

    val text = env.fromElements(
      "Who's there?",
      "I think I hear them. Stand, ho! Who's there?")

    // Lower-case each line, split on non-word characters, drop empty tokens, then count
    val counts = text
      .flatMap(_.toLowerCase().split("\\W+").filter(_.nonEmpty))
      .map((_, 1))
      .groupBy(0)
      .sum(1)

    counts.print()
  }
}

Streaming:

Java version:

package flink;

import org.apache.flink.api.common.functions.FlatMapFunction;
import org.apache.flink.api.common.functions.ReduceFunction;
import org.apache.flink.api.java.utils.ParameterTool;
import org.apache.flink.streaming.api.datastream.DataStream;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.api.windowing.time.Time;
import org.apache.flink.util.Collector;

public class WordCount {
    public static void main(String[] args) throws Exception {
        // The port to connect to, read from the --port argument
        final int port;
        try {
            final ParameterTool params = ParameterTool.fromArgs(args);
            port = params.getInt("port");
        } catch (Exception e) {
            System.out.println("No port specified. Please run 'WordCount --port <port>'");
            return;
        }

        // Get the execution environment
        final StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Get input data by connecting to the socket
        DataStream<String> text = env.socketTextStream("localhost", port, '\n');

        // Parse the data, group it, window it, and aggregate the counts
        DataStream<WordWithCount> windowCounts = text
                .flatMap(new FlatMapFunction<String, WordWithCount>() {
                    @Override
                    public void flatMap(String s, Collector<WordWithCount> collector) {
                        for (String word : s.split("\\s")) {
                            collector.collect(new WordWithCount(word, 1L));
                        }
                    }
                })
                .keyBy("word")
                // 10-second windows that slide every 5 seconds
                .timeWindow(Time.seconds(10), Time.seconds(5))
                .reduce(new ReduceFunction<WordWithCount>() {
                    @Override
                    public WordWithCount reduce(WordWithCount a, WordWithCount b) throws Exception {
                        return new WordWithCount(a.word, a.count + b.count);
                    }
                });

        // Print the result with a single thread, rather than in parallel
        windowCounts.print().setParallelism(1);

        env.execute("Socket Window WordCount");
    }
}

package flink;

public class WordWithCount {
    public String word;
    public long count;

    // No-argument constructor, required for Flink to treat this class as a POJO
    public WordWithCount() {
    }

    public WordWithCount(String word, long count) {
        this.word = word;
        this.count = count;
    }

    @Override
    public String toString() {
        return word + ":" + count;
    }
}

Scala version:

package flink

import org.apache.flink.api.java.utils.ParameterTool
import org.apache.flink.api.scala._
import org.apache.flink.streaming.api.scala.StreamExecutionEnvironment
import org.apache.flink.streaming.api.windowing.time.Time

object SocketWindowWordCount {

  case class WordWithCount(word: String, count: Long)

  def main(args: Array[String]): Unit = {
    // The port to connect to
    val port: Int = try {
      ParameterTool.fromArgs(args).getInt("port")
    } catch {
      case e: Exception => {
        System.err.println("No port specified. Please run 'SocketWindowWordCount --port <port>'")
        return
      }
    }

    // Get the execution environment
    val env: StreamExecutionEnvironment = StreamExecutionEnvironment.getExecutionEnvironment

    // Get input data by connecting to the socket
    val text = env.socketTextStream("localhost", port, '\n')

    // Parse the data, group it, window it, and aggregate the counts
    val windowCount = text
      .flatMap { w => w.split("\\s") }
      .map { w => WordWithCount(w, 1) }
      .keyBy("word")
      .timeWindow(Time.seconds(10), Time.seconds(5))
      .sum("count")

    // Print the results with a single thread, rather than in parallel
    windowCount.print().setParallelism(1)

    env.execute("Socket Window WordCount")
  }
}
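
Both streaming versions call timeWindow(Time.seconds(10), Time.seconds(5)), which asks for 10-second windows that start every 5 seconds, so each word lands in two overlapping windows. The following plain-Java sketch shows that assignment arithmetic. It is an illustration under stated assumptions rather than Flink's own code: the class SlidingWindowSketch and method windowStarts are hypothetical names, timestamps are non-negative milliseconds, and windows are assumed to be aligned to the epoch with no offset.

```java
import java.util.ArrayList;
import java.util.List;

public class SlidingWindowSketch {
    // Returns the start timestamps (in ms) of every sliding window that contains `timestamp`.
    // Assumes timestamp >= 0 and windows aligned to 0 (no offset).
    static List<Long> windowStarts(long timestamp, long size, long slide) {
        List<Long> starts = new ArrayList<>();
        // Latest window start at or before the timestamp
        long lastStart = timestamp - (timestamp % slide);
        // Walk backwards one slide at a time while the window still covers the timestamp
        for (long start = lastStart; start > timestamp - size; start -= slide) {
            starts.add(start);
        }
        return starts;
    }

    public static void main(String[] args) {
        long size = 10_000L;   // Time.seconds(10)
        long slide = 5_000L;   // Time.seconds(5)
        // An element arriving 12 seconds in belongs to two windows
        for (long start : windowStarts(12_000L, size, slide)) {
            System.out.println("[" + start + ", " + (start + size) + ")");
        }
        // prints:
        // [10000, 20000)
        // [5000, 15000)
    }
}
```

With size 10 s and slide 5 s, every element belongs to size / slide = 2 windows, which is why the job emits a count every 5 seconds covering the previous 10 seconds of input.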

Run the program, passing the port as an argument:

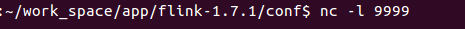

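In a terminal, use the nc command to simulate sending data to port 9999. Because socketTextStream connects to the port as a client, nc has to be started in listening mode (for example, nc -lk 9999 on most Linux systems) before the job runs.

Result:

Notes:

Be careful not to import the wrong packages: use the Java API imports for Java code and the Scala API imports for Scala code. For example, the Java program imports org.apache.flink.streaming.api.environment.StreamExecutionEnvironment, while the Scala program imports org.apache.flink.streaming.api.scala.StreamExecutionEnvironment. If you mix them up, the program will not run.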
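
If nc is not available, a small Java program can play the same role. The sketch below is a hypothetical stand-in, not part of the original setup: the class name LineServer and the helper forwardLines are made up for illustration, and the default port 9999 is taken from the step above. It listens on the port, waits for one client (the Flink job), and forwards every line typed on standard input, terminated by '\n' to match the delimiter passed to socketTextStream.

```java
import java.io.BufferedReader;
import java.io.IOException;
import java.io.InputStreamReader;
import java.io.OutputStreamWriter;
import java.io.Writer;
import java.net.ServerSocket;
import java.net.Socket;
import java.nio.charset.StandardCharsets;

public class LineServer {
    // Copies every line from `in` to `out`, ending each with '\n' and flushing
    // immediately so the receiver sees the line as soon as it is typed.
    static void forwardLines(BufferedReader in, Writer out) throws IOException {
        String line;
        while ((line = in.readLine()) != null) {
            out.write(line);
            out.write('\n');
            out.flush();
        }
    }

    public static void main(String[] args) throws IOException {
        // Assumption: default to 9999, the port used in this walkthrough
        int port = args.length > 0 ? Integer.parseInt(args[0]) : 9999;
        try (ServerSocket server = new ServerSocket(port);
             Socket client = server.accept(); // blocks until a client connects
             Writer out = new OutputStreamWriter(
                     client.getOutputStream(), StandardCharsets.UTF_8);
             BufferedReader stdin = new BufferedReader(
                     new InputStreamReader(System.in, StandardCharsets.UTF_8))) {
            forwardLines(stdin, out);
        }
    }
}
```

Start LineServer first, then launch the WordCount job with --port 9999, and type lines into the LineServer console.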
