Submitting Hadoop Cluster Jobs Remotely from Eclipse


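1 Introduction

Once the Hadoop high-availability platform is set up (see "Hadoop High-Availability Platform Setup"), the next step is to run jobs on the cluster. This article describes how to submit jobs to a remote Hadoop cluster from Eclipse.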

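2 Viewing files on a remote Hadoop cluster from Eclipse

2.1 Building the Hadoop Eclipse plugin

Files on a Hadoop cluster can be viewed through the web UI or the hadoop command line. To make it convenient to create, delete, modify, and browse cluster files from within Eclipse, we need to build the Hadoop Eclipse plugin. The steps are as follows: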

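① Environment setup

JDK: set JAVA_HOME and add its bin directory to PATH.

Ant: set ANT_HOME and add its bin directory to PATH.

Verify both in cmd (for example with java -version and ant -version).

② Software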

hadoop2x-eclipse-plugin-master https://github.com/winghc/hadoop2x-eclipse-plugin

hadoop-common-2.2.0-bin-master https://github.com/srccodes/hadoop-common-2.2.0-bin

hadoop-2.6.0

eclipse-jee-luna-SR2-win32-x86_64

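③ Build

Note: the paths below are the ones on my own machine. Adjust them to your installation rather than copying them verbatim.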

E:\>cd E:\hadoop\hadoop2x-eclipse-plugin-master\src\contrib\eclipse-plugin

E:\hadoop\hadoop2x-eclipse-plugin-master\src\contrib\eclipse-plugin>ant jar -Dversion=2.6. -Declipse.home=E:\eclipse -Dhadoop.home=E:\hadoop\hadoop-2.6.

Buildfile: E:\hadoop\hadoop2x-eclipse-plugin-master\src\contrib\eclipse-plugin\build.xml

check-contrib:

init:

[echo] contrib: eclipse-plugin

init-contrib:

ivy-probe-antlib:

ivy-init-antlib:

ivy-init:

[ivy:configure] :: Ivy 2.1. - :: http://ant.apache.org/ivy/ ::

[ivy:configure] :: loading settings :: file = E:\hadoop\hadoop2x-eclipse-plugin-master\ivy\ivysettings.xml

ivy-resolve-common:

ivy-retrieve-common:

[ivy:cachepath] DEPRECATED: 'ivy.conf.file' is deprecated, use 'ivy.settings.file' instead

[ivy:cachepath] :: loading settings :: file = E:\hadoop\hadoop2x-eclipse-plugin-master\ivy\ivysettings.xml

compile:

[echo] contrib: eclipse-plugin

[javac] E:\hadoop\hadoop2x-eclipse-plugin-master\src\contrib\eclipse-plugin\build.xml:: warning: 'includeantruntime' was not set, defaulting to build.sysclasspath=last; set to false for repeatable builds

jar:

BUILD SUCCESSFUL

Total time: seconds

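The build succeeds and produces the plugin jar file.

④ Copy that file into the plugins directory of Eclipse and restart Eclipse. The plugin should now show up.

2.2 Configuring the Hadoop options

Open Map/Reduce Locations: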

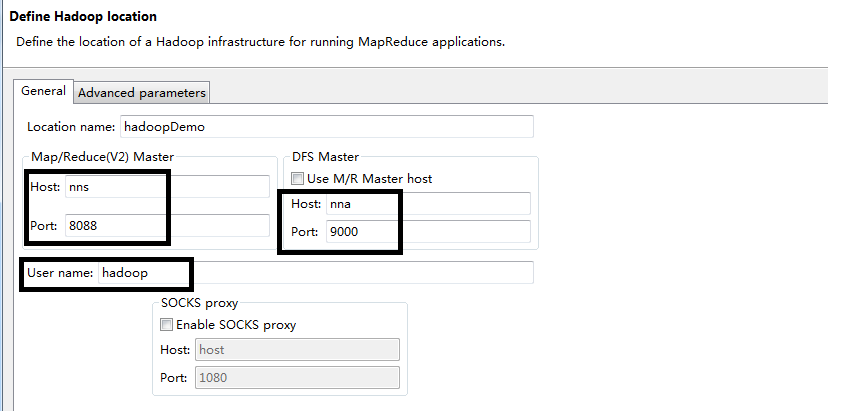

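Edit the Map/Reduce location settings:

Following the previous article, we set the user to hadoop and fill in the locations of the Active HDFS and the Active NM.

Once this is done, the HDFS files can be browsed from within Eclipse.

2.3 A simple HDFS example

We write a simple HDFS example that operates on Hadoop remotely.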

package com.diexun.cn.mapred;

import java.io.IOException;
import java.net.URI;
import java.net.URISyntaxException;

import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.fs.FSDataOutputStream;
import org.apache.hadoop.fs.FileSystem;
import org.apache.hadoop.fs.Path;

public class MR2Test {

    static final String INPUT_PATH = "hdfs://192.168.137.101:9000/hello";
    static final String OUTPUT_PATH = "hdfs://192.168.137.101:9000/output";

    public static void main(String[] args) throws IOException, URISyntaxException {
        Configuration conf = new Configuration();
        final FileSystem fileSystem = FileSystem.get(new URI(INPUT_PATH), conf);

        // Remove the output directory if it is left over from an earlier run.
        final Path outPath = new Path(OUTPUT_PATH);
        if (fileSystem.exists(outPath)) {
            fileSystem.delete(outPath, true);
        }

        // create() overwrites an existing file. Closing the stream (done here by
        // try-with-resources) is what makes sure the bytes are flushed to HDFS.
        try (FSDataOutputStream fsDataOutputStream = fileSystem.create(new Path(INPUT_PATH))) {
            fsDataOutputStream.writeBytes("welcome to here ...");
        }
    }
}

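Browsing HDFS from Eclipse shows that the hello file now contains "welcome to here ...".

3 Submitting jobs to a remote Hadoop cluster from Eclipse

Now for the main topic of this article. Create a new Map/Reduce Project, which pulls in a large number of jars. (Note: we normally develop in a Maven project, which makes the program easier to migrate and keeps it small. Later articles will build with Maven.) Then copy the bundled WordCount example into Eclipse.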

Configure the run arguments. (Note: use paths on the HDFS cluster. Local paths do not work.)
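Because only HDFS paths are accepted here, it can help to check the arguments before launching the job. The following is a minimal sketch and not part of the original WordCount example: HdfsArgCheck and isHdfsPath are hypothetical names, and the check only looks at the URI scheme.

```java
import java.net.URI;
import java.net.URISyntaxException;

/** Hypothetical helper for illustration; it is not part of Hadoop. */
class HdfsArgCheck {

    /** Returns true when the argument parses as a URI whose scheme is "hdfs". */
    static boolean isHdfsPath(String arg) {
        try {
            return "hdfs".equalsIgnoreCase(new URI(arg).getScheme());
        } catch (URISyntaxException e) {
            // A Windows path with backslashes is not a valid URI at all.
            return false;
        }
    }

    public static void main(String[] args) {
        String[] samples = {
            "hdfs://192.168.137.101:9000/hello", // path on the cluster
            "E:\\hadoop\\input",                 // local Windows path
            "/user/hadoop/input"                 // no scheme given
        };
        for (String sample : samples) {
            System.out.println(sample + " -> " + isHdfsPath(sample));
        }
    }
}
```

Only the first sample passes the check. The other two are the kind of argument the note above warns against.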

Run it. The result is as follows:

log4j:WARN No appenders could be found for logger (org.apache.hadoop.metrics2.lib.MutableMetricsFactory).

log4j:WARN Please initialize the log4j system properly.

log4j:WARN See http://logging.apache.org/log4j/1.2/faq.html#noconfig for more info.

Exception in thread "main" java.lang.UnsatisfiedLinkError: org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Ljava/lang/String;I)Z

at org.apache.hadoop.io.nativeio.NativeIO$Windows.access0(Native Method)

at org.apache.hadoop.io.nativeio.NativeIO$Windows.access(NativeIO.java:557)

at org.apache.hadoop.fs.FileUtil.canRead(FileUtil.java:977)

at org.apache.hadoop.util.DiskChecker.checkAccessByFileMethods(DiskChecker.java:187)

at org.apache.hadoop.util.DiskChecker.checkDirAccess(DiskChecker.java:174)

at org.apache.hadoop.util.DiskChecker.checkDir(DiskChecker.java:108)

at org.apache.hadoop.fs.LocalDirAllocator$AllocatorPerContext.confChanged(LocalDirAllocator.java:285)

at org.apache.hadoop.fs.LocalDirAllocator$AllocatorPerContext.getLocalPathForWrite(LocalDirAllocator.java:344)

at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:150)

at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:131)

at org.apache.hadoop.fs.LocalDirAllocator.getLocalPathForWrite(LocalDirAllocator.java:115)

at org.apache.hadoop.mapred.LocalDistributedCacheManager.setup(LocalDistributedCacheManager.java:131)

at org.apache.hadoop.mapred.LocalJobRunner$Job.<init>(LocalJobRunner.java:163)

at org.apache.hadoop.mapred.LocalJobRunner.submitJob(LocalJobRunner.java:731)

at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:536)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1296)

at org.apache.hadoop.mapreduce.Job$10.run(Job.java:1293)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:415)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1628)

at org.apache.hadoop.mapreduce.Job.submit(Job.java:1293)

at org.apache.hadoop.mapreduce.Job.waitForCompletion(Job.java:1314)

at WordCount.main(WordCount.java:76)

To make the later output easier to read, first add a log4j.properties file:

log4j.rootLogger=DEBUG,stdout,R

log4j.appender.stdout=org.apache.log4j.ConsoleAppender

log4j.appender.stdout.layout=org.apache.log4j.PatternLayout

log4j.appender.stdout.layout.ConversionPattern=%5p - %m%n

log4j.appender.R=org.apache.log4j.RollingFileAppender

log4j.appender.R.File=mapreduce_test.log

log4j.appender.R.MaxFileSize=1MB

log4j.appender.R.MaxBackupIndex=1

log4j.appender.R.layout=org.apache.log4j.PatternLayout

log4j.appender.R.layout.ConversionPattern=%p %t %c - %m%n

log4j.logger.com.codefutures=INFO

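According to the error, the failure comes from the statement return access0(path, desiredAccess.accessRight()); in NativeIO.java. Comment that statement out and return true instead. This is a workaround that skips the native access check on Windows.

After modifying the source, create a package in the project with the same name as the Apache one (org.apache.hadoop.io.nativeio) and put the modified class there. The class in the project takes precedence over the one in the Apache jar.

Run it again: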

INFO - Job job_local401325246_0001 completed successfully

DEBUG - PrivilegedAction as:wangxiaolong (auth:SIMPLE) from:org.apache.hadoop.mapreduce.Job.getCounters(Job.java:764)

INFO - Counters: 38

File System Counters

FILE: Number of bytes read=16290

FILE: Number of bytes written=545254

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=38132

HDFS: Number of bytes written=6834

HDFS: Number of read operations=15

HDFS: Number of large read operations=0

HDFS: Number of write operations=4

Map-Reduce Framework

Map input records=174

Map output records=1139

Map output bytes=23459

Map output materialized bytes=7976

Input split bytes=99

Combine input records=1139

Combine output records=286

Reduce input groups=286

Reduce shuffle bytes=7976

Reduce input records=286

Reduce output records=286

Spilled Records=572

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=18

CPU time spent (ms)=0

Physical memory (bytes) snapshot=0

Virtual memory (bytes) snapshot=0

Total committed heap usage (bytes)=468713472

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=19066

File Output Format Counters

Bytes Written=6834

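The job did complete successfully, but note the line "INFO - Job job_local401325246_0001 completed successfully": the job_local prefix means the job was run by the LocalJobRunner on the local machine.

http://nns:8088/cluster/apps does not list the job either, which confirms that it was never submitted to the cluster.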
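The job ID alone is enough to tell where a job ran. IDs issued by the LocalJobRunner (which MapReduce uses while mapreduce.framework.name is left at its default value, local) start with job_local, whereas IDs issued by YARN look like job_1438912697979_0023. A minimal sketch of that check follows. JobIdCheck and ranLocally are hypothetical names introduced here for illustration.

```java
/** Hypothetical helper for illustration; it is not part of Hadoop. */
class JobIdCheck {

    /** Returns true when the job ID has the prefix used by the LocalJobRunner. */
    static boolean ranLocally(String jobId) {
        return jobId.startsWith("job_local");
    }

    public static void main(String[] args) {
        // Both IDs are taken from the log output in this article.
        System.out.println(ranLocally("job_local401325246_0001")); // local run
        System.out.println(ranLocally("job_1438912697979_0023"));  // cluster run
    }
}
```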

Add the cluster configuration files to the project:

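Download the configuration files directly from the cluster. (Note: the value of "yarn.resourcemanager.ha.id" in yarn-site.xml differs from node to node.) So which copy should we download?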
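To see which copy is which, one option is to read that property out of each downloaded file. The sketch below uses only the JDK's XML parser and relies on the standard Hadoop layout of configuration / property / name / value. SiteXmlReader is a hypothetical name, and the sample value rm1 is made up for the demonstration, not taken from the cluster in this article.

```java
import java.io.ByteArrayInputStream;
import java.io.InputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

/** Hypothetical helper for illustration; it is not part of Hadoop. */
class SiteXmlReader {

    /** Returns the value of the named property, or null when it is absent. */
    static String readProperty(InputStream xml, String name) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder().parse(xml);
            NodeList properties = doc.getElementsByTagName("property");
            for (int i = 0; i < properties.getLength(); i++) {
                Element property = (Element) properties.item(i);
                if (name.equals(childText(property, "name"))) {
                    return childText(property, "value");
                }
            }
            return null;
        } catch (Exception e) {
            throw new IllegalArgumentException("not a readable site XML file", e);
        }
    }

    /** Text of the first child element with the given tag, or null. */
    private static String childText(Element parent, String tag) {
        NodeList nodes = parent.getElementsByTagName(tag);
        return nodes.getLength() == 0 ? null : nodes.item(0).getTextContent().trim();
    }

    /** Wraps an XML string as a stream so the demo needs no file on disk. */
    static InputStream stream(String xml) {
        return new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8));
    }

    public static void main(String[] args) {
        // Made-up sample content; a real run would open the downloaded yarn-site.xml.
        String sample = "<configuration><property>"
                + "<name>yarn.resourcemanager.ha.id</name><value>rm1</value>"
                + "</property></configuration>";
        System.out.println(readProperty(stream(sample), "yarn.resourcemanager.ha.id"));
    }
}
```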

Because the Active RM in this cluster is nns, we download yarn-site.xml from nns. Run:

Error: java.lang.RuntimeException: java.lang.ClassNotFoundException: Class com.diexun.cn.mapred.WordCount$TokenizerMapper not found

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:)

at org.apache.hadoop.mapreduce.task.JobContextImpl.getMapperClass(JobContextImpl.java:)

at org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:)

at org.apache.hadoop.mapred.MapTask.run(MapTask.java:)

at org.apache.hadoop.mapred.YarnChild$.run(YarnChild.java:)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:)

at org.apache.hadoop.mapred.YarnChild.main(YarnChild.java:)

Caused by: java.lang.ClassNotFoundException: Class com.diexun.cn.mapred.WordCount$TokenizerMapper not found

at org.apache.hadoop.conf.Configuration.getClassByName(Configuration.java:)

at org.apache.hadoop.conf.Configuration.getClass(Configuration.java:)

... more

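The task containers on the cluster cannot find the job's classes, because the compiled code exists only on the local machine. We package the code into a jar and register it in the configuration with conf.set("mapred.jar", "**.jar"); (mapred.jar is the older name of mapreduce.job.jar, and Hadoop 2 accepts both). Then run the job again.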
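The packaging step can be done from Eclipse's export dialog or from any build tool. As one possible way, assuming Java 8 or later, the sketch below packs a directory of compiled classes into a jar using only the JDK. JobJarBuilder is a hypothetical name, and the demo uses a temporary directory with a dummy WordCount.class instead of a real build output.

```java
import java.io.IOException;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.jar.Attributes;
import java.util.jar.JarEntry;
import java.util.jar.JarFile;
import java.util.jar.JarOutputStream;
import java.util.jar.Manifest;
import java.util.stream.Collectors;
import java.util.stream.Stream;

/** Hypothetical helper for illustration; it is not part of Hadoop. */
class JobJarBuilder {

    /** Packs every regular file under classesDir into jarFile (jarFile must lie outside classesDir). */
    static void build(Path classesDir, Path jarFile) {
        Manifest manifest = new Manifest();
        manifest.getMainAttributes().put(Attributes.Name.MANIFEST_VERSION, "1.0");
        try (Stream<Path> walk = Files.walk(classesDir);
             OutputStream out = Files.newOutputStream(jarFile);
             JarOutputStream jar = new JarOutputStream(out, manifest)) {
            List<Path> files = walk.filter(Files::isRegularFile).sorted().collect(Collectors.toList());
            for (Path file : files) {
                // Jar entry names use forward slashes, even when built on Windows.
                String name = classesDir.relativize(file).toString().replace('\\', '/');
                jar.putNextEntry(new JarEntry(name));
                Files.copy(file, jar);
                jar.closeEntry();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Builds a jar from a throwaway class directory and returns its entry names. */
    static List<String> demo() {
        try {
            Path classes = Files.createTempDirectory("classes");
            Path pkg = Files.createDirectories(classes.resolve("com/diexun/cn/mapred"));
            Files.write(pkg.resolve("WordCount.class"), new byte[] {1, 2, 3}); // dummy content
            Path jarFile = Files.createTempFile("wordcount", ".jar");
            build(classes, jarFile);
            try (JarFile jar = new JarFile(jarFile.toFile())) {
                return jar.stream().map(JarEntry::getName).collect(Collectors.toList());
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        demo().forEach(System.out::println);
    }
}
```

In a real project, classesDir would be the compiler output directory of the Eclipse project, and the resulting jar path is the value to pass to conf.set. Running the job again with the jar registered produces the output that follows.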

Exception message: /bin/bash: line : fg: no job control

Stack trace: ExitCodeException exitCode=: /bin/bash: line : fg: no job control

at org.apache.hadoop.util.Shell.runCommand(Shell.java:)

at org.apache.hadoop.util.Shell.run(Shell.java:)

at org.apache.hadoop.util.Shell$ShellCommandExecutor.execute(Shell.java:)

at org.apache.hadoop.yarn.server.nodemanager.DefaultContainerExecutor.launchContainer(DefaultContainerExecutor.java:)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:)

at org.apache.hadoop.yarn.server.nodemanager.containermanager.launcher.ContainerLaunch.call(ContainerLaunch.java:)

at java.util.concurrent.FutureTask.run(FutureTask.java:)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:)

at java.lang.Thread.run(Thread.java:)

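The error above is caused by the platform difference: the job is submitted from Windows, while the containers are launched by /bin/bash on the cluster. On Hadoop 2.2 to 2.5 the fix requires patching and recompiling the source (omitted here). On Hadoop 2.6 it is enough to add a setting, either conf.set("mapreduce.app-submission.cross-platform", "true"); in the code or the same property in mapred-site.xml. Then run the job again.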
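A likely mechanism behind the message, stated here as an assumption because the truncated log does not show the launch command: a Windows client writes environment variables into the container launch command in Windows syntax, such as %JAVA_HOME%, and bash on the NodeManager reads a word starting with % as a job-control reference, hence "fg: no job control". With the cross-platform setting the client emits neutral placeholders of the form {{JAVA_HOME}} that the NodeManager expands for its own operating system. The sketch below only illustrates the three spellings. LaunchCommandSketch and its methods are hypothetical names, not Hadoop APIs.

```java
/** Hypothetical illustration; it does not call or reproduce Hadoop code. */
class LaunchCommandSketch {

    /** How a shell on the submitting platform spells an environment variable. */
    static String nativeRef(String variable, boolean windowsClient) {
        return windowsClient ? "%" + variable + "%" : "$" + variable;
    }

    /** Platform-neutral placeholder that is expanded later on the cluster node. */
    static String crossPlatformRef(String variable) {
        return "{{" + variable + "}}";
    }

    /** The java executable as it would appear in the container launch command. */
    static String javaCommand(boolean windowsClient, boolean crossPlatform) {
        String javaHome = crossPlatform
                ? crossPlatformRef("JAVA_HOME")
                : nativeRef("JAVA_HOME", windowsClient);
        return javaHome + "/bin/java";
    }

    public static void main(String[] args) {
        System.out.println(javaCommand(true, false));  // Windows client, setting off
        System.out.println(javaCommand(false, false)); // Linux client, setting off
        System.out.println(javaCommand(true, true));   // Windows client, setting on
    }
}
```

Only the first spelling is a problem for bash, which matches the fact that the error appears when submitting from Windows. With the setting in place, the rerun logs the following.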

INFO - Job job_1438912697979_0023 completed successfully

DEBUG - PrivilegedAction as:wangxiaolong (auth:SIMPLE) from:org.apache.hadoop.mapreduce.Job.getCounters(Job.java:)

DEBUG - IPC Client () connection to dn2/192.168.137.104: from wangxiaolong sending #

DEBUG - IPC Client () connection to dn2/192.168.137.104: from wangxiaolong got value #

DEBUG - Call: getCounters took 139ms

INFO - Counters:

File System Counters

FILE: Number of bytes read=

FILE: Number of bytes written=

FILE: Number of read operations=

FILE: Number of large read operations=

FILE: Number of write operations=

HDFS: Number of bytes read=

HDFS: Number of bytes written=

HDFS: Number of read operations=

HDFS: Number of large read operations=

HDFS: Number of write operations=

Job Counters

Launched map tasks=

Launched reduce tasks=

Data-local map tasks=

Total time spent by all maps in occupied slots (ms)=

Total time spent by all reduces in occupied slots (ms)=

Total time spent by all map tasks (ms)=

Total time spent by all reduce tasks (ms)=

Total vcore-seconds taken by all map tasks=

Total vcore-seconds taken by all reduce tasks=

Total megabyte-seconds taken by all map tasks=

Total megabyte-seconds taken by all reduce tasks=

Map-Reduce Framework

Map input records=

Map output records=

Map output bytes=

Map output materialized bytes=

Input split bytes=

Combine input records=

Combine output records=

Reduce input groups=

Reduce shuffle bytes=

Reduce input records=

Reduce output records=

Spilled Records=

Shuffled Maps =

Failed Shuffles=

Merged Map outputs=

GC time elapsed (ms)=

CPU time spent (ms)=

Physical memory (bytes) snapshot=

Virtual memory (bytes) snapshot=

Total committed heap usage (bytes)=

Shuffle Errors

BAD_ID=

CONNECTION=

IO_ERROR=

WRONG_LENGTH=

WRONG_MAP=

WRONG_REDUCE=

File Input Format Counters

Bytes Read=

File Output Format Counters

Bytes Written=

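At this point the job has been successfully submitted to the cluster and executed there.

Sometimes we want a job to run as a specific user. (Note: when dfs.permissions.enabled is true, user permission problems come up frequently.) The user can be set in the VM arguments of the run configuration, and the job's submitter then becomes the configured user.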
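The article does not say which VM argument is used. A common choice with simple authentication is -DHADOOP_USER_NAME=hadoop, since Hadoop looks up HADOOP_USER_NAME first in the environment, then in the JVM system properties, and otherwise falls back to the operating-system user. The sketch below mimics only that lookup order. SubmitUserSketch is a hypothetical name and the code does not call Hadoop.

```java
import java.util.Collections;
import java.util.Map;
import java.util.Properties;

/** Hypothetical illustration of the lookup order; it is not Hadoop code. */
class SubmitUserSketch {

    static final String KEY = "HADOOP_USER_NAME";

    /** Environment variable first, then system property, then the OS user. */
    static String resolve(Map<String, String> env, Properties systemProperties, String osUser) {
        String fromEnv = env.get(KEY);
        if (fromEnv != null) {
            return fromEnv;
        }
        String fromProperty = systemProperties.getProperty(KEY);
        return fromProperty != null ? fromProperty : osUser;
    }

    public static void main(String[] args) {
        // Simulates launching with the VM argument -DHADOOP_USER_NAME=hadoop.
        Properties vmArguments = new Properties();
        vmArguments.setProperty(KEY, "hadoop");
        Map<String, String> noEnv = Collections.<String, String>emptyMap();

        System.out.println(resolve(noEnv, vmArguments, "wangxiaolong"));      // hadoop
        System.out.println(resolve(noEnv, new Properties(), "wangxiaolong")); // wangxiaolong
    }
}
```

The second call corresponds to the earlier runs in this article, where the log shows the local Windows account (as:wangxiaolong) because nothing overrode it.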

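4 Summary

This article covered building the Hadoop Eclipse plugin and submitting jobs to a remote Hadoop cluster. When building the plugin, make sure the paths match where the software is installed. When submitting jobs remotely, watch for the differences between local and HDFS file paths, loading the right configuration files, running as a specific user, and the cross-platform exception.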

