kubebuilder实战之六:构建部署运行

欢迎访问我的GitHub

https://github.com/zq2599/blog_demos

内容:所有原创文章分类汇总及配套源码,涉及Java、Docker、Kubernetes、DevOPS等;

系列文章链接

- kubebuilder实战之一:准备工作

- kubebuilder实战之二:初次体验kubebuilder

- kubebuilder实战之三:基础知识速览

- kubebuilder实战之四:operator需求说明和设计

- kubebuilder实战之五:operator编码

- kubebuilder实战之六:构建部署运行

- kubebuilder实战之七:webhook

- kubebuilder实战之八:知识点小记

本篇概览

- 作为《kubebuilder实战》系列的第六篇,前面已完成了编码,现在到了验证功能的环节,请确保您的docker和kubernetes环境正常,然后咱们一起完成以下操作:

- 部署CRD

- 本地运行Controller

- 通过yaml文件新建elasticweb资源对象

- 通过日志和kubectl命令验证elasticweb功能是否正常

- 浏览器访问web,验证业务服务是否正常

- 修改singlePodQPS,看elasticweb是否自动调整pod数量

- 修改totalQPS,看elasticweb是否自动调整pod数

- 删除elasticweb,看相关的service和deployment被自动删除

- 构建Controller镜像,在kubernetes运行此Controller,验证上述功能是否正常

- 看似简单的部署验证操作,零零散散加起来居然有这么多...好吧不感慨了,立即开始吧;

部署CRD

- 从控制台进入Makefile所在目录,执行命令make install,即可将CRD部署到kubernetes:

zhaoqin@zhaoqindeMBP-2 elasticweb % make install

/Users/zhaoqin/go/bin/controller-gen "crd:trivialVersions=true" rbac:roleName=manager-role webhook paths="./..." output:crd:artifacts:config=config/crd/bases

kustomize build config/crd | kubectl apply -f -

Warning: apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition

customresourcedefinition.apiextensions.k8s.io/elasticwebs.elasticweb.com.bolingcavalry configured

从上述内容可见,实际上执行的操作是用kustomize将config/crd下的yaml资源合并后在kubernetes进行创建;

可以用命令kubectl api-versions验证CRD部署是否成功:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl api-versions|grep elasticweb

elasticweb.com.bolingcavalry/v1

本地运行Controller

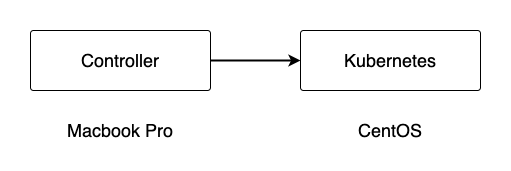

- 先尝试用最简单的方式来验证Controller的功能,如下图,Macbook电脑是我的开发环境,直接用elasticweb工程中的Makefile,可以将Controller的代码在本地运行起来里面:

- 进入Makefile文件所在目录,执行命令make run即可编译运行controller:

zhaoqin@zhaoqindeMBP-2 elasticweb % pwd

/Users/zhaoqin/github/blog_demos/kubebuilder/elasticweb

zhaoqin@zhaoqindeMBP-2 elasticweb % make run

/Users/zhaoqin/go/bin/controller-gen object:headerFile="hack/boilerplate.go.txt" paths="./..."

go fmt ./...

go vet ./...

/Users/zhaoqin/go/bin/controller-gen "crd:trivialVersions=true" rbac:roleName=manager-role webhook paths="./..." output:crd:artifacts:config=config/crd/bases

go run ./main.go

2021-02-20T20:46:16.774+0800 INFO controller-runtime.metrics metrics server is starting to listen {"addr": ":8080"}

2021-02-20T20:46:16.774+0800 INFO setup starting manager

2021-02-20T20:46:16.775+0800 INFO controller-runtime.controller Starting EventSource {"controller": "elasticweb", "source": "kind source: /, Kind="}

2021-02-20T20:46:16.776+0800 INFO controller-runtime.manager starting metrics server {"path": "/metrics"}

2021-02-20T20:46:16.881+0800 INFO controller-runtime.controller Starting Controller {"controller": "elasticweb"}

2021-02-20T20:46:16.881+0800 INFO controller-runtime.controller Starting workers {"controller": "elasticweb", "worker count": 1}

新建elasticweb资源对象

负责处理elasticweb的Controller已经运行起来了,接下来就开始创建elasticweb资源对象吧,用yaml文件来创建;

在config/samples目录下,kubebuilder为咱们创建了demo文件elasticweb_v1_elasticweb.yaml,不过这里面spec的内容不是咱们定义的那四个字段,需要改成以下内容:

apiVersion: v1

kind: Namespace

metadata:

name: dev

labels:

name: dev

---

apiVersion: elasticweb.com.bolingcavalry/v1

kind: ElasticWeb

metadata:

namespace: dev

name: elasticweb-sample

spec:

# Add fields here

image: tomcat:8.0.18-jre8

port: 30003

singlePodQPS: 500

totalQPS: 600

- 对上述配置的几个参数做如下说明:

- 使用的namespace为dev

- 本次测试部署的应用为tomcat

- service使用宿主机的30003端口暴露tomcat的服务

- 假设单个pod能支撑500QPS,外部请求的QPS为600

- 执行命令kubectl apply -f config/samples/elasticweb_v1_elasticweb.yaml,即可在kubernetes创建elasticweb实例:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl apply -f config/samples/elasticweb_v1_elasticweb.yaml

namespace/dev created

elasticweb.elasticweb.com.bolingcavalry/elasticweb-sample created

- 去controller的窗口发现打印了不少日志,通过分析日志发现Reconcile方法执行了两次,第一执行时创建了deployment和service等资源:

2021-02-21T10:03:57.108+0800 INFO controllers.ElasticWeb 1. start reconcile logic {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.108+0800 INFO controllers.ElasticWeb 3. instance : Image [tomcat:8.0.18-jre8], Port [30003], SinglePodQPS [500], TotalQPS [600], RealQPS [nil] {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.210+0800 INFO controllers.ElasticWeb 4. deployment not exists {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.313+0800 INFO controllers.ElasticWeb set reference {"func": "createService"}

2021-02-21T10:03:57.313+0800 INFO controllers.ElasticWeb start create service {"func": "createService"}

2021-02-21T10:03:57.364+0800 INFO controllers.ElasticWeb create service success {"func": "createService"}

2021-02-21T10:03:57.365+0800 INFO controllers.ElasticWeb expectReplicas [2] {"func": "createDeployment"}

2021-02-21T10:03:57.365+0800 INFO controllers.ElasticWeb set reference {"func": "createDeployment"}

2021-02-21T10:03:57.365+0800 INFO controllers.ElasticWeb start create deployment {"func": "createDeployment"}

2021-02-21T10:03:57.382+0800 INFO controllers.ElasticWeb create deployment success {"func": "createDeployment"}

2021-02-21T10:03:57.382+0800 INFO controllers.ElasticWeb singlePodQPS [500], replicas [2], realQPS[1000] {"func": "updateStatus"}

2021-02-21T10:03:57.407+0800 DEBUG controller-runtime.controller Successfully Reconciled {"controller": "elasticweb", "request": "dev/elasticweb-sample"}

2021-02-21T10:03:57.407+0800 INFO controllers.ElasticWeb 1. start reconcile logic {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.407+0800 INFO controllers.ElasticWeb 3. instance : Image [tomcat:8.0.18-jre8], Port [30003], SinglePodQPS [500], TotalQPS [600], RealQPS [1000] {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.407+0800 INFO controllers.ElasticWeb 9. expectReplicas [2], realReplicas [2] {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.407+0800 INFO controllers.ElasticWeb 10. return now {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T10:03:57.407+0800 DEBUG controller-runtime.controller Successfully Reconciled {"controller": "elasticweb", "request": "dev/elasticweb-sample"}

- 再用kubectl get命令详细检查资源对象,一切符合预期,elasticweb、service、deployment、pod都是正常的:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl apply -f config/samples/elasticweb_v1_elasticweb.yaml

namespace/dev created

elasticweb.elasticweb.com.bolingcavalry/elasticweb-sample created

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get elasticweb -n dev

NAME AGE

elasticweb-sample 35s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get service -n dev

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticweb-sample NodePort 10.107.177.158 <none> 8080:30003/TCP 41s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get deployment -n dev

NAME READY UP-TO-DATE AVAILABLE AGE

elasticweb-sample 2/2 2 2 46s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

elasticweb-sample-56fc5848b7-l5thk 1/1 Running 0 50s

elasticweb-sample-56fc5848b7-lqjk5 1/1 Running 0 50s

浏览器验证业务功能

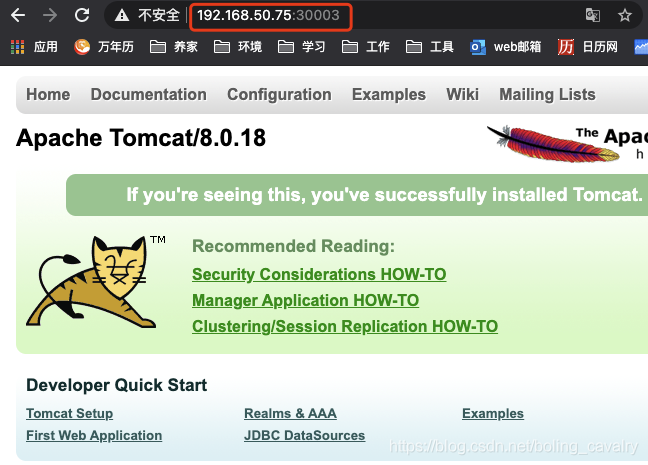

- 本次部署操作使用的docker镜像是tomcat,验证起来非常简单,打开默认页面能见到猫就证明tomcat启动成功了,我这kubernetes宿主机的IP地址是192.168.50.75,于是用浏览器访问http://192.168.50.75:30003,如下图,业务功能正常:

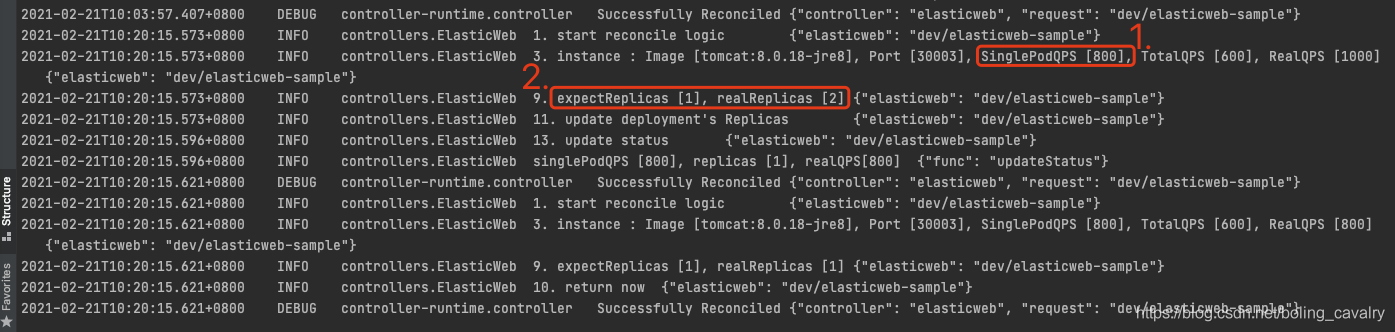

修改单个Pod的QPS

如果自身优化,或者外界依赖变化(如缓存、数据库扩容),这些都可能导致当前服务的QPS提升,假设单个Pod的QPS从500提升到了800,看看咱们的Operator能不能自动做出调整(总QPS是600,因此pod数应该从2降到1)

在config/samples/目录下新增名为update_single_pod_qps.yaml的文件,内容如下:

spec:

singlePodQPS: 800

- 执行以下命令,即可将单个Pod的QPS从500更新为800(注意,参数type很重要别漏了):

kubectl patch elasticweb elasticweb-sample \

-n dev \

--type merge \

--patch "$(cat config/samples/update_single_pod_qps.yaml)"

- 此时去看controller日志,如下图,红框1表示spec已经更新,红框2则表示用最新的参数计算出来的pod数量,符合预期:

- 用kubectl get命令检查pod,可见已经降到1个了:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

elasticweb-sample-56fc5848b7-l5thk 1/1 Running 0 30m

- 记得用浏览器检查tomcat是否正常;

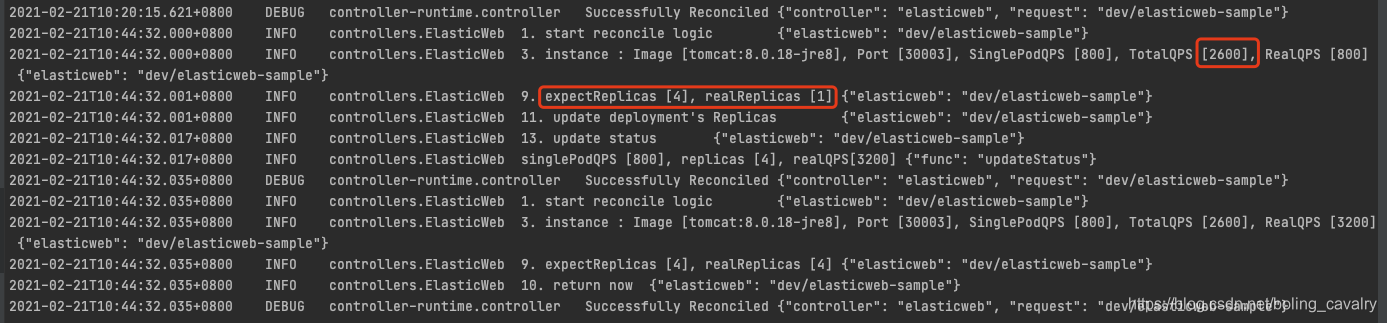

修改总QPS

外部QPS也在频繁变化中,咱们的operator也需要根据总QPS及时调节pod实例,以确保整体服务质量,接下来咱们就修改总QPS,看operator是否生效:

在config/samples/目录下新增名为update_total_qps.yaml的文件,内容如下:

spec:

totalQPS: 2600

- 执行以下命令,即可将总QPS从600更新为2600(注意,参数type很重要别漏了):

kubectl patch elasticweb elasticweb-sample \

-n dev \

--type merge \

--patch "$(cat config/samples/update_total_qps.yaml)"

- 此时去看controller日志,如下图,红框1表示spec已经更新,红框2则表示用最新的参数计算出来的pod数量,符合预期:

- 用kubectl get命令检查pod,可见已经增长到4个,4个pd的能支撑的QPS为3200,满足了当前2600的要求:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

elasticweb-sample-56fc5848b7-8n7tq 1/1 Running 0 8m22s

elasticweb-sample-56fc5848b7-f2lpb 1/1 Running 0 8m22s

elasticweb-sample-56fc5848b7-l5thk 1/1 Running 0 48m

elasticweb-sample-56fc5848b7-q8p5f 1/1 Running 0 8m22s

- 记得用浏览器检查tomcat是否正常;

- 聪明的您一定会觉得用这个方法来调节pod数太low了,呃...您说得没错确实low,但您可以自己开发一个应用,收到当前QPS后自动调用client-go去修改elasticweb的totalQPS,让operator及时调整pod数,这也勉强算自动调节了......吧

删除验证

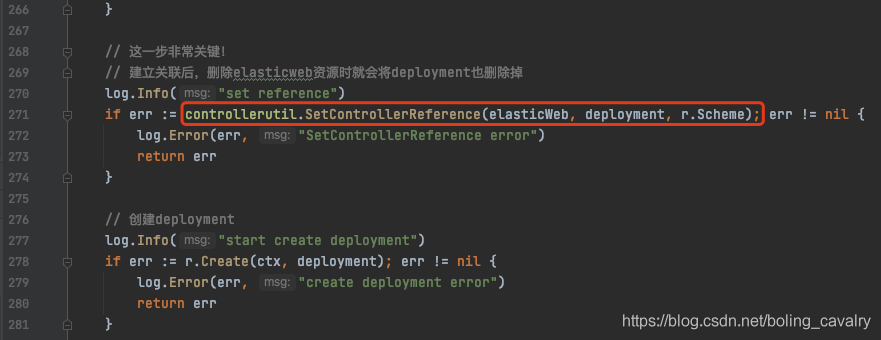

- 目前整个dev这个namespace下有service、deployment、pod、elasticweb这些资源对象,如果要全部删除,只需删除elasticweb即可,因为service和deployment都和elasticweb建立的关联关系,代码如下图红框:

- 执行删除elasticweb的命令:

kubectl delete elasticweb elasticweb-sample -n dev

- 再去查看其他资源,都被自动删除了:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl delete elasticweb elasticweb-sample -n dev

elasticweb.elasticweb.com.bolingcavalry "elasticweb-sample" deleted

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

elasticweb-sample-56fc5848b7-9lcww 1/1 Terminating 0 45s

elasticweb-sample-56fc5848b7-n7p7f 1/1 Terminating 0 45s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

elasticweb-sample-56fc5848b7-n7p7f 0/1 Terminating 0 73s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

No resources found in dev namespace.

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get deployment -n dev

No resources found in dev namespace.

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get service -n dev

No resources found in dev namespace.

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get namespace dev

NAME STATUS AGE

dev Active 97s

构建镜像

- 前面咱们在开发环境将controller运行起来尝试了所有功能,在实际生产环境中,controller并非这样独立于kubernetes之外,而是以pod的状态运行在kubernetes之中,接下来咱们尝试将controller代码编译构建成docker镜像,再在kubernetes上运行起来;

- 要做的第一件事,就是在前面的controller控制台上执行Ctrl+C,把那个controller停掉;

- 这里有个要求,就是您要有个kubernetes可以访问的镜像仓库,例如局域网内的Harbor,或者公共的hub.docker.com,我这为了操作方便选择了hub.docker.com,使用它的前提是拥有hub.docker.com的注册帐号;

- 在kubebuilder电脑上,打开一个控制台,执行docker login命令登录,根据提示输入hub.docker.com的帐号和密码,这样就可以在当前控制台上执行docker push命令将镜像推送到hub.docker.com上了(这个网站的网络很差,可能要登录好几次才能成功);

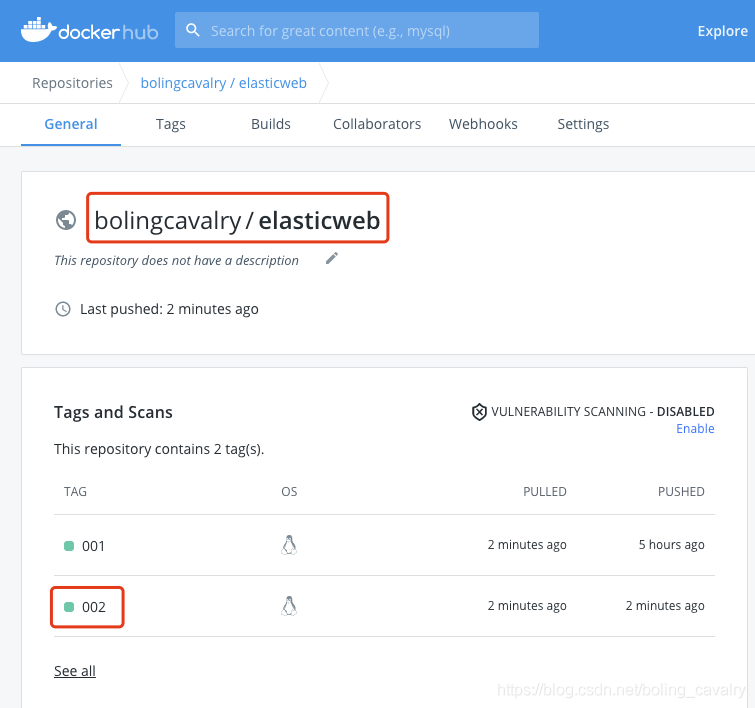

- 执行以下命令构建docker镜像并推送到hub.docker.com,镜像名为bolingcavalry/elasticweb:002:

make docker-build docker-push IMG=bolingcavalry/elasticweb:002

- hub.docker.com的网络状况不是一般的差,kubebuilder电脑上的docker一定要设置镜像加速,上述命令如果遭遇超时失败,请重试几次,此外,构建过程中还会下载诸多go模块的依赖,也需要您耐心等待,也很容易遇到网络问题,需要多次重试,所以,最好是使用局域网内搭建的Habor服务;

- 最终,命令执行成功后输出如下:

zhaoqin@zhaoqindeMBP-2 elasticweb % make docker-build docker-push IMG=bolingcavalry/elasticweb:002

/Users/zhaoqin/go/bin/controller-gen object:headerFile="hack/boilerplate.go.txt" paths="./..."

go fmt ./...

go vet ./...

/Users/zhaoqin/go/bin/controller-gen "crd:trivialVersions=true" rbac:roleName=manager-role webhook paths="./..." output:crd:artifacts:config=config/crd/bases

go test ./... -coverprofile cover.out

? elasticweb [no test files]

? elasticweb/api/v1 [no test files]

ok elasticweb/controllers 8.287s coverage: 0.0% of statements

docker build . -t bolingcavalry/elasticweb:002

[+] Building 146.8s (17/17) FINISHED

=> [internal] load build definition from Dockerfile 0.1s

=> => transferring dockerfile: 37B 0.0s

=> [internal] load .dockerignore 0.0s

=> => transferring context: 2B 0.0s

=> [internal] load metadata for gcr.io/distroless/static:nonroot 1.8s

=> [internal] load metadata for docker.io/library/golang:1.13 0.7s

=> [builder 1/9] FROM docker.io/library/golang:1.13@sha256:8ebb6d5a48deef738381b56b1d4cd33d99a5d608e0d03c5fe8dfa3f68d41a1f8 0.0s

=> [stage-1 1/3] FROM gcr.io/distroless/static:nonroot@sha256:b89b98ea1f5bc6e0b48c8be6803a155b2a3532ac6f1e9508a8bcbf99885a9152 0.0s

=> [internal] load build context 0.0s

=> => transferring context: 14.51kB 0.0s

=> CACHED [builder 2/9] WORKDIR /workspace 0.0s

=> CACHED [builder 3/9] COPY go.mod go.mod 0.0s

=> CACHED [builder 4/9] COPY go.sum go.sum 0.0s

=> CACHED [builder 5/9] RUN go mod download 0.0s

=> CACHED [builder 6/9] COPY main.go main.go 0.0s

=> CACHED [builder 7/9] COPY api/ api/ 0.0s

=> [builder 8/9] COPY controllers/ controllers/ 0.1s

=> [builder 9/9] RUN CGO_ENABLED=0 GOOS=linux GOARCH=amd64 GO111MODULE=on go build -a -o manager main.go 144.5s

=> CACHED [stage-1 2/3] COPY --from=builder /workspace/manager . 0.0s

=> exporting to image 0.0s

=> => exporting layers 0.0s

=> => writing image sha256:622d30aa44c77d93db4093b005fce86b39d5ba5c6cd29f1fb2accb7e7f9b23b8 0.0s

=> => naming to docker.io/bolingcavalry/elasticweb:002 0.0s

docker push bolingcavalry/elasticweb:002

The push refers to repository [docker.io/bolingcavalry/elasticweb]

eea77d209b68: Layer already exists

8651333b21e7: Layer already exists

002: digest: sha256:c09ab87f6fce3d85f1fda0ffe75ead9db302a47729aefd3ef07967f2b99273c5 size: 739

- 去hub.docker.com网站看看,如下图,新镜像已经上传,这样只要任何机器只要能上网就能pull此镜像到本地使用了:

- 镜像准备好之后,执行以下命令即可在kubernetes环境部署controller:

make deploy IMG=bolingcavalry/elasticweb:002

- 接下来像之前那样创建elasticweb资源对象,验证所有资源是否创建成功:

zhaoqin@zhaoqindeMBP-2 elasticweb % make deploy IMG=bolingcavalry/elasticweb:002

/Users/zhaoqin/go/bin/controller-gen "crd:trivialVersions=true" rbac:roleName=manager-role webhook paths="./..." output:crd:artifacts:config=config/crd/bases

cd config/manager && kustomize edit set image controller=bolingcavalry/elasticweb:002

kustomize build config/default | kubectl apply -f -

namespace/elasticweb-system created

Warning: apiextensions.k8s.io/v1beta1 CustomResourceDefinition is deprecated in v1.16+, unavailable in v1.22+; use apiextensions.k8s.io/v1 CustomResourceDefinition

customresourcedefinition.apiextensions.k8s.io/elasticwebs.elasticweb.com.bolingcavalry configured

role.rbac.authorization.k8s.io/elasticweb-leader-election-role created

clusterrole.rbac.authorization.k8s.io/elasticweb-manager-role created

clusterrole.rbac.authorization.k8s.io/elasticweb-proxy-role created

Warning: rbac.authorization.k8s.io/v1beta1 ClusterRole is deprecated in v1.17+, unavailable in v1.22+; use rbac.authorization.k8s.io/v1 ClusterRole

clusterrole.rbac.authorization.k8s.io/elasticweb-metrics-reader created

rolebinding.rbac.authorization.k8s.io/elasticweb-leader-election-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/elasticweb-manager-rolebinding created

clusterrolebinding.rbac.authorization.k8s.io/elasticweb-proxy-rolebinding created

service/elasticweb-controller-manager-metrics-service created

deployment.apps/elasticweb-controller-manager created

zhaoqin@zhaoqindeMBP-2 elasticweb %

zhaoqin@zhaoqindeMBP-2 elasticweb %

zhaoqin@zhaoqindeMBP-2 elasticweb %

zhaoqin@zhaoqindeMBP-2 elasticweb %

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl apply -f config/samples/elasticweb_v1_elasticweb.yaml

namespace/dev created

elasticweb.elasticweb.com.bolingcavalry/elasticweb-sample created

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get service -n dev

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

elasticweb-sample NodePort 10.96.234.7 <none> 8080:30003/TCP 13s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get deployment -n dev

NAME READY UP-TO-DATE AVAILABLE AGE

elasticweb-sample 2/2 2 2 18s

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pod -n dev

NAME READY STATUS RESTARTS AGE

elasticweb-sample-56fc5848b7-559lw 1/1 Running 0 22s

elasticweb-sample-56fc5848b7-hp4wv 1/1 Running 0 22s

- 这还不够!还有个重要的信息需要咱们检查---controller的日志,先看有哪些pod:

zhaoqin@zhaoqindeMBP-2 elasticweb % kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

dev elasticweb-sample-56fc5848b7-559lw 1/1 Running 0 68s

dev elasticweb-sample-56fc5848b7-hp4wv 1/1 Running 0 68s

elasticweb-system elasticweb-controller-manager-5795d4d98d-t6jvc 2/2 Running 0 98s

kube-system coredns-7f89b7bc75-5pdwc 1/1 Running 15 20d

kube-system coredns-7f89b7bc75-nvbvm 1/1 Running 15 20d

kube-system etcd-hedy 1/1 Running 15 20d

kube-system kube-apiserver-hedy 1/1 Running 15 20d

kube-system kube-controller-manager-hedy 1/1 Running 16 20d

kube-system kube-flannel-ds-v84vc 1/1 Running 22 20d

kube-system kube-proxy-hlppx 1/1 Running 15 20d

kube-system kube-scheduler-hedy 1/1 Running 16 20d

test-clientset client-test-deployment-7677cc9669-kd7l7 1/1 Running 9 9d

test-clientset client-test-deployment-7677cc9669-kt5rv 1/1 Running 9 9d

- 可见controller的pod名称为elasticweb-controller-manager-5795d4d98d-t6jvc,执行以下命令可以查看日志,多了-c manager参数是因为这个pod里面有两个容器,需要指定正确的容器才能看到日志:

kubectl logs -f \

elasticweb-controller-manager-5795d4d98d-t6jvc \

-c manager \

-n elasticweb-system

- 再次看到了熟悉的业务日志:

2021-02-21T08:52:27.064Z INFO controllers.ElasticWeb 1. start reconcile logic {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.064Z INFO controllers.ElasticWeb 3. instance : Image [tomcat:8.0.18-jre8], Port [30003], SinglePodQPS [500], TotalQPS [600], RealQPS [nil] {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.064Z INFO controllers.ElasticWeb 4. deployment not exists {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.064Z INFO controllers.ElasticWeb set reference {"func": "createService"}

2021-02-21T08:52:27.064Z INFO controllers.ElasticWeb start create service {"func": "createService"}

2021-02-21T08:52:27.107Z INFO controllers.ElasticWeb create service success {"func": "createService"}

2021-02-21T08:52:27.107Z INFO controllers.ElasticWeb expectReplicas [2] {"func": "createDeployment"}

2021-02-21T08:52:27.107Z INFO controllers.ElasticWeb set reference {"func": "createDeployment"}

2021-02-21T08:52:27.107Z INFO controllers.ElasticWeb start create deployment {"func": "createDeployment"}

2021-02-21T08:52:27.119Z INFO controllers.ElasticWeb create deployment success {"func": "createDeployment"}

2021-02-21T08:52:27.119Z INFO controllers.ElasticWeb singlePodQPS [500], replicas [2], realQPS[1000] {"func": "updateStatus"}

2021-02-21T08:52:27.198Z DEBUG controller-runtime.controller Successfully Reconciled {"controller": "elasticweb", "request": "dev/elasticweb-sample"}

2021-02-21T08:52:27.198Z INFO controllers.ElasticWeb 1. start reconcile logic {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.198Z INFO controllers.ElasticWeb 3. instance : Image [tomcat:8.0.18-jre8], Port [30003], SinglePodQPS [500], TotalQPS [600], RealQPS [1000] {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.198Z INFO controllers.ElasticWeb 9. expectReplicas [2], realReplicas [2] {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.198Z INFO controllers.ElasticWeb 10. return now {"elasticweb": "dev/elasticweb-sample"}

2021-02-21T08:52:27.198Z DEBUG controller-runtime.controller Successfully Reconciled {"controller": "elasticweb", "request": "dev/elasticweb-sample"}

- 再用浏览器验证tomcat已经启动成功;

卸载和清理

- 体验完毕后,如果想把前面创建的资源全部清理掉(注意,是清理资源,不是资源对象),可以执行以下命令:

make uninstall

- 至此,整个operator的设计、开发、部署、验证流程就全部完成了,在您的operator开发过程中,希望本文能给您带来一些参考;

你不孤单,欣宸原创一路相伴

欢迎关注公众号:程序员欣宸

微信搜索「程序员欣宸」,我是欣宸,期待与您一同畅游Java世界...

https://github.com/zq2599/blog_demos

kubebuilder实战之六:构建部署运行的更多相关文章

- kubebuilder实战之一:准备工作kubebuilder实战之一:准备工作

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

- kubebuilder实战之二:初次体验kubebuilder

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

- kubebuilder实战之三:基础知识速览

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

- kubebuilder实战之四:operator需求说明和设计

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

- kubebuilder实战之五:operator编码

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

- kubebuilder实战之八:知识点小记

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

- 通过Dapr实现一个简单的基于.net的微服务电商系统(十三)——istio+dapr构建多运行时服务网格之生产环境部署

之前所有的演示都是在docker for windows上进行部署的,没有真正模拟生产环境,今天我们模拟真实环境在公有云上用linux操作如何实现istio+dapr+电商demo的部署. 目录:一. ...

- SpringBoot+Maven 多模块项目的构建、运行、打包实战

前言 最近在做一个很复杂的会员综合线下线上商城大型项目,单模块项目无法满足多人开发和架构,很多模块都是重复的就想到了把模块提出来,做成公共模块,基于maven的多模块项目,也好分工开发,也便于后期微服 ...

- kubebuilder实战之七:webhook

欢迎访问我的GitHub https://github.com/zq2599/blog_demos 内容:所有原创文章分类汇总及配套源码,涉及Java.Docker.Kubernetes.DevOPS ...

随机推荐

- springboot-8-企业开发

一.邮件发送 流程: mbqplwpheeyvgdjh 首先需要开启POS3/SMTP服务,这是一个邮件传输协议 点击开启 导入依赖 <!--mail--> <dependency& ...

- 微信小程序云开发-云存储-上传单个视频到云存储并显示到页面上

一.wxml文件 <!-- 上传视频到云存储 --> <button bindtap="chooseVideo" type="primary" ...

- Solon 1.5.16 发布,多项细节优化

Solon 是一个轻量的Java基础开发框架.强调,克制 + 简洁 + 开放的原则:力求,更小.更快.更自由的体验.支持:RPC.REST API.MVC.Job.Micro service.WebS ...

- AntDesignBlazor 学习笔记

AntDesignBlazor是基于 Ant Design 的 Blazor 实现,开发和服务于企业级后台产品.我的 Blazor Server 学习就从这里开始,有问题可以随时上 Blazor 中文 ...

- 自建CA实现HTTPS

说明:这里是Linux服务综合搭建文章的一部分,本文可以作为自建CA搭建https网站的参考. 注意:这里所有的标题都是根据主要的文章(Linux基础服务搭建综合)的顺序来做的. 如果需要查看相关软件 ...

- Mysql用户、权限、密码管理

一.用户管理 默认:用户root 创建用户: use mysql; create user 'alex'@'192.168.193.200' identified by '123456'; 创建了al ...

- Python -- 长字符串

如果需要写一个非常非常长的字符串,它需要跨多行,那么,可以使用三个引号代替普通引号. print '''This is a very long string. It continues here. A ...

- 1.4matlab矩阵的表示

1.4matlab矩阵的表示 矩阵的建立 利用直接输入法建立矩阵:将矩阵的元素用中括号括起来,按矩阵的顺序输入各元素,同一行的各元素之间用逗号或空格分隔,不同行的元素之间用分号分隔. 利用已建立好的矩 ...

- selenium WebDriverWait

Selenium WebDriverWait的知识: 一.webdrivewait 示例代码 from selenium import webdriver from selenium.webdri ...

- python3中的希尔排序

def shell_sort(alist): n = len(alist) # 初始步长 gap = round(n / 2) while gap > 0: # 按步长进行插入排序 for i ...