Importing into HBase with Sqoop and syncing the index to ES with a Coprocessor

1. Environment

- MySQL 5.6

- Sqoop 1.4.6

- Hadoop 2.5.2

- HBase 0.98

- Elasticsearch 2.3.5

2. Installation (skipped)

3. HBase Coprocessor implementation

HBase Observer

import java.io.IOException;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

import org.apache.commons.logging.Log;
import org.apache.commons.logging.LogFactory;
import org.apache.hadoop.conf.Configuration;
import org.apache.hadoop.hbase.Cell;
import org.apache.hadoop.hbase.CellUtil;
import org.apache.hadoop.hbase.CoprocessorEnvironment;
import org.apache.hadoop.hbase.client.Delete;
import org.apache.hadoop.hbase.client.Durability;
import org.apache.hadoop.hbase.client.Put;
import org.apache.hadoop.hbase.coprocessor.BaseRegionObserver;
import org.apache.hadoop.hbase.coprocessor.ObserverContext;
import org.apache.hadoop.hbase.coprocessor.RegionCoprocessorEnvironment;
import org.apache.hadoop.hbase.regionserver.wal.WALEdit;
import org.apache.hadoop.hbase.util.Bytes;
import org.elasticsearch.client.Client;
// Imports needed only by the commented-out Elasticsearch 1.5.0 client below:
//import org.elasticsearch.client.transport.TransportClient;
//import org.elasticsearch.common.settings.ImmutableSettings;
//import org.elasticsearch.common.settings.Settings;
//import org.elasticsearch.common.transport.InetSocketTransportAddress;

public class DataSyncObserver extends BaseRegionObserver {

    private static Client client = null;
    private static final Log LOG = LogFactory.getLog(DataSyncObserver.class);

    /**
     * Reads the arguments given in the HBase shell command.
     *
     * @param env the coprocessor environment that carries those arguments
     */
    private void readConfiguration(CoprocessorEnvironment env) {
        Configuration conf = env.getConfiguration();
        Config.clusterName = conf.get("es_cluster");
        Config.nodeHost = conf.get("es_host");
        // The default's digit was lost in the source; -1 ("not set") is assumed.
        Config.nodePort = conf.getInt("es_port", -1);
        Config.indexName = conf.get("es_index");
        Config.typeName = conf.get("es_type");
        LOG.info("observer -- started with config: " + Config.getInfo());
    }

    @Override
    public void start(CoprocessorEnvironment env) throws IOException {
        readConfiguration(env);
        // Elasticsearch 1.5.0 style client, kept for reference:
        // Settings settings = ImmutableSettings.settingsBuilder()
        //         .put("cluster.name", Config.clusterName).build();
        // client = new TransportClient(settings)
        //         .addTransportAddress(new InetSocketTransportAddress(
        //                 Config.nodeHost, Config.nodePort));
        client = MyTransportClient.client;
    }

    @Override
    public void postPut(ObserverContext<RegionCoprocessorEnvironment> e, Put put, WALEdit edit, Durability durability) throws IOException {
        try {
            // The HBase row key becomes the ES document id.
            String indexId = Bytes.toString(put.getRow());
            Map<byte[], List<Cell>> familyMap = put.getFamilyCellMap();
            Map<String, Object> json = new HashMap<String, Object>();
            // Flatten every cell into qualifier -> value; the column family name is dropped.
            for (Map.Entry<byte[], List<Cell>> entry : familyMap.entrySet()) {
                for (Cell cell : entry.getValue()) {
                    String key = Bytes.toString(CellUtil.cloneQualifier(cell));
                    String value = Bytes.toString(CellUtil.cloneValue(cell));
                    json.put(key, value);
                }
            }
            // Upsert: update the document if it exists, otherwise create it.
            ElasticSearchOperator.addUpdateBuilderToBulk(client.prepareUpdate(Config.indexName, Config.typeName, indexId).setDoc(json).setUpsert(json));
            LOG.info("observer -- add new doc: " + indexId + " to type: " + Config.typeName);
        } catch (Exception ex) {
            // Never let an indexing problem fail the HBase write itself.
            LOG.error("observer -- failed to queue the put for indexing", ex);
        }
    }

    @Override
    public void postDelete(final ObserverContext<RegionCoprocessorEnvironment> e, final Delete delete, final WALEdit edit, final Durability durability) throws IOException {
        try {
            String indexId = Bytes.toString(delete.getRow());
            ElasticSearchOperator.addDeleteBuilderToBulk(client.prepareDelete(Config.indexName, Config.typeName, indexId));
            LOG.info("observer -- delete a doc: " + indexId);
        } catch (Exception ex) {
            LOG.error("observer -- failed to queue the delete for indexing", ex);
        }
    }
}
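The Observer reads its settings into a `Config` class and takes its client from `MyTransportClient`, neither of which is listed in this post. Below is a minimal sketch of what `Config` could look like, inferred only from how it is used (string fields filled by `conf.get`, an int port filled by `conf.getInt`, and a `getInfo()` call in the log line). The field layout and the text returned by `getInfo()` are assumptions, not the original code.

```java
/**
 * Hypothetical reconstruction of the Config holder used by DataSyncObserver.
 * Only the field names and the existence of getInfo() come from the calls
 * in the post; everything else is an assumption.
 */
class Config {
    static String clusterName;  // filled from es_cluster
    static String nodeHost;     // filled from es_host
    static int nodePort;        // filled from es_port
    static String indexName;    // filled from es_index
    static String typeName;     // filled from es_type

    /** One-line summary, suitable for the startup log message. */
    static String getInfo() {
        return "cluster=" + clusterName
                + ", host=" + nodeHost
                + ", port=" + nodePort
                + ", index=" + indexName
                + ", type=" + typeName;
    }
}
```

Because every field is static, all tables served by the same loaded class see one set of values, which matters once several tables use the Observer.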

ES helper methods

import java.util.Timer;
import java.util.TimerTask;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;

import org.elasticsearch.action.bulk.BulkRequestBuilder;
import org.elasticsearch.action.bulk.BulkResponse;
import org.elasticsearch.action.delete.DeleteRequestBuilder;
import org.elasticsearch.action.update.UpdateRequestBuilder;
import org.elasticsearch.client.Client;
// Imports needed only by the commented-out Elasticsearch 1.5.0 client below:
//import org.elasticsearch.client.transport.TransportClient;
//import org.elasticsearch.common.settings.ImmutableSettings;
//import org.elasticsearch.common.settings.Settings;
//import org.elasticsearch.common.transport.InetSocketTransportAddress;

public class ElasticSearchOperator {

    // Buffer capacity. The value was lost in the source; 10000 is an assumed placeholder.
    private static final int MAX_BULK_COUNT = 10000;
    // Maximum commit interval in seconds. The operands were lost in the source; 5 minutes is assumed.
    private static final int MAX_COMMIT_INTERVAL = 60 * 5;

    private static Client client = null;
    private static BulkRequestBuilder bulkRequestBuilder = null;
    private static Lock commitLock = new ReentrantLock();

    static {
        // Elasticsearch 1.5.0
        // Settings settings = ImmutableSettings.settingsBuilder()
        //         .put("cluster.name", Config.clusterName).build();
        // client = new TransportClient(settings)
        //         .addTransportAddress(new InetSocketTransportAddress(
        //                 Config.nodeHost, Config.nodePort));

        // Elasticsearch 2.3.5
        client = MyTransportClient.client;

        bulkRequestBuilder = client.prepareBulk();
        bulkRequestBuilder.setRefresh(true);

        Timer timer = new Timer();
        // Initial delay and period are in milliseconds. The initial delay was lost
        // in the source; 10 seconds is assumed.
        timer.schedule(new CommitTimer(), 10 * 1000, MAX_COMMIT_INTERVAL * 1000);
    }

    /**
     * Submits the buffered requests in bulk once the buffer holds more than
     * threshold actions. The buffer is replaced only after a bulk request
     * without failures, so a failed batch is sent again on the next call.
     *
     * @param threshold number of buffered actions that must be exceeded before submitting
     */
    private static void bulkRequest(int threshold) {
        if (bulkRequestBuilder.numberOfActions() > threshold) {
            BulkResponse bulkResponse = bulkRequestBuilder.execute().actionGet();
            if (!bulkResponse.hasFailures()) {
                bulkRequestBuilder = client.prepareBulk();
            }
        }
    }

    /**
     * Adds an index (upsert) request to the buffer.
     *
     * @param builder the update request to buffer
     */
    public static void addUpdateBuilderToBulk(UpdateRequestBuilder builder) {
        commitLock.lock();
        try {
            bulkRequestBuilder.add(builder);
            bulkRequest(MAX_BULK_COUNT);
        } catch (Exception ex) {
            ex.printStackTrace();
        } finally {
            commitLock.unlock();
        }
    }

    /**
     * Adds a delete request to the buffer.
     *
     * @param builder the delete request to buffer
     */
    public static void addDeleteBuilderToBulk(DeleteRequestBuilder builder) {
        commitLock.lock();
        try {
            bulkRequestBuilder.add(builder);
            bulkRequest(MAX_BULK_COUNT);
        } catch (Exception ex) {
            ex.printStackTrace();
        } finally {
            commitLock.unlock();
        }
    }

    /**
     * Timer task that flushes the buffer periodically, so that ElasticSearch does not
     * fall out of sync with HBase when the RegionServer receives no updates for a long time.
     */
    static class CommitTimer extends TimerTask {
        @Override
        public void run() {
            commitLock.lock();
            try {
                // Threshold 0: submit whatever is buffered.
                bulkRequest(0);
            } catch (Exception ex) {
                ex.printStackTrace();
            } finally {
                commitLock.unlock();
            }
        }
    }
}
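The helper above combines two flush triggers behind one lock: a size threshold checked on every add, and a periodic flush from a timer. The same pattern can be sketched with the standard library only, which makes it easy to try without a cluster. `BatchBuffer` and its `sink` are hypothetical stand-ins for the bulk request builder and its `execute()` call, not part of the original code.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.concurrent.locks.Lock;
import java.util.concurrent.locks.ReentrantLock;
import java.util.function.Consumer;

/** Hypothetical stand-in for the bulk buffer: flushes by size, or on demand from a timer. */
class BatchBuffer<T> {
    private final Lock lock = new ReentrantLock();
    private final Consumer<List<T>> sink;        // plays the role of the bulk submit
    private List<T> pending = new ArrayList<>();

    BatchBuffer(Consumer<List<T>> sink) {
        this.sink = sink;
    }

    /** Buffers one item and flushes once more than maxCount items are pending. */
    void add(T item, int maxCount) {
        lock.lock();
        try {
            pending.add(item);
            flushIfAbove(maxCount);
        } finally {
            lock.unlock();
        }
    }

    /** What a periodic timer task would call: flush whatever is pending. */
    void flushAll() {
        lock.lock();
        try {
            flushIfAbove(0);
        } finally {
            lock.unlock();
        }
    }

    // Caller must hold the lock. The buffer is replaced only after the sink
    // returned normally, so a failed batch stays pending for the next attempt.
    private void flushIfAbove(int threshold) {
        if (pending.size() > threshold) {
            sink.accept(pending);
            pending = new ArrayList<>();
        }
    }
}
```

With `maxCount` set to 2, the third `add` triggers a flush of three items, and a later `flushAll()` sends any remainder. Calling `flushAll()` on an empty buffer sends nothing.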

Package and upload to HDFS

mvn clean compile assembly:single

mv observer-1.0-SNAPSHOT-jar-with-dependencies.jar observer-hb0.98-es2.3.5.jar

hdfs dfs -put observer-hb0.98-es2.3.5.jar /hbase/lib/

4. Create the HBase tables and enable the Coprocessor

MySQL

hbase shell

create 'region','data'

disable 'region'

alter 'region', METHOD => 'table_att', 'coprocessor' => 'hdfs:///hbase/lib/observer-hb0.98-es2.3.5.jar|com.gavin.observer.DataSyncObserver|1001|es_cluster=elas2.3.4,es_type=mysql_region,es_index=hbase,es_port=9300,es_host=localhost'

enable 'region'

Oracle

create 'sp','data'

disable 'sp'

alter 'sp', METHOD => 'table_att', 'coprocessor' => 'hdfs:///hbase/lib/observer-hb0.98-es2.3.5.jar|com.gavin.observer.DataSyncObserver|1001|es_cluster=elas2.3.4,es_type=oracle_sp,es_index=hbase,es_port=9300,es_host=localhost'

enable 'sp'
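The value of the `coprocessor` attribute in the `alter` commands packs four pipe-separated fields: the jar path, the observer class, the priority, and a comma-separated list of `key=value` arguments, which the Observer later reads through `env.getConfiguration()`. A small sketch that splits such a value makes the layout easier to see. `CoprocessorSpec` is a hypothetical illustration and not HBase's own parser.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/**
 * Illustrative parser for a 'coprocessor' table attribute value of the form
 *   <jar path>|<class name>|<priority>|<key=value,key=value,...>
 * This only demonstrates the layout; HBase does its own parsing.
 */
class CoprocessorSpec {
    final String jarPath;
    final String className;
    final String priority;   // kept as text because the field may be empty
    final Map<String, String> args = new LinkedHashMap<>();

    CoprocessorSpec(String spec) {
        // A limit of 4 keeps empty middle fields and leaves the argument list in one piece.
        String[] parts = spec.split("\\|", 4);
        if (parts.length < 2) {
            throw new IllegalArgumentException("expected at least <jar>|<class>: " + spec);
        }
        jarPath = parts[0].trim();
        className = parts[1].trim();
        priority = parts.length > 2 ? parts[2].trim() : "";
        if (parts.length > 3) {
            for (String pair : parts[3].split(",")) {
                int eq = pair.indexOf('=');
                if (eq > 0) {
                    args.put(pair.substring(0, eq).trim(), pair.substring(eq + 1).trim());
                }
            }
        }
    }
}
```

Parsing the value used for the `region` table yields `com.gavin.observer.DataSyncObserver` as the class, `1001` as the priority, and five arguments, including `es_type=mysql_region` and `es_port=9300`.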

Check the table attributes

hbase(main)> describe 'ora_test'

Table ora_test is ENABLED

ora_test, {TABLE_ATTRIBUTES => {coprocessor$1 => 'hdfs:///appdt/hbase/lib/observer-hb1.2.2-es2.3.5.jar|com.gavin.observer.DataSyncObserver||es_cluster=elas2.3.4,es_type=ora_test,es_index=hbase,es_port=,es_host=localhost'}

COLUMN FAMILIES DESCRIPTION

{NAME => 'data', DATA_BLOCK_ENCODING => 'NONE', BLOOMFILTER => 'ROW', REPLICATION_SCOPE => '', VERSIONS => '', COMPRESSION => 'NONE', MIN_VERSIONS => '', TTL => 'FOREVER', KEEP_DELETED_CELLS => 'FALSE', BLOCKSIZE => '', IN_MEMORY => 'false', BLOCKCACHE => 'true'}

row(s) in 0.0260 seconds

Remove the Coprocessor

disable 'ora_test'

alter 'ora_test',METHOD => 'table_att_unset',NAME =>'coprocessor$1'

enable 'ora_test'

Verify the removal

hbase(main)> describe 'ora_test'

Table ora_test is ENABLED

ora_test

COLUMN FAMILIES DESCRIPTION

{NAME => 'data', DATA_BLOCK_ENCODING => 'NONE', BLOOMFILTER => 'ROW', REPLICATION_SCOPE => '', VERSIONS => '', COMPRESSION => 'NONE', MIN_VERSIONS => '', TTL => 'FOREVER', KEEP_DELETED_CELLS => 'FALSE', BLOCKSIZE => '', IN_MEMORY => 'false', BLOCKCACHE => 'true'}

row(s) in 0.0200 seconds

5. Import the data with Sqoop

MySQL

bin/sqoop import --connect jdbc:mysql://192.168.1.187:3306/trade_dev --username mysql --password 111111 --table TB_REGION --hbase-table region --hbase-row-key REGION_ID --column-family data

Oracle

bin/sqoop import --connect jdbc:oracle:thin:@192.168.16.223:/orcl --username sitts --password password --table SITTS.ESB_SERVICE_PARAM --split-by PARAM_ID --hbase-table sp --hbase-row-key PARAM_ID --column-family data

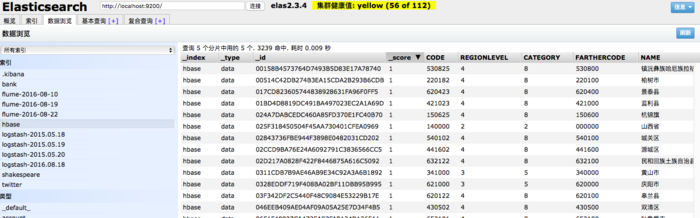
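Sqoop's HBase import turns each source row into one HBase row: the `--hbase-row-key` column becomes the row key, and the other columns become cells in the `--column-family` family, with values written as strings. The Observer then uses the row key as the document id and the column qualifiers as field names. Below is a rough sketch of that mapping in plain Java. It assumes the default behaviour, as far as it is understood for Sqoop 1.4.x, in which the key column is not repeated as a cell and null columns are skipped. The class name and the `REGION_NAME` and `PARENT_ID` columns are made up for illustration.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Illustrative only: how one relational row is expected to end up as an ES document. */
class SqoopRowMappingSketch {

    /** The row key (and later the ES document id) is the string form of the key column. */
    static String rowKey(Map<String, Object> row, String keyColumn) {
        return String.valueOf(row.get(keyColumn));
    }

    /**
     * The remaining non-null columns become qualifier -> string value pairs.
     * The Observer drops the column family name, so these are also the ES fields.
     */
    static Map<String, String> fields(Map<String, Object> row, String keyColumn) {
        Map<String, String> fields = new LinkedHashMap<>();
        for (Map.Entry<String, Object> column : row.entrySet()) {
            if (column.getKey().equals(keyColumn) || column.getValue() == null) {
                continue; // assumed default: key column and nulls are not written as cells
            }
            fields.put(column.getKey(), String.valueOf(column.getValue()));
        }
        return fields;
    }
}
```

Note that every value reaches ElasticSearch as a string, because the Observer converts cell bytes with `Bytes.toString`.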

6. Verification

HBase

scan 'region'

ES
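On the ES side, one way to check a synced row is to fetch the document whose id equals the HBase row key through the REST API (`GET /{index}/{type}/{id}`). Below is a minimal sketch, assuming Elasticsearch's HTTP interface listens on its default port 9200 on the same host. The class and method names are illustrative.

```java
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.UnsupportedEncodingException;
import java.net.HttpURLConnection;
import java.net.URL;
import java.net.URLEncoder;

/** Illustrative helper for looking up one synced document by its HBase row key. */
class EsDocCheck {

    /** Builds http://host:port/index/type/id with the id percent-encoded. */
    static String docUrl(String host, int port, String index, String type, String id) {
        try {
            // URLEncoder targets form data and turns spaces into '+'; use %20 in a path.
            String encodedId = URLEncoder.encode(id, "UTF-8").replace("+", "%20");
            return "http://" + host + ":" + port + "/" + index + "/" + type + "/" + encodedId;
        } catch (UnsupportedEncodingException ex) {
            throw new IllegalStateException("UTF-8 is always available", ex);
        }
    }

    /** Performs the GET and returns the response body; a missing document raises an IOException. */
    static String fetch(String url) throws IOException {
        HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
        conn.setRequestMethod("GET");
        try (InputStream in = conn.getInputStream()) {
            ByteArrayOutputStream out = new ByteArrayOutputStream();
            byte[] chunk = new byte[4096];
            int n;
            while ((n = in.read(chunk)) != -1) {
                out.write(chunk, 0, n);
            }
            return out.toString("UTF-8");
        } finally {
            conn.disconnect();
        }
    }
}
```

For the `region` table, `EsDocCheck.fetch(EsDocCheck.docUrl("localhost", 9200, "hbase", "mysql_region", rowKey))` would return the stored JSON for one row key taken from the `scan` output. Since writes are buffered, a document may only appear after the next bulk flush.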

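7. References

HBase Observer同步数据到ElasticSearch (Syncing data from an HBase Observer to ElasticSearch)

8. Notes

- Use one index per Coprocessor; different tables can be given different types, otherwise the contents of the index get mixed up.

- After changing the Java code, the jar uploaded to HDFS must use a different file name from the previous one; otherwise the change does not take effect, even if the old coprocessor is removed and installed again.

- If several tables map to several indexes/types, each table must load the Observer from a different jar path; otherwise data from different tables gets mixed up in ElasticSearch. (The Observer keeps its settings in static fields of Config, so observers that share one loaded class also share one set of settings.)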
