TensorFlow Linear Regression

Contents

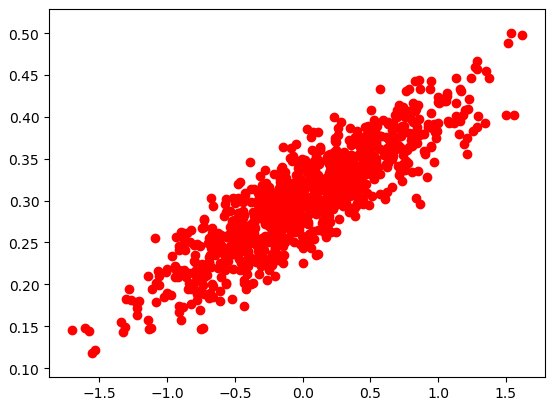

Data visualization

Gradient descent

Visualizing the results

Data visualization

    import numpy as np
    import tensorflow as tf
    import matplotlib.pyplot as plt

    # Randomly generate 1000 points scattered around the line y = 0.1x + 0.3
    num_points = 1000
    vectors_set = []
    for i in range(num_points):
        x1 = np.random.normal(0.0, 0.55)
        y1 = x1 * 0.1 + 0.3 + np.random.normal(0.0, 0.03)
        vectors_set.append([x1, y1])

    # Split the samples into x and y coordinates
    x_data = [v[0] for v in vectors_set]
    y_data = [v[1] for v in vectors_set]

    # Scatter plot of the raw samples
    plt.scatter(x_data, y_data, c='r')
    plt.show()

Gradient descent

    # -*- coding: utf-8 -*-
    # Uses the TensorFlow 1.x graph API (tf.Session, tf.train.*). Under
    # TensorFlow 2.x the same calls are available via tf.compat.v1 with
    # eager execution disabled.
    import numpy as np
    import tensorflow as tf
    import matplotlib.pyplot as plt

    # Randomly generate 1000 points scattered around the line y = 0.1x + 0.3
    num_points = 1000
    vectors_set = []
    for i in range(num_points):
        x1 = np.random.normal(0.0, 0.55)
        y1 = x1 * 0.1 + 0.3 + np.random.normal(0.0, 0.03)
        vectors_set.append([x1, y1])

    # Split the samples into x and y coordinates
    x_data = [v[0] for v in vectors_set]
    y_data = [v[1] for v in vectors_set]

    # Create a 1-D W with a random initial value between -1 and 1
    W = tf.Variable(tf.random_uniform([1], -1.0, 1.0), name='W')
    # Create a 1-D b, initialized to 0
    b = tf.Variable(tf.zeros([1]), name='b')
    # Compute the predicted value y
    y = W * x_data + b

    # Use the mean squared error between the prediction y and the actual
    # y_data as the loss
    loss = tf.reduce_mean(tf.square(y - y_data), name='loss')
    # Optimize the parameters with gradient descent
    optimizer = tf.train.GradientDescentOptimizer(0.5)  # the argument is the learning rate
    # Training means minimizing this loss
    train = optimizer.minimize(loss, name='train')

    sess = tf.Session()
    init = tf.global_variables_initializer()
    sess.run(init)

    # Print the initial W and b
    print("W =", sess.run(W), "b =", sess.run(b), "loss =", sess.run(loss))
    # Run 20 training steps
    for step in range(20):
        sess.run(train)
        # Print W, b and the loss after each step
        print("W =", sess.run(W), "b =", sess.run(b), "loss =", sess.run(loss))

    '''
    W = [ 0.72134733] b = [ 0.] loss = 0.204532
    W = [ 0.54246926] b = [ 0.31014919] loss = 0.0552976
    W = [ 0.41924465] b = [ 0.30693138] loss = 0.029155
    W = [ 0.33045709] b = [ 0.30471471] loss = 0.0155833
    W = [ 0.26648441] b = [ 0.30311754] loss = 0.00853772
    W = [ 0.22039121] b = [ 0.30196676] loss = 0.00488007
    W = [ 0.18718043] b = [ 0.3011376] loss = 0.00298124
    W = [ 0.16325161] b = [ 0.30054021] loss = 0.00199547
    W = [ 0.14601055] b = [ 0.30010974] loss = 0.00148373
    W = [ 0.13358814] b = [ 0.29979959] loss = 0.00121806
    W = [ 0.12463761] b = [ 0.29957613] loss = 0.00108014
    W = [ 0.11818863] b = [ 0.29941514] loss = 0.00100854
    W = [ 0.11354206] b = [ 0.29929912] loss = 0.000971367
    W = [ 0.11019413] b = [ 0.29921553] loss = 0.00095207
    W = [ 0.10778191] b = [ 0.29915532] loss = 0.000942053
    W = [ 0.10604387] b = [ 0.29911193] loss = 0.000936852
    W = [ 0.10479159] b = [ 0.29908064] loss = 0.000934153
    W = [ 0.1038893] b = [ 0.29905814] loss = 0.000932751
    W = [ 0.10323919] b = [ 0.2990419] loss = 0.000932023
    W = [ 0.10277078] b = [ 0.29903021] loss = 0.000931646
    W = [ 0.10243329] b = [ 0.29902178] loss = 0.00093145
    '''
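To make the update behind `optimizer.minimize(loss)` concrete, here is a minimal sketch of the same training loop in plain Python, with the gradients of the mean squared error written out by hand. It is not part of the original listing. The helper names `mse_and_grads` and `fit` and the fixed random seed are assumptions made for illustration, and the printed numbers will differ from the TensorFlow run above because the random data and starting point differ.

```python
import random

random.seed(0)  # fixed seed for repeatability (an assumption, not in the original)

# Same kind of synthetic data as above: points scattered around y = 0.1x + 0.3
num_points = 1000
x_data = [random.gauss(0.0, 0.55) for _ in range(num_points)]
y_data = [0.1 * x + 0.3 + random.gauss(0.0, 0.03) for x in x_data]


def mse_and_grads(w, b):
    """Return the mean squared error and its partial derivatives w.r.t. w and b."""
    n = len(x_data)
    errors = [w * x + b - y for x, y in zip(x_data, y_data)]
    loss = sum(e * e for e in errors) / n
    grad_w = 2.0 * sum(e * x for e, x in zip(errors, x_data)) / n
    grad_b = 2.0 * sum(errors) / n
    return loss, grad_w, grad_b


def fit(steps=20, learning_rate=0.5):
    """Plain gradient descent: step each parameter against its gradient."""
    w = random.uniform(-1.0, 1.0)  # random start, like tf.random_uniform([1], -1.0, 1.0)
    b = 0.0                        # zero start, like tf.zeros([1])
    for _ in range(steps):
        _, grad_w, grad_b = mse_and_grads(w, b)
        w -= learning_rate * grad_w
        b -= learning_rate * grad_b
    loss, _, _ = mse_and_grads(w, b)
    return w, b, loss


w, b, loss = fit()
print("W =", w, "b =", b, "loss =", loss)
```

As in the TensorFlow output, `b` settles almost immediately while `W` approaches 0.1 more slowly, since the spread of `x_data` is small compared with the learning rate.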


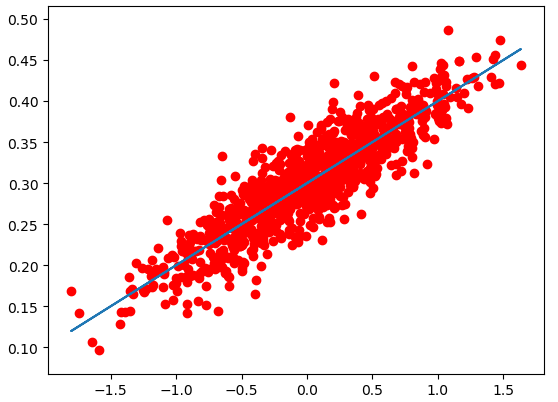

Visualizing the results

    # -*- coding: utf-8 -*-
    # Uses the TensorFlow 1.x graph API (tf.Session, tf.train.*). Under
    # TensorFlow 2.x the same calls are available via tf.compat.v1 with
    # eager execution disabled.
    import numpy as np
    import tensorflow as tf
    import matplotlib.pyplot as plt

    # Randomly generate 1000 points scattered around the line y = 0.1x + 0.3
    num_points = 1000
    vectors_set = []
    for i in range(num_points):
        x1 = np.random.normal(0.0, 0.55)
        y1 = x1 * 0.1 + 0.3 + np.random.normal(0.0, 0.03)
        vectors_set.append([x1, y1])

    # Split the samples into x and y coordinates
    x_data = [v[0] for v in vectors_set]
    y_data = [v[1] for v in vectors_set]

    # Create a 1-D W with a random initial value between -1 and 1
    W = tf.Variable(tf.random_uniform([1], -1.0, 1.0), name='W')
    # Create a 1-D b, initialized to 0
    b = tf.Variable(tf.zeros([1]), name='b')
    # Compute the predicted value y
    y = W * x_data + b

    # Use the mean squared error between the prediction y and the actual
    # y_data as the loss
    loss = tf.reduce_mean(tf.square(y - y_data), name='loss')
    # Optimize the parameters with gradient descent
    optimizer = tf.train.GradientDescentOptimizer(0.5)  # the argument is the learning rate
    # Training means minimizing this loss
    train = optimizer.minimize(loss, name='train')

    sess = tf.Session()
    init = tf.global_variables_initializer()
    sess.run(init)

    # Print the initial W and b
    print("W =", sess.run(W), "b =", sess.run(b), "loss =", sess.run(loss))
    # Run 20 training steps
    for step in range(20):
        sess.run(train)
        # Print W, b and the loss after each step
        print("W =", sess.run(W), "b =", sess.run(b), "loss =", sess.run(loss))

    # Draw the samples in red and overlay the fitted line
    plt.scatter(x_data, y_data, c='r')
    plt.plot(x_data, sess.run(W) * x_data + sess.run(b))
    plt.show()
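One way to sanity-check the fitted line without relying on the plot is to compare it with the closed-form least-squares solution for a single input variable. The sketch below is not part of the original post. The function name `least_squares_line` and the fixed seed are assumptions for illustration, and it regenerates its own data in plain Python instead of reusing `x_data` and `y_data` from the session above.

```python
import random

random.seed(0)  # fixed seed for repeatability (an assumption, not in the original)

# Same kind of synthetic data as above: points scattered around y = 0.1x + 0.3
num_points = 1000
x_data = [random.gauss(0.0, 0.55) for _ in range(num_points)]
y_data = [0.1 * x + 0.3 + random.gauss(0.0, 0.03) for x in x_data]


def least_squares_line(xs, ys):
    """Closed-form simple linear regression: slope = cov(x, y) / var(x)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept


slope, intercept = least_squares_line(x_data, y_data)
print("slope =", slope, "intercept =", intercept)
```

If gradient descent has converged, the trained `W` and `b` should be close to the slope and intercept computed this way on the same data.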
