GBDT,随机森林

author:yangjing

time:2018-10-22

Gradient boosting decision tree

1.main diea

The main idea behind GBDT is to combine many simple models(also known as week kernels),like shallow trees.Each tree can only provide good predictions on part of the data,and so more and more trees are added to iteratively improve performance.

2.parameters setting

the algorithm is a bit more sensitive to parameter settings than random forests,but can provide better accuracy if the parameters are set correctly.

- number of trees

By increasing n_estimators ,also increasing the model complexity,as the model has more chances to correct misticks on the training set. - learning rate

controns how strongly each tree tries to correct the misticks of the previous trees.A higher learning rate means each tree can make stronger correctinos,allowing for more complex models. - max_depth

or alternatively max_leaf_nodes.Usyally max_depth is set very low for gradient-boosted models,often not deeper than five splits.

3.code

from sklearn.ensemble import GradientBoostingClassifier

from sklearn.model_selection import train_test_split

from sklearn.datasets import load_breast_cancer

cancer=load_breast_cancer()

X_train,X_test,y_train,y_test=train_test_split(cancer.data,cancer.target,random_state=0)

gbrt=GradientBoostingClassifier(random_state=0)

gbrt.fit(X_train,y_train)

gbrt.score(X_test,y_test)

In [261]: X_train,X_test,y_train,y_test=train_test_split(cancer.data,cancer.target,random_state=0)

...: gbrt=GradientBoostingClassifier(random_state=0)

...: gbrt.fit(X_train,y_train)

...: gbrt.score(X_test,y_test)

...:

Out[261]: 0.958041958041958

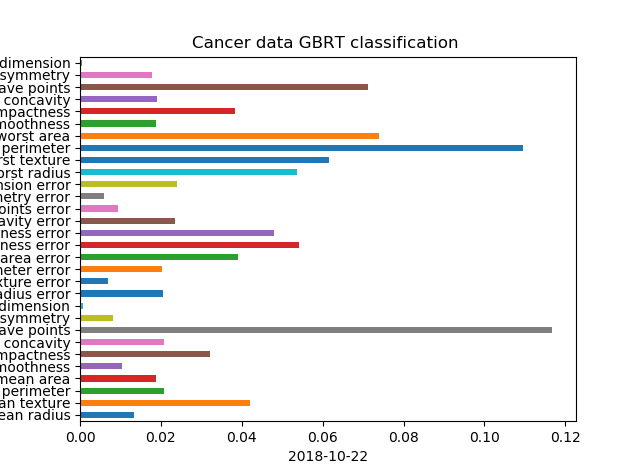

In [262]: gbrt.feature_importances_

Out[262]:

array([0.01337291, 0.04201687, 0.0208666 , 0.01889077, 0.01028091,

0.03215986, 0.02074619, 0.11678956, 0.00820024, 0.00074312,

0.02042134, 0.00680047, 0.02023052, 0.03907398, 0.05406751,

0.04795741, 0.02358101, 0.00934718, 0.00593481, 0.0239241 ,

0.05354265, 0.06160083, 0.10961728, 0.07395201, 0.01867851,

0.03842953, 0.01915824, 0.07128703, 0.01773659, 0.00059199])

In [263]: gbrt.learning_rate

Out[263]: 0.1

In [264]: gbrt.max_depth

Out[264]: 3

In [265]: len(gbrt.estimators_)

Out[266]: 100

In [272]: gbrt.get_params()

Out[272]:

{'criterion': 'friedman_mse',

'init': None,

'learning_rate': 0.1,

'loss': 'deviance',

'max_depth': 3,

'max_features': None,

'max_leaf_nodes': None,

'min_impurity_decrease': 0.0,

'min_impurity_split': None,

'min_samples_leaf': 1,

'min_samples_split': 2,

'min_weight_fraction_leaf': 0.0,

'n_estimators': 100,

'presort': 'auto',

'random_state': 0,

'subsample': 1.0,

'verbose': 0,

'warm_start': False}

Random forest

In [230]: y

Out[230]:

array([1, 1, 0, 1, 1, 1, 1, 0, 0, 0, 1, 0, 1, 1, 0, 1, 1, 0, 1, 0, 1, 0,

0, 0, 1, 1, 0, 1, 0, 1, 0, 0, 0, 0, 1, 0, 1, 1, 0, 0, 0, 0, 0, 0,

1, 1, 1, 1, 1, 0, 1, 1, 1, 1, 0, 1, 1, 1, 1, 1, 0, 1, 1, 1, 0, 1,

0, 0, 0, 0, 0, 1, 1, 0, 1, 1, 0, 1, 1, 0, 1, 0, 1, 0, 0, 0, 0, 0,

0, 1, 0, 0, 1, 0, 0, 0, 1, 1, 0, 0], dtype=int64)

In [231]: axes.ravel()

Out[231]:

array([<matplotlib.axes._subplots.AxesSubplot object at 0x000001F46F3694A8>,

<matplotlib.axes._subplots.AxesSubplot object at 0x000001F46C099F28>,

<matplotlib.axes._subplots.AxesSubplot object at 0x000001F46E6E3BE0>,

<matplotlib.axes._subplots.AxesSubplot object at 0x000001F46BEB72E8>,

<matplotlib.axes._subplots.AxesSubplot object at 0x000001F46ED67198>,

<matplotlib.axes._subplots.AxesSubplot object at 0x000001F46F292C88>],

dtype=object)

In [232]: from sklearn.model_selection import train_test_split

In [233]: X_trai,X_test,y_train,y_test=train_test_split(X,y,stratify=y,random_state=42)

In [234]: len(X_trai)

Out[234]: 75

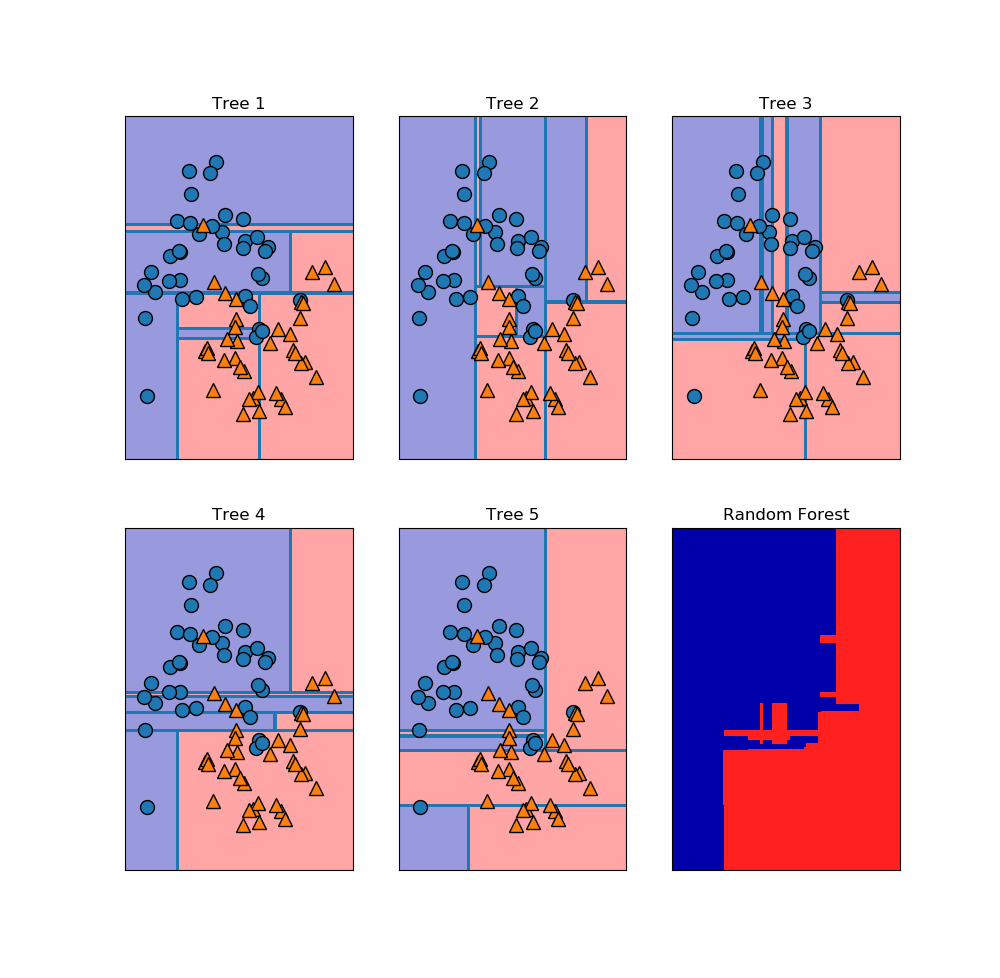

In [235]: fores=RandomForestClassifier(n_estimators=5,random_state=2)

In [236]: fores.fit(X_trai,y_train)

Out[236]:

RandomForestClassifier(bootstrap=True, class_weight=None, criterion='gini',

max_depth=None, max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, n_estimators=5, n_jobs=1,

oob_score=False, random_state=2, verbose=0, warm_start=False)

In [237]: fores.score(X_test,y_test)

Out[237]: 0.92

In [238]: fores.estimators_

Out[238]:

[DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,

max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False,

random_state=1872583848, splitter='best'),

DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,

max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False,

random_state=794921487, splitter='best'),

DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,

max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False,

random_state=111352301, splitter='best'),

DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,

max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False,

random_state=1853453896, splitter='best'),

DecisionTreeClassifier(class_weight=None, criterion='gini', max_depth=None,

max_features='auto', max_leaf_nodes=None,

min_impurity_decrease=0.0, min_impurity_split=None,

min_samples_leaf=1, min_samples_split=2,

min_weight_fraction_leaf=0.0, presort=False,

random_state=213298710, splitter='best')]

GBDT,随机森林的更多相关文章

- ObjectT5:在线随机森林-Multi-Forest-A chameleon in track in

原文::Multi-Forest:A chameleon in tracking,CVPR2014 下的蛋...原文 使用随机森林的优势,在于可以使用GPU把每棵树分到一个流处理器里运行,容易并行化 ...

- 机器学习中的算法(1)-决策树模型组合之随机森林与GBDT

版权声明: 本文由LeftNotEasy发布于http://leftnoteasy.cnblogs.com, 本文可以被全部的转载或者部分使用,但请注明出处,如果有问题,请联系wheeleast@gm ...

- 机器学习中的算法——决策树模型组合之随机森林与GBDT

前言: 决策树这种算法有着很多良好的特性,比如说训练时间复杂度较低,预测的过程比较快速,模型容易展示(容易将得到的决策树做成图片展示出来)等.但是同时,单决策树又有一些不好的地方,比如说容易over- ...

- 决策树模型组合之(在线)随机森林与GBDT

前言: 决策树这种算法有着很多良好的特性,比如说训练时间复杂度较低,预测的过程比较快速,模型容易展示(容易将得到的决策树做成图片展示出来)等.但是同时, 单决策树又有一些不好的地方,比如说容易over ...

- 机器学习中的算法-决策树模型组合之随机森林与GBDT

机器学习中的算法(1)-决策树模型组合之随机森林与GBDT 版权声明: 本文由LeftNotEasy发布于http://leftnoteasy.cnblogs.com, 本文可以被全部的转载或者部分使 ...

- 随机森林与GBDT

前言: 决策树这种算法有着很多良好的特性,比如说训练时间复杂度较低,预测的过程比较快速,模型容易展示(容易将得到的决策树做成图片展示出来)等.但是同时,单决策树又有一些不好的地方,比如说容易over- ...

- 决策树模型组合之随机森林与GBDT

版权声明: 本文由LeftNotEasy发布于http://leftnoteasy.cnblogs.com, 本文可以被全部的转载或者部分使用,但请注明出处,如果有问题,请联系wheeleast@gm ...

- 随机森林和GBDT

1. 随机森林 Random Forest(随机森林)是Bagging的扩展变体,它在以决策树 为基学习器构建Bagging集成的基础上,进一步在决策树的训练过程中引入了随机特征选择,因此可以概括RF ...

- 随机森林RF、XGBoost、GBDT和LightGBM的原理和区别

目录 1.基本知识点介绍 2.各个算法原理 2.1 随机森林 -- RandomForest 2.2 XGBoost算法 2.3 GBDT算法(Gradient Boosting Decision T ...

随机推荐

- Jekins持续集成,gitlab代码仓库

http://blog.csdn.net/john_cdy/article/details/7738393

- 福州三中基训day2

今天讲的BFS,不得不说,福建三中订的旅馆是真的劲,早餐极棒!!! 早上和yty大神边交流边听zld犇犇讲BFS,嘛,今天讲的比较基础,而且BFS也很好懂(三天弄过一道青铜莲花池的我好像没资格说),除 ...

- RPD Volume 168 Issue 4 March 2016 评论6

Natural variation of ambient dose rate in the air of Izu-Oshima Island after the Fukushima Daiichi N ...

- 【分块】bzoj1901 Zju2112 Dynamic Rankings

区间k大,分块大法好,每个区间内存储一个有序表. 二分答案,统计在区间内小于二分到的答案的值的个数,在每个整块内二分.零散的暴力即可. 还是说∵有二分操作,∴每个块的大小定为sqrt(n*log2(n ...

- 【费用流】【Next Array】费用流模板(spfa版)

#include<cstdio> #include<algorithm> #include<cstring> #include<queue> using ...

- Problem I: 打印金字塔

#include<stdio.h> int main() { int n,i,j,k; scanf("%d",&n); ;i<=n;i++) { ;j&l ...

- Missing iOS Distribution signing identity解决方案

相信很多朋友跟我遇到相同的问题,之前iOS发布打包的证书没问题,现在莫名其妙的总是打包失败,并且报如下错误 第一反应,是不是证书被别人搞乱了.于是去Developer Member Center,把所 ...

- microsoft visual studio遇到了问题,需要关闭

http://www.microsoft.com/zh-cn/download/confirmation.aspx?id=13821 装上这个补丁: WindowsXP-KB971513-x86-CH ...

- 误删除Ubuntu 14桌面文件夹“~/桌面”,如何恢复?

一不小心把当前用户的桌面文件夹“/home/wenjianbao/桌面”删了,导致系统把“/home/wenjianbao”当成桌面文件夹.结果,桌面上全是乱七八糟的文件/文件夹. 查看了下网络资料, ...

- Matlab中struct的用法

struct在matlab中是用来建立结构体数组的.通常有两种用法: s = struct('field1',{},'field2',{},...) 这是建立一个空的结构体,field1,field ...