Stylized Image Caption论文笔记

Neural Storyteller (Krios et al. 2015)

: NST breaks down the task into two steps, which first generate unstylish captions than apply style shift techniques to generate stylish descriptions.

SentiCap: Generating Image Descriptions with Sentiments (AAAI 2016)

代码和数据都有公布. (代码用的是比较老的框架,没有读。)

Supervised Image Caption

Style: Positive, Negtive

Datasets:

MSCOCO

SentiCap Dataset:作者自己收集的一个数据集 (数据量不大,Positive: 998 images/2873 captions for train, 673 images/2019 captions for test, Negtive: 997 images/2468 captions for train, 503 images/ 1509 captions for test) 3 positive and 3 negative captions per image

This is done in a caption re-writing task based upon objective captions from MSCOCO by asking AMT workers to choose among ANPs of the desired sentiment, and incorporate one or more of them into any one of the five existing captions.

Evaluation Metrics:

Automatic metrics: BLEU, ROUGEL, METEOR, CIDEr

Human evaluation

Model

Shortcomings: requires paired image-sentiment caption data, but also world-level supervison to emphsize the sentiment words(e.g., sentiment strengths of each word in the sentiment caption), which makes the approach very expensive and difficult to scale up.(StyleNet)

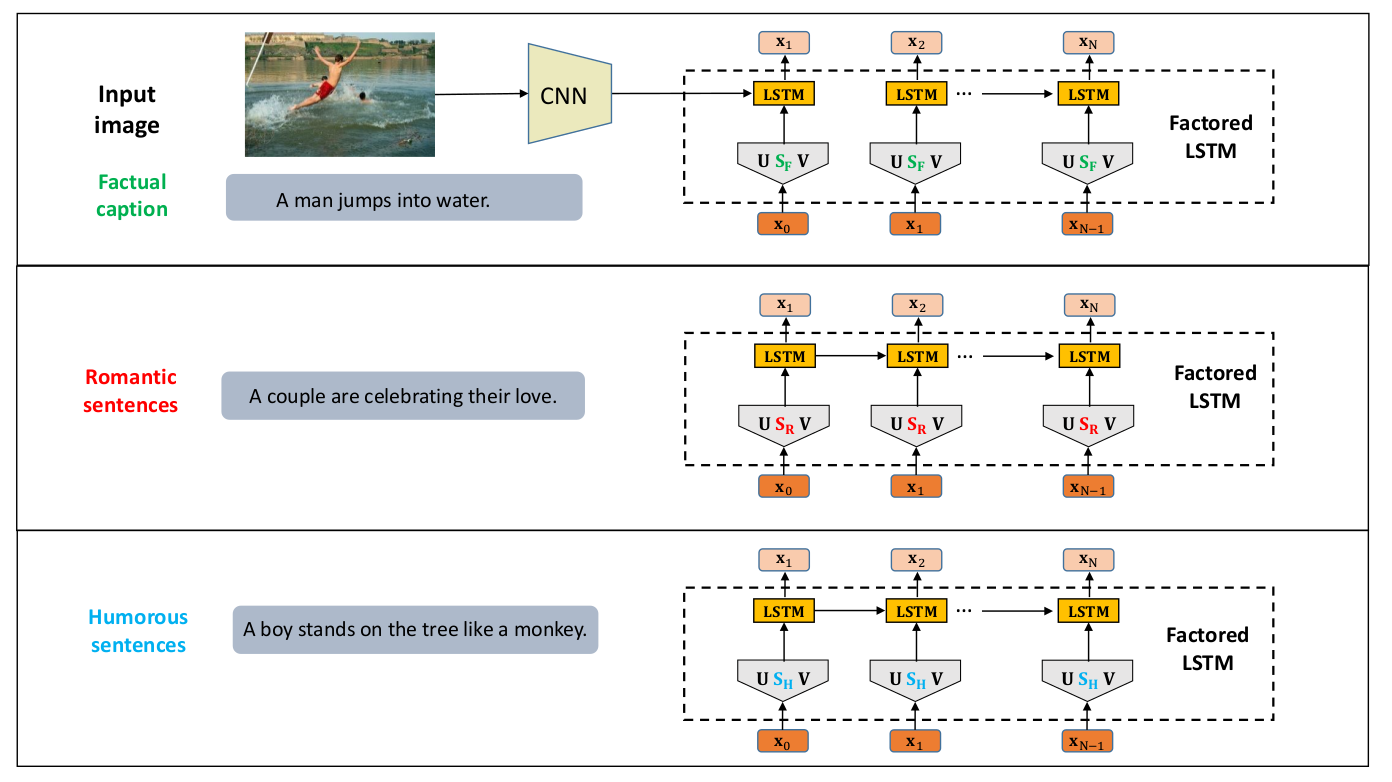

StyleNet: Generating Attractive Visual Captions with Styles (CVPR2017)

代码没有公布,有第三方Pytorch实现,数据集公布了FlickrStyle9K(1k测试数据没有公开)

Unsupervised(without using supervised style-specific image-caption paired data): factual image caption pairs + stylized language corpus(only text)

Produce attractive visual captions with styles only using monolingual stylized language corpus(without paired images) and standard factual image/video-caption pairs.

Style:Romantic, Humorous

Datasets:

FlickrStyle10K(built on Flickr 30K image caption dataset, show a standard factual caption for a image, to revise the caption to make it romantic or humorous)(这里虽然有image-stylized caption pairs,但训练的时候作者并没有用这些成对的数据,而是用image-factual caption pairs + stylized text corpora,在evaluate的时候会用到image-stylized caption pairs,用作Ground Truth.)

Evaluation Metrics:

Automatic Metrics:BLEU, METEOR, ROUGE, CIDEr

Human evaluation

Model

关键点:

1.将LSTM中参数Wx拆分成3项,Ux,Sx,Vx,模型中所有的LSTM网络除S之外的参数都是共享的,参数S用来记忆特定的风格。

2.类似于Multi-task sequence to sequence training. First task, train to generate factual captions given the paired images,更新所有的参数. Second, factored LSTM is trained as a language model,只更新SR或者SH.

“Factual” and “Emotional”: Stylized Image Captioning with Adaptive Learning and Attention (ECCV 2018)

Style-factual LSTM block: Sx, Sh and gxt, ght

Two-stage learning strategy

MLE loss + KL divergence

Image Captioning at Will: A Versatile Scheme for Effectively Injecting Sentiments into Image Descriptions (Preprint 30 Jan 2018)

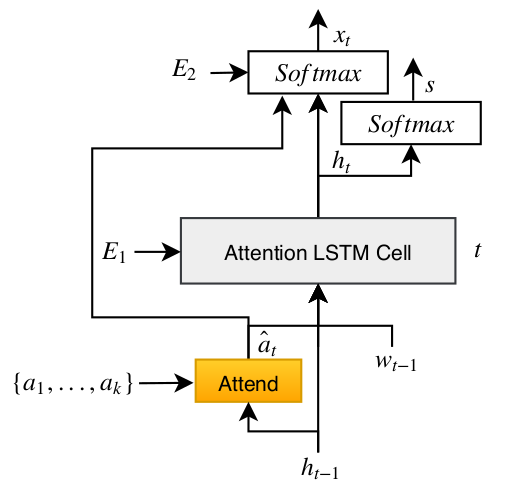

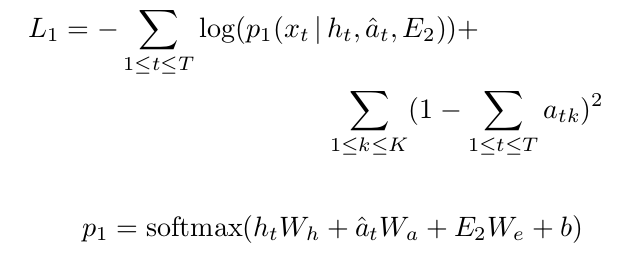

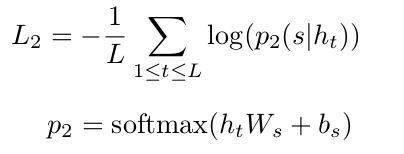

SENTI-ATTEND: Image Captioning using Sentiment and Attention (Preprint 24 Nov 2018)

这篇文章可以看作是SentiCap的后续工作,采用的是Supervised的方式。

Datasets

MS COCO: 用于生成generic image captions

SentiCap dataset:

Evaluation Metrics

standard image caption evaluation metrics: BLEU, ROUGE-L, METEOR, CIDEr, SPICE

Entropy

Model

损失函数:

文章没有公布代码,实验部分对比的是SentiCap以及Image Caption at Will

疑问: SentiCap数据集很小,利用image-caption pairs来Cross entropy loss训练会有效果吗???

LSTM多加了E1和E2两个输入,每一步LSTM拿ht来预测s这个操作在SentiCap里也有,然后文章一直处于PrePrint状态。

SemStyle: Learning to Generate Stylised Image Captions using Unaligned Text (CVPR 2018)

公布了部分代码和数据

Style: Story

Learns on existing image caption datasets with only factual descriptions + a large set of styled texts without aligned images

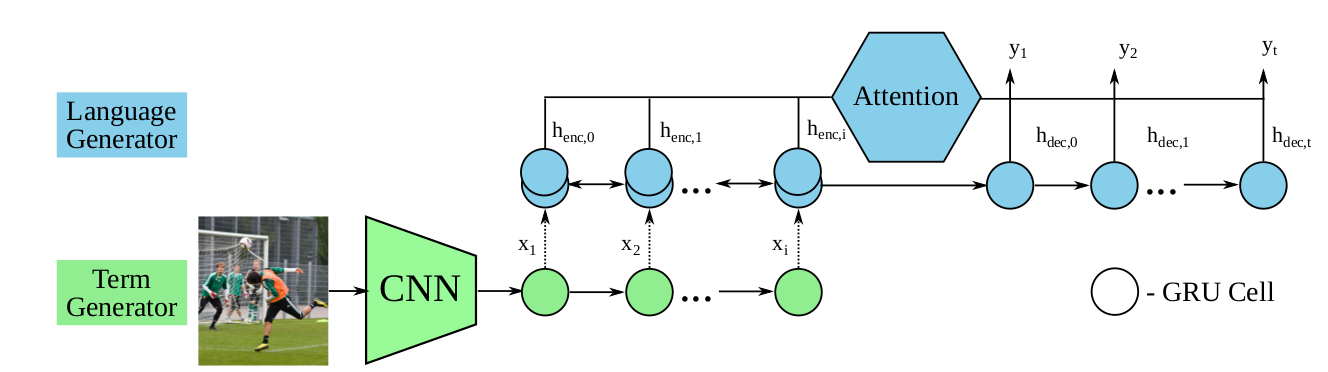

Two-stage training strategy for the term generator and language generator

Dataset:

Descriptive Image Captions: MSCOCO

The Styled Text: bookcorpus

Evaluation:

Automatic relevance metrics: Widely-used captioning metrics (BLEU, METEOR, CIDEr, SPICE)

Automatic style metrics: 作者自己提出的LM(4-gram model)、GRULM(GRU language model)、CLF(binary classifier)

Human evaluations of relevance and style

Unsupervised Stylish Image Description Generation via Domain Layer Norm (AAAI 2019)

Unsupervised Image Caption

Four different styles: fairy tale, romance, humor, country song lyrics(lyrics)

Our model is jointly trained with a paired unstylish image description corpus(source domain) and a monolingual corpus of the specific style(target domain)

代码和数据集均未公开

Datasets:

Source Domain:VG-Para(Krause et al. 2017)

Target: BookCorpus(humor and romance), 作者自己收集的country song lyrics and fairy tale

Evaluation Metircs:

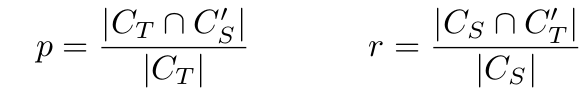

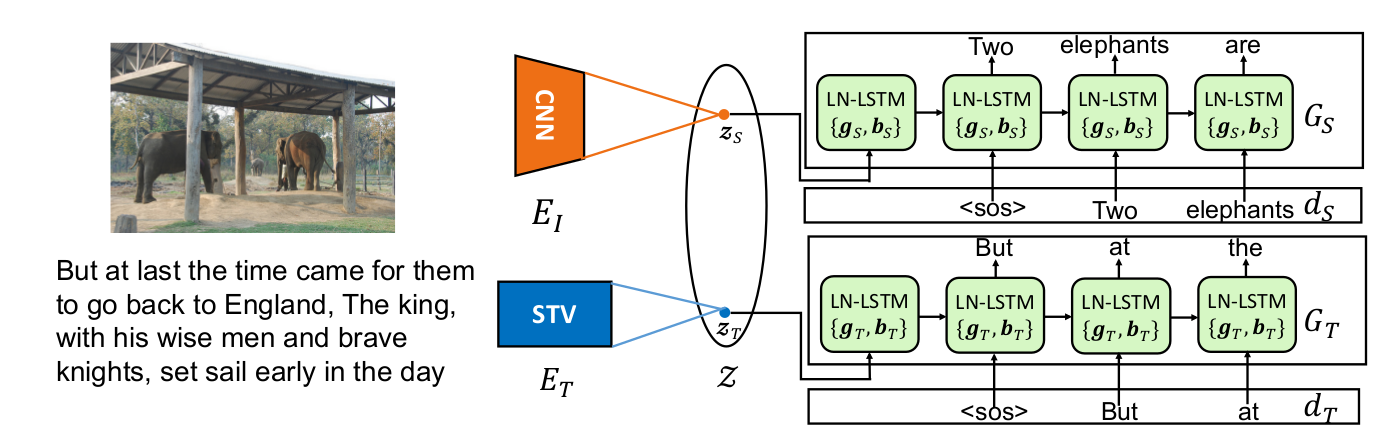

Metrics of Semantic Relevance: 作者自己提出的p和r,SPICE

Metrics of Stylishness: transfer accuracy

Human evaluation

Approach Key Point

EI和ET分别将图片和目标风格的描述映射到同一个隐空间,Gs用来生成非风格化的描述,即Source domain里的句子,EI和GS组合起来就是传统的Image Caption的Encoder-Decoder模型,训练数据是有监督的Image-Caption对。GT用来生成风格化的描述,ET将风格化的句子编码到隐空间Z,GT则根据隐空间内的编码zT重新生成风格化的句子(Reconstruction),训练数据是风格化的句子。模型训练完成之后,将EI和GT组合,就可以生成风格化的图像描述。

关键点1:作者假设存在一个隐空间Z使得可以将图片, 不带风格的源描述以及带风格的目标描述映射到这个空间。

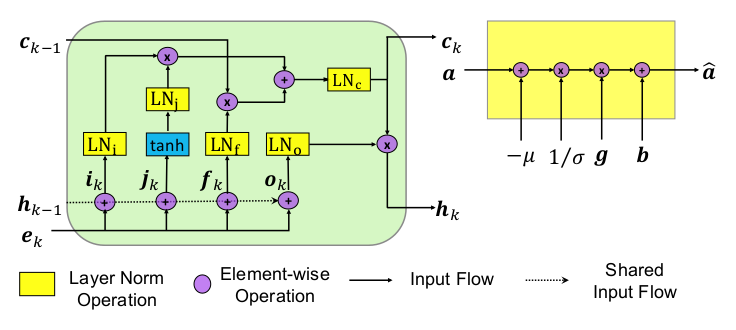

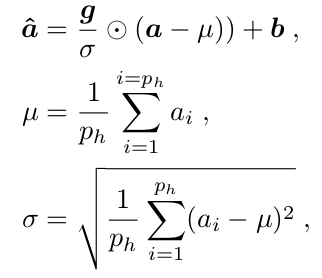

关键点2:GS和GT只在层规范化的参数不同,其他参数是共享的。即GS和GT的LN-LSTM是共享的,其中只有参数{gS,bS}和{gT,bT}不同,作者将这种机制称为Domain Layer Norm(DLN)。层规范化操作(layer norm operation)作用在LSTM的每一个Gate(input gate,forget gate, output gate)上。

Stylized Image Caption论文笔记的更多相关文章

- Multimodal —— 看图说话(Image Caption)任务的论文笔记(一)评价指标和NIC模型

看图说话(Image Caption)任务是结合CV和NLP两个领域的一种比较综合的任务,Image Caption模型的输入是一幅图像,输出是对该幅图像进行描述的一段文字.这项任务要求模型可以识别图 ...

- 论文笔记:Towards Diverse and Natural Image Descriptions via a Conditional GAN

论文笔记:Towards Diverse and Natural Image Descriptions via a Conditional GAN ICCV 2017 Paper: http://op ...

- 论文笔记之:Natural Language Object Retrieval

论文笔记之:Natural Language Object Retrieval 2017-07-10 16:50:43 本文旨在通过给定的文本描述,在图像中去实现物体的定位和识别.大致流程图如下 ...

- Deep Learning论文笔记之(四)CNN卷积神经网络推导和实现(转)

Deep Learning论文笔记之(四)CNN卷积神经网络推导和实现 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些论文, ...

- 论文笔记之:Visual Tracking with Fully Convolutional Networks

论文笔记之:Visual Tracking with Fully Convolutional Networks ICCV 2015 CUHK 本文利用 FCN 来做跟踪问题,但开篇就提到并非将其看做 ...

- Deep Learning论文笔记之(八)Deep Learning最新综述

Deep Learning论文笔记之(八)Deep Learning最新综述 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些论文,但老感觉看完 ...

- Twitter 新一代流处理利器——Heron 论文笔记之Heron架构

Twitter 新一代流处理利器--Heron 论文笔记之Heron架构 标签(空格分隔): Streaming-process realtime-process Heron Architecture ...

- Deep Learning论文笔记之(六)Multi-Stage多级架构分析

Deep Learning论文笔记之(六)Multi-Stage多级架构分析 zouxy09@qq.com http://blog.csdn.net/zouxy09 自己平时看了一些 ...

- 论文笔记(1):Deep Learning.

论文笔记1:Deep Learning 2015年,深度学习三位大牛(Yann LeCun,Yoshua Bengio & Geoffrey Hinton),合作在Nature ...

随机推荐

- linux之架构图和八台服务器

(1) (2)

- python 编码和解码

- GitOps:Kubernetes多集群环境下的高效CICD实践

为了解决传统应用升级缓慢.架构臃肿.不能快速迭代.故障不能快速定位.问题无法快速解决等问题,云原生这一概念横空出世.云原生可以改进应用开发的效率,改变企业的组织结构,甚至会在文化层面上直接影响一个公司 ...

- 下载mysql document

wget -b -r -np -L -p https://dev.mysql.com/doc/refman/5.6/en/ 在下载时.有用到外部域名的图片或连接.如果需要同时下载就要用-H参数. wg ...

- 22-2 模板语言的进阶和fontawesome字体的使用

一 fontfawesome字体的使用 http://fontawesome.dashgame.com/ 官网 1 下载 2 放到你的项目下面 3 html导入这个目录 实例: class最前面的f ...

- Streamy 解决办法

- @atcoder - ARC066F@ Contest with Drinks Hard

目录 @description@ @solution@ @accepted code@ @details@ @description@ 给定序列 T1, T2, ... TN,你可以从中选择一些 Ti ...

- url地址栏参数<==>对象(将对象转换成地址栏的参数以及将地址栏的参数转换为对象)的实用函数

/** * @author web得胜 * @param {Object} obj 需要拼接的参数对象 * @return {String} * */ function obj2qs(obj) { i ...

- selenium webdriver学习(八)------------如何操作select下拉框(转)

selenium webdriver学习(八)------------如何操作select下拉框 博客分类: Selenium-webdriver 下面我们来看一下selenium webdriv ...

- kuangbin专题-连通图A - Network of Schools

这道题的意思是就是 问题 1:初始至少需要向多少个学校发放软件,使得网络内所有的学校最终都能得到软件. 2:至少需要添加几条传输线路(边),使任意向一个学校发放软件后,经过若干次传送,网络内所有的学校 ...