Collecting log4j logs into Kafka with Flume

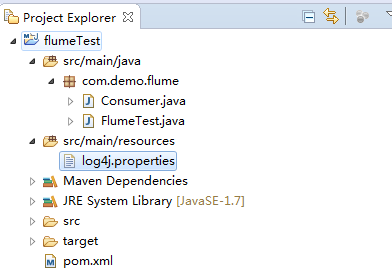

A simple test project:

1. Create a new Java project with the following structure:

The test class FlumeTest:

    package com.demo.flume;

    import org.apache.log4j.Logger;

    public class FlumeTest {

        private static final Logger LOGGER = Logger.getLogger(FlumeTest.class);

        public static void main(String[] args) throws InterruptedException {
            // Emit one INFO message per second; log4j routes each one to the flume appender.
            for (int i = 20; i < 100; i++) {
                LOGGER.info("Info [" + i + "]");
                Thread.sleep(1000);
            }
        }
    }

The Consumer class, which listens to Kafka and prints the messages it receives:

    package com.demo.flume;

    import java.util.Arrays;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    /**
     * INFO: info
     * User: zhaokai
     * Date: 2017/3/17
     * Version: 1.0
     * History: <p>Record any modifications here</p>
     */
    public class Consumer {

        public static void main(String[] args) {
            System.out.println("begin consumer");
            connectionKafka();
            // Not reached in practice: connectionKafka() polls forever.
            System.out.println("finish consumer");
        }

        @SuppressWarnings("resource")
        public static void connectionKafka() {
            Properties props = new Properties();
            props.put("bootstrap.servers", "192.168.1.163:9092");
            props.put("group.id", "testConsumer");
            props.put("enable.auto.commit", "true");
            props.put("auto.commit.interval.ms", "1000");
            props.put("session.timeout.ms", "30000");
            props.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            props.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");

            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(props);
            // Subscribe to the topic that the Flume Kafka sink writes to.
            consumer.subscribe(Arrays.asList("flumeTest"));

            while (true) {
                // Wait up to 100 ms for new records.
                ConsumerRecords<String, String> records = consumer.poll(100);
                try {
                    Thread.sleep(2000);
                } catch (InterruptedException e) {
                    e.printStackTrace();
                }
                for (ConsumerRecord<String, String> record : records) {
                    // %n ends each record so that they do not run together on one line.
                    System.out.printf("===================offset = %d, key = %s, value = %s%n",
                            record.offset(), record.key(), record.value());
                }
            }
        }
    }

The log4j configuration file:

    log4j.rootLogger=INFO,console

    # Logs from package com.demo.flume are also sent to the flume appender.
    log4j.logger.com.demo.flume=info,flume

    log4j.appender.flume = org.apache.flume.clients.log4jappender.Log4jAppender
    log4j.appender.flume.Hostname = 192.168.1.163
    log4j.appender.flume.Port = 4141
    log4j.appender.flume.UnsafeMode = true
    log4j.appender.flume.layout=org.apache.log4j.PatternLayout
    log4j.appender.flume.layout.ConversionPattern=%d{yyyy-MM-dd HH:mm:ss} %p [%c:%L] - %m%n

    # appender console
    log4j.appender.console=org.apache.log4j.ConsoleAppender
    log4j.appender.console.target=System.out
    log4j.appender.console.layout=org.apache.log4j.PatternLayout
    log4j.appender.console.layout.ConversionPattern=%d [%-5p] [%t] - [%l] %m%n

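Note: Hostname is the IP address of the server where Flume is installed, and Port must match the port that the Flume source listens on (configured in step 2).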
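The flume appender's ConversionPattern determines the shape of each line the appender formats. As a minimal sketch, assuming the Kafka message value carries exactly that formatted line, a consumer could split it back into fields as shown here. `LogLineParser` and `LogLine` are hypothetical names introduced only for this illustration; they are not part of Flume, log4j, or Kafka.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

/** Hypothetical helper for lines shaped like "%d{yyyy-MM-dd HH:mm:ss} %p [%c:%L] - %m%n". */
final class LogLineParser {

    /** The fields of one parsed log line. */
    static final class LogLine {
        final String timestamp;
        final String level;
        final String logger;
        final String line;      // "?" when log4j could not determine the line number
        final String message;

        LogLine(String timestamp, String level, String logger, String line, String message) {
            this.timestamp = timestamp;
            this.level = level;
            this.logger = logger;
            this.line = line;
            this.message = message;
        }
    }

    // Groups: timestamp, level, logger name, line number, message.
    private static final Pattern LINE = Pattern.compile(
            "^(\\d{4}-\\d{2}-\\d{2} \\d{2}:\\d{2}:\\d{2}) (\\w+) \\[([^:\\]]+):(\\d+|\\?)\\] - (.*)$",
            Pattern.DOTALL);

    /** Returns the parsed line, or an empty Optional if the text does not match the pattern. */
    static Optional<LogLine> parse(String raw) {
        // Drop the trailing line separator that %n appends.
        String text = raw.replaceAll("[\\r\\n]+$", "");
        Matcher m = LINE.matcher(text);
        if (!m.matches()) {
            return Optional.empty();
        }
        return Optional.of(new LogLine(m.group(1), m.group(2), m.group(3), m.group(4), m.group(5)));
    }

    public static void main(String[] args) {
        // Sample line written by hand to match the pattern; not captured from a real run.
        String sample = "2017-03-17 10:15:30 INFO [com.demo.flume.FlumeTest:12] - Info [20]\n";
        parse(sample).ifPresent(l -> System.out.println(l.level + " " + l.logger + " " + l.message));
    }
}
```

Returning an Optional lets the consumer skip values that do not match instead of failing on them, which is useful if other producers write to the same topic.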

Add the following dependencies to pom.xml:

    <dependencies>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>1.7.10</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flume</groupId>
            <artifactId>flume-ng-core</artifactId>
            <version>1.5.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flume.flume-ng-clients</groupId>
            <artifactId>flume-ng-log4jappender</artifactId>
            <version>1.5.0</version>
        </dependency>
        <dependency>
            <groupId>junit</groupId>
            <artifactId>junit</artifactId>
            <version>4.12</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-clients</artifactId>
            <version>0.10.2.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka_2.10</artifactId>
            <version>0.10.2.0</version>
        </dependency>
        <dependency>
            <groupId>org.apache.kafka</groupId>
            <artifactId>kafka-log4j-appender</artifactId>
            <version>0.10.2.0</version>
        </dependency>
        <dependency>
            <groupId>com.google.guava</groupId>
            <artifactId>guava</artifactId>
            <version>18.0</version>
        </dependency>
    </dependencies>

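2. Configure Flume

Under flume/conf:

Create a file named avro.conf with the following content:

Any sink type can be used, of course; here I use Kafka. See the official Flume documentation for the available sink types.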

    a1.sources=source1
    a1.channels=channel1
    a1.sinks=sink1

    a1.sources.source1.type=avro
    a1.sources.source1.bind=192.168.1.163
    a1.sources.source1.port=4141
    a1.sources.source1.channels = channel1

    a1.channels.channel1.type=memory
    a1.channels.channel1.capacity=10000
    a1.channels.channel1.transactionCapacity=1000
    a1.channels.channel1.keep-alive=30

    a1.sinks.sink1.type = org.apache.flume.sink.kafka.KafkaSink
    a1.sinks.sink1.topic = flumeTest
    a1.sinks.sink1.brokerList = 192.168.1.163:9092
    a1.sinks.sink1.requiredAcks = 0
    a1.sinks.sink1.sink.batchSize = 20
    a1.sinks.sink1.channel = channel1

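With this configuration, the Flume agent runs on 192.168.1.163 and listens on port 4141. log4j only needs to send its logs to port 4141 on 192.168.1.163 for them to reach Flume. Flume collects the data arriving on that port, converts it into Kafka events, and publishes them to the flumeTest topic on the Kafka cluster.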
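Because the appender's Hostname and Port have to agree with the avro source's bind and port, a small check can catch a mismatch before anything is started. The following is a minimal sketch, assuming both files can be read with `java.util.Properties` and use the key names from this article. `EndpointCheck` is a hypothetical helper, not part of Flume or log4j, and treating a bind address of 0.0.0.0 as matching any host is an assumption of this sketch.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

/** Hypothetical helper: compares the log4j appender endpoint with the Flume avro source endpoint. */
final class EndpointCheck {

    /** Parses properties-style text such as the log4j and Flume configurations. */
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    /** Returns one message per mismatch; an empty list means the two endpoints agree. */
    static List<String> mismatches(Properties log4j, Properties flume) {
        List<String> problems = new ArrayList<>();
        String host = trimmed(log4j.getProperty("log4j.appender.flume.Hostname"));
        String port = trimmed(log4j.getProperty("log4j.appender.flume.Port"));
        String bind = trimmed(flume.getProperty("a1.sources.source1.bind"));
        String sourcePort = trimmed(flume.getProperty("a1.sources.source1.port"));

        // Assumption of this sketch: a source bound to 0.0.0.0 is reachable under any host address.
        boolean hostOk = host != null && bind != null && (bind.equals("0.0.0.0") || bind.equals(host));
        if (!hostOk) {
            problems.add("host: appender=" + host + ", source=" + bind);
        }
        if (port == null || !port.equals(sourcePort)) {
            problems.add("port: appender=" + port + ", source=" + sourcePort);
        }
        return problems;
    }

    private static String trimmed(String value) {
        return value == null ? null : value.trim();
    }

    public static void main(String[] args) {
        Properties log4j = parse(
                "log4j.appender.flume.Hostname = 192.168.1.163\n"
              + "log4j.appender.flume.Port = 4141\n");
        Properties flume = parse(
                "a1.sources.source1.bind=192.168.1.163\n"
              + "a1.sources.source1.port=4141\n");
        System.out.println(mismatches(log4j, flume)); // prints []
    }
}
```

In a real project the two strings would be read from log4j.properties and conf/avro.conf; they are inlined here only to keep the sketch self-contained.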

3. Start Flume and test

- Start Flume: bin/flume-ng agent --conf conf --conf-file conf/avro.conf --name a1 -Dflume.root.logger=INFO,console

- Run the main method of the FlumeTest class to emit log messages

- Run the main method of Consumer to print the data received from Kafka
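If the consumer prints nothing, one quick thing to rule out is that the Flume source port or the Kafka broker port cannot be reached from the machine running the test. The following is a minimal sketch that uses only the JDK. `PortProbe` is a hypothetical helper, and the addresses are the ones used in this article; an open port only shows that something is listening there, not that the pipeline is configured correctly.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

/** Hypothetical helper: checks whether a TCP port accepts connections. */
final class PortProbe {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMillis. */
    static boolean isOpen(String host, int port, int timeoutMillis) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMillis);
            return true;
        } catch (IOException e) {
            // Connection refused, unreachable host, or timeout.
            return false;
        }
    }

    public static void main(String[] args) {
        // Addresses taken from the configuration in this article.
        System.out.println("Flume avro source (4141): " + isOpen("192.168.1.163", 4141, 2000));
        System.out.println("Kafka broker (9092):      " + isOpen("192.168.1.163", 9092, 2000));
    }
}
```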
