Elasticsearch (3): Tokenizing Text (Analysis) with an Analyzer

## Analysis and Analyzer

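* Analysis (text analysis) is the process of converting full text into a series of terms; it is also called tokenization.

* Analysis is carried out by an Analyzer, the component dedicated to tokenization. You can use one of Elasticsearch's built-in analyzers or define a custom one as needed.

* Analysis does not only happen when data is written and its text is converted into terms. When a query is matched, the query string must be analyzed with the same analyzer.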
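To see why the query side needs the same analyzer, here is a minimal Python sketch of a toy inverted index. It is not how Elasticsearch is implemented: the `analyze`, `build_index`, and `search` helpers and the two sample documents are made up for illustration, and the toy analyzer only splits on whitespace and lowercases.

```python
from collections import defaultdict


def analyze(text):
    """Toy analyzer (assumption): split on whitespace and lowercase each term."""
    return [term.lower() for term in text.split()]


def build_index(docs, analyzer):
    """Map each analyzed term to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in docs.items():
        for term in analyzer(text):
            index[term].add(doc_id)
    return index


def search(index, query, analyzer):
    """Return ids of documents that contain every analyzed query term."""
    postings = [index.get(term, set()) for term in analyzer(query)]
    return set.intersection(*postings) if postings else set()


docs = {1: "Quick brown foxes", 2: "Lazy dogs"}
index = build_index(docs, analyze)

# Same analyzer on both sides: "QUICK" is lowercased and matches document 1.
same_analyzer_hits = search(index, "QUICK", analyze)
# Query left unanalyzed: "QUICK" never equals the indexed term "quick".
raw_query_hits = search(index, "QUICK", lambda q: q.split())
print(same_analyzer_hits, raw_query_hits)
```

With the analyzer applied on both sides the query finds document 1; with the raw query it finds nothing, because the index only holds the lowercased term.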

## Components of an Analyzer

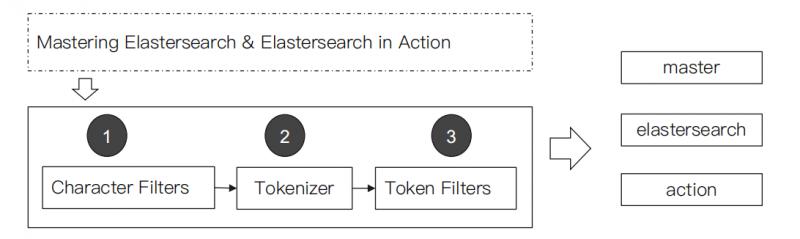

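An Analyzer is made up of three parts, applied in this order:

- Character Filters: preprocess the raw text, for example by stripping HTML tags.

- Tokenizer: splits the text into terms according to a set of rules.

- Token Filters: post-process the terms produced by the tokenizer, for example by lowercasing them, removing stop words, or adding synonyms.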
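The three stages can be pictured as a small pipeline. The sketch below is a simplified Python imitation, not Elasticsearch code: the function names, the regular expressions, and the three-word stop list are assumptions chosen to mirror the examples in the list above.

```python
import re


def strip_html(text):
    """Character filter: replace anything that looks like an HTML tag with a space."""
    return re.sub(r"<[^>]+>", " ", text)


def word_tokenizer(text):
    """Tokenizer: emit each run of ASCII letters or digits as one token."""
    return re.findall(r"[A-Za-z0-9]+", text)


def lowercase(tokens):
    """Token filter: lowercase every token."""
    return [t.lower() for t in tokens]


def remove_stop_words(tokens, stop_words=frozenset({"a", "is", "the"})):
    """Token filter: drop tokens found in the (assumed) stop list."""
    return [t for t in tokens if t not in stop_words]


def analyze(text):
    """Run character filter, then tokenizer, then token filters in order."""
    text = strip_html(text)
    tokens = word_tokenizer(text)
    for token_filter in (lowercase, remove_stop_words):
        tokens = token_filter(tokens)
    return tokens


tokens = analyze("<p>The Quick brown-foxes</p>")
print(tokens)  # ['quick', 'brown', 'foxes']
```

The order matters here: the stop-word filter runs after lowercasing, so `The` is removed even though the stop list only contains `the`.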

## Elasticsearch's Built-in Analyzers

- Standard Analyzer: the default analyzer; splits text on word boundaries and lowercases the terms.

#standard
GET _analyze
{
  "analyzer": "standard",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result: "Quick" is lowercased; "brown-foxes" is split into "brown" and "foxes".

{
  "tokens" : [
    { "token" : "2", "start_offset" : 0, "end_offset" : 1, "type" : "<NUM>", "position" : 0 },
    { "token" : "running", "start_offset" : 2, "end_offset" : 9, "type" : "<ALPHANUM>", "position" : 1 },
    { "token" : "quick", "start_offset" : 10, "end_offset" : 15, "type" : "<ALPHANUM>", "position" : 2 },  # lowercased
    { "token" : "brown", "start_offset" : 16, "end_offset" : 21, "type" : "<ALPHANUM>", "position" : 3 },
    { "token" : "foxes", "start_offset" : 22, "end_offset" : 27, "type" : "<ALPHANUM>", "position" : 4 },
    { "token" : "leap", "start_offset" : 28, "end_offset" : 32, "type" : "<ALPHANUM>", "position" : 5 },
    { "token" : "over", "start_offset" : 33, "end_offset" : 37, "type" : "<ALPHANUM>", "position" : 6 },
    { "token" : "lazy", "start_offset" : 38, "end_offset" : 42, "type" : "<ALPHANUM>", "position" : 7 },
    { "token" : "dogs", "start_offset" : 43, "end_offset" : 47, "type" : "<ALPHANUM>", "position" : 8 },
    { "token" : "in", "start_offset" : 48, "end_offset" : 50, "type" : "<ALPHANUM>", "position" : 9 },
    { "token" : "the", "start_offset" : 51, "end_offset" : 54, "type" : "<ALPHANUM>", "position" : 10 },
    { "token" : "summer", "start_offset" : 55, "end_offset" : 61, "type" : "<ALPHANUM>", "position" : 11 },
    { "token" : "evening", "start_offset" : 62, "end_offset" : 69, "type" : "<ALPHANUM>", "position" : 12 }
  ]
}

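- Simple Analyzer: splits on any non-letter character (digits and symbols are dropped) and lowercases the terms.

#simple
GET _analyze
{
  "analyzer": "simple",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result: the digit "2" is dropped; "Quick" is lowercased; "brown-foxes" is split into "brown" and "foxes".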

{
  "tokens" : [
    { "token" : "running", "start_offset" : 2, "end_offset" : 9, "type" : "word", "position" : 0 },
    { "token" : "quick", "start_offset" : 10, "end_offset" : 15, "type" : "word", "position" : 1 },
    { "token" : "brown", "start_offset" : 16, "end_offset" : 21, "type" : "word", "position" : 2 },
    { "token" : "foxes", "start_offset" : 22, "end_offset" : 27, "type" : "word", "position" : 3 },
    { "token" : "leap", "start_offset" : 28, "end_offset" : 32, "type" : "word", "position" : 4 },
    { "token" : "over", "start_offset" : 33, "end_offset" : 37, "type" : "word", "position" : 5 },
    { "token" : "lazy", "start_offset" : 38, "end_offset" : 42, "type" : "word", "position" : 6 },
    { "token" : "dogs", "start_offset" : 43, "end_offset" : 47, "type" : "word", "position" : 7 },
    { "token" : "in", "start_offset" : 48, "end_offset" : 50, "type" : "word", "position" : 8 },
    { "token" : "the", "start_offset" : 51, "end_offset" : 54, "type" : "word", "position" : 9 },
    { "token" : "summer", "start_offset" : 55, "end_offset" : 61, "type" : "word", "position" : 10 },
    { "token" : "evening", "start_offset" : 62, "end_offset" : 69, "type" : "word", "position" : 11 }
  ]
}

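- Stop Analyzer: behaves like the Simple Analyzer and additionally removes stop words such as is, a, and the.

#stop
GET _analyze
{
  "analyzer": "stop",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result: "2", "in", and "the" are dropped; "Quick" is lowercased; "brown-foxes" is split into "brown" and "foxes".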

{
  "tokens" : [
    { "token" : "running", "start_offset" : 2, "end_offset" : 9, "type" : "word", "position" : 0 },
    { "token" : "quick", "start_offset" : 10, "end_offset" : 15, "type" : "word", "position" : 1 },
    { "token" : "brown", "start_offset" : 16, "end_offset" : 21, "type" : "word", "position" : 2 },
    { "token" : "foxes", "start_offset" : 22, "end_offset" : 27, "type" : "word", "position" : 3 },
    { "token" : "leap", "start_offset" : 28, "end_offset" : 32, "type" : "word", "position" : 4 },
    { "token" : "over", "start_offset" : 33, "end_offset" : 37, "type" : "word", "position" : 5 },
    { "token" : "lazy", "start_offset" : 38, "end_offset" : 42, "type" : "word", "position" : 6 },
    { "token" : "dogs", "start_offset" : 43, "end_offset" : 47, "type" : "word", "position" : 7 },
    { "token" : "summer", "start_offset" : 55, "end_offset" : 61, "type" : "word", "position" : 10 },
    { "token" : "evening", "start_offset" : 62, "end_offset" : 69, "type" : "word", "position" : 11 }
  ]
}
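One detail in the response above is that `summer` keeps position 10 even though only eight tokens come before it: the removed stop words still count toward positions. The following is a rough Python imitation of that behavior, assuming ASCII input and a stop list reduced to the two words that matter for this sentence (the analyzer's real default list is longer). The name `stop_like` is hypothetical.

```python
import re

TEXT = "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
STOP_WORDS = {"in", "the"}  # assumption: only the stop words present in TEXT


def stop_like(text):
    """Return (token, position) pairs; positions are numbered before stop words are dropped."""
    terms = [t.lower() for t in re.findall(r"[A-Za-z]+", text)]
    return [(t, pos) for pos, t in enumerate(terms) if t not in STOP_WORDS]


stop_tokens = stop_like(TEXT)
for token, position in stop_tokens:
    print(position, token)
```

The gap between positions 7 and 10 is what lets position-sensitive matching know that two terms were removed between `dogs` and `summer`.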

- Whitespace Analyzer: splits on whitespace only and does not lowercase.

#whitespace
GET _analyze
{
  "analyzer": "whitespace",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result: split on whitespace only; case and punctuation are kept.

{
  "tokens" : [
    { "token" : "2", "start_offset" : 0, "end_offset" : 1, "type" : "word", "position" : 0 },
    { "token" : "running", "start_offset" : 2, "end_offset" : 9, "type" : "word", "position" : 1 },
    { "token" : "Quick", "start_offset" : 10, "end_offset" : 15, "type" : "word", "position" : 2 },
    { "token" : "brown-foxes", "start_offset" : 16, "end_offset" : 27, "type" : "word", "position" : 3 },
    { "token" : "leap", "start_offset" : 28, "end_offset" : 32, "type" : "word", "position" : 4 },
    { "token" : "over", "start_offset" : 33, "end_offset" : 37, "type" : "word", "position" : 5 },
    { "token" : "lazy", "start_offset" : 38, "end_offset" : 42, "type" : "word", "position" : 6 },
    { "token" : "dogs", "start_offset" : 43, "end_offset" : 47, "type" : "word", "position" : 7 },
    { "token" : "in", "start_offset" : 48, "end_offset" : 50, "type" : "word", "position" : 8 },
    { "token" : "the", "start_offset" : 51, "end_offset" : 54, "type" : "word", "position" : 9 },
    { "token" : "summer", "start_offset" : 55, "end_offset" : 61, "type" : "word", "position" : 10 },
    { "token" : "evening.", "start_offset" : 62, "end_offset" : 70, "type" : "word", "position" : 11 }
  ]
}
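The Simple and Whitespace results above differ only in where they cut and whether they lowercase. A small Python approximation makes the two rules explicit. It assumes ASCII letters only (the real Simple Analyzer works on Unicode letters), and the names `simple_like` and `whitespace_like` are hypothetical.

```python
import re

TEXT = "2 running Quick brown-foxes leap over lazy dogs in the summer evening."


def simple_like(text):
    """Keep runs of ASCII letters as tokens, then lowercase them."""
    return [t.lower() for t in re.findall(r"[A-Za-z]+", text)]


def whitespace_like(text):
    """Split on whitespace only; case and punctuation are left untouched."""
    return text.split()


simple_tokens = simple_like(TEXT)
whitespace_tokens = whitespace_like(TEXT)
print(simple_tokens)
print(whitespace_tokens)
```

On this sentence both produce twelve tokens, but for different reasons: the first drops `2` and splits `brown-foxes` in two, while the second keeps `2`, `Quick`, `brown-foxes`, and `evening.` exactly as written.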

- Keyword Analyzer: does no tokenization; the entire input is emitted as a single term.

#keyword
GET _analyze
{
  "analyzer": "keyword",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result:

{
  "tokens" : [
    { "token" : "2 running Quick brown-foxes leap over lazy dogs in the summer evening.", "start_offset" : 0, "end_offset" : 70, "type" : "word", "position" : 0 }
  ]
}

- Pattern Analyzer: splits with a regular expression; the default is \W+ (any run of non-word characters), and the terms are lowercased.

#pattern
GET _analyze
{
  "analyzer": "pattern",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result:

{
  "tokens" : [
    { "token" : "2", "start_offset" : 0, "end_offset" : 1, "type" : "word", "position" : 0 },
    { "token" : "running", "start_offset" : 2, "end_offset" : 9, "type" : "word", "position" : 1 },
    { "token" : "quick", "start_offset" : 10, "end_offset" : 15, "type" : "word", "position" : 2 },
    { "token" : "brown", "start_offset" : 16, "end_offset" : 21, "type" : "word", "position" : 3 },
    { "token" : "foxes", "start_offset" : 22, "end_offset" : 27, "type" : "word", "position" : 4 },
    { "token" : "leap", "start_offset" : 28, "end_offset" : 32, "type" : "word", "position" : 5 },
    { "token" : "over", "start_offset" : 33, "end_offset" : 37, "type" : "word", "position" : 6 },
    { "token" : "lazy", "start_offset" : 38, "end_offset" : 42, "type" : "word", "position" : 7 },
    { "token" : "dogs", "start_offset" : 43, "end_offset" : 47, "type" : "word", "position" : 8 },
    { "token" : "in", "start_offset" : 48, "end_offset" : 50, "type" : "word", "position" : 9 },
    { "token" : "the", "start_offset" : 51, "end_offset" : 54, "type" : "word", "position" : 10 },
    { "token" : "summer", "start_offset" : 55, "end_offset" : 61, "type" : "word", "position" : 11 },
    { "token" : "evening", "start_offset" : 62, "end_offset" : 69, "type" : "word", "position" : 12 }
  ]
}
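The pattern-based split can be approximated in a few lines of Python. This is only a sketch under stated assumptions: Python's `re` treats `\W` as Unicode-aware for `str` input, which may differ from Elasticsearch's Java regex on non-ASCII text, and the name `pattern_like` and the comma example are hypothetical.

```python
import re

TEXT = "2 running Quick brown-foxes leap over lazy dogs in the summer evening."


def pattern_like(text, pattern=r"\W+"):
    """Split on the separator pattern, drop empty pieces, and lowercase the rest."""
    return [t.lower() for t in re.split(pattern, text) if t]


pattern_tokens = pattern_like(TEXT)
# A different separator: split a comma-separated value instead of on \W+.
comma_tokens = pattern_like("Red,Green,Blue", pattern=",")
print(pattern_tokens)
print(comma_tokens)
```

Unlike the letter-only rule of the Simple Analyzer, `\W+` treats digits as word characters, which is why `2` survives as a token here.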

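- Language Analyzers: analyzers for more than 30 common languages.

#english
GET _analyze
{
  "analyzer": "english",
  "text": "2 running Quick brown-foxes leap over lazy dogs in the summer evening."
}

# Result: "running" becomes "run", "Quick" becomes "quick", "brown-foxes" becomes "brown" and "fox", and "in" and "the" are removed, among other changes.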

{
  "tokens" : [
    { "token" : "2", "start_offset" : 0, "end_offset" : 1, "type" : "<NUM>", "position" : 0 },
    { "token" : "run", "start_offset" : 2, "end_offset" : 9, "type" : "<ALPHANUM>", "position" : 1 },
    { "token" : "quick", "start_offset" : 10, "end_offset" : 15, "type" : "<ALPHANUM>", "position" : 2 },
    { "token" : "brown", "start_offset" : 16, "end_offset" : 21, "type" : "<ALPHANUM>", "position" : 3 },
    { "token" : "fox", "start_offset" : 22, "end_offset" : 27, "type" : "<ALPHANUM>", "position" : 4 },
    { "token" : "leap", "start_offset" : 28, "end_offset" : 32, "type" : "<ALPHANUM>", "position" : 5 },
    { "token" : "over", "start_offset" : 33, "end_offset" : 37, "type" : "<ALPHANUM>", "position" : 6 },
    { "token" : "lazi", "start_offset" : 38, "end_offset" : 42, "type" : "<ALPHANUM>", "position" : 7 },
    { "token" : "dog", "start_offset" : 43, "end_offset" : 47, "type" : "<ALPHANUM>", "position" : 8 },
    { "token" : "summer", "start_offset" : 55, "end_offset" : 61, "type" : "<ALPHANUM>", "position" : 11 },
    { "token" : "even", "start_offset" : 62, "end_offset" : 69, "type" : "<ALPHANUM>", "position" : 12 }
  ]
}

- Custom Analyzer: a user-defined analyzer.

# Requires the analysis-icu plugin
POST _analyze
{
  "analyzer": "icu_analyzer",
  "text": "他说的确实在理"
}

# Response

{
  "tokens" : [
    { "token" : "他", "start_offset" : 0, "end_offset" : 1, "type" : "<IDEOGRAPHIC>", "position" : 0 },
    { "token" : "说的", "start_offset" : 1, "end_offset" : 3, "type" : "<IDEOGRAPHIC>", "position" : 1 },
    { "token" : "确实", "start_offset" : 3, "end_offset" : 5, "type" : "<IDEOGRAPHIC>", "position" : 2 },
    { "token" : "在", "start_offset" : 5, "end_offset" : 6, "type" : "<IDEOGRAPHIC>", "position" : 3 },
    { "token" : "理", "start_offset" : 6, "end_offset" : 7, "type" : "<IDEOGRAPHIC>", "position" : 4 }
  ]
}

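For comparison, the same Chinese text analyzed with the Standard Analyzer, which emits one term per character:

POST _analyze
{
  "analyzer": "standard",
  "text": "他说的确实在理"
}

# Response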

{
  "tokens" : [
    { "token" : "他", "start_offset" : 0, "end_offset" : 1, "type" : "<IDEOGRAPHIC>", "position" : 0 },
    { "token" : "说", "start_offset" : 1, "end_offset" : 2, "type" : "<IDEOGRAPHIC>", "position" : 1 },
    { "token" : "的", "start_offset" : 2, "end_offset" : 3, "type" : "<IDEOGRAPHIC>", "position" : 2 },
    { "token" : "确", "start_offset" : 3, "end_offset" : 4, "type" : "<IDEOGRAPHIC>", "position" : 3 },
    { "token" : "实", "start_offset" : 4, "end_offset" : 5, "type" : "<IDEOGRAPHIC>", "position" : 4 },
    { "token" : "在", "start_offset" : 5, "end_offset" : 6, "type" : "<IDEOGRAPHIC>", "position" : 5 },
    { "token" : "理", "start_offset" : 6, "end_offset" : 7, "type" : "<IDEOGRAPHIC>", "position" : 6 }
  ]
}
