使用R语言和XML包抓取网页数据-Scraping data from web pages in R with XML package

In the last years a lot of data has been released publicly in different formats, but sometimes the data we're interested in are still inside the HTML of a web page: let's see how to get those data.

One of the existing packages for doing this job is the XML package. This package allows us to read and create XML and HTML documents; among the many features, there's a function called readHTMLTable() that analyze the parsed HTML and returns the tables present in the page. The details of the package are available in the official documentation of the package.

Let's start.

Suppose we're interested in the italian demographic info present in this page http://sdw.ecb.europa.eu/browse.do?node=2120803 from the EU website. We start loading and parsing the page:

page <- "http://sdw.ecb.europa.eu/browse.do?node=2120803"

parsed <- htmlParse(page)

Now that we have parsed HTML, we can use the readHTMLTable() function to return a list with all the tables present in the page; we'll call the function with these parameters:

- parsed: the parsed HTML

- skip.rows: the rows we want to skip (at the beginning of this table there are a couple of rows that don't contain data but just formatting elements)

- colClasses: the datatype of the different columns of the table (in our case all the columns have integer values); the rep() function is used to replicate 31 times the "integer" value

table <- readHTMLTable(parsed, skip.rows=c(1,3,4,5), colClasses = c(rep("integer", 31)))

As we can see from the page source code, this web page contains six HTML tables; the one that contains the data we're interested in is the fifth, so we extract that one from the list of tables, as a data frame:

values <- as.data.frame(table[5])

Just for convenience, we rename the columns with the period and italian data:

# renames the columns for the period and Italy

colnames(values)[1] <- 'Period'

colnames(values)[19] <- 'Italy'

The italian data lasts from 1990 to 2014, so we have to subset only those rows and, of course, only the two columns of period and italian data:

# subsets the data: we are interested only in the first and the 19th column (period and italian info)

ids <- values[c(1,19)] # Italy has only 25 years of info, so we cut away the other rows

ids <- as.data.frame(ids[1:25,])

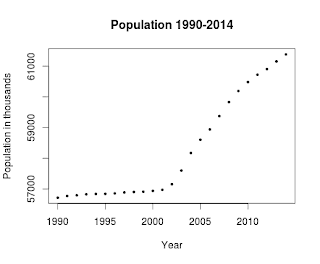

Now we can plot these data calling the plot function with these parameters:

- ids: the data to plot

- xlab: the label of the X axis

- ylab: the label of the Y axis

- main: the title of the plot

- pch: the symbol to draw for evey point (19 is a solid circle: look here for an overview)

- cex: the size of the symbol

plot(ids, xlab="Year", ylab="Population in thousands", main="Population 1990-2014", pch=19, cex=0.5)

and here is the result:

Here's the full code, also available on my github:

library(XML) # sets the URL

url <- "http://sdw.ecb.europa.eu/browse.do?node=2120803" # let the XML library parse the HTMl of the page

parsed <- htmlParse(url) # reads the HTML table present inside the page, paying attention

# to the data types contained in the HTML table

table <- readHTMLTable(parsed, skip.rows=c(1,3,4,5), colClasses = c(rep("integer", 31) )) # this web page contains seven HTML pages, but the one that contains the data

# is the fifth

values <- as.data.frame(table[5]) # renames the columns for the period and Italy

colnames(values)[1] <- 'Period'

colnames(values)[19] <- 'Italy' # now subsets the data: we are interested only in the first and

# the 19th column (period and Italy info)

ids <- values[c(1,19)] # Italy has only 25 year of info, so we cut away the others

ids <- as.data.frame(ids[1:25,]) # plots the data

plot(ids, xlab="Year", ylab="Population in thousands", main="Population 1990-2014", pch=19, cex=0.5) from: http://andreaiacono.blogspot.com/2014/01/scraping-data-from-web-pages-in-r-with.html

使用R语言和XML包抓取网页数据-Scraping data from web pages in R with XML package的更多相关文章

- java抓取网页数据,登录之后抓取数据。

最近做了一个从网络上抓取数据的一个小程序.主要关于信贷方面,收集的一些黑名单网站,从该网站上抓取到自己系统中. 也找了一些资料,觉得没有一个很好的,全面的例子.因此在这里做个笔记提醒自己. 首先需要一 ...

- Asp.net 使用正则和网络编程抓取网页数据(有用)

Asp.net 使用正则和网络编程抓取网页数据(有用) Asp.net 使用正则和网络编程抓取网页数据(有用) /// <summary> /// 抓取网页对应内容 /// </su ...

- 使用JAVA抓取网页数据

一.使用 HttpClient 抓取网页数据 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 ...

- 使用HtmlAgilityPack批量抓取网页数据

原文:使用HtmlAgilityPack批量抓取网页数据 相关软件点击下载登录的处理.因为有些网页数据需要登陆后才能提取.这里要使用ieHTTPHeaders来提取登录时的提交信息.抓取网页 Htm ...

- web scraper 抓取网页数据的几个常见问题

如果你想抓取数据,又懒得写代码了,可以试试 web scraper 抓取数据. 相关文章: 最简单的数据抓取教程,人人都用得上 web scraper 进阶教程,人人都用得上 如果你在使用 web s ...

- c#抓取网页数据

写了一个简单的抓取网页数据的小例子,代码如下: //根据Url地址得到网页的html源码 private string GetWebContent(string Url) { string strRe ...

- 【iOS】正則表達式抓取网页数据制作小词典

版权声明:本文为博主原创文章,未经博主同意不得转载. https://blog.csdn.net/xn4545945/article/details/37684127 应用程序不一定要自己去提供数据. ...

- 01 UIPath抓取网页数据并导出Excel(非Table表单)

上次转载了一篇<UIPath抓取网页数据并导出Excel>的文章,因为那个导出的是table标签中的数据,所以相对比较简单.现实的网页中,有许多不是通过table标签展示的,那又该如何处理 ...

- Node.js的学习--使用cheerio抓取网页数据

打算要写一个公开课网站,缺少数据,就决定去网易公开课去抓取一些数据. 前一阵子看过一段时间的Node.js,而且Node.js也比较适合做这个事情,就打算用Node.js去抓取数据. 关键是抓取到网页 ...

随机推荐

- PHP 标准AES加密算法类

分享一个标准PHP的AES加密算法类,其中mcrypt_get_block_size('rijndael-128', 'ecb');,如果在不明白原理的情况下比较容易搞错,可以通过mcrypt_lis ...

- [你必须知道的.NET]第十七回:貌合神离:覆写和重载

本文将介绍以下内容: 什么是覆写,什么是重载 覆写与重载的区别 覆写与重载在多态特性中的应用 1. 引言 覆写(override)与重载(overload),是成就.NET面向对象多态特性的基本技术之 ...

- 2019.2.25 模拟赛T1【集训队作业2018】小Z的礼物

T1: [集训队作业2018]小Z的礼物 我们发现我们要求的是覆盖所有集合里的元素的期望时间. 设\(t_{i,j}\)表示第一次覆盖第i行第j列的格子的时间,我们要求的是\(max\{ALL\}\) ...

- es6的Set()构造函数

关于Set()函数 Set是一个构造器,类似于数组,但是元素没有重复的 1.接收数组或者其他iterable接口的数据 用于初始化数据 let a=new Set([1,32,424,22,12,3, ...

- 在 Windows 上进行 Laravel Homestead 安装、配置及测试

软件环境:在 Windows 7 64位 上基于 VirtualBox 5.2.12 + Vagrant 2.1.1 使用 Laravel Homestead. 1.准备 先下载VirtualBox- ...

- vs.net 效率提升-自定义快捷键

工欲善其事必先利其器,记录一下自己开发时常用的几个自定义的快捷键.做了这么多年了用着还是比较顺手的分享下~~~~设置时有时设置不成功,非得一项一项设置才可以~~~ 设置自定义快捷键位置:vs.net- ...

- Django总叙(转)

Django 千锋培训读书笔记 https://www.bilibili.com/video/av17879644/?p=1 切换到创建项目的目录 cd C:\Users\admin\Desktop\ ...

- 【WPF】城市级联(XmlDataProvider)

首先在绑定的时候进行转换: public class RegionConverter : IValueConverter { public object Convert(object value, T ...

- 20179202《Linux内核原理与分析》第一周作业

实验一 Linux 系统简介 这一节主要学习了Linux的历史,重要人物以及学习Linux的方法.Linux和Windows在是否收费.软件与支持.安全性.可定制性和应用范畴等方面都存在着区别.目前感 ...

- 查看Android手机数据库

有的时候,手机没有root,无法查看数据库,甚不方便,好在Github上有解决方案: Github地址:https://github.com/king1039/Android-Debug-Databa ...