Rancher v2.4.8 Container Management Platform: Cluster Setup (Based on k8s)

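Overview

1. Prepare three Ubuntu VMs in VMware (ubuntu-20.04-live-server-amd64.iso): one master and two worker nodes (master, node1, node2).
2. Install Docker on all three servers.
3. Start Rancher with Docker on the master node.
4. Log in to the Rancher UI and add a cluster.
5. Copy the generated add-cluster command and run it on each node (this takes a while).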

I. Hardware Requirements

| OS | Node IP | Node Type | Memory / CPU | Hostname |
|---|---|---|---|---|
| Ubuntu 20.04 | 192.168.1.106 | Master | 2 GB / 4 cores | master |
| Ubuntu 20.04 | 192.168.1.108 | Worker 1 | 2 GB / 4 cores | node1 |
| Ubuntu 20.04 | 192.168.1.109 | Worker 2 | 2 GB / 4 cores | node2 |

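II. Environment Preparation

1. Install the stable ubuntu-20.04.3-live-server-amd64.iso release in VMware virtual machines and give each one a static IP. (The installation steps are omitted here; an earlier post covers them.)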

III. Install Docker

```bash
# Remove old versions
sudo apt-get remove docker docker-engine docker.io containerd runc

# Update the package index
sudo apt-get update

# Allow apt to use repositories over HTTPS
sudo apt-get install \
    ca-certificates \
    curl \
    gnupg \
    lsb-release

# Add Docker's official GPG key
curl -fsSL https://download.docker.com/linux/ubuntu/gpg | sudo gpg --dearmor -o /usr/share/keyrings/docker-archive-keyring.gpg

# Set up the stable repository
echo \
  "deb [arch=$(dpkg --print-architecture) signed-by=/usr/share/keyrings/docker-archive-keyring.gpg] https://download.docker.com/linux/ubuntu \
  $(lsb_release -cs) stable" | sudo tee /etc/apt/sources.list.d/docker.list > /dev/null

# Update the package index again
sudo apt-get update

# Install Docker Engine
sudo apt-get install docker-ce docker-ce-cli containerd.io

# Add the current (non-root) user to the docker group ($USER, not $user)
sudo gpasswd -a "$USER" docker

# Start a shell with the new group active (or log out and back in)
newgrp docker

# Check the version
docker version
```
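After `newgrp docker` (or a fresh login), `docker version` should work without `sudo`. The sketch below is one way to confirm that the docker group is active in the current shell. The function name `in_current_groups` is hypothetical. It relies only on `id -nG`, which, with no argument, prints the groups of the running process.

```bash
#!/usr/bin/env bash
# Hypothetical helper: succeed if the *current shell* already has a group.
# `id -nG` without a user argument lists the running process's groups,
# which is what decides whether `docker` works without sudo right now.
in_current_groups() {
  local want="$1" g
  for g in $(id -nG); do
    [ "$g" = "$want" ] && return 0
  done
  return 1
}

if in_current_groups docker; then
  echo "docker group is active in this shell; 'docker version' should work without sudo"
else
  echo "docker group not active here yet: log out and back in, or run 'newgrp docker'"
fi
```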

IV. Install Rancher

```bash
sudo docker run -d --privileged --restart=unless-stopped -p 80:80 -p 443:443 rancher/rancher:v2.4.8
```

```text
yang@master:~$ sudo docker run -d --privileged --restart=unless-stopped -p 80:80 -p 443:443 rancher/rancher:v2.4.8
Unable to find image 'rancher/rancher:v2.4.8' locally
v2.4.8: Pulling from rancher/rancher
f08d8e2a3ba1: Pull complete
3baa9cb2483b: Pull complete
94e5ff4c0b15: Pull complete
1860925334f9: Pull complete
ff9fca190532: Pull complete
9edbd5af6f75: Pull complete
39647e735cf8: Pull complete
3470d6dc42b2: Pull complete
0dceba04daf4: Pull complete
4ef3bd369bd9: Pull complete
72d28ebec0e3: Pull complete
3071d34067a8: Pull complete
7b7c203ef611: Pull complete
ed9cc207940b: Pull complete
687ea77f4cb7: Pull complete
b390c49bee0c: Pull complete
d2ae58f8a2c4: Pull complete
e82824cbbb83: Pull complete
2cca9f7c734e: Pull complete
Digest: sha256:5a16a6a0611e49d55ff9d9fbf278b5ca2602575de8f52286b18158ee1a8a5963
Status: Downloaded newer image for rancher/rancher:v2.4.8
ba1afc6482db94f2c5d9553286bd0a11c5df78b7f3106164e894a66b9e18c9cc
```

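Note: wait for the image to download and the container to start. Then open Rancher using the host's real IP address.

URL:

http://<host IP> (default port 80). The UI itself is served over HTTPS on port 443 with a self-signed certificate by default, so expect a browser certificate warning.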
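The first start can take a few minutes, so a small polling loop saves you from refreshing the browser. This sketch assumes the Rancher server answers `pong` on the `/ping` path once it is ready. That is the endpoint the Rancher agent itself probes, but it is worth verifying on your version. `wait_for_rancher` and its retry defaults are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: poll a Rancher server until it answers.
# ASSUMPTION: GET <url>/ping returns "pong" once the server is up.
# -k skips verification of Rancher's default self-signed certificate.
wait_for_rancher() {
  local url="$1" tries="${2:-60}" interval="${3:-5}" i reply
  if [ -z "$url" ]; then
    echo "usage: wait_for_rancher URL [tries] [interval_seconds]" >&2
    return 2
  fi
  for ((i = 1; i <= tries; i++)); do
    reply=$(curl -ksf --max-time 5 "$url/ping" 2>/dev/null)
    if [ "$reply" = "pong" ]; then
      echo "Rancher is up at $url"
      return 0
    fi
    sleep "$interval"
  done
  echo "Rancher did not answer at $url after $tries attempts" >&2
  return 1
}

# Usage, with the master IP from the hardware table:
# wait_for_rancher https://192.168.1.106
```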

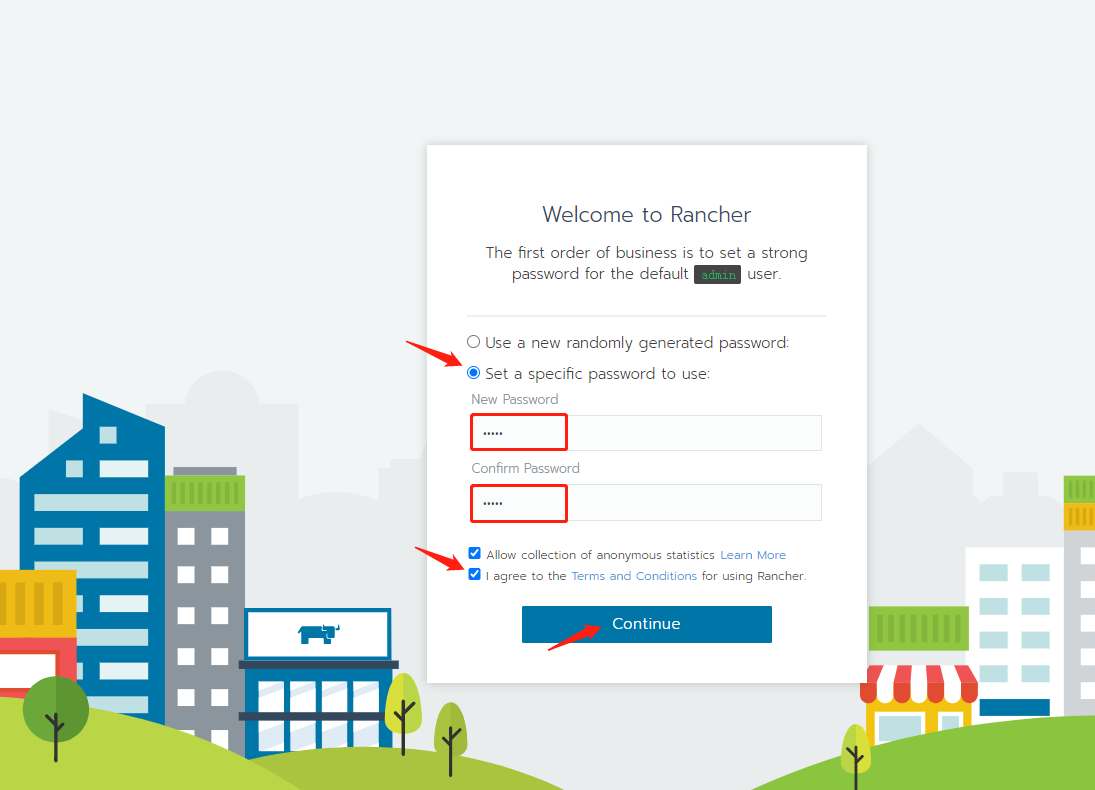

Set a new password and confirm it.

Accept the Rancher terms and conditions, then click Continue.

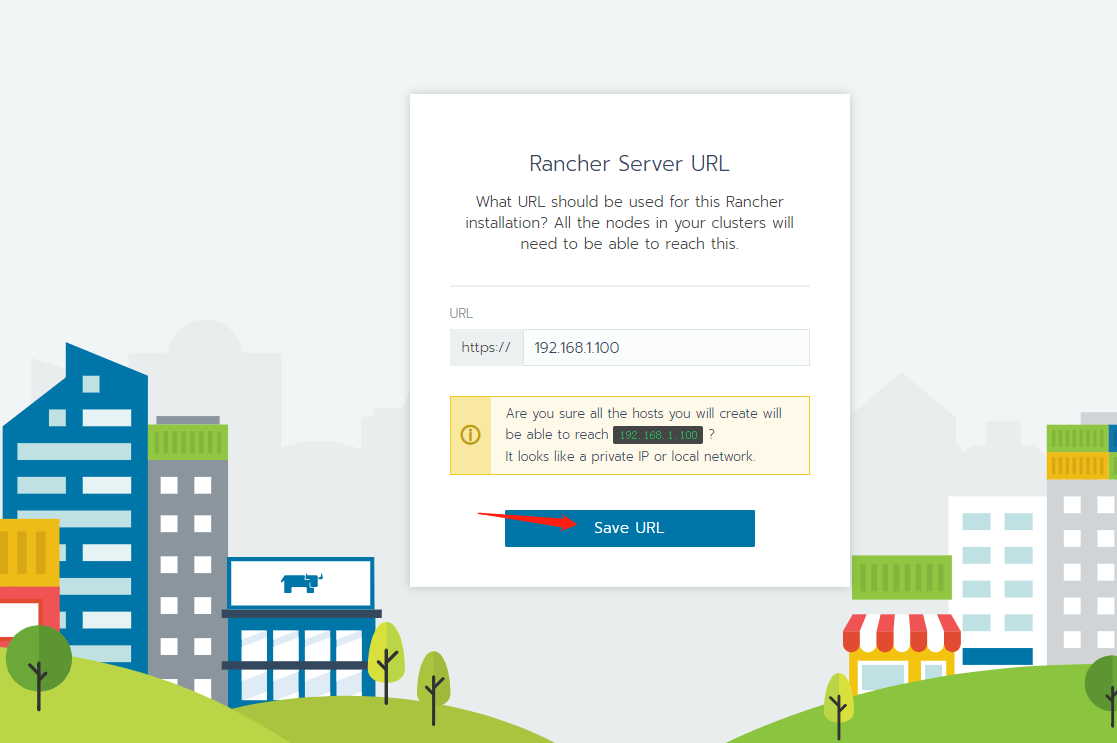

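Confirm the Rancher Server URL and click Save URL. Nodes use this address to register, which is why the registration command later points at https://192.168.1.106.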

V. Install the kubectl Command-Line Tool

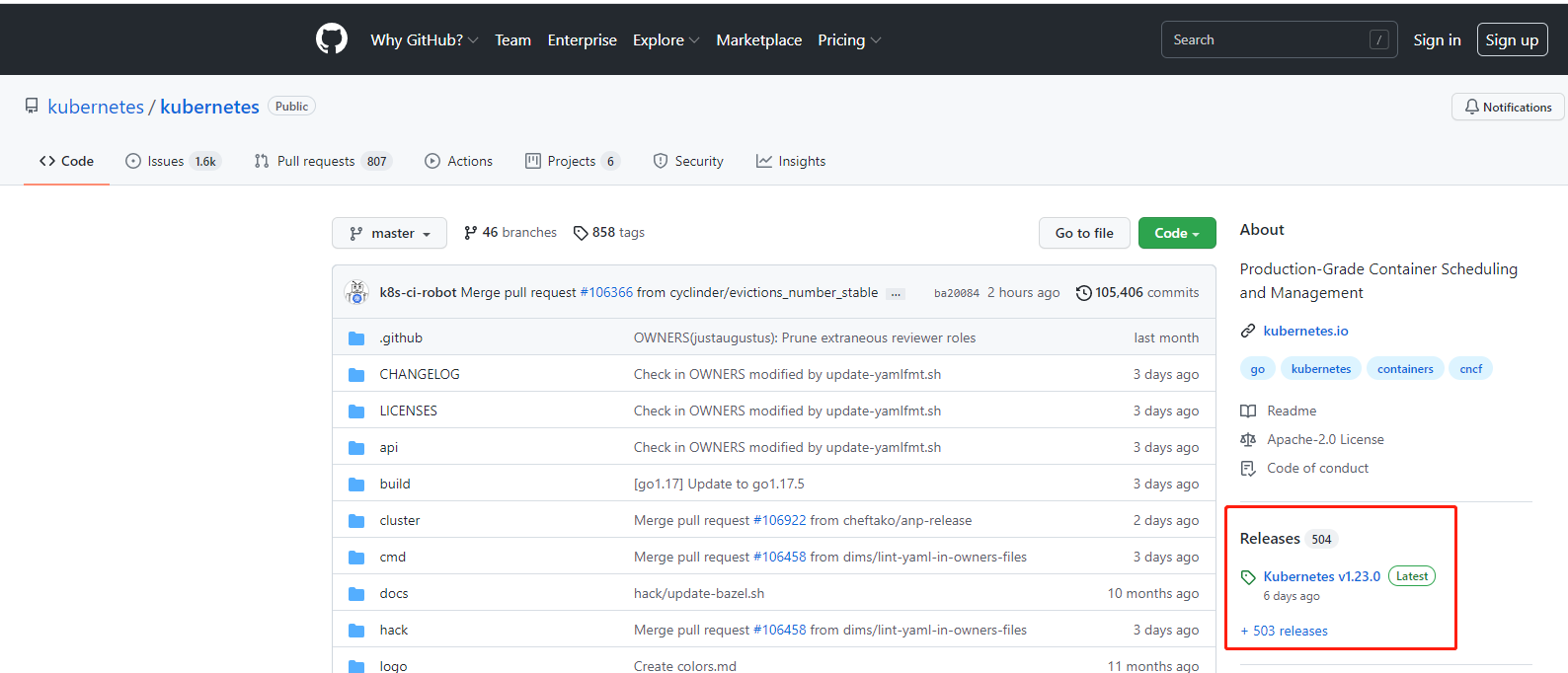

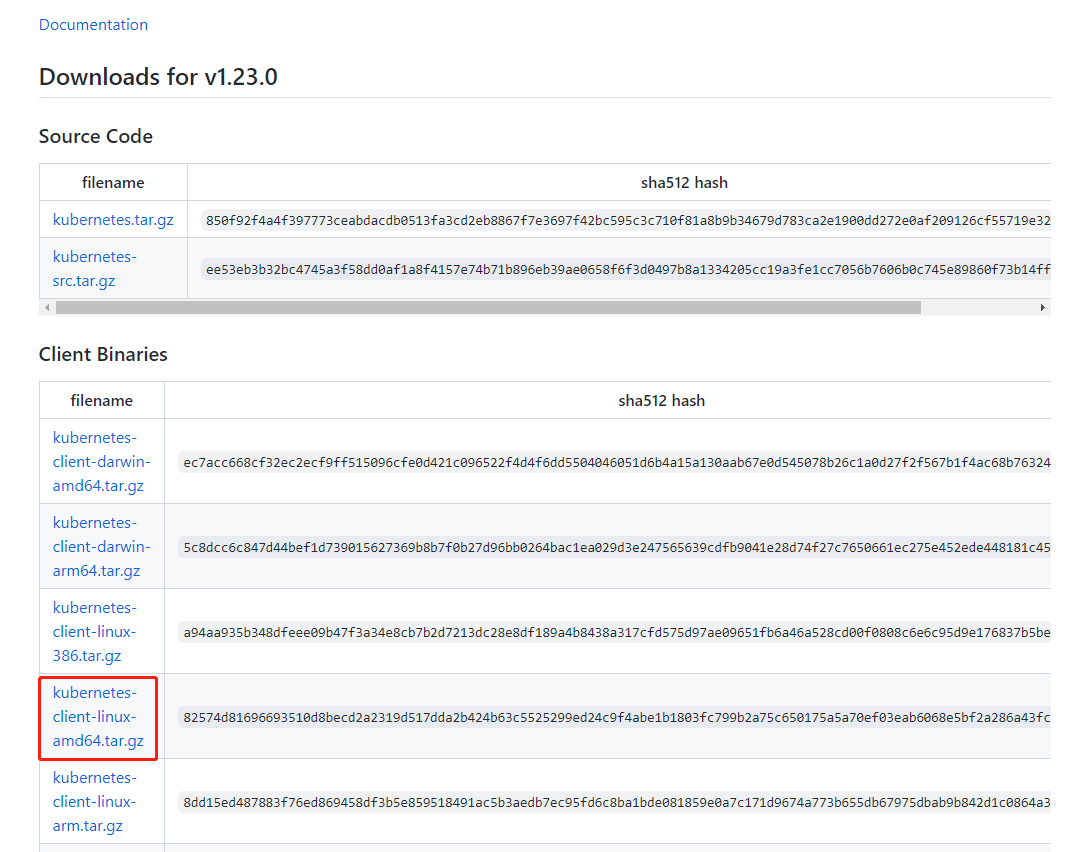

1. Package source: https://github.com/kubernetes/kubernetes

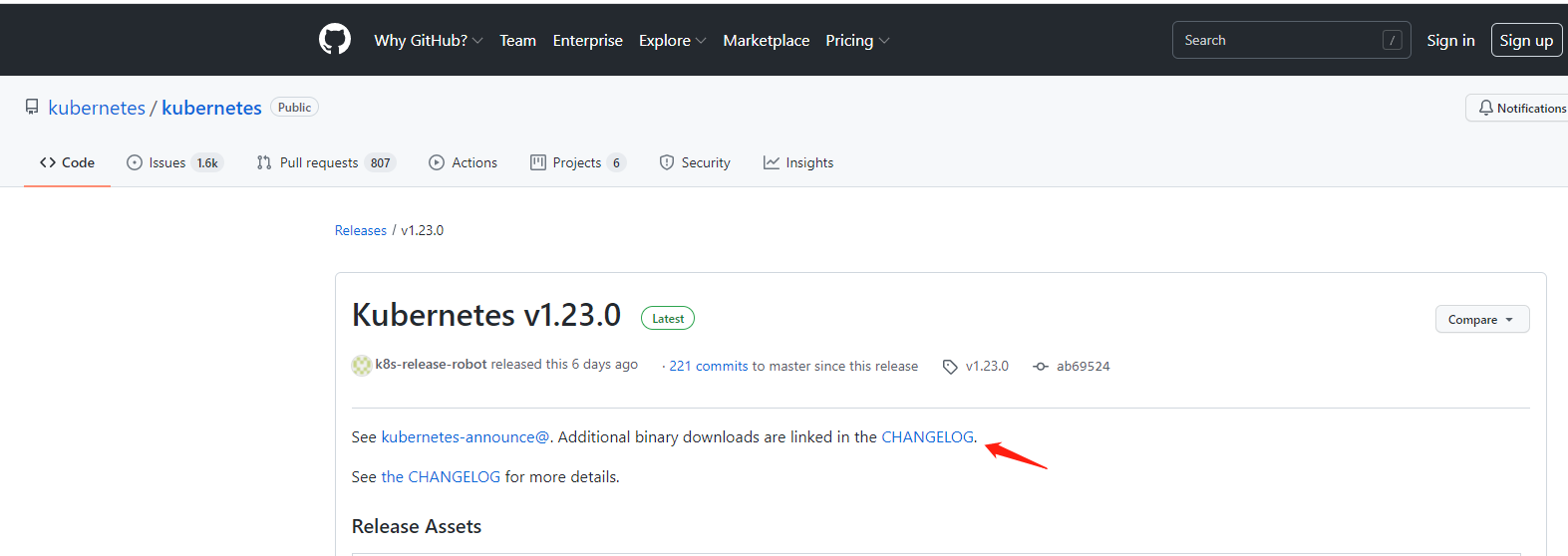

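2. Open the CHANGELOG and follow the binary download link.

3. Under Client Binaries (the kubernetes client package, which contains kubectl), pick the build for your OS (here Linux/Ubuntu, amd64). Copy the link or download the file.

4. Upload kubernetes-client-linux-amd64.tar.gz to the master server: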

```text
yang@master:~/ya$ ls
kubernetes-client-linux-amd64.tar.gz
yang@master:~/ya$ tar xf kubernetes-client-linux-amd64.tar.gz
yang@master:~/ya$ cd kubernetes/client/bin/
yang@master:~/ya/kubernetes/client/bin$ sudo chmod +x kubectl
yang@master:~/ya/kubernetes/client/bin$ sudo mv ./kubectl /usr/local/bin/kubectl

# Check the version; if version info is printed, the install succeeded
yang@master:/usr/local/bin$ ./kubectl version --client
Client Version: version.Info{Major:"1", Minor:"23", GitVersion:"v1.23.0", GitCommit:"ab69524f795c42094a6630298ff53f3c3ebab7f4", GitTreeState:"clean", BuildDate:"2021-12-07T18:16:20Z", GoVersion:"go1.17.3", Compiler:"gc", Platform:"linux/amd64"}
```
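kubectl is only supported within one minor version of the API server. The client above is v1.23.0, while the cluster built in the later steps reports v1.18.8, so a v1.18.x client is the safer match. Instead of unpacking the CHANGELOG tarball, you can download a single kubectl binary from the official release host. This sketch assumes the `https://dl.k8s.io/release/<version>/bin/<os>/<arch>/kubectl` URL layout, and the function names are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: fetch kubectl directly instead of via the client tarball.
# ASSUMED URL layout: https://dl.k8s.io/release/<version>/bin/<os>/<arch>/kubectl
kubectl_url() {
  local version="$1" os="${2:-linux}" arch="${3:-amd64}"
  echo "https://dl.k8s.io/release/${version}/bin/${os}/${arch}/kubectl"
}

install_kubectl() {
  local version="$1" tmp
  tmp=$(mktemp) || return 1
  curl -fsSL -o "$tmp" "$(kubectl_url "$version")" || { rm -f "$tmp"; return 1; }
  # install copies the binary, sets mode 0755 and places it on the PATH
  sudo install -m 0755 "$tmp" /usr/local/bin/kubectl
  rm -f "$tmp"
}

# Usage: choose a version close to the cluster's Kubernetes version
# install_kubectl v1.18.8
```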

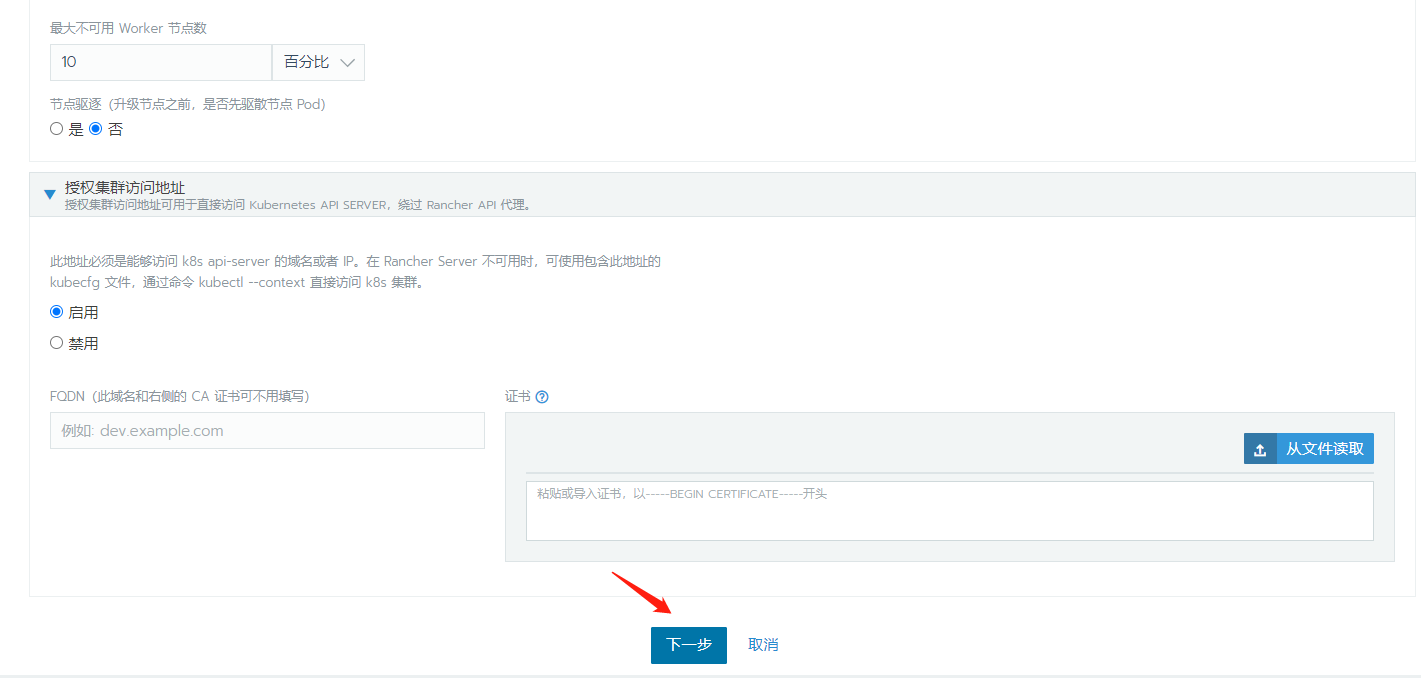

VI. Add a Cluster

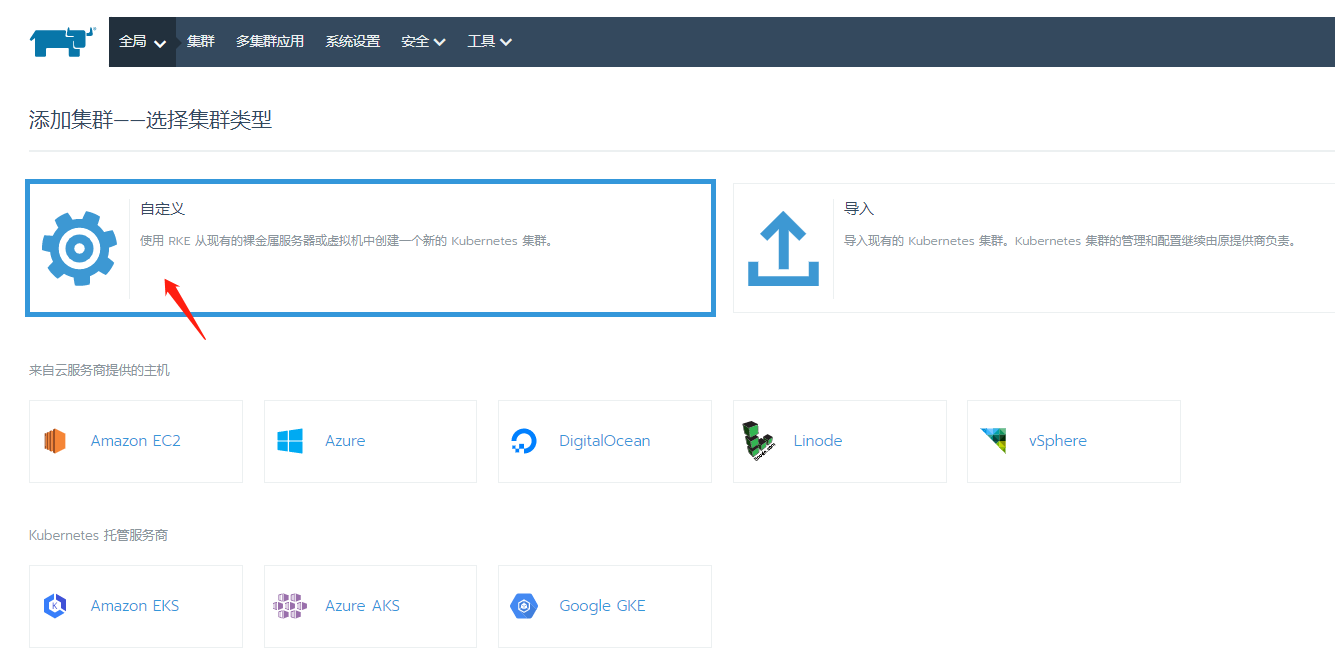

1. Log in to Rancher and click Add Cluster.

2. Choose Custom.

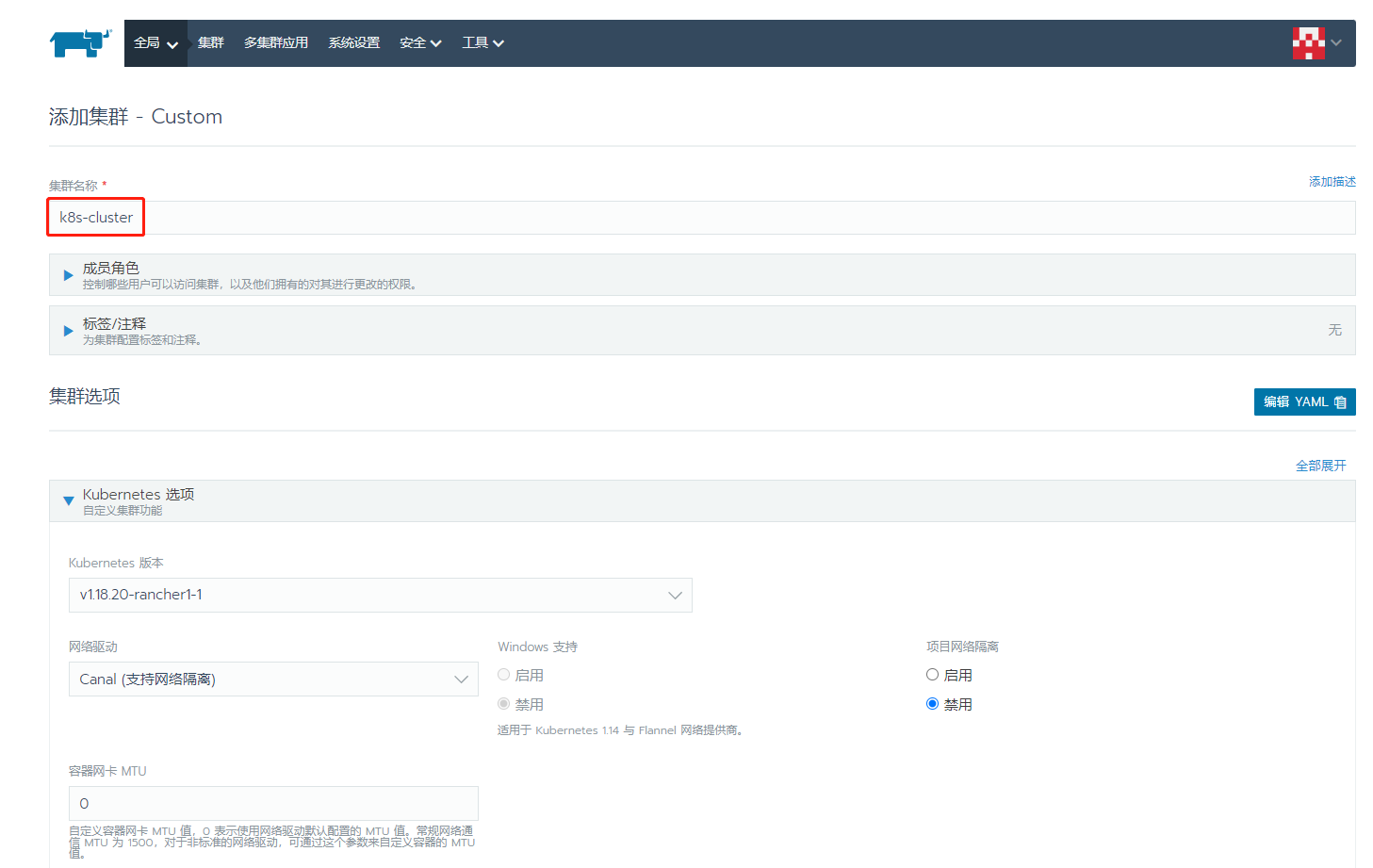

3. Enter a cluster name.

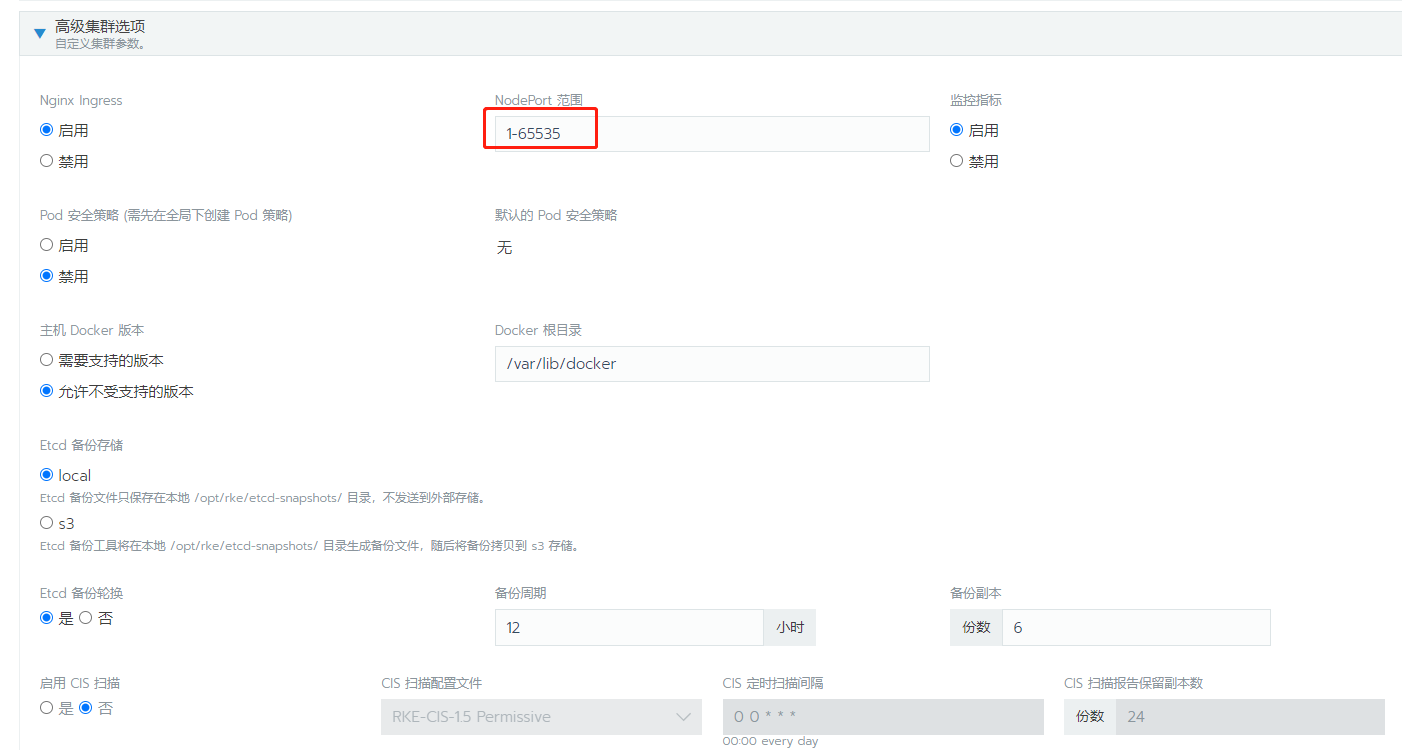

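4. Change the NodePort range to 1-65535. The Kubernetes default is 30000-32767. The wider range lets Services use low ports such as 80, but they can then clash with ports already in use on the hosts.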

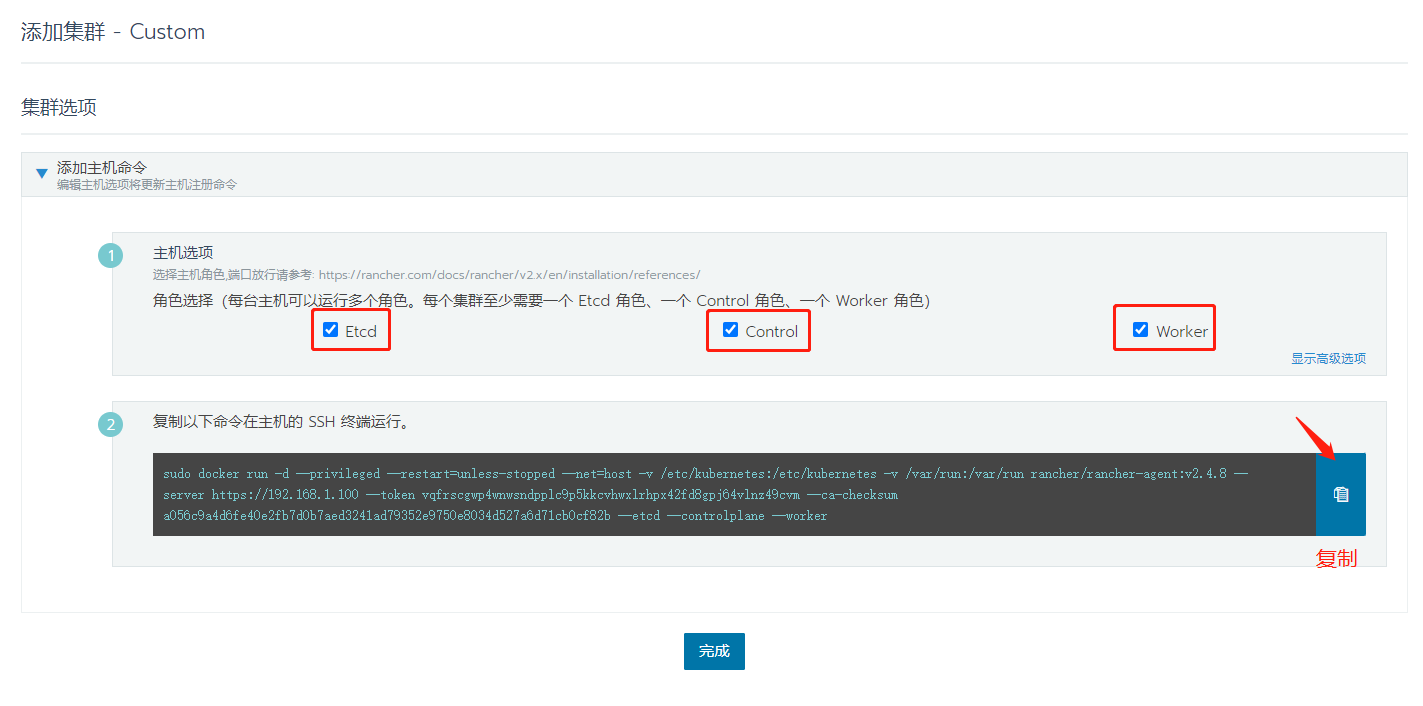

5. Click Next to create the cluster.

6. Under Node Options, select the Etcd, Control Plane and Worker roles, then copy the generated command.

7. Run it on the master server:

```text
yang@master:~$ sudo docker run -d --privileged --restart=unless-stopped --net=host -v /etc/kubernetes:/etc/kubernetes -v /var/run:/var/run rancher/rancher-agent:v2.4.8 --server https://192.168.1.106 --token vqfrscgwp4wnwsndpplc9p5kkcvhwxlrhpx42fd8gpj64vlnz49cvm --ca-checksum a056c9a4d6fe40e2fb7d0b7aed3241ad79352e9750e8034d527a6d71cb0cf82b --etcd --controlplane --worker
Unable to find image 'rancher/rancher-agent:v2.4.8' locally
v2.4.8: Pulling from rancher/rancher-agent
f08d8e2a3ba1: Already exists
3baa9cb2483b: Already exists
94e5ff4c0b15: Already exists
1860925334f9: Already exists
e5d12d0f9a84: Pull complete
5116e686c448: Pull complete
d4f72327bfd0: Pull complete
61bcbcce7861: Pull complete
fca783017521: Pull complete
29ab00ed6801: Pull complete
Digest: sha256:c8a111e6250a313f1dd5d34696ddbef9068f70ddf4b15ab4c9cefd0ea39b76c1
Status: Downloaded newer image for rancher/rancher-agent:v2.4.8
5d0dab9b2c081057f482025d477b329c7a90464289b9209675755280842813bf
```

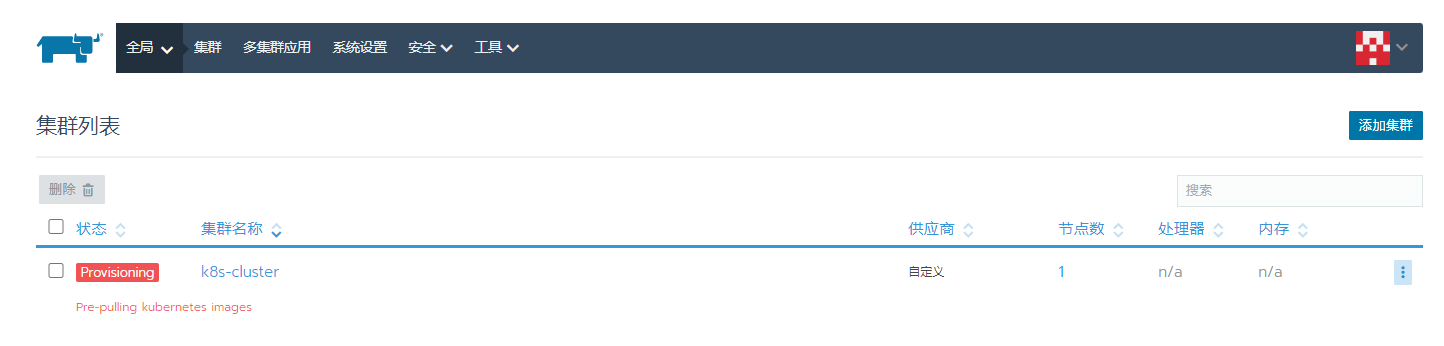

8. Check the status in the Rancher UI. Many images are pulled at this stage, so be patient.
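Besides the UI, you can confirm on each host that the agent container started. This is a minimal sketch that assumes the output format of `docker ps --format '{{.Image}} {{.Status}}'`. `agent_running` is a hypothetical helper. A running agent only means registration is under way, not that provisioning has finished; the UI remains the place to check that.

```bash
#!/usr/bin/env bash
# Sketch: succeed if any rancher-agent container is currently up.
# ASSUMED input: lines of "<image> <status...>" as printed by
#   docker ps --format '{{.Image}} {{.Status}}'
agent_running() {
  awk '$1 ~ /^rancher\/rancher-agent:/ && $2 == "Up" { found = 1 }
       END { exit (found ? 0 : 1) }'
}

# Usage on a node that just ran the registration command:
# sudo docker ps --format '{{.Image}} {{.Status}}' | agent_running && echo "agent is up"
```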

9. Run the same command on node1 and node2 to join them to the cluster.
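In this walkthrough every node gets all three roles, which is why `kubectl get node` later lists etcd, control plane and worker on each of them. A node that should only run workloads would keep just `--worker` at the end of its registration command. The sketch below maps role names to those flags. The function and the short role names are hypothetical conveniences; the three flags themselves come from the command above.

```bash
#!/usr/bin/env bash
# Sketch: build the role flags for the rancher-agent registration command.
# The short names (etcd, control, worker) are hypothetical; the flags are
# the ones Rancher's generated command uses.
role_flags() {
  local role
  local -a out=()
  for role in "$@"; do
    case "$role" in
      etcd)    out+=(--etcd) ;;
      control) out+=(--controlplane) ;;
      worker)  out+=(--worker) ;;
      *) echo "unknown role: $role" >&2; return 1 ;;
    esac
  done
  printf '%s\n' "${out[*]}"
}
```

For example, `$(role_flags worker)` could replace the `--etcd --controlplane --worker` tail of the generated command on a worker-only node.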

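VII. Check Node Status with kubectl

1. Create the config file

Create a config file in /home/yang/.kube. The file will hold cluster credentials, so create it as the normal user and keep it private. Avoid `sudo` and a world-writable (777) directory:

```bash
mkdir -p /home/yang/.kube
cd /home/yang/.kube/
touch config
chmod 600 config
```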

2. In the Rancher UI, open the cluster dashboard and click Kubeconfig File on the right.

3. Copy its contents and paste them into the config file:

```text
yang@master:~/.kube$ cat config
apiVersion: v1
kind: Config
clusters:
- name: "k8s-cluster"
  cluster:
    server: "https://192.168.1.106/k8s/clusters/c-rvp4f"
    certificate-authority-data: "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUJpRENDQ\
      VM2Z0F3SUJBZ0lCQURBS0JnZ3Foa2pPUFFRREFqQTdNUnd3R2dZRFZRUUtFeE5rZVc1aGJXbGoKY\
      kdsemRHVnVaWEl0YjNKbk1Sc3dHUVlEVlFRREV4SmtlVzVoYldsamJHbHpkR1Z1WlhJdFkyRXdIa\
      GNOTWpFeApNakV6TURJME16STJXaGNOTXpFeE1qRXhNREkwTXpJMldqQTdNUnd3R2dZRFZRUUtFe\
      E5rZVc1aGJXbGpiR2x6CmRHVnVaWEl0YjNKbk1Sc3dHUVlEVlFRREV4SmtlVzVoYldsamJHbHpkR\
      1Z1WlhJdFkyRXdXVEFUQmdjcWhrak8KUFFJQkJnZ3Foa2pPUFFNQkJ3TkNBQVExamNjUDJDRkNiY\
      XVYUEEvZWFqMmlUMmh1SWRoS3NkZmI4REhpYnN2egptMkZ1M1dCRXQ2NlkyMDZTL3BFT2FKTll1Q\
      3lBaytHYjhYZjFITnRqbEhlVG95TXdJVEFPQmdOVkhROEJBZjhFCkJBTUNBcVF3RHdZRFZSMFRBU\
      UgvQkFVd0F3RUIvekFLQmdncWhrak9QUVFEQWdOSUFEQkZBaUEwaUo2a0psSW8KeTNIS0RxN2NkT\
      UgyaEZCRmM1VUdQRk5oZVRYNVBlOU0wQUloQUkzNEZwR0xNeUoxZE5GQnYrNHhTR0kwQVlPUwpmO\
      FlGVVdJQjFBOVB3clo0Ci0tLS0tRU5EIENFUlRJRklDQVRFLS0tLS0="
- name: "k8s-cluster-node1"
  cluster:
    server: "https://192.168.1.108:6443"
    certificate-authority-data: "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUN3akNDQ\
      WFxZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFTTVJBd0RnWURWUVFERXdkcmRXSmwKT\
      FdOaE1CNFhEVEl4TVRJeE16QTBNREV6TkZvWERUTXhNVEl4TVRBME1ERXpORm93RWpFUU1BNEdBM\
      VVFQXhNSAphM1ZpWlMxallUQ0NBU0l3RFFZSktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ\
      0VCQUxzU2UvMzFFTldECkE1YUljRkJwY0NkUGNQMGlKTWozUU94V2VPQ2pzbDhOU0pXSngrYUlhV\
      1pUZDRmSzIwZHhPTnBjdUFiUXEyUWUKa3NwSExLRTNlUDJqZXpyekZJZndaTFdKTUtUM0t1RkR6c\
      y8zaGhNU1NSczVwTUczYWo1bmVGajFYaTJLa2svWAo4VnVtS1FXQjlmSGRRdDVwclU4ZzBuYUZiQ\
      mw0S0dicUJ4RUNRa0ZDV0hhM1U0RXpTVkpNbnRFRG1ZbDVxeHlFClV6VHRzUUx1dEpEdFBDdzFHb\
      HB4Vndob1VtOXBYQ2pROElFYWsrU0g5c0o0a1JEOUxMSC9sMmkyWGxROUZkUksKaEZ4OUFCcUp4U\
      khSaVc5K1dyTE9wUk1ENFBxL0ZkbCtqcDNNcTVtQ2lPN09ac1pNN1hUcWg1M1FEbFAzbjZDcApEa\
      EtTVmZwMU02RUNBd0VBQWFNak1DRXdEZ1lEVlIwUEFRSC9CQVFEQWdLa01BOEdBMVVkRXdFQi93U\
      UZNQU1CCkFmOHdEUVlKS29aSWh2Y05BUUVMQlFBRGdnRUJBQ2sxRTNQUE5JbENFc3lTRHhMbVFkZ\
      25WUzNRT09oakczWXoKSXRPNVlJRVcxbUlDTlNBVUxjQ1pLaHFLSjRERkVIZEUyV0p1WGhhQmtIM\
      nBQOFljQVpIVktWUHRGZGJRK29aMQpGUktDeXZHU2lQaTlQZ2VITDRCb2FHQ21wb2ZRdDFaaisyQ\
      TFXQ3MvUUV4U3FyTXE2cXF1WXp0L3BKMFJIMFdZCmRMckFxL0NDRHMrbzlOQW4xQW5VYWZtUzB0R\
      0FKb2R4SXZYM0haVVpNSUk5OWZLMDhIcWlKSVEyS0V5bnk3ZWwKUnVIR3Jlc0I4Y0lTR2pOZDg5M\
      W16OEZJTk1QeExoNDFwWURjZjNqTEZ5VS83anZpZjUrbEdiRHlZM01Mb09UOApLVld4UXhSRDVka\
      lF3MVZFZHlKVUVLK0EwMThOenBRM0JZenJDejI2THloZmZPVit3QVE9Ci0tLS0tRU5EIENFUlRJR\
      klDQVRFLS0tLS0K"
- name: "k8s-cluster-node2"
  cluster:
    server: "https://192.168.1.109:6443"
    certificate-authority-data: "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUN3akNDQ\
      WFxZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFTTVJBd0RnWURWUVFERXdkcmRXSmwKT\
      FdOaE1CNFhEVEl4TVRJeE16QTBNREV6TkZvWERUTXhNVEl4TVRBME1ERXpORm93RWpFUU1BNEdBM\
      VVFQXhNSAphM1ZpWlMxallUQ0NBU0l3RFFZSktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ\
      0VCQUxzU2UvMzFFTldECkE1YUljRkJwY0NkUGNQMGlKTWozUU94V2VPQ2pzbDhOU0pXSngrYUlhV\
      1pUZDRmSzIwZHhPTnBjdUFiUXEyUWUKa3NwSExLRTNlUDJqZXpyekZJZndaTFdKTUtUM0t1RkR6c\
      y8zaGhNU1NSczVwTUczYWo1bmVGajFYaTJLa2svWAo4VnVtS1FXQjlmSGRRdDVwclU4ZzBuYUZiQ\
      mw0S0dicUJ4RUNRa0ZDV0hhM1U0RXpTVkpNbnRFRG1ZbDVxeHlFClV6VHRzUUx1dEpEdFBDdzFHb\
      HB4Vndob1VtOXBYQ2pROElFYWsrU0g5c0o0a1JEOUxMSC9sMmkyWGxROUZkUksKaEZ4OUFCcUp4U\
      khSaVc5K1dyTE9wUk1ENFBxL0ZkbCtqcDNNcTVtQ2lPN09ac1pNN1hUcWg1M1FEbFAzbjZDcApEa\
      EtTVmZwMU02RUNBd0VBQWFNak1DRXdEZ1lEVlIwUEFRSC9CQVFEQWdLa01BOEdBMVVkRXdFQi93U\
      UZNQU1CCkFmOHdEUVlKS29aSWh2Y05BUUVMQlFBRGdnRUJBQ2sxRTNQUE5JbENFc3lTRHhMbVFkZ\
      25WUzNRT09oakczWXoKSXRPNVlJRVcxbUlDTlNBVUxjQ1pLaHFLSjRERkVIZEUyV0p1WGhhQmtIM\
      nBQOFljQVpIVktWUHRGZGJRK29aMQpGUktDeXZHU2lQaTlQZ2VITDRCb2FHQ21wb2ZRdDFaaisyQ\
      TFXQ3MvUUV4U3FyTXE2cXF1WXp0L3BKMFJIMFdZCmRMckFxL0NDRHMrbzlOQW4xQW5VYWZtUzB0R\
      0FKb2R4SXZYM0haVVpNSUk5OWZLMDhIcWlKSVEyS0V5bnk3ZWwKUnVIR3Jlc0I4Y0lTR2pOZDg5M\
      W16OEZJTk1QeExoNDFwWURjZjNqTEZ5VS83anZpZjUrbEdiRHlZM01Mb09UOApLVld4UXhSRDVka\
      lF3MVZFZHlKVUVLK0EwMThOenBRM0JZenJDejI2THloZmZPVit3QVE9Ci0tLS0tRU5EIENFUlRJR\
      klDQVRFLS0tLS0K"
- name: "k8s-cluster-master"
  cluster:
    server: "https://192.168.1.106:6443"
    certificate-authority-data: "LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUN3akNDQ\
      WFxZ0F3SUJBZ0lCQURBTkJna3Foa2lHOXcwQkFRc0ZBREFTTVJBd0RnWURWUVFERXdkcmRXSmwKT\
      FdOaE1CNFhEVEl4TVRJeE16QTBNREV6TkZvWERUTXhNVEl4TVRBME1ERXpORm93RWpFUU1BNEdBM\
      VVFQXhNSAphM1ZpWlMxallUQ0NBU0l3RFFZSktvWklodmNOQVFFQkJRQURnZ0VQQURDQ0FRb0NnZ\
      0VCQUxzU2UvMzFFTldECkE1YUljRkJwY0NkUGNQMGlKTWozUU94V2VPQ2pzbDhOU0pXSngrYUlhV\
      1pUZDRmSzIwZHhPTnBjdUFiUXEyUWUKa3NwSExLRTNlUDJqZXpyekZJZndaTFdKTUtUM0t1RkR6c\
      y8zaGhNU1NSczVwTUczYWo1bmVGajFYaTJLa2svWAo4VnVtS1FXQjlmSGRRdDVwclU4ZzBuYUZiQ\
      mw0S0dicUJ4RUNRa0ZDV0hhM1U0RXpTVkpNbnRFRG1ZbDVxeHlFClV6VHRzUUx1dEpEdFBDdzFHb\
      HB4Vndob1VtOXBYQ2pROElFYWsrU0g5c0o0a1JEOUxMSC9sMmkyWGxROUZkUksKaEZ4OUFCcUp4U\
      khSaVc5K1dyTE9wUk1ENFBxL0ZkbCtqcDNNcTVtQ2lPN09ac1pNN1hUcWg1M1FEbFAzbjZDcApEa\
      EtTVmZwMU02RUNBd0VBQWFNak1DRXdEZ1lEVlIwUEFRSC9CQVFEQWdLa01BOEdBMVVkRXdFQi93U\
      UZNQU1CCkFmOHdEUVlKS29aSWh2Y05BUUVMQlFBRGdnRUJBQ2sxRTNQUE5JbENFc3lTRHhMbVFkZ\
      25WUzNRT09oakczWXoKSXRPNVlJRVcxbUlDTlNBVUxjQ1pLaHFLSjRERkVIZEUyV0p1WGhhQmtIM\
      nBQOFljQVpIVktWUHRGZGJRK29aMQpGUktDeXZHU2lQaTlQZ2VITDRCb2FHQ21wb2ZRdDFaaisyQ\
      TFXQ3MvUUV4U3FyTXE2cXF1WXp0L3BKMFJIMFdZCmRMckFxL0NDRHMrbzlOQW4xQW5VYWZtUzB0R\
      0FKb2R4SXZYM0haVVpNSUk5OWZLMDhIcWlKSVEyS0V5bnk3ZWwKUnVIR3Jlc0I4Y0lTR2pOZDg5M\
      W16OEZJTk1QeExoNDFwWURjZjNqTEZ5VS83anZpZjUrbEdiRHlZM01Mb09UOApLVld4UXhSRDVka\
      lF3MVZFZHlKVUVLK0EwMThOenBRM0JZenJDejI2THloZmZPVit3QVE9Ci0tLS0tRU5EIENFUlRJR\
      klDQVRFLS0tLS0K"
users:
- name: "k8s-cluster"
  user:
    token: "kubeconfig-user-trn62.c-rvp4f:p82f2nfbxnzqllvls9rpfmtxk8dkcnjjgm8rsl5nvq978gms5twpd8"
contexts:
- name: "k8s-cluster"
  context:
    user: "k8s-cluster"
    cluster: "k8s-cluster"
- name: "k8s-cluster-node1"
  context:
    user: "k8s-cluster"
    cluster: "k8s-cluster-node1"
- name: "k8s-cluster-node2"
  context:
    user: "k8s-cluster"
    cluster: "k8s-cluster-node2"
- name: "k8s-cluster-master"
  context:
    user: "k8s-cluster"
    cluster: "k8s-cluster-master"
current-context: "k8s-cluster"
```
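Copy-paste is the usual way this file gets damaged. Rancher wraps each `certificate-authority-data` value across lines with trailing backslashes, and the joined value should decode to a PEM certificate. Below is a sketch of that sanity check. It assumes you save one quoted value into a file; the file name `ca.txt` and the function `ca_data_ok` are hypothetical.

```bash
#!/usr/bin/env bash
# Sketch: check that a pasted certificate-authority-data value is intact.
# Strips the quotes, the trailing backslashes Rancher uses for line wrapping,
# and all whitespace, then base64-decodes and checks the PEM header line.
ca_data_ok() {
  local decoded
  decoded=$(tr -d '\\"[:space:]' | base64 -d 2>/dev/null) || return 1
  [ "${decoded%%$'\n'*}" = "-----BEGIN CERTIFICATE-----" ]
}

# Usage (hypothetical file holding one quoted value copied from the config):
# ca_data_ok < ca.txt && echo "CA data looks intact"
```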

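4. Point kubectl at the config file. kubectl already reads ~/.kube/config by default, so this step is only required if the file lives elsewhere. The path must be the current user's home directory (/home/yang here):

```bash
export KUBECONFIG=/home/yang/.kube/config
```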
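`export` only lasts for the current shell. To keep the setting across logins, append the line to `~/.bashrc` once. `append_once` below is a hypothetical helper that skips the append if the exact line is already present.

```bash
#!/usr/bin/env bash
# Hypothetical helper: append a line to a file unless an identical line exists.
# grep -x matches whole lines, -F treats the text literally (no regex).
append_once() {
  local file="$1" line="$2"
  touch "$file" || return 1
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Usage:
# append_once ~/.bashrc 'export KUBECONFIG=/home/yang/.kube/config'
```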

5. Confirm that kubectl can reach the cluster:

```bash
kubectl cluster-info
```

6. Check node status:

```text
yang@master:~$ kubectl get node
NAME     STATUS   ROLES                       AGE    VERSION
master   Ready    control-plane,etcd,worker   4d1h   v1.18.8
node1    Ready    control-plane,etcd,worker   4d     v1.18.8
node2    Ready    control-plane,etcd,worker   4d     v1.18.8
```
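To check readiness from a script instead of by eye, you can test the STATUS column directly. This is a minimal sketch that assumes the default column layout of `kubectl get node`; the helper name is hypothetical. A built-in alternative is `kubectl wait --for=condition=Ready nodes --all --timeout=300s`, which blocks until the nodes report Ready.

```bash
#!/usr/bin/env bash
# Sketch: succeed only if `kubectl get nodes` lists at least one node and
# every node's STATUS (column 2, after the header row) is exactly "Ready".
all_nodes_ready() {
  awk 'NR > 1 { n++; if ($2 != "Ready") bad++ }
       END { exit (n == 0 || bad > 0) }'
}

# Usage:
# kubectl get nodes | all_nodes_ready && echo "all nodes Ready"
```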

The Rancher-managed k8s cluster is now set up and ready for deploying services.


基于python3的语法,driver.switch_to_alert()的表达会出现中划线,因此需要把后面的下划线改为点.一.目前接触到的switch_to的用法包括以下几种:1. 切换到制定的wi ...