K8s - 安装部署Kafka、Zookeeper集群教程(支持从K8s外部访问)

本文演示如何在K8s集群下部署Kafka集群,并且搭建后除了可以K8s内部访问Kafka服务,也支持从K8s集群外部访问Kafka服务。服务的集群部署通常有两种方式:一种是 StatefulSet,另一种是 Service&Deployment。本次我们使用 StatefulSet 方式搭建 ZooKeeper 集群,使用 Service&Deployment 搭建 Kafka 集群。

一、创建 NFS 存储

NFS 存储主要是为了给 Kafka、ZooKeeper 提供稳定的后端存储,当 Kafka、ZooKeeper 的 Pod 发生故障重启或迁移后,依然能获得原先的数据。

1,安装 NFS

yum -y install nfs-utils

yum -y install rpcbind

2,创建共享文件夹

mkdir -p /usr/local/k8s/zookeeper/pv{1..3}

mkdir -p /usr/local/k8s/kafka/pv{1..3}

(2)编辑 /etc/exports 文件:

vim /etc/exports

(3)在里面添加如下内容:

/usr/local/k8s/kafka/pv1 *(rw,sync,no_root_squash)

/usr/local/k8s/kafka/pv2 *(rw,sync,no_root_squash)

/usr/local/k8s/kafka/pv3 *(rw,sync,no_root_squash)

/usr/local/k8s/zookeeper/pv1 *(rw,sync,no_root_squash)

/usr/local/k8s/zookeeper/pv2 *(rw,sync,no_root_squash)

/usr/local/k8s/zookeeper/pv3 *(rw,sync,no_root_squash)

(4)保存退出后执行如下命令重启服务:

如果执行 systemctl restart nfs 报“Failed to restart nfs.service: Unit nfs.service not found.”错误,可以尝试改用如下命令:

- sudo service nfs-server start

systemctl restart rpcbind

systemctl restart nfs

systemctl enable nfs

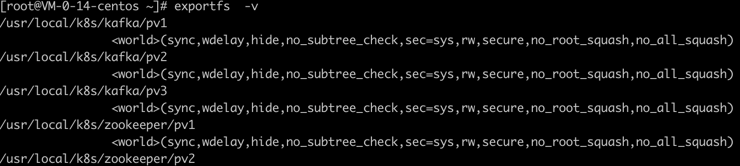

(5)执行 exportfs -v 命令可以显示出所有的共享目录:

(6)而其他的 Node 节点上需要执行如下命令安装 nfs-utils 客户端:

yum -y install nfs-util

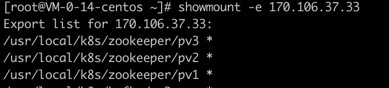

(7)然后其他的 Node 节点上可执行如下命令(ip 为 Master 节点 IP)查看 Master 节点上共享的文件夹:

showmount -e 107.106.37.33(nfs服务端的IP)

二、创建 ZooKeeper 集群

1,创建 ZooKeeper PV

(1)首先创建一个 zookeeper-pv.yaml 文件,内容如下:

apiVersion: v1

kind: PersistentVolume

metadata:

name: k8s-pv-zk01

labels:

app: zk

annotations:

volume.beta.kubernetes.io/storage-class: "anything"

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

nfs:

server: 170.106.37.33

path: "/usr/local/k8s/zookeeper/pv1"

persistentVolumeReclaimPolicy: Recycle

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: k8s-pv-zk02

labels:

app: zk

annotations:

volume.beta.kubernetes.io/storage-class: "anything"

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

nfs:

server: 170.106.37.33

path: "/usr/local/k8s/zookeeper/pv2"

persistentVolumeReclaimPolicy: Recycle

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: k8s-pv-zk03

labels:

app: zk

annotations:

volume.beta.kubernetes.io/storage-class: "anything"

spec:

capacity:

storage: 1Gi

accessModes:

- ReadWriteOnce

nfs:

server: 170.106.37.33

path: "/usr/local/k8s/zookeeper/pv3"

persistentVolumeReclaimPolicy: Recycle

(2)然后执行如下命令创建 PV:

kubectl apply -f zookeeper-pv.yaml

(3)执行如下命令可以查看是否创建成功:

kubectl get pv

2,创建 ZooKeeper 集群

apiVersion: v1

kind: Service

metadata:

name: zk-hs

labels:

app: zk

spec:

selector:

app: zk

clusterIP: None

ports:

- name: server

port: 2888

- name: leader-election

port: 3888

---

apiVersion: v1

kind: Service

metadata:

name: zk-cs

labels:

app: zk

spec:

selector:

app: zk

type: NodePort

ports:

- name: client

port: 2181

nodePort: 31811

---

apiVersion: apps/v1

kind: StatefulSet

metadata:

name: zk

spec:

serviceName: "zk-hs"

replicas: 3 # by default is 1

selector:

matchLabels:

app: zk # has to match .spec.template.metadata.labels

updateStrategy:

type: RollingUpdate

podManagementPolicy: Parallel

template:

metadata:

labels:

app: zk # has to match .spec.selector.matchLabels

spec:

containers:

- name: zk

imagePullPolicy: Always

image: leolee32/kubernetes-library:kubernetes-zookeeper1.0-3.4.10

ports:

- containerPort: 2181

name: client

- containerPort: 2888

name: server

- containerPort: 3888

name: leader-election

command:

- sh

- -c

- "start-zookeeper \

--servers=3 \

--data_dir=/var/lib/zookeeper/data \

--data_log_dir=/var/lib/zookeeper/data/log \

--conf_dir=/opt/zookeeper/conf \

--client_port=2181 \

--election_port=3888 \

--server_port=2888 \

--tick_time=2000 \

--init_limit=10 \

--sync_limit=5 \

--heap=4G \

--max_client_cnxns=60 \

--snap_retain_count=3 \

--purge_interval=12 \

--max_session_timeout=40000 \

--min_session_timeout=4000 \

--log_level=INFO"

readinessProbe:

exec:

command:

- sh

- -c

- "zookeeper-ready 2181"

initialDelaySeconds: 10

timeoutSeconds: 5

livenessProbe:

exec:

command:

- sh

- -c

- "zookeeper-ready 2181"

initialDelaySeconds: 10

timeoutSeconds: 5

volumeMounts:

- name: datadir

mountPath: /var/lib/zookeeper

volumeClaimTemplates:

- metadata:

name: datadir

annotations:

volume.beta.kubernetes.io/storage-class: "anything"

spec:

accessModes: [ "ReadWriteOnce" ]

resources:

requests:

storage: 1Gi

(2)然后执行如下命令开始创建:

kubectl apply -f zookeeper.yaml

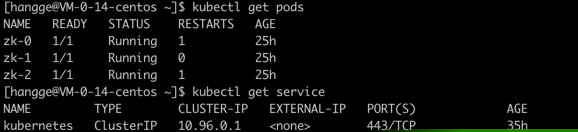

(3)执行如下命令可以查看是否创建成功:

kubectl get pods

kubectl get service

三、创建 Kafka 集群

- nfs 地址需要改成实际 NFS 服务器地址。

- status.hostIP 表示宿主机的 IP,即 Pod 实际最终部署的 Node 节点 IP(本文我是直接部署到 Master 节点上),将 KAFKA_ADVERTISED_HOST_NAME 设置为宿主机 IP 可以确保 K8s 集群外部也可以访问 Kafka

apiVersion: v1

kind: Service

metadata:

name: kafka-service-1

labels:

app: kafka-service-1

spec:

type: NodePort

ports:

- port: 9092

name: kafka-service-1

targetPort: 9092

nodePort: 30901

protocol: TCP

selector:

app: kafka-1

---

apiVersion: v1

kind: Service

metadata:

name: kafka-service-2

labels:

app: kafka-service-2

spec:

type: NodePort

ports:

- port: 9092

name: kafka-service-2

targetPort: 9092

nodePort: 30902

protocol: TCP

selector:

app: kafka-2

---

apiVersion: v1

kind: Service

metadata:

name: kafka-service-3

labels:

app: kafka-service-3

spec:

type: NodePort

ports:

- port: 9092

name: kafka-service-3

targetPort: 9092

nodePort: 30903

protocol: TCP

selector:

app: kafka-3

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: kafka-deployment-1

spec:

replicas: 1

selector:

matchLabels:

app: kafka-1

template:

metadata:

labels:

app: kafka-1

spec:

containers:

- name: kafka-1

image: wurstmeister/kafka

imagePullPolicy: IfNotPresent

ports:

- containerPort: 9092

env:

- name: KAFKA_ZOOKEEPER_CONNECT

value: zk-0.zk-hs.default.svc.cluster.local:2181,zk-1.zk-hs.default.svc.cluster.local:2181,zk-2.zk-hs.default.svc.cluster.local:2181

- name: KAFKA_BROKER_ID

value: "1"

- name: KAFKA_CREATE_TOPICS

value: mytopic:2:1

- name: KAFKA_LISTENERS

value: PLAINTEXT://0.0.0.0:9092

- name: KAFKA_ADVERTISED_PORT

value: "30901"

- name: KAFKA_ADVERTISED_HOST_NAME

valueFrom:

fieldRef:

fieldPath: status.hostIP

volumeMounts:

- name: datadir

mountPath: /var/lib/kafka

volumes:

- name: datadir

nfs:

server: 170.106.37.33

path: "/usr/local/k8s/kafka/pv1"

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: kafka-deployment-2

spec:

replicas: 1

selector:

matchLabels:

app: kafka-2

template:

metadata:

labels:

app: kafka-2

spec:

containers:

- name: kafka-2

image: wurstmeister/kafka

imagePullPolicy: IfNotPresent

ports:

- containerPort: 9092

env:

- name: KAFKA_ZOOKEEPER_CONNECT

value: zk-0.zk-hs.default.svc.cluster.local:2181,zk-1.zk-hs.default.svc.cluster.local:2181,zk-2.zk-hs.default.svc.cluster.local:2181

- name: KAFKA_BROKER_ID

value: "2"

- name: KAFKA_LISTENERS

value: PLAINTEXT://0.0.0.0:9092

- name: KAFKA_ADVERTISED_PORT

value: "30902"

- name: KAFKA_ADVERTISED_HOST_NAME

valueFrom:

fieldRef:

fieldPath: status.hostIP

volumeMounts:

- name: datadir

mountPath: /var/lib/kafka

volumes:

- name: datadir

nfs:

server: 170.106.37.33

path: "/usr/local/k8s/kafka/pv2"

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: kafka-deployment-3

spec:

replicas: 1

selector:

matchLabels:

app: kafka-3

template:

metadata:

labels:

app: kafka-3

spec:

containers:

- name: kafka-3

image: wurstmeister/kafka

imagePullPolicy: IfNotPresent

ports:

- containerPort: 9092

env:

- name: KAFKA_ZOOKEEPER_CONNECT

value: zk-0.zk-hs.default.svc.cluster.local:2181,zk-1.zk-hs.default.svc.cluster.local:2181,zk-2.zk-hs.default.svc.cluster.local:2181

- name: KAFKA_BROKER_ID

value: "3"

- name: KAFKA_LISTENERS

value: PLAINTEXT://0.0.0.0:9092

- name: KAFKA_ADVERTISED_PORT

value: "30903"

- name: KAFKA_ADVERTISED_HOST_NAME

valueFrom:

fieldRef:

fieldPath: status.hostIP

volumeMounts:

- name: datadir

mountPath: /var/lib/kafka

volumes:

- name: datadir

nfs:

server: 170.106.37.33

path: "/usr/local/k8s/kafka/pv3"

(2)然后执行如下命令开始创建:

kubectl apply -f kafka.yaml

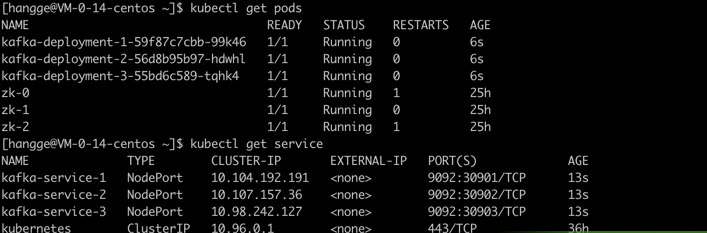

(3)执行如下命令可以查看是否创建成功:

kubectl get pods

kubectl get service

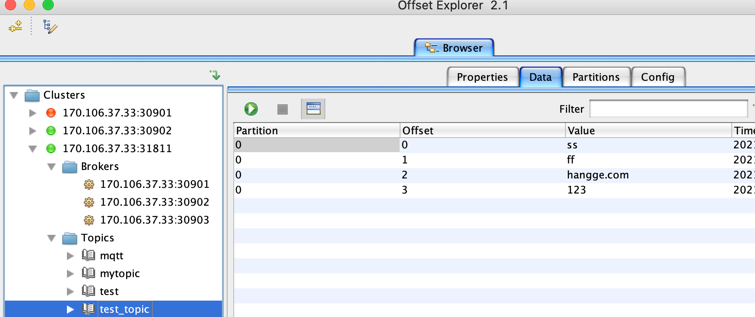

四、开始测试

1,K8s 集群内部测试

kubectl exec -it kafka-deployment-1-59f87c7cbb-99k46 /bin/bash

(2)接着执行如下命令创建一个名为 test_topic 的 topic:

kafka-topics.sh --create --topic test_topic --zookeeper zk-0.zk-hs.default.svc.cluster.local:2181,zk-1.zk-hs.default.svc.cluster.local:2181,zk-2.zk-hs.default.svc.cluster.local:2181 --partitions 1 --replication-factor 1

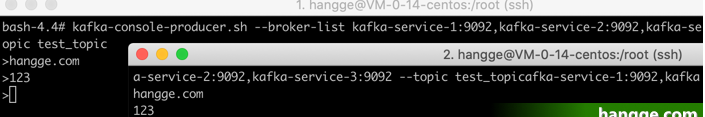

(3)创建后执行如下命令开启一个生产者,启动后可以直接在控制台中输入消息来发送,控制台中的每一行数据都会被视为一条消息来发送。

kafka-console-producer.sh --broker-list kafka-service-1:9092,kafka-service-2:9092,kafka-service-3:9092 --topic test_topic

(4)重新再打开一个终端连接服务器,然后进入容器后执行如下命令开启一个消费者:

kafka-console-consumer.sh --bootstrap-server kafka-service-1:9092,kafka-service-2:9092,kafka-service-3:9092 --topic test_topic

(5)再次打开之前的消息生产客户端来发送消息,并观察消费者这边对消息的输出来体验 Kafka 对消息的基础处理。

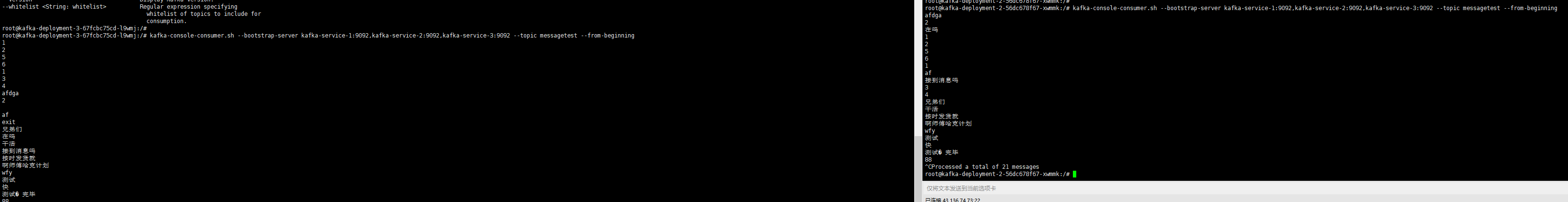

2,集群外出测试

更多的测试命令参考:

二、Kafka生产者消费者实例(基于命令行)

1.创建一个itcasttopic的主题

代码如下(示例):

kafka-topics.sh --create --topic itcasttopic --partitions 3 --replication-factor 2 -zookeeper 10.0.0.27:2181,10.0.0.103:2181,10.0.0.37:2181 2.hadoop01当生产者

代码如下(示例):

kafka-console-producer.sh --broker-list kafka-service-1:9092,kafka-service-2:9092,kafka-service-3:9092 --topic itcasttopic 3.hadoop02当消费者

代码如下(示例):

kafka-console-consumer.sh --from-beginning --topic itcasttopic --bootstrap-server kafka-service-1:9092,kafka-service-2:9092,kafka-service-3:9092 3.–list查看所有主题

代码如下(示例):

kafka-topics.sh --list --zookeeper 10.0.0.27:2181,10.0.0.103:2181,10.0.0.37:2181 4.删除主题

代码如下(示例):

kafka-topics.sh --delete --zookeeper 10.0.0.27:2181,10.0.0.103:2181,10.0.0.37:2181 --topic itcasttopic 5.关闭kafka

代码如下(示例):

bin/kafka-server-stop.sh config/server.properties

K8s - 安装部署Kafka、Zookeeper集群教程(支持从K8s外部访问)的更多相关文章

- Zookeeper详解(02) - zookeeper安装部署-单机模式-集群模式

Zookeeper详解(02) - zookeeper安装部署-单机模式-集群模式 安装包下载 官网首页:https://zookeeper.apache.org/ 历史版本下载地址:http://a ...

- kafka+zookeeper集群

参考: kafka中文文档 快速搭建kafka+zookeeper高可用集群 kafka+zookeeper集群搭建 kafka+zookeeper集群部署 kafka集群部署 kafk ...

- 消息中间件kafka+zookeeper集群部署、测试与应用

业务系统中,通常会遇到这些场景:A系统向B系统主动推送一个处理请求:A系统向B系统发送一个业务处理请求,因为某些原因(断电.宕机..),B业务系统挂机了,A系统发起的请求处理失败:前端应用并发量过大, ...

- Kafka+Zookeeper集群搭建

上次介绍了ES集群搭建的方法,希望能帮助大家,这儿我再接着介绍kafka集群,接着上次搭建的效果. 首先我们来简单了解下什么是kafka和zookeeper? Apache kafka 是一个分布式的 ...

- Kafka/Zookeeper集群的实现(二)

[root@kafkazk1 ~]# wget http://mirror.bit.edu.cn/apache/zookeeper/zookeeper-3.4.12/zookeeper-3.4.12. ...

- 安装kafka + zookeeper集群

系统:centos 7.4 要求:jdk :1.8.x kafka_2.11-1.1.0 1.绑定/etc/hosts 10.10.10.xxx online-ops-xxx-0110.10 ...

- KAFKA && zookeeper 集群安装

服务器:#vim /etc/hosts10.16.166.90 sh-xxx-xxx-xxx-online-0110.16.168.220 sh-xx-xxx-xxx-online-0210.16.1 ...

- Linux下部署Kafka分布式集群,安装与测试

注意:部署Kafka之前先部署环境JAVA.Zookeeper 准备三台CentOS_6.5_x64服务器,分别是:IP: 192.168.0.249 dbTest249 Kafka IP: 192. ...

- docker-搭建 kafka+zookeeper集群

拉取容器 docker pull wurstmeister/zookeeper docker pull wurstmeister/kafka 这里演示使 ...

- 在三台Centos或Windows中部署三台Zookeeper集群配置

一.安装包 1.下载最新版(3.4.13):https://archive.apache.org/dist/zookeeper/ 下载https://archive.apache.org/dist/ ...

随机推荐

- 高效运营新纪元:智能化华为云Astro低代码重塑组装式交付

摘要:程序员不再需要盲目编码,填补单调乏味的任务空白,他们可以专注于设计和创新:企业不必困惑于复杂的开发过程,可以更好地满足客户需求以及业务策略迭代. 本文分享自华为云社区<高效运营新纪元:智能 ...

- Redis的设计与实现(5)-整数集合

整数集合(intset)是集合键的底层实现之一: 当一个集合只包含整数值元素, 并且这个集合的元素数量不多时, Redis 就会使用整数集合作为集合键的底层实现. 整数集合 (intset) 是 Re ...

- 用虚拟机配置Linux实验环境

我们平时经常需要利用VMware搭建Linux实验环境,下面我将搭建步骤整理了一下. 安装虚拟机 系统镜像:CentOS-7-x86_64-Everything-1708.iso 用VMware安装系 ...

- Centos7安装Python3.x

一.修改yum源 查看Centos发行版本 cat /etc/redhat-release 换阿里云yum源 备份原始yum源 mv /etc/yum.repos.d/CentOS-Base.repo ...

- 用 Hugging Face 推理端点部署 LLM

开源的 LLM,如 Falcon.(Open-)LLaMA.X-Gen.StarCoder 或 RedPajama,近几个月来取得了长足的进展,能够在某些用例中与闭源模型如 ChatGPT 或 GPT ...

- 关于在modelsim中 仿真 ROM IP核 读取不了 mif文件 的解决方法

在modelsim中 仿真 ROM IP核 读取不了 mif文件 . 出现状况: 显示无法打开 rom_8x256.mif 文件 .点开modelsim下面文件的内存列表,可看到内存全为0. 查看自身 ...

- Cobalt Strike使用教程二

0x00 前言 继前一章介绍了Cobalt Strike的基本用法,本章接着介绍如何攻击.提权.维权等. 0x01 与Metasploit联动 Cobalt Strike → Metasploit m ...

- Room组件的用法

一.Android官方ORM数据库Room Android采用Sqlite作为数据库存储.但由于Sqlite代码写起来繁琐且容易出错,因此Google推出了Room,其实Room就是在Sqlite上面 ...

- 基于Supabase开发公众号接口

在<开源BaaS平台Supabase介绍>一文中我们对什么是BaaS以及一个优秀的BaaS平台--Supabase做了一些介绍.在这之后,出于探究的目的,我利用一些空闲时间基于Micros ...

- Win11和Win10怎么禁用驱动程序强制签名? 关闭Windows系统驱动强制签名的技巧?

前言 什么是驱动程序签名? 驱动程序签名又叫做驱动程序的数字签名,它是由微软的Windows硬件设备质量实验室完成的.硬件开发商将自己的硬件设备和相应的驱动程序交给该实验室,由实验室对其进行测试,测试 ...