Big Data [4]: MapReduce (word count; secondary sort; counters; join; distributed cache)

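Preface:

Building on the previous posts in this series, we can now start on MapReduce. This post first explains the concept and working principles of MapReduce, then learns by doing through five experiments (word count, secondary sort, counters, join, distributed cache).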

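I. Overview

Definition

MapReduce is a computation model. Put simply, a large batch of work (data) is split up and processed (MAP), and the partial results are then merged into a final result (REDUCE). The benefit is that once the work is split, many machines can process it in parallel, which reduces the overall running time.

Scope: suitable when the data volume is large but the number of distinct kinds of data is small enough to fit in memory.

Basic principle and key points: hand the data to different machines for processing; partition the data; reduce the results.

Understanding MapReduce and Yarn: in newer versions of Hadoop, Yarn is the resource management and scheduling framework and serves as the runtime environment for MapReduce programs under Hadoop. Besides running on Yarn, MapReduce can also run on schedulers such as Mesos or Corona; each scheduler requires its own adaptation of Hadoop. (For an introduction to YARN see the previous post >> http://www.cnblogs.com/1996swg/p/7286490.html )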

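MapReduce programming

Writing a MapReduce program that runs on the Yarn framework in Hadoop does not require developing your own MRAppMaster and YARNRunner, because Hadoop already ships general-purpose versions of both. In most cases you only need to write the business logic for the Map and Reduce steps.

Writing a MapReduce program is not complicated; the key is to grasp the distributed programming mindset and method. The computation is divided into the following five steps:

(1) Iterate. Traverse the input data and parse it into key/value pairs.

(2) Map the input key/value pairs to other key/value pairs.

(3) Group the intermediate data by key (grouping).

(4) Reduce the data one group at a time.

(5) Iterate. Save the final key/value pairs to the output files.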
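The five steps above can be sketched in plain Java without any Hadoop dependency. This is only an in-memory illustration of the data flow for a word-count style task; the class name FiveStepSketch and its methods are made up for this example and are not part of any Hadoop API.

```java
import java.util.AbstractMap;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory illustration of the five steps; not Hadoop code.
class FiveStepSketch {
    // Steps 1-2: iterate over the input lines and map every word to a (word, 1) pair.
    static List<Map.Entry<String, Integer>> map(List<String> lines) {
        List<Map.Entry<String, Integer>> pairs = new ArrayList<>();
        for (String line : lines) {
            for (String word : line.trim().split("\\s+")) {
                if (!word.isEmpty()) {
                    pairs.add(new AbstractMap.SimpleEntry<>(word, 1));
                }
            }
        }
        return pairs;
    }

    // Step 3: group the intermediate values by key (a TreeMap keeps the keys sorted).
    static Map<String, List<Integer>> group(List<Map.Entry<String, Integer>> pairs) {
        Map<String, List<Integer>> groups = new TreeMap<>();
        for (Map.Entry<String, Integer> p : pairs) {
            groups.computeIfAbsent(p.getKey(), k -> new ArrayList<>()).add(p.getValue());
        }
        return groups;
    }

    // Step 4: reduce each group to a single value by summing its members.
    static Map<String, Integer> reduce(Map<String, List<Integer>> groups) {
        Map<String, Integer> result = new TreeMap<>();
        for (Map.Entry<String, List<Integer>> g : groups.entrySet()) {
            int sum = 0;
            for (int v : g.getValue()) {
                sum += v;
            }
            result.put(g.getKey(), sum);
        }
        return result;
    }

    static Map<String, Integer> run(List<String> lines) {
        return reduce(group(map(lines)));
    }

    public static void main(String[] args) {
        // Step 5: iterate over the final pairs and write them out.
        run(Arrays.asList("hello world", "hello hadoop"))
                .forEach((k, v) -> System.out.println(k + "\t" + v));
    }
}
```

In a real job the framework performs steps 1, 3 and 5, so only the bodies of map and reduce have to be written by hand.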

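Java API overview

(1) InputFormat: describes the format of the input data. The commonly used TextInputFormat provides the following two functions:

Data splitting: splits the input data into a number of splits according to some policy, which determines the number of Map Tasks and the split each one handles.

Feeding the Mapper: given a split, parses it into individual key/value pairs.

(2) OutputFormat: describes the format of the output data; it writes the key/value pairs supplied by the user into files of a specific format.

(3) Mapper/Reducer: Mapper and Reducer encapsulate the application's data-processing logic.

(4) Writable: Hadoop's own serialization interface. Classes that implement it can be used as values in a MapReduce job.

(5) WritableComparable: extends Writable with the Comparable interface. Classes that implement it can be used as keys in a MapReduce job (because keys are compared and sorted).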
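To make the Writable/WritableComparable contract more concrete, here is a small sketch that mirrors its shape (write, readFields, compareTo) using only java.io. The class PairKeySketch is a hypothetical stand-in, and it deliberately does not implement the real Hadoop interfaces so that it runs without the Hadoop jars.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInput;
import java.io.DataInputStream;
import java.io.DataOutput;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical stand-in that mimics the shape of a WritableComparable key.
class PairKeySketch implements Comparable<PairKeySketch> {
    int first;
    int second;

    // A no-arg constructor lets a framework create an empty instance and then fill it.
    PairKeySketch() { }

    PairKeySketch(int first, int second) {
        this.first = first;
        this.second = second;
    }

    // Serialize the fields in a fixed order.
    void write(DataOutput out) throws IOException {
        out.writeInt(first);
        out.writeInt(second);
    }

    // Deserialize the fields in the same order they were written.
    void readFields(DataInput in) throws IOException {
        first = in.readInt();
        second = in.readInt();
    }

    // Keys must be comparable because they are sorted before the reduce step.
    @Override
    public int compareTo(PairKeySketch other) {
        int cmp = Integer.compare(first, other.first);
        return cmp != 0 ? cmp : Integer.compare(second, other.second);
    }

    // Write a key to bytes and read it back into a fresh instance.
    static PairKeySketch roundTrip(PairKeySketch key) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            key.write(new DataOutputStream(bytes));
            PairKeySketch copy = new PairKeySketch();
            copy.readFields(new DataInputStream(new ByteArrayInputStream(bytes.toByteArray())));
            return copy;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        PairKeySketch copy = roundTrip(new PairKeySketch(3, 7));
        System.out.println(copy.first + "\t" + copy.second);
    }
}
```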

二 单词计数实验

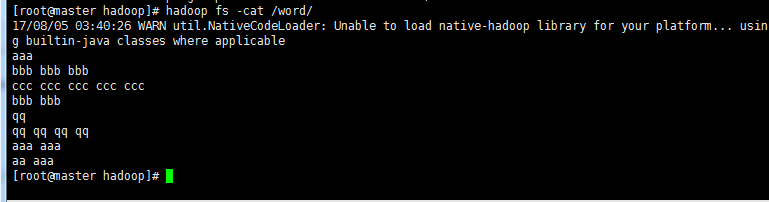

!单词计数文件word

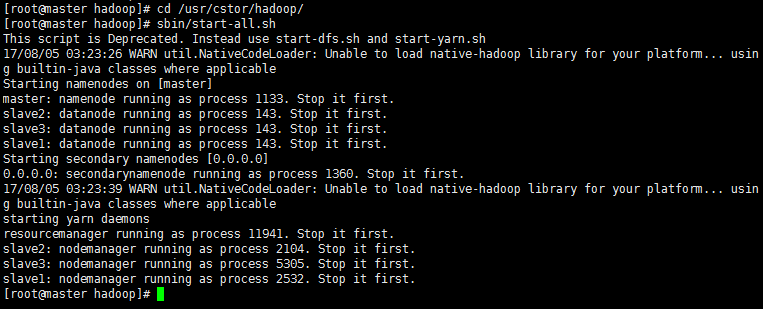

1' Start Hadoop. Run the commands below to start the Hadoop system deployed in the earlier posts.

Commands:

cd /usr/cstor/hadoop/

sbin/start-all.sh

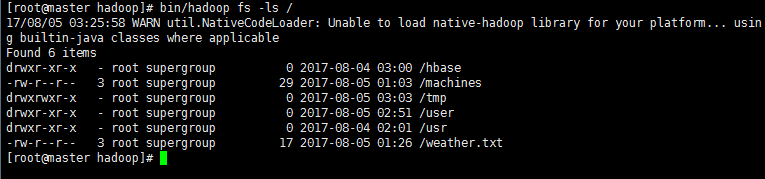

2' Verify that HDFS has no wordcount directory. At this point there should be no wordcount directory on HDFS.

cd /usr/cstor/hadoop/

bin/hadoop fs -ls /    # list the files under the HDFS root directory /

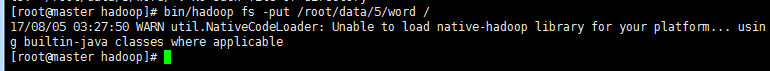

3' Upload the data file to HDFS

cd /usr/cstor/hadoop/

bin/hadoop fs -put /root/data/5/word /

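4' Write the MapReduce program

Create a new MapReduce project in Eclipse (see this post for the method >> http://www.cnblogs.com/1996swg/p/7286136.html ) and add a new class WordCount.

The main work is writing the Map and Reduce classes. The Map side extends the Mapper class from the org.apache.hadoop.mapreduce package and overrides its map method; the Reduce side extends the Reducer class from the same package and overrides its reduce method.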

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.TextInputFormat;

import org.apache.hadoop.mapreduce.lib.output.TextOutputFormat;

import org.apache.hadoop.mapreduce.lib.partition.HashPartitioner;

import java.io.IOException;

import java.util.StringTokenizer;

public class WordCount {

public static class TokenizerMapper extends Mapper<Object, Text, Text, IntWritable> {

private final static IntWritable one = new IntWritable(1);

private Text word = new Text();

// map method: tokenizes one line of text and writes <word, 1> for every word read

public void map(Object key, Text value, Context context)throws IOException, InterruptedException {

StringTokenizer itr = new StringTokenizer(value.toString());

while (itr.hasMoreTokens()) {

word.set(itr.nextToken());

context.write(word, one); // emit <word, 1>

}}}

// Reduce class: for each word, adds up all the values in the VList of its <K, VList>

public static class IntSumReducer extends Reducer<Text, IntWritable, Text, IntWritable> {

private IntWritable result = new IntWritable();

public void reduce(Text key, Iterable<IntWritable> values,Context context)

throws IOException, InterruptedException {

int sum = 0;

for (IntWritable val : values) {

sum += val.get(); // e.g. for <Hello,1><Hello,1>, adds the two 1s together

}

result.set(sum);

context.write(key, result); // emit <word, number of occurrences>

}}

public static void main(String[] args) throws Exception { // main method, program entry point

Configuration conf = new Configuration(); // create the configuration object

Job job = Job.getInstance(conf, "WordCount"); // create the Job (the Job constructor is deprecated)

job.setInputFormatClass(TextInputFormat.class); // use the default input format class

TextInputFormat.setInputPaths(job, args[0]); // location of the file(s) to process

job.setJarByClass(WordCount.class); // set the main class

job.setMapperClass(TokenizerMapper.class); // use the custom Map class defined above

job.setCombinerClass(IntSumReducer.class); // enable the Combiner

job.setMapOutputKeyClass(Text.class); // K type of the Map output <K,V>

job.setMapOutputValueClass(IntWritable.class); // V type of the Map output <K,V>

job.setPartitionerClass(HashPartitioner.class); // use the default HashPartitioner

job.setReducerClass(IntSumReducer.class); // use the custom Reduce class defined above

job.setNumReduceTasks(Integer.parseInt(args[2])); // number of Reduce tasks

job.setOutputKeyClass(Text.class); // K type of the Reduce output <K,V>

job.setOutputValueClass(IntWritable.class); // V type of the Reduce output <K,V>; the reducer emits IntWritable

job.setOutputFormatClass(TextOutputFormat.class); // use the default output format class

TextOutputFormat.setOutputPath(job, new Path(args[1])); // location of the output files

System.exit(job.waitForCompletion(true) ? 0 : 1); // submit the job and monitor its status

}

}

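5' Package as a jar file and upload

Assuming the packaged file is named hdpAction.jar and the main class WordCount is in package mapreduce1, the application can be submitted to the YARN cluster with the following command.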

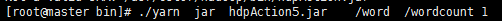

./yarn jar hdpAction.jar mapreduce1.WordCount /word /wordcount 1

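Here "yarn" is the command and "jar" its argument, followed by the path of the packaged code; "mapreduce1" is the package name, "WordCount" the main class name, "/word" the location of the input file in HDFS, "/wordcount" the output location in HDFS, and "1" the number of Reduce tasks.

Note: if a main class was specified when packaging, there is no need to pass mapreduce1.WordCount on the command line!

Output: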

17/08/05 03:37:05 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/08/05 03:37:06 INFO client.RMProxy: Connecting to ResourceManager at master/10.1.21.27:8032

17/08/05 03:37:06 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

17/08/05 03:37:07 INFO input.FileInputFormat: Total input paths to process : 1

17/08/05 03:37:07 INFO mapreduce.JobSubmitter: number of splits:1

17/08/05 03:37:07 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1501872322130_0004

17/08/05 03:37:07 INFO impl.YarnClientImpl: Submitted application application_1501872322130_0004

17/08/05 03:37:07 INFO mapreduce.Job: The url to track the job: http://master:8088/proxy/application_1501872322130_0004/

17/08/05 03:37:07 INFO mapreduce.Job: Running job: job_1501872322130_0004

17/08/05 03:37:12 INFO mapreduce.Job: Job job_1501872322130_0004 running in uber mode : false

17/08/05 03:37:12 INFO mapreduce.Job: map 0% reduce 0%

17/08/05 03:37:16 INFO mapreduce.Job: map 100% reduce 0%

17/08/05 03:37:22 INFO mapreduce.Job: map 100% reduce 100%

17/08/05 03:37:22 INFO mapreduce.Job: Job job_1501872322130_0004 completed successfully

17/08/05 03:37:22 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=54

FILE: Number of bytes written=232239

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=166

HDFS: Number of bytes written=28

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=2275

Total time spent by all reduces in occupied slots (ms)=2598

Total time spent by all map tasks (ms)=2275

Total time spent by all reduce tasks (ms)=2598

Total vcore-seconds taken by all map tasks=2275

Total vcore-seconds taken by all reduce tasks=2598

Total megabyte-seconds taken by all map tasks=2329600

Total megabyte-seconds taken by all reduce tasks=2660352

Map-Reduce Framework

Map input records=8

Map output records=20

Map output bytes=154

Map output materialized bytes=54

Input split bytes=88

Combine input records=20

Combine output records=5

Reduce input groups=5

Reduce shuffle bytes=54

Reduce input records=5

Reduce output records=5

Spilled Records=10

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=47

CPU time spent (ms)=1260

Physical memory (bytes) snapshot=421257216

Virtual memory (bytes) snapshot=1647611904

Total committed heap usage (bytes)=402653184

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=78

File Output Format Counters

Bytes Written=28

> The result is written to part-r-00000 under the wordcount directory; use a hadoop command to view the generated file.

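III. Secondary Sort

By default MR sorts by key, but sometimes the values need to be sorted as well. One option is to sort the collected values in the reduce phase, but with a huge number of values this can lead to problems such as running out of memory. This is where secondary sort applies: the sorting of values is folded into the MR computation itself instead of being done separately.

Secondary sort means sorting first by the first field, then sorting rows with the same first field by the second field, without disturbing the result of the first sort.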
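The ordering that secondary sort produces can be illustrated with an ordinary in-memory comparator. This is a sketch of the ordering only, not of the MapReduce mechanics; the class name SecondarySortSketch and the sample rows are invented for the example.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.List;

// Hypothetical in-memory illustration of the secondary-sort ordering.
class SecondarySortSketch {
    // Each row is {first, second}. Sort by the first field, then by the second
    // field among rows that share the same first field.
    static List<int[]> sort(List<int[]> rows) {
        List<int[]> sorted = new ArrayList<>(rows);
        sorted.sort(Comparator.<int[]>comparingInt(r -> r[0]).thenComparingInt(r -> r[1]));
        return sorted;
    }

    public static void main(String[] args) {
        // Made-up sample rows, not the contents of secsortdata.txt.
        List<int[]> rows = Arrays.asList(
                new int[] {7, 3}, new int[] {5, 9}, new int[] {7, 1}, new int[] {5, 2});
        for (int[] r : sort(rows)) {
            System.out.println(r[0] + "\t" + r[1]);
        }
    }
}
```

In the MapReduce version the same comparison lives in the composite key, so the framework's shuffle sort does the work and no reducer has to hold all values in memory.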

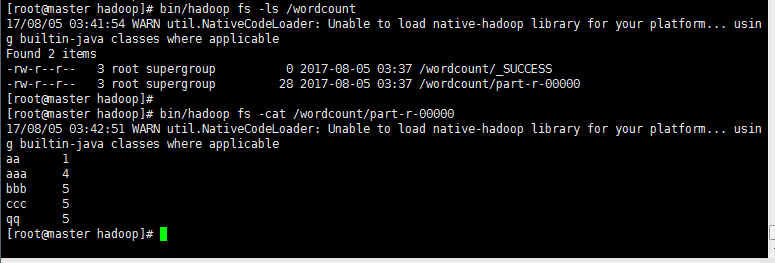

!需排序文件secsortdata.txt

1' 编写程序IntPair 类和主类 SecondarySort类

同第一个实验在eclipse编程的创建方法!

程序主要难点在于排序和聚合。

对于排序我们需要定义一个IntPair类用于数据的存储,并在IntPair类内部自定义Comparator类以实现第一字段和第二字段的比较。

对于聚合我们需要定义一个FirstPartitioner类,在FirstPartitioner类内部指定聚合规则为第一字段。

此外,我们还需要开启MapReduce框架自定义Partitioner 功能和GroupingComparator功能。
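The idea behind a partitioner keyed on the first field can be shown in isolation. The sketch below is a standalone helper, not the FirstPartitioner class itself; the class and method names are hypothetical. It masks the sign bit instead of calling Math.abs, because Math.abs(Integer.MIN_VALUE) is still negative. Hadoop's own HashPartitioner uses the same masking trick.

```java
// Hypothetical standalone helper showing how a first-field partitioner picks a partition.
class FirstFieldPartitionSketch {
    // Returns a partition index in [0, numPartitions) that depends only on the first field,
    // so all records with the same first field reach the same reducer.
    static int partition(int first, int numPartitions) {
        // Clearing the sign bit keeps the result non-negative for every int,
        // including Integer.MIN_VALUE, where Math.abs would overflow.
        return (first & Integer.MAX_VALUE) % numPartitions;
    }

    public static void main(String[] args) {
        System.out.println(partition(7, 3));
        System.out.println(partition(Integer.MIN_VALUE, 3));
    }
}
```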

IntPair.java

package mr;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import org.apache.hadoop.io.IntWritable;

import org.apache.hadoop.io.WritableComparable;

public class IntPair implements WritableComparable<IntPair> {

private IntWritable first;

private IntWritable second;

public void set(IntWritable first, IntWritable second) {

this.first = first;

this.second = second;

}

// Note: a no-arg constructor is required, otherwise instantiation by reflection fails.

public IntPair() {

set(new IntWritable(), new IntWritable());

}

public IntPair(int first, int second) {

set(new IntWritable(first), new IntWritable(second));

}

public IntPair(IntWritable first, IntWritable second) {

set(first, second);

}

public IntWritable getFirst() {

return first;

}

public void setFirst(IntWritable first) {

this.first = first;

}

public IntWritable getSecond() {

return second;

}

public void setSecond(IntWritable second) {

this.second = second;

}

public void write(DataOutput out) throws IOException {

first.write(out);

second.write(out);

}

public void readFields(DataInput in) throws IOException {

first.readFields(in);

second.readFields(in);

}

public int hashCode() {

return first.hashCode() * 163 + second.hashCode();

}

public boolean equals(Object o) {

if (o instanceof IntPair) {

IntPair tp = (IntPair) o;

return first.equals(tp.first) && second.equals(tp.second);

}

return false;

}

public String toString() {

return first + "\t" + second;

}

public int compareTo(IntPair tp) {

int cmp = first.compareTo(tp.first);

if (cmp != 0) {

return cmp;

}

return second.compareTo(tp.second);

}

}

SecondarySort.java

package mr;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.NullWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.io.WritableComparable;

import org.apache.hadoop.io.WritableComparator;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Partitioner;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

public class SecondarySort {

static class TheMapper extends Mapper<LongWritable, Text, IntPair, NullWritable> {

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

String[] fields = value.toString().split("\t");

int field1 = Integer.parseInt(fields[0]);

int field2 = Integer.parseInt(fields[1]);

context.write(new IntPair(field1,field2), NullWritable.get());

}

}

static class TheReducer extends Reducer<IntPair, NullWritable,IntPair, NullWritable> {

//private static final Text SEPARATOR = new Text("------------------------------------------------");

@Override

protected void reduce(IntPair key, Iterable<NullWritable> values, Context context)

throws IOException, InterruptedException {

context.write(key, NullWritable.get());

}

}

public static class FirstPartitioner extends Partitioner<IntPair, NullWritable> {

public int getPartition(IntPair key, NullWritable value,

int numPartitions) {

return (key.getFirst().get() & Integer.MAX_VALUE) % numPartitions; // mask the sign bit; Math.abs overflows for Integer.MIN_VALUE

}

}

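// Without this class, both the first and the second column sort in ascending order by default.

// This class keeps the first column ascending and sorts the second column in descending order.

public static class KeyComparator extends WritableComparator {

// The no-arg constructor is required; omitting it causes an error.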

protected KeyComparator() {

super(IntPair.class, true);

}

public int compare(WritableComparable a, WritableComparable b) {

IntPair ip1 = (IntPair) a;

IntPair ip2 = (IntPair) b;

// First column: ascending order

int cmp = ip1.getFirst().compareTo(ip2.getFirst());

if (cmp != 0) {

return cmp;

}

// When the first columns are equal, sort the second column in descending order

return -ip1.getSecond().compareTo(ip2.getSecond());

}

}

// Program entry point

public static void main(String[] args) throws Exception {

Configuration conf = new Configuration();

Job job = Job.getInstance(conf);

job.setJarByClass(SecondarySort.class);

// Mapper settings

job.setMapperClass(TheMapper.class);

// When the Mapper's output key and value types are the same as the

// Reducer's output key and value types, the next two calls can be omitted.

//job.setMapOutputKeyClass(IntPair.class);

//job.setMapOutputValueClass(NullWritable.class);

FileInputFormat.setInputPaths(job, new Path(args[0]));

// Partitioning settings

job.setPartitionerClass(FirstPartitioner.class);

// Sort the map output keys with the custom comparator

job.setSortComparatorClass(KeyComparator.class);

//job.setGroupingComparatorClass(GroupComparator.class);

// Reducer settings

job.setReducerClass(TheReducer.class);

job.setOutputKeyClass(IntPair.class);

job.setOutputValueClass(NullWritable.class);

FileOutputFormat.setOutputPath(job, new Path(args[1]));

// Number of Reducers

int reduceNum = 1;

if(args.length >= 3 && args[2] != null){

reduceNum = Integer.parseInt(args[2]);

}

job.setNumReduceTasks(reduceNum);

// Exit with a non-zero status if the job fails

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

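2' Package and submit

Package the code with Eclipse and select mr.SecondarySort as the main class. If no main class is specified when packaging, the class to run must be named at execution time. Assuming the packaged file is named hdpAction6.jar and the main class SecondarySort is in package mr, the application can be submitted to the Hadoop cluster with the following command.

bin/hadoop jar hdpAction6.jar mr.SecondarySort /user/mapreduce/secsort/in/secsortdata.txt /user/mapreduce/secsort/out 1

Here "hadoop" is the command and "jar" its argument, followed by the packaged jar. "/user/mapreduce/secsort/in/secsortdata.txt" is the location of the input file in HDFS (upload it yourself if it is not there yet), "/user/mapreduce/secsort/out/" is the output location in HDFS, and "1" is the number of Reduce tasks.

If the main class was already set when packaging, do not specify it again on the command line!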

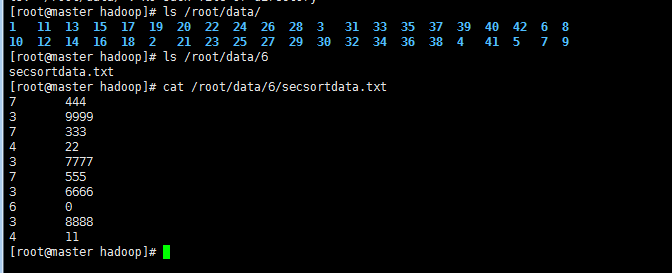

(To upload secsortdata.txt to HDFS, use: "hadoop fs -put <local file including path> <hdfs path>")

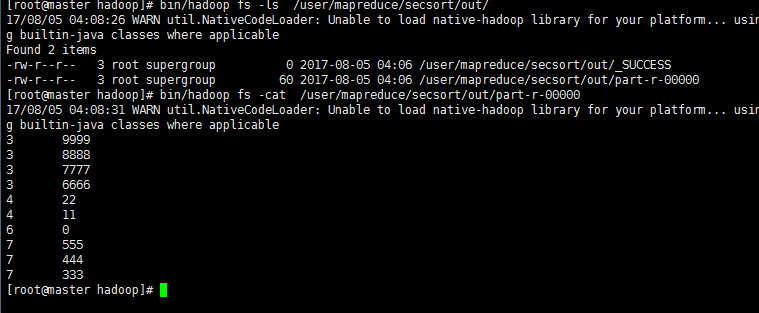

Output:

[root@master hadoop]# bin/hadoop jar SecondarySort.jar /secsortdata.txt /user/mapreduce/secsort/out 1

Not a valid JAR: /usr/cstor/hadoop/SecondarySort.jar

[root@master hadoop]# bin/hadoop jar hdpAction6.jar /secsortdata.txt /user/mapreduce/secsort/out 1

17/08/05 04:05:48 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/08/05 04:05:49 INFO client.RMProxy: Connecting to ResourceManager at master/10.1.21.27:8032

17/08/05 04:05:49 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

17/08/05 04:05:50 INFO input.FileInputFormat: Total input paths to process : 1

17/08/05 04:05:50 INFO mapreduce.JobSubmitter: number of splits:1

17/08/05 04:05:50 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1501872322130_0007

17/08/05 04:05:50 INFO impl.YarnClientImpl: Submitted application application_1501872322130_0007

17/08/05 04:05:50 INFO mapreduce.Job: The url to track the job: http://master:8088/proxy/application_1501872322130_0007/

17/08/05 04:05:50 INFO mapreduce.Job: Running job: job_1501872322130_0007

17/08/05 04:05:56 INFO mapreduce.Job: Job job_1501872322130_0007 running in uber mode : false

17/08/05 04:05:56 INFO mapreduce.Job: map 0% reduce 0%

17/08/05 04:06:00 INFO mapreduce.Job: map 100% reduce 0%

17/08/05 04:06:05 INFO mapreduce.Job: map 100% reduce 100%

17/08/05 04:06:06 INFO mapreduce.Job: Job job_1501872322130_0007 completed successfully

17/08/05 04:06:07 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=106

FILE: Number of bytes written=230897

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=159

HDFS: Number of bytes written=60

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=2534

Total time spent by all reduces in occupied slots (ms)=2799

Total time spent by all map tasks (ms)=2534

Total time spent by all reduce tasks (ms)=2799

Total vcore-seconds taken by all map tasks=2534

Total vcore-seconds taken by all reduce tasks=2799

Total megabyte-seconds taken by all map tasks=2594816

Total megabyte-seconds taken by all reduce tasks=2866176

Map-Reduce Framework

Map input records=10

Map output records=10

Map output bytes=80

Map output materialized bytes=106

Input split bytes=99

Combine input records=0

Combine output records=0

Reduce input groups=10

Reduce shuffle bytes=106

Reduce input records=10

Reduce output records=10

Spilled Records=20

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=55

CPU time spent (ms)=1490

Physical memory (bytes) snapshot=419209216

Virtual memory (bytes) snapshot=1642618880

Total committed heap usage (bytes)=402653184

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=60

File Output Format Counters

Bytes Written=60

The generated file shows the result of the secondary sort:

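IV. Counters

1' What are MapReduce counters?

Counters record the progress and state of a job's execution; you can think of them as a kind of log. We can insert a counter at some point in a program to record changes in the data or in progress.

What can MapReduce counters do?

MapReduce counters (Counter) give us a window onto the detailed data of a MapReduce job at run time. They are very helpful for performance tuning; most evaluation of MapReduce optimizations is expressed through the values of these counters.

In many cases a user needs to understand the data being analyzed, even though this is not the core of the analysis task. Take counting the invalid records in a dataset as an example: if the proportion of invalid records turns out to be quite high, it is worth asking why. Is the detection program flawed, or is the dataset genuinely of low quality and full of invalid records? If the quality of the dataset is the problem, it may be necessary to enlarge the dataset so that enough valid records remain for a meaningful analysis.

Counters are an effective way to gather statistics about a job, whether for quality control or for application-level statistics. They also help diagnose system faults. If you are tempted to put a log message into a map or reduce task, it is often better to use a counter to record whether a particular event occurred. For large distributed jobs counters are more convenient: first, reading counter values is easier than retrieving log output; second, counting occurrences of a particular event from counter values is much easier than analyzing a pile of log files.

Built-in counters

MapReduce comes with many default counters. Knowing what they mean makes job results easier to read: for example the bytes read and written, the number of bytes and records input and output on the Map side, and the number of bytes and records input and output on the Reduce side. For now it is enough to be aware of these built-in counters and to know their group names (groupName) and counter names (counterName); later, a counter can be looked up by its groupName and counterName.

Custom counters

MapReduce lets users define counters in their programs; counter values can be incremented in the mapper or reducer. A set of counters is defined by a Java enum type, which groups them. A job may define any number of enum types, and each enum may have any number of fields. The enum's name is the group name and its fields are the counter names. Counters are global: the MapReduce framework aggregates them across all map and reduce tasks and produces a final result when the job finishes.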
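The enum-based grouping described above can be imitated in plain Java. The sketch below keeps the counters in an EnumMap and is only an in-memory analogy; EnumCounterSketch and its ErrorCounter enum are hypothetical names. In an actual mapper the equivalent step would be along the lines of context.getCounter(ErrorCounter.TOO_LONG).increment(1).

```java
import java.util.EnumMap;
import java.util.Map;

// Hypothetical in-memory analogy of enum-defined counters; not Hadoop code.
class EnumCounterSketch {
    // The enum name plays the role of the counter group, its fields are the counter names.
    enum ErrorCounter { TOO_LONG, TOO_SHORT }

    // Counts tab-separated records that have more or fewer fields than expected.
    static Map<ErrorCounter, Long> count(String[] lines, int expectedFields) {
        Map<ErrorCounter, Long> counters = new EnumMap<>(ErrorCounter.class);
        for (ErrorCounter c : ErrorCounter.values()) {
            counters.put(c, 0L);
        }
        for (String line : lines) {
            int fields = line.split("\t").length;
            if (fields > expectedFields) {
                counters.merge(ErrorCounter.TOO_LONG, 1L, Long::sum);
            } else if (fields < expectedFields) {
                counters.merge(ErrorCounter.TOO_SHORT, 1L, Long::sum);
            }
        }
        return counters;
    }

    public static void main(String[] args) {
        // Made-up sample records.
        String[] lines = {"a\tb\tc", "a\tb", "a\tb\tc\td", "x\ty\tz"};
        count(lines, 3).forEach((name, value) -> System.out.println(name + "=" + value));
    }
}
```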

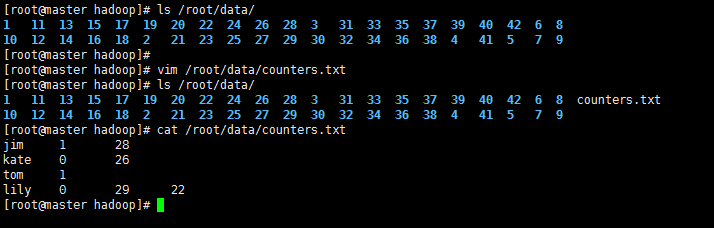

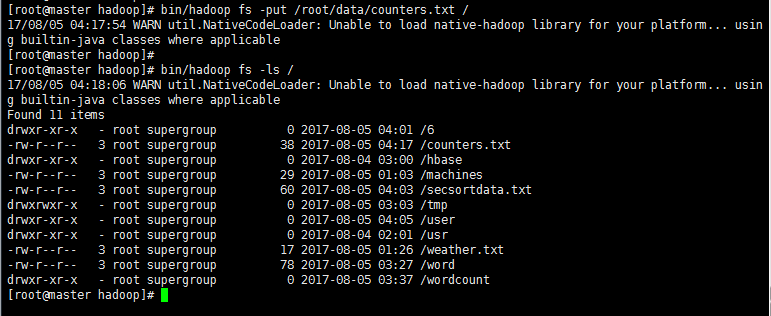

2' > Create the counter input file counters.txt

> Upload the file to HDFS

3' Write the program Counters.java

package mr;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.mapreduce.Counter;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.util.GenericOptionsParser;

public class Counters {

public static class MyCounterMap extends Mapper<LongWritable, Text, Text, Text> {

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

String[] fields = value.toString().split("\t");

if (fields.length > 3) {

// "ErrorCounter" is the group name, "toolong" the counter name

Counter ct = context.getCounter("ErrorCounter", "toolong");

ct.increment(1); // add one to the counter

} else if (fields.length < 3) {

Counter ct = context.getCounter("ErrorCounter", "tooshort");

ct.increment(1);

}

}

}

public static void main(String[] args) throws IOException, InterruptedException, ClassNotFoundException {

Configuration conf = new Configuration();

String[] otherArgs = new GenericOptionsParser(conf, args).getRemainingArgs();

if (otherArgs.length != 2) {

System.err.println("Usage: Counters <in> <out>");

System.exit(2);

}

Job job = Job.getInstance(conf, "Counter");

job.setJarByClass(Counters.class);

job.setMapperClass(MyCounterMap.class);

FileInputFormat.addInputPath(job, new Path(otherArgs[0]));

FileOutputFormat.setOutputPath(job, new Path(otherArgs[1]));

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

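4' Package and submit

Package the code with Eclipse and select mr.Counters as the main class. Assuming the packaged file is named hdpAction7.jar and the main class Counters is in package mr, the application can be submitted to the Hadoop cluster with the following command.

bin/hadoop jar hdpAction7.jar mr.Counters /counters.txt /usr/counters/out

Here "hadoop" is the command and "jar" its argument, followed by the packaged jar. "/counters.txt" is the location of the input file in HDFS (upload it yourself if it is missing) and "/usr/counters/out" is the output location in HDFS.

Output: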

[root@master hadoop]# bin/hadoop jar hdpAction7.jar /counters.txt /usr/counters/out

17/08/05 04:22:58 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/08/05 04:22:59 INFO client.RMProxy: Connecting to ResourceManager at master/10.1.21.27:8032

17/08/05 04:23:00 INFO input.FileInputFormat: Total input paths to process : 1

17/08/05 04:23:00 INFO mapreduce.JobSubmitter: number of splits:1

17/08/05 04:23:00 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1501872322130_0008

17/08/05 04:23:00 INFO impl.YarnClientImpl: Submitted application application_1501872322130_0008

17/08/05 04:23:00 INFO mapreduce.Job: The url to track the job: http://master:8088/proxy/application_1501872322130_0008/

17/08/05 04:23:00 INFO mapreduce.Job: Running job: job_1501872322130_0008

17/08/05 04:23:05 INFO mapreduce.Job: Job job_1501872322130_0008 running in uber mode : false

17/08/05 04:23:05 INFO mapreduce.Job: map 0% reduce 0%

17/08/05 04:23:10 INFO mapreduce.Job: map 100% reduce 0%

17/08/05 04:23:15 INFO mapreduce.Job: map 100% reduce 100%

17/08/05 04:23:16 INFO mapreduce.Job: Job job_1501872322130_0008 completed successfully

17/08/05 04:23:16 INFO mapreduce.Job: Counters: 51

File System Counters

FILE: Number of bytes read=6

FILE: Number of bytes written=229309

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=134

HDFS: Number of bytes written=0

HDFS: Number of read operations=6

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=2400

Total time spent by all reduces in occupied slots (ms)=2472

Total time spent by all map tasks (ms)=2400

Total time spent by all reduce tasks (ms)=2472

Total vcore-seconds taken by all map tasks=2400

Total vcore-seconds taken by all reduce tasks=2472

Total megabyte-seconds taken by all map tasks=2457600

Total megabyte-seconds taken by all reduce tasks=2531328

Map-Reduce Framework

Map input records=4

Map output records=0

Map output bytes=0

Map output materialized bytes=6

Input split bytes=96

Combine input records=0

Combine output records=0

Reduce input groups=0

Reduce shuffle bytes=6

Reduce input records=0

Reduce output records=0

Spilled Records=0

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=143

CPU time spent (ms)=1680

Physical memory (bytes) snapshot=413036544

Virtual memory (bytes) snapshot=1630470144

Total committed heap usage (bytes)=402653184

ErrorCounter

toolong=1

tooshort=1

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=38

File Output Format Counters

Bytes Written=0

[root@master hadoop]#

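V. Join

1' Overview

Everyone is familiar with the Join operation in an RDBMS. SQL has to be written with great attention to detail; a small slip can make a query run for a very long time and become a major performance bottleneck. Joins done with the MapReduce framework in Hadoop are likewise time-consuming, but because of Hadoop's distributed design they also have their own particularities.

Principle

There are several ways to implement a join with MapReduce:

> Reduce-side join, the most common pattern:

Main work on the Map side: tag the key/value pairs coming from different tables (files) so that records can be told apart by source. Then use the join field as the key and the remaining fields plus the newly added tag as the value, and emit the pair.

Main work on the Reduce side: by the time the data reaches the Reduce side, grouping by the join-field key is already done. Within each group we only need to separate the records that came from different files (they were tagged in the map phase) and then take their Cartesian product.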
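The tag-then-cross-product idea can be tried out in memory before writing the real job. The following is a plain-Java sketch under assumed row layouts ({id, joinKey, name} for the data table and {joinKey, description} for the info table); ReduceSideJoinSketch and the sample rows are hypothetical, and a TreeMap stands in for the shuffle.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical in-memory illustration of a reduce-side join; not Hadoop code.
class ReduceSideJoinSketch {
    static List<String> join(List<String[]> dataRows, List<String[]> infoRows) {
        // "Map" side: key every record by its join field and tag it with its source.
        Map<String, List<String[]>> groups = new TreeMap<>();
        for (String[] r : dataRows) {   // assumed layout: {id, joinKey, name}
            groups.computeIfAbsent(r[1], k -> new ArrayList<>())
                  .add(new String[] {"1", r[0] + "\t" + r[2]});
        }
        for (String[] r : infoRows) {   // assumed layout: {joinKey, description}
            groups.computeIfAbsent(r[0], k -> new ArrayList<>())
                  .add(new String[] {"0", r[1]});
        }
        // "Reduce" side: split each group by tag, then emit the Cartesian product.
        List<String> out = new ArrayList<>();
        for (Map.Entry<String, List<String[]>> g : groups.entrySet()) {
            List<String> fromData = new ArrayList<>();
            List<String> fromInfo = new ArrayList<>();
            for (String[] tagged : g.getValue()) {
                if (tagged[0].equals("1")) {
                    fromData.add(tagged[1]);
                } else {
                    fromInfo.add(tagged[1]);
                }
            }
            for (String d : fromData) {
                for (String i : fromInfo) {
                    out.add(g.getKey() + "\t" + d + "\t" + i);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Made-up sample rows.
        List<String[]> data = Arrays.asList(
                new String[] {"201001", "1003", "abc"},
                new String[] {"201002", "1005", "def"},
                new String[] {"201003", "1003", "ghi"});
        List<String[]> info = Arrays.asList(
                new String[] {"1003", "kaka"},
                new String[] {"1004", "da"});
        join(data, info).forEach(System.out::println);
    }
}
```

Keys that appear in only one of the two inputs produce no output, which corresponds to an inner join.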

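> Map-side join

Use case: one table is very small and the other is very large.

Usage: when submitting the job, first put the small table file into the job's DistributedCache. Each task then reads the small table from the DistributedCache, parses it into join key/value pairs, and keeps them in memory (for example in a HashMap or a similar container). It then scans the large table and checks, for each record, whether a record with the same join key exists in memory; if so, it outputs the joined result directly.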
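The in-memory lookup at the heart of a map-side join looks roughly like this. It is a plain-Java sketch with assumed layouts (small table as key to description, big-table rows as {joinKey, payload}); MapSideJoinSketch is a hypothetical name, and loading the small table from the DistributedCache is left out.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical in-memory illustration of a map-side join; not Hadoop code.
class MapSideJoinSketch {
    // smallTable: join key -> description, held entirely in memory.
    // bigRows: rows of the large table, assumed layout {joinKey, payload}.
    static List<String> join(Map<String, String> smallTable, List<String[]> bigRows) {
        List<String> out = new ArrayList<>();
        for (String[] row : bigRows) {
            String match = smallTable.get(row[0]);
            if (match != null) {
                // A matching key exists in memory: emit the joined record right away.
                out.add(row[0] + "\t" + row[1] + "\t" + match);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Made-up sample data.
        Map<String, String> small = new HashMap<>();
        small.put("1003", "kaka");
        small.put("1004", "da");
        List<String[]> big = Arrays.asList(
                new String[] {"1003", "201001 abc"},
                new String[] {"1009", "201002 def"},
                new String[] {"1004", "201003 ghi"});
        join(small, big).forEach(System.out::println);
    }
}
```

Because every record is resolved inside the mapper, no shuffle or reduce phase is needed for the join itself.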

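> SemiJoin

SemiJoin, the so-called semi-join, is on closer inspection a variant of the Reduce join: the map side filters out some of the data so that only records that take part in the join are sent over the network, while records that do not take part are never transferred. This reduces the shuffle's network traffic and improves overall efficiency; the rest of the idea is identical to the Reduce join. In more down-to-earth terms: extract the keys of the small table that take part in the join, distribute them to the relevant nodes via DistributedCache, and load them into memory (for example into a HashSet). During the map phase, scan the table being joined and drop the records whose join key is not in the in-memory HashSet. The records that do take part in the join go through the shuffle to the Reduce side, where the join is performed exactly as in the Reduce join.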
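The filtering step that distinguishes a semi-join can be sketched as follows. This is a plain-Java illustration with an assumed row layout (join key in column 0); SemiJoinFilterSketch is a hypothetical name, and the surviving rows would then go through an ordinary reduce-side join.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical in-memory illustration of the semi-join filter; not Hadoop code.
class SemiJoinFilterSketch {
    // Keeps only the rows whose join key (column 0, an assumed layout) is in the key set,
    // so that rows which cannot join are never shuffled.
    static List<String[]> filter(Set<String> joinKeys, List<String[]> rows) {
        List<String[]> kept = new ArrayList<>();
        for (String[] row : rows) {
            if (joinKeys.contains(row[0])) {
                kept.add(row);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        // Made-up sample data.
        Set<String> keys = new HashSet<>(Arrays.asList("1003", "1004"));
        List<String[]> rows = Arrays.asList(
                new String[] {"1003", "abc"},
                new String[] {"1009", "def"},
                new String[] {"1004", "ghi"});
        for (String[] row : filter(keys, rows)) {
            System.out.println(row[0] + "\t" + row[1]);
        }
    }
}
```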

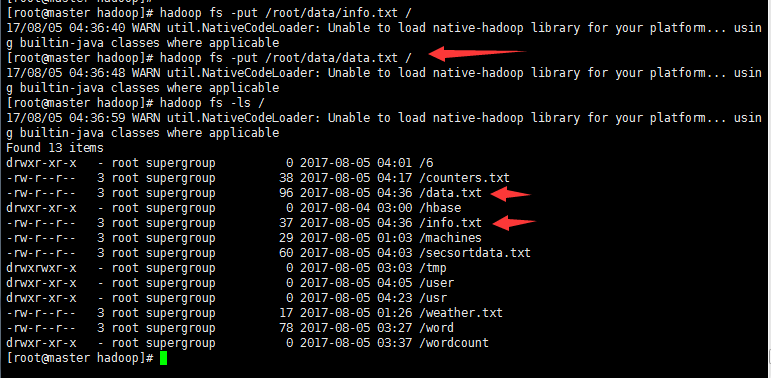

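2' > Create two table files, data.txt and info.txt

> Upload them to HDFS

3' Write the program MRJoin.java

The program works as follows:

In the map phase, every record is turned into a <key, value> pair, where key is the value of the join field (1003/1004/1005/1006) and value depends on the source: for records from table A, value looks like "201001 abc"; for records from table B, value looks like "kaka".

In the Reduce phase, the general approach is to split the value list under each key into the part from table A and the part from table B, put them into two vectors, and then iterate over both vectors to form the Cartesian product, producing the final result rows. The listing below takes a shortcut: the composite key sorts the info.txt record (tag "0") ahead of the data.txt records (tag "1"), so the reducer reads the first value as the description and then streams through the remaining values.

The code is as follows: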

package mr;

import java.io.DataInput;

import java.io.DataOutput;

import java.io.IOException;

import org.apache.hadoop.conf.Configuration;

import org.apache.hadoop.fs.Path;

import org.apache.hadoop.io.LongWritable;

import org.apache.hadoop.io.Text;

import org.apache.hadoop.io.WritableComparable;

import org.apache.hadoop.io.WritableComparator;

import org.apache.hadoop.mapreduce.Job;

import org.apache.hadoop.mapreduce.Mapper;

import org.apache.hadoop.mapreduce.Partitioner;

import org.apache.hadoop.mapreduce.Reducer;

import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;

import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;

import org.apache.hadoop.mapreduce.lib.input.FileSplit;

import org.apache.hadoop.util.GenericOptionsParser;

public class MRJoin {

public static class MR_Join_Mapper extends Mapper<LongWritable, Text, TextPair, Text> {

@Override

protected void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

// Get the full path and name of the input file

String pathName = ((FileSplit) context.getInputSplit()).getPath().toString();

if (pathName.contains("data.txt")) {

String values[] = value.toString().split("\t");

if (values.length < 3) {

// Malformed data record (fewer than 3 fields): discard it

return;

} else {

// Well-formed record: tag it with source flag "1"

TextPair tp = new TextPair(new Text(values[1]), new Text("1"));

context.write(tp, new Text(values[0] + "\t" + values[2]));

}

}

if (pathName.contains("info.txt")) {

String values[] = value.toString().split("\t");

if (values.length < 2) {

// Malformed info record (fewer than 2 fields): discard it

return;

} else {

// Well-formed record: tag it with source flag "0"

TextPair tp = new TextPair(new Text(values[0]), new Text("0"));

context.write(tp, new Text(values[1]));

}

}

}

}

public static class MR_Join_Partitioner extends Partitioner<TextPair, Text> {

@Override

public int getPartition(TextPair key, Text value, int numParititon) {

// Partition on the join field only; mask the sign bit because Math.abs overflows for Integer.MIN_VALUE

return ((key.getFirst().hashCode() * 127) & Integer.MAX_VALUE) % numParititon;

}

}

public static class MR_Join_Comparator extends WritableComparator {

public MR_Join_Comparator() {

super(TextPair.class, true);

}

@Override

public int compare(WritableComparable a, WritableComparable b) {

TextPair t1 = (TextPair) a;

TextPair t2 = (TextPair) b;

// Group on the join field only, ignoring the source tag

return t1.getFirst().compareTo(t2.getFirst());

}

}

public static class MR_Join_Reduce extends Reducer<TextPair, Text, Text, Text> {

// The method must be named reduce (lower case) to override Reducer.reduce;

// otherwise the framework silently runs the default identity reducer.

@Override

protected void reduce(TextPair key, Iterable<Text> values, Context context)

throws IOException, InterruptedException {

Text pid = key.getFirst();

// Values arrive sorted by tag, so the info.txt record (tag "0") comes first

java.util.Iterator<Text> it = values.iterator();

String desc = it.next().toString();

while (it.hasNext()) {

context.write(pid, new Text(it.next().toString() + "\t" + desc));

}

}

}

public static void main(String[] args)

throws IOException, InterruptedException, ClassNotFoundException {

Configuration conf = new Configuration();

GenericOptionsParser parser = new GenericOptionsParser(conf, args);

String[] otherArgs = parser.getRemainingArgs();

// Check the arguments that remain after generic option parsing

if (otherArgs.length < 3) {

System.err.println("Usage: MRJoin <in_path_one> <in_path_two> <output>");

System.exit(2);

}

Job job = Job.getInstance(conf, "MRJoin");

// Set the job's jar by class

job.setJarByClass(MRJoin.class);

// Map settings

job.setMapperClass(MR_Join_Mapper.class);

// Map output types

job.setMapOutputKeyClass(TextPair.class);

job.setMapOutputValueClass(Text.class);

// Partitioner

job.setPartitionerClass(MR_Join_Partitioner.class);

// After partitioning, group records by the given condition

job.setGroupingComparatorClass(MR_Join_Comparator.class);

// Reduce settings

job.setReducerClass(MR_Join_Reduce.class);

// Reduce output types

job.setOutputKeyClass(Text.class);

job.setOutputValueClass(Text.class);

// Input and output directories

FileInputFormat.addInputPath(job, new Path(otherArgs[0]));

FileInputFormat.addInputPath(job, new Path(otherArgs[1]));

FileOutputFormat.setOutputPath(job, new Path(otherArgs[2]));

// Run the job and exit when it finishes

System.exit(job.waitForCompletion(true) ? 0 : 1);

}

}

class TextPair implements WritableComparable<TextPair> {

private Text first;

private Text second;

public TextPair() {

set(new Text(), new Text());

}

public TextPair(String first, String second) {

set(new Text(first), new Text(second));

}

public TextPair(Text first, Text second) {

set(first, second);

}

public void set(Text first, Text second) {

this.first = first;

this.second = second;

}

public Text getFirst() {

return first;

}

public Text getSecond() {

return second;

}

public void write(DataOutput out) throws IOException {

first.write(out);

second.write(out);

}

public void readFields(DataInput in) throws IOException {

first.readFields(in);

second.readFields(in);

}

public int compareTo(TextPair tp) {

int cmp = first.compareTo(tp.first);

if (cmp != 0) {

return cmp;

}

return second.compareTo(tp.second);

}

}

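4' Package and submit

Package the code with Eclipse. Assuming the packaged file is named hdpAction8.jar and the main class MRJoin is in package mr, the application can be submitted to the Hadoop cluster with the following command.

bin/hadoop jar hdpAction8.jar mr.MRJoin /data.txt /info.txt /usr/MRJoin/out

Here "hadoop" is the command and "jar" its argument, followed by the packaged jar. "/data.txt" and "/info.txt" are the locations of the input files in HDFS and "/usr/MRJoin/out" is the output location in HDFS.

Output: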

[root@master hadoop]# bin/hadoop jar hdpAction8.jar /data.txt /info.txt /usr/MRJoin/out

17/08/05 04:38:11 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

17/08/05 04:38:12 INFO client.RMProxy: Connecting to ResourceManager at master/10.1.21.27:8032

17/08/05 04:38:13 INFO input.FileInputFormat: Total input paths to process : 2

17/08/05 04:38:13 INFO mapreduce.JobSubmitter: number of splits:2

17/08/05 04:38:13 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1501872322130_0009

17/08/05 04:38:13 INFO impl.YarnClientImpl: Submitted application application_1501872322130_0009

17/08/05 04:38:13 INFO mapreduce.Job: The url to track the job: http://master:8088/proxy/application_1501872322130_0009/

17/08/05 04:38:13 INFO mapreduce.Job: Running job: job_1501872322130_0009

17/08/05 04:38:18 INFO mapreduce.Job: Job job_1501872322130_0009 running in uber mode : false

17/08/05 04:38:18 INFO mapreduce.Job: map 0% reduce 0%

17/08/05 04:38:23 INFO mapreduce.Job: map 100% reduce 0%

17/08/05 04:38:28 INFO mapreduce.Job: map 100% reduce 100%

17/08/05 04:38:28 INFO mapreduce.Job: Job job_1501872322130_0009 completed successfully

17/08/05 04:38:29 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=179

FILE: Number of bytes written=347823

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=317

HDFS: Number of bytes written=283

HDFS: Number of read operations=9

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=2

Launched reduce tasks=1

Data-local map tasks=2

Total time spent by all maps in occupied slots (ms)=5122

Total time spent by all reduces in occupied slots (ms)=2685

Total time spent by all map tasks (ms)=5122

Total time spent by all reduce tasks (ms)=2685

Total vcore-seconds taken by all map tasks=5122

Total vcore-seconds taken by all reduce tasks=2685

Total megabyte-seconds taken by all map tasks=5244928

Total megabyte-seconds taken by all reduce tasks=2749440

Map-Reduce Framework

Map input records=10

Map output records=10

Map output bytes=153

Map output materialized bytes=185

Input split bytes=184

Combine input records=0

Combine output records=0

Reduce input groups=4

Reduce shuffle bytes=185

Reduce input records=10

Reduce output records=10

Spilled Records=20

Shuffled Maps =2

Failed Shuffles=0

Merged Map outputs=2

GC time elapsed (ms)=122

CPU time spent (ms)=2790

Physical memory (bytes) snapshot=680472576

Virtual memory (bytes) snapshot=2443010048

Total committed heap usage (bytes)=603979776

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=133

File Output Format Counters

Bytes Written=283

[root@master hadoop]#

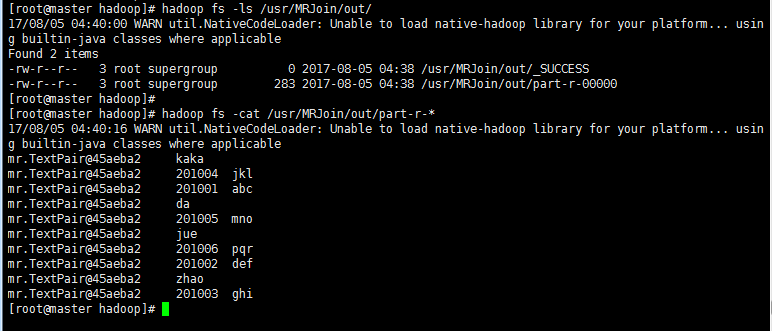

> The joined output files are generated under the /usr/MRJoin/out directory:

VI Distributed Cache

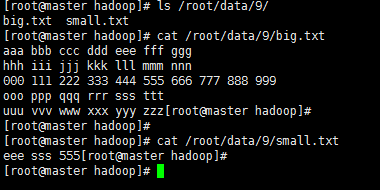

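1‘ Suppose there is a large table big.txt of 100 GB and a small table small.txt of 1 MB. Using the MapReduce model, write a program that counts how many times each word of the small table occurs in the large table. This is the so-called "scan the big table, load the small table" pattern.

To solve this, the job can run 10 map tasks, so that each one handles only 1/10 of the data, which speeds up processing considerably. Inside a single map task, the 1 MB small table is loaded into a HashSet, while that task's roughly 10 GB share of the big file (a data-local map task reads it from HDFS blocks stored on its own node) is scanned record by record. This is "scan the big table, load the small table", which is the idea behind the distributed cache. Note that the code in step 3 reads the small table directly from HDFS in setup(), using a path passed through the job configuration, rather than registering it with Hadoop's cache-file API.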
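The per-map-task logic described above can be tried out locally before touching the cluster. The following is a minimal sketch in plain Java with no Hadoop dependency; the class name `ScanBigLoadSmall`, its method names, and the sample words are all made up for illustration and are not part of the original job. Unlike the job's mapper, it skips empty tokens.

```java
import java.util.Arrays;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;

// Hypothetical local sketch of "scan the big table, load the small table".
class ScanBigLoadSmall {

    // "Load the small table": keep every space-separated word in memory.
    static Set<String> loadSmallTable(Iterable<String> smallLines) {
        Set<String> small = new HashSet<>();
        for (String line : smallLines) {
            for (String word : line.split(" ")) {
                if (!word.isEmpty()) {   // assumption: empty tokens are ignored
                    small.add(word);
                }
            }
        }
        return small;
    }

    // "Scan the big table": stream the lines once and count only the words
    // that are present in the in-memory small table.
    static Map<String, Integer> scanBigTable(Iterable<String> bigLines, Set<String> small) {
        Map<String, Integer> counts = new TreeMap<>();
        for (String line : bigLines) {
            for (String word : line.split(" ")) {
                if (small.contains(word)) {
                    counts.merge(word, 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample data, standing in for small.txt and big.txt.
        Set<String> small = loadSmallTable(Arrays.asList("aaa bbb", "ccc"));
        List<String> big = Arrays.asList("aaa xxx aaa", "bbb yyy", "zzz");
        System.out.println(scanBigTable(big, small)); // prints {aaa=2, bbb=1}
    }
}
```

The small table costs memory once per task, while the big table is only ever streamed, which is why the pattern works when one side of the lookup is small enough to fit in memory.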

2’ > Create two txt files

> Upload them to HDFS

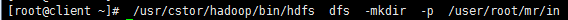

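First log in to the client machine and check whether the directory "/user/root/mr/in" already exists in HDFS. If it does not, create it with the following command.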

/usr/cstor/hadoop/bin/hdfs dfs -mkdir -p /user/root/mr/in

Next, use the following commands to upload the local files "/root/data/9/big.txt" and "/root/data/9/small.txt" from the client machine to the HDFS directory "/user/root/mr/in":

/usr/cstor/hadoop/bin/hdfs dfs -put /root/data/9/big.txt /user/root/mr/in

/usr/cstor/hadoop/bin/hdfs dfs -put /root/data/9/small.txt /user/root/mr/in

3‘ Write the code: create a class BigAndSmallTable in the chosen package (cn.cstor.mr in this code) and put the following code into BigAndSmallTable.java:

    package cn.cstor.mr;

    import java.io.IOException;
    import java.util.HashSet;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.apache.hadoop.io.IntWritable;
    import org.apache.hadoop.io.Text;
    import org.apache.hadoop.mapreduce.Job;
    import org.apache.hadoop.mapreduce.Mapper;
    import org.apache.hadoop.mapreduce.Reducer;
    import org.apache.hadoop.mapreduce.lib.input.FileInputFormat;
    import org.apache.hadoop.mapreduce.lib.output.FileOutputFormat;
    import org.apache.hadoop.util.LineReader;

    public class BigAndSmallTable {

        public static class TokenizerMapper extends
                Mapper<Object, Text, Text, IntWritable> {

            private final static IntWritable one = new IntWritable(1);
            // Words of the small table, loaded once per map task in setup().
            private static HashSet<String> smallTable = null;

            @Override
            protected void setup(Context context) throws IOException,
                    InterruptedException {
                smallTable = new HashSet<String>();
                // The HDFS path of the small table is passed in through the job configuration.
                Path smallTablePath = new Path(context.getConfiguration().get(
                        "smallTableLocation"));
                FileSystem hdfs = smallTablePath.getFileSystem(context
                        .getConfiguration());
                FSDataInputStream hdfsReader = hdfs.open(smallTablePath);
                Text line = new Text();
                LineReader lineReader = new LineReader(hdfsReader);
                // Read the small table line by line and keep every space-separated word.
                while (lineReader.readLine(line) > 0) {
                    String[] values = line.toString().split(" ");
                    for (int i = 0; i < values.length; i++) {
                        smallTable.add(values[i]);
                        System.out.println(values[i]);
                    }
                }
                lineReader.close();
                hdfsReader.close();
                System.out.println("setup ok *^_^* ");
            }

            @Override
            public void map(Object key, Text value, Context context)
                    throws IOException, InterruptedException {
                // Scan one line of the big table; emit (word, 1) only for words in the small table.
                String[] values = value.toString().split(" ");
                for (int i = 0; i < values.length; i++) {
                    if (smallTable.contains(values[i])) {
                        context.write(new Text(values[i]), one);
                    }
                }
            }
        }

        public static class IntSumReducer extends
                Reducer<Text, IntWritable, Text, IntWritable> {

            private IntWritable result = new IntWritable();

            @Override
            public void reduce(Text key, Iterable<IntWritable> values,
                    Context context) throws IOException, InterruptedException {
                // Sum the 1s emitted for this word by all map tasks.
                int sum = 0;
                for (IntWritable val : values) {
                    sum += val.get();
                }
                result.set(sum);
                context.write(key, result);
            }
        }

        public static void main(String[] args) throws Exception {
            // args[0]: big table path, args[1]: small table path, args[2]: output directory
            Configuration conf = new Configuration();
            conf.set("smallTableLocation", args[1]);
            Job job = Job.getInstance(conf, "BigAndSmallTable");
            job.setJarByClass(BigAndSmallTable.class);
            job.setMapperClass(TokenizerMapper.class);
            job.setReducerClass(IntSumReducer.class);
            job.setMapOutputKeyClass(Text.class);
            job.setMapOutputValueClass(IntWritable.class);
            job.setOutputKeyClass(Text.class);
            job.setOutputValueClass(IntWritable.class);
            FileInputFormat.addInputPath(job, new Path(args[0]));
            FileOutputFormat.setOutputPath(job, new Path(args[2]));
            System.exit(job.waitForCompletion(true) ? 0 : 1);
        }
    }
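The run log in this section reports "Combine input records=0", meaning the job runs without a combiner. Because IntSumReducer only adds integers, it could probably also be registered as a combiner with `job.setCombinerClass(IntSumReducer.class)`, which sums each map task's output before the shuffle. The plain-Java sketch below, with a made-up class `PartialSumMerge` and made-up sample keys, illustrates why this should not change the result: summing per-split partial counts and then summing those partial sums gives the same totals as counting everything at once.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical local sketch; it imitates a combiner and a reducer with plain maps.
class PartialSumMerge {

    // Combiner role: pre-aggregate the keys emitted by ONE map task.
    static Map<String, Integer> combine(List<String> emittedKeys) {
        Map<String, Integer> partial = new TreeMap<>();
        for (String key : emittedKeys) {
            partial.merge(key, 1, Integer::sum);
        }
        return partial;
    }

    // Reducer role: add up the partial counts coming from all map tasks.
    static Map<String, Integer> reduce(List<Map<String, Integer>> partials) {
        Map<String, Integer> total = new TreeMap<>();
        for (Map<String, Integer> partial : partials) {
            for (Map.Entry<String, Integer> entry : partial.entrySet()) {
                total.merge(entry.getKey(), entry.getValue(), Integer::sum);
            }
        }
        return total;
    }

    public static void main(String[] args) {
        // Made-up map output for two input splits.
        List<String> split1 = Arrays.asList("aaa", "bbb", "aaa");
        List<String> split2 = Arrays.asList("bbb", "ccc");

        Map<String, Integer> withCombiner =
                reduce(Arrays.asList(combine(split1), combine(split2)));

        // Without a combiner, every emitted key reaches the reducer individually.
        List<String> all = new ArrayList<>(split1);
        all.addAll(split2);
        Map<String, Integer> withoutCombiner = combine(all);

        System.out.println(withCombiner);                          // prints {aaa=2, bbb=2, ccc=1}
        System.out.println(withCombiner.equals(withoutCombiner));  // prints true
    }
}
```

Whether a combiner actually pays off depends on how often keys repeat within a single split; for the tiny sample files used here the difference would be negligible.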

4’ Package, upload, and run

First, use "Xmanager Enterprise 5" to upload "C:\Users\allen\Desktop\BigSmallTable.jar" to the client machine, here to "/root/BigSmallTable.jar".

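Then log in to the client machine and submit the BigSmallTable.jar job with the following command. The three path arguments are the big table, the small table, and the output directory, matching args[0], args[1], and args[2] in main. The class name is only needed when the jar's manifest does not declare a Main-Class. If it does, as the session log below suggests (it omits the class name), leave the class name out, because it would otherwise be passed to main as args[0].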

/usr/cstor/hadoop/bin/hadoop jar /root/BigSmallTable.jar cn.cstor.mr.BigAndSmallTable /user/root/mr/in/big.txt /user/root/mr/in/small.txt /user/root/mr/bigAndSmallResult

[root@client ~]# /usr/cstor/hadoop/bin/hadoop jar /root/BigSmallTable.jar /user/root/mr/in/big.txt /user/root/mr/in/small.txt /user/root/mr/bigAndSmallResult

17/08/05 04:55:51 INFO client.RMProxy: Connecting to ResourceManager at master/10.1.21.27:8032

17/08/05 04:55:52 WARN mapreduce.JobResourceUploader: Hadoop command-line option parsing not performed. Implement the Tool interface and execute your application with ToolRunner to remedy this.

17/08/05 04:55:52 INFO input.FileInputFormat: Total input paths to process : 1

17/08/05 04:55:52 INFO mapreduce.JobSubmitter: number of splits:1

17/08/05 04:55:52 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1501872322130_0010

17/08/05 04:55:53 INFO impl.YarnClientImpl: Submitted application application_1501872322130_0010

17/08/05 04:55:53 INFO mapreduce.Job: The url to track the job: http://master:8088/proxy/application_1501872322130_0010/

17/08/05 04:55:53 INFO mapreduce.Job: Running job: job_1501872322130_0010

17/08/05 04:55:58 INFO mapreduce.Job: Job job_1501872322130_0010 running in uber mode : false

17/08/05 04:55:58 INFO mapreduce.Job: map 0% reduce 0%

17/08/05 04:56:03 INFO mapreduce.Job: map 100% reduce 0%

17/08/05 04:56:08 INFO mapreduce.Job: map 100% reduce 100%

17/08/05 04:56:09 INFO mapreduce.Job: Job job_1501872322130_0010 completed successfully

17/08/05 04:56:09 INFO mapreduce.Job: Counters: 49

File System Counters

FILE: Number of bytes read=36

FILE: Number of bytes written=231153

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=265

HDFS: Number of bytes written=18

HDFS: Number of read operations=7

HDFS: Number of large read operations=0

HDFS: Number of write operations=2

Job Counters

Launched map tasks=1

Launched reduce tasks=1

Data-local map tasks=1

Total time spent by all maps in occupied slots (ms)=2597

Total time spent by all reduces in occupied slots (ms)=2755

Total time spent by all map tasks (ms)=2597

Total time spent by all reduce tasks (ms)=2755

Total vcore-seconds taken by all map tasks=2597

Total vcore-seconds taken by all reduce tasks=2755

Total megabyte-seconds taken by all map tasks=2659328

Total megabyte-seconds taken by all reduce tasks=2821120

Map-Reduce Framework

Map input records=5

Map output records=3

Map output bytes=24

Map output materialized bytes=36

Input split bytes=107

Combine input records=0

Combine output records=0

Reduce input groups=3

Reduce shuffle bytes=36

Reduce input records=3

Reduce output records=3

Spilled Records=6

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=57

CPU time spent (ms)=1480

Physical memory (bytes) snapshot=425840640

Virtual memory (bytes) snapshot=1651806208

Total committed heap usage (bytes)=402653184

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=147

File Output Format Counters

Bytes Written=18

[root@client ~]#

Output of the command:

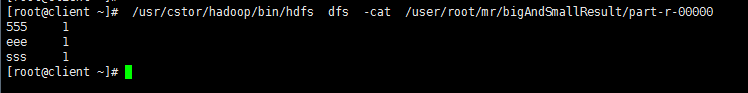

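> View the results

After the program finishes, view the result with the following command. Note that if you run the job again you must change the output directory, because the job fails when the output directory already exists: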

/usr/cstor/hadoop/bin/hdfs dfs -cat /user/root/mr/bigAndSmallResult/part-r-00000
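With the default text output format, each line of part-r-00000 holds a key and a value separated by a tab, here a word and its count. If the result needs further processing, a small parser like the sketch below may help. The class name `ResultLineParser` and the sample lines are made up for illustration and are not the actual output of this job.

```java
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical helper for reading "word<TAB>count" lines from a result file.
class ResultLineParser {

    // Parses lines of the form "word\tcount", keeping the file's order.
    static Map<String, Integer> parse(List<String> lines) {
        Map<String, Integer> counts = new LinkedHashMap<>();
        for (String line : lines) {
            if (line.isEmpty()) {
                continue; // tolerate a trailing empty line
            }
            int tab = line.indexOf('\t');
            if (tab < 0) {
                throw new IllegalArgumentException("Missing tab separator: " + line);
            }
            String word = line.substring(0, tab);
            int count = Integer.parseInt(line.substring(tab + 1).trim());
            counts.put(word, count);
        }
        return counts;
    }

    public static void main(String[] args) {
        // Made-up sample lines, NOT the real result of the run above.
        List<String> sample = Arrays.asList("aaa\t2", "bbb\t1", "");
        System.out.println(parse(sample)); // prints {aaa=2, bbb=1}
    }
}
```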

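Summary:

After working through the five experiments, we have a systematic picture of how a MapReduce job is run:

first, upload the target files to HDFS;

next, write the program code;

then package it and upload the jar to a cluster server;

then run the jar;

finally, the part-r-00000 result file is generated.

We have also become more fluent with the hadoop commands and comfortable with uploading, viewing, and editing files. At this point we have a basic understanding of big data and its fundamental operations, enough to count as an introduction.

"The road ahead is long and far; I shall search high and low." Steady accumulation and perseverance are the prerequisites for results in learning, and quantitative change leads to qualitative change. I hope readers who have made it this far will keep working, exchange ideas, and learn from one another. We are all people who love learning!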
