On Using Very Large Target Vocabulary for Neural Machine Translation Candidate Sampling Sampled Softmax

【softmax分类器的加速器】

https://www.tensorflow.org/api_docs/python/tf/nn/sampled_softmax_loss

This is a faster way to train a softmax classifier over a huge number of classes.

【分类的结果集过大,选取子集】

https://www.tensorflow.org/api_guides/python/nn#Candidate_Sampling

Do you want to train a multiclass or multilabel model with thousands or millions of output classes (for example, a language model with a large vocabulary)? Training with a full Softmax is slow in this case, since all of the classes are evaluated for every training example. Candidate Sampling training algorithms can speed up your step times by only considering a small randomly-chosen subset of contrastive classes (called candidates) for each batch of training examples.

https://www.tensorflow.org/extras/candidate_sampling.pdf

【 compute F(x, y) for every class y ∈ L for every training example----耗时点,这是要解决的问题】

What is Candidate Sampling Say we have a multiclass or multilabel problem where each training example (x , ) consists of i Ti a context xi a small (multi)set of target classes Ti out of a large universe L of possible classes. For example, the problem might be to predicting the next word (or the set of future words) in a sentence given the previous words.

We wish to learn a compatibility function F(x, y) which says something about the compatibility of a class y with a context x . For example the probability of the class given the context.

“Exhaustive” training methods such as softmax and logistic regression require us to compute F(x, y) for every class y ∈ L for every training example. When |L| is very large, this can be prohibitively expensive.

【the model having a very large target vocabulary by selecting only a small subset of the whole target vocabulary:子集】

https://arxiv.org/pdf/1412.2007.pdf

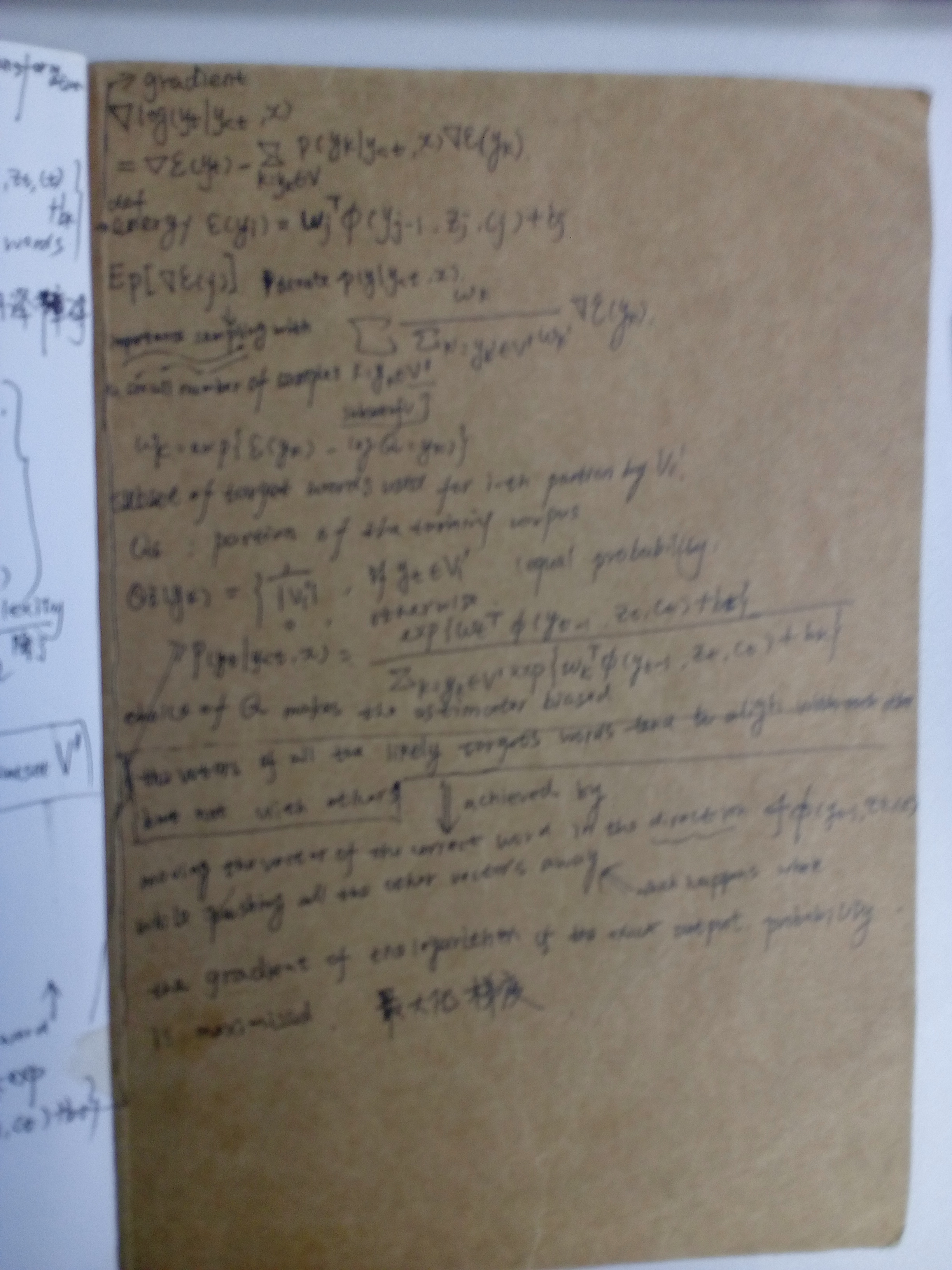

Neural machine translation, a recently proposed approach to machine translation based purely on neural networks, has shown promising results compared to the existing approaches such as phrase-based statistical machine translation. Despite its recent success, neural machine translation has its limitation in handling a larger vocabulary, as training complexity as well as decoding complexity increase proportionally to the number of target words. In this paper, we propose a method that allows us to use a very large target vocabulary without increasing training complexity, based on importance sampling. We show that decoding can be efficiently done even with the model having a very large target vocabulary by selecting only a small subset of the whole target vocabulary. The models trained by the proposed approach are empirically found to outperform the baseline models with a small vocabulary as well as the LSTM-based neural machine translation models. Furthermore, when we use the ensemble of a few models with very large target vocabularies, we achieve the state-of-the-art translation performance (measured by BLEU) on the English->German translation and almost as high performance as state-of-the-art English->French translation system.

On Using Very Large Target Vocabulary for Neural Machine Translation Candidate Sampling Sampled Softmax的更多相关文章

- 课程五(Sequence Models),第三周(Sequence models & Attention mechanism) —— 1.Programming assignments:Neural Machine Translation with Attention

Neural Machine Translation Welcome to your first programming assignment for this week! You will buil ...

- Sequence Models Week 3 Neural Machine Translation

Neural Machine Translation Welcome to your first programming assignment for this week! You will buil ...

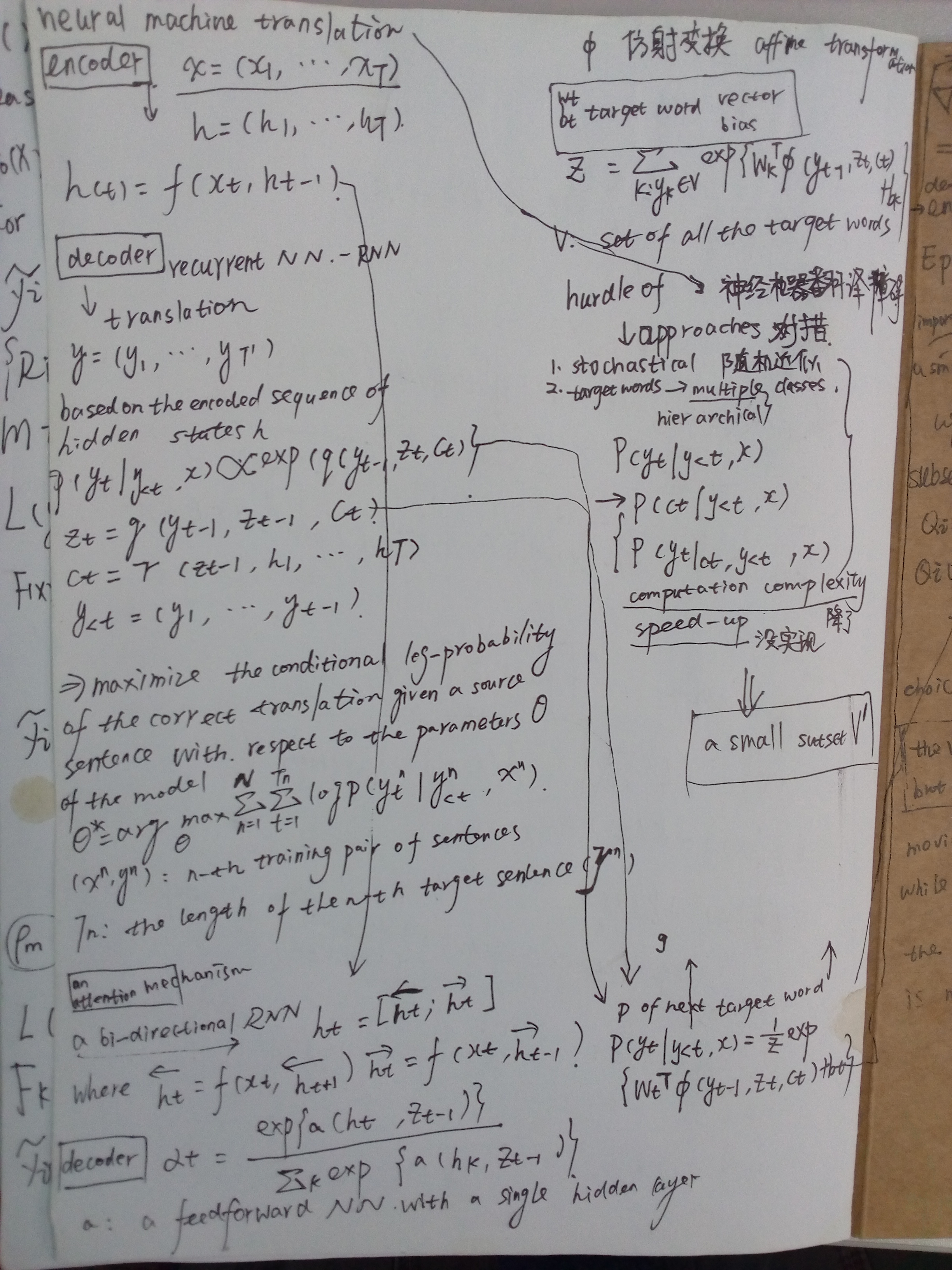

- 神经机器翻译 - NEURAL MACHINE TRANSLATION BY JOINTLY LEARNING TO ALIGN AND TRANSLATE

论文:NEURAL MACHINE TRANSLATION BY JOINTLY LEARNING TO ALIGN AND TRANSLATE 综述 背景及问题 背景: 翻译: 翻译模型学习条件分布 ...

- 对Neural Machine Translation by Jointly Learning to Align and Translate论文的详解

读论文 Neural Machine Translation by Jointly Learning to Align and Translate 这个论文是在NLP中第一个使用attention机制 ...

- Effective Approaches to Attention-based Neural Machine Translation(Global和Local attention)

这篇论文主要是提出了Global attention 和 Local attention 这个论文有一个译文,不过我没细看 Effective Approaches to Attention-base ...

- 【转载 | 翻译】Visualizing A Neural Machine Translation Model(神经机器翻译模型NMT的可视化)

转载并翻译Jay Alammar的一篇博文:Visualizing A Neural Machine Translation Model (Mechanics of Seq2seq Models Wi ...

- [笔记] encoder-decoder NEURAL MACHINE TRANSLATION BY JOINTLY LEARNING TO ALIGN AND TRANSLATE

原文地址 :[1409.0473] Neural Machine Translation by Jointly Learning to Align and Translate (arxiv.org) ...

- Introduction to Neural Machine Translation - part 1

The Noise Channel Model \(p(e)\): the language Model \(p(f|e)\): the translation model where, \(e\): ...

- 论文阅读 | Robust Neural Machine Translation with Doubly Adversarial Inputs

(1)用对抗性的源实例攻击翻译模型; (2)使用对抗性目标输入来保护翻译模型,提高其对对抗性源输入的鲁棒性. 生成对抗输入:基于梯度 (平均损失) -> AdvGen 我们的工作处理由白盒N ...

随机推荐

- 好用的 HTTP模块SuperAgent

SuperAgent 最近在写爬虫,看了下node里面有啥关于ajax的模块,发现superagent这个模块灰常的好用.好东西要和大家分享,话不多说,开始吧- 什么是SuperAgent super ...

- Linked List Cycle - LeetCode

Given a linked list, determine if it has a cycle in it. Follow up:Can you solve it without using ext ...

- Java基础教程---JDK的安装和环境变量的配置

一.Java的安装和环境变量配置 1.Java的安装: 第一步,从Oracle官网下载安装包,当然也可以从其他安全可靠的地方下载(PS:根据不同电脑系统下载相应的安装包,注意电脑的位数.如x64,x3 ...

- 评分条RatingBar Android

<?xml version="1.0" encoding="utf-8"?> <LinearLayout xmlns:android=&quo ...

- xampp 安装 mysql-python

在已经安装brew前提下:brew install mysql-connector-c pip install MySQL-python

- vim批量缩进功能

注释符号:" :set tabstop=4 设定tab宽度为4个字符 :set shiftwidth=4 设定自动缩进为4个字符 :set expandtab 用space替代tab的输入 ...

- 13.【nuxt起步】-部署到正式环境

已经购买centos服务器,并安装了nodejs环境 Secure CRT链接 Cd / Cd /var/www Mkdir test.abc.cn 用ftp 除了node_modules,其他都上传 ...

- 【前端GUI】——对一些优秀网页设计作品的分析&心得

前言:优秀的网站设计作品都有一些相似的地方,即使是美学,也一定会遵循着一定的规律. ONE 这一组,属于同类. 主题:点心. 背景:卡通动物形象. 色调:柔和,甜美. 点线面布局: 在这两个页面中,点 ...

- UE把环境变量Path改了

为了比较个文件,装了UE. 文件比较完了,环境变量也被改了. 改还不是写添加式的改,是写覆盖式的改. 搞得ant都起不动了,一看Path被改的那样(C:\hy\soft\ultraedit\Ultra ...

- GUID概念

GUID概念 GUID: 即Globally Unique Identifier(全球唯一标识符) 也称作 UUID(Universally Unique IDentifier) . GUID是 ...