java.net.ConnectException: Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort

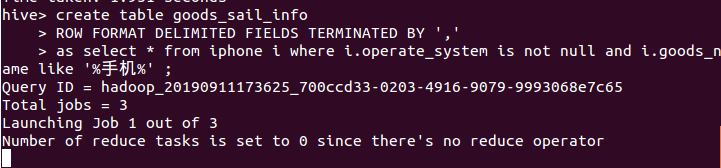

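Today I created a table in Hive and tried to insert the results of a query into the new table, but the job hung at the point shown in the screenshot above.

After waiting more than 20 minutes, it failed with this error: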

java.net.ConnectException: Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort

at sun.reflect.GeneratedConstructorAccessor49.newInstance(Unknown Source)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.net.NetUtils.wrapWithMessage(NetUtils.java:824)

at org.apache.hadoop.net.NetUtils.wrapException(NetUtils.java:750)

at org.apache.hadoop.ipc.Client.getRpcResponse(Client.java:1497)

at org.apache.hadoop.ipc.Client.call(Client.java:1439)

at org.apache.hadoop.ipc.Client.call(Client.java:1349)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:227)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:116)

at com.sun.proxy.$Proxy77.getNewApplication(Unknown Source)

at org.apache.hadoop.yarn.api.impl.pb.client.ApplicationClientProtocolPBClientImpl.getNewApplication(ApplicationClientProtocolPBClientImpl.java:262)

at sun.reflect.GeneratedMethodAccessor5.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:422)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeMethod(RetryInvocationHandler.java:165)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invoke(RetryInvocationHandler.java:157)

at org.apache.hadoop.io.retry.RetryInvocationHandler$Call.invokeOnce(RetryInvocationHandler.java:95)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:359)

at com.sun.proxy.$Proxy78.getNewApplication(Unknown Source)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.getNewApplication(YarnClientImpl.java:236)

at org.apache.hadoop.yarn.client.api.impl.YarnClientImpl.createApplication(YarnClientImpl.java:244)

at org.apache.hadoop.mapred.ResourceMgrDelegate.getNewJobID(ResourceMgrDelegate.java:193)

at org.apache.hadoop.mapred.YARNRunner.getNewJobID(YARNRunner.java:265)

at org.apache.hadoop.mapreduce.JobSubmitter.submitJobInternal(JobSubmitter.java:159)

at org.apache.hadoop.mapreduce.Job$11.run(Job.java:1570)

at org.apache.hadoop.mapreduce.Job$11.run(Job.java:1567)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1889)

at org.apache.hadoop.mapreduce.Job.submit(Job.java:1567)

at org.apache.hadoop.mapred.JobClient$1.run(JobClient.java:576)

at org.apache.hadoop.mapred.JobClient$1.run(JobClient.java:571)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1889)

at org.apache.hadoop.mapred.JobClient.submitJobInternal(JobClient.java:571)

at org.apache.hadoop.mapred.JobClient.submitJob(JobClient.java:562)

at org.apache.hadoop.hive.ql.exec.mr.ExecDriver.execute(ExecDriver.java:423)

at org.apache.hadoop.hive.ql.exec.mr.MapRedTask.execute(MapRedTask.java:149)

at org.apache.hadoop.hive.ql.exec.Task.executeTask(Task.java:205)

at org.apache.hadoop.hive.ql.exec.TaskRunner.runSequential(TaskRunner.java:97)

at org.apache.hadoop.hive.ql.Driver.launchTask(Driver.java:2664)

at org.apache.hadoop.hive.ql.Driver.execute(Driver.java:2335)

at org.apache.hadoop.hive.ql.Driver.runInternal(Driver.java:2011)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1709)

at org.apache.hadoop.hive.ql.Driver.run(Driver.java:1703)

at org.apache.hadoop.hive.ql.reexec.ReExecDriver.run(ReExecDriver.java:157)

at org.apache.hadoop.hive.ql.reexec.ReExecDriver.run(ReExecDriver.java:218)

at org.apache.hadoop.hive.cli.CliDriver.processLocalCmd(CliDriver.java:239)

at org.apache.hadoop.hive.cli.CliDriver.processCmd(CliDriver.java:188)

at org.apache.hadoop.hive.cli.CliDriver.processLine(CliDriver.java:402)

at org.apache.hadoop.hive.cli.CliDriver.executeDriver(CliDriver.java:821)

at org.apache.hadoop.hive.cli.CliDriver.run(CliDriver.java:759)

at org.apache.hadoop.hive.cli.CliDriver.main(CliDriver.java:683)

at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)

at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.util.RunJar.run(RunJar.java:239)

at org.apache.hadoop.util.RunJar.main(RunJar.java:153)

Caused by: java.net.ConnectException: 拒绝连接 (Connection refused)

at sun.nio.ch.SocketChannelImpl.checkConnect(Native Method)

at sun.nio.ch.SocketChannelImpl.finishConnect(SocketChannelImpl.java:717)

at org.apache.hadoop.net.SocketIOWithTimeout.connect(SocketIOWithTimeout.java:206)

at org.apache.hadoop.net.NetUtils.connect(NetUtils.java:531)

at org.apache.hadoop.ipc.Client$Connection.setupConnection(Client.java:687)

at org.apache.hadoop.ipc.Client$Connection.setupIOstreams(Client.java:790)

at org.apache.hadoop.ipc.Client$Connection.access$3500(Client.java:411)

at org.apache.hadoop.ipc.Client.getConnection(Client.java:1554)

at org.apache.hadoop.ipc.Client.call(Client.java:1385)

... 55 more

Job Submission failed with exception 'java.net.ConnectException(Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort)'

FAILED: Execution Error, return code 1 from org.apache.hadoop.hive.ql.exec.mr.MapRedTask. Your endpoint configuration is wrong; For more details see: http://wiki.apache.org/hadoop/UnsetHostnameOrPort
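The trace fails inside `YarnClientImpl.getNewApplication` with a nested "connection refused", which suggests the client could not reach the YARN ResourceManager. One quick way to test that theory is a plain TCP connect. The following is a minimal sketch, not part of the original troubleshooting: the helper name `port_is_open` is hypothetical, and the host and port you pass in are assumptions you need to adjust (8032 is the default port of `yarn.resourcemanager.address`).

```python
import socket


def port_is_open(host: str, port: int, timeout: float = 3.0) -> bool:
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers connection refused, timeouts, and name resolution failures.
        return False
```

For example, `port_is_open("localhost", 8032)` returning `False` on the machine that should run the ResourceManager would be consistent with YARN not having been started.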

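While searching on Baidu I found a blog post that attributed this error to the historyserver not being started, so I followed its instructions and modified mapred-site.xml. It still did not work.

Then I remembered that I had configured YARN earlier, and that when starting Hadoop I had only run ./sbin/start-dfs.sh, not ./sbin/start-all.sh. start-dfs.sh brings up only the HDFS daemons, so the YARN ResourceManager that Hive submits its MapReduce job to was never started.

So I started YARN, and after that the query and insert ran normally.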
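To avoid the same surprise next time, it can help to check which daemons are actually running before launching Hive. The sketch below parses the output of the JDK's `jps` tool, which prints one `<pid> <main class>` pair per line. It assumes a single-node (pseudo-distributed) setup where all daemons run on the local machine, which the original post does not state; the function names are hypothetical. If the YARN daemons are reported missing, they can be started with `./sbin/start-yarn.sh`, which ships in the same directory as `start-dfs.sh`.

```python
import subprocess

# Daemons normally brought up by start-dfs.sh and start-yarn.sh respectively.
HDFS_DAEMONS = {"NameNode", "DataNode"}
YARN_DAEMONS = {"ResourceManager", "NodeManager"}


def missing_daemons(jps_output: str, expected: set) -> set:
    """Return the expected daemon names that do not appear in jps output."""
    running = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) >= 2:  # each jps line is "<pid> <main class>"
            running.add(parts[1])
    return expected - running


def check_local_daemons() -> set:
    """Run jps (assumed to be on PATH) and report missing HDFS/YARN daemons."""
    result = subprocess.run(["jps"], capture_output=True, text=True, check=True)
    return missing_daemons(result.stdout, HDFS_DAEMONS | YARN_DAEMONS)
```

Calling `check_local_daemons()` after running only `start-dfs.sh` would be expected to report the ResourceManager and NodeManager as missing, which matches the situation described above.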

Reference blog post: https://www.cnblogs.com/cac2020/p/10274979.html

YARN basics: https://baike.baidu.com/item/yarn/16075826?fr=aladdin
