Step-by-step from Markov Process to Markov Decision Process

In this post, I will illustrate Markov Property, Markov Reward Process and finally Markov Decision Process, which are fundamental concepts in Reinforcement Learning.

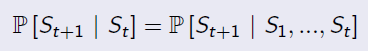

Markov Property

'The state is independent of the past given the present'

Markov Process (Markov Chain)

Keywords: state, transition matrix

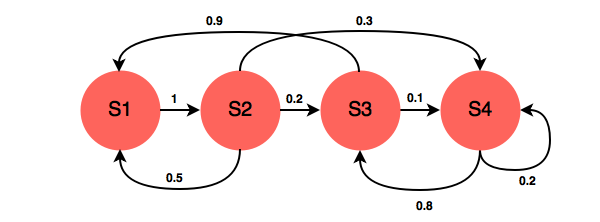

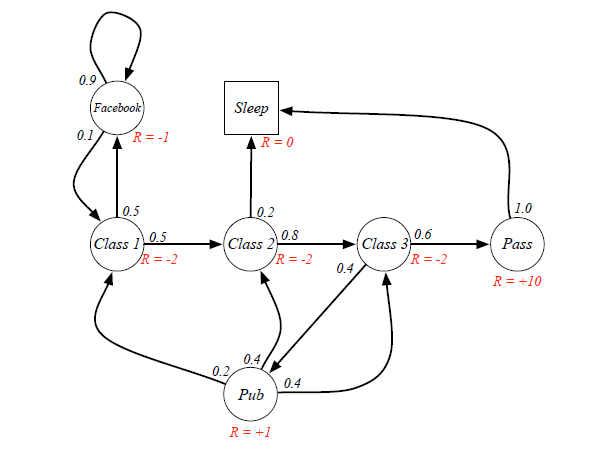

A Markov Process is defined by a Tuple(S,P), in which S is the state space, and P is the transition matrix. The following chart is an example.

A transition matrix demonstrates the probabilities of transitioning from one state to another.

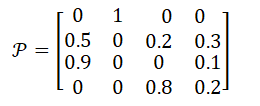

In the example above, the transition matrix is:

Markov Reward Process: Markov Process with Value Judgement

Keywords: Reward, Return, Discount Factor, Value Function

MRP add two additional properties into Markov Chain: one is Reward, who represents the immediate feedback an agent can receive at time t+1 if he is in state s at time t; another property is Discount Factor γ∈[0,1]. So the representation tuple is [S,P,R,γ].

Formally, Reward is the immediate feedback, which means when agent gets to state s at time t, it can definetly receive this reward at time t+1. It is defined by:

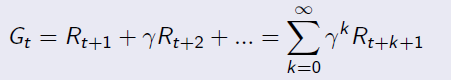

Given reward and discount factor, we can calculate the Return for a given senario by this equation:

Example for Return calculation:

Senario: Class1->Class2->Class3->Pass->Sleep, and the agent is at state=Class1.

Case 1: when gamma=0, g=-2+(-2)*0+(-2)*0+10*0=-2

Case 2: when gamma=1, g=-2+(-2)*1+(-2)*1+10*1=4

Case 3: when gamma=0.8, g=-2+(-2)*0.8+(-2)*0.64+10*=-4-1.6+5.12=-0.48

From different γ, we know our agent can be exetremely short-sighted (far-sighted) only for immediate reward, or trying to seek balance between short and long term reward.

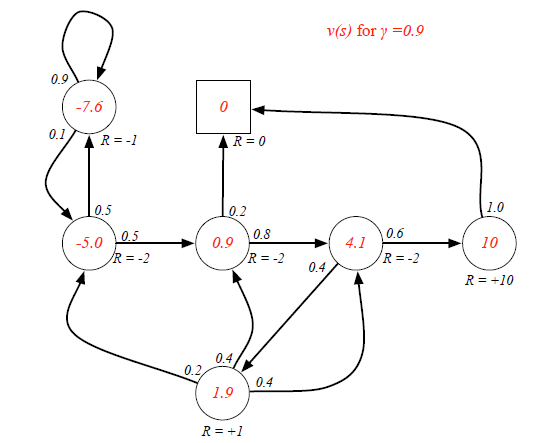

When an agent is in a certain state, the way to measure the total reward from this state over time is calculating expected Returns for all possible senarios. The function to calculate it is called Value Function:

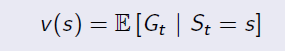

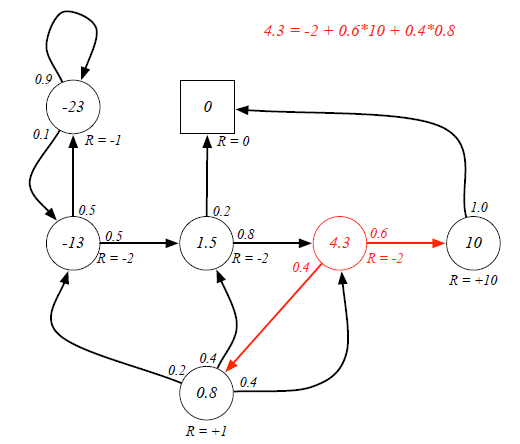

Ex. If the agent is at Class3 state, it has 0.6 and 0.4 probabilities to transite to Pass and Pub respectively. Because there are loops inside the graph, it's difficult to directly derive expected return from value function. (Forget the red labeled value, they are result...)

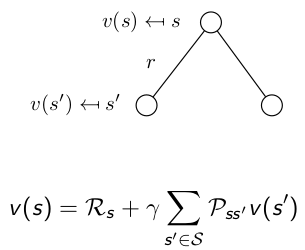

Bellman Equation helps to solve this complexity:

It breaks the value function into two parts: Immediate Reward and Future Reward: The future reward is discounted by γ, and it has probabilities on different states, so actually the future reward is an expectation.

The future reward is discounted by γ, and it has probabilities on different states, so actually the future reward is an expectation.

Now we can use Bellman Equation to solve value function:

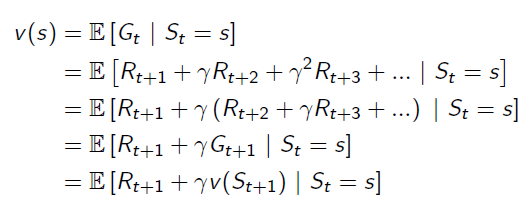

Markov Decision Process: MRP with Actions

Keywords: Action

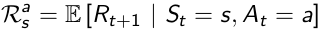

Markov Decision Process adds more complexity onto MRP, it is defined by a tuple(S,A,P,R,γ), in which:

S is state space, and γ is discount factor, they are same as MRP.

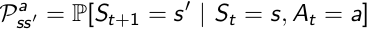

A is a finite set of Actions, which is new. Then because of the existense of Action, Transition Matrix and Reward Function are all conditional on both State and Action.

P is State Transition Matrix: it is conditional on state and action at time t, which means different actions would result in different distribution of state at time t+1.

R is Reward Function conditional upon state and action: also, different actions lead to different reward, despite of the same state s.

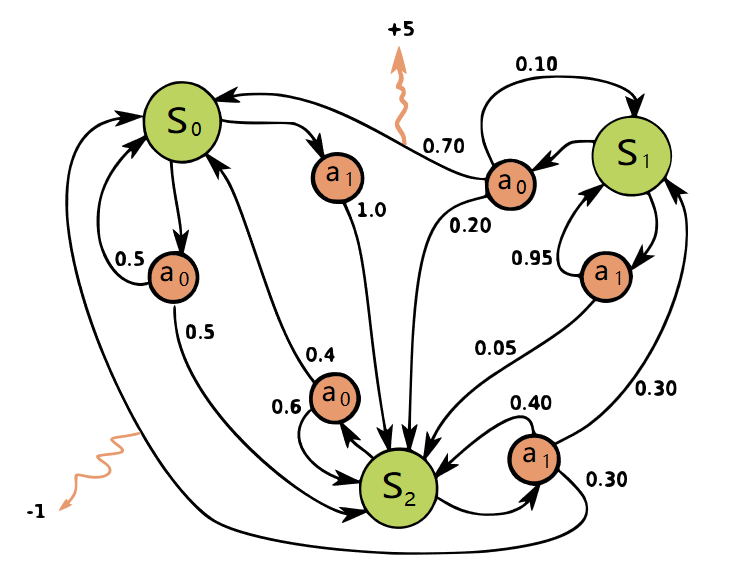

A graph(from Wikipedia) helps understanding the role of actions:

So by now, we have already had the model of the environment: all states, all possible actions and transition matrix conditional on state and actions.

Step-by-step from Markov Process to Markov Decision Process的更多相关文章

- Step by step Process of creating APD

Step by step Process of creating APD: Business Scenario: Here we are going to create an APD on top o ...

- Step by Step Process of Migrating non-CDBs and PDBs Using ASM for File Storage (Doc ID 1576755.1)

Step by Step Process of Migrating non-CDBs and PDBs Using ASM for File Storage (Doc ID 1576755.1) AP ...

- Tomcat Clustering - A Step By Step Guide --转载

Tomcat Clustering - A Step By Step Guide Apache Tomcat is a great performer on its own, but if you'r ...

- [ZZ] Understanding 3D rendering step by step with 3DMark11 - BeHardware >> Graphics cards

http://www.behardware.com/art/lire/845/ --> Understanding 3D rendering step by step with 3DMark11 ...

- e2e 自动化集成测试 架构 实例 WebStorm Node.js Mocha WebDriverIO Selenium Step by step (二) 图片验证码的识别

上一篇文章讲了“e2e 自动化集成测试 架构 京东 商品搜索 实例 WebStorm Node.js Mocha WebDriverIO Selenium Step by step 一 京东 商品搜索 ...

- Code Understanding Step by Step - We Need a Task

Code understanding is a task we are always doing, though we are not even aware that we're doing it ...

- enode框架step by step之saga的思想与实现

enode框架step by step之saga的思想与实现 enode框架系列step by step文章系列索引: 分享一个基于DDD以及事件驱动架构(EDA)的应用开发框架enode enode ...

- 课程五(Sequence Models),第一 周(Recurrent Neural Networks) —— 1.Programming assignments:Building a recurrent neural network - step by step

Building your Recurrent Neural Network - Step by Step Welcome to Course 5's first assignment! In thi ...

- 精通initramfs构建step by step

(一)hello world 一.initramfs是什么 在2.6版本的linux内核中,都包含一个压缩过的cpio格式 的打包文件.当内核启动时,会从这个打包文件中导出文件到内核的rootfs ...

随机推荐

- CopyOnWriteArrayList详解

可以提前读这篇文章:多读少写的场景 如何提高性能 写入时复制(CopyOnWrite)思想 写入时复制(CopyOnWrite,简称COW)思想是计算机程序设计领域中的一种优化策略.其核心思想是,如果 ...

- Python中map和reduce函数??

①从参数方面来讲: map()函数: map()包含两个参数,第一个是参数是一个函数,第二个是序列(列表或元组).其中,函数(即map的第一个参数位置的函数)可以接收一个或多个参数. reduce() ...

- Python 内置函数raw_input()和input()用法和区别

我们知道python接受输入的raw_input()和input() ,在python3 输入raw_input() 去掉乐,只要用input() 输入,input 可以接收一个Python表达式作为 ...

- Ionic -v1初始项目结构

界面

- vue.js(18)--父组件向子组件传值

子组件是不能直接使用父组件中数据的,需要进行属性绑定(v-bind:自定义属性名=“msg”),绑定后需要在子组件中使用props[‘自定义属性名’]数组来定义父组件的自定义名称. props数组中的 ...

- sqli(7)

前言 第7关 导出文件GET字符型注入 步骤OK,但是就是不能写入文件,不知是文件夹的问题还是自己操作的问题.但是确实,没有导入成功. 1. 查看闭合,看源码,发现闭合是((‘ ’)): 2.查看所在 ...

- linux 服务器与客户端异常断开连接问题

服务器与客户端连接,客户端异常断掉之后服务器端口仍然被占用, 到最后是不是服务器端达到最大连接数就没法连接了?领导让我测试这种情况,我用自己的电脑当TCP Client,虚拟机当服务器,连接之后能正常 ...

- Thinkphp 请求和响应

一. Request对象获取方法 1. request() 助手函数获取 2. think\Request 类获取 3.利用框架注入Request对象 Request方法时单利方法 在think框架 ...

- centos 6.5 安装 dubbo 管理中心

从http://pan.baidu.com/s/1dDlI7aL下载dubbo-admin-2.5.4.war包,将下载的包放在tomcat的webapps目录,启动tomcat自动解压该war包,然 ...

- R reticulate 设置 python 环境

library("reticulate") use_python("/usr/bin/python", required = T) py_config() 注意 ...