Linear Regression and Gradient Descent (English version)

1.Problem and Loss Function

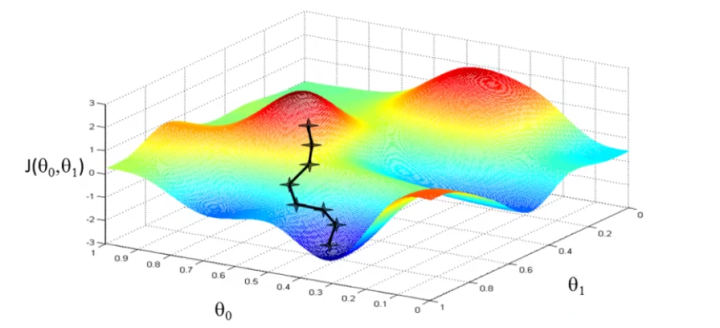

From the initial state, we probably have a really poor system (may be only output zero). By using X and Y to train, we try to derive a better parameter θ. The training process (learning process) may be time-consuming, because the algorithm updates parameters only a little on every training step.

2. Cost Function?

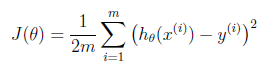

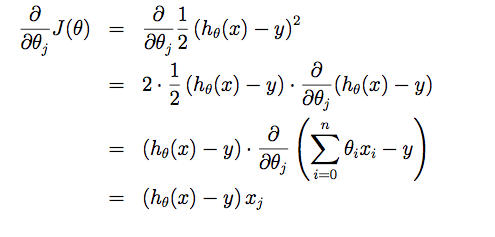

Suppose driving from somewhere to Toronto: it is easy to know the coordinates of Toronto, but it is more important to know where we are now! Cost function is the tool giving us how different between Hypothesis and label Y, so that we can drive to the target. For regression problem, we use MSE as the cost function.

Linear Regression and Gradient Descent (English version)的更多相关文章

- Linear Regression Using Gradient Descent 代码实现

参考吴恩达<机器学习>, 进行 Octave, Python(Numpy), C++(Eigen) 的原理实现, 同时用 scikit-learn, TensorFlow, dlib 进行 ...

- 线性回归、梯度下降(Linear Regression、Gradient Descent)

转载请注明出自BYRans博客:http://www.cnblogs.com/BYRans/ 实例 首先举个例子,假设我们有一个二手房交易记录的数据集,已知房屋面积.卧室数量和房屋的交易价格,如下表: ...

- 斯坦福机器学习视频笔记 Week1 Linear Regression and Gradient Descent

最近开始学习Coursera上的斯坦福机器学习视频,我是刚刚接触机器学习,对此比较感兴趣:准备将我的学习笔记写下来, 作为我每天学习的签到吧,也希望和各位朋友交流学习. 这一系列的博客,我会不定期的更 ...

- 斯坦福机器学习视频笔记 Week1 线性回归和梯度下降 Linear Regression and Gradient Descent

最近开始学习Coursera上的斯坦福机器学习视频,我是刚刚接触机器学习,对此比较感兴趣:准备将我的学习笔记写下来, 作为我每天学习的签到吧,也希望和各位朋友交流学习. 这一系列的博客,我会不定期的更 ...

- Linear Regression and Gradient Descent

随着所学算法的增多,加之使用次数的增多,不时对之前所学的算法有新的理解.这篇博文是在2018年4月17日再次编辑,将之前的3篇博文合并为一篇. 1.Problem and Loss Function ...

- Logistic Regression and Gradient Descent

Logistic Regression and Gradient Descent Logistic regression is an excellent tool to know for classi ...

- Logistic Regression Using Gradient Descent -- Binary Classification 代码实现

1. 原理 Cost function Theta 2. Python # -*- coding:utf8 -*- import numpy as np import matplotlib.pyplo ...

- flink 批量梯度下降算法线性回归参数求解(Linear Regression with BGD(batch gradient descent) )

1.线性回归 假设线性函数如下: 假设我们有10个样本x1,y1),(x2,y2).....(x10,y10),求解目标就是根据多个样本求解theta0和theta1的最优值. 什么样的θ最好的呢?最 ...

- machine learning (7)---normal equation相对于gradient descent而言求解linear regression问题的另一种方式

Normal equation: 一种用来linear regression问题的求解Θ的方法,另一种可以是gradient descent 仅适用于linear regression问题的求解,对其 ...

随机推荐

- 提交代码到github

1. 下载git 点击download下载即可.下载地址:https://gitforwindows.org/ 2. 注册github github地址:https://github.com/ 一定要 ...

- Manacher(最长递减回文串)

http://acm.hdu.edu.cn/showproblem.php?pid=4513 Problem Description 吉哥又想出了一个新的完美队形游戏! 假设有n个人按顺序站在他的面前 ...

- Codeforces 1110C (思维+数论)

题面 传送门 分析 这种数据范围比较大的题最好的方法是先暴力打表找规律 通过打表,可以发现规律如下: 定义\(x=2^{log_2a+1}\) (注意,cf官方题解这里写错了,官方题解中定义\(x=2 ...

- BZOJ 4821 (luogu 3707)(全网最简洁的代码实现之一)

题面 传送门 分析 计算的部分其他博客已经写的很清楚了,本博客主要提供一个简洁的实现方法 尤其是pushdown函数写得很简洁 代码 #include<iostream> #include ...

- 凸包模板——Graham扫描法

凸包模板--Graham扫描法 First 标签: 数学方法--计算几何 题目:洛谷P2742[模板]二维凸包/[USACO5.1]圈奶牛Fencing the Cows yyb的讲解:https:/ ...

- POJ 2528 Mayor's posters(线段树,区间覆盖,单点查询)

Mayor's posters Time Limit: 1000MS Memory Limit: 65536K Total Submissions: 45703 Accepted: 13239 ...

- CGAffineTransform 图像处理类

CGAffineTransform 介绍 概述 CGAffineTransform是一个用于处理形变的类,其可以改变控件的平移.缩放.旋转等,其坐标系统采用的是二维坐标系,即向右为x轴正方向,向下为y ...

- javaweb各种框架组合案例(五):springboot+mybatis+generator

一.介绍 1.springboot是spring项目的总结+整合 当我们搭smm,ssh,ssjdbc等组合框架时,各种配置不胜其烦,不仅是配置问题,在添加各种依赖时也是让人头疼,关键有些jar包之间 ...

- shlwapi.h文件夹文件是否存在

{ if( NULL == lpszFileName) { return FALSE; } if (PathFileExists(lpszFileName)) { return TRUE; } els ...

- Go 使用切片

1.使用切片字面量来声明切片 // 创建一个整型切片 // 其容量和长度都是 5 个元素 slice := [], , , , } // 改变索引为 1 的元素的值 slice[] = 2.使用切片创 ...