第8章 Spark SQL实战

第8章 Spark SQL实战

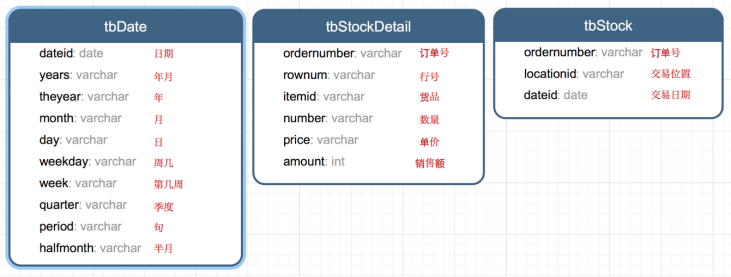

8.1 数据说明

数据集是货品交易数据集。

每个订单可能包含多个货品,每个订单可以产生多次交易,不同的货品有不同的单价。

8.2 加载数据

tbStock:

scala> case class tbStock(ordernumber:String,locationid:String,dateid:String) extends Serializable

defined class tbStock scala> val tbStockRdd = spark.sparkContext.textFile("tbStock.txt")

tbStockRdd: org.apache.spark.rdd.RDD[String] = tbStock.txt MapPartitionsRDD[1] at textFile at <console>:23 scala> val tbStockDS = tbStockRdd.map(_.split(",")).map(attr=>tbStock(attr(0),attr(1),attr(2))).toDS

tbStockDS: org.apache.spark.sql.Dataset[tbStock] = [ordernumber: string, locationid: string ... 1 more field] scala> tbStockDS.show()

+------------+----------+---------+

| ordernumber|locationid| dataid|

+------------+----------+---------+

|BYSL00000893| ZHAO|2007-8-23|

|BYSL00000897| ZHAO|2007-8-24|

|BYSL00000898| ZHAO|2007-8-25|

|BYSL00000899| ZHAO|2007-8-26|

|BYSL00000900| ZHAO|2007-8-26|

|BYSL00000901| ZHAO|2007-8-27|

|BYSL00000902| ZHAO|2007-8-27|

|BYSL00000904| ZHAO|2007-8-28|

|BYSL00000905| ZHAO|2007-8-28|

|BYSL00000906| ZHAO|2007-8-28|

|BYSL00000907| ZHAO|2007-8-29|

|BYSL00000908| ZHAO|2007-8-30|

|BYSL00000909| ZHAO| 2007-9-1|

|BYSL00000910| ZHAO| 2007-9-1|

|BYSL00000911| ZHAO|2007-8-31|

|BYSL00000912| ZHAO| 2007-9-2|

|BYSL00000913| ZHAO| 2007-9-3|

|BYSL00000914| ZHAO| 2007-9-3|

|BYSL00000915| ZHAO| 2007-9-4|

|BYSL00000916| ZHAO| 2007-9-4|

+------------+----------+---------+

only showing top 20 rows

tbStockDetail:

scala> case class tbStockDetail(ordernumber:String, rownum:Int, itemid:String, number:Int, price:Double, amount:Double) extends Serializable

defined class tbStockDetail scala> val tbStockDetailRdd = spark.sparkContext.textFile("tbStockDetail.txt")

tbStockDetailRdd: org.apache.spark.rdd.RDD[String] = tbStockDetail.txt MapPartitionsRDD[13] at textFile at <console>:23 scala> val tbStockDetailDS = tbStockDetailRdd.map(_.split(",")).map(attr=> tbStockDetail(attr(0),attr(1).trim().toInt,attr(2),attr(3).trim().toInt,attr(4).trim().toDouble, attr(5).trim().toDouble)).toDS

tbStockDetailDS: org.apache.spark.sql.Dataset[tbStockDetail] = [ordernumber: string, rownum: int ... 4 more fields] scala> tbStockDetailDS.show()

+------------+------+--------------+------+-----+------+

| ordernumber|rownum| itemid|number|price|amount|

+------------+------+--------------+------+-----+------+

|BYSL00000893| 0|FS527258160501| -1|268.0|-268.0|

|BYSL00000893| 1|FS527258169701| 1|268.0| 268.0|

|BYSL00000893| 2|FS527230163001| 1|198.0| 198.0|

|BYSL00000893| 3|24627209125406| 1|298.0| 298.0|

|BYSL00000893| 4|K9527220210202| 1|120.0| 120.0|

|BYSL00000893| 5|01527291670102| 1|268.0| 268.0|

|BYSL00000893| 6|QY527271800242| 1|158.0| 158.0|

|BYSL00000893| 7|ST040000010000| 8| 0.0| 0.0|

|BYSL00000897| 0|04527200711305| 1|198.0| 198.0|

|BYSL00000897| 1|MY627234650201| 1|120.0| 120.0|

|BYSL00000897| 2|01227111791001| 1|249.0| 249.0|

|BYSL00000897| 3|MY627234610402| 1|120.0| 120.0|

|BYSL00000897| 4|01527282681202| 1|268.0| 268.0|

|BYSL00000897| 5|84126182820102| 1|158.0| 158.0|

|BYSL00000897| 6|K9127105010402| 1|239.0| 239.0|

|BYSL00000897| 7|QY127175210405| 1|199.0| 199.0|

|BYSL00000897| 8|24127151630206| 1|299.0| 299.0|

|BYSL00000897| 9|G1126101350002| 1|158.0| 158.0|

|BYSL00000897| 10|FS527258160501| 1|198.0| 198.0|

|BYSL00000897| 11|ST040000010000| 13| 0.0| 0.0|

+------------+------+--------------+------+-----+------+

only showing top 20 rows

tbDate:

scala> case class tbDate(dateid:String, years:Int, theyear:Int, month:Int, day:Int, weekday:Int, week:Int, quarter:Int, period:Int, halfmonth:Int) extends Serializable

defined class tbDate scala> val tbDateRdd = spark.sparkContext.textFile("tbDate.txt")

tbDateRdd: org.apache.spark.rdd.RDD[String] = tbDate.txt MapPartitionsRDD[20] at textFile at <console>:23 scala> val tbDateDS = tbDateRdd.map(_.split(",")).map(attr=> tbDate(attr(0),attr(1).trim().toInt, attr(2).trim().toInt,attr(3).trim().toInt, attr(4).trim().toInt, attr(5).trim().toInt, attr(6).trim().toInt, attr(7).trim().toInt, attr(8).trim().toInt, attr(9).trim().toInt)).toDS

tbDateDS: org.apache.spark.sql.Dataset[tbDate] = [dateid: string, years: int ... 8 more fields] scala> tbDateDS.show()

+---------+------+-------+-----+---+-------+----+-------+------+---------+

| dateid| years|theyear|month|day|weekday|week|quarter|period|halfmonth|

+---------+------+-------+-----+---+-------+----+-------+------+---------+

| 2003-1-1|200301| 2003| 1| 1| 3| 1| 1| 1| 1|

| 2003-1-2|200301| 2003| 1| 2| 4| 1| 1| 1| 1|

| 2003-1-3|200301| 2003| 1| 3| 5| 1| 1| 1| 1|

| 2003-1-4|200301| 2003| 1| 4| 6| 1| 1| 1| 1|

| 2003-1-5|200301| 2003| 1| 5| 7| 1| 1| 1| 1|

| 2003-1-6|200301| 2003| 1| 6| 1| 2| 1| 1| 1|

| 2003-1-7|200301| 2003| 1| 7| 2| 2| 1| 1| 1|

| 2003-1-8|200301| 2003| 1| 8| 3| 2| 1| 1| 1|

| 2003-1-9|200301| 2003| 1| 9| 4| 2| 1| 1| 1|

|2003-1-10|200301| 2003| 1| 10| 5| 2| 1| 1| 1|

|2003-1-11|200301| 2003| 1| 11| 6| 2| 1| 2| 1|

|2003-1-12|200301| 2003| 1| 12| 7| 2| 1| 2| 1|

|2003-1-13|200301| 2003| 1| 13| 1| 3| 1| 2| 1|

|2003-1-14|200301| 2003| 1| 14| 2| 3| 1| 2| 1|

|2003-1-15|200301| 2003| 1| 15| 3| 3| 1| 2| 1|

|2003-1-16|200301| 2003| 1| 16| 4| 3| 1| 2| 2|

|2003-1-17|200301| 2003| 1| 17| 5| 3| 1| 2| 2|

|2003-1-18|200301| 2003| 1| 18| 6| 3| 1| 2| 2|

|2003-1-19|200301| 2003| 1| 19| 7| 3| 1| 2| 2|

|2003-1-20|200301| 2003| 1| 20| 1| 4| 1| 2| 2|

+---------+------+-------+-----+---+-------+----+-------+------+---------+

only showing top 20 rows

注册表:

scala> tbStockDS.createOrReplaceTempView("tbStock")

scala> tbDateDS.createOrReplaceTempView("tbDate")

scala> tbStockDetailDS.createOrReplaceTempView("tbStockDetail")

8.3 计算所有订单中每年的销售单数、销售总额

统计所有订单中每年的销售单数、销售总额

三个表连接后以count(distinct a.ordernumber)计销售单数,sum(b.amount)计销售总额

SELECT c.theyear, COUNT(DISTINCT a.ordernumber), SUM(b.amount)

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

JOIN tbDate c ON a.dateid = c.dateid

GROUP BY c.theyear

ORDER BY c.theyear

spark.sql("SELECT c.theyear, COUNT(DISTINCT a.ordernumber), SUM(b.amount) FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber JOIN tbDate c ON a.dateid = c.dateid GROUP BY c.theyear ORDER BY c.theyear").show

结果如下:

+-------+---------------------------+--------------------+

|theyear|count(DISTINCT ordernumber)| sum(amount)|

+-------+---------------------------+--------------------+

| 2004| 1094| 3268115.499199999|

| 2005| 3828|1.3257564149999991E7|

| 2006| 3772|1.3680982900000006E7|

| 2007| 4885|1.6719354559999993E7|

| 2008| 4861| 1.467429530000001E7|

| 2009| 2619| 6323697.189999999|

| 2010| 94| 210949.65999999997|

+-------+---------------------------+--------------------+

8.4 计算所有订单每年最大金额订单的销售额

目标:统计每年最大金额订单的销售额:

1)统计每年,每个订单一共有多少销售额

SELECT a.dateid, a.ordernumber, SUM(b.amount) AS SumOfAmount

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

GROUP BY a.dateid, a.ordernumber

spark.sql("SELECT a.dateid, a.ordernumber, SUM(b.amount) AS SumOfAmount FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber GROUP BY a.dateid, a.ordernumber").show

结果如下:

+----------+------------+------------------+

| dateid| ordernumber| SumOfAmount|

+----------+------------+------------------+

| 2008-4-9|BYSL00001175| 350.0|

| 2008-5-12|BYSL00001214| 592.0|

| 2008-7-29|BYSL00011545| 2064.0|

| 2008-9-5|DGSL00012056| 1782.0|

| 2008-12-1|DGSL00013189| 318.0|

|2008-12-18|DGSL00013374| 963.0|

| 2009-8-9|DGSL00015223| 4655.0|

| 2009-10-5|DGSL00015585| 3445.0|

| 2010-1-14|DGSL00016374| 2934.0|

| 2006-9-24|GCSL00000673|3556.1000000000004|

| 2007-1-26|GCSL00000826| 9375.199999999999|

| 2007-5-24|GCSL00001020| 6171.300000000002|

| 2008-1-8|GCSL00001217| 7601.6|

| 2008-9-16|GCSL00012204| 2018.0|

| 2006-7-27|GHSL00000603| 2835.6|

|2006-11-15|GHSL00000741| 3951.94|

| 2007-6-6|GHSL00001149| 0.0|

| 2008-4-18|GHSL00001631| 12.0|

| 2008-7-15|GHSL00011367| 578.0|

| 2009-5-8|GHSL00014637| 1797.6|

+----------+------------+------------------+

2)以上一步查询结果为基础表,和表tbDate使用dateid join,求出每年最大金额订单的销售额

SELECT theyear, MAX(c.SumOfAmount) AS SumOfAmount

FROM (SELECT a.dateid, a.ordernumber, SUM(b.amount) AS SumOfAmount

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

GROUP BY a.dateid, a.ordernumber

) c

JOIN tbDate d ON c.dateid = d.dateid

GROUP BY theyear

ORDER BY theyear DESC

spark.sql("SELECT theyear, MAX(c.SumOfAmount) AS SumOfAmount FROM (SELECT a.dateid, a.ordernumber, SUM(b.amount) AS SumOfAmount FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber GROUP BY a.dateid, a.ordernumber ) c JOIN tbDate d ON c.dateid = d.dateid GROUP BY theyear ORDER BY theyear DESC").show

结果如下:

+-------+------------------+

|theyear| SumOfAmount|

+-------+------------------+

| 2010|13065.280000000002|

| 2009|25813.200000000008|

| 2008| 55828.0|

| 2007| 159126.0|

| 2006| 36124.0|

| 2005|38186.399999999994|

| 2004| 23656.79999999997|

+-------+------------------+

8.5 计算所有订单中每年最畅销货品

目标:统计每年最畅销货品(哪个货品销售额amount在当年最高,哪个就是最畅销货品)

第一步、求出每年每个货品的销售额

SELECT c.theyear, b.itemid, SUM(b.amount) AS SumOfAmount

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

JOIN tbDate c ON a.dateid = c.dateid

GROUP BY c.theyear, b.itemid

spark.sql("SELECT c.theyear, b.itemid, SUM(b.amount) AS SumOfAmount FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber JOIN tbDate c ON a.dateid = c.dateid GROUP BY c.theyear, b.itemid").show

结果如下:

+-------+--------------+------------------+

|theyear| itemid| SumOfAmount|

+-------+--------------+------------------+

| 2004|43824480810202| 4474.72|

| 2006|YA214325360101| 556.0|

| 2006|BT624202120102| 360.0|

| 2007|AK215371910101|24603.639999999992|

| 2008|AK216169120201|29144.199999999997|

| 2008|YL526228310106|16073.099999999999|

| 2009|KM529221590106| 5124.800000000001|

| 2004|HT224181030201|2898.6000000000004|

| 2004|SG224308320206| 7307.06|

| 2007|04426485470201|14468.800000000001|

| 2007|84326389100102| 9134.11|

| 2007|B4426438020201| 19884.2|

| 2008|YL427437320101|12331.799999999997|

| 2008|MH215303070101| 8827.0|

| 2009|YL629228280106| 12698.4|

| 2009|BL529298020602| 2415.8|

| 2009|F5127363019006| 614.0|

| 2005|24425428180101| 34890.74|

| 2007|YA214127270101| 240.0|

| 2007|MY127134830105| 11099.92|

+-------+--------------+------------------+

第二步、在第一步的基础上,统计每年单个货品中的最大金额

SELECT d.theyear, MAX(d.SumOfAmount) AS MaxOfAmount

FROM (SELECT c.theyear, b.itemid, SUM(b.amount) AS SumOfAmount

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

JOIN tbDate c ON a.dateid = c.dateid

GROUP BY c.theyear, b.itemid

) d

GROUP BY d.theyear

spark.sql("SELECT d.theyear, MAX(d.SumOfAmount) AS MaxOfAmount FROM (SELECT c.theyear, b.itemid, SUM(b.amount) AS SumOfAmount FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber JOIN tbDate c ON a.dateid = c.dateid GROUP BY c.theyear, b.itemid ) d GROUP BY d.theyear").show

结果如下:

+-------+------------------+

|theyear| MaxOfAmount|

+-------+------------------+

| 2007| 70225.1|

| 2006| 113720.6|

| 2004|53401.759999999995|

| 2009| 30029.2|

| 2005|56627.329999999994|

| 2010| 4494.0|

| 2008| 98003.60000000003|

+-------+------------------+

第三步、用最大销售额和统计好的每个货品的销售额join,以及用年join,集合得到最畅销货品那一行信息

SELECT DISTINCT e.theyear, e.itemid, f.MaxOfAmount

FROM (SELECT c.theyear, b.itemid, SUM(b.amount) AS SumOfAmount

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

JOIN tbDate c ON a.dateid = c.dateid

GROUP BY c.theyear, b.itemid

) e

JOIN (SELECT d.theyear, MAX(d.SumOfAmount) AS MaxOfAmount

FROM (SELECT c.theyear, b.itemid, SUM(b.amount) AS SumOfAmount

FROM tbStock a

JOIN tbStockDetail b ON a.ordernumber = b.ordernumber

JOIN tbDate c ON a.dateid = c.dateid

GROUP BY c.theyear, b.itemid

) d

GROUP BY d.theyear

) f ON e.theyear = f.theyear

AND e.SumOfAmount = f.MaxOfAmount

ORDER BY e.theyear

spark.sql("SELECT DISTINCT e.theyear, e.itemid, f.maxofamount FROM (SELECT c.theyear, b.itemid, SUM(b.amount) AS sumofamount FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber JOIN tbDate c ON a.dateid = c.dateid GROUP BY c.theyear, b.itemid ) e JOIN (SELECT d.theyear, MAX(d.sumofamount) AS maxofamount FROM (SELECT c.theyear, b.itemid, SUM(b.amount) AS sumofamount FROM tbStock a JOIN tbStockDetail b ON a.ordernumber = b.ordernumber JOIN tbDate c ON a.dateid = c.dateid GROUP BY c.theyear, b.itemid ) d GROUP BY d.theyear ) f ON e.theyear = f.theyear AND e.sumofamount = f.maxofamount ORDER BY e.theyear").show

结果如下:

+-------+--------------+------------------+

|theyear| itemid| maxofamount|

+-------+--------------+------------------+

| 2004|JY424420810101|53401.759999999995|

| 2005|24124118880102|56627.329999999994|

| 2006|JY425468460101| 113720.6|

| 2007|JY425468460101| 70225.1|

| 2008|E2628204040101| 98003.60000000003|

| 2009|YL327439080102| 30029.2|

| 2010|SQ429425090101| 4494.0|

+-------+--------------+------------------+

第8章 Spark SQL实战的更多相关文章

- 第7章 Spark SQL 的运行原理(了解)

第7章 Spark SQL 的运行原理(了解) 7.1 Spark SQL运行架构 Spark SQL对SQL语句的处理和关系型数据库类似,即词法/语法解析.绑定.优化.执行.Spark SQL会先将 ...

- 第1章 Spark SQL概述

第1章 Spark SQL概述 1.1 什么是Spark SQL Spark SQL是Spark用来处理结构化数据的一个模块,它提供了一个编程抽象叫做DataFrame并且作为分布式SQL查询引擎的作 ...

- Spark SQL实战

一.程序 package sparklearning import org.apache.log4j.Logger import org.apache.spark.SparkConf import o ...

- 大数据技术之_19_Spark学习_03_Spark SQL 应用解析 + Spark SQL 概述、解析 、数据源、实战 + 执行 Spark SQL 查询 + JDBC/ODBC 服务器

第1章 Spark SQL 概述1.1 什么是 Spark SQL1.2 RDD vs DataFrames vs DataSet1.2.1 RDD1.2.2 DataFrame1.2.3 DataS ...

- Spark SQL知识点大全与实战

Spark SQL概述 1.什么是Spark SQL Spark SQL是Spark用于结构化数据(structured data)处理的Spark模块. 与基本的Spark RDD API不同,Sp ...

- Spark SQL知识点与实战

Spark SQL概述 1.什么是Spark SQL Spark SQL是Spark用于结构化数据(structured data)处理的Spark模块. 与基本的Spark RDD API不同,Sp ...

- 以慕课网日志分析为例-进入大数据Spark SQL的世界

下载地址.请联系群主 第1章 初探大数据 本章将介绍为什么要学习大数据.如何学好大数据.如何快速转型大数据岗位.本项目实战课程的内容安排.本项目实战课程的前置内容介绍.开发环境介绍.同时为大家介绍项目 ...

- 以某课网日志分析为例 进入大数据 Spark SQL 的世界

第1章 初探大数据 本章将介绍为什么要学习大数据.如何学好大数据.如何快速转型大数据岗位.本项目实战课程的内容安排.本项目实战课程的前置内容介绍.开发环境介绍.同时为大家介绍项目中涉及的Hadoop. ...

- Spark SQL 源代码分析系列

从决定写Spark SQL文章的源代码分析,到现在一个月的时间,一个又一个几乎相同的结束很快,在这里也做了一个综合指数,方便阅读,下面是读取顺序 :) 第一章 Spark SQL源代码分析之核心流程 ...

随机推荐

- Oracle DataGuard故障转移(failover)后使用RMAN还原失败的主库

(一)DG故障转移后切换为备库的方法 在DG执行故障转移之后,主库与从库的关系就被破坏了.这个时候如果要恢复主从关系,可以使用下面的3种方法: 将失败的主库重新搭建为备库,该方法比较耗时: 使用数据库 ...

- 每日一道 LeetCode (1):两数之和

引言 前段时间看到一篇刷 LeetCode 的文章,感触很深,我本身自己上大学的时候,没怎么研究过算法这一方面,导致自己直到现在算法都不咋地. 一直有心想填补下自己的这个短板,实际上又一直给自己找理由 ...

- day19:os模块&shutil模块&tarfile模块

os模块:对系统进行操作(6+3) system popen listdir getcwd chdir environ / name sep linesep import os #### ...

- 下载excel模板,导入数据时需要用到

页面代码: <form id="form1" enctype="multipart/form-data"> <div style=" ...

- 7.9 NOI模拟赛 C.走路 背包 dp 特异性

(啊啊啊 什么考试的时候突然降智这题目硬生生没想出来. 容易发现是先走到某个地方 然后再走回来的 然后在倒着走的路径上选择一些点使得最后的得到的最多. 设\(f_{i,j}\)表示到达i这个点选择的价 ...

- luogu P3223 [HNOI2012]排队

LINK:排队\ 原谅我没学过组合数学 没有高中数学基础水平... 不过凭着隔板法的应用还是可以推出来的. 首先考虑女生 发现一个排列数m! 两个女生不能相邻 那么理论上来说存在无解的情况 而这道题好 ...

- 文件操作之File 和 Path

转载:https://blog.csdn.net/u010889616/article/details/52694061 Java7中文件IO发生了很大的变化,专门引入了很多新的类: import j ...

- Linux下利用docker搭建elasticsearch(单节点)

1. 拉取镜像 #elasticsearch 6.x和7.x版本有很多不一样需要确认 docker pull docker.elastic.co/elasticsearch/elasticsearch ...

- Django自学教程PDF高清电子书百度云网盘免费领取

点击获取提取码:x3di 你一定可以学会,Django 很简单! <Django自学教程>的作者学习了全部的 Django英文的官方文档,觉得国内比较好的Django学习资源不多,所以决定 ...

- jmeter如何设置全局变量

场景:性能测试或者接口测试,如果想跨线程引用(案例:A线程组里面的一个输出,是B线程组里面的一个输入,这个时候如果要引用),这个时候你就必须要设置全局变量;全链路压测也需要分不同场景,通常情况,一个场 ...