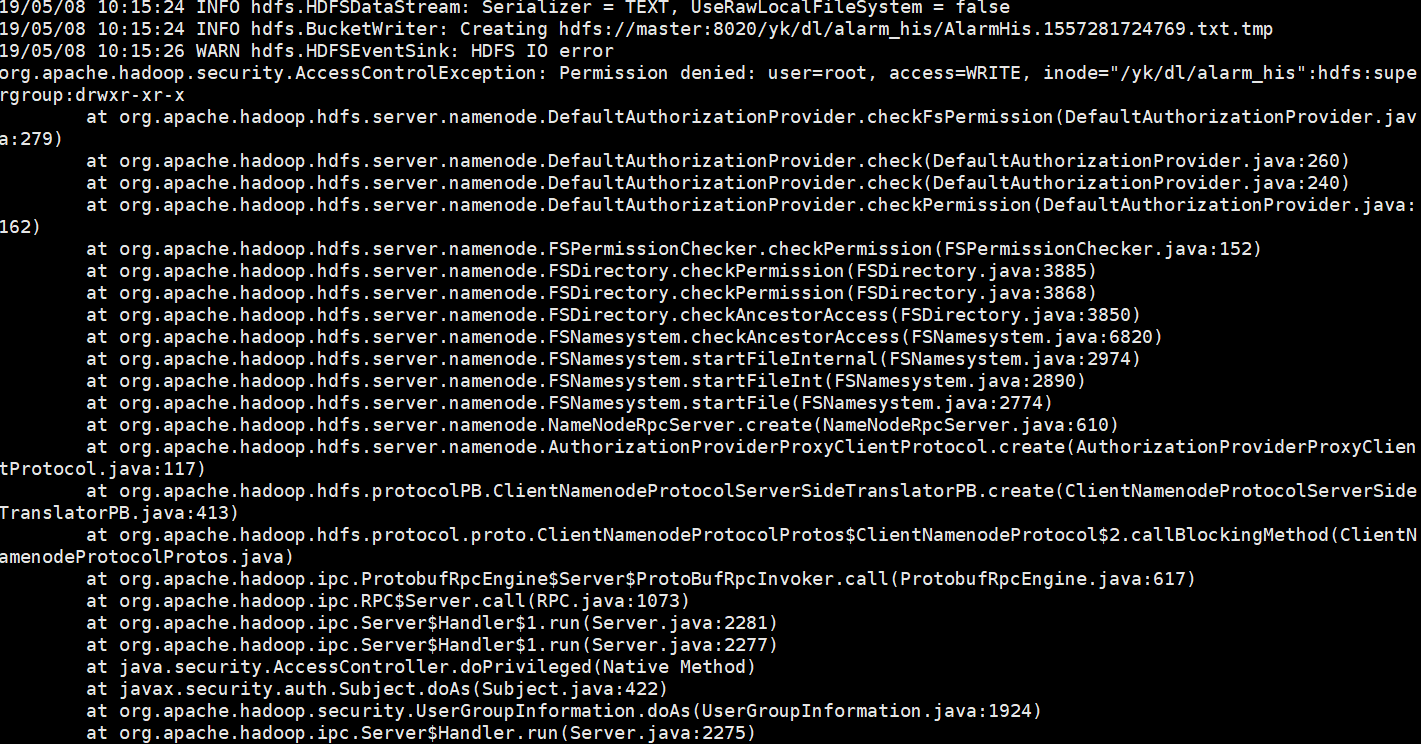

报错:HDFS IO error org.apache.hadoop.security.AccessControlException: Permission denied: user=root, access=WRITE, inode="/yk/dl/alarm_his":hdfs:supergroup:drwxr-xr-x

报错背景:

CDH集成了Flume服务,准备通过Flume将kafka中的数据放到HDFS中,

启动Flume的时候报错。

报错现象:

// :: INFO hdfs.HDFSDataStream: Serializer = TEXT, UseRawLocalFileSystem = false

// :: INFO hdfs.BucketWriter: Creating hdfs://master:8020/yk/dl/alarm_his/AlarmHis.1557281724769.txt.tmp

// :: WARN hdfs.HDFSEventSink: HDFS IO error

org.apache.hadoop.security.AccessControlException: Permission denied: user=root, access=WRITE, inode="/yk/dl/alarm_his":hdfs:supergroup:drwxr-xr-x

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkFsPermission(DefaultAuthorizationProvider.java:)

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.check(DefaultAuthorizationProvider.java:)

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.check(DefaultAuthorizationProvider.java:)

at org.apache.hadoop.hdfs.server.namenode.DefaultAuthorizationProvider.checkPermission(DefaultAuthorizationProvider.java:)

at org.apache.hadoop.hdfs.server.namenode.FSPermissionChecker.checkPermission(FSPermissionChecker.java:)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkPermission(FSDirectory.java:)

at org.apache.hadoop.hdfs.server.namenode.FSDirectory.checkAncestorAccess(FSDirectory.java:)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.checkAncestorAccess(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInternal(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFileInt(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.startFile(FSNamesystem.java:)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.create(NameNodeRpcServer.java:)

at org.apache.hadoop.hdfs.server.namenode.AuthorizationProviderProxyClientProtocol.create(AuthorizationProviderProxyClientProtocol.java:)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.create(ClientNamenodeProtocolServerSideTranslatorPB.java:)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:)

at org.apache.hadoop.ipc.Server$Handler$.run(Server.java:)

at org.apache.hadoop.ipc.Server$Handler$.run(Server.java:)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:) at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:)

at java.lang.reflect.Constructor.newInstance(Constructor.java:)

at org.apache.hadoop.ipc.RemoteException.instantiateException(RemoteException.java:)

at org.apache.hadoop.ipc.RemoteException.unwrapRemoteException(RemoteException.java:)

at org.apache.hadoop.hdfs.DFSOutputStream.newStreamForCreate(DFSOutputStream.java:)

at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:)

at org.apache.hadoop.hdfs.DFSClient.create(DFSClient.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem$.doCall(DistributedFileSystem.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem$.doCall(DistributedFileSystem.java:)

at org.apache.hadoop.fs.FileSystemLinkResolver.resolve(FileSystemLinkResolver.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem.create(DistributedFileSystem.java:)

at org.apache.hadoop.hdfs.DistributedFileSystem.create(DistributedFileSystem.java:)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:)

at org.apache.hadoop.fs.FileSystem.create(FileSystem.java:)

at org.apache.flume.sink.hdfs.HDFSDataStream.doOpen(HDFSDataStream.java:)

at org.apache.flume.sink.hdfs.HDFSDataStream.open(HDFSDataStream.java:)

at org.apache.flume.sink.hdfs.BucketWriter$.call(BucketWriter.java:)

at org.apache.flume.sink.hdfs.BucketWriter$.call(BucketWriter.java:)

at org.apache.flume.sink.hdfs.BucketWriter$$.run(BucketWriter.java:)

at org.apache.flume.auth.SimpleAuthenticator.execute(SimpleAuthenticator.java:)

at org.apache.flume.sink.hdfs.BucketWriter$.call(BucketWriter.java:)

at java.util.concurrent.FutureTask.run(FutureTask.java:)

at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:)

at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:)

at java.lang.Thread.run(Thread.java:)

报错原因:

根据日志信息可以解读出,root用户想要在文件系统里面创建一个目录,失败。

原因是,root用户无法操作hdfs

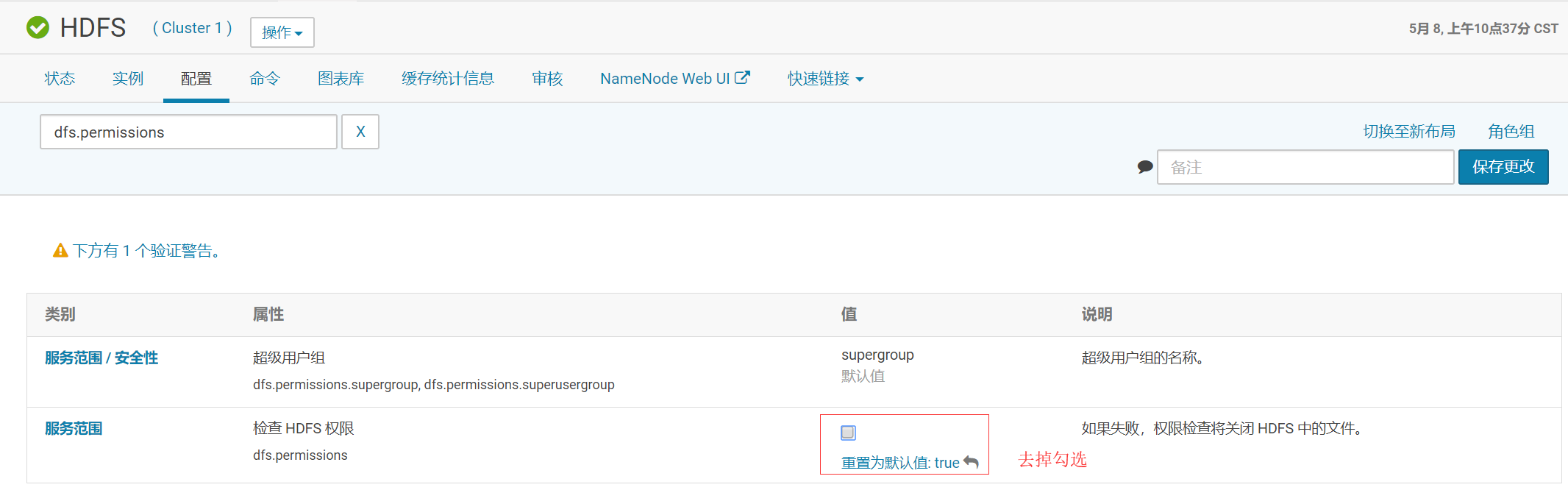

报错解决:

重启HDFS即可

报错:HDFS IO error org.apache.hadoop.security.AccessControlException: Permission denied: user=root, access=WRITE, inode="/yk/dl/alarm_his":hdfs:supergroup:drwxr-xr-x的更多相关文章

- kylin cube测试时,报错:org.apache.hadoop.security.AccessControlException: Permission denied: user=root, access=WRITE, inode="/user":hdfs:supergroup:drwxr-xr-x

异常: org.apache.hadoop.security.AccessControlException: Permission denied: user=root, access=WRITE, i ...

- 【Hadoop & Ecilpse】Exception in thread "main" org.apache.hadoop.security.AccessControlException: Permission denied: user=bruce, access=WRITE, inode="/out2/_temporary/0":atguigu:supergroup:drwxr-xr-x

问题再现: 使用本机 Ecilpse (Windows环境) 去访问远程 hadoop 集群出现以下异常: 问题原因: 因为远程提交的情况下如果没有 hadoop 的系统环境变量,就会读取当前主机的 ...

- 一脸懵逼加从入门到绝望学习hadoop之 org.apache.hadoop.ipc.RemoteException(org.apache.hadoop.security.AccessControlException): Permission denied: user=Administrator, access=WRITE, inode="/":root:supergroup:drwxr-xr报错

1:初学hadoop遇到各种错误,这里贴一下,方便以后脑补吧,报错如下: 主要是在window环境下面搞hadoop,而hadoop部署在linux操作系统上面:出现这个错误是权限的问题,操作hado ...

- Exception in thread "main" org.apache.hadoop.security.AccessControlException: Permission denied: user=lenovo, access=WRITE, inode="/user/hadoop/spark/people_savemode_test/_temporary/0":hadoop:supergro

保存文件时权限被拒绝 曾经踩过的坑: 保存结果到hdfs上没有写的权限 通过修改权限将文件写入到指定的目录下 * * * $HADOOP_HOME/bin/hdfs dfs -chmod 777 /u ...

- Exception in thread "main" org.apache.hadoop.security.AccessControlException: Permission denied: user=Mypc, access=WRITE, inode="/":fan:supergroup:drwxr-xr-x

在window上编程提示没有写Hadoop的权限 Exception in thread "main" org.apache.hadoop.security.AccessContr ...

- org.apache.hadoop.security.AccessControlException: org.apache.hadoop.security .AccessControlException: Permission denied: user=Administrator, access=WRITE, inode="hadoop": hadoop:supergroup:rwxr-xr-x

这时windows远程调试hadoop集群出现的这里 做个记录 我用改变系统变量的方法 修正了错误 网上搜索出来大概有三种: 1.在系统的环境变量或java JVM变量里面添加HADOOP_USE ...

- hadoop 3.x org.apache.hadoop.security.AccessControlException: Permission denied: user=Administrator, access=WRITE, inode="/":tele:supergroup:drwxr-xr-x

权限不足,上传文件时应当使用启动hadoop的账户,即在获取FileSystem时就应当指定用户 修改后的代码 public class Demo1 { public static void main ...

- 访问HDFS报错:org.apache.hadoop.security.AccessControlException: Permission denied

import org.apache.hadoop.conf.Configuration; import org.apache.hadoop.fs.FileSystem; import org.apac ...

- 从 "org.apache.hadoop.security.AccessControlException:Permission denied: user=..." 看Hadoop 的用户登陆认证

假设远程提交任务给Hadoop 可能会遇到 "org.apache.hadoop.security.AccessControlException:Permission denied: use ...

随机推荐

- Dubbo源码分析(5):ExtensionLoader

背景 Dubbo所有的模块加载是基于SPI机制的.在接口名的上一行加个@SPI注解表明要此模块要通过ExtensionLoader加载.基于SPI机制的扩展性比较好,在不修改原有代码,可以实现新模块的 ...

- shellshock溢出攻击

实验背景 2014年9月24日,Bash中发现了一个严重漏洞shellshock,该漏洞可用于许多系统,并且既可以远程也可以在本地触发.在本实验中,需要亲手重现攻击来理解该漏洞,并回答一些问题. 什么 ...

- Java - Annotation使用

本文转载于(这个写的很好):https://www.cnblogs.com/be-forward-to-help-others/p/6846821.html Annotation Annotation ...

- greenplum常见问题及解决方法

本文链接:https://blog.csdn.net/q936889811/article/details/85612046 文章目录 1.错误:数据库初始化:gpini ...

- learning AWT Jrame

import java.awt.*; public class FrameTest { public static void main(String[] args) { var f = new Fra ...

- 「LibreOJ NOI Round #2」单枪匹马

嘟嘟嘟 这题没卡带一个\(log\)的,那么就很水了. 然后我因为好长时间没写矩阵优化dp,就只敲了一个暴力分--看来复习还是很关键的啊. 这个函数显然是从后往前递推的,那么令第\(i\)位的分子分母 ...

- 51 Nod 1430 奇偶游戏(博弈)

1430 奇偶游戏 题目来源: CodeForces 基准时间限制:1 秒 空间限制:131072 KB 分值: 160 难度:6级算法题 收藏 关注 有n个城市,第i个城市有ai个人.Daenery ...

- OSPF外部实验详解

- 制作OpenFOAM计算结果的gif动画【转载】

转载自:http://blog.sina.com.cn/s/blog_6277cbbf0100niqi.html PS:对其中错误地方进行了修正 1.用ParaView将每一帧都输出成图片(File- ...

- 2018-2019-2 网络对抗技术 20165322 Exp7 网络欺诈防范

2018-2019-2 网络对抗技术 20165322 Exp7 网络欺诈防范 目录 实验原理 实验内容与步骤 简单应用SET工具建立冒名网站 ettercap DNS spoof 结合应用两种技术, ...