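Pitfalls I hit in a Sqoop export case

Case analysis:

Data on HDFS needs to be exported into a table in MySQL.

The virtual machine cluster consists of five nodes, centos1 through centos5.

Problem 1:

On centos1, export the data on HDFS into the MySQL instance running on centos1:

sqoop export \
--connect jdbc:mysql://centos1:3306/test \
--username root \
--password root \
--table order_uid \
--export-dir /user/hive/warehouse/test.db/order_uid/ \
--fields-terminated-by ','

Error: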

Error executing statement: java.sql.SQLException: Access denied for user 'root'@'centos1' (using password: YES)

I changed the host in the connection string to localhost:

sqoop export \
--connect jdbc:mysql://localhost:3306/test \
--username root \
--password root \
--table order_uid \
--export-dir /user/hive/warehouse/test.db/order_uid/ \
--fields-terminated-by ','

Error:

Error: java.io.IOException: com.mysql.jdbc.exceptions.jdbc4.MySQLSyntaxErrorException: Table 'test.order_uid' doesn't exist at

org.apache.sqoop.mapreduce.AsyncSqlRecordWriter.close(AsyncSqlRecordWriter.java:) at

org.apache.hadoop.mapred.MapTask$NewDirectOutputCollector.close(MapTask.java:) at

org.apache.hadoop.mapred.MapTask.runNewMapper(MapTask.java:) at org.apache.hadoop.mapred.MapTask.run(MapTask.java:) at

org.apache.hadoop.mapred.YarnChild$.run(YarnChild.java:) at java.security.AccessController.doPrivileged(Native Method) at

javax.security.auth.Subject.doAs(Subject.java:) at

...
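
Switching to `localhost` changes the error rather than fixing it. The export runs as map tasks on whichever cluster nodes YARN picks, and each task opens its own JDBC connection, so `localhost` is resolved on the task's node, not on the machine where the command was typed. The Sqoop user guide warns against `localhost` in `--connect` on a distributed cluster for this reason. That is a plausible explanation for the `Table 'test.order_uid' doesn't exist` error, although the run above does not confirm which node's MySQL was actually reached. A minimal guard might look like the sketch below; the helper name `check_connect_host` is made up for illustration and is not part of Sqoop.

```shell
# Hypothetical helper (not part of Sqoop): reject JDBC URLs whose host only
# makes sense on the machine that submits the job.
check_connect_host() {
  local url="$1"
  # Drop the "jdbc:mysql://" prefix, then everything from the first ':' or '/'.
  local host="${url#jdbc:mysql://}"
  host="${host%%[:/]*}"
  case "$host" in
    localhost|127.0.0.1|"")
      echo "refusing '$url': '$host' resolves differently on every node" >&2
      return 1
      ;;
    *)
      # Print the host name that every map task will try to reach.
      echo "$host"
      ;;
  esac
}
```

Calling `check_connect_host 'jdbc:mysql://centos1:3306/test'` prints `centos1`, while the `localhost` URL makes the function return a non-zero status, so a wrapper script can stop before submitting the job.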

Problem 2:

On centos3, export the data on HDFS into the MySQL instance on centos1:

sqoop export \
--connect jdbc:mysql://centos1:3306/test \
--username root \
--password root \
--table order_uid \
--export-dir /user/hive/warehouse/test.db/order_uid/ \
--fields-terminated-by ','

Error:

// :: ERROR mapreduce.ExportJobBase: Export job failed!

// :: ERROR tool.ExportTool: Error during export:

Export job failed!

at org.apache.sqoop.mapreduce.ExportJobBase.runExport(ExportJobBase.java:)

at org.apache.sqoop.manager.SqlManager.exportTable(SqlManager.java:)

at org.apache.sqoop.tool.ExportTool.exportTable(ExportTool.java:)

at org.apache.sqoop.tool.ExportTool.run(ExportTool.java:)

at org.apache.sqoop.Sqoop.run(Sqoop.java:)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:)

at org.apache.sqoop.Sqoop.main(Sqoop.java:)

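I found two suggested fixes online:

1. Someone suggested pointing the HDFS path at a specific file instead of the directory, so I changed the command to:

sqoop export \
--connect jdbc:mysql://centos1:3306/test \
--username root \
--password root \
--table order_uid \
--export-dir /user/hive/warehouse/test.db/order_uid/t1.dat \
--fields-terminated-by ','

Still the same error!

2. Change the character set of the MySQL table

I changed the character set of the order_uid table in MySQL on centos1 to utf-8 and re-ran the export. This time part of the data arrived in the MySQL table on centos1, but the job still failed: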

Job failed as tasks failed. failedMaps: failedReduces:

// :: INFO mapreduce.Job: Counters:

File System Counters

FILE: Number of bytes read=

FILE: Number of bytes written=

FILE: Number of read operations=

FILE: Number of large read operations=

FILE: Number of write operations=

HDFS: Number of bytes read=

HDFS: Number of bytes written=

HDFS: Number of read operations=

HDFS: Number of large read operations=

HDFS: Number of write operations=

Job Counters

Failed map tasks=

Killed map tasks=

Launched map tasks=

Data-local map tasks=

Rack-local map tasks=

Total time spent by all maps in occupied slots (ms)=

Total time spent by all reduces in occupied slots (ms)=

Total time spent by all map tasks (ms)=

Total vcore-milliseconds taken by all map tasks=

Total megabyte-milliseconds taken by all map tasks=

Map-Reduce Framework

Map input records=

Map output records=

Input split bytes=

Spilled Records=

Failed Shuffles=

Merged Map outputs=

GC time elapsed (ms)=

CPU time spent (ms)=

Physical memory (bytes) snapshot=

Virtual memory (bytes) snapshot=

Total committed heap usage (bytes)=

File Input Format Counters

Bytes Read=

File Output Format Counters

Bytes Written=

// :: INFO mapreduce.ExportJobBase: Transferred 1.1035 KB in 223.4219 seconds (5.0577 bytes/sec)

// :: INFO mapreduce.ExportJobBase: Exported records.

// :: ERROR mapreduce.ExportJobBase: Export job failed!

// :: ERROR tool.ExportTool: Error during export:

Export job failed!

at org.apache.sqoop.mapreduce.ExportJobBase.runExport(ExportJobBase.java:)

at org.apache.sqoop.manager.SqlManager.exportTable(SqlManager.java:)

at org.apache.sqoop.tool.ExportTool.exportTable(ExportTool.java:)

at org.apache.sqoop.tool.ExportTool.run(ExportTool.java:)

at org.apache.sqoop.Sqoop.run(Sqoop.java:)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:)

at org.apache.sqoop.Sqoop.main(Sqoop.java:)

With the number of map tasks set to 1:

sqoop export \
--connect jdbc:mysql://centos1:3306/test \
--username root \
--password root \
--table order_uid \
--export-dir /user/hive/warehouse/test.db/order_uid \
--fields-terminated-by ',' \
--m 1

It ran successfully!!!

Sqoop uses 4 map tasks by default, so in this setup a single map task succeeds while multiple map tasks fail. To narrow it down, I set the number of map tasks to 2 and tried again:

sqoop export \
--connect jdbc:mysql://centos1:3306/test \
--username root \
--password root \
--table order_uid \
--export-dir /user/hive/warehouse/test.db/order_uid \
--fields-terminated-by ',' \
--m 2

Result:

// :: INFO mapreduce.Job: Counters:

File System Counters

FILE: Number of bytes read=

FILE: Number of bytes written=

FILE: Number of read operations=

FILE: Number of large read operations=

FILE: Number of write operations=

HDFS: Number of bytes read=

HDFS: Number of bytes written=

HDFS: Number of read operations=

HDFS: Number of large read operations=

HDFS: Number of write operations=

Job Counters

Failed map tasks=

Launched map tasks=

Data-local map tasks=

Rack-local map tasks=

Total time spent by all maps in occupied slots (ms)=

Total time spent by all reduces in occupied slots (ms)=

Total time spent by all map tasks (ms)=

Total vcore-milliseconds taken by all map tasks=

Total megabyte-milliseconds taken by all map tasks=

Map-Reduce Framework

Map input records=

Map output records=

Input split bytes=

Spilled Records=

Failed Shuffles=

Merged Map outputs=

GC time elapsed (ms)=

CPU time spent (ms)=

Physical memory (bytes) snapshot=

Virtual memory (bytes) snapshot=

Total committed heap usage (bytes)=

File Input Format Counters

Bytes Read=

File Output Format Counters

Bytes Written=

// :: INFO mapreduce.ExportJobBase: Transferred bytes in 99.4048 seconds (7.0922 bytes/sec)

// :: INFO mapreduce.ExportJobBase: Exported 5 records.

// :: ERROR mapreduce.ExportJobBase: Export job failed!

// :: ERROR tool.ExportTool: Error during export:

Export job failed!

at org.apache.sqoop.mapreduce.ExportJobBase.runExport(ExportJobBase.java:)

at org.apache.sqoop.manager.SqlManager.exportTable(SqlManager.java:)

at org.apache.sqoop.tool.ExportTool.exportTable(ExportTool.java:)

at org.apache.sqoop.tool.ExportTool.run(ExportTool.java:)

at org.apache.sqoop.Sqoop.run(Sqoop.java:)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:)

at org.apache.sqoop.Sqoop.main(Sqoop.java:)

You can see that it successfully exported 5 records, even though the job as a whole was reported as failed!!!

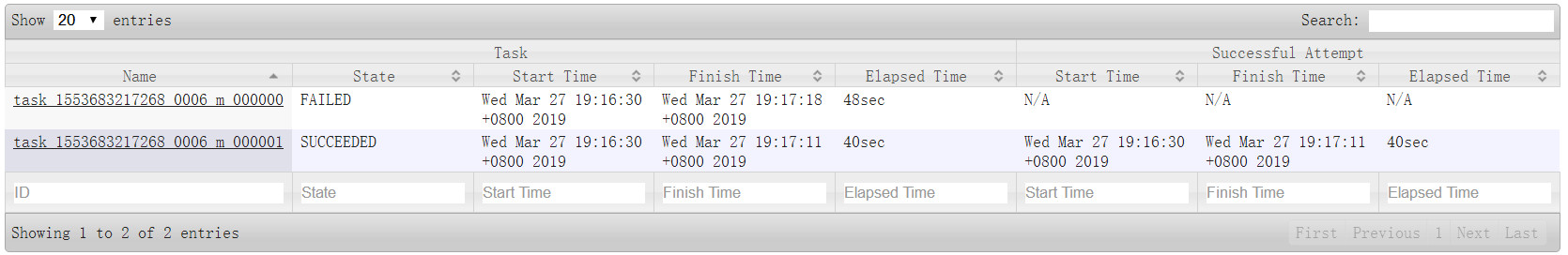

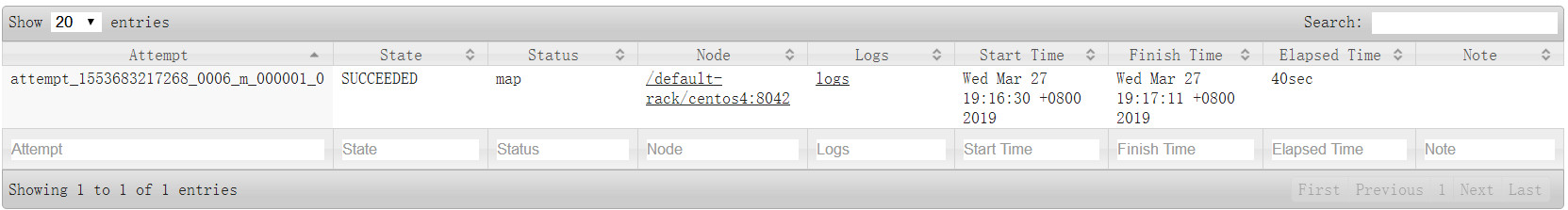

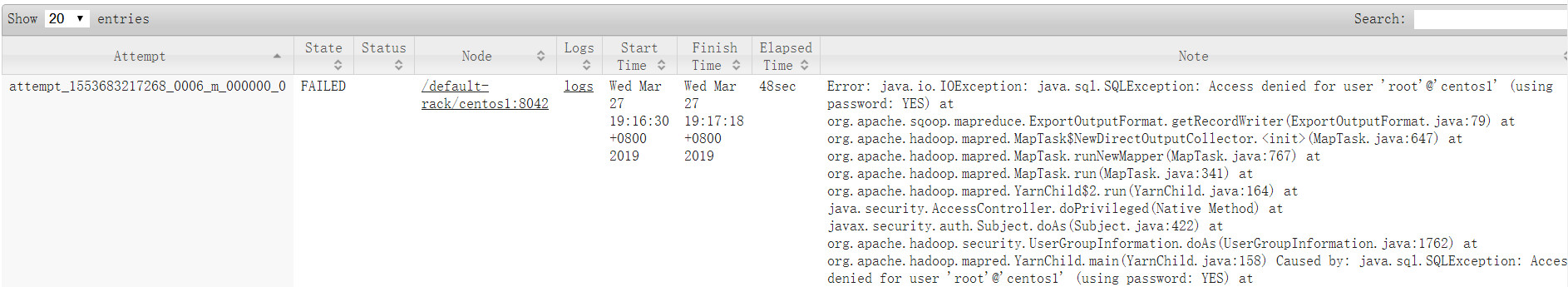

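The JobHistory page shows that, of the two map tasks, one succeeded and one failed.

Clicking on the task name shows where each one ran:

The successful task ran on the centos4 node, and the failed task ran on centos1. That leads straight back to problem 1: centos1 cannot access the MySQL instance on centos1!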
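
The same information can usually be pulled from the command line, assuming YARN log aggregation is enabled on the cluster (the original setup does not say whether it is). A MapReduce job ID and its YARN application ID share the same numeric suffix, so a small sketch like the following converts one into the other. The helper name `app_id_from_job` and the sample job ID are made up for illustration.

```shell
# Hypothetical helper: job_<timestamp>_<seq> -> application_<timestamp>_<seq>
app_id_from_job() {
  local job_id="$1"
  echo "application_${job_id#job_}"
}

# Usage on a cluster node. The job ID below is a made-up example; use the one
# Sqoop prints in its "Running job:" log line.
#   yarn logs -applicationId "$(app_id_from_job job_1500000000000_0001)" \
#     | grep -B2 -A10 'Access denied'
```

Searching the aggregated logs for `Access denied` should show the failing task attempt together with the host it connected from, without clicking through the web UI.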

In the end, a friend told me to add a separate grant on centos1 that allows access from the host centos1 itself:

grant all privileges on *.* to 'root'@'centos1' identified by 'root' with grant option;

flush privileges;

After re-running the export, both problem 1 and problem 2 were happily solved!!!
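
Since a map task can land on any node, the grant has to work from every node, including the database host itself. One rough way to confirm that after changing grants is sketched below. It assumes passwordless `ssh` between the nodes and a `mysql` client installed on each of them, neither of which is stated in the original setup; the function names are made up for illustration.

```shell
# Node names taken from the cluster described above (centos1 .. centos5).
cluster_nodes() {
  local i
  for i in 1 2 3 4 5; do
    echo "centos$i"
  done
}

# Run a trivial query against MySQL on centos1 from every node.
# Assumes passwordless ssh and a mysql client on each node.
probe_mysql_from_all_nodes() {
  local node
  for node in $(cluster_nodes); do
    if ssh -n "$node" "mysql -h centos1 -uroot -proot -e 'SELECT 1' test" >/dev/null 2>&1; then
      echo "$node: ok"
    else
      echo "$node: FAILED"
    fi
  done
}
```

Running `probe_mysql_from_all_nodes` before the fix would be expected to report a failure for centos1 only, which matches what the JobHistory page showed.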

Earlier, when setting things up, I had run the following in MySQL on centos1:

GRANT ALL PRIVILEGES ON *.* TO 'root'@'%' IDENTIFIED BY 'root' WITH GRANT OPTION;

flush privileges;

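That granted remote access to the other nodes, but it did not give centos1 access to its own MySQL instance under the host name centos1.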
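
A likely reason the `'root'@'%'` grant did not cover centos1: when several rows in `mysql.user` match an incoming connection, MySQL uses the row with the most specific host, and a literal host name is more specific than `%`. If the server already had a `'root'@'centos1'` row or an anonymous `''@'centos1'` row (default installations of older MySQL versions often create both), that row would be chosen for connections from centos1, and the password set on `'root'@'%'` would never be consulted. The post does not show the contents of `mysql.user`, so this is an assumption. The sketch below only prints a query for inspecting the table; the function name `account_check_sql` is made up.

```shell
# Hypothetical helper: print a query that lists the accounts which could match
# a connection coming from the given client host.
account_check_sql() {
  local host="$1"
  printf "SELECT User, Host FROM mysql.user WHERE Host IN ('%s', '%%', 'localhost') ORDER BY Host, User;\n" "$host"
}

# Usage on the database host (assumes a local root login works):
#   account_check_sql centos1 | mysql -uroot -p
```

If the output lists a `centos1` row in addition to the `%` row, the explicit `'root'@'centos1'` grant is what makes the login from centos1 succeed.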
