Hadoop 学习笔记3 Develping MapReduce

小笔记:

Mavon是一种项目管理工具,通过xml配置来设置项目信息。

Mavon POM(project of model).

Steps:

1. set up and configure the development environment.

2. writing your map and reduce functions and run them in local (standalone) mode from the command line or within your IDE.

3. unit test --> test on small dataset --> test on the full dataset after unleash in a cluster

--> tuning

1. Configuration API

- Components in Hadoop are configured using Hadoop’s own configuration API.

- org.apache.hadoop.conf package

- Configurations read their properties from resources — XML files with a simple structure for defining name-value pairs.

For example, write a configuration-1.xml like:

<?xml version="1.0"?>

<configuration>

<property>

<name>color</name>

<value>yellow</value>

<description>Color</description>

</property>

<property>

<name>size</name>

<value>10</value>

<description>Size</description>

</property>

<property>

<name>weight</name>

<value>heavy</value>

<final>true</final>

<description>Weight</description>

</property>

<property>

<name>size-weight</name>

<value>${size},${weight}</value>

<description>Size and weight</description>

</property>

</configuration>

then access it by coding below:

Configuration conf = new Configuration();

conf.addResource("configuration-1.xml");

conf.addResource("configuration-2.xml"); // more than one resource are added orderly, and the latter will overwrite the former. assertThat(conf.get("color"), is("yellow"));

assertThat(conf.getInt("size", 0), is(10));

assertThat(conf.get("breadth", "wide"), is("wide"));

Note:

- type information is not stored in the XML file;

- instead, properties can be interpreted as a given type when they are read.

- Also, the get() methods allow you to specify a default value, which is used if the property is not defined in the XML file, as in the case of breadth here.

- more than one resource are added orderly, and the latter properties will overwrite the former.

- However, properties that are marked as final cannot be overridden in later definitions.

- system properties take priority:

System.setProperty("size", "14")

Options specified with -D take priority over properties from the configuration files.

This will override the number of reducers set on the cluster or set in any client-side configuration files.

% hadoop ConfigurationPrinter -D color=yellow | grep color

2. Set up dev enviroment

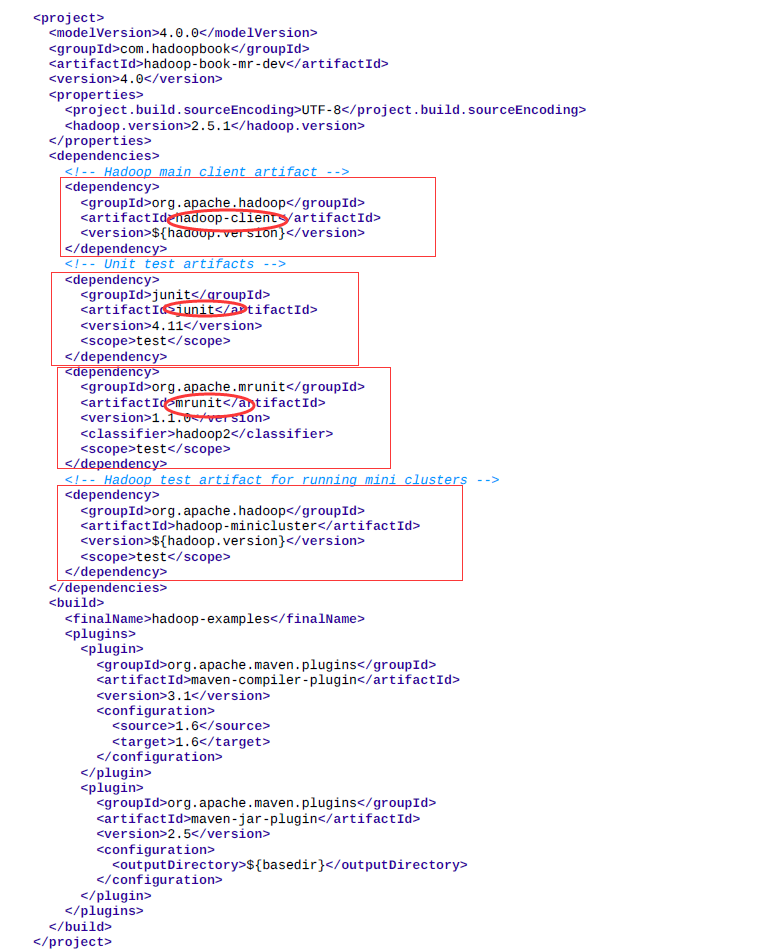

The Maven POMs (Project Object Model) are used to show the dependencies needed for building and testing MapReduce programs. Actually a xml file.

- hadoop-client dependency, which contains all the Hadoop client-side classes needed to interact with HDFS and MapReduce.

- For running unit tests, we use junit,

- for writing MapReduce tests, we use mrunit.

- The hadoop-minicluster library contains the “mini-” clusters that are useful for testing with Hadoop clusters running in a single JVM.

Many IDEs can read Maven POMs directly, so you can just point them at the directory containing the pom.xml file and start writing code.

Alternatively, you can use Maven to generate configuration files for your IDE. For example, the following creates Eclipse configuration files so you can import the project into Eclipse:

% mvn eclipse:eclipse -DdownloadSources=true -DdownloadJavadocs=true

3. Managing switching

It is common to switch between running the application locally and running it on a cluster.

- have Hadoop configuration files containing the connection settings for each cluster

- we assume the existence of a directory called conf that contains three configuration files: hadoop-local.xml, hadoop-localhost.xml, and hadoopcluster.xml

For example, the following command shows a directory listing on the HDFS serverrunning in pseudodistributed mode on localhost:

- conf

% hadoop fs -conf conf/hadoop-localhost.xml -ls Found 2 items

drwxr-xr-x - tom supergroup 0 2014-09-08 10:19 input

drwxr-xr-x - tom supergroup 0 2014-09-08 10:19 output

4. Starts MapReduce example:

Mapper: to get year and temperature from an input string

public class MaxTemperatureMapper

extends Mapper<LongWritable, Text, Text, IntWritable> { @Override

public void map(LongWritable key, Text value, Context context)

throws IOException, InterruptedException {

String line = value.toString();

String year = line.substring(15, 19);

int airTemperature = Integer.parseInt(line.substring(87, 92)); context.write(new Text(year), new IntWritable(airTemperature));

}

}

Unit test for the Mapper:

import java.io.IOException;

import org.apache.hadoop.io.*;

import org.apache.hadoop.mrunit.mapreduce.MapDriver;

import org.junit.*; public class MaxTemperatureMapperTest {

@Test

public void processesValidRecord() throws IOException, InterruptedException {

Text value = new Text("0043011990999991950051518004+68750+023550FM-12+0382" +

// Year ^^^^

"99999V0203201N00261220001CN9999999N9-00111+99999999999");

// Temperature ^^^^^ new MapDriver<LongWritable, Text, Text, IntWritable>()

.withMapper(new MaxTemperatureMapper())

.withInput(new LongWritable(0), value)

.withOutput(new Text("1950"), new IntWritable(-11))

.runTest();

}

}

Reducer: to get the maxmium

public class MaxTemperatureReducer

extends Reducer<Text, IntWritable, Text, IntWritable> { @Override

public void reduce(Text key, Iterable<IntWritable> values, Context context)

throws IOException, InterruptedException { int maxValue = Integer.MIN_VALUE; for (IntWritable value : values) {

maxValue = Math.max(maxValue, value.get());

} context.write(key, new IntWritable(maxValue));

}

}

Unit test for the Reducer:

@Test

public void returnsMaximumIntegerInValues() throws IOException, InterruptedException { new ReduceDriver<Text, IntWritable, Text, IntWritable>()

.withReducer(new MaxTemperatureReducer())

.withInput(new Text("1950"),

Arrays.asList(new IntWritable(10), new IntWritable(5)))

.withOutput(new Text("1950"), new IntWritable(10))

.runTest();

}

5 . a write job driver

Using the Tool interface , it’s easy to write a driver to run a MapReduce job.

Then run the driver locally.

% mvn compile

% export HADOOP_CLASSPATH=target/classes/

% hadoop v2.MaxTemperatureDriver -conf conf/hadoop-local.xml \

input/ncdc/micro output

或

% hadoop v2.MaxTemperatureDriver -fs file:/// -jt local input/ncdc/micro output

The local job runner uses a single JVM to run a job, so as long as all the classes that your job needs are on its classpath, then things will just work.

6. Running on a cluster

a job’s classes must be packaged into a job JAR file to send to the cluster

Hadoop 学习笔记3 Develping MapReduce的更多相关文章

- Hadoop学习笔记—4.初识MapReduce

一.神马是高大上的MapReduce MapReduce是Google的一项重要技术,它首先是一个编程模型,用以进行大数据量的计算.对于大数据量的计算,通常采用的处理手法就是并行计算.但对许多开发者来 ...

- Hadoop学习笔记(2) 关于MapReduce

1. 查找历年最高的温度. MapReduce任务过程被分为两个处理阶段:map阶段和reduce阶段.每个阶段都以键/值对作为输入和输出,并由程序员选择它们的类型.程序员还需具体定义两个函数:map ...

- Hadoop学习笔记—22.Hadoop2.x环境搭建与配置

自从2015年花了2个多月时间把Hadoop1.x的学习教程学习了一遍,对Hadoop这个神奇的小象有了一个初步的了解,还对每次学习的内容进行了总结,也形成了我的一个博文系列<Hadoop学习笔 ...

- Hadoop学习笔记(7) ——高级编程

Hadoop学习笔记(7) ——高级编程 从前面的学习中,我们了解到了MapReduce整个过程需要经过以下几个步骤: 1.输入(input):将输入数据分成一个个split,并将split进一步拆成 ...

- Hadoop学习笔记(6) ——重新认识Hadoop

Hadoop学习笔记(6) ——重新认识Hadoop 之前,我们把hadoop从下载包部署到编写了helloworld,看到了结果.现是得开始稍微更深入地了解hadoop了. Hadoop包含了两大功 ...

- Hadoop学习笔记(2)

Hadoop学习笔记(2) ——解读Hello World 上一章中,我们把hadoop下载.安装.运行起来,最后还执行了一个Hello world程序,看到了结果.现在我们就来解读一下这个Hello ...

- Hadoop学习笔记(5) ——编写HelloWorld(2)

Hadoop学习笔记(5) ——编写HelloWorld(2) 前面我们写了一个Hadoop程序,并让它跑起来了.但想想不对啊,Hadoop不是有两块功能么,DFS和MapReduce.没错,上一节我 ...

- Hadoop学习笔记(2) ——解读Hello World

Hadoop学习笔记(2) ——解读Hello World 上一章中,我们把hadoop下载.安装.运行起来,最后还执行了一个Hello world程序,看到了结果.现在我们就来解读一下这个Hello ...

- Hadoop学习笔记(1) ——菜鸟入门

Hadoop学习笔记(1) ——菜鸟入门 Hadoop是什么?先问一下百度吧: [百度百科]一个分布式系统基础架构,由Apache基金会所开发.用户可以在不了解分布式底层细节的情况下,开发分布式程序. ...

随机推荐

- iOS 关于版本升级问题的解决

从iOS8系统开始,用户可以在设置里面设置在WiFi环境下,自动更新安装的App.此功能大大方便了用户,但是一些用户没有开启此项功能,因此还是需要在程序里面提示用户的. 虽然现在苹果审核不能看到版本提 ...

- 在C#中将String转换成Enum:

一: 在C#中将String转换成Enum: object Enum.Parse(System.Type enumType, string value, bool ignoreCase); 所以,我 ...

- Visual Studio 2013编辑HTML文件无设计视图的解决方案

在Visual Studio 2013中编辑HTML文件,会发现没有设计视图. 解决方法:点击Visual Studio 2013的”工具“菜单,再点击”选项“—>文本编辑器—>文件扩展名 ...

- performSelector的原理以及用法

一.performSelector调用和直接调用区别下面两段代码都在主线程中运行,我们在看别人代码时会发现有时会直接调用,有时会利用performSelector调用,今天看到有人在问这个问题,我便做 ...

- 二叉树的遍历(递归,迭代,Morris遍历)

二叉树的三种遍历方法: 先序,中序,后序,这三种遍历方式每一个都可以用递归,迭代,Morris三种形式实现,其中Morris效率最高,空间复杂度为O(1). 主要参考博客: 二叉树的遍历(递归,迭代, ...

- CentOS 7.0 Nvidia显卡安装步骤

from: http://blog.sina.com.cn/s/blog_49c0985a0102v3fa.html CentOS 7.0 Nvidia显卡安装步骤: 1 在英伟达官网下载相应驱动 搜 ...

- JAVA_HOME环境变量失效的解决办法

晚上把oracle自带的weblogic给卸载了,然后打开eclipse,发现报错了:Error: could not open `C:\Java\jre7\lib\amd64\jvm.cfg' JA ...

- CentOS7 下安装JDK1.7 和 Tomcat7

一.下载JDK 和 Tomcat安装包 1.JDK1.7 下载地址:http://www.oracle.com/technetwork/java/javase/downloads/jdk7-downl ...

- 如何用 fiddler 调试线上代码

有时代码上线了,突然就碰到了坑爹的错误.或者有时看别人家线上的代码,对于一个文件想 fork 下来试试效果又不想把全部文件拉到本地,都可以使用 fiddler 的线上调试功能. 比方说我们打开携程的首 ...

- 基于DDD的.NET开发框架 - ABP缓存Caching实现

返回ABP系列 ABP是“ASP.NET Boilerplate Project (ASP.NET样板项目)”的简称. ASP.NET Boilerplate是一个用最佳实践和流行技术开发现代WEB应 ...