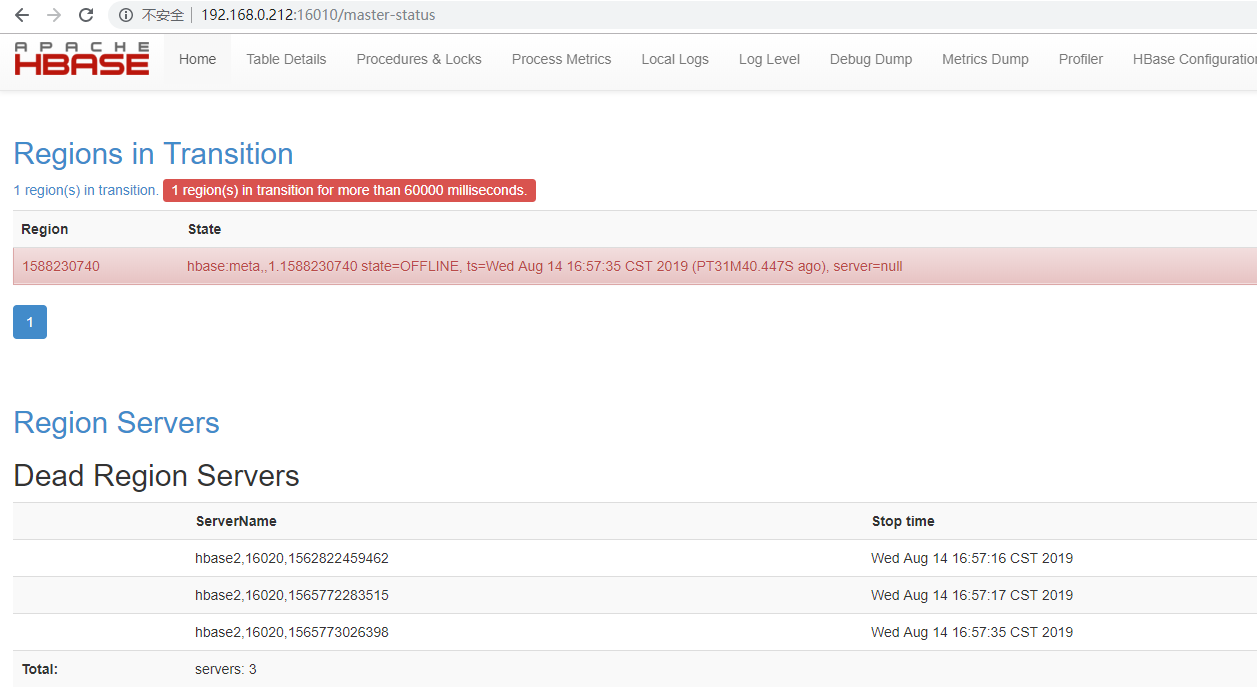

HBase reports Dead Region Servers

Problem description:

Port 16010 (the HBase Master web UI) came up successfully, but port 16020 (the RegionServer RPC port) did not.
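One quick way to confirm which of the two ports is actually listening is to filter the socket listing on the affected host. The helper below is only a minimal sketch: check_ports is a hypothetical name (not part of HBase), and it assumes ss -lnt style output on stdin, where the local address ends in :PORT followed by whitespace.

```shell
# check_ports: hypothetical helper, not part of HBase.
# Reads `ss -lnt` style output on stdin and reports, for each port given
# as an argument, whether a socket with that local port appears in it.
check_ports() {
  listing=$(cat)
  for port in "$@"; do
    if printf '%s\n' "$listing" | grep -Eq ":${port}[[:space:]]"; then
      echo "$port listening"
    else
      echo "$port not listening"
    fi
  done
}
```

On the RegionServer host it could be run as: ss -lnt | check_ports 16010 16020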

Log output from hbase-root-regionserver-hbase2.log:

2019-08-14 16:45:10,552 WARN [Thread-37] hdfs.DFSClient: DataStreamer Exception

org.apache.hadoop.ipc.RemoteException(java.io.IOException): File /hbase/default/tsdb/3f2398c5b49b581c09687c49a739b007/recovered.edits/0000000000006253152-hbase2%2C16020%2C1562822459462.1565198820284.temp could only be written to 0 of the 1 minReplication nodes. There are 1 datanode(s) running and 1 node(s) are excluded in this operation.

at org.apache.hadoop.hdfs.server.blockmanagement.BlockManager.chooseTarget4NewBlock(BlockManager.java:2121)

at org.apache.hadoop.hdfs.server.namenode.FSDirWriteFileOp.chooseTargetForNewBlock(FSDirWriteFileOp.java:295)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:2702)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:875)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:561)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:523)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:991)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:872)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:818)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1729)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2678)

at org.apache.hadoop.ipc.Client.call(Client.java:1476)

at org.apache.hadoop.ipc.Client.call(Client.java:1413)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Invoker.invoke(ProtobufRpcEngine.java:229)

at com.sun.proxy.$Proxy18.addBlock(Unknown Source)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolTranslatorPB.addBlock(ClientNamenodeProtocolTranslatorPB.java:418)

at sun.reflect.GeneratedMethodAccessor7.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invokeMethod(RetryInvocationHandler.java:191)

at org.apache.hadoop.io.retry.RetryInvocationHandler.invoke(RetryInvocationHandler.java:102)

at com.sun.proxy.$Proxy19.addBlock(Unknown Source)

at sun.reflect.GeneratedMethodAccessor7.invoke(Unknown Source)

at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)

at java.lang.reflect.Method.invoke(Method.java:498)

at org.apache.hadoop.hbase.fs.HFileSystem$1.invoke(HFileSystem.java:372)

at com.sun.proxy.$Proxy20.addBlock(Unknown Source)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.locateFollowingBlock(DFSOutputStream.java:1603)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.nextBlockOutputStream(DFSOutputStream.java:1388)

at org.apache.hadoop.hdfs.DFSOutputStream$DataStreamer.run(DFSOutputStream.java:554)

2019-08-14 16:45:10,568 ERROR [RS_LOG_REPLAY_OPS-regionserver/hbase2:16020-1-Writer-2] wal.WALSplitter: Got while writing log entry to log

java.io.IOException: File /hbase/default/tsdb/3f2398c5b49b581c09687c49a739b007/recovered.edits/0000000000006253152-hbase2%2C16020%2C1562822459462.1565198820284.temp could only be written to 0 of the 1 minReplication nodes. There are 1 datanode(s) running and 1 node(s) are excluded in this operation.

at org.apache.hadoop.hdfs.server.blockmanagement.BlockManager.chooseTarget4NewBlock(BlockManager.java:2121)

at org.apache.hadoop.hdfs.server.namenode.FSDirWriteFileOp.chooseTargetForNewBlock(FSDirWriteFileOp.java:295)

at org.apache.hadoop.hdfs.server.namenode.FSNamesystem.getAdditionalBlock(FSNamesystem.java:2702)

at org.apache.hadoop.hdfs.server.namenode.NameNodeRpcServer.addBlock(NameNodeRpcServer.java:875)

at org.apache.hadoop.hdfs.protocolPB.ClientNamenodeProtocolServerSideTranslatorPB.addBlock(ClientNamenodeProtocolServerSideTranslatorPB.java:561)

at org.apache.hadoop.hdfs.protocol.proto.ClientNamenodeProtocolProtos$ClientNamenodeProtocol$2.callBlockingMethod(ClientNamenodeProtocolProtos.java)

at org.apache.hadoop.ipc.ProtobufRpcEngine$Server$ProtoBufRpcInvoker.call(ProtobufRpcEngine.java:523)

at org.apache.hadoop.ipc.RPC$Server.call(RPC.java:991)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:872)

at org.apache.hadoop.ipc.Server$RpcCall.run(Server.java:818)

at java.security.AccessController.doPrivileged(Native Method)

at javax.security.auth.Subject.doAs(Subject.java:422)

at org.apache.hadoop.security.UserGroupInformation.doAs(UserGroupInformation.java:1729)

at org.apache.hadoop.ipc.Server$Handler.run(Server.java:2678)

at sun.reflect.NativeConstructorAccessorImpl.newInstance0(Native Method)

at sun.reflect.NativeConstructorAccessorImpl.newInstance(NativeConstructorAccessorImpl.java:62)

at sun.reflect.DelegatingConstructorAccessorImpl.newInstance(DelegatingConstructorAccessorImpl.java:45)

at java.lang.reflect.Constructor.newInstance(Constructor.java:423)

at org.apache.hadoop.ipc.RemoteException.instantiateException(RemoteException.java:106)

at org.apache.hadoop.ipc.RemoteException.unwrapRemoteException(RemoteException.java:95)

at org.apache.hadoop.hbase.wal.WALSplitter$LogRecoveredEditsOutputSink.appendBuffer(WALSplitter.java:1601)

at org.apache.hadoop.hbase.wal.WALSplitter$LogRecoveredEditsOutputSink.append(WALSplitter.java:1559)

at org.apache.hadoop.hbase.wal.WALSplitter$WriterThread.writeBuffer(WALSplitter.java:1084)

at org.apache.hadoop.hbase.wal.WALSplitter$WriterThread.doRun(WALSplitter.java:1076)

at org.apache.hadoop.hbase.wal.WALSplitter$WriterThread.run(WALSplitter.java:1046)

Log shown on the 16010 web UI:

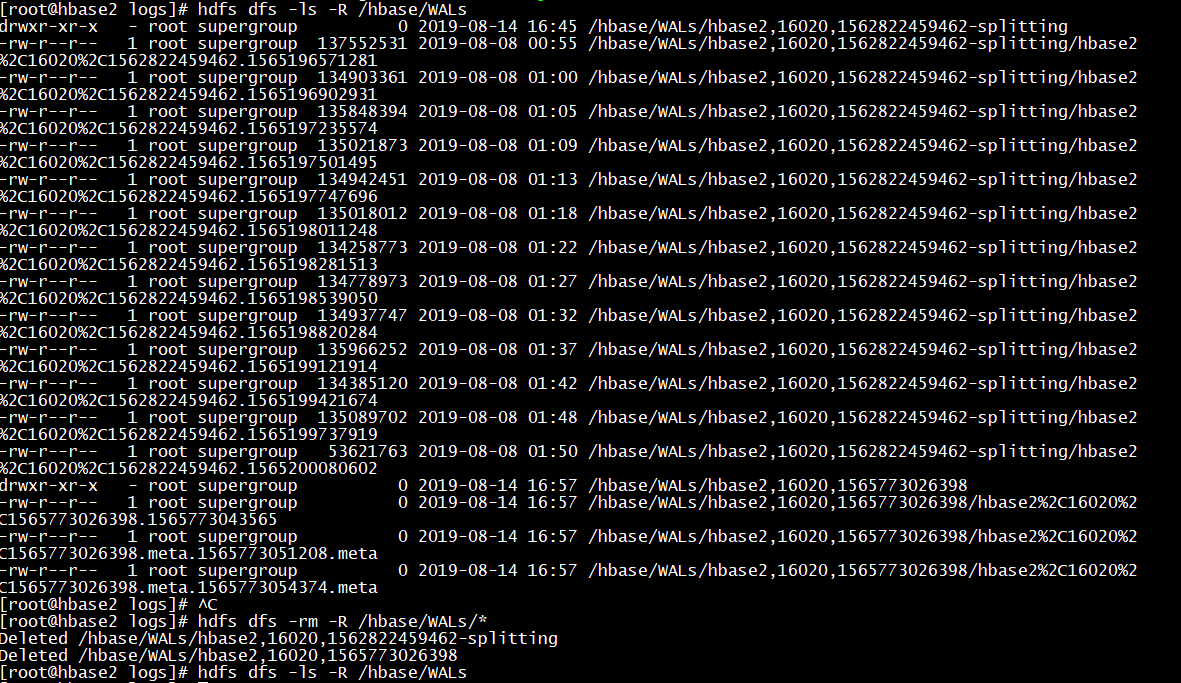

Cause: see https://issues.apache.org/jira/browse/HBASE-12426

Description

I initially had a set of 5 region servers which had a single table which was pre-split into 30 regions and was evenly distributed to all the regions with data. I then went ahead and removed/decommissioned a couple of region servers, so in the end I have 3 region servers. Ran hbase hbck and verified there were 0 inconsistencies. However, when the 'status' command is issued from hbase shell it shows a dead region server, and the same is displayed in the master UI as well. Failover of the hbase master did not fix the issue. On investigation we could see some WAL entries which were still pointing to the old region server.

/hbase/WALs/myserver,60020,1406745344969-splitting

After removing these orphan entries from hdfs and a master failover, the dead region servers went away. I wonder if this could have caused any replication issues in the cluster.

Solution: delete the leftover split files under /hbase/WALs

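List the WAL directories:

hdfs dfs -ls -R /hbase/WALs

Delete them:

hdfs dfs -rm -R /hbase/WALs/*

Note that this wildcard removes every WAL directory, not only the leftover -splitting ones, so edits that exist only in those WALs and have not yet been flushed are lost.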
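A narrower cleanup, closer to what the JIRA report describes (removing only the orphaned -splitting entries), can be sketched as follows. This is an illustration under stated assumptions, not a vetted procedure: list_splitting_dirs is a hypothetical helper name, and it assumes the usual hdfs dfs -ls output in which the path is the last whitespace-separated field and contains no spaces.

```shell
# list_splitting_dirs: hypothetical helper, not part of HBase or HDFS.
# Reads `hdfs dfs -ls /hbase/WALs` style output on stdin and prints only
# the paths whose names end in "-splitting".
list_splitting_dirs() {
  awk '$NF ~ /-splitting$/ { print $NF }'
}

# Possible usage (assumes the hdfs CLI is on PATH and the HBase root
# directory is /hbase, as in the log above). Review the list first:
#   hdfs dfs -ls /hbase/WALs | list_splitting_dirs
# then delete each listed directory:
#   hdfs dfs -ls /hbase/WALs | list_splitting_dirs | while read -r dir; do
#     hdfs dfs -rm -R "$dir"
#   done
```

Printing the candidate paths before deleting anything keeps the destructive step separate from the selection step, so live WAL directories are not touched by accident.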

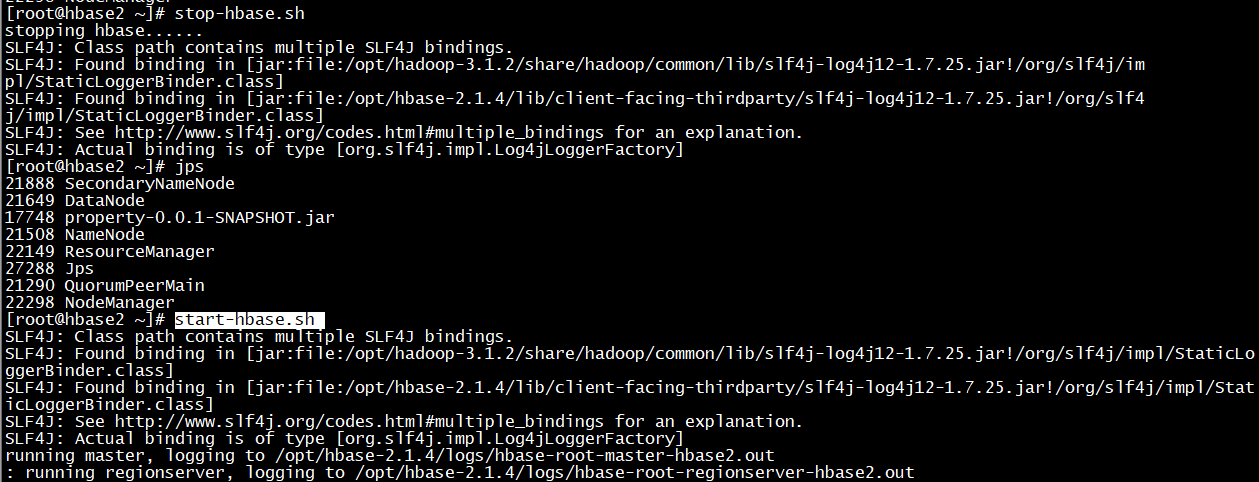

Restart HBase:

stop-hbase.sh

start-hbase.sh

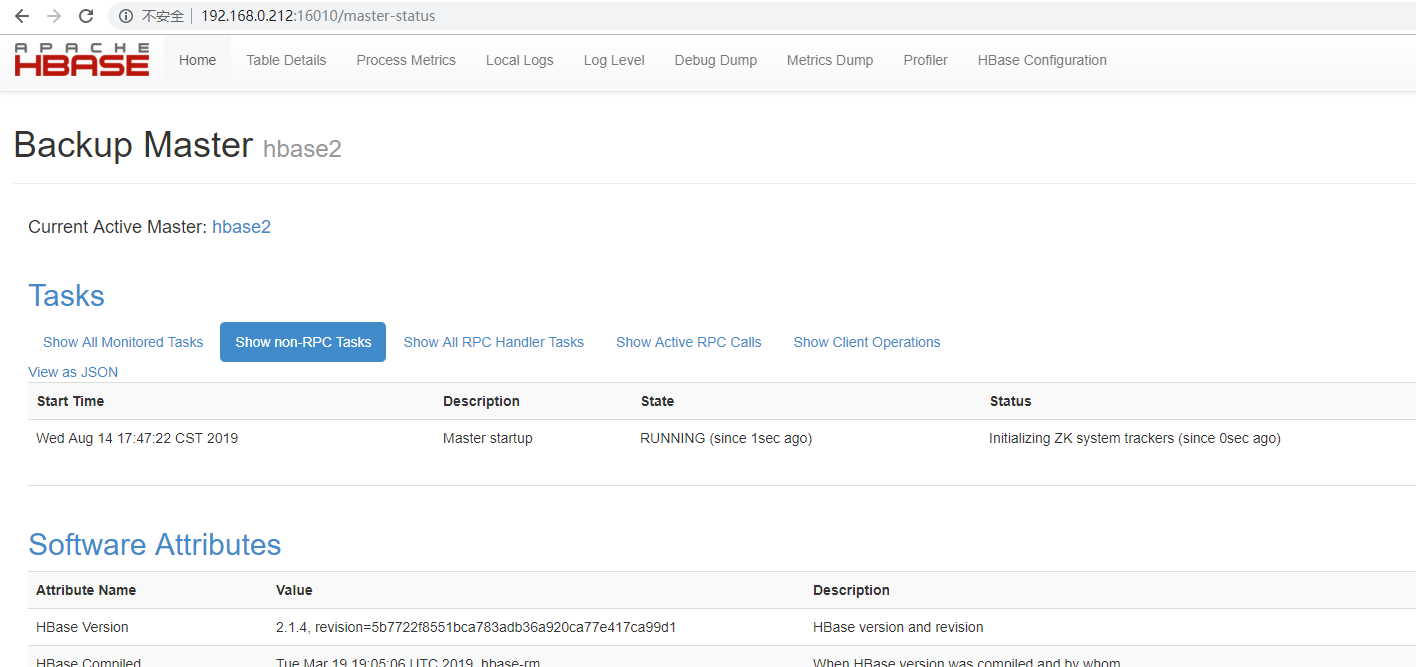

Then verify on the 16010 web UI that no dead RegionServers are listed.
