torch & tensorflow

#torch

import torch

import torch.nn as nn

import torch.nn.functional as F class Net(nn.Module): def __init__(self):

super(Net, self).__init__()

# 1 input image channel, 6 output channels, 5x5 square convolution

# kernel

self.conv1 = nn.Conv2d(1, 6, 5)

self.conv2 = nn.Conv2d(6, 16, 5)

# an affine operation: y = Wx + b

self.fc1 = nn.Linear(16 * 5 * 5, 120)

self.fc2 = nn.Linear(120, 84)

self.fc3 = nn.Linear(84, 10) def forward(self, x):

# Max pooling over a (2, 2) window

x = F.max_pool2d(F.relu(self.conv1(x)), (2, 2))

# If the size is a square you can only specify a single number

x = F.max_pool2d(F.relu(self.conv2(x)), 2)

x = x.view(-1, self.num_flat_features(x))

x = F.relu(self.fc1(x))

x = F.relu(self.fc2(x))

x = self.fc3(x)

return x def num_flat_features(self, x):

size = x.size()[1:] # all dimensions except the batch dimension

num_features = 1

for s in size:

num_features *= s

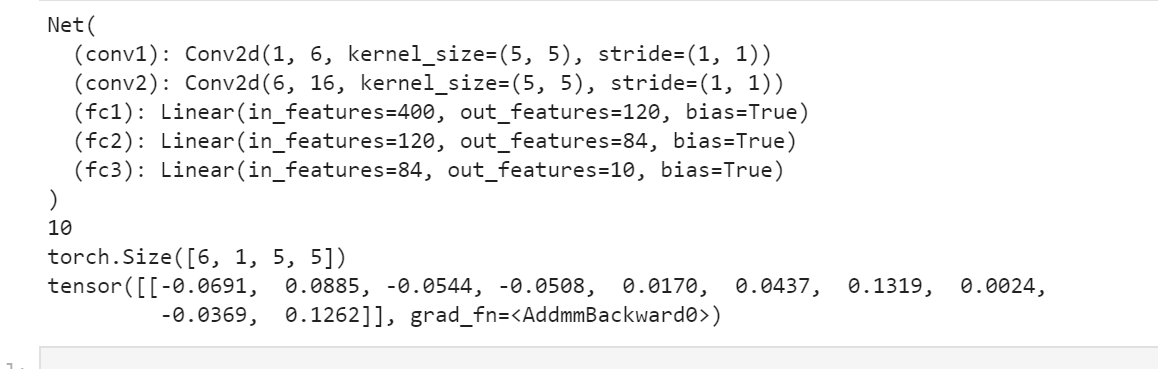

return num_features net = Net()

print(net) params = list(net.parameters())

print(len(params))

print(params[0].size()) # conv1's .weigh input = torch.randn(1, 1, 32, 32)

out = net(input)

print(out)
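The `16 * 5 * 5` input size of `fc1` follows from the 32x32 input used above. As a rough sketch (the helper names `conv_out` and `trace_lenet` are made up for illustration and are not part of PyTorch), the spatial size can be traced through the two convolution and pooling stages, assuming no padding and stride 1 for the convolutions:

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Output side length of a square convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_lenet(side=32):
    """Follow the side length through conv1, pool, conv2, pool."""
    sizes = [side]
    side = conv_out(side, kernel=5)            # conv1: 5x5 kernel, no padding
    sizes.append(side)
    side = conv_out(side, kernel=2, stride=2)  # 2x2 max pooling
    sizes.append(side)
    side = conv_out(side, kernel=5)            # conv2: 5x5 kernel, no padding
    sizes.append(side)
    side = conv_out(side, kernel=2, stride=2)  # 2x2 max pooling
    sizes.append(side)
    return sizes


sizes = trace_lenet(32)
flat_features = 16 * sizes[-1] * sizes[-1]  # 16 channels come out of conv2
print(sizes, flat_features)
```

With a different input size the last entry changes, and the first argument of `fc1` would have to change with it.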

vgg

#从keras.model中导入model模块,为函数api搭建网络做准备

from tensorflow.keras import Model

from tensorflow.keras.layers import Flatten,Dense,Dropout,MaxPooling2D,Conv2D,BatchNormalization,Input,ZeroPadding2D,Concatenate

from tensorflow.keras import *

from tensorflow.keras import regularizers #正则化

from tensorflow.keras.optimizers import RMSprop #优化选择器

from tensorflow.keras.layers import AveragePooling2D

from tensorflow.keras.datasets import mnist

import matplotlib.pyplot as plt

import numpy as np

from tensorflow.python.keras.utils import np_utils #数据处理

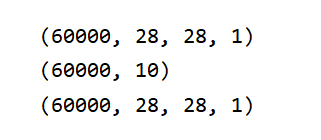

(X_train,Y_train),(X_test,Y_test)=mnist.load_data()

X_test1=X_test

Y_test1=Y_test

X_train=X_train.reshape(-1,28,28,1).astype("float32")/255.0

X_test=X_test.reshape(-1,28,28,1).astype("float32")/255.0

Y_train=np_utils.to_categorical(Y_train,10)

Y_test=np_utils.to_categorical(Y_test,10)
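Conceptually, `to_categorical` turns each integer label into a row with a single 1 at the label's index. The following is only a pure-Python illustration of that idea (the `one_hot` helper is hypothetical and is not the Keras implementation):

```python
def one_hot(labels, num_classes):
    """Return one row per label with a 1.0 at the label's index."""
    rows = []
    for label in labels:
        row = [0.0] * num_classes
        row[label] = 1.0
        rows.append(row)
    return rows


encoded = one_hot([5, 0, 4], 10)
print(encoded[0])  # the row for label 5
```

This is why `Y_train` gains a second dimension of size 10 after encoding.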

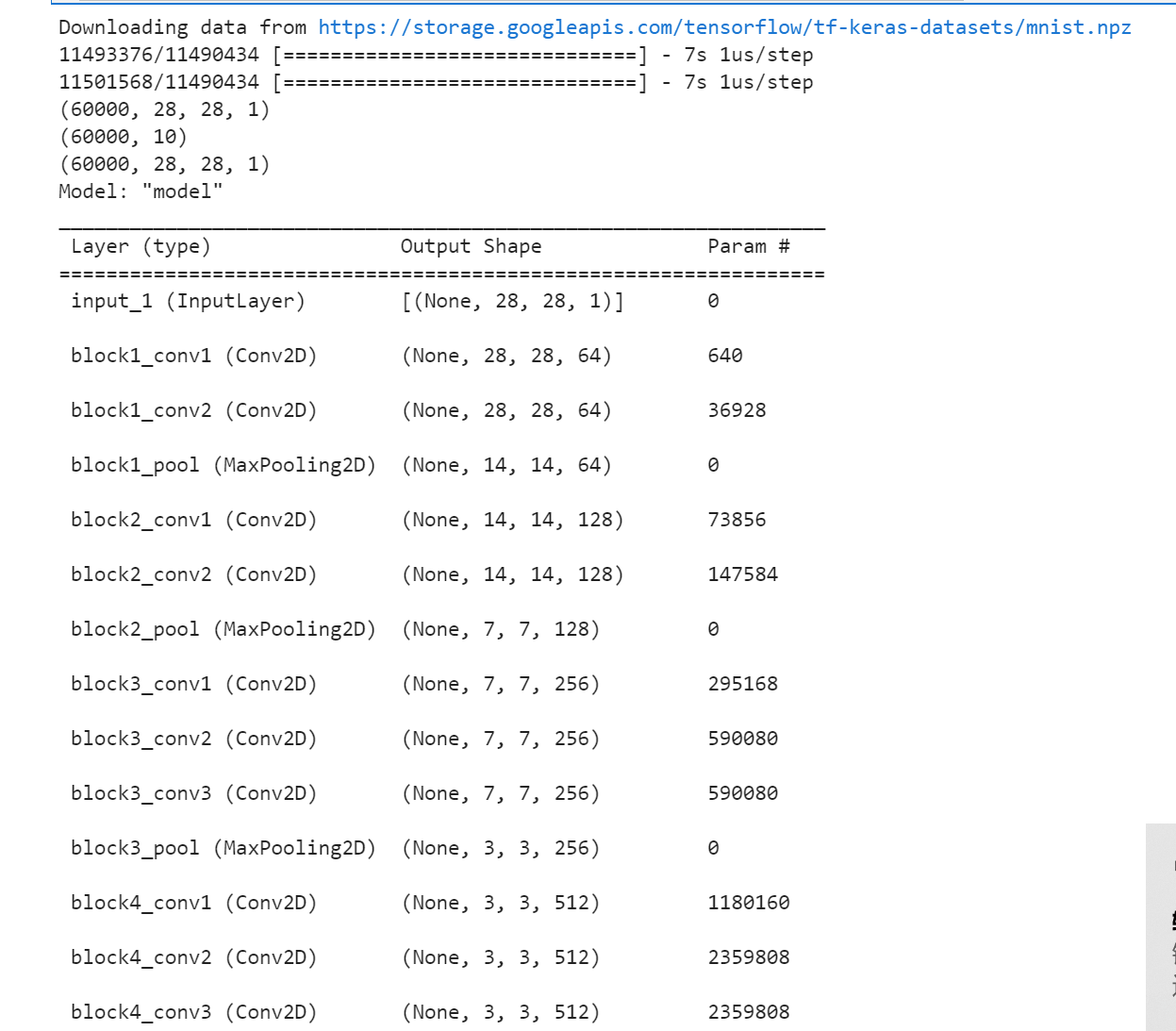

print(X_train.shape)

print(Y_train.shape)

print(X_train.shape)


def vgg16():
    x_input = Input((28, 28, 1))  # input shape: 28 x 28 x 1

    # Block 1
    x = Conv2D(64, (3, 3), activation='relu', padding='same', name='block1_conv1')(x_input)
    x = Conv2D(64, (3, 3), activation='relu', padding='same', name='block1_conv2')(x)
    x = MaxPooling2D((2, 2), strides=(2, 2), name='block1_pool')(x)

    # Block 2
    x = Conv2D(128, (3, 3), activation='relu', padding='same', name='block2_conv1')(x)
    x = Conv2D(128, (3, 3), activation='relu', padding='same', name='block2_conv2')(x)
    x = MaxPooling2D((2, 2), strides=(2, 2), name='block2_pool')(x)

    # Block 3
    x = Conv2D(256, (3, 3), activation='relu', padding='same', name='block3_conv1')(x)
    x = Conv2D(256, (3, 3), activation='relu', padding='same', name='block3_conv2')(x)
    x = Conv2D(256, (3, 3), activation='relu', padding='same', name='block3_conv3')(x)
    x = MaxPooling2D((2, 2), strides=(2, 2), name='block3_pool')(x)

    # Block 4
    x = Conv2D(512, (3, 3), activation='relu', padding='same', name='block4_conv1')(x)
    x = Conv2D(512, (3, 3), activation='relu', padding='same', name='block4_conv2')(x)
    x = Conv2D(512, (3, 3), activation='relu', padding='same', name='block4_conv3')(x)
    x = MaxPooling2D((2, 2), strides=(2, 2), name='block4_pool')(x)

    # Block 5
    x = Conv2D(512, (3, 3), activation='relu', padding='same', name='block5_conv1')(x)
    x = Conv2D(512, (3, 3), activation='relu', padding='same', name='block5_conv2')(x)
    x = Conv2D(512, (3, 3), activation='relu', padding='same', name='block5_conv3')(x)

    # Block 6: fully connected classifier
    x = Flatten()(x)
    x = Dense(256, activation="relu")(x)
    x = Dropout(0.5)(x)
    x = Dense(256, activation="relu")(x)
    x = Dropout(0.5)(x)
    # Final layer: the output layer
    x = Dense(10, activation="softmax")(x)

    # Build the Model with x_input as the input and x (the last fully connected layer) as the output
    model = Model(inputs=x_input, outputs=x)
    # Print a summary of the network
    model.summary()
    return model


model = vgg16()
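Unlike the standard VGG16 layout, the network above has no pooling layer after block 5. A small sketch of the spatial sizes suggests why that works for MNIST: with `padding='same'` the convolutions keep the side length, and each 2x2 pooling with stride 2 halves it (rounding down). The helper `trace_vgg_pools` is a made-up name used only for this illustration:

```python
def trace_vgg_pools(side=28, num_pools=4):
    """Side length after each 2x2, stride-2 pooling (rounded down)."""
    sizes = [side]
    for _ in range(num_pools):
        side = side // 2
        sizes.append(side)
    return sizes


sizes = trace_vgg_pools(28, 4)
flatten_units = sizes[-1] * sizes[-1] * 512  # block 5 outputs 512 channels
print(sizes, flatten_units)
```

After four poolings the feature map is already 1x1, so a fifth pooling layer would have no complete 2x2 window left to pool, and `Flatten` hands 512 values to the first `Dense` layer.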

optimizer = RMSprop(learning_rate=1e-4)

model.compile(loss="categorical_crossentropy", optimizer=optimizer, metrics=["accuracy"])
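For one-hot labels and a softmax output, categorical cross-entropy scores only the probability assigned to the true class, whereas binary cross-entropy treats every output as a separate yes/no problem and averages the results. The sketch below compares the two on a toy 3-class example in plain Python; it ignores the clipping that Keras applies to predictions, and the numbers are invented for illustration:

```python
import math


def categorical_crossentropy(y_true, y_pred):
    """Negative log-probability of the true class for one sample."""
    return -sum(t * math.log(p) for t, p in zip(y_true, y_pred))


def binary_crossentropy(y_true, y_pred):
    """Mean of the per-output binary cross-entropies for one sample."""
    terms = [
        t * math.log(p) + (1 - t) * math.log(1 - p)
        for t, p in zip(y_true, y_pred)
    ]
    return -sum(terms) / len(terms)


y_true = [0.0, 0.0, 1.0]   # one-hot label for class 2
y_pred = [0.1, 0.2, 0.7]   # toy softmax output
cce = categorical_crossentropy(y_true, y_pred)
bce = binary_crossentropy(y_true, y_pred)
print(cce, bce)
```

The two values differ for the same prediction, so loss curves obtained with one loss are not directly comparable with those obtained with the other.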

#训练加评估模型

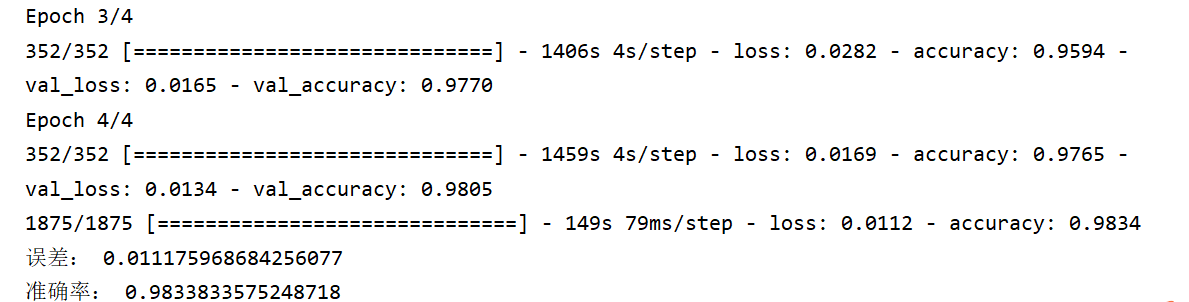

n_epoch=4

batch_size=128

def run_model(): #训练模型

training=model.fit(

X_train,

Y_train,

batch_size=batch_size,

epochs=n_epoch,

validation_split=0.25,

verbose=1

)

test=model.evaluate(X_train,Y_train,verbose=1)

return training,test

training,test=run_model()
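With `validation_split=0.25`, Keras sets aside the last quarter of the samples passed to `fit` (taken before shuffling) for validation and trains on the rest. The arithmetic for the 60,000 MNIST training images can be sketched as follows; `split_counts` is a hypothetical helper written for this note, not a Keras function:

```python
import math


def split_counts(num_samples, validation_split, batch_size):
    """Return (training samples, validation samples, batches per epoch)."""
    num_train = int(math.floor(num_samples * (1.0 - validation_split)))
    num_val = num_samples - num_train
    steps_per_epoch = math.ceil(num_train / batch_size)
    return num_train, num_val, steps_per_epoch


num_train, num_val, steps = split_counts(60000, 0.25, 128)
print(num_train, num_val, steps)
```

The step count is the number of batches shown in the progress bar for each epoch when `verbose=1`.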

print("误差:",test[0])

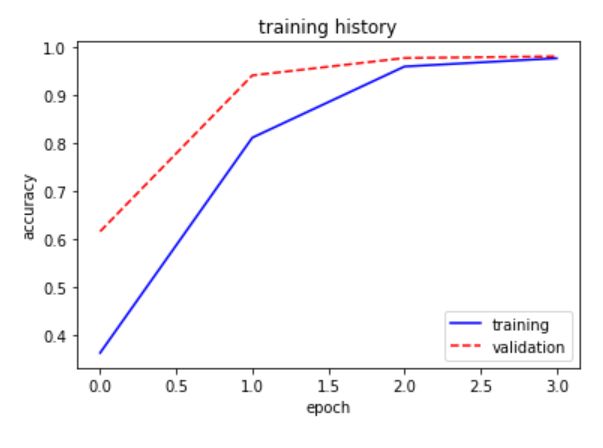

print("准确率:",test[1]) def show_train(training_history,train, validation):

plt.plot(training.history[train],linestyle="-",color="b")

plt.plot(training.history[validation] ,linestyle="--",color="r")

plt.title("training history")

plt.xlabel("epoch")

plt.ylabel("accuracy")

plt.legend(["training","validation"],loc="lower right")

plt.show()

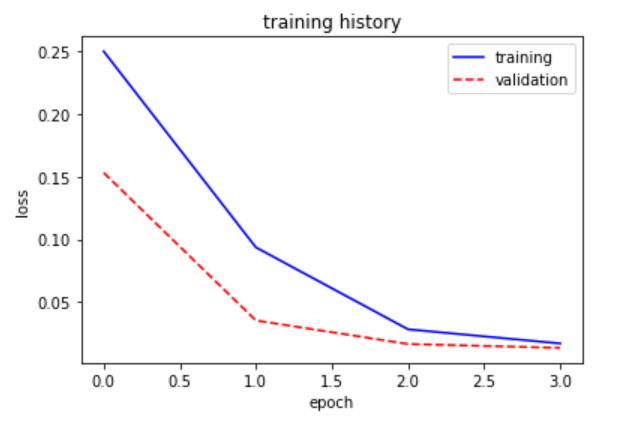

show_train(training,"accuracy","val_accuracy") def show_train1(training_history,train, validation):

plt.plot(training.history[train],linestyle="-",color="b")

plt.plot(training.history[validation] ,linestyle="--",color="r")

plt.title("training history")

plt.xlabel("epoch")

plt.ylabel("loss")

plt.legend(["training","validation"],loc="upper right")

plt.show()

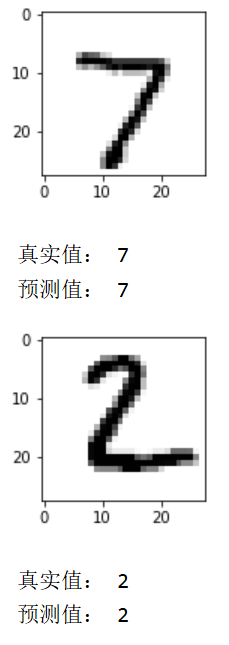

show_train1(training,"loss","val_loss") prediction=model.predict(X_test)

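def image_show(image):
    fig = plt.gcf()  # get the current figure
    fig.set_size_inches(2, 2)  # resize the figure
    plt.imshow(image, cmap="binary")  # display the image
    plt.show()


def result(i):
    # Show the i-th test image with its true and predicted labels
    image_show(X_test1[i])
    print("True label:", Y_test1[i])
    print("Predicted label:", np.argmax(prediction[i]))


result(0)
result(1)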
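Each row of `prediction` holds ten class probabilities, and the predicted digit is the index of the largest one. A minimal pure-Python sketch of that step, extended to an overall accuracy figure, might look like this (the toy probabilities and labels are invented, and `argmax` and `accuracy` are illustrative helpers rather than library calls):

```python
def argmax(values):
    """Index of the largest value in a sequence."""
    return max(range(len(values)), key=lambda i: values[i])


def accuracy(probabilities, labels):
    """Fraction of rows whose most probable class equals the label."""
    correct = sum(
        1 for row, label in zip(probabilities, labels) if argmax(row) == label
    )
    return correct / len(labels)


toy_predictions = [
    [0.1, 0.7, 0.2],
    [0.8, 0.1, 0.1],
    [0.3, 0.3, 0.4],
]
toy_labels = [1, 0, 1]
acc = accuracy(toy_predictions, toy_labels)
print(acc)
```

Applied to the real arrays, the same idea would compare the per-row argmax of `prediction` with the integer labels in `Y_test1`.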
