MPI - Buffering and Nonblocking Communication

Reposted from: Introduction to MPI - Part II (YouTube)

Buffering

Suppose we have

    if (rank == 0)
        MPI_Send(sendbuf, ..., 1, ...)
    if (rank == 1)
        MPI_Recv(recvbuf, ..., 0, ...)

These are blocking communications, which means they will not return until the arguments to the functions can be safely modified by subsequent statements in the program.

Assume that process 1 is not ready to receive. Then there are 3 possibilities for process 0:

(1) stops and waits until process 1 is ready to receive

(2) copies the message at sendbuf into a system buffer (can be on process 0, process 1 or somewhere else) and returns from MPI_Send

(3) fails

As long as buffer space is available, (2) is a reasonable alternative.

An MPI implementation is permitted to copy the message to be sent into internal storage, but it is not required to do so.

What if not enough space is available?

>> In applications communicating large amounts of data, there may not be enough memory (left) in buffers.

>> Until the receive starts, there is no place to store the sent message.

>> In practice, option (1) then results in serial execution.

A programmer should not assume that the system provides adequate buffering.

Now consider a program executing:

| Process 0 | Process 1 |
| --- | --- |
| MPI_Send to process 1 | MPI_Send to process 0 |
| MPI_Recv from process 1 | MPI_Recv from process 0 |

Such a program may work in many cases, but it is certain to fail once the messages are too large for the system to buffer: both processes then block in MPI_Send and neither reaches its MPI_Recv.

There are some possible solutions:

>> Ordered send and receive - make sure each receive is matched with a send in execution order across processes.

>> The above matched pairing can be difficult in complex applications. An alternative is to use MPI_Sendrecv. It performs both the send and the receive in such a way that no deadlock occurs even if no buffering is available.

>> Buffered sends. MPI allows the programmer to provide a buffer into which data can be placed until it is delivered (or at least left in the buffer) via MPI_Bsend.

>> Nonblocking communication. The operation is initiated, then the program proceeds while the communication is ongoing, and checks later that the communication has completed. IMPORTANT: the data must not be modified until the communication has completed.

Safe programs

>> A program is safe if it will produce correct results even if the system provides no buffering.

>> Need safe programs for portability.

>> Most programmers expect the system to provide some buffering, hence many unsafe MPI programs are around.

>> Write safe programs by matching each send with a receive, using MPI_Sendrecv, allocating your own buffers, or using nonblocking operations.

Nonblocking communications

>> nonblocking communications are useful for overlapping communication with computation, and ensuring safe programs.

>> a nonblocking operation requests the MPI library to perform an operation (when it can).

>> nonblocking operations do not wait for any communication events to complete.

>> nonblocking send and receive: return almost immediately

>> can safely modify a send (receive) buffer only after send (receive) is completed.

>> "wait" routines will let program know when a nonblocking operation is done.

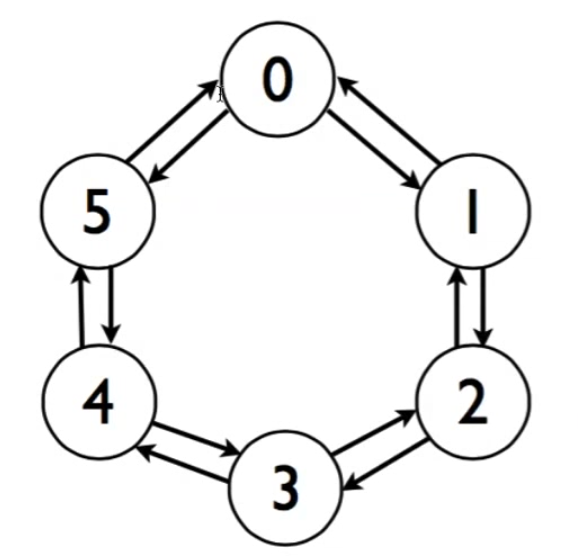

Example - Communication between processes in ring topology

>> With blocking communications alone, it is hard to write simple code that accomplishes this data exchange.

>> For example, if every process calls MPI_Send first, the program can get stuck, because no process reaches the matching MPI_Recv to receive the data.

>> Nonblocking communication avoids this problem.

    #include <stdio.h>
    #include <stdlib.h>
    #include "mpi.h"

    int main(int argc, char *argv[]) {
        /* buf[0] receives from prev, buf[1] receives from next */
        int numtasks, rank, next, prev, buf[2], tag1 = 1, tag2 = 2;
        MPI_Request reqs[4];
        MPI_Status stats[4];

        MPI_Init(&argc, &argv);
        MPI_Comm_size(MPI_COMM_WORLD, &numtasks);
        MPI_Comm_rank(MPI_COMM_WORLD, &rank);

        /* Neighbours in the ring, wrapping around at both ends */
        prev = rank - 1;
        next = rank + 1;
        if (rank == 0) prev = numtasks - 1;
        if (rank == numtasks - 1) next = 0;

        /* Post both receives, then both sends; none of these calls block */
        MPI_Irecv(&buf[0], 1, MPI_INT, prev, tag1, MPI_COMM_WORLD, &reqs[0]);
        MPI_Irecv(&buf[1], 1, MPI_INT, next, tag2, MPI_COMM_WORLD, &reqs[1]);
        MPI_Isend(&rank, 1, MPI_INT, prev, tag2, MPI_COMM_WORLD, &reqs[2]);
        MPI_Isend(&rank, 1, MPI_INT, next, tag1, MPI_COMM_WORLD, &reqs[3]);

        /* Wait for all four requests before using buf or modifying rank */
        MPI_Waitall(4, reqs, stats);

        printf("Task %d communicated with tasks %d & %d\n", rank, prev, next);

        MPI_Finalize();
        return 0;
    }

Summary for Nonblocking Communications

>> nonblocking send can be posted whether a matching receive has been posted or not.

>> send is completed when data has been copied out of send buffer.

>> nonblocking send can be matched with blocking receive and vice versa.

>> communications are initiated by sender

>> a communication will generally have lower overhead if a receive buffer is already posted when a sender initiates a communication.
