Prometheus cluster

Approach 1

For monitoring targets in the same region, two Prometheus servers scrape exactly the same set of targets.

After the change:

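With this change, every monitored target receives twice as many scrape requests. In exchange, if one Prometheus server goes down, monitoring continues without interruption.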
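The "twice as many requests" cost is plain arithmetic: each replica scrapes every target once per `scrape_interval`. The sketch below assumes every replica uses the same interval and never skips a scrape. With the 15s interval used later in this post, one target goes from 240 to 480 scrapes per hour.

```python
# Rough scrape load on one monitored target when N identical Prometheus
# replicas all scrape it (Approach 1). Assumption: every replica uses the
# same scrape_interval and no scrape is ever skipped.

SECONDS_PER_HOUR = 3600


def scrapes_per_hour(scrape_interval_s: float, replicas: int) -> float:
    """Number of /metrics requests a single target receives per hour."""
    if scrape_interval_s <= 0 or replicas < 1:
        raise ValueError("interval must be positive and replicas >= 1")
    return replicas * SECONDS_PER_HOUR / scrape_interval_s


if __name__ == "__main__":
    single = scrapes_per_hour(15, 1)
    paired = scrapes_per_hour(15, 2)
    # prints "1 server: 240/h, 2 servers: 480/h"
    print(f"1 server: {single:.0f}/h, 2 servers: {paired:.0f}/h")
```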

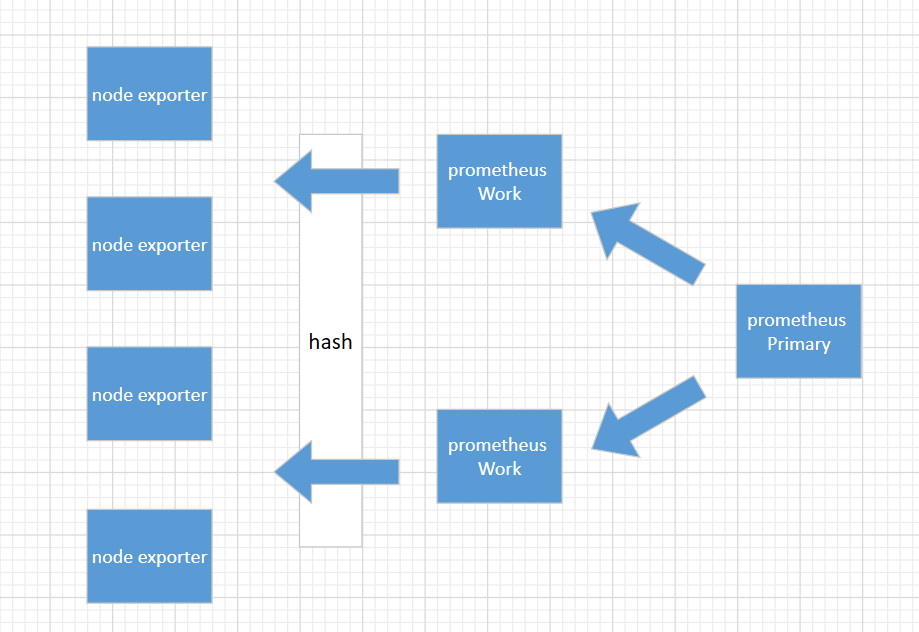

Approach 2

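This is a pyramid-style hierarchy rather than a distributed one. Keep in mind that the scrape workload also lands on the Prometheus worker nodes, so their capacity has to be planned for.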

This setup uses three Prometheus servers. Note that if one worker node goes down, the other worker nodes do not take over the targets of the failed worker.
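The "no takeover" behavior follows from the split being static. Each target is assigned to a worker by a fixed hash, and nothing reassigns it when that worker disappears. The model below is a minimal sketch of that effect. It uses `zlib.crc32` as a stand-in hash (not the one Prometheus uses), and the `10.0.0.x` targets are hypothetical.

```python
# Minimal model of Approach 2: targets are split between workers by a fixed
# hash, and a failed worker's share is simply no longer scraped.
# zlib.crc32 is a stand-in hash, NOT what Prometheus uses internally.
import zlib


def assign(targets, num_workers):
    """Statically map each target to exactly one worker index."""
    shards = {w: [] for w in range(num_workers)}
    for t in targets:
        shards[zlib.crc32(t.encode("utf-8")) % num_workers].append(t)
    return shards


def scraped_targets(shards, alive):
    """Targets still being scraped when only the workers in `alive` are up."""
    return sorted(t for w, ts in shards.items() if w in alive for t in ts)


if __name__ == "__main__":
    # Hypothetical node_exporter targets, for illustration only.
    targets = [f"10.0.0.{i}:9100" for i in range(1, 7)]
    shards = assign(targets, 2)
    print("both workers up:", len(scraped_targets(shards, {0, 1})))
    print("worker 1 down:  ", len(scraped_targets(shards, {0})))
```

Getting failover would require re-running the assignment over the surviving workers. That is exactly what this configuration does not do.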

1. Environment preparation

192.168.31.151 (primary)

192.168.31.144 (worker)

192.168.31.82 (worker)

2. Deploy Prometheus

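```shell
# Assumes prometheus-2.8.0.linux-amd64.tar.gz has already been downloaded to /usr/local
cd /usr/local
tar -xvf prometheus-2.8.0.linux-amd64.tar.gz
# Version-independent path pointing at the unpacked release
ln -s /usr/local/prometheus-2.8.0.linux-amd64 /usr/local/prometheus
cd /usr/local/prometheus; mkdir bin conf data
# Binaries go to bin/, the config file to conf/
mv ./promtool bin
mv ./prometheus bin
mv ./prometheus.yml conf
```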

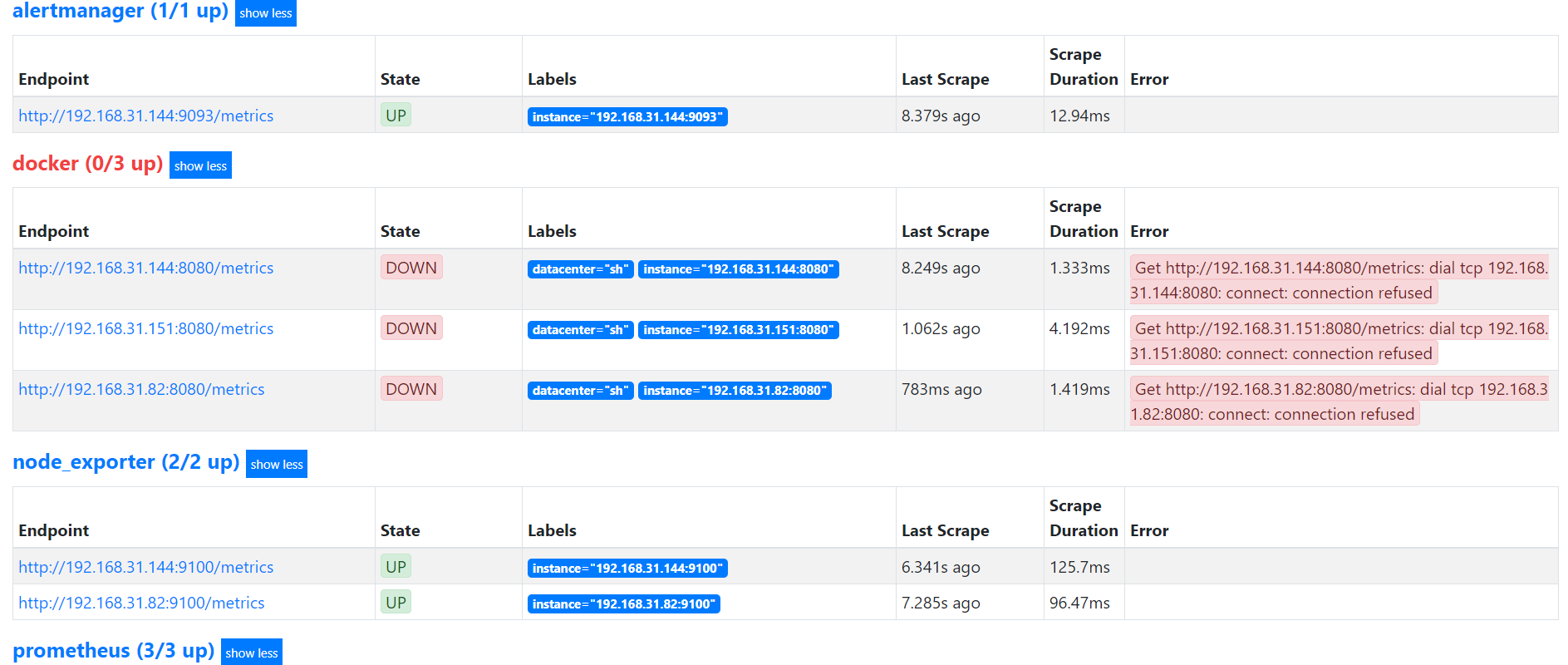

3. Worker node configuration (192.168.31.144)

prometheus.yml

```yaml
# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  external_labels:
    worker: 0

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
          # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  - "rules/*_rules.yml"

# Scrape configurations: every job keeps only the targets whose address hashes to 0 (modulus 2).
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'
    static_configs:
      - targets:
          - 192.168.31.151:9090
          - 192.168.31.144:9090
          - 192.168.31.82:9090
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^0$
        action: keep

  - job_name: 'node_exporter'
    file_sd_configs:
      - files:
          - targets/nodes/*.json
        refresh_interval: 1m
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^0$
        action: keep

  - job_name: 'docker'
    file_sd_configs:
      - files:
          - targets/docker/*.json
        refresh_interval: 1m
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^0$
        action: keep

  - job_name: 'alertmanager'
    static_configs:
      - targets:
          - 192.168.31.151:9093
          - 192.168.31.144:9093
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^0$
        action: keep
```
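To predict which targets this worker keeps, the `hashmod` step can be emulated offline. The sketch below reflects our understanding of the Prometheus implementation and should be checked against the relabeling docs for your version. The source label values (here just `__address__`) are MD5-hashed. The last 8 bytes of the digest are read as a big-endian unsigned 64-bit integer, and that number is reduced modulo `modulus`. The `keep` rule with `regex: ^0$` then retains only targets whose result is `0`.

```python
# Sketch of the `hashmod` relabel action as we understand it (assumption:
# MD5 of the source value, last 8 digest bytes as big-endian uint64, mod N),
# followed by `keep` on the resulting __tmp_hash value.
import hashlib


def hashmod(value: str, modulus: int) -> int:
    """Emulated __tmp_hash for a single source label value."""
    digest = hashlib.md5(value.encode("utf-8")).digest()
    return int.from_bytes(digest[8:], "big") % modulus


def kept_by_worker(addresses, worker, modulus=2):
    """Targets that survive `hashmod` + `keep` with regex ^<worker>$."""
    return [a for a in addresses if hashmod(a, modulus) == worker]


if __name__ == "__main__":
    prom = ["192.168.31.151:9090", "192.168.31.144:9090", "192.168.31.82:9090"]
    for w in (0, 1):
        print(f"worker {w} scrapes:", kept_by_worker(prom, w))
```

Because every address maps to exactly one remainder, the `^0$` and `^1$` workers together cover all targets without overlap.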

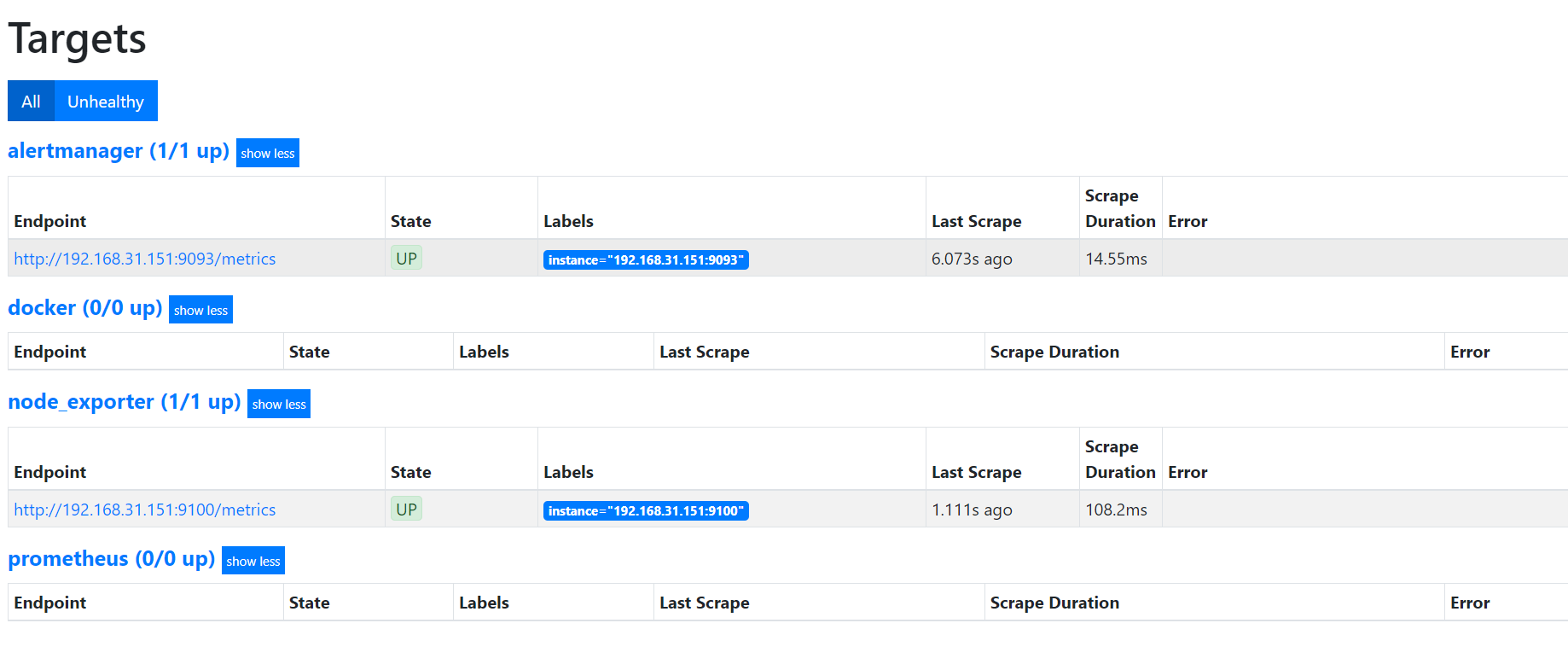

Worker node configuration (192.168.31.82)

prometheus.yml

```yaml
# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  external_labels:
    worker: 1

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
          # - alertmanager:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  - "rules/*_rules.yml"

# Scrape configurations: every job keeps only the targets whose address hashes to 1 (modulus 2).
scrape_configs:
  # The job name is added as a label `job=<job_name>` to any timeseries scraped from this config.
  - job_name: 'prometheus'
    static_configs:
      - targets:
          - 192.168.31.151:9090
          - 192.168.31.144:9090
          - 192.168.31.82:9090
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^1$
        action: keep

  - job_name: 'node_exporter'
    file_sd_configs:
      - files:
          - targets/nodes/*.json
        refresh_interval: 1m
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^1$
        action: keep

  - job_name: 'docker'
    file_sd_configs:
      - files:
          - targets/docker/*.json
        refresh_interval: 1m
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^1$
        action: keep

  - job_name: 'alertmanager'
    static_configs:
      - targets:
          - 192.168.31.151:9093
          - 192.168.31.144:9093
    relabel_configs:
      - source_labels: [__address__]
        modulus: 2
        target_label: __tmp_hash
        action: hashmod
      - source_labels: [__tmp_hash]
        regex: ^1$
        action: keep
```
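The two worker files differ only in the `worker` external label and the `keep` regex, so hand-copying them is an easy way to introduce a mismatch. One option is to render the shared `relabel_configs` fragment from a worker index. The `relabel_fragment` helper below is hypothetical and not part of any Prometheus tooling. It only emits text in the same shape as the configuration above, using plain string formatting so it needs no YAML library.

```python
# Hypothetical helper: render the relabel_configs fragment used by worker
# `index` out of `total` workers, indented to sit under a scrape job.


def relabel_fragment(index: int, total: int, indent: int = 4) -> str:
    if total < 1 or not 0 <= index < total:
        raise ValueError("need total >= 1 and 0 <= index < total")
    pad = " " * indent
    lines = [
        "relabel_configs:",
        "  - source_labels: [__address__]",
        f"    modulus: {total}",          # must be identical on every worker
        "    target_label: __tmp_hash",
        "    action: hashmod",
        "  - source_labels: [__tmp_hash]",
        f"    regex: ^{index}$",          # the only per-worker difference
        "    action: keep",
    ]
    return "\n".join(pad + line for line in lines)


if __name__ == "__main__":
    print(relabel_fragment(1, 2))
```

Keeping `modulus` as a single shared value matters. If the workers disagree on it, some targets can end up scraped twice or not at all.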

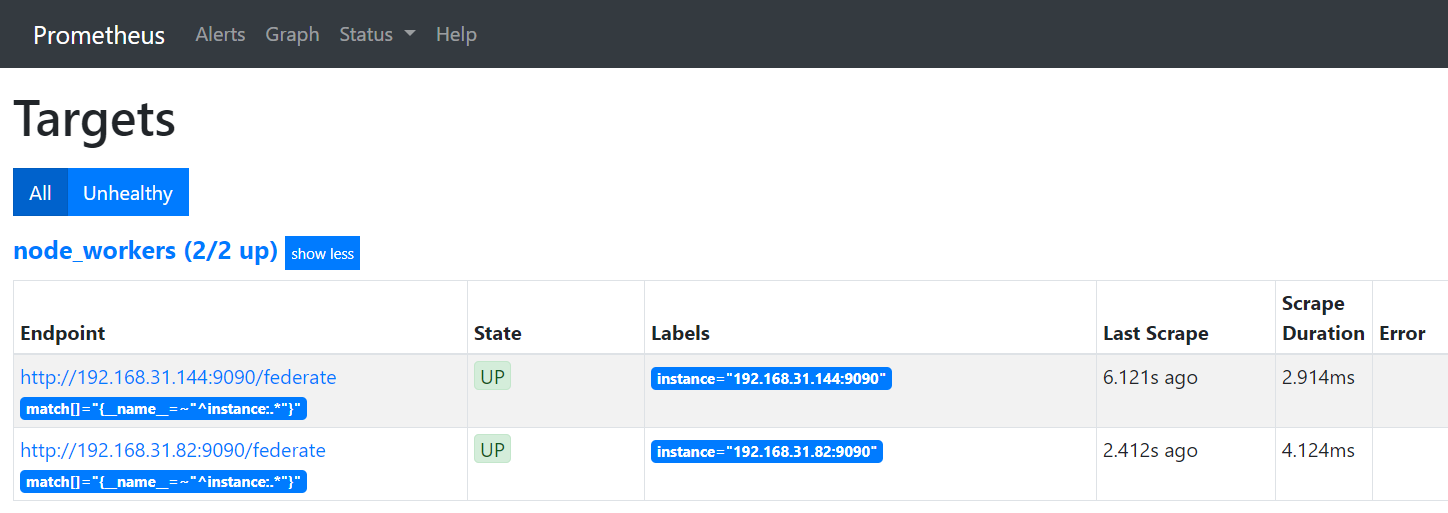

Primary node configuration (192.168.31.151)

prometheus.yml

```yaml
# my global config
global:
  scrape_interval: 15s # Set the scrape interval to every 15 seconds. Default is every 1 minute.
  evaluation_interval: 15s # Evaluate rules every 15 seconds. The default is every 1 minute.
  # scrape_timeout is set to the global default (10s).

# Alertmanager configuration
alerting:
  alertmanagers:
    - static_configs:
        - targets:
            - 192.168.31.151:9093
            - 192.168.31.144:9093

# Load rules once and periodically evaluate them according to the global 'evaluation_interval'.
rule_files:
  - "rules/*_alerts.yml"

# Federate the instance:* series from the workers listed in targets/workers/*.json.
scrape_configs:
  - job_name: 'node_workers'
    file_sd_configs:
      - files:
          - 'targets/workers/*.json'
        refresh_interval: 5m
    honor_labels: true
    metrics_path: /federate
    params:
      'match[]':
        - '{__name__=~"^instance:.*"}'
```
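With `metrics_path: /federate` and the `match[]` parameter, the `node_workers` job effectively issues a GET against each worker's federation endpoint, and the selector is passed as a query parameter. The sketch below only builds that URL so it can be inspected or pasted into a browser or `curl` against a worker. It assumes plain `http`, and the worker address is taken from this setup. It makes no network call.

```python
# Build the federation URL the primary's `node_workers` job scrapes on a
# worker. Assumption: plain http (the default scheme), no auth.
from urllib.parse import urlencode


def federate_url(worker: str, selectors) -> str:
    """Return http://<worker>/federate with one match[] per selector."""
    query = urlencode([("match[]", s) for s in selectors])
    return f"http://{worker}/federate?{query}"


if __name__ == "__main__":
    print(federate_url("192.168.31.144:9090", ['{__name__=~"^instance:.*"}']))
```

`honor_labels: true` makes the primary keep the labels returned by the worker, including the `worker` external label, rather than overwriting them.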

```shell
$ cat ./targets/workers/workers.json
[{
  "targets": [
    "192.168.31.144:9090",
    "192.168.31.82:9090"
  ]
}]
```
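Prometheus re-reads `file_sd` files when they change, so regenerating `workers.json` by script is safer when the new content appears all at once. The hypothetical `write_file_sd` helper below writes the same JSON shape as the file above. It writes to a temporary file in the same directory first and then renames that file over the target with `os.replace`. The temporary name ends in `.tmp`, so it does not match the `targets/workers/*.json` glob while it is being written. The optional `labels` key is part of the file_sd format, but this post does not use it.

```python
# Hypothetical helper: (re)generate a file_sd JSON file via write-then-rename,
# so a half-written file is never visible under the *.json glob.
import json
import os
import tempfile


def write_file_sd(path: str, targets, labels=None) -> None:
    group = {"targets": list(targets)}
    if labels:
        group["labels"] = dict(labels)
    directory = os.path.dirname(path) or "."
    os.makedirs(directory, exist_ok=True)
    # Temporary file lives in the same directory so os.replace is a rename.
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "w") as f:
            json.dump([group], f, indent=2)
        os.replace(tmp, path)
    except BaseException:
        os.unlink(tmp)
        raise


if __name__ == "__main__":
    write_file_sd("targets/workers/workers.json",
                  ["192.168.31.144:9090", "192.168.31.82:9090"])
```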
