Appendix 014. Kubernetes Prometheus+Grafana+EFK+Kibana+GlusterFS Integrated Solution

1 GlusterFS storage cluster deployment

1.1 Architecture overview

1.2 Planning

| Host | IP | Disk | Notes |
|---|---|---|---|
| k8smaster01 | 172.24.8.71 | - | Kubernetes Master node; Heketi host |
| k8smaster02 | 172.24.8.72 | - | Kubernetes Master node; Heketi host |
| k8smaster03 | 172.24.8.73 | - | Kubernetes Master node; Heketi host |
| k8snode01 | 172.24.8.74 | sdb | Kubernetes Worker node; GlusterFS node 01 |
| k8snode02 | 172.24.8.75 | sdb | Kubernetes Worker node; GlusterFS node 02 |
| k8snode03 | 172.24.8.76 | sdb | Kubernetes Worker node; GlusterFS node 03 |

1.3 Install GlusterFS

1.4 Add the nodes to the trusted pool

1.5 Install Heketi

1.6 Configure Heketi

{
  "_port_comment": "Heketi Server Port Number",
  "port": "",

  "_use_auth": "Enable JWT authorization. Please enable for deployment",
  "use_auth": true,

  "_jwt": "Private keys for access",
  "jwt": {
    "_admin": "Admin has access to all APIs",
    "admin": {
      "key": "admin123"
    },
    "_user": "User only has access to /volumes endpoint",
    "user": {
      "key": "xianghy"
    }
  },

  "_glusterfs_comment": "GlusterFS Configuration",
  "glusterfs": {
    "_executor_comment": [
      "Execute plugin. Possible choices: mock, ssh",
      "mock: This setting is used for testing and development.",
      " It will not send commands to any node.",
      "ssh: This setting will notify Heketi to ssh to the nodes.",
      " It will need the values in sshexec to be configured.",
      "kubernetes: Communicate with GlusterFS containers over",
      " Kubernetes exec api."
    ],
    "executor": "ssh",

    "_sshexec_comment": "SSH username and private key file information",
    "sshexec": {
      "keyfile": "/etc/heketi/heketi_key",
      "user": "root",
      "port": "",
      "fstab": "/etc/fstab"
    },

    "_db_comment": "Database file name",
    "db": "/var/lib/heketi/heketi.db",

    "_loglevel_comment": [
      "Set log level. Choices are:",
      " none, critical, error, warning, info, debug",
      "Default is warning"
    ],
    "loglevel" : "warning"
  }
}
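Before starting Heketi it can help to sanity-check this file. The sketch below is only an illustration and is not part of Heketi: the helper name `find_empty_settings` and the list of fields it treats as required are assumptions made for this example. It walks a parsed config of the shape shown above and reports settings that are missing or left as empty strings.

```python
import json

# Fields this sketch treats as required. The list is an assumption made for
# illustration; it is not taken from Heketi's own validation rules.
REQUIRED_PATHS = [
    ("port",),
    ("jwt", "admin", "key"),
    ("glusterfs", "executor"),
    ("glusterfs", "sshexec", "keyfile"),
    ("glusterfs", "sshexec", "user"),
    ("glusterfs", "sshexec", "port"),
    ("glusterfs", "db"),
]


def find_empty_settings(config):
    """Return dotted paths of required settings that are missing or empty."""
    problems = []
    for path in REQUIRED_PATHS:
        node = config
        for key in path:
            # Stop descending as soon as an intermediate level is not a dict.
            node = node.get(key) if isinstance(node, dict) else None
        if node is None or node == "":
            problems.append(".".join(path))
    return problems


if __name__ == "__main__":
    # A trimmed copy of the configuration above (comment keys omitted).
    sample = json.loads("""
    {
      "port": "",
      "use_auth": true,
      "jwt": {"admin": {"key": "admin123"}, "user": {"key": "xianghy"}},
      "glusterfs": {
        "executor": "ssh",
        "sshexec": {"keyfile": "/etc/heketi/heketi_key", "user": "root",
                    "port": "", "fstab": "/etc/fstab"},
        "db": "/var/lib/heketi/heketi.db",
        "loglevel": "warning"
      }
    }
    """)
    for path in find_empty_settings(sample):
        print("empty or missing:", path)
```

Run against the file as listed, the check flags the two `port` entries, which are empty in the listing and need real values before Heketi is started.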

1.7 Configure passwordless (key-based) SSH login

1.8 Start Heketi

1.9 Configure the Heketi topology

{
  "clusters": [
    {
      "nodes": [
        {
          "node": {
            "hostnames": {
              "manage": [
                "k8snode01"
              ],
              "storage": [
                "172.24.8.74"
              ]
            },
            "zone": 1
          },
          "devices": [
            "/dev/sdb"
          ]
        },
        {
          "node": {
            "hostnames": {
              "manage": [
                "k8snode02"
              ],
              "storage": [
                "172.24.8.75"
              ]
            },
            "zone": 1
          },
          "devices": [
            "/dev/sdb"
          ]
        },
        {
          "node": {
            "hostnames": {
              "manage": [
                "k8snode03"
              ],
              "storage": [
                "172.24.8.76"
              ]
            },
            "zone": 1
          },
          "devices": [
            "/dev/sdb"
          ]
        }
      ]
    }
  ]
}
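The topology file repeats the same structure for every storage node, so it can be generated from the host plan rather than edited by hand. The following is a minimal sketch under that assumption; `build_topology` and `STORAGE_NODES` are hypothetical names introduced here, and the values are copied from the planning table in 1.2.

```python
import json

# Storage nodes from the planning table: (hostname, storage IP, devices).
STORAGE_NODES = [
    ("k8snode01", "172.24.8.74", ["/dev/sdb"]),
    ("k8snode02", "172.24.8.75", ["/dev/sdb"]),
    ("k8snode03", "172.24.8.76", ["/dev/sdb"]),
]


def build_topology(nodes, zone=1):
    """Build a single-cluster topology dict from (host, ip, devices) tuples."""
    return {
        "clusters": [
            {
                "nodes": [
                    {
                        "node": {
                            "hostnames": {"manage": [host], "storage": [ip]},
                            "zone": zone,
                        },
                        "devices": list(devices),
                    }
                    for host, ip, devices in nodes
                ]
            }
        ]
    }


if __name__ == "__main__":
    # Print JSON with the same shape as the topology file shown above.
    print(json.dumps(build_topology(STORAGE_NODES), indent=2))
```

Adding a fourth storage node then means adding one tuple to the list instead of copying a nested JSON block.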

1.10 Cluster management and testing

1.11 Create the StorageClass

apiVersion: v1
kind: Secret
metadata:
  name: heketi-secret
  namespace: heketi
data:
  key: YWRtaW4xMjM=
type: kubernetes.io/glusterfs
---
apiVersion: storage.k8s.io/v1
kind: StorageClass
metadata:
  name: ghstorageclass
parameters:
  resturl: "http://172.24.8.71:8080"
  clusterid: "ad0f81f75f01d01ebd6a21834a2caa30"
  restauthenabled: "true"
  restuser: "admin"
  secretName: "heketi-secret"
  secretNamespace: "heketi"
  volumetype: "replicate:3"
provisioner: kubernetes.io/glusterfs
reclaimPolicy: Delete
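The `key` value in the Secret is the base64 encoding of the Heketi admin key set in 1.6 (`admin123`). As a small illustration of how that value is produced and checked, here is a sketch using only the Python standard library; the two helper names are made up for this example.

```python
import base64


def encode_secret_value(plaintext):
    """Base64-encode a string for use in a Kubernetes Secret `data` field."""
    return base64.b64encode(plaintext.encode("utf-8")).decode("ascii")


def decode_secret_value(encoded):
    """Decode a Secret `data` value back to the original string."""
    return base64.b64decode(encoded).decode("utf-8")


if __name__ == "__main__":
    print(encode_secret_value("admin123"))      # YWRtaW4xMjM=
    print(decode_secret_value("YWRtaW4xMjM="))  # admin123
```

If the admin key in the Heketi configuration changes, the Secret has to be regenerated with the new encoded value, otherwise the provisioner's requests to Heketi will fail authentication.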

2 Cluster monitoring: Metrics

2.1 Enable the aggregation layer

2.2 Obtain the deployment files

……
        image: mirrorgooglecontainers/metrics-server-amd64:v0.3.6 # changed to a mirror reachable from mainland China
        command:
        - /metrics-server
        - --metric-resolution=30s
        - --kubelet-insecure-tls
        - --kubelet-preferred-address-types=InternalIP,Hostname,InternalDNS,ExternalDNS,ExternalIP # add the command section above
……

2.3 Deploy

2.4 Verify

3 Prometheus deployment

3.1 Obtain the deployment files

3.2 Create the namespace

apiVersion: v1
kind: Namespace
metadata:
  name: monitoring

3.3 Create the RBAC resources

apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: prometheus
  namespace: monitoring # only the namespace needs to be changed
---
apiVersion: rbac.authorization.k8s.io/v1beta1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: prometheus
  namespace: monitoring # only the namespace needs to be changed

3.4 Create the Prometheus ConfigMap

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-server-conf
  labels:
    name: prometheus-server-conf
  namespace: monitoring # change the namespace
……

3.5 Create the persistent volume claim (PVC)

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: prometheus-pvc
  namespace: monitoring
  annotations:
    volume.beta.kubernetes.io/storage-class: ghstorageclass
spec:
  accessModes:
  - ReadWriteMany
  resources:
    requests:
      storage: 5Gi

3.6 Deploy Prometheus

apiVersion: apps/v1beta2
kind: Deployment
metadata:
  labels:
    name: prometheus-deployment
  name: prometheus-server
  namespace: monitoring
spec:
  replicas: 1
  selector:
    matchLabels:
      app: prometheus-server
  template:
    metadata:
      labels:
        app: prometheus-server
    spec:
      containers:
      - name: prometheus-server
        image: prom/prometheus:v2.14.0
        command:
        - "/bin/prometheus"
        args:
        - "--config.file=/etc/prometheus/prometheus.yml"
        - "--storage.tsdb.path=/prometheus/"
        - "--storage.tsdb.retention=72h"
        ports:
        - containerPort: 9090
          protocol: TCP
        volumeMounts:
        - name: prometheus-config-volume
          mountPath: /etc/prometheus/
        - name: prometheus-storage-volume
          mountPath: /prometheus/
      serviceAccountName: prometheus
      imagePullSecrets:
      - name: regsecret
      volumes:
      - name: prometheus-config-volume
        configMap:
          defaultMode: 420
          name: prometheus-server-conf
      - name: prometheus-storage-volume
        persistentVolumeClaim:
          claimName: prometheus-pvc

3.7 Create the Prometheus Service

apiVersion: v1
kind: Service
metadata:
  labels:
    app: prometheus-service
  name: prometheus-service
  namespace: monitoring
spec:
  type: NodePort
  selector:
    app: prometheus-server
  ports:
  - port: 9090
    targetPort: 9090
    nodePort: 30001

3.8 Verify Prometheus
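Besides opening the web UI on the NodePort (30001), one way to confirm that Prometheus is scraping targets is to run an instant query for the `up` metric through its HTTP API (`/api/v1/query`). The sketch below uses only the Python standard library; the function names are hypothetical, and which node address to use for the NodePort is an assumption left to the reader.

```python
import json
import urllib.parse
import urllib.request


def build_query_url(base_url, promql):
    """Return the instant-query URL of the Prometheus HTTP API."""
    query_string = urllib.parse.urlencode({"query": promql})
    return f"{base_url.rstrip('/')}/api/v1/query?{query_string}"


def instant_query(base_url, promql, timeout=5.0):
    """Run an instant query and return the decoded JSON response."""
    url = build_query_url(base_url, promql)
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return json.load(resp)


def summarize_up(response):
    """Map each scraped instance to its `up` value ("1" means reachable)."""
    return {
        sample["metric"].get("instance", "unknown"): sample["value"][1]
        for sample in response["data"]["result"]
    }
```

For example, assuming the Service is reached through k8snode01, `summarize_up(instant_query("http://172.24.8.74:30001", "up"))` would return a dictionary of scrape targets and whether each one is up.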

4 Grafana deployment

4.1 Obtain the deployment files

4.2 Create the persistent volume claim (PVC)

apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: grafana-data-pvc
  namespace: monitoring
  annotations:
    volume.beta.kubernetes.io/storage-class: ghstorageclass
spec:
  accessModes:
  - ReadWriteOnce
  resources:
    requests:
      storage: 5Gi

4.3 Deploy Grafana

apiVersion: extensions/v1beta1
kind: Deployment
metadata:
  name: monitoring-grafana
  namespace: monitoring
spec:
  replicas: 1
  template:
    metadata:
      labels:
        task: monitoring
        k8s-app: grafana
    spec:
      containers:
      - name: grafana
        image: grafana/grafana:6.5.0
        imagePullPolicy: IfNotPresent
        ports:
        - containerPort: 3000
          protocol: TCP
        volumeMounts:
        - mountPath: /var/lib/grafana
          name: grafana-storage
        env:
        - name: INFLUXDB_HOST
          value: monitoring-influxdb
        - name: GF_SERVER_HTTP_PORT
          value: ""
        - name: GF_AUTH_BASIC_ENABLED
          value: "false"
        - name: GF_AUTH_ANONYMOUS_ENABLED
          value: "true"
        - name: GF_AUTH_ANONYMOUS_ORG_ROLE
          value: Admin
        - name: GF_SERVER_ROOT_URL
          value: /
        readinessProbe:
          httpGet:
            path: /login
            port: 3000
      volumes:
      - name: grafana-storage
        persistentVolumeClaim:
          claimName: grafana-data-pvc
      nodeSelector:
        node-role.kubernetes.io/master: "true"
      tolerations:
      - key: "node-role.kubernetes.io/master"
        effect: "NoSchedule"
---
apiVersion: v1
kind: Service
metadata:
  labels:
    kubernetes.io/cluster-service: 'true'
    kubernetes.io/name: monitoring-grafana
  annotations:
    prometheus.io/scrape: 'true'
    prometheus.io/tcp-probe: 'true'
    prometheus.io/tcp-probe-port: '80'
  name: monitoring-grafana
  namespace: monitoring
spec:
  type: NodePort
  ports:
  - port: 80
    targetPort: 3000
    nodePort: 30002
  selector:
    k8s-app: grafana

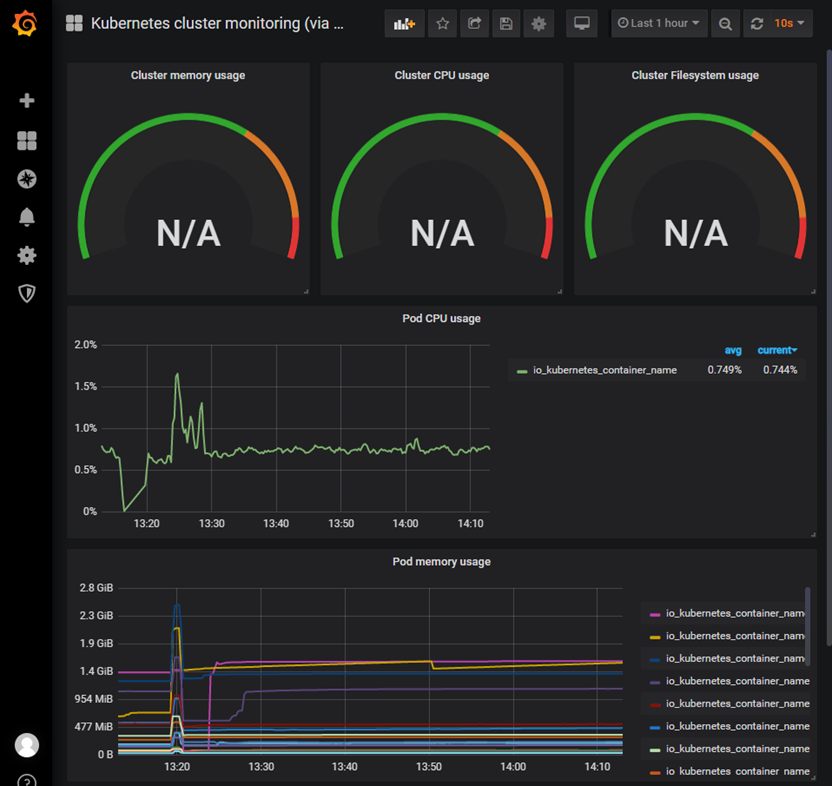

4.4 Verify Grafana

4.5 Configure Grafana

- Add a data source: omitted

- Create users: omitted

4.6 View the monitoring dashboards
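Dashboards only show data once Grafana has a Prometheus data source, and the data-source step is omitted in the text above. As a rough illustration of what that step amounts to, the sketch below builds the JSON body that Grafana's HTTP API accepts at `POST /api/datasources`. The function name is hypothetical, and the URL assumes Grafana reaches Prometheus through the in-cluster DNS name of the `prometheus-service` Service in the `monitoring` namespace; the same values can be typed into the data-source form in the Grafana UI instead.

```python
import json


def prometheus_datasource_payload(name, url, default=True):
    """Build the JSON body for Grafana's POST /api/datasources endpoint."""
    return {
        "name": name,
        "type": "prometheus",
        "url": url,
        # "proxy" means the Grafana backend forwards queries to Prometheus,
        # so the browser does not need direct access to the Service.
        "access": "proxy",
        "isDefault": default,
    }


if __name__ == "__main__":
    # Assumption: in-cluster Service DNS name and port from section 3.7.
    payload = prometheus_datasource_payload(
        "Prometheus", "http://prometheus-service.monitoring.svc:9090"
    )
    print(json.dumps(payload, indent=2))
```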
