Neural Network Basics

在学习NLP之前还是要打好基础,第二部分就是神经网络基础。

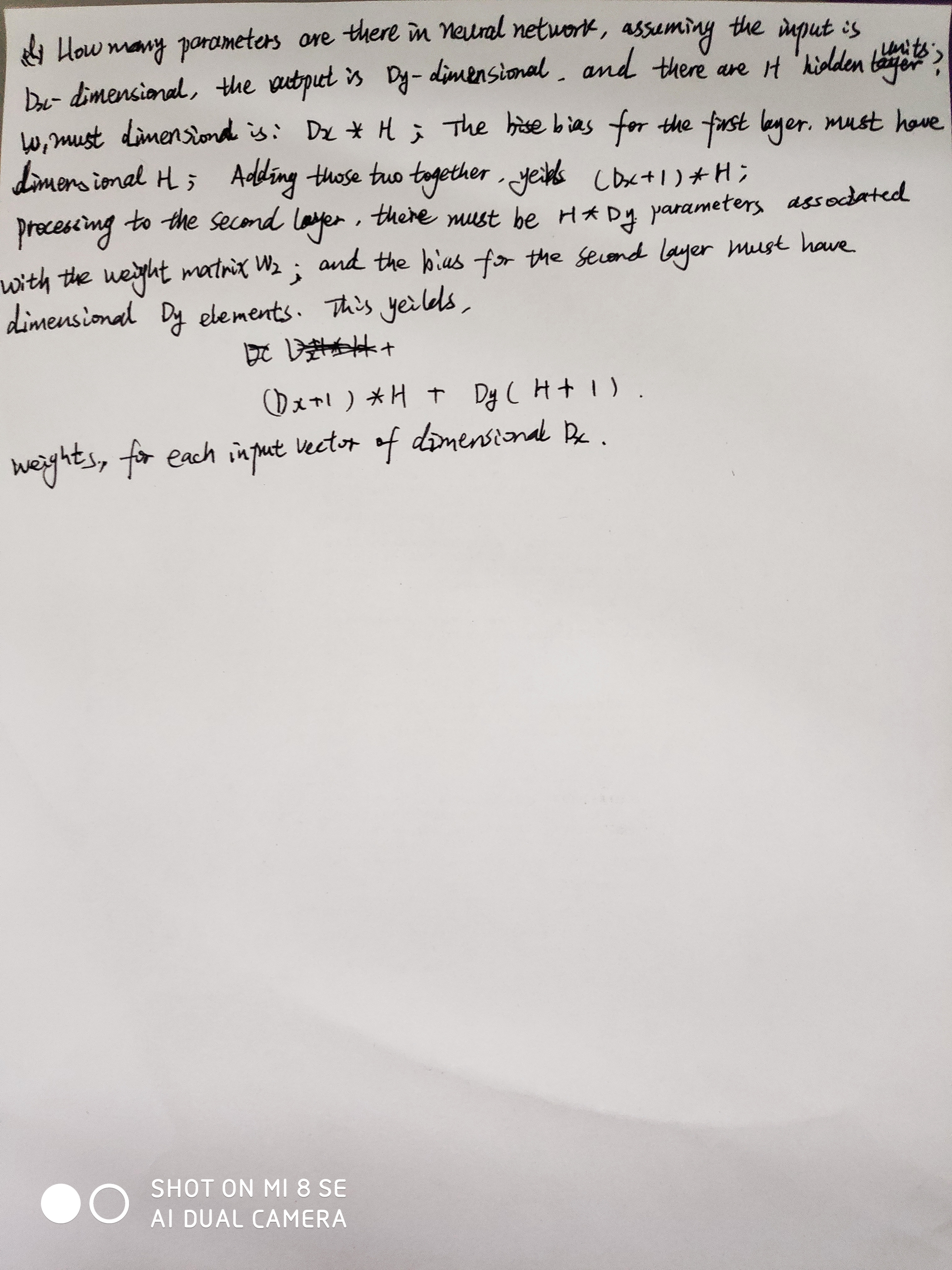

知识点总结:

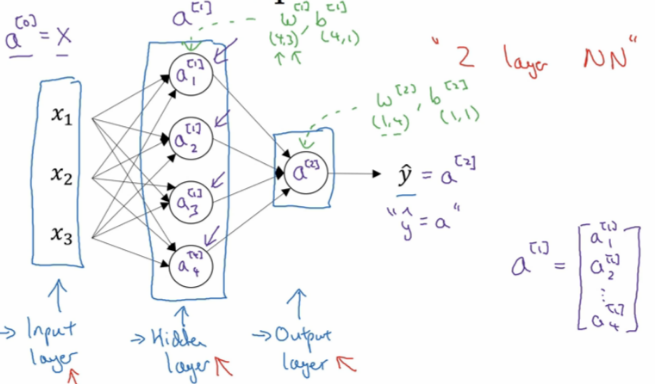

1.神经网络概要:

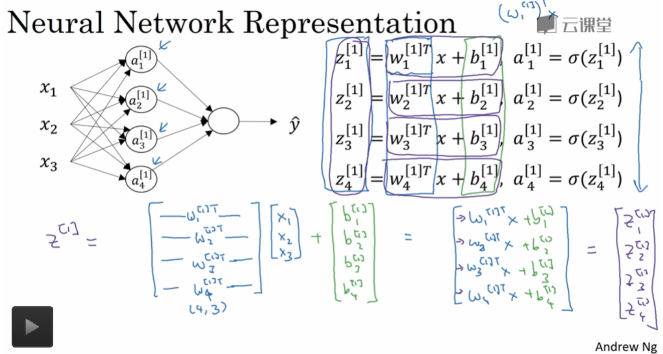

2. 神经网络表示:

第0层为输入层(input layer)、隐藏层(hidden layer)、输出层(output layer)组成。

3. 神经网络的输出计算:

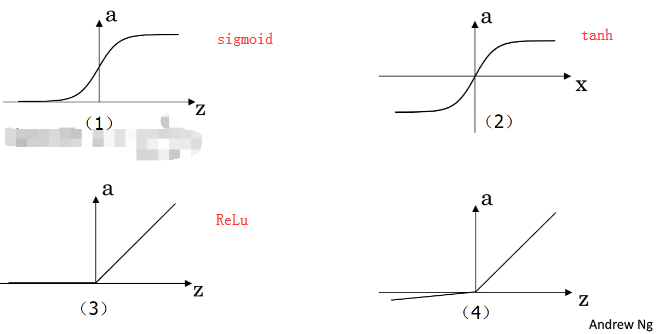

4.三种常见激活函数:

sigmoid:一般只用在二分类的输出层,因为二分类输出结果对应着0,1恰好也是sigmoid的阈值之间。

。它相比sigmoid函数均值在0附近,有数据中心化的优点,但是两者的缺点是z值很大很小时候,w几乎为0,学习速率非常慢。

。它相比sigmoid函数均值在0附近,有数据中心化的优点,但是两者的缺点是z值很大很小时候,w几乎为0,学习速率非常慢。

ReLu: f(x)= max(0, x)

- 优点:相较于sigmoid和tanh函数,ReLU对于随机梯度下降的收敛有巨大的加速作用( Krizhevsky等的论文指出有6倍之多)。据称这是由它的线性,非饱和的公式导致的。

- 优点:sigmoid和tanh神经元含有指数运算等耗费计算资源的操作,而ReLU可以简单地通过对一个矩阵进行阈值计算得到。

- 缺点:在训练的时候,ReLU单元比较脆弱并且可能“死掉”。举例来说,当一个很大的梯度流过ReLU的神经元的时候,可能会导致梯度更新到一种特别的状态,在这种状态下神经元将无法被其他任何数据点再次激活。如果这种情况发生,那么从此所以流过这个神经元的梯度将都变成0。也就是说,这个ReLU单元在训练中将不可逆转的死亡,因为这导致了数据多样化的丢失。例如,如果学习率设置得太高,可能会发现网络中40%的神经元都会死掉(在整个训练集中这些神经元都不会被激活)。通过合理设置学习率,这种情况的发生概率会降低。

Assignment:

sigmoid 实现和梯度实现:

import numpy as np def sigmoid(x):

f = 1 / (1 + np.exp(-x))

return f def sigmoid_grad(f):

f = f * (1 - f)

return f def test_sigmoid_basic():

x = np.array([[1, 2], [-1, -2]])

f = sigmoid(x)

g = sigmoid_grad(f)

print (g)

def test_sigmoid():

pass

if __name__ == "__main__":

test_sigmoid_basic() #输出:

[[0.19661193 0.10499359]

[0.19661193 0.10499359]]

实现实现梯度check

import numpy as np

import random

def gradcheck_navie(f, x):

rndstate = random . getstate ()

random . setstate ( rndstate )

fx , grad = f(x) # Evaluate function value at original point

h = 1e-4

it = np. nditer (x, flags =[' multi_index '], op_flags =[' readwrite '])

while not it. finished :

ix = it. multi_index

### YOUR CODE HERE :

old_xix = x[ix]

x[ix] = old_xix + h

random . setstate ( rndstate )

fp = f(x)[0]

x[ix] = old_xix - h

random . setstate ( rndstate )

fm = f(x)[0]

x[ix] = old_xix

numgrad = (fp - fm)/(2* h)

### END YOUR CODE

# Compare gradients

reldiff = abs ( numgrad - grad [ix]) / max (1, abs ( numgrad ), abs ( grad [ix]))

if reldiff > 1e-5:

print (" Gradient check failed .")

print (" First gradient error found at index %s" % str(ix))

print (" Your gradient : %f \t Numerical gradient : %f" % ( grad [ix], numgrad return

it. iternext () # Step to next dimension

print (" Gradient check passed !") def sanity_check():

"""

Some basic sanity checks.

"""

quad = lambda x: (np.sum(x ** 2), x * 2) print ("Running sanity checks...")

gradcheck_naive(quad, np.array(123.456)) # scalar test

gradcheck_naive(quad, np.random.randn(3,)) # 1-D test

gradcheck_naive(quad, np.random.randn(4,5)) # 2-D test

print("") if __name__ == "__main__":

sanity_check()

Neural Network Basics的更多相关文章

- 吴恩达《深度学习》-课后测验-第一门课 (Neural Networks and Deep Learning)-Week 2 - Neural Network Basics(第二周测验 - 神经网络基础)

Week 2 Quiz - Neural Network Basics(第二周测验 - 神经网络基础) 1. What does a neuron compute?(神经元节点计算什么?) [ ] A ...

- CS224d assignment 1【Neural Network Basics】

refer to: 机器学习公开课笔记(5):神经网络(Neural Network) CS224d笔记3--神经网络 深度学习与自然语言处理(4)_斯坦福cs224d 大作业测验1与解答 CS224 ...

- 课程一(Neural Networks and Deep Learning),第二周(Basics of Neural Network programming)—— 1、10个测验题(Neural Network Basics)

--------------------------------------------------中文翻译---------------------------------------------- ...

- 课程一(Neural Networks and Deep Learning),第二周(Basics of Neural Network programming)—— 4、Logistic Regression with a Neural Network mindset

Logistic Regression with a Neural Network mindset Welcome to the first (required) programming exerci ...

- [C1W2] Neural Networks and Deep Learning - Basics of Neural Network programming

第二周:神经网络的编程基础(Basics of Neural Network programming) 二分类(Binary Classification) 这周我们将学习神经网络的基础知识,其中需要 ...

- 吴恩达《深度学习》-第一门课 (Neural Networks and Deep Learning)-第二周:(Basics of Neural Network programming)-课程笔记

第二周:神经网络的编程基础 (Basics of Neural Network programming) 2.1.二分类(Binary Classification) 二分类问题的目标就是习得一个分类 ...

- 课程一(Neural Networks and Deep Learning),第二周(Basics of Neural Network programming)—— 0、学习目标

1. Build a logistic regression model, structured as a shallow neural network2. Implement the main st ...

- (转)The Neural Network Zoo

转自:http://www.asimovinstitute.org/neural-network-zoo/ THE NEURAL NETWORK ZOO POSTED ON SEPTEMBER 14, ...

- (转)LSTM NEURAL NETWORK FOR TIME SERIES PREDICTION

LSTM NEURAL NETWORK FOR TIME SERIES PREDICTION Wed 21st Dec 2016 Neural Networks these days are th ...

随机推荐

- 数位dp poj1850

题目链接:https://vjudge.net/problem/POJ-1850 这题我用的是数位dp,刚刚看了一下别人用排列组合,我脑子不行,想不出来. 在这题里面我把a看成1,其他的依次递增,如果 ...

- SDK和API

软件开发工具包(缩写:SDK.外语全称:Software Development Kit)一般都是一些软件工程师为特定的软件包.软件框架.硬件平台.操作系统等建立应用软件时的开发工具的集合. 笔记:开 ...

- sizeof 4字节对齐

#include <iostream> #include<assert.h> using namespace std; typedef struct sys{ char a; ...

- python函数传入参数(默认参数、可变长度参数、关键字参数)

1.python中默认缺省参数----定义默认参数要牢记一点:默认参数必须指向不变对象! 1 def foo(a,b=1): 2 print a,b 3 4 foo(2) #2 1 5 foo(3,1 ...

- 被遗忘的having

清明节后公司网站搞活动主要功能很简单就是实现一个消费送的功能.比如, 当天消费金额满5000 返回10%,5000 及以下 返 7% 的功能.本身这个功能不是很难,但是 这个功能跟上次的一个 新用户 ...

- swift - 指定VC隐藏导航栏 - 禁用tabbar的根控制器手势,防止两个tabbar跳转 手势冲突

1. viewdidload 设置代理 self.navigationController?.delegate = self 2.代理里面指定VC 隐藏 //MARK: - 导航栏delegate e ...

- jquery写tab切换,三行代码搞定

<script type="text/javascript"> $("button").on("click",function( ...

- 半吊子的STM32 — SPI通信

全双工,同步串行通信. 一般需要三条线通信: MOSI 主设备发送,从设备接收 MISO 主设备接收,从设备发送 SCLK 时钟线 多设备时,多线选取从机: 传输过程中,主从机中的移位寄存器中数据相互 ...

- iOS.CM5.CM4.CM2

增量数据计算接口: CC_MDx_Init CC_MDx_Update CC_MDx_Final 全量数据计算接口: CC_MDx

- python-bs4的使用

BeautifulSoup4 官方文档 是一个Python库,用于从HTML和XML文件中提取数据.它与您最喜欢的解析器一起使用,提供导航,搜索和修改解析树的惯用方法.它通常可以节省程序员数小时或数天 ...