Going Deeper with Convolutions (GoogLeNet)

@article{szegedy2015going,
  title={Going deeper with convolutions},
  author={Szegedy, Christian and Liu, Wei and Jia, Yangqing and Sermanet, Pierre and Reed, Scott and Anguelov, Dragomir and Erhan, Dumitru and Vanhoucke, Vincent and Rabinovich, Andrew},
  pages={1--9},
  year={2015}
}

It is explained in great detail here, so I won't go over it again.

Code

"""

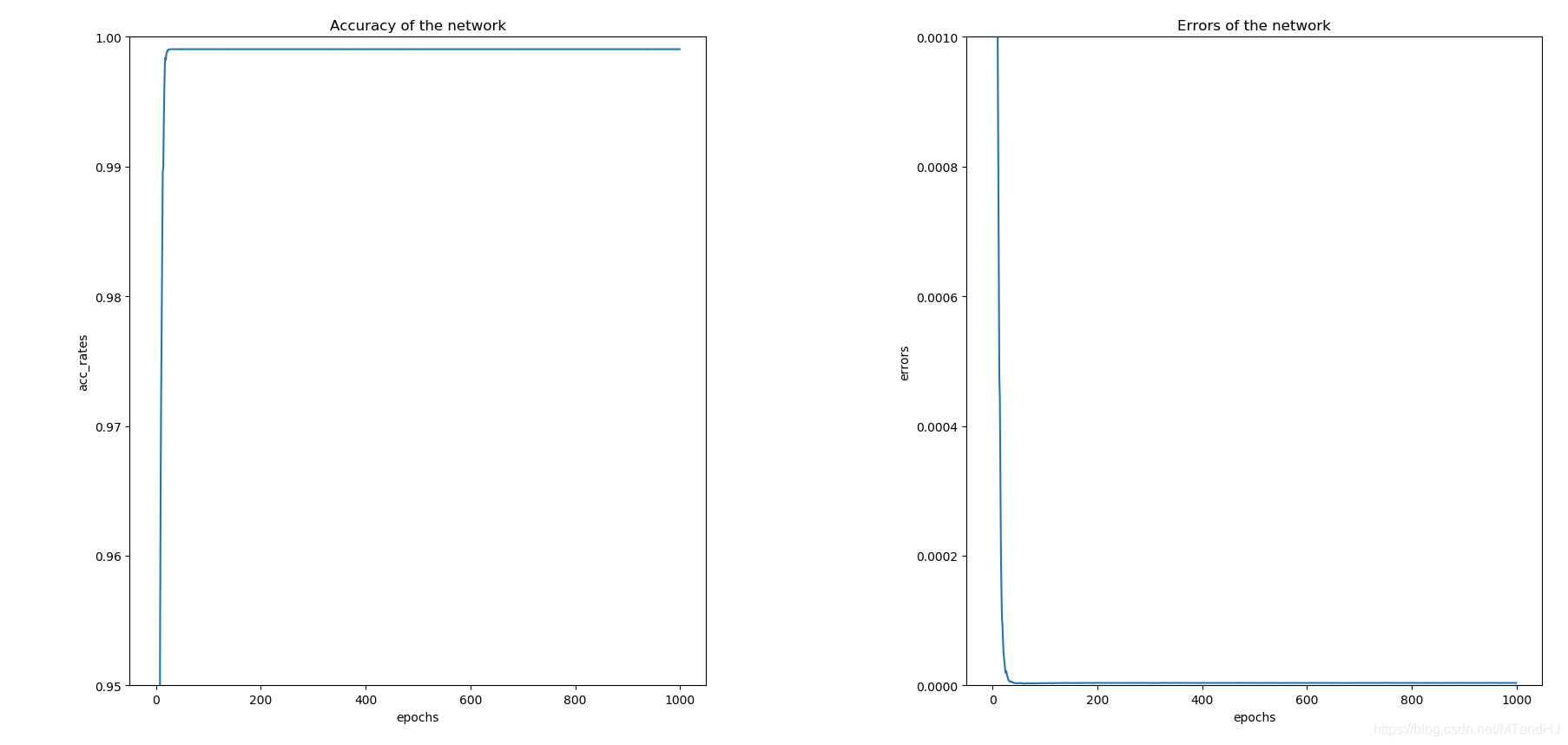

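The code is essentially a "copy" of the source code, but I still learned a lot from it.

Although the model has few parameters, it trains very slowly. Is that because gradients have to be propagated three times?

Accuracy on the test set is 0.8682.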

"""

import torch
import torch.nn as nn
import torchvision
import torchvision.transforms as transforms
import numpy as np
import os


class BasicConv2d(nn.Module):

    def __init__(self, in_channels, out_channels, **kwargs):
        super(BasicConv2d, self).__init__()
        self.conv = nn.Conv2d(in_channels, out_channels,
                              bias=False, **kwargs)  # no bias
        # eps is for numerical stability; the default is 1e-5
        self.bn = nn.BatchNorm2d(out_channels, eps=0.001)
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        x = self.conv(x)
        x = self.bn(x)
        out = self.relu(x)
        return out

class Inception(nn.Module):

    def __init__(self, in_channels, ch1x1, ch3x3red, ch3x3,
                 ch5x5red, ch5x5, pool_proj):
        """
        :param in_channels: number of input channels
        :param ch1x1: output channels of the 1x1 convolution
        :param ch3x3red: channels of the 1x1 reduction that precedes the 3x3 convolution
        :param ch3x3: output channels of the 3x3 convolution that follows it
        :param ch5x5: ...
        :param ch5x5red: ...
        :param pool_proj: number of channels of the pooling branch
        """
        super(Inception, self).__init__()
        self.branch1 = BasicConv2d(in_channels, ch1x1, kernel_size=1)
        self.branch2 = nn.Sequential(
            BasicConv2d(in_channels, ch3x3red, kernel_size=1),
            BasicConv2d(ch3x3red, ch3x3, kernel_size=3, padding=1)
        )
        # PyTorch uses a 3x3 kernel here?
        self.branch3 = nn.Sequential(
            BasicConv2d(in_channels, ch5x5red, kernel_size=1),
            BasicConv2d(ch5x5red, ch5x5, kernel_size=5, padding=2)
        )
        self.branch4 = nn.Sequential(
            nn.MaxPool2d(kernel_size=3, stride=1, padding=1, ceil_mode=True),
            BasicConv2d(in_channels, pool_proj, kernel_size=1)
        )

    def forward(self, x):
        x1 = self.branch1(x)
        x2 = self.branch2(x)
        x3 = self.branch3(x)
        x4 = self.branch4(x)
        out = (x1, x2, x3, x4)
        # concatenate the four branches along the channel dimension
        return torch.cat(out, 1)
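Because `torch.cat(out, 1)` joins the four branches along the channel dimension, the output width of an Inception block is simply `ch1x1 + ch3x3 + ch5x5 + pool_proj`. The pure-Python sketch below checks that arithmetic for the nine configurations used by the `GoogLeNet` class in this listing, and also checks that each block's `in_channels` matches the previous block's output. The helper names `out_channels` and `check_chain` are made up for illustration and are not part of the original code.

```python
# (name, in_channels, ch1x1, ch3x3red, ch3x3, ch5x5red, ch5x5, pool_proj)
# Values copied from the GoogLeNet class in this post.
CONFIGS = [
    ("inception3a", 192, 64, 96, 128, 16, 32, 32),
    ("inception3b", 256, 128, 128, 192, 32, 96, 64),
    ("inception4a", 480, 192, 96, 208, 16, 48, 64),
    ("inception4b", 512, 160, 112, 224, 24, 64, 64),
    ("inception4c", 512, 128, 128, 256, 24, 64, 64),
    ("inception4d", 512, 112, 144, 288, 32, 64, 64),
    ("inception4e", 528, 256, 160, 320, 32, 128, 128),
    ("inception5a", 832, 256, 160, 320, 32, 128, 128),
    ("inception5b", 832, 384, 192, 384, 48, 128, 128),
]


def out_channels(ch1x1, ch3x3, ch5x5, pool_proj):
    """Channels after concatenating the four branches (hypothetical helper)."""
    return ch1x1 + ch3x3 + ch5x5 + pool_proj


def check_chain(configs, first_in=192):
    """Verify each block's in_channels equals the previous block's output.

    Max pooling between stages does not change the channel count,
    so the chain can be checked across stages as well.
    """
    expected_in = first_in
    outs = {}
    for name, in_ch, c1, _c3red, c3, _c5red, c5, pool_proj in configs:
        if in_ch != expected_in:
            raise ValueError(f"{name}: expected {expected_in} inputs, got {in_ch}")
        expected_in = out_channels(c1, c3, c5, pool_proj)
        outs[name] = expected_in
    return outs


if __name__ == "__main__":
    for name, width in check_chain(CONFIGS).items():
        print(f"{name}: {width} output channels")
```

The reduction widths (`ch3x3red`, `ch5x5red`) do not appear in the sum: they only shrink the input of the expensive 3x3 and 5x5 convolutions.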

class InceptionAux(nn.Module):

    def __init__(self, in_channels, num_classes):
        super(InceptionAux, self).__init__()
        self.avgpool = nn.AdaptiveAvgPool2d((4, 4))
        self.conv = BasicConv2d(in_channels, 128, kernel_size=1)
        # N x 128 x 4 x 4, flattened to 128 * 4 * 4 = 2048 features
        self.dense = nn.Sequential(
            nn.Linear(2048, 1024),
            nn.ReLU(inplace=True),
            nn.Dropout(0.7),
            nn.Linear(1024, num_classes)
        )

    def forward(self, x):
        x = self.avgpool(x)
        x = self.conv(x)
        x = torch.flatten(x, 1)
        out = self.dense(x)
        return out

class GoogLeNet(nn.Module):

    def __init__(self, num_classes=10, aux_logits=True):
        """
        :param num_classes: number of classes
        :param aux_logits: whether to add the auxiliary classifiers
        """
        super(GoogLeNet, self).__init__()
        self.aux_logits = aux_logits

        # N x 3 x 224 x 224
        self.conv1 = BasicConv2d(3, 64, kernel_size=7, stride=2, padding=3)
        # N x 64 x 112 x 112
        self.maxpool1 = nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True)
        # N x 64 x 56 x 56
        self.conv2 = BasicConv2d(64, 64, kernel_size=1)
        self.conv3 = BasicConv2d(64, 192, kernel_size=3, padding=1)
        # N x 192 x 56 x 56
        self.maxpool2 = nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True)

        # N x 192 x 28 x 28
        self.inception3a = Inception(192, 64, 96, 128, 16, 32, 32)
        # N x 256 x 28 x 28
        self.inception3b = Inception(256, 128, 128, 192, 32, 96, 64)
        # N x 480 x 28 x 28
        self.maxpool3 = nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True)

        # N x 480 x 14 x 14
        self.inception4a = Inception(480, 192, 96, 208, 16, 48, 64)
        # N x 512 x 14 x 14
        self.inception4b = Inception(512, 160, 112, 224, 24, 64, 64)
        # N x 512 x 14 x 14
        self.inception4c = Inception(512, 128, 128, 256, 24, 64, 64)
        # N x 512 x 14 x 14
        self.inception4d = Inception(512, 112, 144, 288, 32, 64, 64)
        # N x 528 x 14 x 14
        self.inception4e = Inception(528, 256, 160, 320, 32, 128, 128)
        # N x 832 x 14 x 14
        self.maxpool4 = nn.MaxPool2d(kernel_size=3, stride=2, ceil_mode=True)

        # N x 832 x 7 x 7
        self.inception5a = Inception(832, 256, 160, 320, 32, 128, 128)
        # N x 832 x 7 x 7
        self.inception5b = Inception(832, 384, 192, 384, 48, 128, 128)

        # N x 1024 x 7 x 7
        self.avgpool = nn.AdaptiveAvgPool2d((1, 1))
        # N x 1024 x 1 x 1
        self.drop = nn.Dropout(0.4)
        self.fc = nn.Linear(1024, num_classes)

        if self.aux_logits:
            self.aux1 = InceptionAux(512, num_classes)
            self.aux2 = InceptionAux(528, num_classes)

    def forward(self, x):
        x = self.conv1(x)
        x = self.maxpool1(x)
        x = self.conv2(x)
        x = self.conv3(x)
        x = self.maxpool2(x)

        x = self.inception3a(x)
        x = self.inception3b(x)
        x = self.maxpool3(x)

        x = self.inception4a(x)
        # the auxiliary classifiers are only evaluated in training mode
        if self.aux_logits and self.training:
            aux1 = self.aux1(x)
        x = self.inception4b(x)
        x = self.inception4c(x)
        x = self.inception4d(x)
        if self.aux_logits and self.training:
            aux2 = self.aux2(x)
        x = self.inception4e(x)
        x = self.maxpool4(x)

        x = self.inception5a(x)
        x = self.inception5b(x)

        x = self.avgpool(x)
        x = torch.flatten(x, 1)
        x = self.drop(x)
        out = self.fc(x)

        if self.aux_logits and self.training:
            return (out, aux1, aux2)
        return out
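The shape comments in `GoogLeNet.__init__` (224 → 112 → 56 → 28 → 14 → 7) depend on `ceil_mode=True` in the max-pooling layers. The following pure-Python sketch reproduces that arithmetic using the output-size rule documented for PyTorch's `Conv2d` and `MaxPool2d` (dilation 1 assumed). The function names `conv_out`, `pool_out` and `stem_and_pool_sizes` are hypothetical helpers, not part of the original listing.

```python
import math


def conv_out(size, kernel, stride=1, padding=0):
    """Spatial output size of a convolution (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


def pool_out(size, kernel, stride, padding=0, ceil_mode=False):
    """Spatial output size of max pooling (dilation 1)."""
    span = size + 2 * padding - kernel
    if not ceil_mode:
        return span // stride + 1
    out = math.ceil(span / stride) + 1
    # With ceil_mode, the last window must still start inside the
    # input or its left padding; otherwise it is dropped.
    if (out - 1) * stride >= size + padding:
        out -= 1
    return out


def stem_and_pool_sizes(size=224, ceil_mode=True):
    """Sizes after conv1 and after each of the four stride-2 max pools."""
    sizes = []
    size = conv_out(size, kernel=7, stride=2, padding=3)  # conv1
    sizes.append(size)
    # conv2 (1x1), conv3 (3x3, padding 1) and the Inception blocks keep
    # the spatial size, so only the pooling layers are traced here.
    for _ in range(4):  # maxpool1 .. maxpool4
        size = pool_out(size, kernel=3, stride=2, ceil_mode=ceil_mode)
        sizes.append(size)
    return sizes


if __name__ == "__main__":
    print("ceil_mode=True :", stem_and_pool_sizes(ceil_mode=True))
    print("ceil_mode=False:", stem_and_pool_sizes(ceil_mode=False))
```

With floor rounding the feature maps come out one pixel smaller at each pool, so the sizes written in the comments would no longer hold. The adaptive average pools would still make the network run, but on different spatial sizes than the ones annotated.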

class Train:

    def __init__(self, lr=0.01, momentum=0.9, weight_decay=0.0001):
        self.net = GoogLeNet()
        self.criterion = nn.CrossEntropyLoss()
        self.opti = torch.optim.SGD(self.net.parameters(),
                                    lr=lr, momentum=momentum,
                                    weight_decay=weight_decay)
        self.gpu()
        self.generate_path()
        self.acc_rates = []
        self.errors = []

    def gpu(self):
        self.device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")
        if torch.cuda.device_count() > 1:
            print("Let's use %d GPUs" % torch.cuda.device_count())
            self.net = nn.DataParallel(self.net)
        self.net = self.net.to(self.device)

    def generate_path(self):
        """
        Generate the paths used to save data.
        :return:
        """
        # exist_ok=True creates each directory independently; with one shared
        # try/except, an existing './paras' would skip the other two
        for folder in ('./paras', './logs', './infos'):
            os.makedirs(folder, exist_ok=True)
        name = self.net.__class__.__name__
        paras = os.listdir('./paras')
        logs = os.listdir('./logs')
        infos = os.listdir('./infos')
        number = max((len(paras), len(logs), len(infos)))
        self.para_path = "./paras/{0}{1}.pt".format(
            name,
            number
        )
        self.log_path = "./logs/{0}{1}.txt".format(
            name,
            number
        )
        self.info_path = "./infos/{0}{1}.npy".format(
            name,
            number
        )

    def log(self, strings):
        """
        Write to the run log.
        :param strings:
        :return:
        """
        # 'a' appends to the end of the file
        with open(self.log_path, 'a', encoding='utf8') as f:
            f.write(strings)

    def save(self):
        """
        Save the network parameters.
        :return:
        """
        torch.save(self.net.state_dict(), self.para_path)

    def derease_lr(self, multi=0.96):
        """
        Decrease the learning rate.
        :param multi:
        :return:
        """
        self.opti.param_groups[0]['lr'] *= multi

    def train(self, trainloder, epochs=50):
        # count the actual samples; len(loader) * batch_size overcounts
        # when the last batch is only partially filled
        data_size = len(trainloder.dataset)
        part = trainloder.batch_size // 2
        for epoch in range(epochs):
            running_loss = 0.
            total_loss = 0.
            acc_count = 0.
            # compare with ==, not `is`: identity of ints is an implementation detail
            if (epoch + 1) % 8 == 0:
                self.derease_lr()
                self.log(  # write to the log
                    "learning rate change!!!\n"
                )
            for i, data in enumerate(trainloder):
                imgs, labels = data
                imgs = imgs.to(self.device)
                labels = labels.to(self.device)
                (out, aux1, aux2) = self.net(imgs)
                loss1 = self.criterion(out, labels)
                loss2 = self.criterion(aux1, labels)
                loss3 = self.criterion(aux2, labels)
                loss = 0.4 * loss1 + 0.3 * loss2 + 0.3 * loss3
                _, pre = torch.max(out, 1)  # predicted class per sample
                acc_count += (pre == labels).sum().item()  # add up the correct predictions
                self.opti.zero_grad()
                loss.backward()
                self.opti.step()

                running_loss += loss.item()
                if (i + 1) % part == 0:
                    strings = "epoch {0:<3} part {1:<5} loss: {2:<.7f}\n".format(
                        epoch, i, running_loss / part
                    )
                    self.log(strings)  # write to the log
                    total_loss += running_loss
                    running_loss = 0.
            self.acc_rates.append(acc_count / data_size)
            self.errors.append(total_loss / data_size)
            self.log(  # write to the log
                "Accuracy of the network on %d train images: %d %%\n" % (
                    data_size, acc_count / data_size * 100
                )
            )
            self.save()  # save the network parameters
        # save some information for plotting
        # (a dict is pickled, so load it with np.load(..., allow_pickle=True))
        np.save(self.info_path, {
            'acc_rates': np.array(self.acc_rates),
            'errors': np.array(self.errors)
        })
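The `train` loop multiplies the learning rate by 0.96 whenever `(epoch + 1) % 8` is zero, which amounts to a step decay. As a rough sketch of what that schedule looks like over a long run, the hypothetical helper `lr_at_epoch` below (not part of the original listing) computes the rate in effect during a given 0-indexed epoch, using the defaults from `Train.__init__` and `derease_lr`.

```python
def lr_at_epoch(epoch, base_lr=0.01, multi=0.96, every=8):
    """Learning rate in effect during `epoch` (0-indexed).

    The decay fires at the start of every epoch where
    (epoch + 1) % every == 0, so epochs 0..6 use base_lr,
    epochs 7..14 use base_lr * multi, and so on.
    """
    num_decays = (epoch + 1) // every
    return base_lr * multi ** num_decays


def simulate(epochs, base_lr=0.01, multi=0.96, every=8):
    """Replay the loop's in-place update to cross-check the closed form."""
    lr = base_lr
    history = []
    for epoch in range(epochs):
        if (epoch + 1) % every == 0:
            lr *= multi
        history.append(lr)
    return history


if __name__ == "__main__":
    for epoch in (0, 7, 15, 99, 999):
        print(epoch, lr_at_epoch(epoch))
```

If the in-place update is not essential, PyTorch's built-in `torch.optim.lr_scheduler.StepLR(optimizer, step_size=8, gamma=0.96)` expresses a similar step decay, although the exact epoch at which each step lands depends on where `scheduler.step()` is called.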

if __name__ == "__main__":

    root = "../../data"

    trainset = torchvision.datasets.CIFAR10(
        root=root, train=True, download=False,
        transform=transforms.Compose([
            transforms.Resize(224),
            transforms.ToTensor(),
            transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5)),
        ]))

    train_loader = torch.utils.data.DataLoader(trainset, batch_size=128,
                                               shuffle=True, num_workers=8,
                                               pin_memory=True)

    dog = Train()
    dog.train(train_loader, epochs=1000)
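The post reports a test-set accuracy, but the listing only contains training code. Below is a minimal sketch of how such a number could be measured; it is an assumption about the procedure, not the author's actual evaluation script, and the function name `evaluate` is hypothetical. It relies on the fact that the `GoogLeNet` above returns only the main logits when the module is in eval mode.

```python
import torch
import torch.nn as nn


@torch.no_grad()  # no gradients are needed for evaluation
def evaluate(net: nn.Module, loader, device: torch.device) -> float:
    """Return the top-1 accuracy of `net` over all batches in `loader`."""
    was_training = net.training
    # eval mode: dropout is disabled, BatchNorm uses running statistics,
    # and the listing's GoogLeNet skips its auxiliary classifiers
    net.eval()
    correct, total = 0, 0
    for imgs, labels in loader:
        imgs, labels = imgs.to(device), labels.to(device)
        out = net(imgs)
        pred = out.argmax(dim=1)
        correct += (pred == labels).sum().item()
        total += labels.size(0)
    net.train(was_training)  # restore the previous mode
    return correct / total
```

With the objects from the listing, a call might look like `evaluate(dog.net, test_loader, dog.device)`, where `test_loader` is a hypothetical `DataLoader` built from `CIFAR10(train=False)` with the same transforms as the training set.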
