spark 笔记 16: BlockManager

/* Class for returning a fetched block and associated metrics. */

private[spark] class BlockResult(

val data: Iterator[Any],

readMethod: DataReadMethod.Value,

bytes: Long) {

val inputMetrics = new InputMetrics(readMethod)

inputMetrics.bytesRead = bytes

}

private[spark] class BlockManager(

executorId: String,

actorSystem: ActorSystem,

val master: BlockManagerMaster,

defaultSerializer: Serializer,

maxMemory: Long,

val conf: SparkConf,

securityManager: SecurityManager,

mapOutputTracker: MapOutputTracker,

shuffleManager: ShuffleManager)

extends BlockDataProvider with Logging {

/**

* Contains all the state related to a particular shuffle. This includes a pool of unused

* ShuffleFileGroups, as well as all ShuffleFileGroups that have been created for the shuffle.

*/

private class ShuffleState(val numBuckets: Int) {

val nextFileId = new AtomicInteger(0)

val unusedFileGroups = new ConcurrentLinkedQueue[ShuffleFileGroup]()

val allFileGroups = new ConcurrentLinkedQueue[ShuffleFileGroup]()

/**

* The mapIds of all map tasks completed on this Executor for this shuffle.

* NB: This is only populated if consolidateShuffleFiles is FALSE. We don't need it otherwise.

*/

val completedMapTasks = new ConcurrentLinkedQueue[Int]()

}

private val shuffleStates = new TimeStampedHashMap[ShuffleId, ShuffleState]

private val metadataCleaner =

new MetadataCleaner(MetadataCleanerType.SHUFFLE_BLOCK_MANAGER, this.cleanup, conf)

/**

* Register a completed map without getting a ShuffleWriterGroup. Used by sort-based shuffle

* because it just writes a single file by itself.

*/

def addCompletedMap(shuffleId: Int, mapId: Int, numBuckets: Int): Unit = {

shuffleStates.putIfAbsent(shuffleId, new ShuffleState(numBuckets))

val shuffleState = shuffleStates(shuffleId)

shuffleState.completedMapTasks.add(mapId)

}

/**

* Initialize the BlockManager. Register to the BlockManagerMaster, and start the

* BlockManagerWorker actor.

*/

private def initialize(): Unit = {

master.registerBlockManager(blockManagerId, maxMemory, slaveActor)

BlockManagerWorker.startBlockManagerWorker(this)

}

/**

* A short-circuited method to get blocks directly from disk. This is used for getting

* shuffle blocks. It is safe to do so without a lock on block info since disk store

* never deletes (recent) items.

*/

def getLocalFromDisk(blockId: BlockId, serializer: Serializer): Option[Iterator[Any]] = {

diskStore.getValues(blockId, serializer).orElse {

throw new BlockException(blockId, s"Block $blockId not found on disk, though it should be")

}

}

/** A group of writers for a ShuffleMapTask, one writer per reducer. */

private[spark] trait ShuffleWriterGroup {

val writers: Array[BlockObjectWriter]

/** @param success Indicates all writes were successful. If false, no blocks will be recorded. */

def releaseWriters(success: Boolean)

}

/**

* Manages assigning disk-based block writers to shuffle tasks. Each shuffle task gets one file

* per reducer (this set of files is called a ShuffleFileGroup).

*

* As an optimization to reduce the number of physical shuffle files produced, multiple shuffle

* blocks are aggregated into the same file. There is one "combined shuffle file" per reducer

* per concurrently executing shuffle task. As soon as a task finishes writing to its shuffle

* files, it releases them for another task.

* Regarding the implementation of this feature, shuffle files are identified by a 3-tuple:

* - shuffleId: The unique id given to the entire shuffle stage.

* - bucketId: The id of the output partition (i.e., reducer id)

* - fileId: The unique id identifying a group of "combined shuffle files." Only one task at a

* time owns a particular fileId, and this id is returned to a pool when the task finishes.

* Each shuffle file is then mapped to a FileSegment, which is a 3-tuple (file, offset, length)

* that specifies where in a given file the actual block data is located.

*

* Shuffle file metadata is stored in a space-efficient manner. Rather than simply mapping

* ShuffleBlockIds directly to FileSegments, each ShuffleFileGroup maintains a list of offsets for

* each block stored in each file. In order to find the location of a shuffle block, we search the

* files within a ShuffleFileGroups associated with the block's reducer.

*/

// TODO: Factor this into a separate class for each ShuffleManager implementation

private[spark]

class ShuffleBlockManager(blockManager: BlockManager,

shuffleManager: ShuffleManager) extends Logging {

private[spark]

object ShuffleBlockManager {

/**

* A group of shuffle files, one per reducer.

* A particular mapper will be assigned a single ShuffleFileGroup to write its output to.

*/

private class ShuffleFileGroup(val shuffleId: Int, val fileId: Int, val files: Array[File]) {

private var numBlocks: Int = 0

/**

* Stores the absolute index of each mapId in the files of this group. For instance,

* if mapId 5 is the first block in each file, mapIdToIndex(5) = 0.

*/

private val mapIdToIndex = new PrimitiveKeyOpenHashMap[Int, Int]()

/**

* Stores consecutive offsets and lengths of blocks into each reducer file, ordered by

* position in the file.

* Note: mapIdToIndex(mapId) returns the index of the mapper into the vector for every

* reducer.

*/

private val blockOffsetsByReducer = Array.fill[PrimitiveVector[Long]](files.length) {

new PrimitiveVector[Long]()

}

private val blockLengthsByReducer = Array.fill[PrimitiveVector[Long]](files.length) {

new PrimitiveVector[Long]()

}

def apply(bucketId: Int) = files(bucketId)

def recordMapOutput(mapId: Int, offsets: Array[Long], lengths: Array[Long]) {

assert(offsets.length == lengths.length)

mapIdToIndex(mapId) = numBlocks

numBlocks += 1

for (i <- 0 until offsets.length) {

blockOffsetsByReducer(i) += offsets(i)

blockLengthsByReducer(i) += lengths(i)

}

}

/** Returns the FileSegment associated with the given map task, or None if no entry exists. */

def getFileSegmentFor(mapId: Int, reducerId: Int): Option[FileSegment] = {

val file = files(reducerId)

val blockOffsets = blockOffsetsByReducer(reducerId)

val blockLengths = blockLengthsByReducer(reducerId)

val index = mapIdToIndex.getOrElse(mapId, -1)

if (index >= 0) {

val offset = blockOffsets(index)

val length = blockLengths(index)

Some(new FileSegment(file, offset, length))

} else {

None

}

}

}

}

spark 笔记 16: BlockManager的更多相关文章

- Ext.Net学习笔记16:Ext.Net GridPanel 折叠/展开行

Ext.Net学习笔记16:Ext.Net GridPanel 折叠/展开行 Ext.Net GridPanel的行支持折叠/展开功能,这个功能个人觉得还说很有用处的,尤其是数据中包含图片等内容的时候 ...

- 安装Hadoop及Spark(Ubuntu 16.04)

安装Hadoop及Spark(Ubuntu 16.04) 安装JDK 下载jdk(以jdk-8u91-linux-x64.tar.gz为例) 新建文件夹 sudo mkdir /usr/lib/jvm ...

- SQL反模式学习笔记16 使用随机数排序

目标:随机排序,使用高效的SQL语句查询获取随机数据样本. 反模式:使用RAND()随机函数 SELECT * FROM Employees AS e ORDER BY RAND() Limit 1 ...

- golang学习笔记16 beego orm 数据库操作

golang学习笔记16 beego orm 数据库操作 beego ORM 是一个强大的 Go 语言 ORM 框架.她的灵感主要来自 Django ORM 和 SQLAlchemy. 目前该框架仍处 ...

- spark的存储系统--BlockManager源码分析

spark的存储系统--BlockManager源码分析 根据之前的一系列分析,我们对spark作业从创建到调度分发,到执行,最后结果回传driver的过程有了一个大概的了解.但是在分析源码的过程中也 ...

- spark笔记 环境配置

spark笔记 spark简介 saprk 有六个核心组件: SparkCore.SparkSQL.SparkStreaming.StructedStreaming.MLlib,Graphx Spar ...

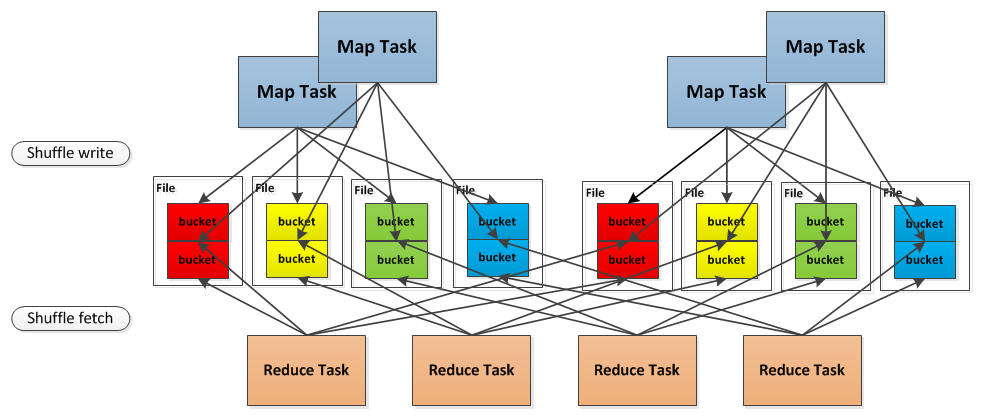

- spark 笔记 15: ShuffleManager,shuffle map两端的stage/task的桥梁

无论是Hadoop还是spark,shuffle操作都是决定其性能的重要因素.在不能减少shuffle的情况下,使用一个好的shuffle管理器也是优化性能的重要手段. ShuffleManager的 ...

- spark 笔记 12: Executor,task最后的归宿

spark的Executor是执行task的容器.和java的executor概念类似. ===================start executor runs task============ ...

- Spark笔记:复杂RDD的API的理解(下)

本篇接着谈谈那些稍微复杂的API. 1) flatMapValues:针对Pair RDD中的每个值应用一个返回迭代器的函数,然后对返回的每个元素都生成一个对应原键的键值对记录 这个方法我最开始接 ...

随机推荐

- luogu P4755 Beautiful Pair

luogu 这题有坨区间最大值,考虑最值分治.分治时每次取出最大值,然后考虑统计跨过这个位置的区间答案,然后两边递归处理.如果之枚举左端点,因为最大值确定,右端点权值要满足\(a_r\le \frac ...

- ccs之经典布局(二)(两栏,三栏布局)

接上篇ccs之经典布局(一)(水平垂直居中) 四.两列布局 单列宽度固定,另一列宽度是自适应. 1.float+overflow:auto; 固定端用float进行浮动,自适应的用overflow:a ...

- X-Forwarded-For伪造及防御

使用x-Forward_for插件或者burpsuit可以改包,伪造任意的IP地址,使一些管理员后台绕过对IP地址限制的访问. 防护策略: 1.对于直接使用的 Web 应用,必须使用从TCP连接中得到 ...

- Redis总结1

一.Redis安装(Linux) 1.在官网上下载Linux版本的Redis(链接https://redis.io/download) 2.在Linux的/usr/local中创建Redis文件夹mk ...

- 免费使用Google

这里需要借助一下`梯子`,这里有教程 点击进入 如果没有谷歌浏览器,进入下载最新版谷歌浏览器,进入下载,不要移动它的安装位置,选择默认位置, 如果已经安装了谷歌浏览器,打开赛风之后,选择设置 进行安装 ...

- Could not determine which “make” command to run. Check the “make” step in the build configuration

环境: QT5.10 VisualStudio2015 错误1: Could not determine which “make” command to run. Check the “make” s ...

- linux工具之pmap

1.pmap简介 pmap命令用来报告一个进程或多个进程的内存映射.可以使用这个工具确定系统是如何为服务器上的进程分配内存的. 例如查看ssh进程的内存映射:

- Python with open 使用技巧

在使用Python处理文件的是,对于文件的处理,都会经过三个步骤:打开文件->操作文件->关闭文件.但在有些时候,我们会忘记把文件关闭,这就无法释放文件的打开句柄.这可能觉得有些麻烦,每次 ...

- npoi c#

没有安装excel docx的情况下 操作excel docx

- 我说CMMI之二:CMMI里有什么?--转载

版权声明:本文为博主原创文章,遵循 CC 4.0 BY-SA 版权协议,转载请附上原文出处链接和本声明.本文链接:https://blog.csdn.net/dylanren/article/deta ...