Paper Reading | Tackling Adversarial Examples in QA via Answer Sentence Selection

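Core Idea

The samples given to a reading-comprehension QA system may contain adversarial examples. When looking for the answer, the method therefore first filters for the sentences likely to contain the answer, and only then performs further inference.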

Method

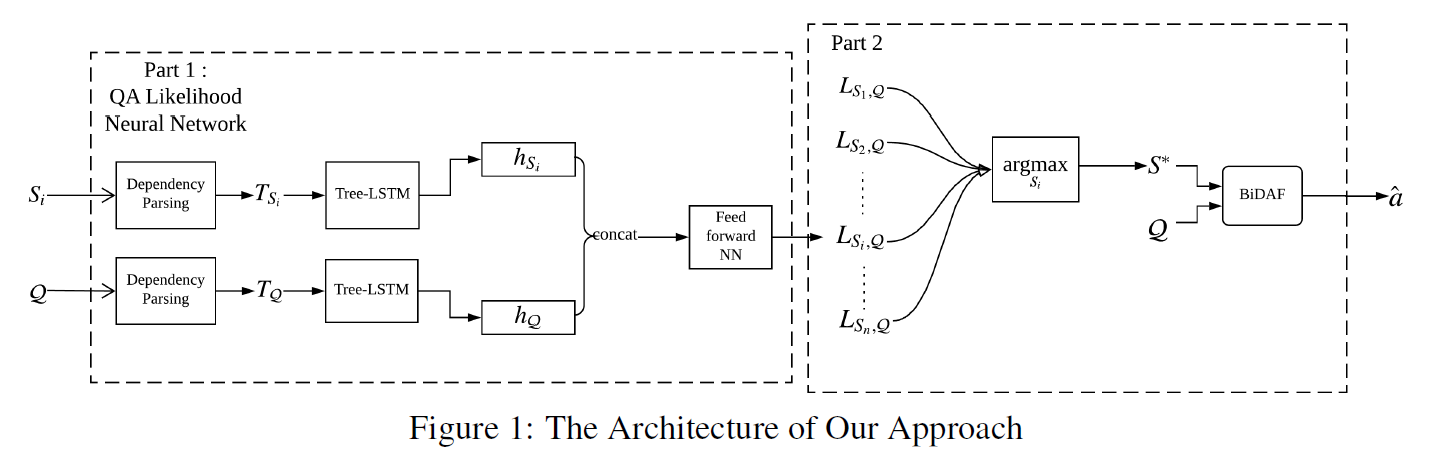

Part 1

Given: a paragraph C and a query Q.

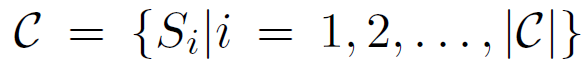

The paragraph C is split into sentences $S_i$.

Each sentence $S_i$ is paired with Q:

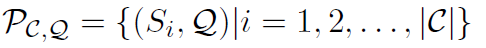

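Dependency parsing is used to obtain the tree representations $T_{S_i}$ and $T_Q$.

Based on $T_{S_i}$ and $T_Q$, two Tree-LSTMs are built: Tree-LSTM$_{S_i}$ and Tree-LSTM$_Q$.

The leaf nodes of both Tree-LSTMs take GloVe word vectors as input.

Their output hidden vectors are $h_{S_i}$ and $h_Q$ respectively.

$h_{S_i}$ and $h_Q$ are concatenated and passed to a feed-forward neural network, which computes the likelihood that $S_i$ contains the answer to Q.

The loss function and the feed-forward network follow the semantic relatedness network.

In supervised training, the label is 1 if $S_i$ contains the answer and 0 otherwise.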
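The scoring step of Part 1 can be sketched as follows. This is only an illustration under several assumptions: the Tree-LSTM encoders are left out (the two hidden vectors are taken as given), the weights are random placeholders, and a sigmoid output with binary cross-entropy stands in for the loss of the semantic relatedness network, which may differ. The names `make_scorer` and `binary_cross_entropy` are hypothetical.

```python
import math
import random


def make_scorer(hidden_dim, ff_dim, seed=0):
    """Build a tiny feed-forward scorer over the concatenation [h_s; h_q]."""
    rng = random.Random(seed)
    in_dim = 2 * hidden_dim
    # Placeholder parameters (assumption): a real model learns these by backpropagation.
    w1 = [[rng.uniform(-0.1, 0.1) for _ in range(in_dim)] for _ in range(ff_dim)]
    b1 = [0.0] * ff_dim
    w2 = [rng.uniform(-0.1, 0.1) for _ in range(ff_dim)]
    b2 = 0.0

    def score(h_s, h_q):
        x = list(h_s) + list(h_q)  # concatenate the two hidden vectors
        hidden = [
            math.tanh(sum(w * v for w, v in zip(row, x)) + b)
            for row, b in zip(w1, b1)
        ]
        logit = sum(w * h for w, h in zip(w2, hidden)) + b2
        return 1.0 / (1.0 + math.exp(-logit))  # likelihood in (0, 1)

    return score


def binary_cross_entropy(p, label):
    """Loss for one pair; label is 1 if the sentence contains the answer, else 0."""
    eps = 1e-12  # avoids log(0)
    return -(label * math.log(p + eps) + (1 - label) * math.log(1 - p + eps))
```

With 0/1 labels, the loss falls as the predicted likelihood for a positive pair approaches 1, which matches the supervision described above.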

Part 2

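Select the sentence most likely to contain the answer:

$$S^* = \arg\max_{S_i} L(S_i, Q)$$

where L denotes the likelihood predicted by the QA Likelihood neural network.

The pair $(S^*, Q)$ is then passed to a pre-trained single BiDAF model (Seo et al., 2016), which generates the answer $\hat{a}$ to Q.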
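The two-stage flow can be sketched in a few lines. Everything here is a stand-in: `split_sentences` is a naive regex splitter, `overlap_likelihood` is a word-overlap placeholder for the learned likelihood L, and `reader` is any callable playing the role of the pre-trained BiDAF model. None of these names come from the paper.

```python
import re


def split_sentences(paragraph):
    """Naive splitter on '.', '!' or '?' followed by whitespace (an assumption)."""
    parts = re.split(r"(?<=[.!?])\s+", paragraph.strip())
    return [p for p in parts if p]


def overlap_likelihood(sentence, query):
    """Placeholder for L(S_i, Q): fraction of query words found in the sentence."""
    s_words = set(re.findall(r"\w+", sentence.lower()))
    q_words = set(re.findall(r"\w+", query.lower()))
    return len(s_words & q_words) / len(q_words) if q_words else 0.0


def select_sentence(paragraph, query, likelihood=overlap_likelihood):
    """Return S*, the sentence with the highest likelihood of answering Q."""
    sentences = split_sentences(paragraph)
    if not sentences:
        raise ValueError("paragraph contains no sentences")
    return max(sentences, key=lambda s: likelihood(s, query))


def answer(paragraph, query, reader, likelihood=overlap_likelihood):
    """Two-stage pipeline: pick S*, then let the reader extract the answer from it."""
    best = select_sentence(paragraph, query, likelihood)
    return reader(best, query)
```

Because the reader only ever sees S*, a distractor sentence elsewhere in the paragraph cannot influence the final answer unless it wins the selection step.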

Experiments

Dataset: sampled from the training set of SQuAD v1.1.

There are 87,599 queries over 18,896 paragraphs in the training set of SQuAD v1.1. Each query refers to one paragraph, while a paragraph may be referred to by multiple queries.

d=87,599 is the number of queries. The set D contains 440,135 sentence pairs, among which 87,306 are positive instances and 352,829 are negative instances.

Positive instance: a sentence pair in which the former (the sentence) contains the answer to the latter (the query).
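As a rough illustration of how such labels could be produced, the sketch below marks a pair as positive when the answer text occurs in the sentence. Plain substring matching is an assumption made for brevity, and `build_pairs` is a hypothetical helper, not the paper's preprocessing code.

```python
def build_pairs(sentences, query, answer_text):
    """Label each (sentence, query) pair: 1 if the sentence contains the answer, else 0."""
    return [(s, query, int(answer_text in s)) for s in sentences]
```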

Two sampling methods are used: pair-level sampling and paragraph-level sampling.

1. In pair-level sampling, 45,000 positive instances and 45,000 negative instances are randomly selected from D as the training set.

2. In paragraph-level sampling, a query $Q_k$ is first chosen at random, and then one positive instance and one negative instance are randomly sampled from its set of sentence pairs $D_k$.

Each set has 90,000 instances. The validation set of 3,000 instances is sampled through these two methods as well.
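The two sampling schemes can be sketched as below. The dictionary layout of an instance (a `"label"` key), sampling queries with replacement, and skipping queries that lack either a positive or a negative instance are all assumptions; the notes do not specify these details.

```python
import random


def pair_level_sample(pairs, n_pos, n_neg, rng):
    """Draw n_pos positive and n_neg negative instances from the whole set D."""
    positives = [p for p in pairs if p["label"] == 1]
    negatives = [p for p in pairs if p["label"] == 0]
    return rng.sample(positives, n_pos) + rng.sample(negatives, n_neg)


def paragraph_level_sample(pairs_by_query, n_rounds, rng):
    """Each round: pick a random query Q_k, then one positive and one negative from D_k."""
    # Only queries that have both kinds of instance can be drawn (an assumption).
    eligible = [
        k for k, d_k in pairs_by_query.items()
        if any(p["label"] == 1 for p in d_k) and any(p["label"] == 0 for p in d_k)
    ]
    sample = []
    for _ in range(n_rounds):
        d_k = pairs_by_query[rng.choice(eligible)]
        sample.append(rng.choice([p for p in d_k if p["label"] == 1]))
        sample.append(rng.choice([p for p in d_k if p["label"] == 0]))
    return sample
```

Under this sketch, 45,000 rounds of paragraph-level sampling would yield 90,000 instances, the same size as the pair-level set.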

Test set: the ADDANY adversarial dataset, which has 1,000 paragraphs, each referring to only one query. By splitting and combining, 6,154 sentence pairs are obtained.

Experimental settings: The dimension of GloVe word vectors (Pennington et al., 2014) is set to 300. The sentence scoring neural network is trained by Adagrad (Duchi et al., 2011) with a learning rate of 0.01 and a batch size of 25. Model parameters are regularized by per-minibatch L2 regularization with a strength of $10^{-4}$.
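For readers unfamiliar with Adagrad, a single update step with the hyperparameters above might look like this. It is a plain-Python sketch over a flat list of parameters; folding the L2 term into the gradient is an assumption about how the regularization is applied, and `adagrad_step` is a hypothetical name.

```python
import math


def adagrad_step(params, grads, accum, lr=0.01, l2=1e-4, eps=1e-10):
    """One Adagrad update with an L2 penalty folded into the gradient."""
    for i, (theta, g) in enumerate(zip(params, grads)):
        g = g + l2 * theta   # gradient of (l2 / 2) * theta ** 2
        accum[i] += g * g    # running sum of squared gradients
        params[i] = theta - lr * g / (math.sqrt(accum[i]) + eps)
    return params, accum
```

On the first step the accumulated square equals the squared gradient, so each parameter moves by roughly the learning rate regardless of the gradient's magnitude; later steps shrink as the accumulator grows.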

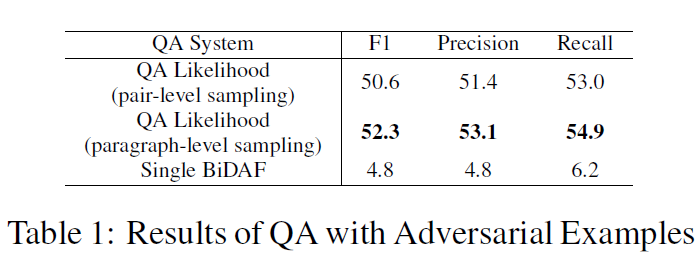

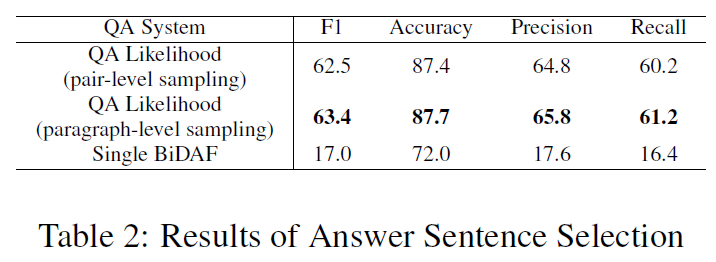

Results

Evaluation metric: Macro-averaged F1 score (Rajpurkar et al., 2016; Jia and Liang, 2017).
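A simplified version of this metric is sketched below: a token-overlap F1 is computed per question (taking the best score over the reference answers) and then averaged over questions. The official SQuAD evaluation script additionally strips punctuation and articles before comparing, which is omitted here, so the numbers are not directly comparable.

```python
from collections import Counter


def token_f1(prediction, truth):
    """Token-overlap F1 between a predicted answer and one reference answer."""
    pred_tokens = prediction.lower().split()
    true_tokens = truth.lower().split()
    common = Counter(pred_tokens) & Counter(true_tokens)
    overlap = sum(common.values())
    if overlap == 0:
        return 0.0
    precision = overlap / len(pred_tokens)
    recall = overlap / len(true_tokens)
    return 2 * precision * recall / (precision + recall)


def macro_f1(predictions, references):
    """Average over questions of the best F1 against any reference answer."""
    scores = [
        max(token_f1(p, r) for r in refs)
        for p, refs in zip(predictions, references)
    ]
    return sum(scores) / len(scores)
```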

Table 2 can be understood as a binary classification problem.

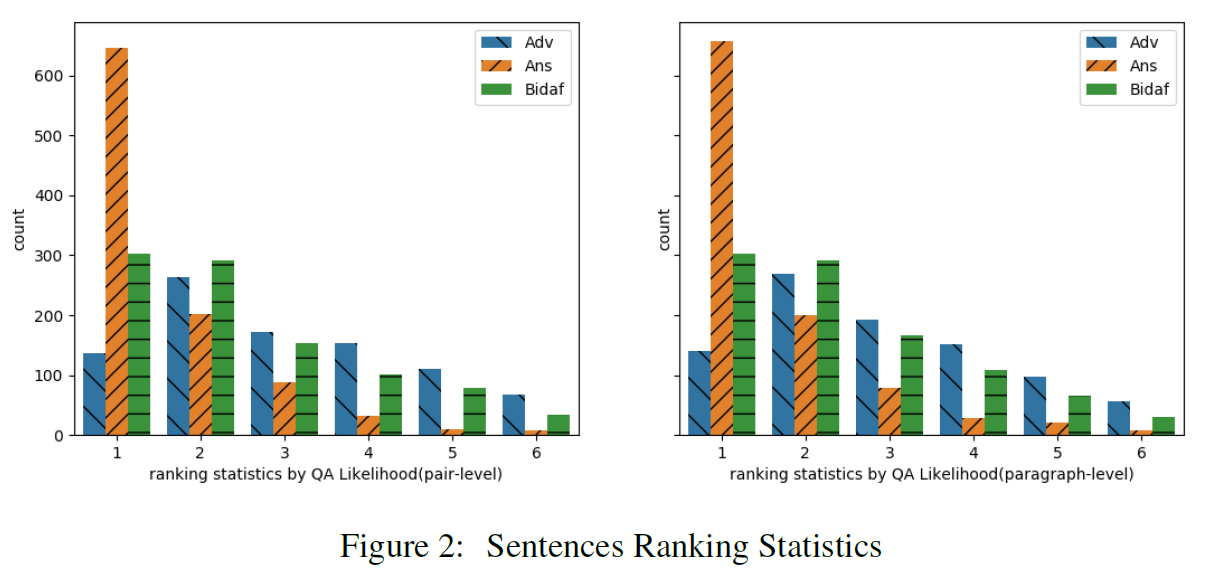

Consider three types of sentences: adversarial sentences, answer sentences, and the sentences that include the answers returned by the single BiDAF system.

The x-axis denotes the ranked position of each sentence according to its likelihood score, while the y-axis is the number of sentences of each type ranked at this position.

It shows that among the 1,000 (C;Q) pairs, 647 and 657 answer sentences are selected by the QA Likelihood neural network based on pair-level sampling and paragraph-level sampling respectively, but only 136 and 141 adversarial sentences are selected by the QA Likelihood neural network.

Conclusion

Experiments on ADDSENT were not done.
