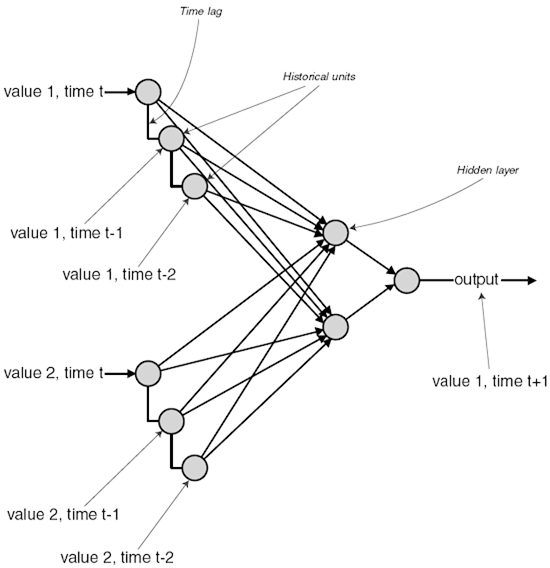

Elman network with additional notes

// Author: John McCullock

// Date: 10-15-05

// Description: Elman Network Example 1.

//http://www.mnemstudio.org/neural-networks-elman.htm

#include <iostream>
#include <iomanip>
#include <cmath>
#include <string>
#include <ctime>
#include <cstdlib>

using namespace std;

const int maxTests = 10000;
const int maxSamples = 4;

const int inputNeurons = 6;
const int hiddenNeurons = 3;
const int outputNeurons = 6;
const int contextNeurons = 3;

const double learnRate = 0.2; //Rho.
const int trainingReps = 2000;

//beVector is the symbol used to start or end a sequence.

double beVector[inputNeurons] = {1.0, 0.0, 0.0, 0.0, 0.0, 0.0};

//                                      0    1    2    3    4    5
double sampleInput[3][inputNeurons] = {{0.0, 0.0, 0.0, 1.0, 0.0, 0.0},
                                       {0.0, 0.0, 0.0, 0.0, 0.0, 1.0},
                                       {0.0, 0.0, 1.0, 0.0, 0.0, 0.0}};

//Input to Hidden weights (with biases).
double wih[inputNeurons + 1][hiddenNeurons];

//Context to Hidden weights (with biases).
double wch[contextNeurons + 1][hiddenNeurons];

//Hidden to Output weights (with biases).
double who[hiddenNeurons + 1][outputNeurons];

//Hidden to Context weights (no biases).
double whc[outputNeurons + 1][contextNeurons];

//Activations.

double inputs[inputNeurons];
double hidden[hiddenNeurons];
double target[outputNeurons];
double actual[outputNeurons];
double context[contextNeurons];

//Unit errors.
double erro[outputNeurons];
double errh[hiddenNeurons];

void ElmanNetwork();

void testNetwork();
void feedForward();
void backPropagate();
void assignRandomWeights();
int getRandomNumber();
double sigmoid(double val);
double sigmoidDerivative(double val);

int main(){
    cout << fixed << setprecision(3) << endl; //Format all the output.
    srand((unsigned)time(0)); //Seed random number generator with system time.

    ElmanNetwork();
    testNetwork();

    return 0;
}

void ElmanNetwork(){

    double err;
    int sample = 0;
    int iterations = 0;
    bool stopLoop = false;

    assignRandomWeights();

    //Train the network.
    do {
        if(sample == 0){
            for(int i = 0; i <= (inputNeurons - 1); i++){
                inputs[i] = beVector[i];
            } // i
        } else {
            for(int i = 0; i <= (inputNeurons - 1); i++){
                inputs[i] = sampleInput[sample - 1][i];
            } // i
        }

        //After the samples are entered into the input units, the samples are
        //then offset by one and entered into target-output units for
        //later comparison.
        if(sample == maxSamples - 1){
            for(int i = 0; i <= (inputNeurons - 1); i++){
                target[i] = beVector[i];
            } // i
        } else {
            for(int i = 0; i <= (inputNeurons - 1); i++){
                target[i] = sampleInput[sample][i];
            } // i
        }

        feedForward();

        //Half the sum of squared errors. The original called sqrt() on the raw
        //difference, which yields NaN whenever actual exceeds target.
        //The value is computed for reference only; it does not stop training.
        err = 0.0;
        for(int i = 0; i <= (outputNeurons - 1); i++){
            err += (target[i] - actual[i]) * (target[i] - actual[i]);
        } // i
        err = 0.5 * err;

        if(iterations > trainingReps){
            stopLoop = true;
        }
        iterations += 1;

        backPropagate();

        sample += 1;
        if(sample == maxSamples){
            sample = 0;
        }
    } while(stopLoop == false);

    cout << "Iterations = " << iterations << endl;
}

void testNetwork(){

    int index;
    int randomNumber, predicted;
    bool stopTest, stopSample, successful;

    //Test the network with random input patterns.
    stopTest = false;
    for(int test = 0; test <= maxTests; test++){
        //Enter Beginning string.
        inputs[0] = 1.0;
        inputs[1] = 0.0;
        inputs[2] = 0.0;
        inputs[3] = 0.0;
        inputs[4] = 0.0;
        inputs[5] = 0.0;
        cout << "(0) ";

        feedForward();

        stopSample = false;
        successful = false;
        index = 0; //Note: after a failure, index starts from 0 again.

        /* The nature of this kind of recurrent network is easier to understand (at least to me)
           simply by referring to each unit's position in serial order (i.e., Y0, Y1, Y2, Y3, ...).
           So for the purpose of this illustration, I'll just use strings of numbers like 0, 3, 5, 2, 0,
           where 0 refers to Y0, 3 refers to Y3, 5 refers to Y5, etc. Each string begins and ends
           with a terminal symbol; I'll use 0 for this example. */
        randomNumber = 0;
        predicted = 0;

        do {
            for(int i = 0; i <= 5; i++){
                cout << actual[i] << " ";
                if(actual[i] >= 0.3){
                    //The output unit with the highest value (usually over 0.3)
                    //is the network's predicted unit that it expects to appear
                    //in the next input vector.
                    //For example, if the 3rd output unit has the highest value,
                    //the network expects the 3rd unit in the next input to
                    //be 1.0.
                    //If the actual value isn't what it expected, the random
                    //sequence has failed, and a new test sequence begins.
                    //Note: this loop keeps the last unit at or above 0.3,
                    //which is assumed to be the highest.
                    predicted = i;
                }
            } // i
            cout << "\n";

            randomNumber = getRandomNumber(); //Enter a random letter.

            index += 1; //Increment to the next position.
            if(index == 5){
                stopSample = true;
            } else {
                cout << "(" << randomNumber << ") ";
            }

            for(int i = 0; i <= 5; i++){
                if(i == randomNumber){ //Note: success requires i == randomNumber and i == predicted.
                    inputs[i] = 1.0;
                    if(i == predicted){
                        successful = true;
                        //for(int k=0;k<5;k++)//have a look;
                        // cout<<"\nTang :the sequence is:"<<inputs[k]<<'\t';
                        //cout<<endl;
                    } else {
                        //Failure. Stop this sample and try a new sample.
                        stopSample = true;
                    }
                } else {
                    inputs[i] = 0.0;
                }
            } // i

            feedForward();
        } while(stopSample == false); //Enter another letter into this sample sequence.

        if((index > 4) && (successful == true)){
            //Note: testing stops once a random sequence has been matched through all 5 positions.
            //If the random sequence happens to be in the correct order,
            //the network reports success.
            cout << "Success." << endl;
            cout << "Completed " << test << " tests." << endl;
            stopTest = true;
            break;
        } else {
            cout << "Failed." << endl;
            //The original tested (test > maxTests), which can never be true inside this loop.
            if(test >= maxTests){
                stopTest = true;
                cout << "Completed " << test << " tests with no success." << endl;
                break;
            }
        }
    } // Test
}

void feedForward(){

    double sum;

    //Calculate input and context connections to hidden layer.
    for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
        sum = 0.0;
        //from input to hidden...
        for(int inp = 0; inp <= (inputNeurons - 1); inp++){
            sum += inputs[inp] * wih[inp][hid];
        } // inp
        //from context to hidden...
        for(int con = 0; con <= (contextNeurons - 1); con++){
            sum += context[con] * wch[con][hid];
        } // con
        //Add in bias.
        sum += wih[inputNeurons][hid];
        sum += wch[contextNeurons][hid];
        hidden[hid] = sigmoid(sum);
    } // hid

    //Calculate the hidden to output layer.
    for(int out = 0; out <= (outputNeurons - 1); out++){
        sum = 0.0;
        for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
            sum += hidden[hid] * who[hid][out];
        } // hid
        //Add in bias.
        sum += who[hiddenNeurons][out];
        actual[out] = sigmoid(sum);
    } // out

    //Copy outputs of the hidden to context layer.
    for(int con = 0; con <= (contextNeurons - 1); con++){
        context[con] = hidden[con];
    } // con
}

void backPropagate(){
    //Calculate the output layer error (step 3 for output cell).

    for(int out = 0; out <= (outputNeurons - 1); out++){
        erro[out] = (target[out] - actual[out]) * sigmoidDerivative(actual[out]);
    } // out

    //Calculate the hidden layer error (step 3 for hidden cell).
    for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
        errh[hid] = 0.0;
        for(int out = 0; out <= (outputNeurons - 1); out++){
            errh[hid] += erro[out] * who[hid][out];
        } // out
        errh[hid] *= sigmoidDerivative(hidden[hid]);
    } // hid

    //Update the weights for the output layer (step 4).
    for(int out = 0; out <= (outputNeurons - 1); out++){
        for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
            who[hid][out] += (learnRate * erro[out] * hidden[hid]);
        } // hid
        //Update the bias.
        who[hiddenNeurons][out] += (learnRate * erro[out]);
    } // out

    //Update the weights for the hidden layer (step 4).
    //Note: the context-to-hidden weights (wch) are not updated in this listing.
    for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
        for(int inp = 0; inp <= (inputNeurons - 1); inp++){
            wih[inp][hid] += (learnRate * errh[hid] * inputs[inp]);
        } // inp
        //Update the bias.
        wih[inputNeurons][hid] += (learnRate * errh[hid]);
    } // hid
}

void assignRandomWeights(){
    for(int inp = 0; inp <= inputNeurons; inp++){

        for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
            //Assign a random weight value between -0.5 and 0.5
            wih[inp][hid] = -0.5 + double(rand()/(RAND_MAX + 1.0));
        } // hid
    } // inp

    for(int con = 0; con <= contextNeurons; con++){
        for(int hid = 0; hid <= (hiddenNeurons - 1); hid++){
            //Assign a random weight value between -0.5 and 0.5
            wch[con][hid] = -0.5 + double(rand()/(RAND_MAX + 1.0));
        } // hid
    } // con

    for(int hid = 0; hid <= hiddenNeurons; hid++){
        for(int out = 0; out <= (outputNeurons - 1); out++){
            //Assign a random weight value between -0.5 and 0.5
            who[hid][out] = -0.5 + double(rand()/(RAND_MAX + 1.0));
        } // out
    } // hid

    for(int out = 0; out <= outputNeurons; out++){
        for(int con = 0; con <= (contextNeurons - 1); con++){
            //These are all fixed weights set to 0.5
            whc[out][con] = 0.5;
        } // con
    } // out
}

int getRandomNumber(){
    //Generate a random integer from 0 to 5 (one per input unit).
    //Multiplying by 6.0 keeps the arithmetic in double, so 6*rand()
    //cannot overflow int when RAND_MAX is large.
    return int(6.0 * rand() / (RAND_MAX + 1.0));
}

double sigmoid(double val){
    return (1.0 / (1.0 + exp(-val)));
}

double sigmoidDerivative(double val){
    //val is expected to be a sigmoid output, not the raw weighted sum.
    return (val * (1.0 - val));
}
