OpenACC Asynchronous Computation

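▶ Following the example in the book, use the async clause to run host and device computation asynchronously

● Code. For the synchronous version, delete every async clause and the `#pragma acc wait` line (line 29 of the original listing)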

    #include <stdio.h>
    #include <stdlib.h>
    #include <openacc.h>

    #define N 10240000
    #define COUNT 200   // repeat the work several times to lengthen the run time

    int main()
    {
        int *a = (int *)malloc(sizeof(int) * N);
        int *b = (int *)malloc(sizeof(int) * N);
        int *c = (int *)malloc(sizeof(int) * N);

    #pragma acc enter data create(a[0:N]) async   // fill a on the device
        for (int i = 0; i < COUNT; i++)
        {
    #pragma acc parallel loop async
            for (int j = 0; j < N; j++)
                a[j] = (i + j) * 2;   // multiplier missing from the source listing; 2 is assumed
        }

        for (int i = 0; i < COUNT; i++)   // fill b on the host
        {
            for (int j = 0; j < N; j++)
                b[j] = (i + j) * 2;   // same assumed multiplier
        }

    #pragma acc update host(a[0:N]) async   // with async, a must be copied back, or it enters the sum below before it is synchronized
    #pragma acc wait                        // remove this line in the synchronous version
        for (int i = 0; i < N; i++)
            c[i] = a[i] + b[i];

    #pragma acc update device(a[0:N]) async   // serves no purpose, only adds run time
    #pragma acc exit data delete(a[0:N])
        printf("\nc[1] = %d\n", c[1]);
        free(a);
        free(b);
        free(c);
        //getchar();
        return 0;
    }
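The listing above blocks the host at `#pragma acc wait`. Where the host has more work to do, polling is an alternative. The following is a minimal sketch, not the book's method: the function name `fill_and_poll` is hypothetical, the array contents are arbitrary, and it assumes the standard runtime call `acc_async_test_all()` from `openacc.h`. Without an OpenACC compiler the pragmas are ignored and the loop simply runs on the host.

```c
#include <stdio.h>
#ifdef _OPENACC
#include <openacc.h>
#endif

/* Hypothetical helper: fill a[0:n] on the device asynchronously and count
   how many times the host polls before the device work has finished.
   Returns the poll count (0 when compiled without OpenACC). */
long fill_and_poll(int *a, int n)
{
    long polls = 0;

#pragma acc enter data create(a[0:n])
#pragma acc parallel loop present(a[0:n]) async
    for (int j = 0; j < n; j++)
        a[j] = j * 2;

#ifdef _OPENACC
    /* acc_async_test_all() returns nonzero once all async queues are idle;
       useful host work could be done inside this loop. */
    while (!acc_async_test_all())
        polls++;
#endif

#pragma acc update host(a[0:n]) async
#pragma acc wait   /* the copy back must finish before the host reads a */
#pragma acc exit data delete(a[0:n])
    return polls;
}
```

Calling `fill_and_poll(buf, 8)` on an 8-element buffer leaves `buf[j] == 2 * j`; the returned poll count depends on how long the device takes.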

● Output (with or without async, the only differences are in the line numbers and the timings)

//+----------------------------------------------------------------------------- synchronous

D:\Code\OpenACC\OpenACCProject\OpenACCProject>pgcc main.c -acc -Minfo -o main_acc.exe

main:

, Generating enter data create(a[:])

, Accelerator kernel generated

Generating Tesla code

, #pragma acc loop gang, vector(128) /* blockIdx.x threadIdx.x */

, Generating implicit copyout(a[:])

, Generating update self(a[:])

, Generating update device(a[:])

Generating exit data delete(a[:])

D:\Code\OpenACC\OpenACCProject\OpenACCProject>main_acc.exe

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line= device= threadid= queue= num_gangs= num_workers= vector_length= grid= block=

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line= device= threadid= queue= num_gangs= num_workers= vector_length= grid= block=

... // omitted

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line= device= threadid= queue= num_gangs= num_workers= vector_length= grid= block=

c[] =

PGI: "acc_shutdown" not detected, performance results might be incomplete.

Please add the call "acc_shutdown(acc_device_nvidia)" to the end of your application to ensure that the performance results are complete.

Accelerator Kernel Timing data

D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c

main NVIDIA devicenum=

time(us): ,

: data region reached time

: compute region reached times

: kernel launched times

grid: [] block: []

elapsed time(us): total=, max= min= avg=

: data region reached times

: update directive reached time

: data copyout transfers:

device time(us): total=, max=, min= avg=,

: update directive reached time

: data copyin transfers:

device time(us): total=, max=, min= avg=,

: data region reached time

//------------------------------------------------------------------------------ asynchronous

D:\Code\OpenACC\OpenACCProject\OpenACCProject>pgcc main.c -acc -Minfo -o main_acc.exe

main:

, Generating enter data create(a[:])

, Accelerator kernel generated

Generating Tesla code

, #pragma acc loop gang, vector(128) /* blockIdx.x threadIdx.x */

, Generating implicit copyout(a[:])

, Generating update self(a[:])

, Generating update device(a[:])

Generating exit data delete(a[:])

D:\Code\OpenACC\OpenACCProject\OpenACCProject>main_acc.exe

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line= device= threadid= queue= num_gangs= num_workers= vector_length= grid= block=

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line= device= threadid= queue= num_gangs= num_workers= vector_length= grid= block=

... // omitted

launch CUDA kernel file=D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c function=main

line= device= threadid= queue= num_gangs= num_workers= vector_length= grid= block=

c[] =

PGI: "acc_shutdown" not detected, performance results might be incomplete.

Please add the call "acc_shutdown(acc_device_nvidia)" to the end of your application to ensure that the performance results are complete.

Accelerator Kernel Timing data

Timing may be affected by asynchronous behavior

set PGI_ACC_SYNCHRONOUS to to disable async() clauses

D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c

main NVIDIA devicenum=

time(us): ,

: data region reached time

: compute region reached times

: kernel launched times

grid: [] block: []

elapsed time(us): total=, max= min= avg=

: data region reached times

: update directive reached time

: data copyout transfers:

device time(us): total=, max=, min= avg=,

: update directive reached time

: data copyin transfers:

device time(us): total=, max=, min= avg=,

: data region reached time
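Both runs print the PGI notice asking for `acc_shutdown(acc_device_nvidia)` at the end of the application. A minimal sketch of one way to follow that advice is shown here. The wrapper name `shutdown_accelerator` is hypothetical, and the device-count check is an extra precaution, not something the notice requires.

```c
#ifdef _OPENACC
#include <openacc.h>
#endif

/* Hypothetical wrapper: shut the accelerator runtime down so that the
   profiler can flush complete timing data. Returns 1 if acc_shutdown was
   called, 0 if there was nothing to shut down (no OpenACC, or no device). */
int shutdown_accelerator(void)
{
#ifdef _OPENACC
    if (acc_get_num_devices(acc_device_nvidia) > 0) {
        acc_shutdown(acc_device_nvidia);
        return 1;
    }
#endif
    return 0;
}
```

In the programs in this post, the call would go just before `return` in `main`, after the last `exit data` directive.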

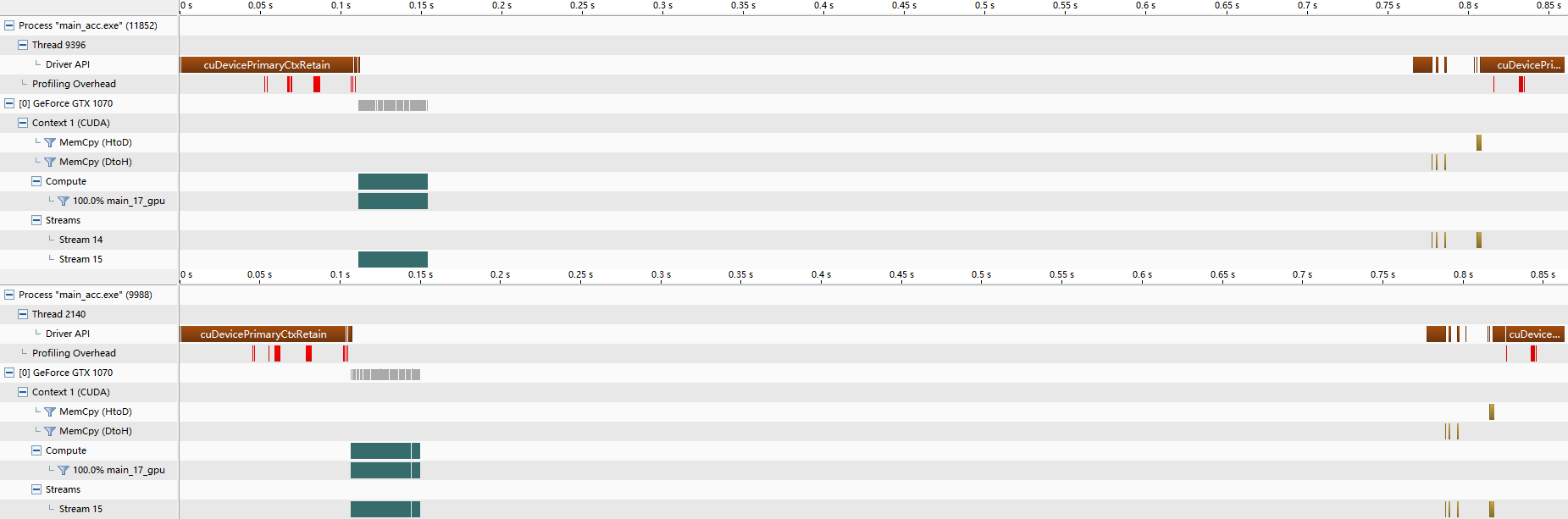

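● In the Nvvp (NVIDIA Visual Profiler) results I honestly could not see any significant difference; the example may simply not be a good one

● Using two command queues at the same time on one device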

    #include <stdio.h>
    #include <stdlib.h>
    #include <openacc.h>

    #define N 10240000
    #define COUNT 200

    int main()
    {
        int *a = (int *)malloc(sizeof(int) * N);
        int *b = (int *)malloc(sizeof(int) * N);
        int *c = (int *)malloc(sizeof(int) * N);

    #pragma acc enter data create(a[0:N]) async(1)
        for (int i = 0; i < COUNT; i++)
        {
    #pragma acc parallel loop async(1)
            for (int j = 0; j < N; j++)
                a[j] = (i + j) * 2;   // multiplier missing from the source listing; 2 is assumed
        }

    #pragma acc enter data create(b[0:N]) async(2)
        for (int i = 0; i < COUNT; i++)
        {
    #pragma acc parallel loop async(2)
            for (int j = 0; j < N; j++)
                b[j] = (i + j) * 2;   // same assumed multiplier
        }

    #pragma acc enter data create(c[0:N]) async(2)
    #pragma acc wait(1) async(2)   // queue 2 waits for queue 1 without blocking the host
    #pragma acc parallel loop async(2)
        for (int i = 0; i < N; i++)
            c[i] = a[i] + b[i];

    #pragma acc update host(c[0:N]) async(2)
    #pragma acc wait   // c must have arrived on the host before it is read and the device copies are freed
    #pragma acc exit data delete(a[0:N], b[0:N], c[0:N])
        printf("\nc[1] = %d\n", c[1]);
        free(a);
        free(b);
        free(c);
        //getchar();
        return 0;
    }
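The cross-queue dependency in the listing above (`wait(1) async(2)`) can be isolated in a smaller function. This is a sketch under assumptions: the name `add_on_two_queues` and the fill values are hypothetical, and it uses a structured `data` region where the post uses `enter data` / `exit data`. Compiled without OpenACC, the pragmas are ignored and the loops run in order on the host, giving the same `c`.

```c
#include <stdio.h>

/* Hypothetical helper: build a on queue 1 and b on queue 2, then compute
   c = a + b on queue 2 once queue 1 has finished. Only c is copied back;
   on a real device the host copies of a and b are left untouched. */
void add_on_two_queues(int *a, int *b, int *c, int n)
{
#pragma acc data create(a[0:n], b[0:n]) copyout(c[0:n])
    {
#pragma acc parallel loop async(1)
        for (int k = 0; k < n; k++)
            a[k] = k;

#pragma acc parallel loop async(2)
        for (int k = 0; k < n; k++)
            b[k] = 2 * k;

        /* Make queue 2 depend on queue 1; the host thread is not blocked. */
#pragma acc wait(1) async(2)
#pragma acc parallel loop async(2)
        for (int k = 0; k < n; k++)
            c[k] = a[k] + b[k];

        /* Drain both queues before the region ends and c is copied out. */
#pragma acc wait
    }
}
```

With these fill values every element satisfies `c[k] == 3 * k`, which gives a quick check that the dependency between the queues was honored.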

● Output

D:\Code\OpenACC\OpenACCProject\OpenACCProject>pgcc main.c -acc -Minfo -o main_acc.exe

main:

, Generating enter data create(a[:])

, Accelerator kernel generated

Generating Tesla code

, #pragma acc loop gang, vector(128) /* blockIdx.x threadIdx.x */

, Generating implicit copyout(a[:])

, Generating enter data create(b[:])

, Accelerator kernel generated

Generating Tesla code

, #pragma acc loop gang, vector(128) /* blockIdx.x threadIdx.x */

, Generating implicit copyout(b[:])

, Generating enter data create(c[:])

, Accelerator kernel generated

Generating Tesla code

, #pragma acc loop gang, vector(128) /* blockIdx.x threadIdx.x */

, Generating implicit copyout(c[:])

Generating implicit copyin(b[:],a[:])

, Generating update self(c[:])

Generating exit data delete(c[:],b[:],a[:])

D:\Code\OpenACC\OpenACCProject\OpenACCProject>main_acc.exe

c[] =

PGI: "acc_shutdown" not detected, performance results might be incomplete.

Please add the call "acc_shutdown(acc_device_nvidia)" to the end of your application to ensure that the performance results are complete.

Accelerator Kernel Timing data

Timing may be affected by asynchronous behavior

set PGI_ACC_SYNCHRONOUS to to disable async() clauses

D:\Code\OpenACC\OpenACCProject\OpenACCProject\main.c

main NVIDIA devicenum=

time(us): ,

: data region reached time

: compute region reached times

: kernel launched times

grid: [] block: []

elapsed time(us): total=, max= min= avg=

: data region reached times

: data region reached time

: compute region reached times

: kernel launched times

grid: [] block: []

elapsed time(us): total=, max= min= avg=

: data region reached times

: data region reached time

: compute region reached time

: kernel launched time

grid: [] block: []

device time(us): total= max= min= avg=

: data region reached times

: update directive reached time

: data copyout transfers:

device time(us): total=, max=, min= avg=,

: data region reached time

● In Nvvp, the two command queues can be seen executing alternately

● At the PGI command line, the pgaccelinfo command shows the device information

D:\Code\OpenACC\OpenACCProject\OpenACCProject>pgaccelinfo

CUDA Driver Version:

Device Number:

Device Name: GeForce GTX

Device Revision Number: 6.1

Global Memory Size:

Number of Multiprocessors:

Concurrent Copy and Execution: Yes

Total Constant Memory:

Total Shared Memory per Block:

Registers per Block:

Warp Size:

Maximum Threads per Block:

Maximum Block Dimensions: , ,

Maximum Grid Dimensions: x x

Maximum Memory Pitch: 2147483647B

Texture Alignment: 512B

Clock Rate: MHz

Execution Timeout: Yes

Integrated Device: No

Can Map Host Memory: Yes

Compute Mode: default

Concurrent Kernels: Yes

ECC Enabled: No

Memory Clock Rate: MHz

Memory Bus Width: bits

L2 Cache Size: bytes

Max Threads Per SMP:

Async Engines: // there are two async engines, which supports running two command queues in parallel

Unified Addressing: Yes

Managed Memory: Yes

Concurrent Managed Memory: No

PGI Compiler Option: -ta=tesla:cc60
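A small part of what pgaccelinfo reports can also be queried from inside a program. The sketch below is an illustration, not a replacement for the tool: `count_nvidia_devices` and `print_device_summary` are hypothetical names, and only the standard runtime call `acc_get_num_devices()` is used. Details such as the number of async engines are not available through this call.

```c
#include <stdio.h>
#ifdef _OPENACC
#include <openacc.h>
#endif

/* Hypothetical helper: number of NVIDIA devices visible to the OpenACC
   runtime, or 0 when the program was compiled without OpenACC. */
int count_nvidia_devices(void)
{
#ifdef _OPENACC
    return acc_get_num_devices(acc_device_nvidia);
#else
    return 0;
#endif
}

/* Hypothetical helper: print a one-line summary of the count above. */
void print_device_summary(void)
{
    printf("NVIDIA devices visible to OpenACC: %d\n", count_nvidia_devices());
}
```

A program can call `print_device_summary()` at startup to confirm that a device is present before issuing any `async` work.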
