Scrapy Architecture overview--官方文档

原文地址:https://doc.scrapy.org/en/latest/topics/architecture.html

This document describes the architecture of Scrapy and how its components interact.

Overview

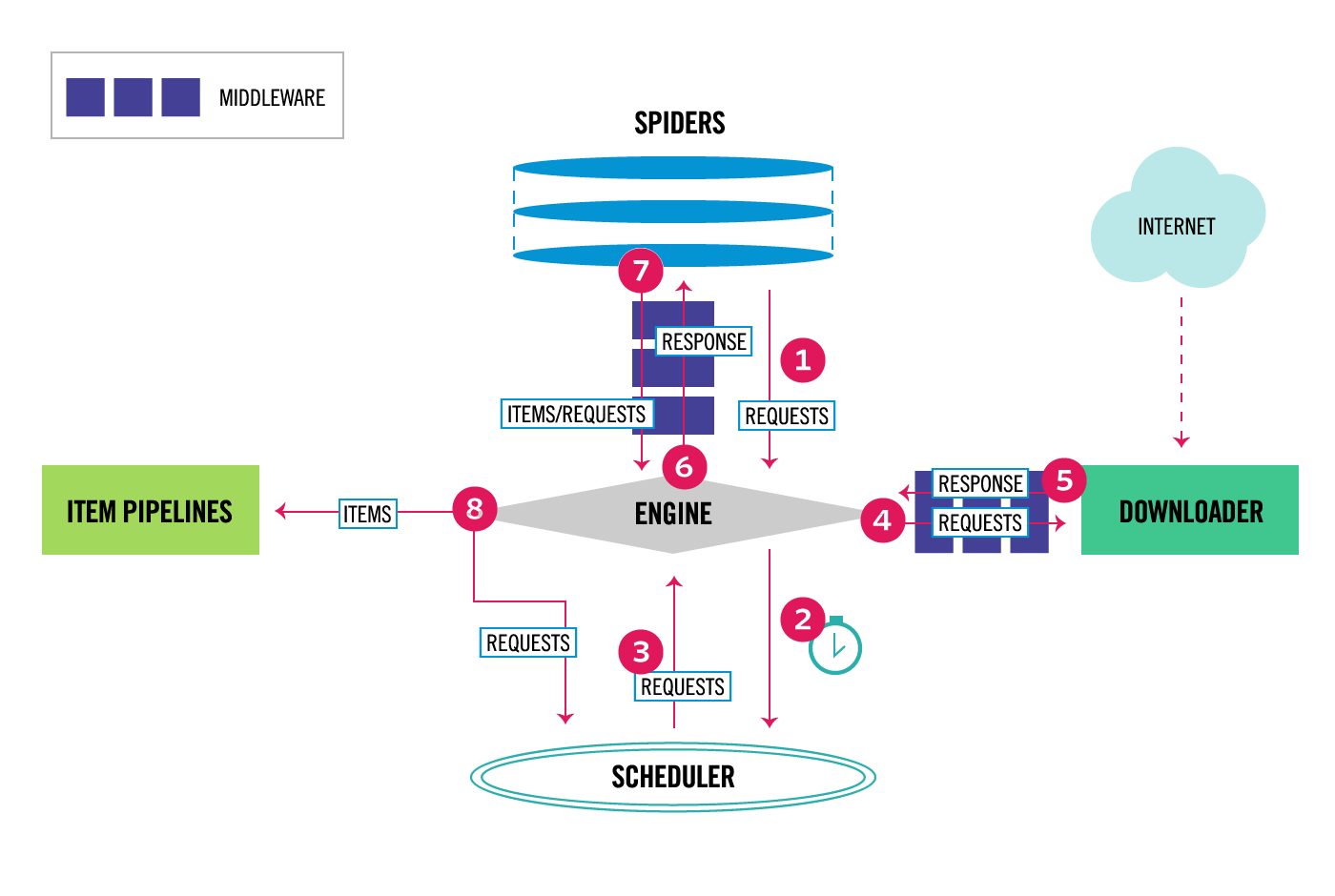

The following diagram shows an overview of the Scrapy architecture with its components and an outline of the data flow that takes place inside the system (shown by the red arrows). A brief description of the components is included below with links for more detailed information about them. The data flow is also described below.

Data flow

The data flow in Scrapy is controlled by the execution engine, and goes like this:

- The Engine gets the initial Requests to crawl from the Spider.

- The Engine schedules the Requests in the Scheduler and asks for the next Requests to crawl.

- The Scheduler returns the next Requests to the Engine.

- The Engine sends the Requests to the Downloader, passing through the Downloader Middlewares (see

process_request()). - Once the page finishes downloading the Downloader generates a Response (with that page) and sends it to the Engine, passing through the Downloader Middlewares (see

process_response()). - The Engine receives the Response from the Downloader and sends it to the Spider for processing, passing through the Spider Middleware (see

process_spider_input()). - The Spider processes the Response and returns scraped items and new Requests (to follow) to the Engine, passing through the Spider Middleware (see

process_spider_output()). - The Engine sends processed items to Item Pipelines, then send processed Requests to the Scheduler and asks for possible next Requests to crawl.

- The process repeats (from step 1) until there are no more requests from the Scheduler.

Components

Scrapy Engine

The engine is responsible for controlling the data flow between all components of the system, and triggering events when certain actions occur. See the Data Flow section above for more details.

Scheduler

The Scheduler receives requests from the engine and enqueues them for feeding them later (also to the engine) when the engine requests them.

Downloader

The Downloader is responsible for fetching web pages and feeding them to the engine which, in turn, feeds them to the spiders.

Spiders

Spiders are custom classes written by Scrapy users to parse responses and extract items (aka scraped items) from them or additional requests to follow. For more information see Spiders.

Item Pipeline

The Item Pipeline is responsible for processing the items once they have been extracted (or scraped) by the spiders. Typical tasks include cleansing, validation and persistence (like storing the item in a database). For more information see Item Pipeline.

Downloader middlewares

Downloader middlewares are specific hooks that sit between the Engine and the Downloader and process requests when they pass from the Engine to the Downloader, and responses that pass from Downloader to the Engine.

Use a Downloader middleware if you need to do one of the following:

- process a request just before it is sent to the Downloader (i.e. right before Scrapy sends the request to the website);

- change received response before passing it to a spider;

- send a new Request instead of passing received response to a spider;

- pass response to a spider without fetching a web page;

- silently drop some requests.

For more information see Downloader Middleware.

Spider middlewares

Spider middlewares are specific hooks that sit between the Engine and the Spiders and are able to process spider input (responses) and output (items and requests).

Use a Spider middleware if you need to

- post-process output of spider callbacks - change/add/remove requests or items;

- post-process start_requests;

- handle spider exceptions;

- call errback instead of callback for some of the requests based on response content.

For more information see Spider Middleware.

Event-driven networking

Scrapy is written with Twisted, a popular event-driven networking framework for Python. Thus, it’s implemented using a non-blocking (aka asynchronous) code for concurrency.

Scrapy Architecture overview--官方文档的更多相关文章

- hbase官方文档(转)

FROM:http://www.just4e.com/hbase.html Apache HBase™ 参考指南 HBase 官方文档中文版 Copyright © 2012 Apache Soft ...

- HBase官方文档

HBase官方文档 目录 序 1. 入门 1.1. 介绍 1.2. 快速开始 2. Apache HBase (TM)配置 2.1. 基础条件 2.2. HBase 运行模式: 独立和分布式 2.3. ...

- Spark官方文档 - 中文翻译

Spark官方文档 - 中文翻译 Spark版本:1.6.0 转载请注明出处:http://www.cnblogs.com/BYRans/ 1 概述(Overview) 2 引入Spark(Linki ...

- Spring 4 官方文档学习 Spring与Java EE技术的集成

本部分覆盖了以下内容: Chapter 28, Remoting and web services using Spring -- 使用Spring进行远程和web服务 Chapter 29, Ent ...

- Spark SQL 官方文档-中文翻译

Spark SQL 官方文档-中文翻译 Spark版本:Spark 1.5.2 转载请注明出处:http://www.cnblogs.com/BYRans/ 1 概述(Overview) 2 Data ...

- 人工智能系统Google开源的TensorFlow官方文档中文版

人工智能系统Google开源的TensorFlow官方文档中文版 2015年11月9日,Google发布人工智能系统TensorFlow并宣布开源,机器学习作为人工智能的一种类型,可以让软件根据大量的 ...

- Google Android官方文档进程与线程(Processes and Threads)翻译

android的多线程在开发中已经有使用过了,想再系统地学习一下,找到了android的官方文档,介绍进程与线程的介绍,试着翻译一下. 原文地址:http://developer.android.co ...

- OGR 官方文档

OGR 官方文档 http://www.gdal.org/ogr/index.html The OGR Simple Features Library is a C++ open source lib ...

- cassandra 3.x官方文档(5)---探测器

写在前面 cassandra3.x官方文档的非官方翻译.翻译内容水平全依赖本人英文水平和对cassandra的理解.所以强烈建议阅读英文版cassandra 3.x 官方文档.此文档一半是翻译,一半是 ...

- Cuda 9.2 CuDnn7.0 官方文档解读

目录 Cuda 9.2 CuDnn7.0 官方文档解读 准备工作(下载) 显卡驱动重装 CUDA安装 系统要求 处理之前安装的cuda文件 下载的deb安装过程 下载的runfile的安装过程 安装完 ...

随机推荐

- java 简单工厂模式实现

简单工厂模式:也可以叫做静态工厂方法,属于类创建型模式,根据不同的参数,返回不同的类实现. 主要包含了三个角色: A.抽象产品角色 一般用接口 或是 抽象类实现 B.具体的产品角色,具体的类的实现 C ...

- 位姿检索PoseRecognition:LSH算法.p稳定哈希

位姿检索使用了LSH方法,而不使用PNP方法,是有一定的来由的.主要的工作会转移到特征提取和检索的算法上面来,有得必有失.因此,放弃了解析的方法之后,又放弃了优化的方法,最后陷入了检索的汪洋大海. 0 ...

- 创建一个dynamics CRM workflow (四) - Development of Custom Workflows

首先我们需要确定windows workflow foundation 已经安装. 创建之后先移除MyCustomWorkflows 里面的 Activity.xaml 从packages\Micro ...

- Java什么时候用static,public,private,protected?

这么说吧,假如你是一个类: public表示你愿意其他人看见你的物品(字段.属性),或者你愿意帮别人做事(方法): private表示你不愿意其他任何人看见你的私人物品,也不愿意帮任何人做事: pro ...

- MySQL笔记4------面试问题

1.链接1:https://blog.csdn.net/derrantcm/article/details/51533885. 链接2:https://blog.csdn.net/derrantcm/ ...

- Project Euler 38 Pandigital multiples

题意: 将192分别与1.2.3相乘: 192 × 1 = 192192 × 2 = 384192 × 3 = 576 连接这些乘积,我们得到一个1至9全数字的数192384576.我们称192384 ...

- groupadd(创建组)重要参数介绍

-g :值定用户组GID值.除非接 -o 参数(如:groupadd -g 666 -o oldboy),否则ID值必须是唯一的数字(不能为负数). 如果不指定 -g 参数,则默认从500开始

- Win32 编程消息常量(C#)

public class WinMessages { #region 基本消息 public const int WM_NULL = 0x0000; public const int WM_CREAT ...

- jquery 去重

var yearArray = new Array(2009, 2009, 2010, 2010, 2009, 2010); $.unique(yearArray); alert(yearArray) ...

- 洛谷 P1124 文件压缩

P1124 文件压缩 题目背景 提高文件的压缩率一直是人们追求的目标.近几年有人提出了这样一种算法,它虽然只是单纯地对文件进行重排,本身并不压缩文件,但是经这种算法调整后的文件在大多数情况下都能获得比 ...