Paper Reading - Deep Captioning with Multimodal Recurrent Neural Networks ( m-RNN ) ( ICLR 2015 ) ★

Link of the Paper: https://arxiv.org/pdf/1412.6632.pdf

Main Points:

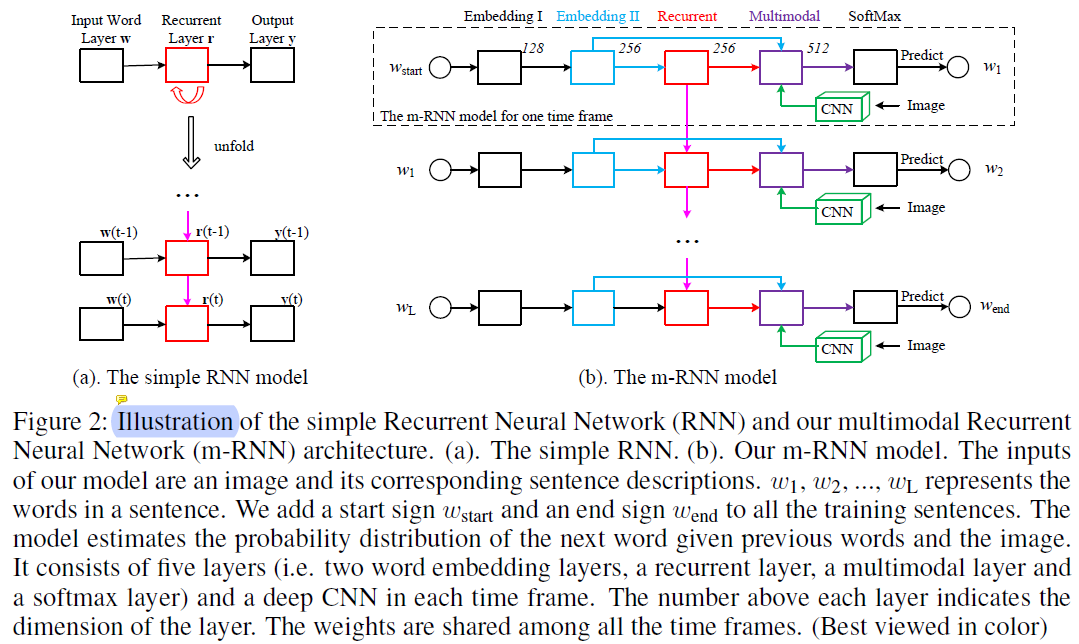

- The authors propose a multimodal Recurrent Neural Network ( m-RNN ) model ( AlexNet/VGGNet + a multimodal layer + RNNs ). Compared with other recent RNN-based captioning methods, their work has two major differences. Firstly, they incorporate a two-layer word embedding system in the m-RNN network structure, which learns the word representation more efficiently than a single-layer word embedding. Secondly, they do not use the recurrent layer to store the visual information. Instead, the image representation is fed into the m-RNN model, via the multimodal layer, together with every word in the sentence description.

- Most of the sentence-image multimodal models use pre-computed word embedding vectors as the initialization of their models. In contrast, the authors randomly initialize their word embedding layers and learn them from the training data.

- The m-RNN model is trained using a log-likelihood cost function. The errors can be backpropagated to all three parts of the m-RNN model ( the vision part, the language part, and the multimodal part ) to update the model parameters simultaneously.

- The hyperparameters, such as layer dimensions and the choice of the non-linear activation functions, are tuned via cross-validation on the Flickr8K dataset and are then fixed across all the experiments.
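The data flow described in the points above can be sketched as a forward pass in plain Python. The flow is: two word-embedding layers, then a recurrent layer, then a multimodal layer that receives the image feature at every time step, with the model trained on a log-likelihood cost. This is a minimal, forward-only sketch and not the authors' PADDLE implementation, and backpropagation is not implemented.

- Illustrative assumptions: the names (`TinyMRNN`, `sentence_nll`, `cost`, `lam`), the tiny layer sizes, uniform initialization in [-0.1, 0.1], and the ReLU on the second embedding layer.
- Assumed from the paper's description and worth checking against it: the ReLU recurrent layer and the scaled tanh `1.7159 * tanh(2x / 3)` in the multimodal layer.
- The image vector is a stand-in for AlexNet/VGGNet features.

```python
import math
import random


def matvec(M, v):
    """Multiply a matrix stored as a list of rows by a vector."""
    return [sum(a * b for a, b in zip(row, v)) for row in M]


def vadd(*vectors):
    """Element-wise sum of equally sized vectors."""
    return [sum(xs) for xs in zip(*vectors)]


def relu(v):
    return [max(0.0, x) for x in v]


def scaled_tanh(v):
    # 1.7159 * tanh(2x / 3): assumed activation of the multimodal layer
    return [1.7159 * math.tanh(2.0 * x / 3.0) for x in v]


def softmax(v):
    top = max(v)  # subtract the max for numerical stability
    exps = [math.exp(x - top) for x in v]
    total = sum(exps)
    return [e / total for e in exps]


def rand_matrix(rows, cols, rng):
    return [[rng.uniform(-0.1, 0.1) for _ in range(cols)] for _ in range(rows)]


class TinyMRNN:
    """Hypothetical toy m-RNN: 2 embedding layers -> recurrent -> multimodal -> softmax."""

    def __init__(self, vocab_size, d_emb1, d_hidden, d_mm, d_img, seed=0):
        rng = random.Random(seed)
        self.E1 = rand_matrix(vocab_size, d_emb1, rng)   # embedding layer 1 (lookup table)
        self.E2 = rand_matrix(d_hidden, d_emb1, rng)     # embedding layer 2
        self.U_r = rand_matrix(d_hidden, d_hidden, rng)  # recurrent weights
        self.V_w = rand_matrix(d_mm, d_hidden, rng)      # word -> multimodal
        self.V_r = rand_matrix(d_mm, d_hidden, rng)      # recurrent -> multimodal
        self.V_i = rand_matrix(d_mm, d_img, rng)         # image -> multimodal
        self.W_out = rand_matrix(vocab_size, d_mm, rng)  # multimodal -> vocabulary scores
        self.d_hidden = d_hidden

    def step(self, word_id, r_prev, image):
        w = relu(matvec(self.E2, self.E1[word_id]))   # dense word representation w(t)
        r = relu(vadd(matvec(self.U_r, r_prev), w))    # r(t) = ReLU(U_r r(t-1) + w(t))
        # The image feature enters the multimodal layer at every step,
        # rather than being stored in the recurrent state.
        m = scaled_tanh(vadd(matvec(self.V_w, w), matvec(self.V_r, r), matvec(self.V_i, image)))
        return r, softmax(matvec(self.W_out, m))       # P(next word | previous words, image)

    def sentence_nll(self, word_ids, image):
        """Negative log2-likelihood of word_ids[1:], each given its prefix and the image."""
        r = [0.0] * self.d_hidden
        nll = 0.0
        for current, target in zip(word_ids, word_ids[1:]):
            r, probs = self.step(current, r, image)
            nll -= math.log2(probs[target])
        return nll


def cost(model, batch, lam=1e-4):
    """Average sentence NLL over (word_ids, image) pairs plus an L2 penalty on all weights."""
    data_term = sum(model.sentence_nll(ids, img) for ids, img in batch) / len(batch)
    weights = [model.E1, model.E2, model.U_r, model.V_w, model.V_r, model.V_i, model.W_out]
    l2 = sum(x * x for M in weights for row in M for x in row)
    return data_term + lam * l2


model = TinyMRNN(vocab_size=6, d_emb1=4, d_hidden=5, d_mm=6, d_img=3)
image = [0.2, -0.5, 1.0]      # stand-in for a CNN image feature vector
sentence = [0, 3, 2, 5, 1]    # hypothetical word ids: 0 = <start>, 1 = <end>
print(f"cost: {cost(model, [(sentence, image)]):.3f}")
```

Because the image term is added inside the multimodal layer at each step, the recurrent state only has to carry language context. This matches the second difference noted above. The exact normalization of the cost and the regularization weight are assumptions here.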

Other Key Points:

- Applications for Image Captioning: early childhood education, image retrieval, and navigation for the blind.

- There are generally three categories of methods for generating novel sentence descriptions for images.
  - The first category assumes specific rules of the language grammar. These methods parse sentences, divide them into several parts, and generate sentences that are syntactically correct.
  - The second category retrieves similar captioned images, then generates new descriptions by generalizing and re-composing the retrieved captions.
  - The third category is the one most closely related to m-RNN. It learns a probability density over the space of multimodal inputs, using, for example, Deep Boltzmann Machines or topic models. These methods generate sentences with richer and more flexible structure than the first category. The probability that such a model assigns to a sentence can serve as the affinity metric for retrieval.

- Many previous methods treat the task of describing images as a retrieval task and formulate it as a ranking or embedding learning problem. They first extract word and sentence features ( e.g. Socher et al.(2014) use a dependency-tree Recursive Neural Network to extract sentence features ) as well as image features. They then optimize a ranking cost to learn an embedding model that maps both the sentence features and the image features into a common semantic space. This lets them compute the distance between images and sentences directly. These methods generate image captions by retrieving them from a sentence database. As a result, they cannot generate novel sentences or describe images that contain novel combinations of objects and scenes.

- Benchmark datasets for Image Captioning: IAPR TC-12 ( Grubinger et al.(2006) ), Flickr8K ( Rashtchian et al.(2010) ), Flickr30K ( Young et al.(2014) ) and MS COCO ( Lin et al.(2014) ).

- Evaluation Metrics for Sentence Generation: Sentence perplexity and BLEU scores.

- Tasks related to Image Captioning: Generating Novel Sentences, Retrieving Images Given a Sentence, Retrieving Sentences Given an Image.

- The m-RNN model is trained using Baidu's internal deep learning platform PADDLE.
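The two evaluation metrics named above can be sketched in plain Python. The same sketch also covers the idea from the third category of methods, where a sentence's generation probability acts as a retrieval affinity. This is a minimal illustration, not the paper's evaluation code.

- The function names and the per-word probabilities in the usage lines are hypothetical. In practice the probabilities would come from a trained model.
- Ranking candidates by perplexity is one simple way to turn the generation probability into an affinity score. The paper may normalize differently.
- The BLEU here is plain, unsmoothed sentence-level BLEU, which may differ from the exact variant reported in the paper.

```python
import math
from collections import Counter


def perplexity(word_probs):
    """PPL from the probabilities a model assigned to each ground-truth word.

    log2 PPL = -(1/L) * sum_n log2 P(w_n | w_1..w_{n-1}, I)
    """
    log2_ppl = -sum(math.log2(p) for p in word_probs) / len(word_probs)
    return 2.0 ** log2_ppl


def rank_by_perplexity(candidates):
    """Sort (sentence, word_probs) pairs so the lowest-perplexity sentence comes first."""
    return sorted(candidates, key=lambda item: perplexity(item[1]))


def ngrams(tokens, n):
    return Counter(tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1))


def bleu(candidate, references, max_n=4):
    """Sentence-level BLEU: clipped n-gram precisions, geometric mean, brevity penalty."""
    log_precision_sum = 0.0
    for n in range(1, max_n + 1):
        cand_counts = ngrams(candidate, n)
        # Clip each candidate n-gram count by its maximum count in any single reference.
        max_ref_counts = Counter()
        for ref in references:
            for gram, count in ngrams(ref, n).items():
                max_ref_counts[gram] = max(max_ref_counts[gram], count)
        clipped = sum(min(c, max_ref_counts[g]) for g, c in cand_counts.items())
        total = max(sum(cand_counts.values()), 1)
        if clipped == 0:
            return 0.0  # unsmoothed BLEU is zero if any n-gram precision is zero
        log_precision_sum += math.log(clipped / total)
    # Brevity penalty against the reference length closest to the candidate length.
    c = len(candidate)
    r = min((abs(len(ref) - c), len(ref)) for ref in references)[1]
    bp = 1.0 if c > r else math.exp(1.0 - r / c)
    return bp * math.exp(log_precision_sum / max_n)


# Hypothetical per-word probabilities for two candidate captions of one image.
candidates = [
    ("a dog runs on grass", [0.2, 0.3, 0.1, 0.4, 0.5]),
    ("a cat sits", [0.05, 0.1, 0.2]),
]
for sentence, probs in rank_by_perplexity(candidates):
    print(f"{perplexity(probs):6.2f}  {sentence}")

print(bleu("a dog runs on the grass".split(), ["a dog is running on the grass".split()], max_n=2))
```

With the default `max_n=4`, the last example scores 0 because no 4-gram matches. This is a known weakness of unsmoothed sentence-level BLEU on short captions, and one reason BLEU is usually aggregated over a whole test set.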
