Hadoop HBase install (2)

reference: http://dblab.xmu.edu.cn/blog/install-hbase/

reference: http://dblab.xmu.edu.cn/blog/2139-2/

sudo wget http://archive.apache.org/dist/hbase/1.1.5/hbase-1.1.5-bin.tar.gz

sudo tar zvxf hbase-1.1.5-bin.tar.gz

sudo mv hbase-1.1.5 hbase

sudo chown -R hadoop ./hbase

./hbase/bin/hbase version

2019-01-25 17:23:11,310 INFO [main] util.VersionInfo: HBase 1.1.5

2019-01-25 17:23:11,311 INFO [main] util.VersionInfo: Source code repository git://diocles.local/Volumes/hbase-1.1.5/hbase revision=239b80456118175b340b2e562a5568b5c744252e

2019-01-25 17:23:11,311 INFO [main] util.VersionInfo: Compiled by ndimiduk on Sun May 8 20:29:26 PDT 2016

2019-01-25 17:23:11,312 INFO [main] util.VersionInfo: From source with checksum 7ad8dc6c5daba19e4aab081181a2457d

hadoop@iZuf68496ttdogcxs22w6sZ:/usr/local$ cat ~/.bashrc

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

export JRE_HOME=${JAVA_HOME}/jre

export CLASSPATH=.:${JAVA_HOME}/lib:${JRE_HOME}/lib

export PATH=$PATH:${JAVA_HOME}/bin:/usr/local/hbase/bin

hadoop@iZuf68496ttdogcxs22w6sZ:/usr/local/hbase/conf$ vim hbase-site.xml

<configuration>

<property>

<name>hbase.rootdir</name>

<value>hdfs://localhost:9000/hbase</value>

</property>

<property>

<name>hbase.cluster.distributed</name>

<value>true</value>

</property>

</configuration>
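hbase.rootdir has to point at the same NameNode address as fs.defaultFS in Hadoop's core-site.xml (assumed here to be hdfs://localhost:9000, matching the value above). Pseudo-distributed mode also needs hbase.cluster.distributed set to true. The following is a minimal Python sketch for sanity-checking those two settings before starting HBase. The function names and the fs_default_fs default are illustrative assumptions, not part of HBase.

```python
import xml.etree.ElementTree as ET
from urllib.parse import urlparse

# Same content as the hbase-site.xml shown above.
HBASE_SITE = """<configuration>
  <property>
    <name>hbase.rootdir</name>
    <value>hdfs://localhost:9000/hbase</value>
  </property>
  <property>
    <name>hbase.cluster.distributed</name>
    <value>true</value>
  </property>
</configuration>"""


def load_properties(xml_text):
    """Return {name: value} for every <property> in a Hadoop-style XML config."""
    root = ET.fromstring(xml_text)
    return {
        prop.findtext("name", default="").strip(): prop.findtext("value", default="").strip()
        for prop in root.findall("property")
    }


def check_hbase_site(props, fs_default_fs="hdfs://localhost:9000"):
    """Return a list of problems; an empty list means the settings look consistent.

    fs_default_fs is an assumption: copy it from fs.defaultFS in core-site.xml.
    """
    problems = []
    rootdir = urlparse(props.get("hbase.rootdir", ""))
    namenode = urlparse(fs_default_fs)
    if rootdir.scheme != "hdfs":
        problems.append("hbase.rootdir does not point at HDFS")
    elif rootdir.netloc != namenode.netloc:
        problems.append(
            f"hbase.rootdir uses {rootdir.netloc}, fs.defaultFS uses {namenode.netloc}"
        )
    if props.get("hbase.cluster.distributed", "false").lower() != "true":
        problems.append("hbase.cluster.distributed is not true")
    return problems


props = load_properties(HBASE_SITE)
print(props["hbase.rootdir"])   # hdfs://localhost:9000/hbase
print(check_hbase_site(props))  # []
```

A mismatched port, such as a NameNode on 8020, would show up as a problem string instead of an empty list.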

hadoop@iZuf68496ttdogcxs22w6sZ:/usr/local/hbase$ grep -v ^# ./conf/hbase-env.sh | grep -v ^$

export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

export HBASE_CLASSPATH=/usr/local/hadoop/conf

export HBASE_OPTS="-XX:+UseConcMarkSweepGC"

export HBASE_MASTER_OPTS="$HBASE_MASTER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m"

export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m"

export HBASE_MANAGES_ZK=true
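The grep pipeline above prints only the active lines of hbase-env.sh. The start-up log further down shows OpenJDK 8 ignoring -XX:PermSize and -XX:MaxPermSize, because Java 8 removed the permanent generation. The following is a small, hypothetical Python helper that applies the same filtering and then lists, and optionally strips, those obsolete flags. It only handles simple export NAME=VALUE lines and does not expand shell variables.

```python
import re

# Active lines of hbase-env.sh as printed above, plus a comment and a
# blank line to show that both are skipped like `grep -v ^# | grep -v ^$`.
HBASE_ENV = """# comment lines are ignored
export JAVA_HOME=/usr/lib/jvm/java-8-openjdk-amd64

export HBASE_CLASSPATH=/usr/local/hadoop/conf
export HBASE_OPTS="-XX:+UseConcMarkSweepGC"
export HBASE_MASTER_OPTS="$HBASE_MASTER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m"
export HBASE_REGIONSERVER_OPTS="$HBASE_REGIONSERVER_OPTS -XX:PermSize=128m -XX:MaxPermSize=128m"
export HBASE_MANAGES_ZK=true
"""

EXPORT_RE = re.compile(r"^export\s+(\w+)=(.*)$")
PERMGEN_RE = re.compile(r"-XX:(?:Max)?PermSize=\S+")


def active_exports(env_text):
    """Skip comment and empty lines, then split each `export NAME=VALUE` line."""
    exports = {}
    for line in env_text.splitlines():
        if not line or line.startswith("#"):
            continue
        match = EXPORT_RE.match(line)
        if match:
            name, value = match.groups()
            exports[name] = value.strip('"')
    return exports


def permgen_flags(exports):
    """List (variable, flag) pairs that Java 8 ignores because PermGen was removed."""
    return [
        (name, flag)
        for name, value in exports.items()
        for flag in PERMGEN_RE.findall(value)
    ]


def without_permgen(value):
    """Return the option string with the PermGen flags removed."""
    return " ".join(PERMGEN_RE.sub("", value).split())


exports = active_exports(HBASE_ENV)
for name, flag in permgen_flags(exports):
    print(f"{name}: {flag} is ignored on Java 8")
print(without_permgen(exports["HBASE_MASTER_OPTS"]))  # $HBASE_MASTER_OPTS
```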

hadoop@iZuf68496ttdogcxs22w6sZ:/usr/local/hbase$ ./bin/stop-hbase.sh

stopping hbase...............

localhost: stopping zookeeper.

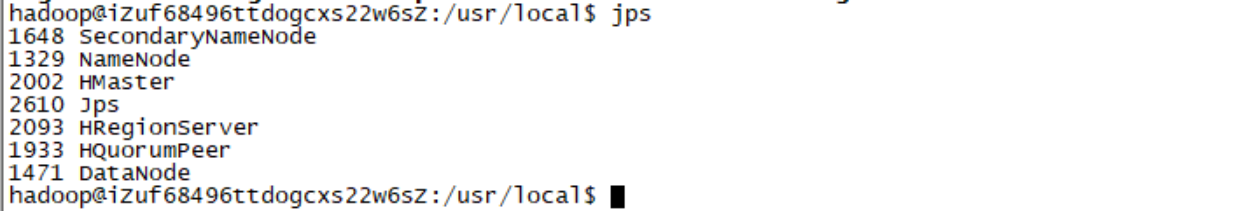

hadoop@iZuf68496ttdogcxs22w6sZ:/usr/local/hbase$ jps

1648 SecondaryNameNode

1329 NameNode

8795 Jps

1471 DataNode

hadoop@iZuf68496ttdogcxs22w6sZ:/usr/local/hbase$ ./bin/start-hbase.sh

localhost: starting zookeeper, logging to /usr/local/hbase/bin/../logs/hbase-hadoop-zookeeper-iZuf68496ttdogcxs22w6sZ.out

starting master, logging to /usr/local/hbase/bin/../logs/hbase-hadoop-master-iZuf68496ttdogcxs22w6sZ.out

OpenJDK 64-Bit Server VM warning: ignoring option PermSize=128m; support was removed in 8.0

OpenJDK 64-Bit Server VM warning: ignoring option MaxPermSize=128m; support was removed in 8.0

starting regionserver, logging to /usr/local/hbase/bin/../logs/hbase-hadoop-1-regionserver-iZuf68496ttdogcxs22w6sZ.out
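start-hbase.sh only reports that the daemons were launched. Running jps again is a quick way to confirm they stayed up. With HBASE_MANAGES_ZK=true, a pseudo-distributed setup should show HMaster, HRegionServer and HQuorumPeer (the HBase-managed ZooKeeper) next to the HDFS daemons listed earlier. The following hedged Python sketch compares jps output against that expected set. The REQUIRED table is an assumption for this single-node layout.

```python
# Daemons expected for this single-node layout (an assumption):
# HQuorumPeer appears because HBASE_MANAGES_ZK=true.
REQUIRED = {
    "hdfs": {"NameNode", "DataNode", "SecondaryNameNode"},
    "hbase": {"HMaster", "HRegionServer", "HQuorumPeer"},
}


def running_daemons(jps_output):
    """Collect the main-class names from `jps` lines such as '1329 NameNode'."""
    names = set()
    for line in jps_output.splitlines():
        parts = line.split()
        if len(parts) == 2 and parts[0].isdigit():
            names.add(parts[1])
    return names


def missing_daemons(jps_output):
    """Return {group: sorted missing names}, omitting groups that are complete."""
    running = running_daemons(jps_output)
    return {
        group: sorted(expected - running)
        for group, expected in REQUIRED.items()
        if expected - running
    }


# jps output captured above, after stop-hbase.sh and before start-hbase.sh.
BEFORE_START = """1648 SecondaryNameNode
1329 NameNode
8795 Jps
1471 DataNode"""

print(missing_daemons(BEFORE_START))
# {'hbase': ['HMaster', 'HQuorumPeer', 'HRegionServer']}
```

When fed the jps output captured before start-hbase.sh, the sketch reports all three HBase daemons as missing, which is expected at that point. After a successful start, the same check should return an empty dict.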

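Note: we need to set the HDFS replication factor dfs.replication to 3 (in Hadoop's hdfs-site.xml).

With that in place, HBase starts normally and can be seen on the HDFS web page (its data lives under /hbase, the hbase.rootdir configured above).

The following table operations were run in the HBase shell (hbase shell):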

hbase(main):010:0> describe 'student'

Table student is ENABLED

student

COLUMN FAMILIES DESCRIPTION

{NAME => 'Sage', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'Sdept', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'Sname', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'Ssex', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'course', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

5 row(s) in 0.0330 seconds

hbase(main):011:0> disable 'student'

0 row(s) in 2.4280 seconds

hbase(main):012:0> drop 'student'

0 row(s) in 1.2700 seconds

hbase(main):013:0> list

TABLE

0 row(s) in 0.0040 seconds

=> []
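HBase refuses to drop a table that is still enabled. The Java client raises TableNotDisabledException, and the shell asks you to disable the table first, which is why disable always precedes drop in these transcripts. The following toy Python model of that lifecycle is purely illustrative. ToyCatalog and TableNotDisabledError are hypothetical stand-ins, not the HBase client API. The model also keeps column families sorted, which is why describe lists Sage, Sdept, Sname, Ssex, course rather than the order given to create.

```python
class TableNotDisabledError(Exception):
    """Hypothetical stand-in for HBase's TableNotDisabledException."""


class ToyCatalog:
    """Toy in-memory model of table lifecycle in the HBase shell (illustration only)."""

    def __init__(self):
        self.tables = {}  # table name -> {"enabled": bool, "families": [str]}

    def create(self, name, *families):
        if name in self.tables:
            raise ValueError(f"table {name} already exists")
        # describe prints families in byte order; sorted() matches that for ASCII names.
        self.tables[name] = {"enabled": True, "families": sorted(families)}

    def disable(self, name):
        self.tables[name]["enabled"] = False

    def drop(self, name):
        if self.tables[name]["enabled"]:
            raise TableNotDisabledError(f"table {name} must be disabled first")
        del self.tables[name]

    def list_tables(self):
        return sorted(self.tables)


catalog = ToyCatalog()
catalog.create("student", "Sname", "Ssex", "Sage", "Sdept", "course")
print(catalog.tables["student"]["families"])
# ['Sage', 'Sdept', 'Sname', 'Ssex', 'course']
try:
    catalog.drop("student")
except TableNotDisabledError as err:
    print(err)  # table student must be disabled first
catalog.disable("student")
catalog.drop("student")
print(catalog.list_tables())  # []
```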

hbase(main):002:0> create 'student','Sname','Ssex','Sage','Sdept','course'

0 row(s) in 1.3740 seconds

=> Hbase::Table - student

hbase(main):003:0> scan 'student'

ROW COLUMN+CELL

0 row(s) in 0.1200 seconds

hbase(main):004:0> describe 'student'

Table student is ENABLED

student

COLUMN FAMILIES DESCRIPTION

{NAME => 'Sage', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'Sdept', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'Sname', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'Ssex', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

{NAME => 'course', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}

5 row(s) in 0.0420 seconds
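Each line of describe output is one column family together with its settings as KEY => 'value' pairs. All five families here use the defaults. VERSIONS => '1' keeps only the newest version of each cell, TTL => 'FOREVER' means data never expires, and BLOCKSIZE => '65536' is a 64 KB HFile block. The following small Python sketch turns such a line into a dict for easier comparison. The regex and parse_family are hypothetical helpers, not part of HBase.

```python
import re

# One family line from the describe output above.
SAGE = ("{NAME => 'Sage', BLOOMFILTER => 'ROW', VERSIONS => '1', IN_MEMORY => 'false', "
        "KEEP_DELETED_CELLS => 'FALSE', DATA_BLOCK_ENCODING => 'NONE', TTL => 'FOREVER', "
        "COMPRESSION => 'NONE', MIN_VERSIONS => '0', BLOCKCACHE => 'true', "
        "BLOCKSIZE => '65536', REPLICATION_SCOPE => '0'}")

# Matches KEY => 'value' pairs; values never contain a single quote here.
ATTR_RE = re.compile(r"(\w+) => '([^']*)'")


def parse_family(line):
    """Turn a `{KEY => 'value', ...}` describe line into a dict of strings."""
    return dict(ATTR_RE.findall(line))


family = parse_family(SAGE)
print(family["NAME"], family["VERSIONS"], family["TTL"])  # Sage 1 FOREVER
print(int(family["BLOCKSIZE"]) // 1024, "KB")            # 64 KB
```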

hbase(main):005:0> put 'student', '95001', 'Sname', 'LiYing'

0 row(s) in 0.1100 seconds

hbase(main):006:0> scan 'student'

ROW COLUMN+CELL

95001 column=Sname:, timestamp=1548640148767, value=LiYing

1 row(s) in 0.0220 seconds

hbase(main):007:0> put 'student','95001','course:math','80'

0 row(s) in 0.0680 seconds

hbase(main):008:0> scan 'student'

ROW COLUMN+CELL

95001 column=Sname:, timestamp=1548640148767, value=LiYing

95001 column=course:math, timestamp=1548640220401, value=80

1 row(s) in 0.0170 seconds

hbase(main):009:0> delete 'student','95001','Sname'

0 row(s) in 0.0300 seconds

hbase(main):011:0> scan 'student'

ROW COLUMN+CELL

95001 column=course:math, timestamp=1548640220401, value=80

1 row(s) in 0.0170 seconds

hbase(main):012:0> get 'student','95001'

COLUMN CELL

course:math timestamp=1548640220401, value=80

1 row(s) in 0.0260 seconds

hbase(main):013:0> deleteall 'student','95001'

0 row(s) in 0.0180 seconds

hbase(main):014:0> scan 'student'

ROW COLUMN+CELL

0 row(s) in 0.0170 seconds
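The put/delete/deleteall sequence above follows from HBase's data model: a row is just a set of cells keyed by family:qualifier. Deleting the Sname cell therefore leaves the course:math cell, and with it the row. deleteall removes every cell, so the row disappears from scan. The following toy in-memory Python model replays the transcript under stated assumptions. ToyTable is hypothetical and keeps one version per cell (matching VERSIONS => '1'), and its delete removes only the exact cell named.

```python
import time


class ToyTable:
    """Toy in-memory model of one HBase table (illustration only).

    rows maps row key -> {"family:qualifier": (timestamp_ms, value)}.
    Only the newest version of each cell is kept, as with VERSIONS => '1'.
    """

    def __init__(self, *families):
        self.families = set(families)
        self.rows = {}

    @staticmethod
    def _column(column):
        # A bare family such as 'Sname' means the empty qualifier 'Sname:'.
        return column if ":" in column else column + ":"

    def put(self, row, column, value, ts=None):
        column = self._column(column)
        family = column.split(":", 1)[0]
        if family not in self.families:
            raise KeyError(f"unknown column family {family}")
        stamp = ts if ts is not None else int(time.time() * 1000)
        self.rows.setdefault(row, {})[column] = (stamp, value)

    def delete(self, row, column):
        """Remove exactly one cell; the row survives while other cells remain."""
        cells = self.rows.get(row, {})
        cells.pop(self._column(column), None)
        if not cells:
            self.rows.pop(row, None)

    def deleteall(self, row):
        """Remove every cell of the row, so the row vanishes from scan."""
        self.rows.pop(row, None)

    def get(self, row):
        return dict(sorted(self.rows.get(row, {}).items()))

    def scan(self):
        for row in sorted(self.rows):
            for column, (stamp, value) in sorted(self.rows[row].items()):
                yield f"{row} column={column}, timestamp={stamp}, value={value}"


# Replay the transcript, reusing its timestamps.
student = ToyTable("Sname", "Ssex", "Sage", "Sdept", "course")
student.put("95001", "Sname", "LiYing", ts=1548640148767)
student.put("95001", "course:math", "80", ts=1548640220401)
student.delete("95001", "Sname")
print(list(student.scan()))
# ['95001 column=course:math, timestamp=1548640220401, value=80']
student.deleteall("95001")
print(list(student.scan()))  # []
```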

hbase(main):015:0> disable 'student'

0 row(s) in 2.2720 seconds

hbase(main):017:0> drop 'student'

0 row(s) in 1.2530 seconds

hbase(main):018:0> list

TABLE

0 row(s) in 0.0060 seconds

=> []
